Accelerating Discovery: Advanced Strategies for Reducing DFT Computational Cost in Catalyst Screening


Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals seeking to accelerate catalyst discovery through Density Functional Theory (DFT). We explore the foundational principles behind DFT's computational cost, delve into practical methodologies for reduction, address common troubleshooting and optimization challenges, and critically compare validation techniques. By synthesizing current strategies from descriptor-based screening to machine learning integration, this resource aims to empower efficient and reliable high-throughput computational screening in biomedical and materials research.

Understanding the Bottleneck: Why DFT Calculations Are Computationally Expensive for Catalyst Screening

Topic: The Core Challenge: Scaling of DFT with System Size and Complexity

Troubleshooting Guides & FAQs

Q1: My DFT calculation for a >200-atom catalyst model fails with an "out of memory" error during the SCF cycle. What are my primary options to resolve this?

A: This is a classic scaling issue. Your options, in recommended order, are:

- Switch to a Cheaper Functional: Use a semilocal functional rather than a hybrid (e.g., rev-vdW-DF2 over HSE06); the exact-exchange term in hybrids is far more demanding in both memory and time.

- Employ Numerical Aids: Activate `SCF:Kerker` or other charge density mixing to improve SCF convergence, reducing iterations.

- Increase Parallelization & Memory: Distribute calculation across more CPU cores with efficient MPI/OpenMP settings.

- Downsample Integration Grids: Temporarily reduce the accuracy of the integration grid (`NGXF` etc.) for testing, but revert for final production runs.

Q2: When screening bimetallic catalysts, geometry optimization becomes prohibitively slow. What methodology can I use to maintain accuracy while reducing cost?

A: Implement a multi-fidelity screening protocol:

- Initial Pre-Screening: Use a fast, lower-rung GGA functional (e.g., PBE) with relaxed convergence criteria (`EDIFF=1E-4`, `EDIFFG=-0.05`).

- Focused Screening: For top 10-20 candidates, re-optimize with a more accurate functional (e.g., RPBE, rev-vdW-DF2) and tighter criteria.

- High-Fidelity Validation: For the final 2-3 leads, perform single-point energy calculations with a hybrid functional or higher basis set quality. This workflow reduces the number of expensive calculations.
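The saving from such a funnel can be estimated before any job is submitted. The sketch below is a back-of-the-envelope cost model, not a benchmark: the stage names, candidate counts, and per-structure core-hour figures are hypothetical placeholders to be replaced with your own timings.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    n_candidates: int        # structures evaluated at this stage
    core_hours_each: float   # assumed average cost per structure


def funnel_cost(stages):
    """Total core-hours of a staged screening campaign."""
    return sum(s.n_candidates * s.core_hours_each for s in stages)


# Hypothetical numbers, for illustration only.
stages = [
    Stage("PBE, relaxed criteria", 500, 20.0),
    Stage("RPBE, tight criteria", 20, 60.0),
    Stage("hybrid single point", 3, 800.0),
]

total = funnel_cost(stages)
# Brute force: every candidate gets the tight relaxation and the hybrid single point.
brute_force = 500 * (60.0 + 800.0)

print(f"funnel: {total:.0f} core-h, brute force: {brute_force:.0f} core-h")
print(f"saving factor: {brute_force / total:.1f}x")
```

The saving depends almost entirely on how few structures reach the final stage, so the cut-offs between stages deserve more attention than the exact settings of the first stage.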

Q3: How do I quantitatively choose between a GGA and a meta-GGA functional for my transition metal oxide catalyst study, considering cost and accuracy?

A: Base the decision on a pilot study comparing key metrics for a representative, smaller system. The critical trade-off is between computational cost and the accurate description of electronic correlation.

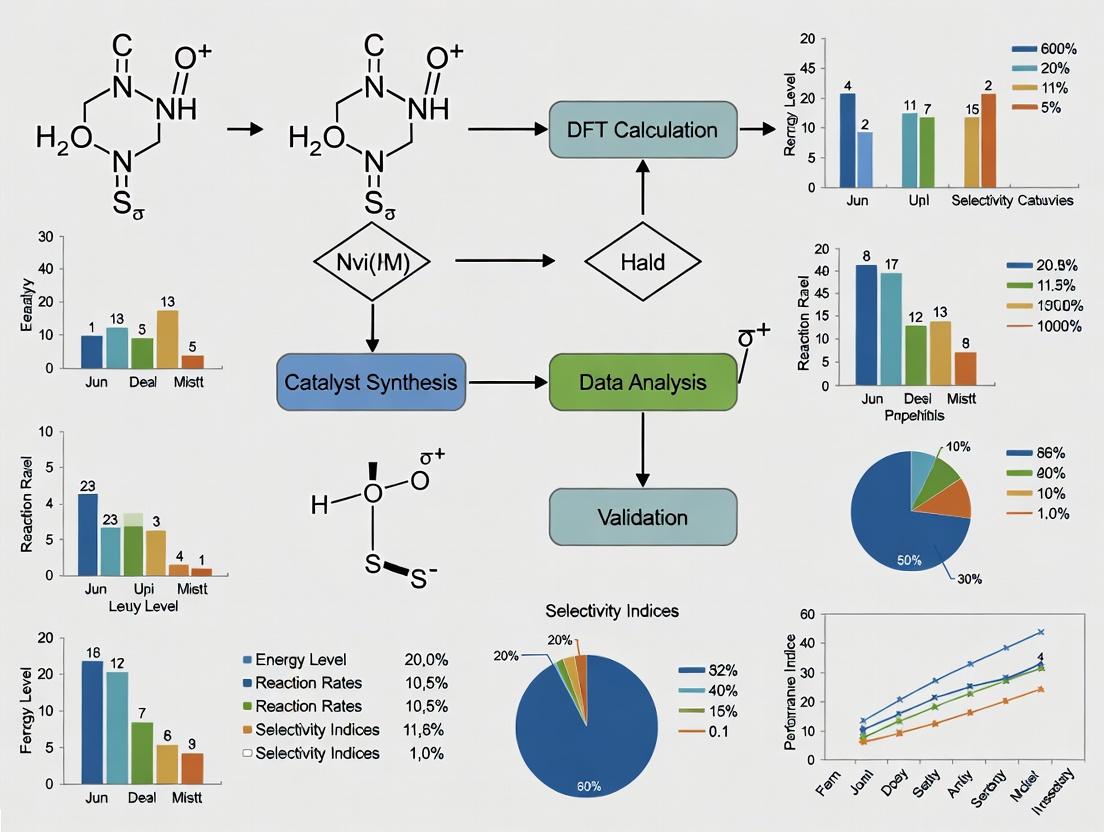

Diagram Title: Decision Workflow for DFT Functional Selection

Table 1: Quantitative Comparison of GGA vs. Meta-GGA for a Model NiO Cluster (Pilot Study)

| Metric | GGA (PBE) | Meta-GGA (r2SCAN) | Experimental Reference | Notes |

|---|---|---|---|---|

| Band Gap (eV) | 1.1 | 2.8 | 3.6-4.0 | r2SCAN significantly improves but may still underestimate. |

| Ni-O Bond Length (Å) | 1.97 | 1.93 | 1.92 | r2SCAN provides much better agreement. |

| Formation Energy (eV/atom) | -3.5 | -3.9 | -4.1 ± 0.2 | r2SCAN is closer to reference. |

| Avg. SCF Time (s) | 450 | 1200 | N/A | Meta-GGA cost is ~2.7x higher for this system. |

| Memory Overhead | Low | Moderate | N/A | Due to more complex functional form. |

Conclusion: If accurate electronic structure is critical, use r2SCAN. If exploring 1000s of structures where relative energetics are key, PBE may suffice.

Q4: What is a concrete protocol to benchmark the cost-accuracy trade-off of different k-point meshes for periodic slab models of catalysts?

A: Follow this systematic protocol to determine the optimal k-point density.

Experimental Protocol: K-Point Convergence Benchmark

- System: Create a standardized 2x2 surface slab model of your catalyst (e.g., Pt(111)).

- Calculation Setup: Use a fixed functional (e.g., PBE), pseudopotential, and plane-wave cutoff.

- Variable: Sequentially calculate the total energy using a series of k-point meshes: Γ-point only, 2x2x1, 4x4x1, 6x6x1, 8x8x1. Ensure the z-direction is always 1 for slabs.

- Data Collection: Record for each mesh: Total Energy (E_tot), Energy difference from finest mesh (ΔE), and Computational Time.

- Analysis: Plot ΔE vs. Time. The optimal mesh is at the "knee" of the curve, where energy gain diminishes relative to cost increase.

Diagram Title: K-Point Convergence Benchmarking Protocol

Table 2: K-Point Convergence for a 20-Atom Pt(111) Slab (PBE)

| K-Point Mesh | Total Energy (eV) | ΔE (meV) | Avg. SCF Time (min) | Force on Atom (max, eV/Å) |

|---|---|---|---|---|

| Γ-only | -36512.451 | 228.0 | 3.5 | 0.45 |

| 2x2x1 | -36512.647 | 32.0 | 8.1 | 0.12 |

| 4x4x1 | -36512.672 | 7.0 | 25.4 | 0.08 |

| 6x6x1 | -36512.677 | 2.0 | 71.8 | 0.06 |

| 8x8x1 | -36512.679 | 0.0 (ref) | 158.2 | 0.06 |

Recommendation: The 4x4x1 mesh provides an excellent trade-off, being within 7 meV of convergence at ~1/6th the cost of the 8x8x1 mesh.
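The selection step can be automated once the benchmark energies are collected. The following is a minimal sketch using the Table 2 values; the 10 meV tolerance and the function name `pick_mesh` are assumptions made for illustration, and the list is assumed to be ordered from cheapest to most expensive mesh.

```python
# (mesh, total energy in eV, avg. SCF time in min), copied from Table 2
# and ordered from cheapest to most expensive.
RUNS = [
    ("Gamma-only", -36512.451, 3.5),
    ("2x2x1", -36512.647, 8.1),
    ("4x4x1", -36512.672, 25.4),
    ("6x6x1", -36512.677, 71.8),
    ("8x8x1", -36512.679, 158.2),
]


def pick_mesh(runs, tol_mev=10.0):
    """Return the cheapest mesh whose energy lies within tol_mev of the finest mesh."""
    e_ref = runs[-1][1]  # the finest mesh is listed last
    for mesh, energy, minutes in runs:
        delta_mev = abs(energy - e_ref) * 1000.0
        if delta_mev <= tol_mev:
            return mesh, delta_mev, minutes
    raise ValueError("no mesh satisfies the tolerance")


mesh, delta_mev, minutes = pick_mesh(RUNS)
print(f"{mesh}: {delta_mev:.1f} meV from reference, {minutes} min")
```

A fixed tolerance is a simpler criterion than locating the "knee" by eye, and it makes the choice reproducible across materials.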

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Materials for DFT Catalyst Screening

| Item/Software | Primary Function | Role in Cost Reduction |

|---|---|---|

| VASP | Plane-wave DFT code with advanced functionals. | Robust PAW pseudopotentials and efficient iterative solvers reduce SCF steps. |

| Quantum ESPRESSO | Open-source plane-wave DFT code. | PWscf enables efficient parallelization across CPU cores, cutting wall time. |

| GPAW | PAW-based DFT code with real-space grid, plane-wave, and LCAO modes. | The LCAO mode offers fast, lower-accuracy calculations suited to preliminary screenings. |

| ASE (Atomic Simulation Environment) | Python library for setting up/manipulating atoms. | Automates high-throughput workflows, managing 1000s of calculations with error handling. |

| SCAN & r2SCAN | Meta-GGA density functionals. | Provide higher accuracy without the O(N⁴) cost of hybrid functionals. |

| VESTA | 3D visualization for structural models. | Critical for verifying slab, cluster, and adsorbate models before costly computation. |

| ChemShell | QM/MM embedding environment. | Enables embedding a high-accuracy DFT region within a lower-level force field for large systems. |

Troubleshooting Guides & FAQs

Q1: My DFT calculation is extremely slow and exceeds my computational budget. How can I diagnose the primary cost driver? A: The three key suspects are your basis set size, functional complexity, and k-point mesh density. First, run a single-point energy calculation on a small, representative system with your current settings and note the time/wall-clock cost. Then, perform a series of simplified calculations:

- Reduce the basis set to a smaller tier (e.g., from def2-TZVP to def2-SVP). Re-run.

- Switch to a simpler functional (e.g., from a hybrid like HSE06 to a GGA like PBE). Re-run.

- Use a significantly coarser k-point mesh (e.g., Γ-point only). Re-run.

Compare the computational times. The setting that yields the largest speed-up when simplified is your primary cost driver. For catalyst screening, a balanced approach is critical.
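The comparison above amounts to ranking speed-up factors. A minimal sketch follows; the timings are hypothetical and the function name `rank_cost_drivers` is introduced only for this example.

```python
def rank_cost_drivers(baseline_s, simplified_s):
    """Rank settings by speed-up = baseline time / time with that one setting simplified."""
    speedups = {name: baseline_s / t for name, t in simplified_s.items()}
    return sorted(speedups.items(), key=lambda item: item[1], reverse=True)


# Hypothetical wall-clock timings in seconds for one representative system.
baseline = 3600.0
simplified = {
    "basis set (def2-TZVP -> def2-SVP)": 1500.0,
    "functional (HSE06 -> PBE)": 90.0,
    "k-points (dense -> Gamma only)": 900.0,
}

ranked = rank_cost_drivers(baseline, simplified)
for name, factor in ranked:
    print(f"{name}: {factor:.1f}x faster")
```

Only one setting is changed per run, so each factor can be attributed to a single parameter.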

Q2: I am screening transition metal catalysts. My formation energy results vary wildly with different functionals. Which functional should I trust for accuracy and cost-efficiency? A: For transition metal systems, the choice is critical. GGAs (like PBE) are fast but often fail for strongly correlated electrons. Hybrids (like HSE06) are more accurate but ~100x slower. Meta-GGAs (like SCAN) offer a middle ground. Protocol: For your specific class of catalysts (e.g., MOFs, surfaces), select 2-3 benchmark systems with reliable experimental or high-level CCSD(T) formation energy data. Then:

- Perform geometry optimization and energy calculation with PBE, SCAN, and HSE06 using the same basis set and k-points.

- Calculate the Mean Absolute Error (MAE) for each functional against benchmark data.

- Choose the functional with the best accuracy-to-cost ratio for your screening campaign. Often, SCAN provides a good compromise.
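One way to make the "accuracy-to-cost" choice explicit is to pick the cheapest functional whose MAE meets a target. The sketch below assumes that interpretation; the benchmark energies, the relative costs, and the 0.1 eV/atom target are all hypothetical.

```python
def mean_absolute_error(predicted, reference):
    """MAE between predicted and reference values of equal length."""
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(reference)


# Hypothetical benchmark formation energies (eV/atom).
reference = [-1.20, -0.85, -2.10]
results = {
    # functional: (predicted energies, assumed cost relative to PBE)
    "PBE": ([-1.00, -0.70, -1.85], 1.0),
    "SCAN": ([-1.15, -0.80, -2.00], 4.0),
    "HSE06": ([-1.18, -0.86, -2.06], 75.0),
}

summary = {
    name: (mean_absolute_error(pred, reference), cost)
    for name, (pred, cost) in results.items()
}


def cheapest_within(summary, mae_target):
    """Cheapest functional with MAE <= mae_target, or None if none qualifies."""
    ok = [(cost, name) for name, (mae, cost) in summary.items() if mae <= mae_target]
    return min(ok)[1] if ok else None


choice = cheapest_within(summary, mae_target=0.1)
print(summary, choice)
```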

Q3: How fine does my k-point mesh need to be for accurate surface adsorption energy calculations, and how can I reduce this cost? A: Adsorption energies can converge slowly with k-points. You must perform a k-point convergence test. Protocol:

- Optimize your slab and adsorbate structure using a moderate k-point mesh (e.g., 3x3x1).

- Perform single-point energy calculations on the optimized structure using a series of increasingly dense meshes: 2x2x1, 3x3x1, 4x4x1, 5x5x1, 6x6x1.

- Plot the adsorption energy vs. the inverse of the total number of k-points. The point where the energy change is less than 1 meV/atom is considered converged.

- Cost Reduction Tip: Use the Monkhorst-Pack scheme and consider symmetry reduction. For screening, you may use a slightly unconverged but consistent mesh, as energy differences often converge faster than absolute energies.

Q4: I get inconsistent bandgap predictions for my semiconductor photocatalyst candidates depending on my basis set. How do I choose? A: Bandgaps are famously functional-dependent, but basis set convergence is also vital. Pure DFT (GGA) underestimates bandgaps. Hybrids correct this. Follow this protocol for basis set selection:

- Start with a medium-quality basis set (def2-SVP) and a hybrid functional (HSE06).

- Perform a geometry optimization.

- Perform single-point calculations with increasingly larger basis sets (def2-TZVP, def2-QZVP) on the optimized geometry.

- Plot the bandgap value vs. basis set size. When the change is <0.05 eV, you have convergence. For high-throughput screening, use a consistent, medium-quality basis set and document this known systematic error.

Table 1: Relative Computational Cost & Accuracy of Common DFT Functionals

| Functional Class | Example | Relative Cost (vs. PBE) | Typical Use Case | Note for Catalysis |

|---|---|---|---|---|

| GGA | PBE | 1 | High-throughput screening, structural props. | Poor for band gaps, dispersion. |

| Meta-GGA | SCAN | 3-5 | Improved energetics, surfaces. | Better for correlated systems than PBE. |

| Hybrid | HSE06 | 50-100 | Accurate band gaps, defect energies. | Gold standard for electronic structure. |

| Double-Hybrid | B2PLYP | 200+ | Benchmark quantum chemistry. | Prohibitively expensive for screening. |

Table 2: Basis Set Convergence for Adsorption Energy of CO on Pt(111) (PBE Functional)

| Basis Set for Pt/CO | Total K-points | CPU Hours | Adsorption Energy (eV) | ΔE vs. QZ (eV) |

|---|---|---|---|---|

| def2-SVP | 400 | 12 | -1.65 | +0.18 |

| def2-TZVP | 400 | 85 | -1.80 | +0.03 |

| def2-QZVP | 400 | 320 | -1.83 | 0.00 |

Table 3: k-Point Convergence for Si Bandgap (HSE06 Functional, def2-TZVP basis)

| k-point Mesh | Total k-points | CPU Hours | Bandgap (eV) | ΔE vs. 6x6x6 (eV) |

|---|---|---|---|---|

| 2x2x2 | 8 | 8 | 1.08 | -0.05 |

| 4x4x4 | 64 | 45 | 1.12 | -0.01 |

| 6x6x6 | 216 | 280 | 1.13 | 0.00 |

Experimental Protocols

Protocol 1: Systematic Convergence Testing for High-Throughput Screening Setup

Objective: To establish a cost-effective yet sufficiently accurate DFT parameter set for screening 1000+ catalyst materials.

- Select Benchmark Set: Choose 5-10 representative materials from your target space (e.g., metals, oxides, sulfides).

- Basis Set Test: Fix a functional (e.g., PBE) and a moderate k-point mesh. Calculate formation energy for all benchmarks using def2-SVP, def2-TZVP, and def2-QZVP. Determine the smallest basis set where MAE < 0.05 eV/atom vs. the largest set.

- k-point Test: Using the chosen basis set and functional, perform k-point convergence (as in FAQ A3) on the largest unit cell in your benchmark set.

- Functional Validation: Using the converged basis/k-points, compute key properties (e.g., adsorption energy of a key intermediate) with PBE, SCAN, and HSE06 for 3 critical systems. Compare to literature/experimental data. Select the functional that meets accuracy thresholds within the project's computational budget.

Protocol 2: Computational Adsorption Energy Workflow

Objective: To reliably calculate the adsorption energy (E_ads) of a molecule on a catalyst surface.

- Slab Preparation: Cleave the surface. Build a slab with >15 Å vacuum. Fix bottom 2-3 atomic layers.

- k-point Convergence: Perform protocol as in FAQ A3 to determine adequate k-point sampling for the surface.

- Geometry Optimization: Optimize the clean slab structure using selected functional/basis/k-points. Optimize the isolated molecule in a large box.

- Adsorbate Optimization: Place the molecule on the slab surface. Optimize the full adsorbate-surface system.

- Energy Calculation: Perform a more precise single-point energy calculation on all three optimized structures (slab, molecule, adsorbate-system).

- Compute E_ads: E_ads = E(adsorbate-system) - E(slab) - E(molecule). Apply necessary corrections (e.g., BSSE, dispersion).
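The final step is simple arithmetic, but writing it as a function keeps the sign convention consistent across a screening campaign. A minimal sketch, with hypothetical total energies and an optional `correction` argument added here for illustration:

```python
def adsorption_energy(e_system, e_slab, e_molecule, correction=0.0):
    """E_ads = E(adsorbate-system) - E(slab) - E(molecule) + correction, all in eV.

    With this convention a negative value means binding is energetically favorable.
    """
    return e_system - e_slab - e_molecule + correction


# Hypothetical single-point total energies in eV.
e_ads = adsorption_energy(e_system=-215.43, e_slab=-198.76, e_molecule=-14.80)
print(f"E_ads = {e_ads:.2f} eV")
```

All three energies must come from calculations with identical functional, cutoff, and k-point settings, otherwise the difference is not meaningful.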

Visualizations

Diagram Title: DFT Cost Drivers and Their Trade-offs

Diagram Title: Protocol for DFT Parameter Convergence Testing

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools for DFT Catalyst Screening

| Item / Software | Primary Function | Relevance to Cost Reduction Screening |

|---|---|---|

| VASP, Quantum ESPRESSO | Core DFT simulation engines. | Choice impacts license cost & scaling efficiency. Open-source options reduce overhead. |

| ASE (Atomic Simulation Environment) | Python scripting library for setting up, running, and analyzing calculations. | Automates high-throughput workflows, reducing manual setup time and errors. |

| pymatgen, Materials Project API | Databases and Python tools for material analysis. | Provides benchmark data and crystal structures, preventing unnecessary re-calculation. |

| JDFTx, GPAW | Plane-wave & real-space DFT codes. | Efficient for specific system types (e.g., electrolytes in JDFTx). |

| SLURM / PBS | Job scheduling for HPC clusters. | Enables efficient queue management for thousands of screening jobs. |

| Dispersion Corrections (D3, vdW-DF) | Empirical corrections (D3) or nonlocal functionals (vdW-DF) that account for van der Waals forces. | Essential for adsorption accuracy; low additional cost compared to functional choice. |

Technical Support & Troubleshooting Center

Frequently Asked Questions (FAQs)

Q1: During catalyst screening, my DFT-calculated adsorption energies vary widely (>0.5 eV) between different surface models of the same material. Is this an error? A: Not necessarily. This often stems from model dependency. Key checks:

- Surface Convergence: Ensure your slab model is thick enough. The adsorption energy should converge with slab thickness (typically 3-5 atomic layers for metals). Perform a convergence test.

- k-point Convergence: Adsorption energies require a well-converged k-point mesh. Test increasing k-point density until energy changes are < 0.01 eV.

- Site Specificity: Verify you are calculating adsorption on the same crystallographic site (e.g., top, bridge, hollow) in each model. Different sites yield different energies.

- Functional Selection: Some functionals (e.g., PBE) are known to over-bind. Consider using RPBE or a hybrid functional (e.g., HSE06) for more accurate adsorption energies, though at higher cost.

Q2: My NEB (Nudged Elastic Band) calculation for a reaction pathway fails to converge or finds an unrealistic path. What steps should I take? A: This is common. Follow this protocol:

- Initial Path Quality: The initial guess path is critical. Use the IDPP (Image Dependent Pair Potential) method or manually place intermediates to ensure a physically reasonable guess.

- Spring Constant: Adjust the spring constant between images (typically 5.0 eV/Ų). Too high can cause instability; too low allows images to cluster.

- Optimizer: Switch from Quick-Min to FIRE or L-BFGS optimizers for better convergence.

- Image Number: Increase the number of images (e.g., from 5 to 9-11) to better resolve complex pathways with multiple shallow minima.

- Check Forces: Confirm convergence criteria (e.g., force tolerance < 0.05 eV/Å) are met for all images.

Q3: How can I reduce the computational cost of screening hundreds of potential catalyst surfaces without losing predictive accuracy for activity? A: Implement a tiered screening strategy:

- Tier 1 (Ultra-Fast): Use a lower-cost functional (e.g., PBE with a small basis set/pseudopotential) and a single descriptor (e.g., d-band center for metals, oxygen vacancy formation energy for oxides) to filter clearly inactive materials.

- Tier 2 (Standard): For promising candidates, calculate key adsorption energies (e.g., O, C, H, or relevant intermediates) with higher accuracy settings (converged k-points, slab thickness).

- Tier 3 (High-Accuracy): For the top 5-10 candidates, perform full reaction pathway analysis (NEB) and possibly use a hybrid functional or include solvation effects.

Q4: I get a "SCF convergence failed" error when calculating adsorption on a doped or defective surface. How do I resolve this? A: Doped/defective systems often have challenging electronic structures.

- Mixing Parameters: Increase the SCF step count (e.g., to 200-500) and adjust the mixing parameters (e.g., use DIIS mixing with a small mixing parameter like 0.05).

- Smearing: Apply a small smearing (e.g., Gaussian smearing, width = 0.1 eV) to aid convergence in metallic or small-bandgap systems.

- Initial Spin: For systems with potential magnetism, manually set initial magnetic moments on transition metal atoms.

- Charge: If your model is non-periodic (cluster) or has a dipole, consider charge corrections.

Experimental Protocols & Methodologies

Protocol 1: Convergence Testing for Adsorption Energy Calculations

- Objective: Determine computationally efficient yet accurate parameters for slab model DFT calculations.

- Procedure:

- Slab Thickness: Create slab models of your surface with 1 to 7 atomic layers. Fix the bottom 1-2 layers at bulk positions. Calculate the adsorption energy of a simple probe (e.g., CO) at the same site on each slab.

- Vacuum Depth: Using your converged slab thickness, vary the vacuum layer from 10 Å to 25 Å in 5 Å increments. Calculate the total energy of the clean slab.

- k-point Mesh: Using converged slab and vacuum parameters, calculate the adsorption energy using increasingly dense k-point meshes (e.g., 2x2x1, 3x3x1, 4x4x1, 5x5x1).

- Analysis: Plot adsorption energy vs. parameter value. The converged value is where the energy change is less than 0.01 eV.

Protocol 2: Computational Hydrogen Electrode (CHE) for Reaction Free Energy Diagrams

- Objective: Construct a free energy diagram for an electrocatalytic reaction (e.g., Oxygen Reduction Reaction - ORR) at a given potential.

- Procedure:

- Calculate the total DFT energy, E_DFT, for the bare surface (*) and all adsorbed intermediates (*O, *OH, *OOH).

- Apply necessary corrections (e.g., zero-point energy, enthalpy, entropy) from vibrational frequency calculations to get free energy at 298 K: G = E_DFT + E_ZPE + ∫C_v dT - TS.

- For reactions involving H⁺ + e⁻, use the CHE method: G(H⁺ + e⁻) = ½ G(H₂) - eU, where U is the electrode potential vs. SHE.

- The free energy of an adsorbed intermediate relative to the clean slab is then ΔG(*X) = G(slab + adsorbate) - G(slab) + ΔG_corrections.

- Plot G for each step along the reaction coordinate. Each step that transfers a proton-electron pair shifts with potential: by +eU when the pair is consumed (reduction, as in ORR) and by -eU when it is released (oxidation).
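The potential dependence follows directly from G(H⁺ + e⁻) = ½ G(H₂) - eU: a step that consumes the pair has the pair on its reactant side, so its reaction free energy rises by eU. The sketch below applies this to four reduction steps; the step free energies are hypothetical, chosen only so that they sum to the overall ORR value of -4.92 eV (4 × 1.23 eV), and the function names are introduced for this example.

```python
E_CHARGE = 1.0  # with energies in eV and U in V, e*U is numerically equal to U


def step_free_energies(dg_at_0v, potential):
    """Shift reduction steps (each consuming one H+ + e-) to potential U vs. SHE."""
    return [dg + E_CHARGE * potential for dg in dg_at_0v]


def limiting_potential(dg_at_0v):
    """Highest U at which every reduction step is still downhill (dG <= 0)."""
    return -max(dg_at_0v) / E_CHARGE


# Hypothetical ORR step free energies at U = 0 V, in eV (sum = -4.92 eV).
dg0 = [-1.02, -2.33, -0.77, -0.80]

u_lim = limiting_potential(dg0)
at_limit = step_free_energies(dg0, u_lim)
print(f"limiting potential: {u_lim:.2f} V")
print("step free energies at that potential:", [round(dg, 2) for dg in at_limit])
```

The least exergonic step at U = 0 V sets the limiting potential, which is why a single weak step can dominate the predicted activity.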

Data Presentation: Common DFT Descriptors & Benchmarks

Table 1: Key Descriptors for Catalyst Screening and Their Computational Cost

| Descriptor | Definition (Typical Calculation) | Information Gained | Relative Computational Cost | Common Use Case |

|---|---|---|---|---|

| Adsorption Energy (E_ads) | E_ads = E(slab+adsorbate) - E(slab) - E(adsorbate, gas) | Binding strength of a key intermediate; correlates with activity (Sabatier principle). | Low-Medium | Initial activity screening (e.g., O* for OER, CO* for CO2RR). |

| d-band Center (ε_d) | First moment of the projected d-band density of states of surface atoms. | Tendency to form bonds with adsorbates; lower ε_d = weaker binding. | Very Low (post-DFT) | Transition metal & alloy screening. |

| Reaction Energy (ΔE) | ΔE = Σ E(products) - Σ E(reactants) for an elementary step. | Energetic favorability of a single step. | Low-Medium | Identifying potential limiting steps. |

| Activation Energy (E_a) | Calculated via Climbing Image NEB or dimer method. | Kinetic barrier for an elementary step; determines reaction rate. | Very High | Detailed mechanistic study for top candidates. |

| Turnover Frequency (TOF) Estimate | Calculated from E_a using microkinetic or Brønsted-Evans-Polanyi (BEP) relations. | Estimated catalytic activity at operating conditions. | Medium (post-DFT analysis) | Linking DFT to experimental rates. |
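As an example of a post-DFT descriptor from the table, the d-band center is the first moment of the d-projected density of states, ε_d = ∫E ρ_d(E) dE / ∫ρ_d(E) dE. The sketch below evaluates it with the trapezoid rule on a synthetic triangular band; in practice the arrays would come from the projected DOS output of your DFT code, with energies referenced to the Fermi level.

```python
def d_band_center(energies, dos):
    """First moment of a projected DOS via the trapezoid rule.

    energies: grid in eV relative to the Fermi level; dos: d-projected DOS on that grid.
    """
    numerator = 0.0
    denominator = 0.0
    for i in range(len(energies) - 1):
        de = energies[i + 1] - energies[i]
        numerator += 0.5 * (energies[i] * dos[i] + energies[i + 1] * dos[i + 1]) * de
        denominator += 0.5 * (dos[i] + dos[i + 1]) * de
    return numerator / denominator


# Synthetic triangular d-band peaked at -2 eV (not real data).
energies = [-4.0, -3.0, -2.0, -1.0, 0.0]
dos = [0.0, 1.0, 2.0, 1.0, 0.0]

eps_d = d_band_center(energies, dos)
print(f"d-band center: {eps_d:.2f} eV")
```

The integration window matters: including or excluding unoccupied states above the Fermi level changes ε_d, so the same window should be used for every material in a screening set.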

Table 2: Typical Convergence Criteria for Accurate DFT Calculations

| Parameter | Typical Value for Metals/Oxides | Target Tolerance | Impact if Not Converged |

|---|---|---|---|

| Plane-wave Cutoff Energy | 400 - 550 eV | ΔE < 0.01 eV/atom | Inaccurate energies, poor geometry. |

| k-point Sampling (Slab) | (4x4x1) to (6x6x1) Monkhorst-Pack | ΔE < 0.01 eV/atom | Large errors in E_ads, especially for metals. |

| Slab Thickness | 3-5 atomic layers | ΔE_ads < 0.05 eV | Unphysical interaction through slab. |

| Vacuum Layer | >15 Å | ΔE_slab < 0.001 eV | Spurious interaction between periodic images. |

| Force Convergence | < 0.02 eV/Å | Geometry optimization | Inaccurate bond lengths & adsorption sites. |

| SCF Energy Convergence | < 1e-5 eV/atom | Electronic minimization | Inconsistent total energies. |


The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for DFT-Based Catalyst Screening

| Item / Software | Function / Purpose | Key Consideration for Cost Reduction |

|---|---|---|

| VASP, Quantum ESPRESSO, CP2K | Core DFT simulation engines to solve the electronic structure problem. | Choose pseudopotential/functional wisely. GGA-PBE is faster than hybrid HSE06. Use GPU acceleration if available. |

| ASE (Atomic Simulation Environment) | Python library for setting up, running, and analyzing DFT calculations. | Enables automation of high-throughput screening workflows, reducing manual setup time. |

| pymatgen | Python library for materials analysis and manipulation of input files. | Streamlines creation of slab models, defect structures, and analysis of output data. |

| CatKit | Toolkit specifically designed for building and analyzing catalytic surfaces. | Provides standard surface generation, adsorption site identification, and descriptor calculation. |

| NEB & Dimer Methods | Algorithms (implemented in most DFT codes) for finding transition states and minimum energy paths. | The major computational bottleneck. Use carefully converged initial paths to minimize optimization steps. |

| Computational Cluster (HPC) | High-performance computing resources with many CPU/GPU cores. | Utilize queue systems effectively to run hundreds of calculations in parallel for screening. |

| BEEF-vdW Functional | A functional offering a good balance of accuracy for adsorption and computational cost, with error estimation. | Provides an ensemble of energies to assess uncertainty in predictions, avoiding over-reliance on single functional results. |

Troubleshooting Guides & FAQs for DFT Catalyst Screening

Q1: My DFT calculation of adsorption energy for a molecule on a metal surface shows large variance (>0.3 eV) between different k-point meshes. How do I determine an acceptable, cost-effective k-point sampling baseline? A: This indicates your system is sensitive to Brillouin zone integration. Follow this protocol:

- Convergence Test: Perform single-point energy calculations on your optimized structure using a series of increasingly dense k-point meshes (e.g., 2x2x1, 3x3x1, 4x4x1, 5x5x1, 6x6x1). Use Γ-centered grids for slabs.

- Baseline Establishment: Plot the target property (e.g., adsorption energy) against k-point density or computational cost (CPU-hours). The acceptable baseline is the point where the property change is less than your predefined threshold (e.g., 0.05 eV) for three consecutive density increases.

- Trade-off Table:

| k-point mesh | Adsorption Energy (eV) | ΔE vs. finest mesh (eV) | CPU-Hours | Recommended for |

|---|---|---|---|---|

| 2x2x1 | -1.85 | 0.22 | 45 | Initial Scoping |

| 3x3x1 | -1.98 | 0.09 | 98 | Baseline Screening |

| 4x4x1 | -2.03 | 0.04 | 175 | Validation Studies |

| 5x5x1 | -2.06 | 0.01 | 280 | High-Accuracy Refinement |

Q2: When screening transition metal catalysts, how do I choose between the generalized gradient approximation (GGA) and a more expensive hybrid functional? A: The choice hinges on the property of interest and the required chemical accuracy. GGA (e.g., PBE) is standard for structure and trends but can fail for reaction energies involving bonds with strong correlation.

- Protocol for Selection:

- Benchmark a Subset: Select 3-5 representative catalyst-molecule systems from your screening library.

- Parallel Calculations: Compute the key descriptor (e.g., d-band center, adsorption energy) using both GGA (PBE) and a hybrid functional (e.g., HSE06).

- Correlation Analysis: Plot the hybrid results against the GGA results. Establish the linear correlation (R²) and mean absolute error (MAE).

- Decision Rule: If R² > 0.95 and MAE for energy descriptors is < 0.1 eV, GGA is likely sufficient for relative ranking in high-throughput screening. Use hybrids only for final candidates.
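The decision rule can be checked with a few lines of code. This sketch takes R² to be the squared Pearson correlation between the GGA and hybrid values, which is an assumption about how the rule is meant; the adsorption energies are hypothetical.

```python
def pearson_r2(x, y):
    """Squared Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy ** 2 / (sxx * syy)


def gga_sufficient(gga, hybrid, r2_min=0.95, mae_max=0.1):
    """Apply the rule: R² > r2_min and MAE < mae_max (eV). Returns (decision, R², MAE)."""
    mae = sum(abs(g - h) for g, h in zip(gga, hybrid)) / len(gga)
    r2 = pearson_r2(gga, hybrid)
    return (r2 > r2_min and mae < mae_max), r2, mae


# Hypothetical adsorption energies (eV) for five benchmark systems.
gga = [-1.90, -1.45, -0.80, -0.35, -1.10]
hybrid = [-1.82, -1.40, -0.71, -0.30, -1.02]

ok, r2, mae = gga_sufficient(gga, hybrid)
print(f"R² = {r2:.3f}, MAE = {mae:.3f} eV, GGA sufficient: {ok}")
```

A high R² with a sizeable MAE indicates a systematic offset: rankings are preserved even though absolute values are not, which is often acceptable for screening.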

Q3: My slab model for a surface reaction shows significant interaction between adsorbed species in neighboring periodic images. How can I mitigate this with minimal cost increase? A: This is a common finite-size error. Implement this stepwise protocol:

- Diagnose: Calculate your property with successively larger supercells (e.g., (2x2), (3x3), (4x4) surface unit cells).

- Extrapolate: Fit the property vs. 1/(supercell area) to a linear function. The y-intercept gives the estimate for the non-interacting, infinite separation limit.

- Establish Baseline: The acceptable supercell size is where the property is within your error tolerance of the extrapolated value. Often, a (3x3) or (4x4) cell is sufficient for isolated adsorbates.

| Supercell Size | Adsorption Energy (eV) | Energy vs. Inf. Limit (eV) | Atoms in Calculation | Recommendation |

|---|---|---|---|---|

| (2x2) | -2.10 | 0.15 | 48 | Too small for isolated species |

| (3x3) | -1.98 | 0.03 | 108 | Cost-effective Baseline |

| (4x4) | -1.96 | 0.01 | 192 | Use for charged/final states |
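The extrapolation step is an ordinary least-squares line in 1/(supercell area). The sketch below uses synthetic energies constructed to lie exactly on such a line, so they are not the table values; real data will scatter around the fit, and the function name is introduced for this example.

```python
def extrapolate_to_infinite_cell(cells, energies):
    """Fit E = a + b / area by least squares and return the intercept a.

    cells: n for an (n x n) surface supercell, so the area is proportional to n * n.
    """
    x = [1.0 / (n * n) for n in cells]
    k = len(x)
    mean_x, mean_y = sum(x) / k, sum(energies) / k
    slope = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, energies)) / sum(
        (xi - mean_x) ** 2 for xi in x
    )
    return mean_y - slope * mean_x


# Synthetic adsorption energies following E = -1.95 - 0.60 / n^2 exactly (eV).
cells = [2, 3, 4]
energies = [-1.95 - 0.60 / (n * n) for n in cells]

e_inf = extrapolate_to_infinite_cell(cells, energies)
print(f"extrapolated adsorption energy: {e_inf:.3f} eV")
```

With only three supercell sizes the fit has a single degree of freedom left, so a fourth point is worth the cost whenever the residuals look large.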

Q4: How do I decide if I need to include van der Waals (vdW) corrections in my screening workflow, given the 10-30% increase in computation time? A: Use this decision flowchart and protocol:

- Protocol: For your system class (e.g., organic molecules on metals), run a benchmark comparing PBE vs. PBE+D3 (or other vdW method) for:

- Adsorption geometries (distance to surface).

- Physisorption energies.

- Reaction barriers for vdW-influenced states.

- Rule: If vdW correction changes adsorption energies by > 0.1 eV or reverses the stability order of adsorption sites, it must be included in your baseline. For covalent/metallic systems only, it may be omitted.
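The rule can be encoded as a small check over benchmark results. In this sketch "reverses the stability order" is interpreted as any change in the ranking of sites by adsorption energy, which is an assumption; the site energies are hypothetical.

```python
def vdw_required(e_plain, e_vdw, threshold=0.1):
    """Return True if the vdW correction should be part of the baseline.

    e_plain, e_vdw: dicts mapping the same site names to adsorption energies (eV)
    without and with the dispersion correction.
    """
    max_shift = max(abs(e_vdw[site] - e_plain[site]) for site in e_plain)
    order_plain = sorted(e_plain, key=e_plain.get)  # most stable site first
    order_vdw = sorted(e_vdw, key=e_vdw.get)
    return max_shift > threshold or order_plain != order_vdw


# Hypothetical PBE and PBE+D3 adsorption energies (eV).
pbe = {"top": -0.42, "bridge": -0.38, "hollow": -0.35}
pbe_d3 = {"top": -0.47, "bridge": -0.49, "hollow": -0.41}

print("include vdW correction:", vdw_required(pbe, pbe_d3))
```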

Title: Decision Flowchart for Including vdW Corrections

Q5: What is a robust, step-by-step protocol for establishing a full workflow baseline (from geometry optimization to energy) for screening? A: Implement this hierarchical convergence protocol. Each step must be converged before proceeding.

Title: DFT Screening Workflow Baseline Protocol

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Software | Provider Examples | Function in DFT Catalyst Screening |

|---|---|---|

| VASP | University of Vienna, VASP Software GmbH | Industry-standard DFT code for periodic systems, essential for surface catalysis calculations. |

| Quantum ESPRESSO | Open-Source Project | Open-source suite for electronic-structure calculations using plane-wave basis sets and pseudopotentials. |

| GPAW | Technical University of Denmark | DFT code combining plane-wave and real-space grids, efficient for large-scale screening. |

| ASE (Atomic Simulation Environment) | Open-Source | Python library for setting up, running, and analyzing DFT calculations, crucial for workflow automation. |

| Materials Project API | LBNL, Materials Project | Database API for retrieving pre-computed bulk material properties to set up and validate your catalyst models. |

| CatKit & pymatgen | SUNCAT, Materials Virtual Lab | Python toolkits for building surface slabs, generating adsorption sites, and analyzing reaction networks. |

| High-Performance Computing (HPC) Core Hours | DOE INCITE, NSF XSEDE, Local Clusters | The essential "reagent" for production calculations. Trade-offs directly translate to core-hour budgets. |

| Standardized Catalysis Dataset (e.g., CatApp) | SLAC, SUNCAT | Benchmark datasets (e.g., adsorption energies) to validate your computational baseline's accuracy. |

Practical Strategies and Tools for Efficient DFT-Based Catalyst Screening

Leveraging Chemical Intuition and Descriptors for Pre-Screening

Technical Support Center

Troubleshooting Guide

Issue: Descriptor calculation fails for metal-organic complexes.

- Symptoms: Software error or infinite loop during descriptor generation (e.g., for COSMIC, SOAP, or MBTR descriptors).

- Probable Cause: The molecular geometry is invalid, contains unrealistic bond lengths/angles, or the metal coordination environment is not correctly perceived by the standard library (e.g., RDKit).

- Solution:

- Pre-optimize the initial guess geometry with a force field that covers metals, such as UFF; MMFF94 is parameterized mainly for organic molecules and will typically fail on metal centers.

- Explicitly define the bond orders and formal charges on the metal center.

- Use a library with better support for inorganic structures (e.g., ASE or pymatgen) for descriptor generation.

- Preventive Measure: Implement a geometry sanitization and validation step in your pre-screening workflow before descriptor calculation.

Issue: Poor correlation between simple descriptors and DFT-calculated activation energy.

- Symptoms: Machine learning model trained on descriptors (e.g., electronegativity, d-band center estimates) shows R² < 0.6 on test set for predicting reaction energy barriers.

- Probable Cause: The chosen descriptors are not sufficiently expressive for the specific catalytic step (e.g., C-H activation vs. O-O coupling). The problem is under-defined.

- Solution:

- Incorporate problem-specific descriptors. For adsorption energy pre-screening, include atomic radii, coordination numbers, and valence electron counts.

- Use a dimensionality reduction technique (e.g., t-SNE) on a large pool of diverse descriptors to identify the most relevant clusters for your property.

- Combine with a low-level, semi-empirical method (e.g., PM7) to generate a cheap, intermediate-property descriptor.

- Protocol for Dimensionality Reduction:

- Calculate a pool of 200+ chemical descriptors (compositional, electronic, structural) for your training set.

- Scale all features using `StandardScaler`.

- Apply t-SNE (`perplexity=30`, `n_components=2`) to reduce to 2D.

- Color the t-SNE map by your target DFT property to visually identify separable clusters.

- Use feature importance analysis (e.g., SHAP) on the original high-dimensional data to select top descriptors from relevant clusters.

Issue: High false positive rate in catalyst pre-screening.

- Symptoms: Many candidates identified by the descriptor/ML model as "promising" fail upon subsequent full DFT evaluation due to unrealistic geometries or unfavorable side reactions.

- Probable Cause: The pre-screening model only predicts a primary activity descriptor (e.g., adsorption strength) but ignores stability, selectivity, or solvent effects.

- Solution: Implement a sequential filtering workflow.

- Filter 1: Use chemical intuition rules (e.g., must not contain precious metals, must be synthesizable) to narrow the search space.

- Filter 2: Apply a fast ML model for primary activity.

- Filter 3: Apply a secondary, stability-focused filter (e.g., a classification model predicting decomposition likelihood using formation energy descriptors).
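The three filters above can be sketched as a chain of predicates applied in order. This is a minimal illustration only: the candidate fields (`precious_metal`, `pred_e_ads`, `p_decompose`), the target value, the window, and the 0.5 probability cutoff are all hypothetical placeholders, and in practice the two predicted values would come from your own activity and stability models.

```python
from typing import Callable, Dict, List

Candidate = Dict[str, object]


def sequential_filter(candidates: List[Candidate],
                      filters: List[Callable[[Candidate], bool]]) -> List[Candidate]:
    """Apply the filters in order; a candidate survives only if it passes every stage."""
    survivors = list(candidates)
    for keep in filters:
        survivors = [c for c in survivors if keep(c)]
    return survivors


# Hypothetical library; every number below is a made-up placeholder.
library = [
    {"name": "A", "precious_metal": False, "pred_e_ads": -0.45, "p_decompose": 0.10},
    {"name": "B", "precious_metal": True,  "pred_e_ads": -0.50, "p_decompose": 0.05},
    {"name": "C", "precious_metal": False, "pred_e_ads": -1.20, "p_decompose": 0.05},
    {"name": "D", "precious_metal": False, "pred_e_ads": -0.55, "p_decompose": 0.80},
]

TARGET_E_ADS = -0.5  # eV, assumed optimum of the primary activity descriptor
WINDOW = 0.2         # eV, assumed acceptance window around the target

filters = [
    lambda c: not c["precious_metal"],                        # Filter 1: rule-based
    lambda c: abs(c["pred_e_ads"] - TARGET_E_ADS) <= WINDOW,  # Filter 2: activity
    lambda c: c["p_decompose"] < 0.5,                         # Filter 3: stability
]

shortlist = [c["name"] for c in sequential_filter(library, filters)]
print(shortlist)
```

Ordering the cheapest filter first keeps the more expensive model evaluations to the smallest possible set.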

Frequently Asked Questions (FAQs)

Q1: What are the most robust electronic descriptors for initial transition metal catalyst screening? A: For a rapid, low-cost pre-screen, the following descriptors, derivable from periodic table data or minimal computation, offer a good starting point:

- d-band center estimate: Calculated from the elemental d-band center and coordination environment. Correlates with adsorption strength.

- Work function: For surfaces, estimated from slab models or simple composite descriptors.

- Pauling electronegativity: Useful for predicting charge transfer.

- Valence electron count: Critical for organometallic complexes.

Q2: How can I generate a meaningful descriptor set for a novel organic ligand in organocatalysis? A: Follow this protocol using the RDKit library in Python:

Q3: My dataset of DFT-calculated properties is small (<100 data points). Can I still use ML for pre-screening? A: Yes, but with caution. Use simple, interpretable models (e.g., Ridge Regression, Gaussian Process Regression) and low-dimensional descriptor sets to avoid overfitting. Consider using a "delta-learning" approach where you predict the difference from a known, similar catalyst system, which requires less data.

Q4: How do I validate my pre-screening pipeline before running it on thousands of candidates? A: Perform a retrospective validation study:

- Take a known catalytic system with 10-20 experimentally validated catalysts and inactive analogs.

- Run your entire pipeline (descriptor calculation -> model prediction -> ranking).

- Calculate the enrichment factor (EF) in the top 20% of your ranked list. A good pre-screen should have EF > 3, meaning it concentrates true hits early in the list.
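As a rough sketch of the last step, the enrichment factor can be computed from a ranked list of hit/non-hit labels as the hit rate in the top fraction divided by the hit rate of the whole set. The function name and the example labels are illustrative assumptions, not part of any library.

```python
def enrichment_factor(ranked_is_hit, top_fraction=0.2):
    """EF = (hit rate in the top fraction) / (hit rate in the full list).

    ranked_is_hit: booleans ordered from best-ranked to worst-ranked candidate.
    """
    n_total = len(ranked_is_hit)
    hits_total = sum(ranked_is_hit)
    if n_total == 0 or hits_total == 0:
        raise ValueError("need a non-empty list containing at least one hit")
    n_top = max(1, round(n_total * top_fraction))
    hits_top = sum(ranked_is_hit[:n_top])
    return (hits_top / n_top) / (hits_total / n_total)


# Made-up retrospective set: 20 ranked candidates, 4 of them known hits.
ranked = [True, True, False, True] + [False] * 10 + [True] + [False] * 5
ef = enrichment_factor(ranked, top_fraction=0.2)
print(f"EF(top 20%) = {ef:.2f}")
```

A random ranking gives EF close to 1, so values well above 1 indicate that the pipeline concentrates hits near the top of the list.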

Data Presentation

Table 1: Comparison of Descriptor Types for Catalyst Pre-Screening

| Descriptor Type | Examples | Computational Cost | Typical Correlation (R²) with DFT ΔG‡ | Best For |

|---|---|---|---|---|

| Elemental / Compositional | Electronegativity, Ionic Radius, Group Number | Very Low (<1 sec) | 0.3 - 0.5 | Initial bulk composition scan |

| Geometric | Coordination Number, Voronoi Tessellation | Low (sec-min) | 0.4 - 0.6 | Surface adsorption on alloys |

| Electronic (Semi-Empirical) | PM7 HOMO/LUMO, Extended Hückel Charges | Medium (min-hours) | 0.5 - 0.7 | Organometallic & molecular catalysts |

| Machine-Learned (Representation) | SOAP, MBTR, CGCNN | Medium-High (hours) | 0.6 - 0.9 | High-accuracy screening of known spaces |

Table 2: Enrichment Factor (EF₁₀%) for Different Pre-Screening Methods in a Retrospective Study of CO₂ Reduction Catalysts

| Pre-Screening Method | Number of Descriptors | EF₁₀% (Validation Set) | Final DFT Candidates Required |

|---|---|---|---|

| Random Selection | N/A | 1.0 | 1000 |

| d-band Center Only | 1 | 2.1 | 476 |

| Linear Model (5 Descriptors) | 5 | 4.7 | 213 |

| Random Forest (20 Descriptors) | 20 | 8.3 | 120 |

| Graph Neural Network (CGCNN) | N/A | 12.5 | 80 |

Experimental Protocols

Protocol: Calculating d-band Center Descriptors for Bimetallic Surfaces. Objective: To estimate the d-band center (ε_d) for a surface alloy using a simple, linear interpolation model. Steps:

- Obtain Reference Values: From literature DFT databases (e.g., the CatApp or Materials Project), gather the following for pure metals A and B:

- Pure metal d-band center: ε_d(A), ε_d(B)

- Surface coordination number for your structure of interest (e.g., fcc(111): CN=9).

- Calculate Strain Effect: For each component in the alloy, calculate the strain-induced shift Δε_d(strain) = -β * (Δa/a_0), where β ≈ 1.5 eV for late transition metals (the strain Δa/a_0 is dimensionless, so β carries units of energy), Δa is the change in lattice constant, and a_0 is the equilibrium lattice constant.

- Calculate Ligand Effect: Estimate the ligand effect from the difference in electronegativity: Δε_d(ligand) ≈ γ * Δχ, where γ is an empirical parameter (~0.3 eV/Pauling unit) and Δχ is the electronegativity difference between neighbor and host atoms.

- Combine: The final descriptor for atom A in an A-B alloy is ε_d(A, alloy) = ε_d(A, pure) + Δε_d(A, strain) + Δε_d(A, ligand).

- Surface Average: Calculate the weighted average based on the surface composition.
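The interpolation model in this protocol can be written out as a short sketch. The sign convention Δχ = χ(neighbor) - χ(host) is an assumption of this example, β is treated as an energy because the strain is dimensionless, and all numerical inputs are placeholders rather than tabulated reference values.

```python
def alloy_d_band_center(eps_d_pure, a_alloy, a0, chi_host, chi_neighbor,
                        beta=1.5, gamma=0.3):
    """Estimate eps_d (eV) for a host atom in an alloy environment.

    beta (eV) scales the strain shift, gamma (eV per Pauling unit) the ligand shift.
    """
    strain = (a_alloy - a0) / a0                  # dimensionless lattice strain
    d_strain = -beta * strain                     # strain-induced shift
    d_ligand = gamma * (chi_neighbor - chi_host)  # assumed sign convention
    return eps_d_pure + d_strain + d_ligand


def surface_average(eps_by_element, surface_fraction):
    """Composition-weighted average; the fractions are assumed to sum to 1."""
    return sum(eps_by_element[el] * surface_fraction[el] for el in eps_by_element)


# Placeholder inputs for a hypothetical host atom "A" with neighbors "B".
eps_a = alloy_d_band_center(eps_d_pure=-2.0, a_alloy=3.9, a0=4.0,
                            chi_host=2.2, chi_neighbor=1.9)
eps_surface = surface_average({"A": eps_a, "B": -1.5}, {"A": 0.75, "B": 0.25})
print(f"eps_d(A, alloy) = {eps_a:.4f} eV, surface average = {eps_surface:.4f} eV")
```

Because the model is linear in its inputs, it is cheap enough to evaluate for every site in a large alloy library before any DFT is run.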

Protocol: Building a Consensus Pre-Screening Model. Objective: To improve reliability by combining multiple simple models. Methodology:

- Data Preparation: Split your labeled DFT data (e.g., adsorption energies for 200 systems) into training (70%) and hold-out test (30%) sets.

- Model Training: Train three distinct, simple models on the same training set:

- Model M1: A linear model using 5 chemical descriptors.

- Model M2: A k-NN model using a different set of 3 structural descriptors.

- Model M3: A single decision tree using electronic descriptors.

- Generate Predictions: For each candidate in a large, unlabeled library, get predictions P1, P2, P3 from M1, M2, M3.

- Apply Consensus Rule: Rank candidates by a consensus score C = (Rank(P1) + Rank(P2) + Rank(P3)) / 3. Lower C indicates higher consensus.

- Validation: On the hold-out test set, show that the top-ranked candidates by consensus score C have a higher hit rate and lower standard deviation in prediction error than any single model.
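The consensus rule can be sketched in a few lines. This example assumes that a lower predicted value is better (for instance, a more negative adsorption energy), so rank 1 goes to the lowest prediction; ties are broken by input order. The prediction lists are made-up placeholders standing in for the outputs of M1, M2, and M3.

```python
def ranks(values):
    """Return 1-based ranks; rank 1 = lowest value (assumed most favorable)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for position, index in enumerate(order, start=1):
        result[index] = position
    return result


def consensus_scores(p1, p2, p3):
    """C = (Rank(P1) + Rank(P2) + Rank(P3)) / 3 for every candidate."""
    return [(a + b + c) / 3 for a, b, c in zip(ranks(p1), ranks(p2), ranks(p3))]


# Placeholder predictions for three candidates from three models.
p1 = [-0.5, -0.9, -0.2]
p2 = [-0.6, -0.8, -0.1]
p3 = [-0.9, -0.7, -0.3]

scores = consensus_scores(p1, p2, p3)
best = min(range(len(scores)), key=lambda i: scores[i])
print(scores, "best candidate index:", best)
```

Averaging ranks instead of raw predictions avoids having to put the three models on a common scale.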

Mandatory Visualization

Diagram 1: Sequential Catalyst Pre-Screening Workflow

Diagram 2: Descriptor-Model-Validation Relationship

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Descriptor-Based Pre-Screening

| Item / Solution | Function / Purpose |

|---|---|

| RDKit | Open-source cheminformatics toolkit for calculating 200+ 2D/3D molecular descriptors (e.g., topological polar surface area, Morgan fingerprints) from SMILES strings. |

| DScribe or SOAPlite | Python libraries for calculating atomistic structure descriptors like Smooth Overlap of Atomic Positions (SOAP) and Atom-Centered Symmetry Functions (ACSF) for materials/surfaces. |

| Matminer | A library for generating materials science feature matrices from composition, crystal structure, and band structure. Provides connectors to major materials databases. |

| scikit-learn | Essential machine learning library for building, training, and validating regression/classification models (e.g., Ridge, Random Forest) using your descriptor sets. |

| CatLearn | Catalyst-specific ML platform built on top of ASE and scikit-learn. Offers pre-built workflows for adsorption energy prediction and uncertainty quantification. |

| Pymatgen & ASE | Core Python libraries for representing and manipulating atomic structures. Enable geometric descriptor calculation and integration with DFT codes. |

| Chemical Intuition Rule Sets | Curated lists of SMARTS patterns or logic rules (e.g., to filter out unstable functional groups, toxic moieties, or non-synthesizable complexes) for initial candidate pruning. |

High-Throughput Workflow Automation with DFT Codes (VASP, Quantum ESPRESSO, GPAW)

Troubleshooting Guides and FAQs

Q1: My VASP relaxation is stuck in a loop, oscillating between similar ionic steps. How do I break this cycle within a high-throughput screening framework?

A: This often indicates issues with step size or convergence criteria. First, check IBRION and POTIM. For structural relaxations, try IBRION = 2 (conjugate gradient) with a reduced POTIM = 0.1. Enable SYMPREC = 1E-4 to handle slight symmetry deviations. In an automated workflow, implement a conditional check: if the total energy change is less than 0.1 meV/atom for 5 consecutive steps, the job should be stopped and flagged for manual review, preventing wasted compute cycles.
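The conditional check described above might look like the following sketch. It assumes the workflow has already parsed the total energy of each ionic step into a list (the parsing itself is not shown), and it is meant to be called only for jobs that have not yet met their force criterion. The function name is hypothetical.

```python
def is_stalled(energies_ev, n_atoms, tol_mev_per_atom=0.1, window=5):
    """True if the last `window` ionic steps each changed the total energy
    by less than `tol_mev_per_atom` (meV/atom)."""
    if len(energies_ev) < window + 1:
        return False  # not enough history to judge
    recent = energies_ev[-(window + 1):]
    deltas = [abs(b - a) / n_atoms * 1000.0 for a, b in zip(recent, recent[1:])]
    return all(d < tol_mev_per_atom for d in deltas)


# Placeholder energies (eV) for a 50-atom cell.
oscillating = [-250.000, -250.002, -250.000, -250.002, -250.000, -250.002]
progressing = [-249.00, -249.50, -249.80, -249.90, -249.95, -249.97]

print(is_stalled(oscillating, n_atoms=50))  # flag for manual review
print(is_stalled(progressing, n_atoms=50))  # keep running
```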

Q2: I get a "Charge density does not converge" error in Quantum ESPRESSO during SCF for metallic systems. How can I fix this systematically?

A: Metallic systems require smearing. Set occupations='smearing' together with smearing='mp' and degauss=0.02 (in Ry) in the SYSTEM namelist. In the ELECTRONS namelist, reduce mixing_beta from its default of 0.7 to 0.3 or 0.4. For automated screening, implement a fallback protocol: if the default SCF fails, the workflow should automatically restart the calculation with a smaller mixing_beta, a larger degauss, and a mixing_ndim above the default of 8.

Q3: GPAW calculation crashes with "OutOfMemory" on a large slab model, despite free memory on the node. What is the cause?

A: This is typically due to the default domain decomposition. Use the 'domain' and 'band' keys of the parallel dictionary (called parsize and parsize_bands in older GPAW releases) to control the decomposition manually. For a slab (planar) geometry, split the grid primarily in the z-direction (e.g., parallel={'domain': (1, 1, 4)} for 4 cores). In an HPC environment, integrate a resource-aware submission script that sets the domain decomposition based on the slab's aspect ratio and available cores.

Q4: During automated batch processing, VASP outputs the error "Error EDDDAV: Call to ZHEGV failed". What does this mean and how can the workflow handle it?

A: This is a linear algebra library error, often related to overlapping potentials or numerical instability. Automated responses should include: 1) Increasing PREC = Accurate. 2) Deleting the WAVECAR file to restart from a new guess. 3) Adding ADDGRID = .TRUE.. The workflow should attempt these fixes in order before escalating the job to a "failed" state.

Q5: How do I manage the computational cost when automating hundreds of catalyst surface energy calculations with different adsorbates?

A: Implement a tiered screening protocol. Use a fast, lower-precision method (e.g., GPAW with mode='lcao' and a single-zeta basis) for initial candidate filtering. Only the top candidates proceed to high-accuracy VASP or QE calculations. Cache and reuse wavefunctions from the clean slab calculation for all subsequent adsorbate calculations on that surface to dramatically reduce SCF steps.

Experimental Protocols for DFT-Based Catalyst Screening

Protocol 1: Adsorption Energy Calculation Workflow

- Clean Surface Relaxation: Build a symmetric slab model (>15 Å vacuum). Relax ionic positions with the bottom 2 layers fixed. Convergence: EDIFFG = -0.02 (eV/Å, VASP); forc_conv_thr = 1.0d-3 (Ry/Bohr, QE).

- Adsorbate Geometry Optimization: Place the adsorbate in multiple high-symmetry sites. Perform a gas-phase calculation of the isolated molecule in a large box.

- Adsorption Energy Calculation: Use the formula E_ads = E_(slab+ads) - E_slab - E_ads_gas. When a localized basis set is used (e.g., LCAO), correct for basis set superposition error (BSSE) using the counterpoise method for accurate benchmarking; plane-wave calculations are free of BSSE.

- High-Throughput Automation: Script the generation of all input files, submission to the queue, parsing of final energies, and calculation of E_ads into a database.
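The final bookkeeping step of this protocol is simple enough to sketch directly. The example assumes the total energies have already been parsed from the DFT outputs into plain numbers; the site names and energies are placeholders.

```python
def adsorption_energy(e_slab_ads, e_slab, e_gas):
    """E_ads = E_(slab+ads) - E_slab - E_ads_gas (all in eV)."""
    return e_slab_ads - e_slab - e_gas


def most_stable_site(site_energies, e_slab, e_gas):
    """Return (site, E_ads) for the site with the most negative adsorption energy."""
    e_ads = {site: adsorption_energy(e, e_slab, e_gas)
             for site, e in site_energies.items()}
    best = min(e_ads, key=e_ads.get)
    return best, e_ads[best]


# Placeholder total energies (eV) for one surface and one adsorbate.
E_SLAB = -200.0
E_GAS = -14.8
sites = {"top": -215.3, "bridge": -215.6, "hollow": -215.9}

best_site, best_e_ads = most_stable_site(sites, E_SLAB, E_GAS)
print(f"{best_site}: E_ads = {best_e_ads:.2f} eV")
```

With this sign convention, a more negative E_ads means stronger binding, so the minimum over sites is the value to store in the database.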

Protocol 2: Transition State Search for Activation Barriers

- Endpoint Stability: Confirm the optimized geometry of initial and final states (adsorbed configurations).

- Nudged Elastic Band (NEB) Initialization: Use the IDPP (Image Dependent Pair Potential) method to generate 5-7 initial images along the reaction path.

- NEB Calculation: Run with climbing image (CI-NEB). Key settings: ICHAIN = 0, LCLIMB = .TRUE. (VASP with the VTST extension); climb=True in ASE's NEB class (GPAW).

- Force Convergence: Use a tight threshold (< 0.05 eV/Å) for forces on the climbing image.

Table 1: Comparative Computational Cost of DFT Codes for a 50-Atom Metal Oxide Slab

| Code | Functional | Basis Set / Pseudopotential | Avg. Wall Time per SCF (s) | Memory per Core (MB) | Relative Cost per Simulation |

|---|---|---|---|---|---|

| VASP 6.3 | PBE | PAW (Standard) | 120 | 220 | 1.00 (Reference) |

| QE 7.1 | PBE | SSSP Efficiency | 95 | 180 | 0.79 |

| GPAW 22.8 | PBE | LCAO(SZ) | 15 | 90 | 0.12 |

| GPAW 22.8 | PBE | Plane-wave (600 eV) | 140 | 250 | 1.17 |

Table 2: Error Analysis in High-Throughput Adsorption Energies (vs. High-Precision Results)

| Automation Strategy | Mean Absolute Error (eV) | Max Error (eV) | Computational Time Saving |

|---|---|---|---|

| Single-Point on Fixed Bulk Geometry | 0.15 | 0.42 | 70% |

| Fixed Slab, Relaxed Adsorbate | 0.08 | 0.21 | 50% |

| Full Relaxation (Baseline) | 0.00 | 0.00 | 0% |

| Tiered Screening (LCAO -> PW) | 0.03 | 0.09 | 65% |

Visualizations

High-Throughput DFT Screening Workflow

SCF Convergence Troubleshooting Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Scripting Tools for DFT Automation

| Tool / Solution | Function in Workflow | Key Benefit for Cost Reduction |

|---|---|---|

| ASE (Atomic Simulation Environment) | Python framework to create, manipulate, and run calculations across VASP, QE, GPAW. | Unified interface prevents code-specific errors and automates pre/post-processing. |

| FireWorks / AiiDA Workflow Manager | Manages job dependencies, submission, and monitoring on HPC clusters. | Ensures optimal queue usage and automatic recovery from failures, saving compute time. |

| Pymatgen Structure Matcher | Algorithmically identifies duplicate structures in candidate pool. | Eliminates redundant calculations, directly reducing computational expense. |

| SSSP Pseudopotential Library | Curated, efficiency-tested pseudopotentials for Quantum ESPRESSO. | Provides reliable, lower-cutoff potentials that maintain accuracy while speeding calculations. |

| VASPKIT / Sumo | Command-line toolkits for VASP input generation and output analysis. | Automates symmetry analysis, band structure plotting, and error checking. |

| Custom Python Parsing Scripts | Extracts key metrics (energy, forces, eigenvalues) from diverse output files. | Enables rapid data aggregation from thousands of jobs for analysis. |

The Rise of Machine Learning Potentials and Surrogate Models

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My ML potential training fails with high validation loss, even with a seemingly diverse DFT dataset. What could be wrong? A: This is often a data quality or representation issue. First, verify the completeness of your reference DFT calculations. Ensure they include full convergence in k-points, energy cutoffs, and proper treatment of dispersion forces if needed. High loss can stem from:

- Inconsistent DFT Settings: Training data generated with varying parameters (e.g., different xc-functionals or convergence criteria). Protocol: Standardize all training data generation using a single, well-converged DFT protocol. Document functional (e.g., RPBE), cut-off energy, k-point grid, and smearing width.

- Poor Atomic Environment Sampling: Your dataset may miss critical configurations (e.g., transition states, defects, adsorbates). Protocol: Employ active learning or iterative sampling. Start with a small DFT-relaxed dataset, train a preliminary potential, run MD simulations, and extract configurations where model uncertainty (e.g., predicted variance) is high. Compute DFT energies for these and add them to the training set. Repeat.

Q2: My surrogate model for catalyst screening predicts formation energies that deviate significantly from DFT for new, unseen alloy compositions. How can I improve generalizability? A: This indicates model overfitting or inadequate feature engineering for the composition space.

- Action: Incorporate physically meaningful descriptors beyond basic composition. Use features like electronegativity differences, d-band center estimates (from simplified models), coordination numbers, or radial distribution function fingerprints.

- Protocol for Feature Generation:

- For a bulk or surface structure, calculate the Voronoi polyhedron for each atom.

- For each atom, compute the weighted average of properties (e.g., atomic number, group) from its nearest neighbors (within a cutoff radius).

- Use these local environment descriptors as input features alongside elemental properties.

- Verify: Perform leave-cluster-out cross-validation, where entire composition families (e.g., all Pt-Ni alloys) are held out during training and used only for testing.

Q3: When using an ML potential for molecular dynamics (MD), I observe unphysical bond breaking or energy drift at high temperatures. How do I diagnose this? A: This points to extrapolation beyond the potential's reliable domain or insufficient training on high-energy configurations.

- Diagnostic Steps:

- Check Configuration Robustness: Run a short MD simulation and save snapshots. For each snapshot, compute the model's uncertainty (if available) or the deviation between the ML-predicted energy and a single-point DFT calculation on a subset of frames. High deviations flag failure regions.

- Inspect Training Data: Ensure your training set includes configurations from high-temperature ab initio MD (AIMD) simulations, not just static relaxed structures.

- Protocol for High-Temperature Training Data Generation:

- Perform AIMD on a representative supercell of your catalyst system at the target temperature (e.g., 500K) for 20-50 ps.

- Extract uncorrelated frames (every 100 fs).

- Compute energies and forces for these frames using the same, consistent DFT setup as your static data.

- Add these to your training set with appropriate weights on force components.

Q4: The computational cost of generating the initial DFT dataset for training is itself prohibitive for my large catalyst library. Are there strategies to minimize this? A: Yes, a strategic down-selection is key.

- Strategy: Use a low-cost, high-throughput screening method (e.g., using a semi-empirical method or a very simple descriptor like the generalized coordination number) to filter candidate materials.

- Protocol for Tiered Screening:

- Tier 1: Screen thousands of candidates using a simple, interpretable model (e.g., linear scaling relations based on a few elemental properties). Select the top 20%.

- Tier 2: On the reduced set, perform more accurate but still affordable calculations (e.g., single-point DFT on fixed, guessed geometries). Select the top 50 from this tier.

- Tier 3: This set undergoes full DFT relaxation and electronic structure analysis. The results from this tier form your high-quality training dataset for the final surrogate model.

Table 1: Comparative Performance of ML Potentials for Catalytic Surface Simulations

| ML Potential Type | Typical Training Set Size (DFT Calculations) | Speed-up vs. DFT (MD step) | Mean Absolute Error (Energy) [meV/atom] | Typical Best Use Case in Catalyst Screening |

|---|---|---|---|---|

| Neural Network (e.g., ANI, NNP) | 10,000 - 100,000 | 10^3 - 10^4 | 1 - 5 | Reactive MD for adsorbate decomposition, diffusion on complex surfaces. |

| Gaussian Approximation (GAP) | 1,000 - 10,000 | 10^2 - 10^3 | 2 - 10 | Phase stability, defect properties in bulk catalyst materials. |

| Moment Tensor (MTP) | 5,000 - 50,000 | 10^3 - 10^4 | 1 - 8 | High-temperature stability of nanoparticle catalysts. |

| Graph Neural Network (e.g., M3GNet) | ~100,000 (from databases) | 10^2 - 10^3 | 3 - 15 | Preliminary screening of formation energies across wide composition spaces. |

Table 2: Cost-Benefit Analysis: Pure DFT vs. Surrogate Model Screening

| Screening Phase | Pure DFT High-Throughput (Estimated) | ML-Surrogate Model Approach (Estimated) | Key Benefit |

|---|---|---|---|

| Initial Candidate Generation | 10,000 CPU-hrs | 100 CPU-hrs (model training) + 1 CPU-hr (prediction) | >100x reduction in initial screening wall time. |

| Accuracy on Hold-out Test Set | N/A (Baseline) | MAE in formation energy: 20-50 meV/atom | Enables rapid prioritization with quantifiable error. |

| Time to First Prediction | Weeks to months (queue + compute) | Days (after model is trained) | Dramatically accelerated hypothesis testing. |

Experimental & Computational Protocols

Protocol 1: Generating a Robust Training Dataset for an Oxide-Supported Nanoparticle ML Potential

- System Preparation: Build initial structures for the metal nanoparticle (e.g., Pt55) and the oxide support (e.g., TiO2 slab).

- DFT Reference Calculations:

- Software: VASP (or Quantum ESPRESSO).

- Functional: RPBE + D3(BJ) dispersion correction.

- Convergence: Energy cutoff 520 eV, k-point spacing 0.03 Å⁻¹, electronic energy convergence 10^-6 eV.

- Sampling: Perform: a) Static relaxations of multiple nanoparticle isomers. b) Ab initio MD (AIMD) at 300K, 500K, and 800K for 20 ps each, saving frames every 50 fs. c) Nudged Elastic Band (NEB) calculations for key adsorbate diffusion steps.

- Data Extraction: Extract atomic positions, total energies, and atomic forces from all calculations. Assemble into a structured format (e.g., ASE database or .npz files).

Protocol 2: Active Learning Loop for ML Potential Development

- Train an initial ML potential (e.g., using DeePMD-kit or AMPTorch) on a seed DFT dataset (100-200 configurations).

- Deploy the potential in molecular dynamics simulations (e.g., LAMMPS) of the target system, exploring relevant temperatures and pressures.

- At regular intervals, compute the model's uncertainty per atom or use a committee of models to identify configurations with high predictive variance.

- Select the 50-100 most uncertain configurations and perform single-point DFT calculations on them.

- Add these new data points to the training set and retrain the model.

- Iterate steps 2-5 until the model's error on a fixed validation set plateaus and MD simulations show no unphysical events.
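Steps 3 and 4 of this loop can be illustrated with a committee-based selection sketch. It assumes each configuration has already been evaluated by several independently trained models and uses the spread of their energy predictions as the uncertainty measure. The function name and the numbers are hypothetical; packages such as DeePMD-kit provide their own model-deviation tools.

```python
from statistics import pstdev


def select_uncertain(committee_predictions, n_select):
    """Pick the configurations on which the committee disagrees most.

    committee_predictions: one list of per-model energies per configuration.
    Returns (indices of the n_select most uncertain configurations, all spreads).
    """
    spread = [pstdev(preds) for preds in committee_predictions]
    order = sorted(range(len(spread)), key=lambda i: spread[i], reverse=True)
    return order[:n_select], spread


# Placeholder energies (eV/atom) from a three-model committee for four MD snapshots.
snapshots = [
    [-1.00, -1.01, -0.99],
    [-2.00, -1.50, -2.50],
    [-0.50, -0.50, -0.50],
    [-3.00, -3.20, -2.80],
]

to_label, spreads = select_uncertain(snapshots, n_select=2)
print("send to DFT:", to_label)
```

The selected indices are the snapshots that would receive single-point DFT calculations before retraining.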

Diagrams

Title: ML Potential Development & Validation Workflow

Title: Tiered Catalyst Screening Strategy

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for ML-Driven Catalyst Screening

| Item / Software | Function in Research | Example/Note |

|---|---|---|

| VASP / Quantum ESPRESSO | Generates the reference DFT data (energies, forces, stresses) for training and final validation. | Essential for creating the "ground truth" dataset. RPBE-D3 is a common functional for catalysis. |

| Atomic Simulation Environment (ASE) | Python framework for setting up, manipulating, running, and analyzing atomistic simulations. Acts as a "glue" between DFT codes, ML libraries, and visualization. | Used to build catalyst surfaces, run NEB, and interface with ML packages. |

| DeePMD-kit / AMPTorch | Software packages specifically designed for training and deploying neural network-based interatomic potentials. | Converts DFT data into a ready-to-use ML potential for large-scale MD in LAMMPS. |

| LAMMPS | Classical molecular dynamics simulator with plugins to evaluate ML potentials, enabling large-scale, long-timescale simulations. | Used to run nanosecond-scale MD of catalytic systems at reaction conditions. |

| OCP / M3GNet Models | Pre-trained graph neural network models on massive materials datasets (e.g., OC20). Provide good initial potentials or feature representations for transfer learning. | Useful for quick property predictions or as a starting point for fine-tuning on a specific catalyst system. |

| pymatgen | Python library for materials analysis. Provides robust structure manipulation, feature/descriptor generation (e.g., local order parameters), and analysis tools. | Critical for converting crystal structures into numerical inputs (feature vectors) for surrogate models. |

Frequently Asked Questions (FAQs)

Q1: My calculated formation energy changes dramatically when I reduce the k-point density. Is this an error or expected behavior? A: This can be expected for certain systems. Metallic systems or those with dense electronic states near the Fermi level are highly sensitive to k-point sampling. A sparse grid may fail to integrate the density of states accurately, leading to significant errors in energy. Always perform a k-point convergence test for each unique material type in your screening project.

Q2: When simplifying a molecular catalyst's geometry for screening (e.g., removing ligands), how do I know which atoms are safe to remove? A: The core principle is to preserve the active site and its immediate electronic environment. Remove peripheral ligands that are not directly involved in bonding or charge transfer. However, you must verify that the simplified model reproduces key properties (e.g., frontier orbital shapes, spin density, binding energy trends) of the full system through validation calculations on a subset of candidates.

Q3: I used a highly reduced k-point grid for a high-throughput screening of 1000 materials. How reliable are the top 10 candidates identified? A: They are reliable as a first-pass filter. The goal of downsampling is to cheaply eliminate the vast majority of non-promising candidates. The top 10-50 candidates from the initial screen must be re-evaluated using higher-fidelity settings (denser k-points, full geometry) to confirm their ranking before any experimental suggestion.

Q4: Can I combine a reduced k-grid with a simplified geometry in the same calculation? A: Yes, this is a common tiered-screening approach. However, it compounds approximations. The recommended protocol is to apply one downsampling technique at a time during method validation to isolate its impact on accuracy.

Troubleshooting Guides

Issue: Total energy oscillates non-monotonically with increasing k-point density.

- Cause: This often occurs in metals or systems with symmetry-breaking. The changing grid may sample special points of the Brillouin zone with varying effectiveness.

- Solution: Switch from a regular Monkhorst-Pack grid to a Gamma-centered grid. Use an odd number of k-points in each direction (e.g., 3x3x3 instead of 4x4x4) to avoid sampling exactly at the Brillouin zone boundary. Consider using the tetrahedron method for metals instead of Gaussian smearing.

Issue: After removing solvent molecules or bulky ligands, my optimized structure of the active site collapses or distorts unrealistically.

- Solution: You have likely removed structurally important components. Apply constraints:

- Freeze: Keep the positions of key atoms (e.g., those bonding to removed ligands) fixed during optimization.

- Anchor: Add lightweight, terminating atoms (e.g., H atoms) to saturate dangling bonds left by removed fragments.

- Always compare the bond lengths and angles in the constrained core to the full system to ensure consistency.

Issue: A downsampled calculation predicts an incorrect ground state magnetic ordering or electronic structure.

- Cause: Reduced k-point grids can poorly describe magnetic interactions or band gaps, especially in correlated materials.

- Solution: Magnetic and electronic ground states are high-level properties. Do not use heavily downsampled parameters for their determination. Use the downsampled workflow only for pre-screening based on a simpler property (like formation enthalpy), then recalculate magnetic/electronic states for promising candidates with high accuracy.

Data Tables

Table 1: Typical K-Point Grid Convergence for Common Material Classes (Example Data)

| Material Class | Example System | Coarse Grid (Screening) | Fine Grid (Verification) | Energy Tolerance (meV/atom) |

|---|---|---|---|---|

| Bulk Metal | fcc Cu | 4x4x4 (MP) | 12x12x12 | < 1 |

| Semiconductor | Si | 3x3x3 (Gamma) | 9x9x9 | < 2 |

| 2D Sheet | Graphene | 6x6x1 (Gamma) | 18x18x1 | < 1 |

| Molecular Crystal | COF | 2x2x2 (Gamma) | 4x4x4 | < 5 |

| Insulating Oxide | MgO | 2x2x2 (MP) | 6x6x6 | < 3 |

MP: Monkhorst-Pack, Gamma: Gamma-centered grid.

Table 2: Impact of Common Geometric Simplifications on Catalytic Property Prediction

| Simplification | Typical Use Case | Computational Speed-up | Key Risk / Validation Needed |

|---|---|---|---|

| Remove Solvent/Implicit Model | Homogeneous catalyst | ~2-5x | Dielectric effects on reaction barriers |

| Truncate Peripheral Ligands | Organometallic complex | ~5-20x | Steric effects on substrate access |

| Substitute Heavy with Light Atoms (Pb → Si) | Perovskite screening | ~10x | Preserving orbital character & band edges |

| Use Cluster instead of Slab | Surface adsorption | ~50-100x | Edge effects on adsorbate binding energy |

Experimental Protocols

Protocol 1: K-point Convergence Test for High-Throughput Screening Setup

- Select Representative Systems: Choose 3-5 structures that span the chemical and structural diversity of your full screening library.

- Define Grid Sequence: Calculate total energy for each system using a series of increasingly dense k-point grids (e.g., 2x2x2, 3x3x3, 4x4x4, 6x6x6, 8x8x8). Use the same geometry and computational parameters for all.

- Reference Energy: Treat the energy from the densest grid as the reference (E_ref).

- Calculate Delta E: For each grid, compute ΔE = |E_grid - E_ref| per atom.

- Determine Threshold: Identify the grid density where ΔE falls below your chosen tolerance (e.g., 5 meV/atom) for all representative systems. This is your screening grid.

- Apply Grid: Use this determined grid for the high-throughput screening of all materials.
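The grid selection in this protocol can be sketched as follows. As a design choice of this example (not stated in the protocol), a grid is accepted only if it and every denser grid stay within tolerance for all representative systems, which guards against energies that oscillate with grid density. All energies are placeholders.

```python
def pick_screening_grid(grids, energies_ev, n_atoms, tol_mev_per_atom=5.0):
    """Return the coarsest grid whose error, and that of all denser grids,
    is below the tolerance for every system.

    grids: labels ordered coarse -> dense; the densest grid is the reference.
    energies_ev: {system: [total energy per grid, same order as `grids`]}.
    n_atoms: {system: number of atoms in the cell}.
    """
    if not grids:
        raise ValueError("no grids supplied")

    def delta(system, i):
        e = energies_ev[system]
        return abs(e[i] - e[-1]) / n_atoms[system] * 1000.0  # meV/atom

    for i in range(len(grids)):
        if all(delta(s, j) < tol_mev_per_atom
               for s in energies_ev for j in range(i, len(grids))):
            return grids[i]


grids = ["2x2x2", "3x3x3", "4x4x4", "6x6x6", "8x8x8"]
energies = {
    "metal": [-14.80, -14.95, -14.99, -15.001, -15.000],
    "oxide": [-48.10, -48.02, -48.005, -48.001, -48.000],
}
atoms = {"metal": 4, "oxide": 8}

screening_grid = pick_screening_grid(grids, energies, atoms, tol_mev_per_atom=5.0)
print("screening grid:", screening_grid)
```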

Protocol 2: Validation of a Simplified Molecular Geometry

- Full System Calculation: Optimize the geometry of the full, unmodified catalyst molecule using high-quality settings (e.g., hybrid functional, dense basis set/grid, solvent model).

- Property Benchmark: From this calculation, extract key properties: HOMO/LUMO energy & shape, spin density on the metal center, and key bond lengths (e.g., M-Ligand).

- Simplified Model Calculation: Create the simplified model (e.g., ligand truncation). Optimize its geometry, potentially with constraints (see Troubleshooting).

- Comparative Analysis: Calculate the same properties from step 2 for the simplified model.

- Acceptance Criteria: If the property differences are within a defined threshold (e.g., HOMO shift < 0.2 eV, bond length change < 0.05 Å, similar spin density isosurface), the model is validated for screening. If not, revise the simplification strategy.
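The numerical part of the acceptance criteria can be automated with a small check like the one below. The dictionary layout and field names are assumptions of this sketch, the property values are placeholders, and the spin-density isosurface comparison is left to visual inspection.

```python
def validate_simplified_model(full, simplified,
                              max_homo_shift_ev=0.2, max_bond_change_a=0.05):
    """Compare HOMO energy and key bond lengths of the simplified and full models.

    Returns (accepted, list of properties that exceed their threshold).
    """
    failures = []
    if abs(simplified["homo_ev"] - full["homo_ev"]) >= max_homo_shift_ev:
        failures.append("HOMO")
    for bond, length in full["bonds_a"].items():
        if abs(simplified["bonds_a"][bond] - length) >= max_bond_change_a:
            failures.append(bond)
    return len(failures) == 0, failures


# Placeholder benchmark values for a hypothetical complex.
full_model = {"homo_ev": -5.40, "bonds_a": {"M-N": 2.05, "M-O": 1.98}}
truncated = {"homo_ev": -5.52, "bonds_a": {"M-N": 2.07, "M-O": 2.06}}

accepted, failed = validate_simplified_model(full_model, truncated)
print("accepted:", accepted, "failed checks:", failed)
```

Returning the list of failed properties, and not only a pass/fail flag, makes it easier to decide which part of the simplification to revise.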

Visualizations

Tiered Screening Workflow for DFT Cost Reduction

Geometric Simplification Protocol for a Molecular Catalyst

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Software | Function in Downsampling Research |

|---|---|

| VASP / Quantum ESPRESSO / CASTEP | Primary DFT engines where k-point grids and geometry inputs are defined and tested. |

| pymatgen / ASE (Atomistic Simulation Environment) | Python libraries for automating the generation of k-point meshes and creating/modifying crystal/molecular structures. |

| High-Performance Computing (HPC) Cluster | Essential for running the large number of calculations required for convergence testing and high-throughput screening. |

| MPI (Message Passing Interface) | Enables parallelization of DFT calculations across multiple cores, making fine k-point grid calculations feasible. |

| Job Scheduler (Slurm, PBS) | Manages computational resources and queues the hundreds to thousands of individual calculations in a screening workflow. |

| Convergence Testing Scripts | Custom scripts (Python/Bash) to automatically launch series of calculations with varying k-point density and parse results. |

| Visualization Software (VESTA, JMol) | Used to inspect atomic structures before and after simplification to ensure chemical reasonableness. |

Technical Support Center: Troubleshooting Guides & FAQs

FAQs for DFT-Based High-Throughput Screening (HTS)

Q1: My DFT calculation for a candidate catalyst diverges or fails to converge. What are the primary causes? A: This is often due to an unstable initial geometry or incorrect electronic structure guess. First, ensure your initial structure is pre-optimized with a faster, classical force field method (e.g., UFF). Second, adjust the SCF (Self-Consistent Field) convergence parameters. Increase the number of SCF cycles (e.g., to 500) and consider using a damping or smearing technique (e.g., Fermi-Dirac smearing of 0.1 eV) for metallic systems. Using a better initial guess from an atomic charge calculation can also help.

Q2: How do I validate that my reduced-cost method (e.g., GFN-FF, semi-empirical) provides accuracy comparable to standard GGA/PBE for adsorption energies? A: You must perform a benchmark study. Select a subset of 20-50 candidate materials. Calculate the key descriptor (e.g., *OH adsorption energy) using both the high-level method (PBE-D3) and the reduced-cost method. Perform a linear regression analysis. A reliable reduced-cost method should yield an R² > 0.9 and a Mean Absolute Error (MAE) of less than 0.15 eV when compared to the benchmark.

Q3: My computed overpotential for the Oxygen Evolution Reaction (OER) seems physically unrealistic (e.g., > 2 V). What step is likely wrong? A: The error typically lies in the scaling relationship or the reference potential calculation. 1) Verify the stability of all intermediate adsorption geometries (*O, *OH, *OOH). 2) Double-check the treatment of the chemical potential of the proton-electron pair. In the computational hydrogen electrode (CHE) model, μ(H+ + e-) is set equal to ½ μ(H2, gas) at U = 0 V vs. SHE, so no absolute electrode potential needs to be computed. 3) Confirm you are using the formula η_OER = max(ΔG1, ΔG2, ΔG3, ΔG4)/e - 1.23 V.

Q4: When screening enzyme mimetics, how do I handle the simulation of solvent effects efficiently in a high-throughput workflow? A: For high-throughput screening, explicit solvent models are too costly. Use an implicit solvation model (e.g., SMD, COSMO). Ensure the dielectric constant matches your solvent (ε=78.4 for water). For proton-coupled electron transfer (PCET) reactions critical to mimetics, you must also consistently apply a correction for the H+ free energy in the chosen implicit solvent model. The SMD model as implemented in Gaussian or ORCA is recommended; for plane-wave VASP calculations, an implicit-solvation extension such as VASPsol serves the same purpose.

Experimental Protocols for Key Validation Steps

Protocol 1: Benchmarking Reduced-Cost Computational Methods

- Curation of Test Set: Assemble a diverse test set of 30 known catalysts (e.g., metals, oxides, single-atom sites) from literature.

- Descriptor Calculation (High-Level): For each material, compute the adsorption energy of a key reaction intermediate (e.g., CO2˙⁻ for CO2RR) using a robust DFT functional (e.g., RPBE-D3(BJ)) with a plane-wave basis set (cutoff > 500 eV) and fine k-point grid.

- Descriptor Calculation (Low-Level): Repeat the calculation for the same geometries using the reduced-cost method (e.g., GFN2-xTB, PM7).

- Statistical Analysis: Plot the low-level vs. high-level energies. Calculate the Pearson correlation coefficient (R), R², MAE, and Root Mean Square Error (RMSE). The method is viable if MAE < 0.2 eV and R² > 0.85.
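
The statistical analysis step can be sketched in a few lines of standard-library Python. This is an illustration only: the function names `benchmark_metrics` and `is_viable` are hypothetical, R² is taken as the square of the Pearson coefficient (which matches the R² of a simple linear fit), and the default thresholds are the ones quoted in this protocol.

```python
import math

def benchmark_metrics(e_low, e_high):
    """Compare low-level and high-level adsorption energies (both in eV)."""
    if len(e_low) != len(e_high) or len(e_low) < 2:
        raise ValueError("need two equal-length sequences with at least 2 entries")
    n = len(e_low)
    errors = [lo - hi for lo, hi in zip(e_low, e_high)]
    mae = sum(abs(e) for e in errors) / n
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    mean_lo = sum(e_low) / n
    mean_hi = sum(e_high) / n
    cov = sum((lo - mean_lo) * (hi - mean_hi) for lo, hi in zip(e_low, e_high))
    var_lo = sum((lo - mean_lo) ** 2 for lo in e_low)
    var_hi = sum((hi - mean_hi) ** 2 for hi in e_high)
    if var_lo == 0 or var_hi == 0:
        raise ValueError("correlation is undefined for constant data")
    r = cov / math.sqrt(var_lo * var_hi)  # Pearson correlation coefficient
    return {"R": r, "R2": r * r, "MAE": mae, "RMSE": rmse}

def is_viable(metrics, mae_max=0.2, r2_min=0.85):
    """Apply the viability thresholds from this protocol (MAE in eV)."""
    return metrics["MAE"] < mae_max and metrics["R2"] > r2_min
```

Note that a constant offset between the two methods leaves R² at 1 while still contributing to MAE and RMSE, which is why the protocol checks both kinds of metric.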

Protocol 2: Calculating the Theoretical Overpotential for OER

- Surface Model Construction: Build a stable slab model (e.g., 3-5 layers) of your catalyst surface with a >15 Å vacuum. Fix the bottom 1-2 layers.

- Intermediate Adsorption: Optimize the geometry for the clean surface and with each OER intermediate (*OH, *O, *OOH) adsorbed at the active site.

- Free Energy Calculation: Compute the Gibbs free energy change (ΔG) for each of the four OER steps using: ΔG = ΔE_DFT + ΔZPE - TΔS + ΔG_U + ΔG_pH, where ΔE_DFT is the DFT total energy difference, ΔZPE is the zero-point energy correction, TΔS is the entropy contribution (from vibrational frequencies), ΔG_U is the effect of applied bias (ΔG_U = -eU), and ΔG_pH = k_B T × ln(10) × pH.

- Potential Determining Step: Identify the step with the largest positive ΔG. The theoretical overpotential is η = (max[ΔG1, ΔG2, ΔG3, ΔG4] / e) - 1.23 V.
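
As a worked illustration of the last step, the sketch below picks the potential-determining step and evaluates η from four step free energies. The function name `oer_overpotential` and the numbers in the usage note are hypothetical; the inputs are assumed to be ΔG values in eV at U = 0 V, so dividing by e gives the same number in volts.

```python
def oer_overpotential(delta_g_steps, equilibrium_potential=1.23):
    """Return (eta in V, 1-based index of the potential-determining step).

    delta_g_steps: the four step free energies in eV, evaluated at U = 0 V.
    """
    if len(delta_g_steps) != 4:
        raise ValueError("OER is modelled here as exactly four steps")
    limiting = max(range(4), key=lambda i: delta_g_steps[i])
    # An energy in eV divided by the elementary charge is numerically a potential in V.
    eta = delta_g_steps[limiting] - equilibrium_potential
    return eta, limiting + 1
```

For example, the illustrative set `[1.50, 1.60, 1.20, 0.62]` eV gives step 2 as potential-determining with η of about 0.37 V. If the four values do not sum to roughly 4.92 eV (4 × 1.23 eV), the reference energies are worth rechecking before trusting η.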

Table 1: Performance Benchmark of Reduced-Cost Methods for Adsorption Energy (ΔE_AD) Prediction

| Reduced-Cost Method | Reference DFT Method | Test System (Descriptor) | Mean Absolute Error (MAE) [eV] | R² Value | Avg. Computational Time Saved |

|---|---|---|---|---|---|

| GFN2-xTB | RPBE-D3/def2-TZVP | *OOH on TM-N-C (OER) | 0.18 | 0.91 | ~95% |

| PM6 | B3LYP-D3/6-31G* | *COOH on Au surfaces (CO2RR) | 0.32 | 0.79 | ~98% |

| SQM (DFTB3) | PBE-D3/PAW | *N2 on Fe-SAM (NRR) | 0.22 | 0.88 | ~90% |

| Classical Force Field (ReaxFF) | PBE-D3/PAW | *H on Pt-alloys (HER) | 0.45 | 0.65 | ~99% |

Table 2: Key Experimental Validation Metrics for Predicted Top-Performing Catalysts

| Catalyst Material (Predicted) | Target Reaction | Predicted Overpotential (η) / Activity Descriptor | Experimental Validation Metric | Reported Performance (Top Performer) |

|---|---|---|---|---|

| NiFe Prussian Blue Analogue | OER | η = 0.35 V @ 10 mA/cm² | Overpotential @ 10 mA/cm² | η = 0.27 ± 0.05 V (1M KOH) |

| CoPc/MXene Composite | CO2 to CO | ΔG(*COOH) = 0.45 eV | Faradaic Efficiency for CO | FE_CO = 92% @ -0.7 V vs. RHE |

| FeN4-C Single-Atom Site | ORR | Onset Potential = 0.92 V vs. RHE | Half-wave Potential (E_1/2) | E_1/2 = 0.85 V vs. RHE (0.1M KOH) |

Visualizations

The Scientist's Toolkit: Research Reagent & Software Solutions

Table 3: Essential Computational Tools for DFT Screening

| Item/Category | Example(s) | Primary Function in Workflow |

|---|---|---|

| Atomic Structure Database | Materials Project, OQMD, ICSD | Provides crystallographic data for bulk and surfaces to build initial computational models. |

| Automation & Workflow Manager | ASE (Atomic Simulation Environment), FireWorks, AiiDA | Scripts and manages thousands of DFT calculations, handling job submission, monitoring, and data retrieval. |

| Reduced-Cost DFT Method | GFN-xTB, DFTB, PM7 | Performs initial geometry optimization and rapid property screening, filtering 1000s of candidates down to 10s. |

| High-Fidelity DFT Code | VASP, Quantum ESPRESSO, CP2K, Gaussian | Performs accurate, final electronic structure calculations on short-listed candidates with explicit solvation/dispersion. |

| Post-Processing & Analysis | pymatgen, custom Python scripts (NumPy, pandas), Matplotlib | Analyzes output files to compute descriptors (adsorption energies, d-band centers, overpotentials) and creates visualizations. |

| Descriptor Library | CatKit, dscribe | Generates common catalyst descriptors (coordination numbers, symmetry functions) for machine learning readiness. |

Solving Common Pitfalls and Optimizing DFT Calculations for Speed

Troubleshooting Guides & FAQs

Q1: My calculation stops with "BRMIX: very serious problems" or the total energy is oscillating wildly. What is wrong?

A: This is a classic sign of electronic convergence failure. It often occurs with metallic systems or systems with a small band gap.

- Primary Fix: Adjust the mixing parameters for the self-consistent field (SCF) cycle. Reduce `AMIX` (e.g., from the VASP default of 0.4 to 0.2 or 0.1) and `BMIX` (e.g., from the default of 1.0 to 0.01; values near 0.0001 approach simple linear mixing). For difficult metallic systems, use `ISYM = 0` and `ICHARG = 2` (start from a superposition of atomic charge densities) on a second run.

- Advanced Method: Employ Kerker mixing (`IMIX = 1`) or use a more robust algorithm such as blocked Davidson (`ALGO = Normal`) instead of the RMM-DIIS-based `ALGO = Fast`. For hybrid calculations, `ALGO = All` is sometimes necessary.

- Protocol: Start a new calculation from the previous charge density (`ICHARG = 1`) with the modified `AMIX`, `BMIX`, and `IMIX` parameters. Monitor the energy difference in the `OSZICAR` file.

Q2: My ionic relaxation is stuck in a loop, cycling between similar structures without reaching the force criteria.

A: This indicates ionic convergence failure, often due to the electronic structure not being fully converged at each ionic step or the step size being too large.

- Primary Fix: Tighten the electronic convergence criterion (`EDIFF`) for the inner SCF loop (e.g., from `1E-4` to `1E-5` or `1E-6`) to ensure accurate forces at each geometry step.

- Secondary Fix: Change the optimization algorithm. Switch from conjugate gradient (`IBRION = 2`) to the quasi-Newton (RMM-DIIS) method (`IBRION = 1`), which often converges better close to a minimum. You can also reduce the initial step size (`POTIM = 0.1`).

- Protocol: Restart the relaxation from the last reasonable structure (`CONTCAR` -> `POSCAR`) with `IBRION = 1`, `EDIFF = 1E-6`, and `POTIM = 0.1`.
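
The restart protocol above can be scripted. The sketch below is a minimal illustration, not a complete workflow tool: the helper names `update_incar_text` and `prepare_restart` are hypothetical, and the INCAR handling assumes one `TAG = value` pair per line (no `;`-separated tags).

```python
import shutil
from pathlib import Path

def update_incar_text(text, overrides):
    """Return INCAR text with the given tags replaced, or appended if absent."""
    pending = {tag.upper(): value for tag, value in overrides.items()}
    out = []
    for line in text.splitlines():
        tag = line.split("=", 1)[0].strip().upper() if "=" in line else None
        if tag in pending:
            out.append(f"{tag} = {pending.pop(tag)}")
        else:
            out.append(line)
    # Tags that were not present in the original file are appended at the end.
    out.extend(f"{tag} = {value}" for tag, value in pending.items())
    return "\n".join(out) + "\n"

def prepare_restart(old_dir, new_dir, overrides):
    """Copy CONTCAR to POSCAR in new_dir and write an INCAR with the overrides."""
    old_dir, new_dir = Path(old_dir), Path(new_dir)
    contcar = old_dir / "CONTCAR"
    if not contcar.is_file() or contcar.stat().st_size == 0:
        raise FileNotFoundError("CONTCAR is missing or empty; nothing to restart from")
    new_dir.mkdir(parents=True, exist_ok=True)
    shutil.copy(contcar, new_dir / "POSCAR")
    for name in ("KPOINTS", "POTCAR"):
        if (old_dir / name).is_file():
            shutil.copy(old_dir / name, new_dir / name)
    incar_text = (old_dir / "INCAR").read_text()
    (new_dir / "INCAR").write_text(update_incar_text(incar_text, overrides))
```

A call such as `prepare_restart("relax_01", "relax_02", {"IBRION": 1, "EDIFF": "1E-6", "POTIM": 0.1})` (directory names are placeholders) reproduces the settings listed in the protocol.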

Q3: How do I know if my k-point mesh is dense enough for a converged total energy?

A: k-point convergence must be tested systematically. A mesh that is too sparse introduces significant error, while too dense wastes computational resources—a critical balance in catalyst screening.

- Protocol: Perform a series of single-point energy calculations on the same geometry, incrementally increasing the k-point mesh density (e.g., 3x3x3, 5x5x5, 7x7x7). Plot the total energy against the inverse of the k-point count (or mesh dimension). The mesh is considered converged when the energy change is less than your target accuracy (typically 1-5 meV/atom for catalysts).
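
For non-cubic cells such as slabs, a uniform series like 3x3x3, 5x5x5 is not appropriate, and it is more convenient to derive each mesh from a target k-point spacing. The sketch below assumes the common convention N_i = max(1, ceil(|b_i| / spacing)), with reciprocal vectors b_i that include the factor 2π and the spacing in Å⁻¹; the function name `mesh_from_spacing` is hypothetical, and the exact convention of your DFT code should be checked against its documentation.

```python
import math

def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def mesh_from_spacing(lattice, spacing):
    """Return (N1, N2, N3) for lattice vectors in Å and a k-spacing in Å^-1."""
    if spacing <= 0:
        raise ValueError("spacing must be positive")
    a1, a2, a3 = lattice
    volume = abs(_dot(a1, _cross(a2, a3)))
    if volume == 0:
        raise ValueError("lattice vectors are coplanar")
    # Unnormalised reciprocal vectors; the 2*pi/volume factor is applied below.
    recip = (_cross(a2, a3), _cross(a3, a1), _cross(a1, a2))
    mesh = []
    for b in recip:
        length = 2.0 * math.pi * math.sqrt(_dot(b, b)) / volume
        mesh.append(max(1, math.ceil(length / spacing)))
    return tuple(mesh)
```

Evaluating this for a decreasing list of spacings (for instance 0.5, 0.4, 0.3, 0.2 Å⁻¹) yields a consistent series of meshes for the convergence test, with fewer points along long cell axes.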

Q4: I am screening transition metal oxide catalysts. Which convergence parameters are most critical to standardize?

A: For consistent and reliable results across a materials set, you must standardize:

- k-point Density: Converged for the largest unit cell in your set.

- Energy Cutoff (`ENCUT`): Converged to at least 1 meV/atom. Use the highest `ENMAX` from the POTCAR files as a safe baseline.

- Force Convergence Criterion (`EDIFFG`): Use a consistent, stringent value (e.g., -0.01 eV/Å) for all ionic relaxations.

- SCF Convergence (`EDIFF`): Use a tight criterion (e.g., `1E-6` eV) to ensure accurate energies and forces.
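
One way to standardize `ENCUT` across a materials set is to read every `ENMAX` entry from the POTCAR files and scale the largest one. The sketch below is illustrative: the helper names are hypothetical, the regular expression assumes POTCAR lines of the form `ENMAX  =  400.000; ENMIN  =  300.000 eV`, and the default factor of 1.3 is an assumed safety margin rather than a universal rule.

```python
import math
import re

# Assumed POTCAR format: "ENMAX  =  400.000; ENMIN  =  300.000 eV"
ENMAX_PATTERN = re.compile(r"ENMAX\s*=\s*([0-9]*\.?[0-9]+)")

def max_enmax(potcar_text):
    """Return the largest ENMAX (eV) found in one POTCAR's text."""
    values = [float(v) for v in ENMAX_PATTERN.findall(potcar_text)]
    if not values:
        raise ValueError("no ENMAX entry found")
    return max(values)

def standardized_encut(potcar_texts, factor=1.3):
    """Return one ENCUT (eV, rounded up to an integer) for the whole set."""
    highest = max(max_enmax(text) for text in potcar_texts)
    # The small epsilon guards against floating-point noise just above an integer.
    return math.ceil(factor * highest - 1e-9)
```

The resulting value is still only a starting point; it should be confirmed with an explicit cutoff convergence test on a representative structure.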

Q5: How can I reduce computational cost during screening without sacrificing reliability for convergence?

A: This is the core of efficient high-throughput DFT.

- Strategy 1: Use a lower precision preset (`PREC = Normal`) for initial ionic relaxations, and reserve `PREC = Accurate` for the final single-point energies.

- Strategy 2: Implement a two-step k-point approach: relax structures with a moderate k-mesh, then compute the final energy with a denser, converged mesh.

- Strategy 3: For large cells, start relaxations from pre-converged charge densities of similar, smaller systems to reduce initial SCF steps.

- Strategy 4: Automate convergence testing scripts to establish material-class-specific defaults before launching large screens.

Data Tables

Table 1: Typical Convergence Thresholds for Catalyst Screening

| Parameter | Symbol (VASP) | Low Precision/Relax | High Precision/Final Energy | Unit |

|---|---|---|---|---|

| Electronic Convergence | EDIFF | 1E-4 | 1E-6 (or 1E-7) | eV |

| Force Convergence | EDIFFG | -0.02 | -0.01 | eV/Å |

| k-point Mesh (Bulk) | KPOINTS | ~20-30 / Å⁻³ | Converged (~50-100 / Å⁻³) | k-points per reciprocal Å³ |

| Plane-Wave Cutoff | ENCUT | 1.1*max(ENMAX) | 1.3*max(ENMAX) | eV |

| SCF Mixing Parameter | AMIX | 0.2 | 0.05 | - |

Table 2: Troubleshooting Matrix for Common Issues

| Symptom | Likely Culprit | Immediate Action | Long-Term Solution |

|---|---|---|---|

| SCF oscillation, BRMIX error | Electronic (Charge) | Reduce AMIX, BMIX; Use ALGO=Normal | Test IMIX, LMAXMIX for elements |

| Ionic relaxation loops | Ionic (Forces) | Tighten EDIFF to 1E-6; Try IBRION=1 | Ensure k-points/ENCUT are converged |

| Energy jumps with k-points | k-point Sampling | Increase k-mesh uniformly | Perform formal k-point convergence test |

| Inconsistent formation energies | Inconsistent Parameters | Standardize ENCUT, k-grid, EDIFFG across set | Create project-wide INCAR templates |

Experimental Protocols

Protocol 1: Systematic k-point Convergence Test

- Input Preparation: Fully relax a representative structure (e.g., a bulk unit cell of your catalyst) using a moderate, safe set of parameters (`ENCUT = 520` eV, `KSPACING = 0.3`).

- Single-Point Series: Using the converged geometry, perform a series of static (`NSW = 0`) calculations. Incrementally increase the k-mesh density. For a cubic cell, use equivalent meshes: 2x2x2, 3x3x3, 4x4x4, 5x5x5, 6x6x6, 7x7x7.

- Data Extraction: From each `OUTCAR`, extract the total energy (`energy(sigma->0)`).

- Analysis: Plot Total Energy (eV) vs. N_k^(-1/3) (proportional to k-spacing). The converged region is where the curve plateaus. Select the coarsest mesh within your target accuracy (e.g., 2 meV/atom of the asymptotic value).
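
The analysis step can also be automated once the energies are collected. In the sketch below the densest mesh is used as a stand-in for the asymptotic value, which is an assumption that only holds if the series extends well into the plateau; the function name `coarsest_converged_mesh` and the sample numbers in the usage note are hypothetical.

```python
def coarsest_converged_mesh(results, n_atoms, tol_mev_per_atom=2.0):
    """Pick the coarsest mesh whose energy, and that of every denser mesh,
    lies within the tolerance of the densest-mesh energy.

    results: iterable of (label, n_kpoints, total_energy_eV) tuples.
    """
    ordered = sorted(results, key=lambda entry: entry[1])
    if not ordered or n_atoms <= 0:
        raise ValueError("need at least one result and a positive atom count")
    reference = ordered[-1][2]  # densest mesh as proxy for the asymptotic value
    chosen = ordered[-1]
    # Walk from dense to coarse and stop at the first mesh outside tolerance,
    # so an accidental agreement of a very coarse mesh is not accepted.
    for entry in reversed(ordered[:-1]):
        deviation = abs(entry[2] - reference) / n_atoms * 1000.0  # meV/atom
        if deviation > tol_mev_per_atom:
            break
        chosen = entry
    return chosen[0]
```

With made-up energies for a 4-atom cell of -20.100, -20.180, -20.204, -20.199, and -20.200 eV for meshes 2x2x2 through 6x6x6, the function selects 4x4x4 at a 2 meV/atom tolerance.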

Protocol 2: Diagnosing and Fixing SCF Divergence

- Identification: Monitor the `OSZICAR` file. If `dE` changes sign repeatedly, or the energy keeps oscillating, without the change dropping below `EDIFF`, the SCF is diverging.

- Step 1 (Restart with Mixing): Copy the last `CHGCAR` and `WAVECAR` (if available) to a new directory. Create a new `INCAR` with adjusted mixing settings (for example, `ICHARG = 1` together with the modified `AMIX`, `BMIX`, and `IMIX` values discussed under Q1 above).

- Step 2 (If Step 1 Fails): Remove `WAVECAR` and set `ICHARG = 2` to restart from a superposition of atomic charge densities with `ALGO = All`. For spin-polarized systems, check the initial magnetic moments.

- Step 3 (For Metals): Consider enabling Methfessel-Paxton smearing (`ISMEAR = 1`, `SIGMA = 0.2`) and setting `LMAXMIX = 4` for d-elements or `6` for f-elements.
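
The identification step can be approximated with a small parser. This sketch assumes that each electronic step in `OSZICAR` is a line of the form `DAV:  N  E  dE  ...` (or `RMM:`), with whitespace-separated columns; the function names and the `window` and `min_flips` thresholds are hypothetical heuristics, not values prescribed by VASP.

```python
import re

# Assumed line layout: "<ALGO>:  N  E  dE  d_eps  ncg  rms  [rms(c)]"
SCF_LINE = re.compile(r"^\s*[A-Z]{2,3}\s*:\s*(\d+)\s+(\S+)\s+(\S+)")

def last_scf_de(oszicar_text):
    """Return the dE values of the most recent SCF cycle in the file."""
    de_values = []
    for line in oszicar_text.splitlines():
        match = SCF_LINE.match(line)
        if not match:
            continue
        if int(match.group(1)) == 1:
            de_values = []  # a new SCF cycle (next ionic step) starts
        de_values.append(float(match.group(3)))
    return de_values

def looks_divergent(de_values, ediff=1e-6, window=8, min_flips=4):
    """Heuristic: dE keeps flipping sign and never drops below EDIFF."""
    recent = de_values[-window:]
    if len(recent) < window:
        return False  # not enough steps to judge
    if not all(abs(value) > ediff for value in recent):
        return False  # at least one step was already below the threshold
    flips = sum(1 for a, b in zip(recent, recent[1:]) if a * b < 0)
    return flips >= min_flips
```

Running `looks_divergent(last_scf_de(text))` on the contents of a live `OSZICAR` gives an early signal to stop the job and move to Step 1, rather than waiting for the SCF iteration limit.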

Visualization

Diagram 1: DFT Convergence Diagnosis Workflow