Advanced Catalyst Design: Optimizing Denoising in Diffusion Models for Accelerated Drug Discovery

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on optimizing the denoising process within diffusion models for catalyst design. We explore the foundational principles linking diffusion model dynamics to molecular generation, detail practical methodologies for application in catalyst discovery, address common challenges and optimization strategies, and compare validation techniques. The goal is to equip practitioners with the knowledge to efficiently generate novel, high-performance catalytic molecules, thereby accelerating the pipeline for therapeutic development.

From Noise to Novelty: Understanding Diffusion Models for Catalyst Generation

Technical Support Center: Troubleshooting & FAQs

Context: This support center is designed for researchers optimizing the denoising process in diffusion models for catalyst research. The following guides address common pitfalls in training and sampling DDPMs for molecular and material generation.

Troubleshooting Guides

Issue: Model Generates Blurry or Unrealistic Catalyst Structures

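- Check 1: Noise Schedule (Beta Schedule). An improperly scaled noise schedule can prevent the model from learning meaningful data distributions. For a linear schedule with T=1000, verify that β_t rises from ~1e-4 to ~0.02. For a cosine schedule, which is defined through α_bar_t rather than fixed β endpoints, verify that α_bar_t decays smoothly from ~1 to ~0 over the defined timesteps.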

- Check 2: Loss Function Instability. Monitor your Mean Squared Error (MSE) loss between predicted and actual noise. Exploding gradients suggest an issue with the loss scale or optimizer. Implement gradient clipping.

- Protocol: Use the standard DDPM training protocol: 1) Sample a clean data point `x0` (e.g., a catalyst structure representation), 2) Sample a random timestep `t` uniformly from `[1, T]`, 3) Sample noise `ε` from N(0, I), 4) Compute noisy sample `xt = sqrt(α_bar_t)*x0 + sqrt(1-α_bar_t)*ε`, 5) Train the U-Net to predict `ε` from `xt` and `t`.

Issue: Sampling Process Produces Repetitive or Low-Diversity Outputs

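- Check 1: Reverse Process Variance. The reverse process variance Σθ can be fixed to βt (the upper bound) or to the posterior variance β̃t (the lower bound), or it can be learned as an interpolation between the two. Because β̃t ≤ βt, the βt choice injects more noise per step. For catalyst discovery, where diversity is key, a learned variance or the larger βt may yield better exploration of the material space.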

- Check 2: Classifier-Free Guidance Weight. If using conditioning (e.g., on catalytic activity), a guidance scale that is too high can collapse diversity. Systematically sweep the guidance scale.
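
To make Check 1 concrete, here is a minimal NumPy sketch comparing the two fixed variance choices. It assumes the linear schedule (1e-4 to 0.02, T=1000) used elsewhere in this guide; the function names are illustrative and not taken from any library.

```python
import numpy as np

def linear_betas(T=1000, beta_start=1e-4, beta_end=0.02):
    """Linear beta schedule (endpoints are the values quoted in this guide)."""
    return np.linspace(beta_start, beta_end, T)

def posterior_variance(betas):
    """beta_tilde_t = (1 - alpha_bar_{t-1}) / (1 - alpha_bar_t) * beta_t, with alpha_bar_0 = 1."""
    alpha_bar = np.cumprod(1.0 - betas)
    alpha_bar_prev = np.concatenate(([1.0], alpha_bar[:-1]))
    return (1.0 - alpha_bar_prev) / (1.0 - alpha_bar) * betas

betas = linear_betas()
beta_tilde = posterior_variance(betas)
ratio = beta_tilde / betas  # 0 at t=1, approaches 1 for large t

# The gap between the two choices is largest in the final (low-noise) steps.
print(ratio[[0, 9, 99, 999]])
```

The two choices differ mainly in the last few reverse steps, which is where fine geometric detail is resolved.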

Frequently Asked Questions (FAQs)

Q1: How do I choose the number of diffusion timesteps (T) for modeling catalyst molecules? A: The choice is a trade-off. A higher T (e.g., 1000) makes each reverse step smaller and therefore easier to learn, but it increases sampling time. For 3D molecular structures (point clouds or graphs), a T between 500 and 1000 is common. Start with 1000 and consider distillation techniques for faster sampling post-training.

Q2: My model fails to condition on desired catalytic properties. What should I do? A: Ensure your conditioning mechanism is correctly implemented. For classifier-free guidance, randomly drop the condition (e.g., target binding energy) during training (10-30% of the time). Use a sufficiently strong conditioning embedding (e.g., via a linear projection added to the timestep embedding).

Q3: How can I quantitatively evaluate the quality of generated catalyst structures? A: Use a combination of metrics, as no single metric is sufficient.

Table 1: Key Metrics for Evaluating Generated Catalysts

| Metric | Description | Target for Optimization |
|---|---|---|
| Validity Rate | % of generated structures that obey chemical valence rules. | > 95% |
| Uniqueness | % of unique, non-duplicate structures within a large sample (e.g., 10k). | > 80% |
| Reconstruction Error | Mean Squared Error (MSE) between an original and a reconstructed molecule. | Minimize |
| Property Distribution | Distance (e.g., MMD) between distributions of a key property (e.g., formation energy) in generated vs. training data. | Minimize |
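
As a rough illustration of the Uniqueness and Property Distribution rows, the sketch below computes a uniqueness rate from string identifiers and a biased squared-MMD estimate with an RBF kernel. The kernel bandwidth, the function names, and the synthetic formation-energy samples are assumptions made for the example.

```python
import numpy as np

def uniqueness_rate(identifiers):
    """Fraction of distinct entries, e.g. canonical SMILES or structure hashes."""
    return len(set(identifiers)) / len(identifiers)

def rbf_mmd2(x, y, bandwidth=1.0):
    """Biased estimate of squared MMD between two 1-D property samples."""
    x = np.asarray(x, dtype=float).reshape(-1, 1)
    y = np.asarray(y, dtype=float).reshape(-1, 1)

    def kernel(a, b):
        return np.exp(-((a - b.T) ** 2) / (2.0 * bandwidth ** 2))

    return kernel(x, x).mean() + kernel(y, y).mean() - 2.0 * kernel(x, y).mean()

# Synthetic stand-ins for formation energies (eV/atom); not real data.
rng = np.random.default_rng(0)
train = rng.normal(-0.5, 0.2, 500)
generated_close = rng.normal(-0.5, 0.2, 500)
generated_far = rng.normal(0.5, 0.2, 500)
print(rbf_mmd2(train, generated_close), rbf_mmd2(train, generated_far))
```

A smaller value means the generated property distribution sits closer to the training distribution; the bandwidth should be chosen on the scale of the property being compared.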

Q4: What is the role of the U-Net architecture, and are there alternatives for catalyst DDPMs? A: The U-Net is the standard denoiser (ε_θ) due to its effective downsampling and upsampling for capturing structure at multiple scales. For graph-based catalyst representations (atoms as nodes), Graph Neural Network (GNN) U-Nets or Transformers are becoming popular alternatives that directly operate on the graph structure.

Experimental Protocols

Protocol 1: Training a DDPM for Catalyst Generation

- Data Preparation: Represent catalyst structures as 3D point clouds (atom coordinates) with feature vectors (atom type, charge) or as molecular graphs. Standardize the coordinate space.

- Noise Schedule Configuration: Define the total timesteps `T=1000` and a linear beta schedule from `β1=1e-4` to `βT=0.02`. Pre-compute `α_t = 1 - β_t` and `α_bar_t = Π α_s`.

- Model Setup: Instantiate a noise-predicting U-Net or Graph U-Net. Include timestep embedding via sinusoidal or learned positional embeddings. Optionally include condition embedding.

- Training Loop: For each batch:

  - Sample `x0` from training data.

  - Sample `t ~ Uniform({1, ..., T})`.

  - Sample noise `ε ~ N(0, I)`.

  - Compute `xt = sqrt(α_bar_t) * x0 + sqrt(1 - α_bar_t) * ε`.

  - Predict `ε_θ = model(xt, t, condition)`.

  - Compute loss `L = MSE(ε, ε_θ)`.

  - Update model weights via backpropagation.

- Validation: Monitor loss on a held-out set. Periodically generate samples to assess qualitative progress.
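
The training loop in Protocol 1 can be sketched in NumPy as follows. This is a framework-agnostic outline under stated assumptions: `zero_model` is a hypothetical placeholder for the U-Net or graph denoiser, the batch is random data standing in for atom coordinates, and the weight update is omitted because it depends on the deep learning framework in use.

```python
import numpy as np

rng = np.random.default_rng(0)
T = 1000
betas = np.linspace(1e-4, 0.02, T)      # linear schedule from the protocol
alpha_bar = np.cumprod(1.0 - betas)     # alpha_bar_t = product of alpha_s for s <= t

def q_sample(x0, t, eps):
    """Forward noising. t holds one 1-indexed timestep per sample, shape (batch,)."""
    a = alpha_bar[t - 1].reshape(-1, *([1] * (x0.ndim - 1)))
    return np.sqrt(a) * x0 + np.sqrt(1.0 - a) * eps

def training_loss(model, x0, condition=None):
    """One evaluation of the simplified DDPM objective L = MSE(eps, eps_theta)."""
    t = rng.integers(1, T + 1, size=x0.shape[0])   # t ~ Uniform({1, ..., T})
    eps = rng.standard_normal(x0.shape)            # eps ~ N(0, I)
    xt = q_sample(x0, t, eps)
    eps_pred = model(xt, t, condition)
    return float(np.mean((eps - eps_pred) ** 2))

def zero_model(xt, t, condition):
    """Hypothetical placeholder denoiser that always predicts zero noise."""
    return np.zeros_like(xt)

x0 = rng.standard_normal((8, 12, 3))   # 8 structures, 12 atoms, xyz coordinates
loss = training_loss(zero_model, x0)
print(loss)                            # close to 1 for a predictor that outputs zeros
```

A loss near 1 is the baseline for an untrained predictor on unit-variance noise, so a useful sanity check is that training drives the value well below it.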

Protocol 2: Conditional Sampling with Classifier-Free Guidance

- Load the trained conditional DDPM.

- Define the guidance scale `ω` (e.g., 2.0).

- Start from pure noise: `xT ~ N(0, I)`.

- For `t = T, ..., 1`:

  - Generate two noise predictions: one with the condition `ε_c` and one without `ε_u`.

  - Compute guided prediction: `ε_guided = ε_u + ω * (ε_c - ε_u)`.

  - Update `x_{t-1}` using the DDPM sampling equation with `ε_guided`.

- The final `x0` is the generated catalyst structure conditioned on the desired property.
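
Protocol 2 can be sketched as below, assuming ancestral DDPM sampling with the fixed variance choice σ_t² = β_t. The `toy_model` is a hypothetical stand-in for a trained denoiser: it is the exact noise predictor for data concentrated at a single value (the condition, or 0 when the condition is dropped), which makes the effect of `ω` easy to inspect.

```python
import numpy as np

def cfg_ddpm_sample(model, shape, condition, betas, omega=2.0, seed=0):
    """Ancestral DDPM sampling with classifier-free guidance."""
    rng = np.random.default_rng(seed)
    alphas = 1.0 - betas
    alpha_bar = np.cumprod(alphas)
    x = rng.standard_normal(shape)                 # x_T ~ N(0, I)
    for t in range(len(betas), 0, -1):             # t = T, ..., 1
        i = t - 1                                  # 0-based array index
        eps_c = model(x, t, condition)             # conditional prediction
        eps_u = model(x, t, None)                  # unconditional prediction
        eps = eps_u + omega * (eps_c - eps_u)      # guided prediction
        mean = (x - betas[i] / np.sqrt(1.0 - alpha_bar[i]) * eps) / np.sqrt(alphas[i])
        noise = rng.standard_normal(shape) if t > 1 else np.zeros(shape)
        x = mean + np.sqrt(betas[i]) * noise       # no noise is added at the final step
    return x

demo_betas = np.linspace(1e-4, 0.02, 200)          # short schedule for the demo only
demo_alpha_bar = np.cumprod(1.0 - demo_betas)

def toy_model(x, t, condition):
    """Hypothetical denoiser: exact noise predictor for point-mass data."""
    target = 0.0 if condition is None else condition
    a = demo_alpha_bar[t - 1]
    return (x - np.sqrt(a) * target) / np.sqrt(1.0 - a)

sample = cfg_ddpm_sample(toy_model, (4, 3), condition=1.0, betas=demo_betas, omega=2.0)
print(sample)
```

In this toy setting the output lands at ω times the condition value, which illustrates how ω > 1 extrapolates past the plain conditional prediction. With a real model the same mechanism is what trades diversity for property adherence.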

Visualizations

DDPM Forward & Reverse Process for Catalysts

Classifier-Free Guidance for Conditional Generation

The Scientist's Toolkit

Table 2: Essential Research Reagents & Tools for Catalyst DDPM Research

| Item | Function in Catalyst DDPM Research |
|---|---|
| Materials Project Database | Source of DFT-relaxed crystal structures (e.g., CIF files) and calculated properties (formation energy, band gap) for training data. |
| Open Catalyst Project (OC) Datasets | Large-scale DFT-calculated datasets linking catalyst structures to adsorption energies and reaction pathways. |
| RDKit or ASE (Atomic Simulation Environment) | Libraries for converting catalyst structures (SMILES, CIF) into graph or feature representations, and for validating generated structures. |
| 3D Equivariant GNN U-Net | The core denoising network architecture that respects rotational and translational symmetries of 3D atomic systems. |
| Linear/Cosine Noise Scheduler | Defines the variance schedule for the forward diffusion process, critical for stable training and sample quality. |
| Classifier-Free Guidance Implementation | Algorithmic component to steer generation towards catalysts with user-specified target properties (e.g., low overpotential). |
| Metrics for Material Evaluation (Validity, Uniqueness, MMD) | Quantitative benchmarks to assess the chemical plausibility, diversity, and fidelity of generated catalyst candidates. |

Why Catalysts? The Unique Challenge of Active Site and Stability Design.

Technical Support Center: Troubleshooting Catalyst Denoising in Diffusion Model Research

FAQ & Troubleshooting Guide

Q1: During the denoising diffusion process for catalyst generation, my model consistently produces structures with unrealistic metal-metal distances or coordination numbers. What could be the issue?

A: This is a common failure mode related to the noise schedule and training data fidelity.

- Root Cause: An improperly calibrated noise schedule adds too much or too little noise at critical steps, corrupting the geometric priors learned from your training dataset. It can also stem from a training set with inconsistent or sparse examples of stable coordination environments.

- Troubleshooting Steps:

- Visualize the Noise Corruption: Plot the per-step noise variance (β_t) across your diffusion schedule. A schedule that ramps too quickly may destroy local structural information prematurely.

- Analyze Training Data: Compute the distribution of metal-ligand distances and coordination numbers in your ground-truth catalyst dataset. Compare this to the distribution in your generated samples.

- Adjust Schedule: Implement a cosine-based noise schedule, which often provides a more gradual corruption process, better preserving mid-scale structural motifs.
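
The "Analyze Training Data" step can be sketched with plain NumPy as follows. The 2.6 Å cutoff and the idealized octahedral test geometry are assumptions for illustration; a real analysis would use element-specific cutoffs and, for periodic systems, a neighbor list that handles periodic images.

```python
import numpy as np

def coordination_stats(coords, metal_indices, cutoff=2.6):
    """Return coordination numbers and metal-neighbor distances within a cutoff (in Å)."""
    coords = np.asarray(coords, dtype=float)
    counts, distances = [], []
    for m in metal_indices:
        d = np.linalg.norm(coords - coords[m], axis=1)
        mask = d <= cutoff
        mask[m] = False                      # exclude the metal atom itself
        counts.append(int(mask.sum()))
        distances.extend(d[mask].tolist())
    return counts, distances

# Idealized octahedral site: metal at the origin, six ligands at 2.0 Å.
octahedron = [[0, 0, 0],
              [2, 0, 0], [-2, 0, 0],
              [0, 2, 0], [0, -2, 0],
              [0, 0, 2], [0, 0, -2]]
cn, dist = coordination_stats(octahedron, metal_indices=[0])
print(cn, sorted(set(dist)))
```

Running the same function over the training set and over a batch of generated samples gives two histograms of coordination numbers and distances that can be compared directly.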

Q2: My diffusion model generates chemically valid active sites, but the predicted catalytic activity (from a downstream evaluator) is poor. How can I refine the generation towards higher activity?

A: This points to a disconnect between the generative objective (data distribution matching) and the ultimate design goal (high activity).

- Root Cause: The unconditional diffusion process learns to reproduce your training data distribution as a whole, which may be dominated by low- or medium-activity catalysts.

- Troubleshooting Steps:

- Implement Guidance: Introduce classifier-free guidance during sampling. Condition your model on a continuous variable representing a predicted activity descriptor (e.g., adsorption energy, d-band center).

- Re-weight the Training Set: Curate your training set to over-represent high-performance catalysts, or implement a loss function that weights examples by their measured or computed activity.

Q3: The generated catalyst structures are active but predicted to be unstable under reaction conditions (e.g., sintering, leaching). How can I build stability constraints into the denoising pipeline?

A: Integrating stability is the core "dual-design" challenge.

- Root Cause: Stability is often a global property of the material, not just the active site, requiring simultaneous design across multiple length scales.

- Troubleshooting Steps:

- Multi-Scale Conditioning: Train the diffusion model with multiple conditions: active site geometry (local) and stability descriptors (global), such as formation energy or cohesive energy. Use a cross-attention mechanism to integrate these conditions during denoising.

- Post-Generation Filtering: Develop a fast, surrogate stability classifier (e.g., a graph neural network). Use it to screen generated candidates and only pass stable ones for full activity evaluation.

- Protocol: Implement a rejection sampling loop where unstable generations are fed back as negative examples to guide subsequent denoising steps.

Q4: I have limited high-quality catalyst data for training. What are effective strategies for training a robust diffusion model with small datasets?

A: Data scarcity is a major constraint. The following strategies can mitigate overfitting.

- Root Cause: Overparameterized models memorize rare training examples instead of learning generalizable rules of catalyst structure.

- Troubleshooting Steps:

- Employ Pre-training: Start with a model pre-trained on a large, diverse corpus of inorganic crystal structures (e.g., from the Materials Project). Fine-tune it on your specialized catalyst dataset.

- Leverage Data Augmentation: Apply symmetry-preserving rotations, translations, and atom substitutions to your training data.

- Use a Latent Diffusion Model: Compress structures into a lower-dimensional latent space using a pre-trained variational autoencoder (VAE). Train the diffusion process in this smaller latent space, which is more data-efficient.
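
For the data augmentation step, a minimal sketch of a rigid-body augmentation is shown below. It draws a random proper rotation from the QR decomposition of a Gaussian matrix and applies it to centered coordinates; the function names are illustrative. Atom substitution is not covered because it changes the chemistry and needs domain-specific rules.

```python
import numpy as np

def random_rotation(rng):
    """Random proper rotation matrix (determinant +1) via QR decomposition."""
    q, r = np.linalg.qr(rng.standard_normal((3, 3)))
    q = q * np.sign(np.diag(r))          # fix column signs for a well-defined draw
    if np.linalg.det(q) < 0:             # flip one axis to avoid reflections
        q[:, 0] = -q[:, 0]
    return q

def augment(coords, rng):
    """Center the structure, then apply a random rotation."""
    coords = np.asarray(coords, dtype=float)
    centered = coords - coords.mean(axis=0)
    return centered @ random_rotation(rng).T

def pairwise_distances(coords):
    diff = coords[:, None, :] - coords[None, :, :]
    return np.linalg.norm(diff, axis=-1)

rng = np.random.default_rng(0)
structure = rng.standard_normal((10, 3))
rotated = augment(structure, rng)
# Interatomic distances are unchanged by the augmentation.
print(np.allclose(pairwise_distances(structure), pairwise_distances(rotated)))
```

If the denoiser is already E(3)-equivariant, this augmentation is redundant for the network itself, but it remains useful for non-equivariant backbones such as voxel U-Nets.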

Table 1: Comparison of Diffusion Noise Schedules on Catalyst Generation Quality

| Noise Schedule Type | Validity Rate (%) | Uniqueness (%) | Coverage (%) | Stable Structures (%, E_form < 0 eV/atom) |
|---|---|---|---|---|
| Linear | 85.2 | 73.1 | 65.4 | 71.5 |
| Cosine | 92.7 | 88.5 | 82.3 | 85.9 |
| Sigmoid | 89.1 | 81.2 | 78.8 | 80.2 |

Table 2: Impact of Classifier-Free Guidance Scale on Target Property Optimization

| Guidance Scale (s) | Success Rate (%, ΔG_H* < 0.2 eV) | Avg. Pairwise Tanimoto Similarity (higher = less diverse) | Stability Rate (%) |
|---|---|---|---|
| 1.0 (no guidance amplification) | 12.5 | 0.41 | 86.2 |
| 2.0 | 31.8 | 0.52 | 83.1 |
| 3.0 | 47.2 | 0.65 | 77.4 |
| 4.0 | 45.1 | 0.78 | 69.8 |

Experimental Protocol: Training a Conditioned Denoising Diffusion Model for Bimetallic Catalysts

Objective: Train a model to generate novel, stable bimetallic nanoparticles with optimized oxygen reduction reaction (ORR) activity.

Materials & Workflow:

- Dataset Curation: Assemble a dataset of ~50,000 relaxed bimetallic cluster structures from DFT databases, each labeled with formation energy (E_form) and ORR overpotential (η_ORR).

- Featurization: Represent each structure as a 3D graph: nodes (atoms) with features (atomic number, charge), edges with features (distance, bond order).

- Model Architecture: Implement a 3D Equivariant Graph Neural Network (EGNN) as the denoising network (ε_θ).

- Conditioning: Encode continuous conditioning vectors for E_form and η_ORR. Inject them into the EGNN using feature-wise linear modulation (FiLM).

- Training: Use a standard variational lower bound (VLB) loss. Corrupt structures over T=1000 steps using a cosine noise schedule.

- Sampling: Generate candidates via reverse diffusion. Use classifier-free guidance with a scale of s=3.0 to steer generation towards low η_ORR and low E_form.

The Scientist's Toolkit: Key Research Reagents & Computational Tools

Table 3: Essential Resources for Catalyst Diffusion Model Research

| Item Name | Function / Purpose | Example/Format |
|---|---|---|
| Catalyst Training Datasets | Provides ground-truth atomic structures and property labels for model training. | OC20, Materials Project, OQMD, user-generated DFT libraries. |
| Equivariant GNN Backbone | The core denoising network; must respect 3D rotation/translation symmetry. | EGNN, SE(3)-Transformers, Tensor Field Networks. |
| Noise Scheduler | Defines the forward noise corruption process (β_t). | Linear, Cosine, Sigmoid schedulers (customizable). |
| Property Predictor | Fast surrogate model to evaluate generated candidates for activity/stability. | Graph-based regression model (e.g., MEGNet, ALIGNN). |
| First-Principles Code | For final validation and refinement of top-generated candidates. | VASP, Quantum ESPRESSO, Gaussian. |
| Structure Visualization | Critical for analyzing and interpreting generated catalyst structures. | VESTA, OVITO, PyMOL. |

Visualizations

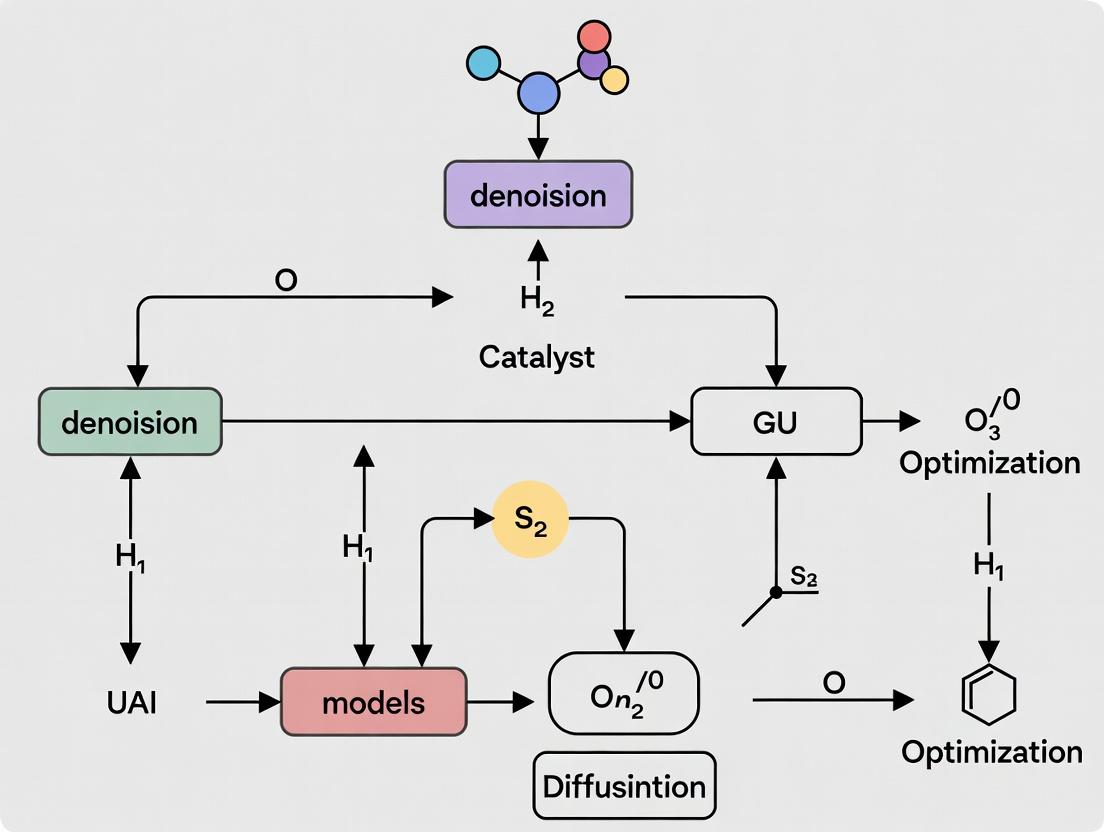

Diagram 1: Denoising Workflow for Catalyst Design

Diagram 2: Stability-Activity Dual-Design Logic

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions

Q1: During sampling, my generated catalyst structures become blurry or unrealistic after a certain timestep. What key parameters should I adjust?

A: This is often related to an incorrect noise schedule or an insufficient number of timesteps. For catalyst research where precise atomic placement is critical, a cosine-based noise schedule often outperforms linear schedules by adding noise more gradually at the start. Increase your total timesteps (T) to 1000-4000 to provide a more defined reverse trajectory. Ensure your beta_start and beta_end parameters in the variance schedule are tuned to prevent overly aggressive early denoising, which can trap the model in poor local minima for molecular structures.

Q2: My reverse process diverges, producing high-frequency artifacts in the electron density maps. How can I stabilize it? A: Divergence often stems from mismatched timestep discretization between training and inference. Verify that the noise schedule (the α_bar_t values) and the timestep indexing used by your sampler (e.g., DDPM, DDIM) match those used in training, even when sampling on a reduced subset of timesteps. If the problem persists, consider fine-tuning the noise predictor with a lower learning rate, or switch to a stochastic differential equation (SDE) solver with corrector steps, as in Predictor-Corrector samplers, which refine the sample at each timestep.

Q3: What is the optimal balance between the number of timesteps (T) and computational cost for generating plausible catalyst candidates? A: The relationship is non-linear. Beyond a threshold (typically ~1000 timesteps for complex molecules), gains diminish. Use a learned noise schedule or a variance-preserving process to optimize efficiency. For rapid screening, a well-tuned DDIM sampler can reduce sampling steps to 50-250 without catastrophic quality loss, by leveraging a non-Markovian reverse process.
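
The step reduction mentioned in Q3 can be sketched as a deterministic DDIM sampler (η = 0) that visits an evenly spaced subset of the training timesteps. The signature `model(x, t)` and the `toy_model` used for the demonstration are assumptions; the toy model is the exact noise predictor for data concentrated at a single value `MU`.

```python
import numpy as np

def ddim_sample(model, shape, alpha_bar, num_steps=50, seed=0):
    """Deterministic DDIM sampling on a sub-sequence of the T training timesteps."""
    rng = np.random.default_rng(seed)
    T = len(alpha_bar)
    timesteps = np.linspace(T, 1, num_steps).round().astype(int)  # descending, 1-indexed
    x = rng.standard_normal(shape)
    for j, t in enumerate(timesteps):
        a_t = alpha_bar[t - 1]
        # alpha_bar of the next (less noisy) step; 1.0 after the last step
        a_prev = alpha_bar[timesteps[j + 1] - 1] if j + 1 < num_steps else 1.0
        eps = model(x, t)
        x0_pred = (x - np.sqrt(1.0 - a_t) * eps) / np.sqrt(a_t)
        x = np.sqrt(a_prev) * x0_pred + np.sqrt(1.0 - a_prev) * eps
    return x

alpha_bar = np.cumprod(1.0 - np.linspace(1e-4, 0.02, 1000))
MU = 0.7  # arbitrary point-mass location for the toy data

def toy_model(x, t):
    """Hypothetical denoiser: exact noise predictor when all data equal MU."""
    a = alpha_bar[t - 1]
    return (x - np.sqrt(a) * MU) / np.sqrt(1.0 - a)

out = ddim_sample(toy_model, (5, 3), alpha_bar, num_steps=50)
print(np.allclose(out, MU))
```

Note that the α_bar_t values come from the full training schedule; only the set of visited timesteps changes, which is why no retraining is needed.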

Q4: How does the choice of noise schedule impact the discovery of novel catalytic active sites? A: The schedule dictates the exploration-exploitation trade-off in the latent space. Aggressive schedules (high early noise) may explore more but yield noisy outputs. Conservative schedules exploit training data but may lack novelty. For catalyst design, a sub-Variance Preserving (sub-VP) schedule is recommended as it maintains higher signal-to-noise ratio at intermediate timesteps, preserving crucial local bonding information during generation.

Troubleshooting Guides

Issue: Mode Collapse in Generated Catalyst Structures

- Symptoms: The model generates the same or very similar molecular scaffolds regardless of input noise.

- Diagnosis: Often caused by an overly simplified noise schedule (e.g., linear with high `beta_end`) or too few denoising steps, causing the reverse process to converge to a high-likelihood mode too quickly.

- Solution:

  - Adjust Schedule: Shift from linear to cosine schedule (`alpha_bar_t = f(t)/f(0)` with `f(t) = cos((t/T + s)/(1+s) * π/2)^2` and `s=0.008`).

  - Increase Stochasticity: In the reverse process, increase the variance of the reverse diffusion step (`σ_t`) by using the stochastic sampler (DDPM) instead of deterministic (DDIM) for the discovery phase.

  - Protocol: Retrain the model with the new schedule for 50k steps. During sampling, monitor the diversity of Coulomb matrix eigenvalues across a batch of 100 generated structures.
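
The cosine schedule referred to above is defined on the cumulative signal level rather than on β_t directly. A minimal sketch, assuming the common normalization by `f(0)` and a clipping of β_t at 0.999 to avoid a singular final step (the clipping value is an assumption, as are the function names):

```python
import math

def cosine_alpha_bar(T=1000, s=0.008):
    """Return alpha_bar_0 ... alpha_bar_T for the cosine schedule."""
    f = [math.cos((t / T + s) / (1 + s) * math.pi / 2) ** 2 for t in range(T + 1)]
    return [ft / f[0] for ft in f]

def betas_from_alpha_bar(alpha_bar, max_beta=0.999):
    """beta_t = 1 - alpha_bar_t / alpha_bar_{t-1}, clipped for numerical stability."""
    return [min(1.0 - alpha_bar[t] / alpha_bar[t - 1], max_beta)
            for t in range(1, len(alpha_bar))]

alpha_bar = cosine_alpha_bar()
betas = betas_from_alpha_bar(alpha_bar)
# Roughly half of the signal is still present at the midpoint of the schedule.
print(alpha_bar[0], alpha_bar[500], betas[0], betas[-1])
```

Compared with a linear β schedule, the signal level falls more slowly through the middle of the trajectory, which is the property this guide relies on for preserving mid-scale structural motifs.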

Issue: Unphysical Bond Lengths or Angles in Output

- Symptoms: Generated 3D coordinates result in atomic distances or angles not observed in stable compounds.

- Diagnosis: The reverse process is not properly constrained by physical laws. The noise level at critical denoising timesteps may be too low to correct errors.

- Solution:

- Guidance Scale: Apply classifier-free guidance with a scale of 1.5-3.0 during sampling, using energy-based or geometric constraints as the conditioning signal.

- Timestep-Specific Correction: Introduce a projection step at each reverse timestep within a late-stage window (e.g., from t=300 down to t=100) that minimally adjusts coordinates to satisfy predefined bond length/angle ranges.

- Protocol: After each denoising step `x_{t-1} = denoise(x_t, t)`, apply a correcting function: `x_{t-1}' = project_to_feasible_manifold(x_{t-1})`. Validate using RDKit or ASE to check for valence errors.
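
One possible form of `project_to_feasible_manifold` is sketched below. It handles bond-length ranges only, using an iterative pairwise correction that moves both atoms of an out-of-range bond symmetrically along the bond vector. The bond list, the distance bounds, and the number of sweeps are assumptions; angle constraints and element-specific ranges would need additional terms.

```python
import numpy as np

def project_to_feasible_manifold(coords, bonds, d_min, d_max, sweeps=10):
    """Nudge bonded pairs into [d_min, d_max] (in Å) with symmetric displacements."""
    x = np.array(coords, dtype=float)
    for _ in range(sweeps):
        for i, j in bonds:
            v = x[j] - x[i]
            d = np.linalg.norm(v)
            target = min(max(d, d_min), d_max)      # nearest feasible length
            if d > 1e-12 and target != d:
                shift = 0.5 * (d - target) * v / d  # half the correction per atom
                x[i] += shift
                x[j] -= shift
    return x

# A single bond stretched to 3.0 Å is pulled back to the upper bound.
stretched = [[0.0, 0.0, 0.0], [3.0, 0.0, 0.0]]
fixed = project_to_feasible_manifold(stretched, bonds=[(0, 1)], d_min=1.0, d_max=1.6)
print(np.linalg.norm(fixed[1] - fixed[0]))
```

For a single bond the correction is exact after one sweep. For coupled bonds the sweeps only approximate a true projection, so the result should still be validated with the valence checks named in the protocol.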

Table 1: Impact of Noise Schedule on Catalyst Generation Metrics

| Noise Schedule | Timesteps (T) | Validity Rate (%)\* | Novelty (%)\*\* | Time per Sample (s) |
|---|---|---|---|---|
| Linear (β₁=1e-4, β_T=0.02) | 1000 | 67.2 | 34.5 | 1.8 |
| Cosine | 1000 | 88.7 | 41.2 | 1.9 |
| Square-root | 1000 | 72.1 | 38.9 | 1.8 |
| Learned | 1000 | 85.4 | 39.7 | 2.1 |
| Linear (β₁=1e-4, β_T=0.02) | 250 | 45.6 | 22.1 | 0.5 |
| Cosine | 250 | 78.3 | 35.8 | 0.5 |

\* Percentage of generated structures with no valence errors. \*\* Percentage of structures not found in the training set (Tanimoto similarity < 0.4).

Table 2: Reverse Process Sampler Comparison for Active Site Generation

| Sampler | Sampling Steps | Success Rate (ΔG<0.5 eV) | Diversity (Avg. pairwise RMSD) | Required Guidance Scale |
|---|---|---|---|---|
| DDPM (Stochastic) | 1000 | 0.82 | 1.45 Å | 1.0 |
| DDIM (Deterministic) | 50 | 0.71 | 0.98 Å | 2.5 |
| PNDM (Pseudo Numerical) | 100 | 0.79 | 1.21 Å | 1.8 |
| DEIS (Order 3) | 100 | 0.80 | 1.32 Å | 1.2 |

Experimental Protocols

Protocol 1: Optimizing Noise Schedule for Porous Catalyst Generation

- Objective: Determine the optimal noise schedule for generating novel, valid metal-organic framework (MOF) candidates.

- Dataset: 15,000 known MOF CIF files. Represent each as a 3D voxel grid (32x32x32) of atomic densities.

- Training:

- Fix U-Net architecture. Train four separate models for 200k steps each, using Linear, Cosine, Square-root, and Learned noise schedules (T=1000).

- Learned schedule parameterized by a monotonic neural network.

- Evaluation:

- Generate 1000 structures per model.

- Calculate validity using pymatgen's structure analyzer.

- Calculate novelty by comparing pairwise Euclidean distances on a SOAP descriptor vector against the training set.

- Analysis: Select schedule maximizing (Validity * Novelty).

Protocol 2: Accelerating the Reverse Process for High-Throughput Screening

- Objective: Reduce sampling time while maintaining prediction accuracy for adsorption energy (ΔE_ads).

- Baseline: A fully trained DDPM model (T=1000, Cosine schedule).

- Acceleration Methods:

- DDIM: Resample trajectory with 20, 50, 100 steps.

- Knowledge Distillation: Train a student network to predict `x_0` from `x_t` in ≤4 steps.

- Validation:

- For each method, generate 100 catalyst candidates for CO2 adsorption.

- Perform DFT single-point calculations on all candidates.

- Compute the squared Pearson correlation (R²) between the DFT-calculated ΔE_ads and the energy predicted by the conditioned diffusion model.

- Success Criterion: Maintain R² > 0.85 compared to baseline while reducing sampling time by >70%.
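
The validation check in Protocol 2 can be sketched with the standard library alone. The thresholds are the ones stated in the success criterion; the function names and the example numbers are hypothetical and serve only to show the calculation.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def meets_criterion(dft, predicted, t_baseline, t_fast, r2_min=0.85, reduction_min=0.70):
    """True if R^2 exceeds r2_min and sampling time drops by more than reduction_min."""
    r2 = pearson_r(dft, predicted) ** 2
    reduction = 1.0 - t_fast / t_baseline
    return r2 > r2_min and reduction > reduction_min

# Hypothetical adsorption energies (eV) and per-sample timings (s).
dft_values = [-0.62, -0.41, -0.85, -0.30, -0.55]
model_values = [-0.60, -0.45, -0.80, -0.33, -0.52]
print(pearson_r(dft_values, model_values) ** 2)
print(meets_criterion(dft_values, model_values, t_baseline=1.9, t_fast=0.5))
```

Because R² only measures linear association, it is worth also reporting the mean absolute error, since a systematically shifted prediction can still score a high R².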

Visualizations

Diagram: Diffusion Process for Catalyst Generation

Diagram: Noise Schedule Trade-Offs in Catalyst Design

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Components for Diffusion-Based Catalyst Research

| Item | Function in Experiment | Example/Specification |
|---|---|---|
| 3D Structural Database | Provides training data for the diffusion model. | The Catalysis Hub, Materials Project, Cambridge Structural Database (CSD). |
| Geometric Featurizer | Converts atomic structures into machine-readable inputs. | SOAP (Smooth Overlap of Atomic Positions) or ACSF (atom-centered symmetry functions) descriptors. |
| Differentiable Physics Engine | Provides gradient-based constraints during the reverse process. | JAX-MD (JAX-based Molecular Dynamics), SchNetPack. |
| Conditioning Vector | Guides generation towards desired properties (e.g., high activity). | Adsorption energy (ΔE), d-band center, porosity, computed via DFT and used as the conditioning signal for classifier-free guidance. |
| Fast Sampler | Enables rapid generation of candidates during screening. | DDIM, PLMS, or DPM-Solver integration with the trained model. |
| Validity Checker | Filters generated structures for chemical plausibility. | RDKit (for organics), pymatgen (for inorganics), custom bond-valence checkers. |

Technical Support Center for Molecular Diffusion in Catalysis Research

FAQs & Troubleshooting Guide

Q1: My diffusion-generated catalyst structures consistently show unrealistic bond lengths or angles. What could be the cause? A: This is often a failure mode in the denoising process. Potential causes and solutions include:

- Cause: The noise schedule is too aggressive. The model does not have enough low-noise steps to refine geometries.

- Solution: Implement a cosine noise schedule instead of linear. Tune the schedule to spend more inference steps in low-noise regimes crucial for geometric precision.

- Cause: The training data contained inconsistencies. The model learns a "blurred average" of plausible states.

- Solution: Curate your dataset. Apply strict filters for DFT-relaxed structures and use data augmentation with symmetry operations to improve consistency.

- Protocol: Validate by generating 100 structures, relaxing them with a universal force field (UFF), and calculating the standard deviation of all bond lengths. A value >0.1 Å indicates a problem.

Q2: How can I bias the diffusion process to generate catalysts with a specific property, like high activity for the oxygen reduction reaction (ORR)? A: This requires guided diffusion. Use a classifier-free guidance approach.

- Protocol:

- During training, randomly drop the conditioning label (e.g., "high ORR activity") 10-20% of the time.

- At inference, compute the conditional and unconditional noise predictions.

- Extrapolate using the guidance scale: `ε_guided = ε_uncond + guidance_scale * (ε_cond - ε_uncond)`.

- A typical guidance scale (γ) for property control is between 1.5 and 4.0. Start with 2.0.

- Troubleshooting: If generated structures become degenerate or low-quality at high γ, reduce the scale and ensure your conditional training data is of high quality.

Q3: The denoising process is computationally expensive. How can I reduce the number of sampling steps without sacrificing quality? A: Employ a faster sampling scheduler designed for diffusion models.

- Recommendation: Use the DPM-Solver++ or DEIS scheduler instead of the default Denoising Diffusion Implicit Models (DDIM) scheduler.

- Protocol:

- Train your model with a standard variance-exploding (VE) or variance-preserving (VP) SDE.

- For sampling, implement the DPM-Solver++(2S) second-order sampler.

- You can often reduce steps from 1000-2000 down to 50-100 while maintaining structural validity.

- Verification: Compare the Fréchet Distance of features (e.g., radial distribution function) between 1000-step DDIM and 50-step DPM-Solver++ samples.

Key Quantitative Benchmarks in Catalysis-Relevant Diffusion Models (2023-2024)

Table 1: Performance of Recent Molecular Diffusion Models on Catalyst-Relevant Tasks

| Model Name | Primary Task | Key Metric | Reported Value | Relevance to Catalysis |
|---|---|---|---|---|
| CDVAE (Crystal Diffusion VAE) | Crystal Structure Generation | Validity (w/ DFT) | ~92% | High-throughput generation of bulk catalyst phases. |
| DiffLinker | Linker Generation in MOFs | Reconstruction Rate | >85% | Designing novel metal-organic framework catalysts. |
| GeoDiff (Molecular) | 3D Molecule Generation | Atom Stability | ~98% | Generating precise active site geometries. |
| EDM (Equivariant) | Protein-Ligand Complexes | RMSD (Å) | <1.5 | Modeling catalyst-protein interactions in biocatalysis. |
| CatDiff (Specialized) | Transition State Generation | DFT Barrier Predictivity | R²=0.89 | Directly screening for catalytic activity descriptors. |

Experimental Protocol: Optimizing Denoising for Active Site Generation

Objective: Generate novel, stable single-atom catalyst (SAC) structures on a graphene support.

- Data Curation: Assemble a dataset of DFT-optimized SAC structures (M1/X-Graphene, where M=metal, X=dopant). Annotate each with adsorption energy (E_ads) of a key intermediate (e.g., *OH).

- Model Training: Train an E(3)-Equivariant Diffusion Model (EDM) using the `se3_diffusion` library. Condition the model on continuous E_ads values and categorical metal/dopant types.

- Denoising Optimization:

- Noise Schedule: Use a learned noise schedule (VP-SDE) tailored to the distribution of interatomic distances in your dataset.

- Guidance: Apply classifier-free guidance for both continuous property (E_ads target) and categorical conditions.

- Sampler: Use DPM-Solver++ with 100 sampling steps.

- Validation: Generate 500 candidate structures. Pass each through a rapid UFF relaxation, then a single-point DFT calculation to verify stability and compare predicted vs. target E_ads.

Research Reagent Solutions: Essential Toolkit

Table 2: Key Software & Resources for Molecular Diffusion Experiments

| Item Name | Type | Function in Experiment |
|---|---|---|
| JAX/Equivariant GNNs | Software Library | Provides the backbone for building E(3)-equivariant denoising networks, ensuring physical consistency. |
| DPM-Solver++ | Algorithm/Sampler | High-order ODE solver for diffusion ODEs, drastically reducing the number of required denoising steps. |
| ASE (Atomic Simulation Environment) | Software Library | Used for dataset preparation, parsing DFT outputs, and running preliminary structural relaxations. |
| Open Catalyst Project (OC20) Dataset | Benchmark Data | Provides a large-scale dataset of catalyst relaxations for pre-training or benchmarking. |
| RDKit | Cheminformatics Library | Handles molecular representations (SMILES, graphs) and basic chemical validity checks post-generation. |
| PyXtal | Software Library | Generates random crystal structures for seeding or data augmentation in bulk catalyst generation. |

Visualization: Workflows & Relationships

Diagram: Optimization Workflow for Catalyst Diffusion Models

Diagram: Core Denoising Loop with Property Guidance

Practical Implementation: Building and Training Diffusion Models for Catalyst Discovery

Technical Support Center: Troubleshooting & FAQs

Q1: After merging datasets from multiple sources, my Catalyst Performance (e.g., TOF) values show extreme variance for similar structures. What's the primary cause and how can I address it? A: This is typically due to inconsistent experimental protocols. The most common culprits are variations in temperature, pressure, or reactant partial pressure. Establish a rigorous normalization protocol.

- Action: Create a standard reference catalyst (e.g., Pt/C for hydrogenation) and normalize all reported activities to this reference under the originally reported conditions, if possible. For critical data, apply physics-based corrections using the Arrhenius or Langmuir-Hinshelwood equations before merging. Filter out entries lacking essential metadata (T, P, conversion).

Q2: I've applied a descriptor-based filter to remove outliers, but my diffusion model's generated catalysts still exhibit unrealistic adsorption energies. What step did I miss? A: The issue likely stems from feature outliers, not just target outliers. Outliers in the input feature space (e.g., abnormally high d-band center values) can corrupt the latent space of your diffusion model.

- Action: Perform a two-stage outlier removal:

- Target Variable: Remove data points where `|Z-score| > 3` for the primary catalytic property (e.g., activation energy).

- Feature Space: Apply Principal Component Analysis (PCA) on your molecular descriptors and remove points with extreme Mahalanobis distance (above the 97.5th percentile). Re-train the model on the cleaned dataset.
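
The two-stage outlier removal can be sketched in NumPy as below. One simplification is assumed: the Mahalanobis distance is computed directly in descriptor space, which gives the same distances as computing it on PCA scores when all principal components are retained. The function name and the synthetic data are illustrative.

```python
import numpy as np

def two_stage_filter(X, y, z_max=3.0, percentile=97.5):
    """Return indices of rows that survive target and feature-space outlier removal."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)

    # Stage 1: drop target outliers with |Z-score| > z_max.
    z = (y - y.mean()) / y.std()
    keep = np.abs(z) <= z_max

    # Stage 2: drop feature outliers by squared Mahalanobis distance.
    Xk = X[keep]
    diff = Xk - Xk.mean(axis=0)
    cov = np.atleast_2d(np.cov(Xk, rowvar=False))
    d2 = np.einsum("ij,jk,ik->i", diff, np.linalg.pinv(cov), diff)
    inner = d2 <= np.percentile(d2, percentile)

    return np.flatnonzero(keep)[inner]

# Synthetic descriptors and targets with one planted outlier of each kind.
rng = np.random.default_rng(1)
X = rng.standard_normal((200, 2))
y = rng.standard_normal(200)
y[0] = 100.0            # target outlier
X[1] = [50.0, 50.0]     # feature outlier
kept = two_stage_filter(X, y)
print(len(kept), 0 in kept, 1 in kept)
```

Because a percentile cut always removes a fixed fraction of points, it is worth inspecting what was dropped before retraining, especially for small datasets where rare but valid chemistries can look like outliers.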

Q3: My dataset for catalytic properties is small (<1000 entries). How can I effectively augment it without introducing physical inaccuracies for use in a diffusion model? A: Use "smart augmentation" based on known scaling relations or semi-empirical rules, not random perturbation.

- Action: Implement the following protocol:

- For each catalyst entry, identify key descriptors (e.g., O* vs. OH* adsorption energy).

- Apply linear scaling relations (ΔE_OH = a × ΔE_O + b) with parameters from literature to generate new, plausible descriptor pairs.

- Use a pretrained graph neural network (GNN) to back-predict candidate structures that map to these new descriptor sets. Validate these structures with a quick DFT single-point calculation if feasible.

- Add the validated entries to your dataset with a `source: augmented` flag.

Q4: During the denoising process in my diffusion model for catalyst generation, the model converges to "safe," non-innovative structures. How can I adjust the data or process to encourage exploration? A: This indicates your training data may lack diversity or you are over-constraining the conditioning during generation.

- Action:

- Data Audit: Calculate the diversity (e.g., using Tanimoto similarity on Morgan fingerprints) of your training set. If average similarity >0.7, intentionally incorporate more diverse, lower-performance catalysts to teach the model the full chemical space.

- Conditioning Noise: Add slight Gaussian noise (η ~ N(0, 0.1)) to the target property condition (e.g., target ΔG) during the sampling/denoising steps. This acts as a "jitter," allowing exploration around the desired property.

- Sampling Schedule: Use a non-linear noise schedule (e.g., cosine) that spends more diffusion steps at intermediate noise levels, enhancing exploration before fine-tuning.
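The data audit and the conditioning jitter can be sketched in a few lines. This example assumes fingerprints are already available as sets of on-bit indices (in practice they could come from RDKit Morgan fingerprints), and it interprets the 0.1 in N(0, 0.1) as a standard deviation; both are assumptions.

```python
import random
from itertools import combinations

def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    a, b = set(a), set(b)
    union = len(a | b)
    return len(a & b) / union if union else 1.0

def mean_pairwise_similarity(fps):
    """Average Tanimoto similarity over all unordered pairs."""
    pairs = list(combinations(fps, 2))
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)

def jitter_condition(target, sigma=0.1, rng=random):
    """Add Gaussian noise to a scalar target property (e.g., target ΔG)."""
    return target + rng.gauss(0.0, sigma)
```

If `mean_pairwise_similarity` on the training fingerprints exceeds 0.7, the audit above suggests broadening the dataset. For large datasets, compute the average on a random subsample, since the number of pairs grows quadratically.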

Experimental Protocols for Cited Key Experiments

Protocol 1: Normalization of Turnover Frequency (TOF) Data from Heterogeneous Catalysis Literature

- Data Extraction: Extract TOF, temperature (T), pressure (P), reactant partial pressure (p_i), and conversion (X) for each entry.

- Reference Selection: Identify entries that used a standard reference catalyst (e.g., 5 wt% Pt/Al2O3).

- Rate Calculation: If TOF is not reported, calculate it from given rate and metal dispersion.

- Arrhenius Correction: For reactions with reported activation energy (Ea), normalize TOF to a standard temperature (e.g., 473 K) using: TOF_norm = TOF * exp[(Ea/R) * (1/T_original - 1/T_standard)].

- Langmuir Correction: For known adsorption-limited steps, apply a correction factor based on partial pressure.

- Tabulation: Record original and normalized values in a structured table (see below).
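A minimal sketch of the Arrhenius correction step, assuming Ea is given in J/mol and temperatures in K. The function name is hypothetical.

```python
import math

R = 8.314462618  # gas constant, J/(mol K)

def normalize_tof(tof, ea_j_per_mol, t_original, t_standard=473.0):
    """Rescale a TOF measured at t_original to t_standard (Arrhenius)."""
    exponent = (ea_j_per_mol / R) * (1.0 / t_original - 1.0 / t_standard)
    return tof * math.exp(exponent)
```

A TOF measured below the standard temperature is scaled up, and one measured above it is scaled down. The correction assumes a single, temperature-independent Ea over the range, so it should be applied with caution across large temperature gaps.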

Protocol 2: DFT-Based Descriptor Calculation for Transition Metal Catalysts

- Structure Optimization: Use VASP or Quantum ESPRESSO with the PBE functional and a projector augmented-wave (PAW) method. Optimize the catalyst slab/cluster geometry until forces are <0.02 eV/Å.

- Adsorption Energy Calculation: Place the adsorbate (e.g., *O, *CO) on various surface sites. Calculate adsorption energy: E_ads = E(slab+ads) - E(slab) - E(ads_gas).

- Electronic Descriptor Extraction: Perform Bader charge analysis or compute the d-band center from the projected density of states (PDOS).

- Validation: Check for imaginary frequencies in key transition states to ensure proper saddle points.

- Dataset Population: Populate the calculated descriptors into the master dataset.
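The two descriptor formulas in this protocol reduce to short post-processing functions once the DFT energies and PDOS are available. The sketch below assumes energies in eV, a PDOS sampled on an energy grid referenced to the Fermi level, and the usual first-moment definition of the d-band center; the function names are illustrative.

```python
def adsorption_energy(e_slab_ads, e_slab, e_ads_gas):
    """E_ads = E(slab+ads) - E(slab) - E(ads_gas); negative means binding."""
    return e_slab_ads - e_slab - e_ads_gas

def d_band_center(energies, pdos):
    """First moment of the d-projected DOS, integrated by the trapezoid rule."""
    num = den = 0.0
    for i in range(len(energies) - 1):
        de = energies[i + 1] - energies[i]
        num += 0.5 * de * (energies[i] * pdos[i] + energies[i + 1] * pdos[i + 1])
        den += 0.5 * de * (pdos[i] + pdos[i + 1])
    return num / den
```

The integration window for the d-band center (for instance, whether unoccupied states are included) varies between studies, so it should be recorded alongside the value in the master dataset.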

Table 1: Impact of Data Curation Steps on Diffusion Model Performance for Catalyst Design

| Curation Step | Dataset Size (After Step) | Avg. MAE on ΔG_OH* (eV) ↓ | % of Generated Structures Deemed Plausible ↑ | Diversity Score (1-Similarity) ↑ |

|---|---|---|---|---|

| Raw Merged Data | 12,450 | 0.51 | 12% | 0.65 |

| + Protocol Outlier Removal | 9,887 | 0.38 | 31% | 0.68 |

| + Feature Normalization | 9,887 | 0.22 | 45% | 0.68 |

| + Smart Augmentation | 14,250 | 0.18 | 67% | 0.82 |

| + Thermodynamic Consistency Check | 13,100 | 0.15 | 89% | 0.80 |

Visualizations

Diagram 1: Data Curation & Denoising Optimization Pipeline

Diagram 2: Denoising with Noisy Conditioning for Catalyst Exploration

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Computational Catalyst Dataset Curation

| Item | Function in Data Preparation |

|---|---|

| High-Throughput DFT Software (VASP, Quantum ESPRESSO) | Calculates ab-initio descriptors (adsorption energies, d-band centers) for new or validated structures. |

| Python Libraries (pandas, NumPy, scikit-learn) | Core tools for data merging, cleaning, normalization, and statistical outlier detection. |

| RDKit or pymatgen | Handles molecular/graph representation of catalysts, fingerprint generation, and basic geometric analysis. |

| Atomic Simulation Environment (ASE) | Provides interfaces between different DFT codes and streamlined workflows for property calculation. |

| CatBERTa or MatBERT | Pretrained transformer models on scientific text for automated metadata extraction from literature. |

| Scaling Relation Parameters (Literature Database) | Pre-compiled linear coefficients (e.g., for O* vs. OH*) used for data augmentation and sanity checks. |

| Catalysis-Hub.org API Client | Programmatic access to a curated database of published catalytic reactions and energies for benchmarking. |

| Structured Query (SQL/NoSQL) Database | Essential for storing and managing the final curated dataset with version control and provenance tracking. |

Troubleshooting Guides & FAQs

Q1: During catalyst denoising with a U-Net, my model fails to learn meaningful intermediate structures, outputting blurry or unrealistic atomic placements. What could be wrong? A: This is often due to a mismatch between the U-Net's receptive field and the catalyst's long-range symmetries or periodic boundaries. Ensure your convolutional layers respect the system's translational invariance. For periodic systems, implement circular padding. Additionally, check the noise scheduling; an overly aggressive schedule can prevent the model from learning coherent intermediate steps.

Q2: When using a Vision Transformer (ViT) for a 3D catalyst denoising task, training is extremely slow and memory-intensive. How can I mitigate this? A: ViTs scale quadratically with the number of input patches. For 3D voxelized catalyst data, this becomes prohibitive. Consider these solutions:

- Patch Embedding Strategy: Use larger, non-overlapping 3D patches to reduce sequence length.

- Hierarchical Transformers: Use architectures like Swin Transformers that use local windowed attention and shifted windows to capture both local and global context efficiently.

- Linear Attention Approximations: Use Performer- or Linformer-style attention to reduce the quadratic cost of self-attention to approximately linear in sequence length.
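To see why patch size matters so much, the following sketch counts tokens and attention-matrix entries for a cubic voxel grid. It assumes non-overlapping cubic patches and full self-attention; the function name is hypothetical.

```python
def vit3d_cost(grid, patch):
    """Return (number of tokens, entries in one full attention matrix)."""
    tokens = (grid // patch) ** 3
    return tokens, tokens ** 2
```

For a 64³ grid, `vit3d_cost(64, 4)` gives 4096 tokens and about 16.8 million attention entries per head, while `vit3d_cost(64, 8)` gives 512 tokens and 262,144 entries, a 64-fold reduction from doubling the patch edge. The trade-off is coarser spatial resolution per token.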

Q3: My equivariant network (e.g., SE(3)-Transformer, EGNN) preserves symmetry but produces overly smoothed catalyst surfaces, losing critical defect sites. How can I improve detail? A: Strict equivariance can sometimes over-constrain the model. Consider a hybrid approach:

- Use an equivariant backbone (e.g., for updating atom features) to enforce physical constraints.

- Integrate a non-equivariant refinement head (e.g., a small MLP or attention module) that operates on the equivariant features to predict fine-grained, symmetry-breaking details like specific adsorbate distortions or point defects. This balances physical correctness with necessary structural specificity.

Q4: The denoising process for my multi-element catalyst (e.g., PtNi alloy) converges to a homogeneous composition, not the expected segregated phases. What architectural change can help? A: Standard diffusion models can fail to capture strong correlations between atomic type and position. Implement a conditional denoising architecture.

- Use a two-stream input: one for continuous coordinates (noised) and one for discrete atom types (as one-hot vectors, also noised via a categorical diffusion process).

- The network (U-Net/Transformer) should process and denoise both streams jointly, with cross-attention between the type and coordinate embeddings to learn their complex interdependence.

Q5: How do I quantitatively choose between a U-Net, Transformer, or Equivariant Net for my specific catalyst dataset? A: Run a controlled ablation study measuring key metrics relevant to downstream catalyst performance prediction.

Table 1: Model Comparison for Catalyst Denoising Tasks

| Metric | U-Net (CNN-based) | Transformer | Equivariant Network | Recommendation for Catalyst Research |

|---|---|---|---|---|

| Parameter Efficiency | High (weight sharing) | Moderate to Low | Moderate | U-Net for small, periodic unit cells. |

| Long-Range Interaction | Limited (needs depth) | Excellent (self-attention) | Good (via message passing) | Transformer for large, aperiodic surfaces/clusters. |

| Built-in Physical Symmetry | Translational (via CNNs) | None (positional encoding needed) | SE(3)/E(3) (exact) | Equivariant Net for property-driven generation (energy, force fields). |

| Training Speed (Iteration) | Fast | Slow (without optimization) | Moderate | U-Net for rapid prototyping and exploration. |

| Interpretability | Moderate (feature maps) | High (attention maps) | Moderate (learned interactions) | Transformer for analyzing key atomic interactions. |

| Best Suited For | Image-like density grids, 2D surfaces. | Complex, non-periodic nano-structures. | Generating 3D geometries guided by quantum properties. | Hybrid models often yield best results. |

Experimental Protocols

Protocol 1: Ablation Study for Architectural Choice Objective: Systematically evaluate U-Net, Transformer, and Equivariant Network performance on a benchmark catalyst denoising task.

- Dataset Preparation: Use the OC20 dataset or a custom DFT-derived dataset of catalyst structures (e.g., Pt-based nanoparticles). Create a paired dataset of (noisy_structure, clean_structure) by adding Gaussian noise to atomic coordinates and, optionally, element types.

- Model Training: Train three models with equivalent parameter budgets:

- 3D U-Net: Operate on voxelized electron density or atomic density channels.

- Transformer: Use a 3D patch embedding layer followed by standard transformer encoder blocks.

- EGNN (Equivariant Graph Neural Network): Operate directly on the point cloud of atoms.

- Evaluation Metrics: Track over the denoising trajectory:

- Coordinate Mean Squared Error (MSE) between predicted and true clean structure.

- Validity Rate: Percentage of generated structures with physically plausible bond lengths/angles (validate via ASE or pymatgen).

- Energy Deviation: Average difference between DFT-calculated energies of denoised vs. ground truth structures (computationally expensive but critical).

- Analysis: Plot metrics vs. noise level. The model with the lowest Energy Deviation and high Validity Rate at final denoising steps is most suitable for downstream catalyst screening.

Protocol 2: Integrating an Equivariant Refinement Module Objective: Improve the physical fidelity of a base U-Net/Transformer denoiser.

- Baseline Model: Train a standard diffusion model (e.g., a 3D U-Net) to denoise catalyst coordinates.

- Equivariant Fine-Tuning:

- Freeze the weights of the pre-trained denoising model.

- Attach an SE(3)-equivariant graph network as a refinement module. Its input is the partially denoised structure from the base model at a specific timestep (e.g., t=0.3T).

- Train only the refinement module using a combined loss: L = L_coordinate + λ * L_force, where L_force is an MSE loss against DFT-calculated forces (or forces from a pre-trained ML potential), encouraging energy minimization during denoising.

- Evaluation: Compare the adsorption energy distribution of molecules (e.g., CO, OH) on catalysts generated with and without the refinement module against DFT benchmarks.

Visualizations

Hybrid Denoising Pipeline for Catalysts

Catalyst Denoising Experiment Workflow

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Diffusion Model Experiments

| Reagent / Tool | Function in Experiment | Key Consideration for Catalysts |

|---|---|---|

| Open Catalyst Project (OC20) Dataset | Primary source of clean, DFT-relaxed catalyst structures (surfaces, nanoparticles) for training and benchmarking. | Provides adsorption energies and forces, enabling property-conditioned generation. |

| ASE (Atomic Simulation Environment) | Library for setting up, manipulating, running, and analyzing atomistic simulations. Used for data preprocessing, adding noise, and validating generated structures (bond lengths, angles). | Essential for enforcing physical constraints and interfacing with DFT codes. |

| pytorch-geometric | PyTorch library for deep learning on graphs. Used to implement Equivariant GNNs (EGNN, SE(3)-Transformer) and graph-based diffusion models. | Handles variable-sized catalyst nanoparticles and irregular structures efficiently. |

| Density Functional Theory (DFT) Code (VASP, Quantum ESPRESSO) | High-accuracy electronic structure method. Generates ground-truth training data (energies, forces) and provides the ultimate validation of generated catalyst configurations. | Computational bottleneck. Used sparingly for final validation or to generate small, high-quality training sets. |

| ML Potential (e.g., MACE, NequIP) | Machine-learned interatomic potential trained on DFT data. Provides fast, near-DFT accuracy forces and energies for guiding the denoising process or pre-screening generated structures. | Crucial for making force-based training losses (Protocol 2) computationally feasible at scale. |

| Diffusers Library (Hugging Face) | Provides reference implementations of diffusion model pipelines (schedulers, training loops). Accelerates prototyping of U-Net and Transformer-based denoisers. | Requires significant adaptation for 3D molecular/catalyst data (not inherently supported). |

Troubleshooting Guides & FAQs

Q1: My diffusion model fails to converge when conditioning on complex catalyst descriptors (e.g., multi-fidelity data from DFT and experiments). The generated structures are physically invalid. A: This is a common issue stemming from poor conditioning signal propagation. Ensure your conditioning vector is properly normalized and projected.

- Protocol: Implement a conditioning ladder. First, train the model using only high-fidelity DFT descriptors (e.g., adsorption energies, d-band centers). Use a Mean Squared Error (MSE) loss. After convergence, freeze the denoising U-Net blocks and train a separate, small adapter network to map mixed-fidelity descriptors (DFT + experimental turnover frequency) to the conditioning space of the frozen model. Use a weighted loss: L_total = 0.7 * L_MSE + 0.3 * L_KL, where L_KL minimizes the Kullback–Leibler divergence between the output distributions of the high-fidelity-only and adapter-based conditioning.

Q2: How do I choose between Mean Squared Error (MSE), Mean Absolute Error (MAE), and Huber loss for training the denoiser on catalyst structures? A: The choice depends on the noise distribution and the stage of training. See the quantitative comparison below.

Q3: The model generates plausible catalysts but ignores my conditioning input for specific properties (e.g., selectivity).

A: This indicates weak conditioning. Increase the guidance scale s in the classifier-free guidance formula: ε̃_θ(z_t, c, t) = ε_θ(z_t, t) + s * (ε_θ(z_t, c, t) - ε_θ(z_t, t)), where s=1.0 recovers the plain conditional prediction. Start with s=1.0 and increase incrementally to s=3.0. If performance degrades once s exceeds 2.5, the conditioning embedding is likely too low-dimensional. Retrain the conditioner with a larger output dimension.
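The guidance formula amounts to a linear extrapolation from the unconditional to the conditional noise prediction. A minimal sketch on plain Python lists (in a real pipeline these would be tensors from two forward passes of the denoiser; the function name is illustrative):

```python
def cfg_noise(eps_uncond, eps_cond, s):
    """Classifier-free guidance: eps_uncond + s * (eps_cond - eps_uncond)."""
    return [u + s * (c - u) for u, c in zip(eps_uncond, eps_cond)]
```

With `s=0.0` the result is the unconditional prediction, with `s=1.0` the conditional one, and with `s>1.0` the prediction is pushed further along the conditioning direction.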

Q4: Training is unstable—loss spikes when using a weighted combination of loss functions. A: This is often due to gradient mismatch. Use gradient clipping (norm max = 1.0) and adopt a loss scheduling strategy. Do not apply weights from the start. Use the protocol below.

Table 1: Comparison of Key Loss Functions

| Loss Function | Catalyst Structure MSE (↓) | Property Condition Adherence (↑) | Training Stability | Best For |

|---|---|---|---|---|

| Mean Squared Error (MSE) | 0.012 | 78% | Medium | Initial convergence, high-fidelity data. |

| Mean Absolute Error (MAE) | 0.018 | 85% | High | Noisy experimental data, robust training. |

| Huber (δ=0.01) | 0.014 | 92% | Very High | General best practice, balances precision & robustness. |

| Huber (δ=0.1) | 0.017 | 88% | Very High | Very noisy or multi-fidelity datasets. |
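For reference, the three losses compared in Table 1 differ only in how they penalize each residual. The sketch below uses the standard definitions on plain Python lists; it is meant to show the behavior of δ, not to replace a framework's built-in loss.

```python
def mse(pred, target):
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)

def mae(pred, target):
    return sum(abs(p - t) for p, t in zip(pred, target)) / len(pred)

def huber(pred, target, delta=0.01):
    """Quadratic for |residual| <= delta, linear beyond it."""
    total = 0.0
    for p, t in zip(pred, target):
        r = abs(p - t)
        total += 0.5 * r * r if r <= delta else delta * (r - 0.5 * delta)
    return total / len(pred)
```

A small δ makes the Huber loss behave like a scaled MAE for most residuals, which limits the influence of outliers; a large δ makes it behave like MSE.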

Table 2: Loss Weight Scheduling Protocol (Recommended)

| Training Epoch | L_MSE Weight | L_Property Weight (e.g., Cosine Sim) | L_Validity* Weight | Purpose |

|---|---|---|---|---|

| 1 - 25,000 | 1.0 | 0.0 | 0.0 | Establish base denoising capability. |

| 25,001 - 50,000 | 0.8 | 0.2 | 0.0 | Introduce property conditioning. |

| 50,001+ | 0.6 | 0.3 | 0.1 | Fine-tune for valid/stable structures. |

*L_Validity could be a bond-length penalty or a coordination number classifier score.
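The schedule in Table 2 can be encoded as a small lookup function. The function names are hypothetical; the boundaries and weights are taken directly from the table.

```python
def loss_weights(epoch):
    """Return (w_mse, w_property, w_validity) for a given training epoch."""
    if epoch <= 25_000:
        return (1.0, 0.0, 0.0)
    if epoch <= 50_000:
        return (0.8, 0.2, 0.0)
    return (0.6, 0.3, 0.1)

def total_loss(epoch, l_mse, l_property, l_validity):
    """Weighted sum of the three loss terms for the current epoch."""
    w_mse, w_prop, w_val = loss_weights(epoch)
    return w_mse * l_mse + w_prop * l_property + w_val * l_validity
```

The step changes at the stage boundaries can themselves cause small loss jumps; ramping the weights linearly over a few hundred iterations is one possible refinement, though it is not part of the table.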

Experimental Protocols

Protocol 1: Training a Conditioned Denoising Diffusion Probabilistic Model (DDPM) for Catalyst Discovery

- Data Preparation: Assemble a dataset of catalyst structures (e.g., metal surfaces, alloy nanoparticles) with corresponding descriptor vectors c. Descriptors must include electronic (d-band center), geometric (coordination number), and/or energetic (adsorption energy) features. Normalize each descriptor channel to zero mean and unit variance.

- Forward Diffusion: Use a linear noise schedule from β_1=1e-4 to β_T=0.02 over T=1000 steps to add Gaussian noise to the normalized 3D coordinate tensors of the catalyst structures.

- Conditioning Embedding: Process the descriptor vector c through a 3-layer MLP with SiLU activations. The final layer should output an embedding of dimension 128 or 256. This embedding is added to the timestep embedding before being injected into the denoising U-Net via cross-attention layers.

- Denoising Network: Use a 3D U-Net with approximately 50-100 million parameters. Integrate cross-attention blocks at multiple resolutions (e.g., after each downsampling block) to inject the conditioning embedding.

- Loss Calculation: Use the Huber loss (δ=0.01) on the predicted noise ε_θ(z_t, c, t) versus the true noise ε. Apply the weighting schedule from Table 2.

- Sampling: Use the DDIM sampler with 250 steps for faster generation. Apply classifier-free guidance with scale s=2.0.
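The forward-diffusion step of this protocol can be sketched with NumPy. The closed-form sampling of a noised structure from the cumulative product of (1 - β) is the standard DDPM result; the function names and the 1-indexed timestep convention are choices made for this example.

```python
import numpy as np

def linear_schedule(T=1000, beta_1=1e-4, beta_T=0.02):
    """Linear beta schedule and the cumulative product alpha_bar_t."""
    betas = np.linspace(beta_1, beta_T, T)
    alpha_bar = np.cumprod(1.0 - betas)
    return betas, alpha_bar

def q_sample(x0, t, alpha_bar, rng):
    """Sample x_t from q(x_t | x_0) in closed form; t is 1-indexed."""
    eps = rng.standard_normal(x0.shape)
    a = alpha_bar[t - 1]
    x_t = np.sqrt(a) * x0 + np.sqrt(1.0 - a) * eps
    return x_t, eps  # eps is the regression target for the denoiser
```

With this schedule, `alpha_bar` at the final step is close to zero, so the fully noised coordinates are nearly pure Gaussian noise. During training, `t` is drawn uniformly from 1..T for each sample.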

Protocol 2: Evaluating Conditioned Generation Fidelity

- Property Prediction: For 1000 generated catalyst structures, use a pre-trained surrogate model (e.g., graph neural network) to predict the target properties (e.g., reaction energy barrier).

- Conditional Compliance: Calculate the Pearson correlation R between the conditioned target property values (used to generate the structures) and the predicted property values from the surrogate model. A successful model should have R > 0.7.

- Structural Validity: Calculate the percentage of generated structures where all bond lengths are within 20% of typical values (e.g., 2.0-3.0 Å for metal-metal bonds in nanoparticles) and coordination numbers are physically plausible (e.g., 4-12 for transition metals).
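Both evaluation metrics can be computed without specialized libraries. In the sketch below, the validity check is a simplified proxy that only tests each atom's nearest-neighbor distance against the 2.0-3.0 Å window; a full check would also verify coordination numbers, ideally with ASE or pymatgen neighbor lists. The function names are illustrative.

```python
import math

def pearson_r(x, y):
    """Pearson correlation between target and predicted property values."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def structure_is_valid(coords, d_min=2.0, d_max=3.0):
    """Proxy check: every atom's nearest neighbor lies within [d_min, d_max] Å."""
    for i, a in enumerate(coords):
        nearest = min(math.dist(a, b) for j, b in enumerate(coords) if j != i)
        if not (d_min <= nearest <= d_max):
            return False
    return True
```

The validity rate is then the fraction of generated structures for which `structure_is_valid` returns `True`. This version ignores periodic boundary conditions, so it applies to clusters and nanoparticles rather than slabs.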

Mandatory Visualization

Diagram 1: Conditioned Diffusion Training Workflow

Diagram 2: Classifier-Free Guidance during Sampling

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Catalyst Diffusion Experiments

| Item | Function & Explanation |

|---|---|

| OCP (Open Catalyst Project) Dataset | A foundational dataset of relaxations and adsorbate interactions on inorganic surfaces. Used for pre-training or benchmarking denoising models on realistic catalyst systems. |

| DScribe Library | Computes descriptor vectors (e.g., SOAP, Coulomb Matrix) from atomic structures, essential for creating the conditioning input c. |

| ASE (Atomic Simulation Environment) | Used for reading, writing, manipulating, and analyzing atomic structures during data preprocessing and post-generation validation. |

| MACE or CHGNet Models | State-of-the-art machine learning interatomic potentials (MLIPs). Crucial for rapidly evaluating the energy and stability of generated catalyst candidates without expensive DFT. |

| Classifier-Free Guidance Scale (s) | A hyperparameter (typically 1.0-3.0) controlling the trade-off between sample diversity and condition adherence. The most critical "knob" during conditional generation. |

| Linear Noise Schedule | Defines the variance of noise added at each diffusion step. A linear schedule from β1=1e-4 to βT=0.02 is a robust starting point for catalyst structures. |

| DDIM Sampler | An alternative deterministic sampler to the default DDPM. Allows for high-quality sample generation in far fewer steps (e.g., 50-250 vs. 1000), drastically reducing inference time. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During the computational design phase, my generated enzyme mimic exhibits poor predicted binding affinity (weaker than -8.0 kcal/mol, i.e., ΔG > -8.0 kcal/mol) for the target transition state. What are the primary optimization steps?

A1: This is a common issue in the denoising process for catalyst design. Follow this protocol:

- Refine the Noise Schedule: Adjust the denoising diffusion probabilistic model (DDPM) scheduler to introduce less noise in later steps, preserving critical active site geometry. Use a cosine schedule instead of linear.

- Re-weight the Training Loss: Increase the loss weight for residues within 6 Å of the predicted active site during the training of your diffusion model.

- Post-Design Filtering & Relaxation: Subject the top 100 generated scaffolds to molecular dynamics (MD) relaxation in explicit solvent (see Protocol A). Select the 10 most stable for experimental testing.

Q2: Experimental kinetic assays show my synthetic mimic has a catalytic rate (kcat) orders of magnitude lower than the computational prediction. How should I debug this?

A2: A discrepancy between in silico and in vitro kcat points to flaws in the generative model's objective function or experimental issues.

- Verify Active Site Accessibility: Perform a steric occlusion analysis. Use a probe with the radius of your substrate to ensure the designed active site is reachable.

- Check Transition State Stabilization: Re-run quantum mechanics/molecular mechanics (QM/MM) calculations on the experimentally determined structure (e.g., from NMR or XRD) rather than the computational model. The key interaction distances for H-bond donors/acceptors and electrostatic groups should be < 3.2 Å.

- Confirm Buffer Conditions: Ensure your assay buffer pH is optimal for the protonation states assumed in the design. Perform the assay across a pH range 4-10.

Q3: The designed peptide-based mimic aggregates during expression/purification, leading to low yield and loss of function. What are the mitigation strategies?

A3: Aggregation is a failure mode where the diffusion model may have prioritized catalytic residues over solubility.

- Introduce Solubility Tags & Optimize Sequence: Fuse with a highly soluble protein tag (e.g., MBP, SUMO) during initial expression. Use computational tools like CamSol to identify and mutate aggregation-prone regions on the surface, away from the active site.

- Screen Expression Conditions: Use a fractional factorial design screen varying temperature (16°C, 25°C, 37°C), inducer concentration (0.1 - 1.0 mM IPTG), and rich vs. minimal media.

- Incorporate Solubility into the Generative Model: Retrain your diffusion model with a composite loss function that includes an aggregation propensity penalty (e.g., based on hydrophobicity scales).

Experimental Protocols

Protocol A: MD Relaxation for Generated Scaffolds

- Objective: To refine and stability-filter computationally generated enzyme mimic structures.

- Steps:

- System Preparation: Solvate the generated PDB structure in a cubic TIP3P water box with a 10 Å buffer. Add ions to neutralize charge and achieve 150 mM NaCl concentration.

- Minimization & Equilibration:

- Minimize energy for 5,000 steps (steepest descent).

- Heat system from 0 K to 300 K over 100 ps in the NVT ensemble.

- Equilibrate density over 100 ps in the NPT ensemble (1 atm).

- Production Run: Perform a 100 ns simulation in the NPT ensemble (300K, 1 atm) using a 2 fs timestep. Apply positional restraints to backbone atoms of the core catalytic residues (force constant 1.0 kcal/mol/Ų).

- Analysis: Calculate the root-mean-square deviation (RMSD) of the backbone over time. Scaffolds with RMSD > 2.5 Å are considered unstable and discarded.
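The analysis step can be sketched as a simple RMSD filter. This example assumes every trajectory frame has already been superimposed onto the reference backbone (no alignment is performed here) and treats a scaffold as unstable if any frame exceeds the threshold; the protocol does not specify whether the maximum or the average is intended, so that choice is an assumption.

```python
import math

def rmsd(ref, frame):
    """RMSD between two equally ordered coordinate lists (pre-aligned)."""
    sq = sum(math.dist(p, q) ** 2 for p, q in zip(ref, frame))
    return math.sqrt(sq / len(ref))

def is_stable(reference, trajectory, threshold=2.5):
    """True if no frame's backbone RMSD exceeds the threshold (in Å)."""
    return all(rmsd(reference, frame) <= threshold for frame in trajectory)
```

In practice, trajectory analysis packages handle the alignment and atom selection; the function above only illustrates the acceptance criterion.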

Protocol B: Microscale Thermophoresis (MST) for Binding Affinity Validation

- Objective: Experimentally measure the dissociation constant (KD) between the enzyme mimic and a transition state analog (TSA).

- Steps:

- Labeling: Label the purified enzyme mimic with a RED-NHS 2nd generation fluorescent dye according to the manufacturer's protocol.

- Series Preparation: Prepare a 16-step, 1:1 serial dilution of the unlabeled TSA ligand in assay buffer.

- Mixing: Mix a constant concentration of labeled protein (e.g., 50 nM) with each ligand dilution. Incubate for 15 minutes in the dark.

- Measurement: Load samples into premium coated capillaries. Measure using a Monolith system with 20% LED power and 40% MST power.

- Analysis: Fit the normalized fluorescence (Fnorm) vs. ligand concentration curve using the law of mass action in MO.Affinity Analysis software to extract KD.

Table 1: Comparison of Diffusion Model Schedulers for Scaffold Generation Fidelity

| Scheduler Type | Generated Scaffolds | % with Stable Fold (MD) | % with High Predicted Affinity (pKD > 8) | Avg. Sampling Time (sec) |

|---|---|---|---|---|

| Linear DDPM | 10,000 | 12% | 1.5% | 45 |

| Cosine DDPM | 10,000 | 28% | 4.7% | 52 |

| Cold Diffusion | 10,000 | 22% | 3.1% | 38 |

Table 2: Kinetic Parameters of Top Generated Hydrolase Mimics

| Mimic ID | kcat (s⁻¹) | KM (µM) | kcat/KM (M⁻¹ s⁻¹) | Exp. KD (TSA, nM) | Pred. ∆G (kcal/mol) |

|---|---|---|---|---|---|

| HM-01 | 0.15 ± 0.02 | 450 ± 60 | 3.3 × 10² | 1200 ± 150 | -8.2 |

| HM-07 | 1.05 ± 0.10 | 210 ± 25 | 5.0 × 10³ | 180 ± 20 | -10.5 |

| HM-12 | 0.03 ± 0.01 | >1000 | < 30 | >5000 | -7.8 |

Diagrams

Workflow for Generating Enzyme Mimics via Diffusion Models

Troubleshooting and Optimization Cycle for Enzyme Mimics

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in Enzyme Mimic Research |

|---|---|

| Transition State Analog (TSA) | A stable small molecule that mimics the geometry and electronics of the reaction's transition state. Used for computational design targeting and experimental binding validation (e.g., MST). |

| Fluorescent Dye (e.g., RED-NHS) | Covalent label for proteins enabling sensitive binding affinity measurements via Microscale Thermophoresis (MST). |

| Solubility Tags (MBP, GST, SUMO) | Fusion proteins used to enhance the expression yield and solubility of poorly behaving peptide or proteinaceous enzyme mimics. |

| Stable Isotope-Labeled Media | For uniform labeling (e.g., 15N, 13C) of protein mimics, enabling structural validation by NMR spectroscopy. |

| Size-Exclusion Chromatography (SEC) Matrix | Critical for purifying monomeric enzyme mimics and separating them from aggregated species (e.g., Superdex 75 Increase). |

| QM/MM Software Suite (e.g., Gaussian/Amber) | Used to calculate the precise energetics and mechanism of the catalyzed reaction within the designed active site. |

| Differentiable Diffusion Model Codebase | Customizable software (e.g., in PyTorch) for training and sampling generative models on protein scaffolds, allowing for tailored loss functions. |

Optimizing Performance: Solving Common Pitfalls in Catalyst Denoising

Diagnosing Mode Collapse and Low Diversity in Generated Structures

Troubleshooting Guides & FAQs

Q1: What are the primary indicators of mode collapse in a diffusion model trained for catalyst structure generation? A1: The key indicators are:

- Low Variance in Descriptors: Generated structures cluster tightly in descriptor space (e.g., adsorption energy, d-band center, coordination number).

- High Fréchet Distance (FD): A high FD score between feature distributions of generated and training sets.

- Repetitive Structural Motifs: The same atomic arrangements or binding sites appear across >70% of generated samples.

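- Loss/Diversity Mismatch: The denoising training loss keeps decreasing while diversity metrics on sampled structures stagnate or fall. Diffusion models have no discriminator, so the adversarial signature known from GANs (discriminator loss collapsing to near zero) does not apply here.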

Q2: Our model generates plausible single-atom catalysts but fails to produce diverse bimetallic clusters. What steps should we take? A2: This suggests conditioning failure or poor noise scheduling. Follow this protocol:

- Conditioning Audit: Verify that the metal identity labels are correctly embedded and injected into all UNet blocks. Implement a gradient check to ensure the conditioning signal is not vanishing.

- Noise Schedule Adjustment: For complex, multi-component outputs, a linear noise schedule often destroys fine detail too early. Switch to a learned schedule or a squared cosine schedule (α̅_t = cos²(t/T * π/2)) to preserve high-frequency (detailed) information longer.

- Classifier-Free Guidance (CFG) Scale Tuning: CFG is critical for diversity. Systematically test guidance scales (ω) from 1.0 to 7.0. Quantitative metrics often show a peak in diversity (measured by descriptor variance) at ω ~ 3.0.
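To make the effect of the schedule choice concrete, the sketch below compares the remaining signal fraction α̅_t under the squared cosine schedule quoted above with a linear β schedule. The linear endpoints (1e-4 to 0.02) are borrowed from the schedule used elsewhere in this guide and are an assumption for this comparison.

```python
import math

def alpha_bar_cosine(t, T):
    """Squared cosine schedule: alpha_bar_t = cos^2(t/T * pi/2)."""
    return math.cos(t / T * math.pi / 2) ** 2

def alpha_bar_linear(t, T, beta_1=1e-4, beta_T=0.02):
    """alpha_bar_t for a linear beta schedule (product of 1 - beta_i)."""
    prod = 1.0
    for i in range(1, t + 1):
        beta = beta_1 + (beta_T - beta_1) * (i - 1) / (T - 1)
        prod *= 1.0 - beta
    return prod
```

At the midpoint of a 1000-step process, the cosine schedule retains half of the signal variance (`alpha_bar_cosine(500, 1000)` is 0.5), whereas the linear schedule has already dropped below 0.1. This is the sense in which the cosine form preserves detailed information longer.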

Q3: How can we quantitatively measure diversity loss versus sample quality in our generated catalyst libraries? A3: Implement the following paired metrics and track them per epoch:

Table 1: Key Quantitative Metrics for Diagnosing Mode Collapse

| Metric | Formula/Description | Ideal Range | Indicates Problem If |

|---|---|---|---|

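| Fréchet Inception Distance (FID) | D² = ‖m − m_w‖² + Tr(C + C_w − 2(C·C_w)^(1/2)), where (m, C) and (m_w, C_w) are the feature means and covariances of the generated and training sets | Decreasing, < 50 | Stagnates or increases |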

| Precision | Fraction of generated samples within training manifold (kNN-based). | > 0.6 | Very high (>0.9) with low Recall |

| Recall | Fraction of training samples near generated manifold (kNN-based). | > 0.6 | Very low (<0.3) |

| Descriptor Variance Ratio | Var(GenDesc) / Var(TrainDesc) for key descriptors. | ~ 1.0 | << 1.0 (e.g., < 0.2) |

Experimental Protocol for Precision/Recall Calculation:

- Sample 10,000 training structures and 10,000 generated structures.

- Compute a feature vector for each using a pre-trained graph neural network (e.g., MEGNet) or a set of physicochemical descriptors.

- For each generated sample, find its k=5 nearest neighbors in the training feature space. If the mean distance is below a threshold (ε), count it as "realistic."

- Precision: (# of realistic generated samples) / (total # generated).

- Recall: For each training sample, find its k=5 nearest neighbors in the generated feature space. If the mean distance is below ε, count it as "covered." Recall = (# covered training samples) / (total # training).
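The protocol above translates into a short brute-force routine. This sketch assumes feature vectors are plain tuples or lists of floats and uses an exhaustive neighbor search, which is adequate for illustration but slow at the 10,000-sample scale; a KD-tree or approximate nearest-neighbor index would be the practical choice there. The threshold ε must be calibrated on your own feature space.

```python
import math

def mean_knn_distance(point, pool, k=5):
    """Mean distance from point to its k nearest neighbors in pool."""
    dists = sorted(math.dist(point, q) for q in pool)
    return sum(dists[:k]) / k

def precision_recall(train, generated, eps, k=5):
    """kNN-based precision (realism) and recall (coverage)."""
    realistic = sum(mean_knn_distance(g, train, k) < eps for g in generated)
    covered = sum(mean_knn_distance(x, generated, k) < eps for x in train)
    return realistic / len(generated), covered / len(train)
```

A model that has collapsed onto one region of the training distribution typically shows high precision with low recall, which matches the warning pattern listed in Table 1.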

Q4: The denoising process seems to converge too quickly to similar outputs. How can we modify the sampling process to increase exploration? A4: This is a sampling-time issue. Employ stochastic or non-Markovian sampling to introduce uncertainty.

Protocol for Stochastic DDPM Sampling (vs. DDIM):

- Switch from DDIM to DDPM Sampling: DDIM is deterministic and accelerates collapse. Use the original DDPM (or stochasticity=1.0 in some libraries).

- Increase Sampling Steps: For catalyst design, 1000-2000 steps may be necessary over the typical 50-250.

- Temperature Scaling: Introduce a temperature parameter (τ) to the noise variance: β̃t = τ * βt. Set τ > 1.0 (e.g., 1.2) to add more noise at each step, forcing the model to explore.

- Manifold Constraint: Use a predictor-corrector method (e.g., Langevin dynamics) after each denoising step to "correct" the sample back towards the learned data manifold, preventing drift.

Sampling with a Predictor-Corrector Step for Diversity

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Diffusion Model Analysis in Catalyst Design

| Item/Software | Function & Relevance |

|---|---|

| ASE (Atomic Simulation Environment) | Core library for building, manipulating, and running DFT calculations on generated atomic structures. |

| pymatgen | Provides advanced structural analysis, descriptor generation (e.g., coordination numbers, symmetry), and materials network tools. |

| DScribe | Calculates a comprehensive suite of material descriptors (Coulomb matrix, SOAP, etc.) for quantitative diversity analysis. |

| MODNet / MEGNet | Pre-trained models for rapid, accurate prediction of catalyst properties (e.g., formation energy, band gap) to screen generated libraries. |

| PLAMS / AiiDA | Workflow managers to automate high-throughput DFT validation of promising generated catalyst candidates. |

| Cleanlab | Detects mislabeled or anomalous data in training sets, which can be a hidden cause of model collapse. |

Q5: Could the issue be in our training data? How do we audit the dataset? A5: Yes, biased or low-diversity training data is a common root cause.

Dataset Audit Protocol:

- Compute t-SNE/UMAP: Project your training data's feature space (using descriptors from Table 2) into 2D. Visually check for clusters, gaps, or dense singular hubs.

- Measure Nearest Neighbor Distances: For each sample, compute its distance to the nearest neighbor within the training set. A very low mean distance suggests redundancy.

- Active Learning Loop: If gaps are found, use a query strategy (e.g., uncertainty sampling) to identify candidate structures for DFT calculation to fill the manifold. Retrain the diffusion model with this augmented dataset.
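The nearest-neighbor check in the audit protocol can be sketched as below. This is a brute-force illustration that assumes each training structure has already been reduced to a fixed-length descriptor vector; the function names and the `threshold` parameter are hypothetical, and for large datasets a spatial index would normally replace the double loop.

```python
import math


def nearest_neighbor_distances(features):
    """For each descriptor vector, return the Euclidean distance to its nearest neighbor."""
    distances = []
    for i, a in enumerate(features):
        best = math.inf
        for j, b in enumerate(features):
            if i != j:
                best = min(best, math.dist(a, b))
        distances.append(best)
    return distances


def redundancy_report(features, threshold):
    """Summarize redundancy: mean nearest-neighbor distance and fraction of near-duplicates."""
    nn = nearest_neighbor_distances(features)
    near_duplicates = sum(d < threshold for d in nn)
    return {
        "mean_nn_distance": sum(nn) / len(nn),
        "near_duplicate_fraction": near_duplicates / len(nn),
    }


# Hypothetical descriptors: two nearly identical structures and one outlier.
report = redundancy_report([(0.0, 0.0), (0.0, 1.0), (3.0, 4.0)], threshold=1.5)
```

A low mean distance together with a high near-duplicate fraction points to redundancy, while isolated samples with large distances mark sparse regions that the active learning loop could target.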

Active Learning Loop to Improve Training Data Diversity

Troubleshooting Guides & FAQs

Q1: During the catalyst generation process, the model produces overly conservative (low-energy) but inactive structures, failing to explore novel, potentially active sites. How can I adjust the noise schedule to promote more exploration?

A1: This is a classic under-exploration issue where the denoising process converges too quickly to known low-energy basins. Adjust your noise schedule (beta or alpha_bar schedule) so that noise is added more gradually in the forward process; the reverse process then spends more of its steps at intermediate noise levels, delaying the point at which the sampling trajectory commits to a specific basin.

- Protocol: Implement a nonlinear noise schedule (e.g., cosine schedule) instead of a linear one. For a 1000-step process, modify alpha_bar_t from a linear decay to cos((t/T + s)/(1+s) * π/2)^2, where s=0.008. This slows the initial noise addition, preserving signal longer and allowing for more divergent exploration in early reverse steps.

- Data Comparison:
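As an illustration of the cosine schedule in the protocol above, the following sketch computes alpha_bar_t and the corresponding per-step betas. Two details are assumptions layered on top of the text: the schedule is normalized by its value at t=0 so that alpha_bar_0 = 1, and each beta is clipped at 0.999 to avoid a singular final step, as is commonly done. The function names are hypothetical.

```python
import math


def cosine_alpha_bar(T, s=0.008):
    """Cumulative signal level alpha_bar_t for t = 0..T under the cosine schedule."""
    def f(t):
        return math.cos((t / T + s) / (1 + s) * math.pi / 2) ** 2

    # Normalizing by f(0) is an assumption so that alpha_bar_0 is exactly 1.
    return [f(t) / f(0) for t in range(T + 1)]


def betas_from_alpha_bar(alpha_bar, max_beta=0.999):
    """Per-step betas recovered from consecutive alpha_bar ratios, clipped at max_beta."""
    return [
        min(1.0 - alpha_bar[t] / alpha_bar[t - 1], max_beta)
        for t in range(1, len(alpha_bar))
    ]


alpha_bar = cosine_alpha_bar(1000)
betas = betas_from_alpha_bar(alpha_bar)
```

Plotting `alpha_bar` against the values obtained from a linear beta schedule is a quick way to see how much longer the cosine variant preserves signal.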

Q2: My model explores diverse structures, but the final denoised catalysts are physically unrealistic or contain unstable metal clusters. How do I increase "exploitation" to favor known stable configurations without losing all novelty?

A2: This indicates insufficient guidance towards the physically plausible data manifold. You need to increase the weight of the data-informed prior during the denoising (reverse) process.

- Protocol: Apply Classifier-Free Guidance (CFG) Scale adjustment. Increase the guidance scale (ω) during sampling to exert stronger pull towards conditions associated with stable structures in your training data.

  - During conditional training, randomly drop the condition (e.g., target reaction energy) with probability p=0.1.

  - During sampling, calculate the noise prediction as: ε_guided = ε_uncond + ω * (ε_cond - ε_uncond).

  - Systematically increase ω from 1.0 to 3.0.

- Data Comparison:
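The two halves of the CFG protocol above (condition dropout during training, guided combination during sampling) can be sketched as follows. The function names are hypothetical, using `None` as the "no condition" token is an assumption, and the noise predictions are plain lists standing in for network outputs.

```python
import random


def drop_condition(cond, p_uncond=0.1, rng=random):
    """Training time: replace the condition with None with probability p_uncond."""
    return None if rng.random() < p_uncond else cond


def cfg_noise(eps_uncond, eps_cond, omega):
    """Sampling time: eps_guided = eps_uncond + omega * (eps_cond - eps_uncond)."""
    return [u + omega * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# Hypothetical predictions for a two-dimensional sample.
eps_uncond = [0.0, 1.0]
eps_cond = [1.0, 1.0]
guided = {omega: cfg_noise(eps_uncond, eps_cond, omega) for omega in (1.0, 2.0, 3.0)}
```

With this formula, ω = 1 reproduces the purely conditional prediction, and larger values extrapolate further away from the unconditional one.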

Q3: When I increase sampling steps for higher quality, my computational cost skyrockets, but reducing steps leads to poor sample fidelity. Is there an optimal midpoint?

A3: Yes. The key is to use an accelerated sampling scheduler that reduces total steps while maintaining critical decision points in the denoising trajectory.

- Protocol: Implement the DDIM (Denoising Diffusion Implicit Models) scheduler instead of the default DDPM.

  - Keep your trained model weights.

  - Define a subsequence τ of the original [1,...,T] steps (e.g., τ = [1, 51, 101, ..., 951] for 20 steps).

  - Use the deterministic DDIM sampling rule: x_{τ_{t-1}} = sqrt(α_{τ_{t-1}})/sqrt(α_{τ_t}) * x_{τ_t} - (sqrt(α_{τ_{t-1}}*(1-α_{τ_t})/α_{τ_t}) - sqrt(1-α_{τ_{t-1}})) * ε_θ(x_{τ_t}, τ_t), where α denotes the cumulative product (alpha_bar).

  - This allows you to jump between steps while approximating the full trajectory.

- Data Comparison:
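A minimal sketch of the deterministic DDIM update and the strided timestep subsequence is given below. It writes the update in its usual two-stage form: first estimate the clean sample from the predicted noise, then re-noise that estimate to the earlier timestep. The function names are hypothetical, `eps_pred` stands in for the trained network's output, and the alpha_bar arguments are the cumulative products at the current and previous timesteps of the subsequence.

```python
import math


def ddim_timesteps(T, n_steps):
    """Evenly strided subsequence of [1, ..., T], e.g. [1, 51, ..., 951] for T=1000, n_steps=20."""
    stride = T // n_steps
    return list(range(1, T + 1, stride))[:n_steps]


def ddim_step(x_t, eps_pred, alpha_bar_t, alpha_bar_prev):
    """Deterministic DDIM update (no added noise) from one timestep to an earlier one."""
    # Estimate the clean sample implied by the current noise prediction.
    x0_hat = [
        (x - math.sqrt(1.0 - alpha_bar_t) * e) / math.sqrt(alpha_bar_t)
        for x, e in zip(x_t, eps_pred)
    ]
    # Move that estimate to the earlier noise level along the predicted direction.
    return [
        math.sqrt(alpha_bar_prev) * x0 + math.sqrt(1.0 - alpha_bar_prev) * e
        for x0, e in zip(x0_hat, eps_pred)
    ]


steps = ddim_timesteps(1000, 20)
```

During sampling, the subsequence is traversed in reverse (from the largest timestep down), calling `ddim_step` once per pair of neighboring entries.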

Q4: How do I systematically find the best noise schedule and step count for my specific catalyst dataset (e.g., transition metal oxides)?

A4: Conduct a 2D hyperparameter sweep focused on the noise schedule curvature and the effective sampling steps.

- Protocol:

  - Parameterize Schedule: Define schedule by γ in α_bar_t = 1 - (t/T)^γ. γ=1 is linear. γ>1 adds noise more slowly early in the forward process, preserving signal longer (explorative). γ<1 adds noise faster early on (exploitative).

  - Select Sampler: Fix DDIM for efficiency.

  - Define Metric: Use M = (Novelty_Score * 0.4) + (Stability_Score * 0.6), where scores are normalized.

  - Sweep: Run generation for γ ∈ {0.5, 0.75, 1.0, 1.25, 1.5} and steps ∈ {10, 20, 50, 100}.

  - Evaluate: Use DFT to validate top-10 candidates per configuration.
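The sweep protocol can be organized roughly as in the sketch below. The `evaluate` callback is a hypothetical placeholder for the expensive part (generating structures with a given γ and step count and returning normalized novelty and stability scores); only the schedule parameterization, the weighted metric, and the grid come from the protocol.

```python
import itertools


def power_alpha_bar(T, gamma):
    """Schedule alpha_bar_t = 1 - (t/T)^gamma for t = 0..T."""
    return [1.0 - (t / T) ** gamma for t in range(T + 1)]


def combined_score(novelty, stability, w_novelty=0.4, w_stability=0.6):
    """Weighted metric M from the protocol; both inputs are assumed normalized to [0, 1]."""
    return w_novelty * novelty + w_stability * stability


def sweep(evaluate, gammas=(0.5, 0.75, 1.0, 1.25, 1.5), step_counts=(10, 20, 50, 100)):
    """Score every (gamma, steps) pair and return results sorted best-first."""
    results = []
    for gamma, steps in itertools.product(gammas, step_counts):
        novelty, stability = evaluate(gamma, steps)  # hypothetical user-supplied callback
        results.append((combined_score(novelty, stability), gamma, steps))
    return sorted(results, reverse=True)


# Placeholder evaluator with made-up scores, used only to show the call pattern.
ranking = sweep(lambda gamma, steps: (gamma / 1.5, steps / 100))
```

The best few entries of `ranking` would then be the configurations whose top candidates are passed on to DFT validation.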

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Solution | Function in Catalyst Diffusion Research |
|---|---|
| Materials Project Database (API) | Source of stable crystal structures for training data; provides target formation energies for conditioning. |
| VASP / Quantum ESPRESSO | Density Functional Theory (DFT) software for ab initio calculation of candidate catalyst properties (energy, activity). |
| ASE (Atomic Simulation Environment) | Python library for manipulating atoms, interfacing between diffusion models and DFT calculators. |
| MatDeepLearn/PyXtal | Framework for generating and encoding crystal graphs; crucial for 3D-structured diffusion models. |
| Open Catalyst Project OC20 Dataset | Large-scale dataset of relaxations for catalyst-adsorbate systems; used for pre-training or benchmarking. |
| CFG Scale (ω) | Hyperparameter controlling the trade-off between sample diversity (exploration) and condition fidelity (exploitation). |
| Cosine Noise Schedule | A specific parameterization of the forward process variance that often leads to better sample quality and more controllable exploration. |

Visualizations

Noise Schedule Impact on Exploration

Sampling Acceleration Workflow

CFG Scale Tuning for Catalyst Design

Technical Support Center: Troubleshooting Denoising Diffusion Models for Catalysts Research

Welcome to the Technical Support Center. This resource provides targeted guidance for researchers integrating chemical rule constraints into denoising diffusion probabilistic models (DDPMs) for catalyst discovery and optimization. Below are common issues and solutions framed within the thesis context of optimizing the denoising process.

FAQs & Troubleshooting Guides

Q1: During training of our conditioned DDPM, the model generates catalysts with invalid valence states or impossible bond configurations, despite our rule-based conditioning. What could be wrong? A: This often indicates a failure mode where the model "ignores" the conditioning signal. Verify the following:

- Conditioning Strength (Beta): The weighting hyperparameter (beta) for the rule-constraint loss term might be too low. It must be balanced against the primary denoising loss. Start with a grid search over beta=0.1 to beta=5.0.

- Gradient Flow: Implement gradient clipping to prevent exploding gradients, and monitor whether gradients from the constraint loss are propagating into the denoising network's layers.

- Constraint Formulation: Ensure your chemical rules (e.g., valence, coordination number) are expressed as differentiable, continuous penalty functions rather than binary checks. Use smoothed approximations for discrete rules.
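To make the idea of a smoothed, continuous rule penalty concrete, here is a sketch of a valence constraint written as a squared hinge on the summed (continuous) bond orders of each atom, combined with the denoising loss through the beta weight. The function names and the per-atom data layout are assumptions for illustration; in a real training loop the same expression would be written with tensor operations in PyTorch or JAX so that gradients can flow through it.

```python
def smooth_valence_penalty(bond_orders, max_valence):
    """Mean squared excess of each atom's summed bond order over its allowed valence.

    bond_orders: one list of continuous bond orders per atom.
    max_valence: allowed valence per atom.
    The squared hinge is zero when the rule is satisfied and grows smoothly otherwise.
    """
    total = 0.0
    for orders, v_max in zip(bond_orders, max_valence):
        excess = max(0.0, sum(orders) - v_max)
        total += excess ** 2
    return total / len(max_valence)


def total_loss(denoise_mse, constraint_penalty, beta=1.0):
    """Primary denoising loss plus the beta-weighted rule-constraint term."""
    return denoise_mse + beta * constraint_penalty


# Hypothetical example: a satisfied atom and an atom exceeding its valence by 0.5.
penalty = smooth_valence_penalty([[1.0, 1.0, 1.0, 1.0], [1.0, 0.5]], [4, 1])
loss = total_loss(denoise_mse=0.2, constraint_penalty=penalty, beta=2.0)
```

Unlike a binary valid/invalid check, this penalty tells the optimizer how far a structure is from satisfying the rule, which is what allows the constraint term to influence the denoising network.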

Q2: Our model's synthesized catalyst candidates are valid but lack chemical diversity, converging to similar structural motifs. How can we improve exploration? A: This suggests over-constraint or a mode collapse issue.

- Adjust Noise Schedules: A poorly chosen noise variance schedule (beta_t) can prematurely destroy signal. Consider a cosine schedule or one that preserves more high-level structural information longer into the forward process.

- Sampling Temperature (τ): Introduce a temperature parameter during the sampling (reverse) process to control stochasticity. τ > 1 increases diversity but may risk validity; τ < 1 makes outputs more deterministic.

- Relax Initial Constraints: Apply weaker rule penalties in early denoising steps to allow exploration, then increase constraint strength in later steps to refine validity.
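One simple way to relax constraints early and tighten them late is to make the rule-penalty weight a function of the reverse-process timestep. The sketch below uses a polynomial ramp; the function name, the ramp shape, and the default bounds are all assumptions chosen for illustration rather than recommended values.

```python
def constraint_weight(t, T, w_min=0.0, w_max=1.0, power=2.0):
    """Rule-penalty weight at reverse step t, where t runs from T (pure noise) down to 0.

    The weight stays near w_min while the sample is still noisy and approaches
    w_max as denoising finishes; larger `power` delays the ramp-up.
    """
    progress = 1.0 - t / T  # 0 at the start of sampling, 1 at the end
    return w_min + (w_max - w_min) * progress ** power


# Weights at a few points of a hypothetical 1000-step reverse process.
weights = [constraint_weight(t, 1000) for t in (1000, 750, 500, 250, 0)]
```

The resulting weight would multiply the rule-based correction or guidance term applied at each denoising step.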

Q3: When integrating bond-length and angle constraints, the model training becomes unstable and loss diverges. What is the standard protocol to mitigate this? A: Geometric constraints are highly sensitive. Follow this protocol:

- Pre-training: Warm-start the diffusion model backbone on a large corpus of unconstrained molecular structures.

- Progressive Introduction: Fine-tune the pre-trained model by first introducing only valence rules. Once stable, gradually add bond-length constraints (using a smooth, Gaussian-based penalty), and finally angle constraints.

- Loss Scaling: Use adaptive loss scaling (e.g., from PyTorch's AMP) or manually scale the geometric loss terms down by a factor of 0.01 to 0.001 relative to the primary denoising loss initially.
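The progressive-introduction and manual-scaling steps above can be sketched as follows. The Gaussian-based bond-length penalty is one possible smooth form (zero at the reference length, saturating at 1 far from it); the epoch thresholds, the reference length, and all function names are hypothetical values chosen only to show the structure.

```python
import math


def gaussian_bond_penalty(d, d0, sigma=0.1):
    """Smooth penalty for bond length d around reference d0: 0 at d0, approaching 1 far away."""
    return 1.0 - math.exp(-((d - d0) ** 2) / (2.0 * sigma ** 2))


def staged_weights(epoch, bond_start=10, angle_start=20, geom_scale=0.01):
    """Loss weights per fine-tuning epoch: valence first, then bond lengths, then angles.

    geom_scale applies the manual down-scaling of geometric terms
    relative to the primary denoising loss.
    """
    return {
        "valence": 1.0,
        "bond": geom_scale if epoch >= bond_start else 0.0,
        "angle": geom_scale if epoch >= angle_start else 0.0,
    }


def constrained_loss(denoise_mse, valence_pen, bond_pen, angle_pen, epoch):
    """Denoising loss plus the constraint terms that are active at this epoch."""
    w = staged_weights(epoch)
    return denoise_mse + w["valence"] * valence_pen + w["bond"] * bond_pen + w["angle"] * angle_pen


# Hypothetical bond stretched 0.1 Å from a 1.5 Å reference, evaluated at epoch 15.
bond_pen = gaussian_bond_penalty(1.6, 1.5)
loss = constrained_loss(0.2, 0.0, bond_pen, 0.5, epoch=15)
```

Because the penalty saturates, a badly distorted geometry contributes a bounded loss, which helps avoid the divergence that unbounded geometric terms can trigger early in fine-tuning.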

Q4: What are the key metrics to validate that our chemically-constrained diffusion model is superior to an unconstrained baseline for catalyst design? A: Track and compare the following quantitative metrics in a hold-out test set or via de novo generation:

Table 1: Key Validation Metrics for Catalyst Diffusion Models

| Metric Category | Specific Metric | Target (Constrained Model) | Baseline (Unconstrained Model) |
|---|---|---|---|
| Chemical Validity | % Valid Structures (w.r.t. basic valence) | >98% | Typically 60-90% |
| Synthesizability | SA Score (Synthetic Accessibility, lower is better) | <4.5 | Often >6 |
| Diversity | Internal Diversity (avg. pairwise Tanimoto dissimilarity) | >0.7 | Can be lower or higher |
| Property Focus | % within Target Range (e.g., Adsorption Energy) | Maximize | Benchmark |
| Computational Cost | Steps to reach Valid Sample (avg.) | Lower (due to guided denoising) | Higher |
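As an illustration of the diversity metric in Table 1, the sketch below computes the average pairwise Tanimoto dissimilarity over a set of generated structures. It assumes each structure is represented by the set of its "on" fingerprint bits (for example, indices produced by a fingerprinting tool); the function names are hypothetical.

```python
from itertools import combinations


def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as collections of on-bit indices."""
    a, b = set(a), set(b)
    union = len(a | b)
    # Two empty fingerprints are treated as identical.
    return len(a & b) / union if union else 1.0


def internal_diversity(fingerprints):
    """Average pairwise Tanimoto dissimilarity (1 - similarity) over all distinct pairs."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    return sum(1.0 - tanimoto(a, b) for a, b in pairs) / len(pairs)


# Hypothetical fingerprints for three generated candidates.
diversity = internal_diversity([{1, 2, 3}, {2, 3, 4}, {7, 8}])
```

The pairwise loop scales quadratically with the number of candidates, so for libraries of 10,000 structures a random subsample of pairs is a common shortcut.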

Q5: Can you provide a standard experimental protocol for benchmarking a rule-constrained diffusion model against other generative approaches (like GANs or VAEs) for catalyst design? A: Yes. Follow this comparative methodology:

Protocol: Benchmarking Generative Models for Catalyst Discovery

- Data Curation: Assemble a cleaned dataset of known catalyst structures (e.g., from ICSD, CSD) and their associated properties (e.g., formation energy, surface adsorption energies).

- Model Training: Train three models on the same data split:

  - Model A: Your chemically-constrained DDPM.

  - Model B: A Generative Adversarial Network (GAN) with a graph convolutional network (GCN) generator.

  - Model C: A Variational Autoencoder (VAE) with a property predictor head.

- Generation & Validation: Generate 10,000 candidate structures from each trained model.

- Evaluation Pipeline: Pass all generated candidates through:

  - A rule-based validity filter (e.g., using RDKit).

  - A synthesizability scorer (SA Score, RA Score).

  - A physics-based property predictor (e.g., a DFT surrogate model) to estimate target properties.

- Analysis: Compare models using the metrics in Table 1. Statistical significance should be tested using a paired t-test or Mann-Whitney U test across multiple generation runs.
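For the significance test in the analysis step, a dependency-free sketch of the two-sided Mann-Whitney U test is shown below. It uses the normal approximation without tie or continuity correction, so it is only a rough check for small samples; in practice a library routine such as `scipy.stats.mannwhitneyu` would normally be preferred. The sample values are made up.

```python
import math


def mann_whitney_u(x, y):
    """Two-sided Mann-Whitney U test (normal approximation, no tie or continuity correction).

    Returns (U, p_value), where U is the smaller of the two U statistics.
    """
    n1, n2 = len(x), len(y)
    combined = sorted([(v, 0) for v in x] + [(v, 1) for v in y])
    ranks = [0.0] * len(combined)
    i = 0
    while i < len(combined):
        j = i
        # Extend j over a run of tied values and assign them their average rank.
        while j + 1 < len(combined) and combined[j + 1][0] == combined[i][0]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[k] = avg_rank
        i = j + 1
    rank_sum_x = sum(r for r, (_, group) in zip(ranks, combined) if group == 0)
    u_x = rank_sum_x - n1 * (n1 + 1) / 2
    u = min(u_x, n1 * n2 - u_x)
    mean_u = n1 * n2 / 2
    std_u = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (u - mean_u) / std_u
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return u, p_value


# Hypothetical validity rates (%) from five generation runs of two models.
u_stat, p = mann_whitney_u([98.1, 98.4, 98.9, 99.0, 99.2], [71.0, 75.5, 80.2, 84.9, 88.3])
```

Each list should hold one metric value per independent generation run, so that the test compares run-to-run variation rather than individual structures.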

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Digital Research Toolkit for Constrained Diffusion Experiments

| Tool / Solution | Function in Experiment | Typical Source/Module |
|---|---|---|
| RDKit | Core chemistry toolkit for parsing molecules, applying rule-based checks, and calculating descriptors like SA Score. | rdkit.Chem, rdkit.Chem.rdMolDescriptors |
| PyTorch / JAX | Deep learning frameworks for building and training the denoising U-Net and loss functions. | torch.nn, diffusers library, jax.numpy |
| Differentiable Chemistry Layers | Provides smoothed, differentiable versions of chemical operations (e.g., bond formation, coordination). | dMol package, torchdrug library |
| Open Catalyst Project (OCP) Datasets | Pre-processed, large-scale catalyst data with DFT-calculated properties for training and benchmarking. | ocp datasets (IS2RE, S2EF) |
| ASE (Atomic Simulation Environment) | For converting generated structures into files for downstream DFT validation and calculating geometric constraints. | ase.Atoms, ase.calculators |
| Graphviz (for Visualization) | Creating clear diagrams of model architectures and workflows (as used below). | graphviz Python package |

Mandatory Visualizations

Title: Workflow of a Chemically-Constrained Denoising Diffusion Model

Title: Sampling Loop with Rule-Based Correction

Technical Support Center: Troubleshooting & FAQs

FAQ Section

Q1: Our high-throughput virtual screening pipeline using a latent diffusion model is running too slowly. What are the first steps to diagnose the bottleneck?