Beyond Novelty: Advanced Strategies for Ensuring Molecular Validity in AI-Generated Catalysts

This article provides a comprehensive guide for researchers and drug development professionals on ensuring molecular validity in AI-generated catalyst structures.


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on ensuring molecular validity in AI-generated catalyst structures. We explore foundational concepts of molecular realism, detail state-of-the-art generative and validation methodologies, address common pitfalls in synthesis and stability, and present comparative analyses of validation tools. The content bridges the gap between computational discovery and experimental feasibility, offering practical strategies for accelerating the development of viable catalytic compounds.

What Makes a Catalyst 'Valid'? Defining Molecular Realism in Computational Design

Technical Support Center

Frequently Asked Questions & Troubleshooting Guides

Q1: My DFT simulation of a generated metal-organic framework (MOF) catalyst fails with a "SCF convergence" error. What are the primary causes? A: This typically indicates an invalid initial geometry. Common causes include:

- Unphysical bond lengths/angles: Generated structures may place atoms too close (<0.5 Å for non-bonded) or with strained coordination.

- Unreasonable charge state: The electronic configuration assigned to the metal center is not stable for the given geometry.

- Protocol: First, run a force-field geometry pre-optimization using the universal force field (UFF) or GFN-FF. Then check that the metal oxidation state is compatible with your intended adsorbates, for example with a ligand field theory argument, before submitting to higher-level DFT.
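The close-contact cause listed above can be screened for before any SCF cycle is attempted. The following is a minimal sketch using only the Python standard library; the function name `close_contacts`, the `(element, (x, y, z))` input layout, and the example coordinates are illustrative assumptions, not part of any specific package.

```python
import itertools
import math

def close_contacts(atoms, cutoff=0.5):
    """Return (i, j, distance) for every atom pair closer than `cutoff` (in Å).

    `atoms` is a list of (element, (x, y, z)) tuples; this layout is an
    assumption made for the sketch.
    """
    hits = []
    for (i, (_, pos_i)), (j, (_, pos_j)) in itertools.combinations(enumerate(atoms), 2):
        distance = math.dist(pos_i, pos_j)
        if distance < cutoff:
            hits.append((i, j, distance))
    return hits

# Hypothetical fragment in which atoms 1 and 2 nearly overlap.
atoms = [
    ("Zn", (0.00, 0.00, 0.00)),
    ("O", (2.00, 0.00, 0.00)),
    ("O", (2.30, 0.00, 0.00)),
]
contacts = close_contacts(atoms)
```

Any structure with a non-empty result is a candidate for force-field pre-optimization or rejection before DFT. For periodic MOF cells, distances across cell boundaries would also need a minimum-image treatment, which this sketch does not attempt.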

Q2: After synthesis guided by a generated structure, my catalyst shows no activity. Characterization reveals an amorphous material. Where did the process fail? A: This suggests the generated structure lacked synthetic feasibility. The issue often lies in the neglect of kinetic stability.

- Troubleshooting Steps:

- Pre-Screen: Use a solvation energy model (e.g., COSMO-RS) to assess ligand solubility and a simple kinetic barrier estimator for ligand exchange steps.

- Post-Characterization: Compare your PXRD pattern with the simulated one from the generated structure. Use XPS to verify the expected oxidation states of metals.

Q3: My machine learning model generates catalyst structures with high predicted activity, but over 60% are flagged as "invalid" by my basic valence checker. How can I improve validity rates? A: The model is likely optimizing for a target property without a strong constraint on chemical rules.

- Solution: Implement a validity-centric pipeline. Integrate a robust graph-based validity checker (e.g., using RDKit's SanitizeMol) as a hard filter during generation, not after. Retrain your model using a loss function that penalizes invalid valences and coordination numbers.

Q4: During molecular dynamics (MD) simulation of a generated enzyme catalyst, the structure denatures/unfolds within the first 100 ps. What does this imply? A: This is a strong computational indicator of an invalid or unstable fold. The generated protein backbone or side-chain packing is likely non-physical.

- Protocol: Before long MD, always perform:

- Short, restrained minimization: Gradually relax the structure.

- Ramachandran plot check: Identify sterically impossible phi/psi angles.

- Packing check: Use a tool like SCWRL4 to rebuild side chains and fix side-chain clashes in generated structures.

Experimental Protocol: Validating Generated Single-Atom Catalyst (SAC) Structures

Objective: To computationally validate the stability and synthesizability of a machine-generated Single-Atom Catalyst (M-N-C type) before experimental resource commitment.

Methodology:

- Initial Valence Filtering: Pass the generated structure through SMARTS-based pattern matching to ensure plausible coordination (e.g., roughly four N donors around Fe in N-doped graphene, an FeN4 site).

- Geometry Optimization: Use DFT (PBE-D3 level) with a plane-wave basis set to relax the structure. Projector augmented-wave (PAW) pseudopotentials are recommended.

- Stability Metrics Calculation:

- Binding Energy (Eb): Eb = E(M-N-C) - E(N-C) - E(M_atom). A highly negative Eb indicates stability.

- Ab Initio Molecular Dynamics (AIMD): Run a 10 ps NVT simulation at 500 K. Structure integrity confirms thermal stability.

- Synthetic Feasibility Score: Calculate the dissolution potential of the metal atom from the support using a computational hydrogen electrode (CHE) model for relevant solvent conditions.

- Activity Pre-screening: Perform a CO adsorption energy calculation as a proxy for catalytic activity (ideal range: -0.8 to -1.2 eV).

Key Data from Recent Studies (2023-2024):

| Validation Step | Metric | Threshold for "Valid" | Failure Rate in Unfiltered Generated Libraries* |

|---|---|---|---|

| Valence/Coordination | Plausible Coordination Number | Within ±1 of typical integer | 45-65% |

| DFT Geometry Opt. | SCF Convergence & Force Norm | Converged, max force < 0.05 eV/Å | 25-40% |

| Stability (Eb) | Metal Binding Energy | Eb < -2.0 eV | 30-50% |

| Stability (AIMD) | Structure Retention at 500K | Metal atom remains bonded | 15-25% |

| Synthetic Feasibility | Dissolution Potential | > 0.5 V vs SHE | 50-70% |

*Data synthesized from recent literature on ML-generated catalyst libraries.
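The binding-energy definition from the methodology and the thresholds in the table above can be collected into a small screening helper. This is a sketch under stated assumptions: the function names, the dictionary keys, and all numerical inputs are hypothetical, and the energies would come from your own DFT runs.

```python
def binding_energy(e_mnc, e_nc, e_metal_atom):
    """Eb = E(M-N-C) - E(N-C) - E(M_atom), all energies in eV."""
    return e_mnc - e_nc - e_metal_atom

def screen_sac(eb, max_force, metal_bonded_after_aimd, dissolution_potential):
    """Apply the table's thresholds and report pass/fail per validation step."""
    return {
        "geometry": max_force < 0.05,               # eV/Å, converged optimization
        "binding": eb < -2.0,                       # eV
        "aimd": bool(metal_bonded_after_aimd),      # metal stays bonded at 500 K
        "dissolution": dissolution_potential > 0.5,  # V vs SHE
    }

# Hypothetical DFT total energies (eV) for one candidate.
eb = binding_energy(e_mnc=-512.40, e_nc=-505.10, e_metal_atom=-3.20)
checks = screen_sac(
    eb,
    max_force=0.03,
    metal_bonded_after_aimd=True,
    dissolution_potential=0.35,
)
failed = [name for name, ok in checks.items() if not ok]
```

Returning one flag per step, rather than a single boolean, makes it easier to see which stage of the funnel rejects most candidates.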

The Scientist's Toolkit: Research Reagent Solutions for Catalyst Validation

| Item | Function in Validation |

|---|---|

| RDKit | Open-source cheminformatics toolkit for SMARTS-based valence checking, substructure matching, and molecular descriptor calculation. |

| ASE (Atomic Simulation Environment) | Python package for setting up, running, and analyzing results from DFT and MD calculations; interfaces with major codes (VASP, Quantum ESPRESSO). |

| VASPKIT | Post-processing toolkit for VASP outputs to efficiently compute binding energies, density of states, and reaction pathways. |

| PLATON/CHECKCIF | For crystalline materials, this software performs a thorough geometrical and topological analysis to detect structural inconsistencies. |

| COSMO-RS | A conductor-like screening model used to predict solvation energies and solubility, critical for assessing synthetic feasibility. |

| Avogadro | Molecular editor and visualizer for manual inspection and correction of generated structures before simulation. |

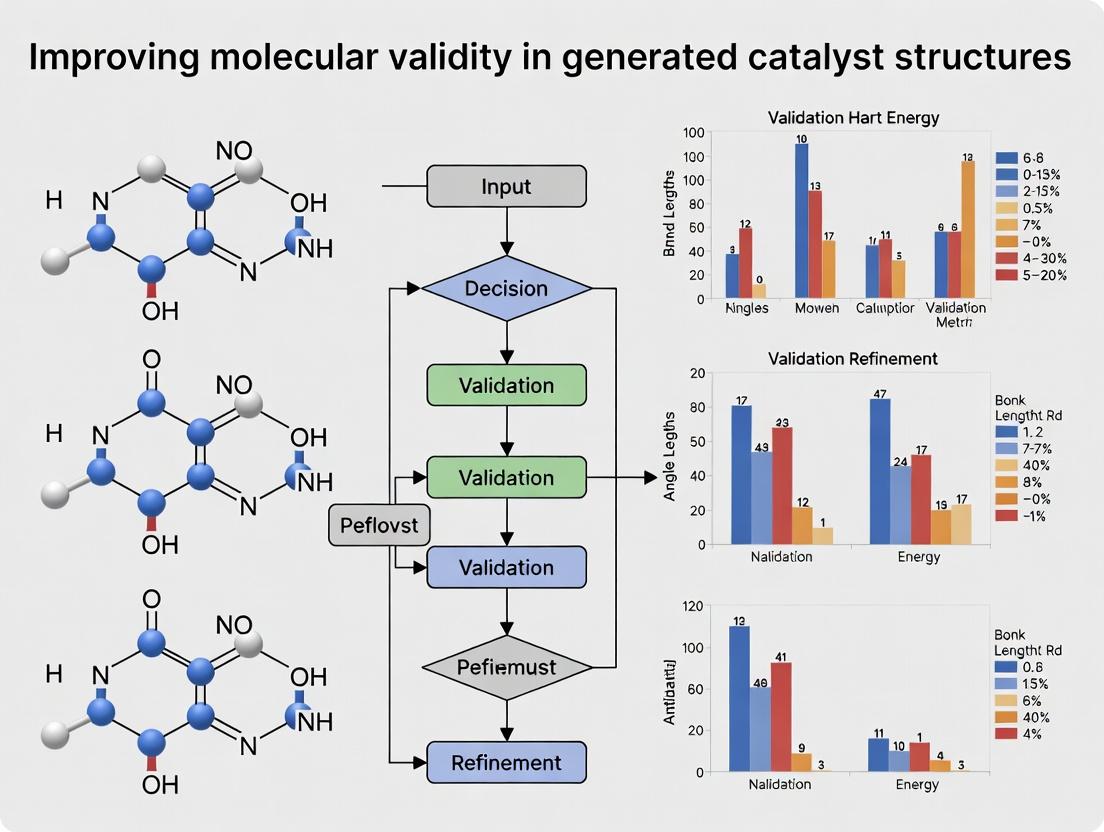

Visualization: Catalyst Validity Screening Workflow

Visualization: Causes of Invalid Catalyst Structures

Troubleshooting Guides & FAQs

FAQ 1: My simulated metal-organic framework (MOF) catalyst shows unrealistic coordination numbers for the transition metal center. What could be wrong? Answer: This typically violates valence principles. First, verify the oxidation state you've assigned to the metal. A Co(II) center will not stably support 8 neutral amine ligands. Check your source code or GUI settings for forcefield parameters or DFT functionals that may incorrectly handle electron donation. Use a smaller ligand set and incrementally increase complexity, validating the coordination number against known crystal structures (e.g., from the Cambridge Structural Database) at each step.

FAQ 2: After generating a promising catalyst structure, my computational stability calculations (e.g., molecular dynamics) show rapid bond dissociation. How do I diagnose this? Answer: Rapid dissociation often stems from poor geometry and steric strain. Calculate the ligand bite angles and metal-ligand distances. Compare them to ideal values for that coordination geometry (e.g., 90°/180° for octahedral). Excessive strain can destabilize the complex. Introduce conformational sampling or geometry optimization steps prior to the stability run to relax the structure into a local energy minimum.

FAQ 3: I've designed an organocatalyst, but quantum chemistry calculations predict a high-energy LUMO, suggesting poor electrophilicity. Is this a validity issue? Answer: Yes, this relates to electronic stability and reactivity. A very high LUMO may indicate an over-stabilization of the catalyst's reactive center, rendering it inert. This can occur if the functional groups used to enforce geometric constraints are overly electron-donating. Troubleshoot by systematically substituting electron-withdrawing groups (e.g., -CF3 for -CH3) and recalculating orbital energies. The goal is a balance between kinetic stability (for isolation) and appropriate reactivity.

Experimental Protocol for Validating Catalyst Geometry and Stability

Protocol Title: Integrated Computational-Experimental Validation of Generated Bifunctional Catalyst Structures.

Methodology:

- In Silico Generation & Pre-Screening: Generate candidate structures using a script (e.g., in Python with RDKit) that enforces basic valence rules. Perform a preliminary conformational search using MMFF94s forcefield.

- Geometry Optimization & Frequency Analysis: Optimize the top 10 conformers using DFT (e.g., B3LYP-D3 with the def2-SVP basis set). Perform a vibrational frequency calculation to confirm a true local minimum (no imaginary frequencies) and to calculate thermodynamic corrections.

- Stability Assessment via Molecular Dynamics (MD): Solvate the optimized structure in an explicit solvent box (e.g., acetonitrile). Run a 5 ns NVT MD simulation at 300 K using GAFF2 forcefield and AM1-BCC charges. Monitor key bond distances (e.g., metal-ligand, reactive site bonds) for dissociation events.

- Experimental Correlation (Synthesis & Characterization): Synthesize the top 2-3 computationally validated candidates. Characterize using:

- X-ray Crystallography: For definitive geometric validation.

- Thermogravimetric Analysis (TGA): For thermal stability assessment.

- Solution NMR Spectroscopy: To confirm structural integrity and geometry in solution.

Quantitative Data Summary: DFT Validation Metrics for Catalyst Candidates

| Candidate ID | Metal Oxidation State | Coordination Number | Avg. M-L Bond Length (Å) | HOMO-LUMO Gap (eV) | Imaginary Frequencies (Post-Opt) | MD Stability (Bond Break Event <5 ns?) |

|---|---|---|---|---|---|---|

| Cat-A1 | Ru(II) | 6 | 2.05 ± 0.08 | 3.45 | 0 | No |

| Cat-B3 | Pd(IV) | 6 | 2.12 ± 0.15 | 1.98 | 1 | Yes (at 2.1 ns) |

| Cat-C7 | Organo (N/A) | N/A | N/A | 5.10 | 0 | N/A |

Visualization: Catalyst Design & Validation Workflow

Title: Catalyst Validity Design-Validate Loop

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Function in Catalyst Validity Research |

|---|---|

| DFT Software (e.g., Gaussian, ORCA) | Performs quantum mechanical calculations to optimize geometry, calculate electronic structure (HOMO/LUMO), and predict spectroscopic properties. |

| Molecular Dynamics Package (e.g., GROMACS, OpenMM) | Simulates the physical movements of atoms over time to assess thermodynamic stability and solvation effects. |

| Cambridge Structural Database (CSD) | Repository of experimental crystal structures for validating calculated bond lengths, angles, and coordination geometries. |

| Ligand Libraries (e.g., amino acids, phosphines, salen derivatives) | Building blocks for catalyst design. Pre-parameterized libraries streamline computational modeling. |

| Nudged Elastic Band (NEB) Tool | Computes the minimum energy pathway for reactions, crucial for probing catalytic mechanism stability. |

| Automation Scripts (Python/RDKit) | For high-throughput generation of candidate structures with embedded valence and steric filters. |

Technical Support Center: Troubleshooting Guides & FAQs

FAQ: Common Failure Modes & Solutions

Q1: My catalyst structure shows unrealistic metal-ligand bond lengths. What is the likely cause and how can I fix it? A: This is often due to an incorrect force field parameterization or an inaccurate charge assignment for the transition metal center. Transition metals require specialized parameter sets. To resolve:

- Use a quantum mechanical (QM) method (e.g., DFT with a functional like B3LYP and basis set def2-SVP) to optimize the metal coordination sphere.

- Derive RESP or ESP charges for the metal complex.

- In molecular dynamics (MD) simulations, employ a bonded model for the metal-ligand interactions using the QM-derived parameters, not a non-bonded model.

Q2: My generated catalyst exhibits spontaneous ligand dissociation during molecular dynamics (MD) simulation. What does this indicate? A: This is a critical failure mode indicating thermodynamic instability. It suggests either:

- Incorrect Coordination Number or Geometry: The metal center is assigned an unnatural coordination environment (e.g., a tetrahedral Pd(II) complex, which is rare compared with square planar).

- Weak-Field Ligand Set: The chosen ligands provide a crystal field stabilization energy (CFSE) too low for the metal oxidation state.

- Protocol Issue: The simulation may have been started from an unrelaxed, high-energy structure.

- Solution: Always perform a multi-step minimization (steepest descent followed by conjugate gradient) of the solvated system before MD. Re-evaluate the metal/ligand combination using inorganic chemistry principles.

Q3: Why does my catalyst structure fail geometry validation (e.g., in Mogul) with unusual metal-ligand-ligand angles? A: This points to a lack of "catalyst-specific realism" in the generation algorithm. Many generative models do not adequately learn the stereoelectronic preferences of transition metals (e.g., trans influence in square planar complexes). To correct:

- Post-Processing Filter: Implement a rule-based filter that rejects structures with bond angles deviating >15° from idealized geometries (e.g., 90°/180° for octahedral, 90°/120°/180° for trigonal bipyramidal).

- Conformational Search: Perform a constrained conformational search around the metal center, holding metal-ligand bonds fixed, to relax ligand clashes.
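The rule-based angle filter described above can be sketched in plain Python. The function names, the geometry labels, and the choice to compare each ligand-metal-ligand angle against its nearest ideal value are assumptions made for illustration; a production filter would more likely assign each angle to a specific cis or trans position first.

```python
import math
from itertools import combinations

# Idealized L-M-L angles (degrees) quoted in the text.
IDEAL_ANGLES = {
    "octahedral": (90.0, 180.0),
    "trigonal_bipyramidal": (90.0, 120.0, 180.0),
}

def angle_deg(metal, lig_a, lig_b):
    """L-M-L angle in degrees from Cartesian coordinates."""
    v1 = [a - m for a, m in zip(lig_a, metal)]
    v2 = [b - m for b, m in zip(lig_b, metal)]
    dot = sum(p * q for p, q in zip(v1, v2))
    cos_theta = dot / (math.hypot(*v1) * math.hypot(*v2))
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_theta))))

def max_angle_deviation(metal, ligands, geometry):
    """Largest deviation of any L-M-L angle from its nearest ideal value."""
    ideals = IDEAL_ANGLES[geometry]
    worst = 0.0
    for lig_a, lig_b in combinations(ligands, 2):
        angle = angle_deg(metal, lig_a, lig_b)
        worst = max(worst, min(abs(angle - ideal) for ideal in ideals))
    return worst

def passes_angle_filter(metal, ligands, geometry, tol=15.0):
    return max_angle_deviation(metal, ligands, geometry) <= tol

# Hypothetical donor-atom positions (Å) around a metal at the origin.
metal = (0.0, 0.0, 0.0)
ideal_oct = [(2, 0, 0), (-2, 0, 0), (0, 2, 0), (0, -2, 0), (0, 0, 2), (0, 0, -2)]
distorted = ideal_oct[:4] + [(1.2, 0.0, 1.6), (0, 0, -2)]
ok_ideal = passes_angle_filter(metal, ideal_oct, "octahedral")
ok_distorted = passes_angle_filter(metal, distorted, "octahedral")
```

Matching to the nearest ideal angle is lenient by design, so this filter catches gross distortions rather than subtle ones.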

Q4: I observe unrealistic spin states in my generated Fe(III) catalyst. How do I ensure the correct spin state? A: Spin-state errors are common for first-row transition metals (Fe, Co, Mn). You must explicitly define and validate the spin state.

- Protocol: Compute the energy of each plausible spin state (e.g., high-spin, intermediate-spin, and low-spin for Fe(III)), ideally after relaxing the geometry in each state, using a QM method known for good performance on spin states (e.g., TPSSh/def2-TZVP). The ground state is the one with the lowest energy. Always specify the spin multiplicity in subsequent calculations.
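Once the per-state energies are available, ranking them is simple bookkeeping. The sketch below assumes the energies have already been computed with a QM code and are supplied in Hartree; the function name and the example values are hypothetical.

```python
HARTREE_TO_KCAL = 627.509  # kcal/mol per Hartree

def rank_spin_states(energies_hartree):
    """Return (ground-state multiplicity, {multiplicity: relative energy in kcal/mol}).

    `energies_hartree` maps spin multiplicity (2S+1) to total energy in Hartree.
    """
    ground = min(energies_hartree, key=energies_hartree.get)
    e_ground = energies_hartree[ground]
    relative = {
        mult: (energy - e_ground) * HARTREE_TO_KCAL
        for mult, energy in energies_hartree.items()
    }
    return ground, relative

# Hypothetical energies for an Fe(III) (d5) complex:
# multiplicity 2 = low-spin, 4 = intermediate-spin, 6 = high-spin.
energies = {2: -2345.6712, 4: -2345.6650, 6: -2345.6790}
ground, relative = rank_spin_states(energies)
```

When two states lie within a few kcal/mol of each other, the ordering is sensitive to the functional, so such cases are worth cross-checking with a second method rather than trusting a single ranking.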

Detailed Experimental Protocols

Protocol 1: QM Validation of Metal Coordination Sphere

- Objective: Generate a quantum-mechanically valid starting structure for a generated catalyst.

- Methodology:

- Isolate the metal atom and its directly coordinated atoms (first coordination sphere) from the generated structure.

- Terminate dangling bonds from removed ligands with hydrogen atoms (cap atoms).

- Optimize the geometry of this truncated cluster using Density Functional Theory (DFT).

- Software: Gaussian, ORCA, or PySCF.

- Functional: B3LYP-D3(BJ) for general use; TPSSh for spin-state sensitive metals.

- Basis Set: def2-SVP for geometry optimization.

- Solvation Model: Implicit solvent (e.g., SMD, CPCM) appropriate to your experimental conditions.

- Calculate vibrational frequencies to confirm a true energy minimum (no imaginary frequencies).

- Extract the optimized metal-ligand bond lengths and angles for use in force field parameterization or as a validation benchmark.

Protocol 2: Stability Assessment via Short MD Simulation

- Objective: Probe the kinetic stability of a generated catalyst in a simulated solvent environment.

- Methodology:

- System Preparation: Place the QM-validated catalyst structure in a cubic simulation box (e.g., 10 Å padding) filled with explicit solvent molecules (e.g., water, acetonitrile, toluene).

- Parameterization: Assign force field parameters. Use a specialized force field (e.g., MCPB.py for AMBER, metal.center for CHARMM) or manually add bonds/angles for the metal center based on Protocol 1 results.

- Minimization: Perform energy minimization in two stages: (i) restrain heavy atoms of the catalyst, minimize solvent; (ii) minimize entire system without restraints.

- Equilibration: Run a short (50-100 ps) NVT simulation at 298 K, followed by a 100 ps NPT simulation at 1 bar to equilibrate solvent density, with positional restraints on the catalyst heavy atoms.

- Production Run: Run an unrestrained MD simulation for 5-10 ns at constant temperature (298 K) and pressure (1 bar). Monitor the root-mean-square deviation (RMSD) of the metal coordination sphere and check for ligand dissociation events.
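The ligand-dissociation check in the production run can be automated from a metal-ligand distance time series. This is a minimal sketch: the function name, the distance cutoff, and the requirement of several consecutive frames above the cutoff (to ignore brief thermal fluctuations) are assumptions, and the distances would normally be extracted with your MD engine's analysis tools.

```python
def first_dissociation(distances, cutoff, min_frames=5):
    """Return the first frame index that starts a run of at least `min_frames`
    consecutive frames with distance > cutoff, or None if no such run exists."""
    run_start = None
    for frame, distance in enumerate(distances):
        if distance > cutoff:
            if run_start is None:
                run_start = frame
            if frame - run_start + 1 >= min_frames:
                return run_start
        else:
            run_start = None  # bond re-formed; reset the run
    return None

# Hypothetical metal-ligand distances (Å), one value per saved frame.
trajectory = [2.05, 2.10, 3.20, 2.08, 2.90, 3.10, 3.40, 3.60, 3.90]
event_frame = first_dissociation(trajectory, cutoff=2.6, min_frames=3)
stable = first_dissociation([2.05, 2.08, 2.11, 2.04, 2.07], cutoff=2.6, min_frames=3)
```

In this example the single spike at frame 2 is ignored and the event is reported at frame 4, where the distance stays above the cutoff. Multiplying the frame index by the trajectory save interval gives the event time.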

Table 1: Idealized Bond Length Ranges for Common Transition Metal Coordination Motifs

| Metal Center | Common Oxidation State | Coordination Geometry | Typical Ligand | Bond Length Range (Å) | Notes |

|---|---|---|---|---|---|

| Pd | +2 | Square Planar | N (amine) | 2.00 - 2.10 | trans influence can lengthen bonds. |

| Pd | +2 | Square Planar | P (phosphine) | 2.20 - 2.35 | Strong trans influence. |

| Pt | +2 | Square Planar | N (pyridine) | 2.00 - 2.05 | Less labile than Pd analogues. |

| Ru | +2 | Octahedral | N (bipyridine) | 2.05 - 2.15 | In polypyridyl complexes. |

| Fe | +2 (HS) | Octahedral | N (porphyrin) | 2.00 - 2.10 | High-spin (HS). Low-spin (LS) is shorter. |

| Rh | +1 | Square Planar | P (phosphite) | 2.20 - 2.30 | In hydroformylation catalysts. |

| Ir | +3 | Octahedral | C (cyclopentadienyl) | 2.10 - 2.20 | In Cp*Ir(III) half-sandwich catalysts. |

Table 2: Common Failure Mode Diagnostic Checklist

| Failure Symptom | Primary Diagnostic Check | Recommended Corrective Action |

|---|---|---|

| Unphysical bond length | Compare to CSD (Cambridge Structural Database) statistics. | Re-parameterize using QM (Protocol 1). |

| Ligand dissociation in MD | Check coordination number & CFSE. | Apply geometry filter; use stronger field ligand. |

| Incorrect spin state | Perform multi-reference character check. | Run spin state energy ordering calculation. |

| Poor geometry score | Analyze metal-ligand-ligand angles. | Perform constrained conformational search. |

| Unstable in solvent | Calculate solvation free energy. | Adjust ligand hydrophobicity/hydrophilicity. |

Diagrams

Title: Catalyst Validation and Correction Workflow

Title: Common Failure Modes in Transition Metal Catalysts

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Catalyst Validation

| Item | Function | Example/Note |

|---|---|---|

| Quantum Chemistry Software | Performs DFT calculations for geometry optimization, electronic structure, and spin-state analysis. | ORCA (free), Gaussian, Q-Chem. |

| Molecular Dynamics Engine | Simulates catalyst behavior in explicit solvent over time to assess stability. | GROMACS (free), AMBER, NAMD. |

| Specialized Force Field | Provides accurate parameters for metal-ligand bonds, angles, and dihedrals. | MCPB.py (for AMBER), metal.center (for CHARMM). |

| Chemical Database | Source of experimentally validated structural data for bond length/angle comparison. | Cambridge Structural Database (CSD). |

| Geometry Analysis Tool | Programmatically checks generated structures against geometric rules. | CCDC Python API, RDKit. |

| Implicit Solvent Model | Accounts for solvation effects in QM calculations when explicit solvent is prohibitive. | SMD, CPCM. |

| Wavefunction Analysis Software | Analyzes QM output to determine metal oxidation state, bond orders, and orbital contributions. | Multiwfn (free), NBO. |

The Role of Molecular Graphs vs. 3D Conformers in Validity Assessment

Troubleshooting Guide & FAQs

Q1: When validating AI-generated catalyst structures, my molecular graph-based validity score is high, but the 3D conformer shows severe steric clashes. Which assessment should I trust? A1: Trust the 3D conformer assessment. Molecular graphs represent topological connectivity but lack spatial atomic coordinates. A high graph-based score confirms correct atom/bond types but not physical feasibility. The 3D conformer reveals actual atomic distances. Proceed with a conformational search and geometry optimization using the 3D structure as the starting point. If clashes persist after optimization, the generated structure is likely invalid.

Q2: My generated transition metal complex is topologically valid but produces energetically unstable 3D conformers. How can I diagnose the issue? A2: This often indicates incorrect stereochemistry or coordination geometry not captured by the 2D graph. Follow this diagnostic protocol:

- Generate multiple conformers (e.g., using RDKit's ETKDG or CREST).

- Perform a quick quantum mechanical (QM) single-point energy calculation (e.g., a semi-empirical method such as PM6 or GFN2-xTB, or DFT with a small basis set).

- Compare the computed metal-ligand bond lengths and angles against known crystal structures in databases like the Cambridge Structural Database (CSD). Invalid geometries will show significant deviations.

Q3: Are there specific functional groups or catalyst motifs where molecular graph validity is most likely to diverge from 3D conformer validity? A3: Yes. See the table below for high-risk motifs.

| Motif Type | Graph-Based Assessment Pitfall | Recommended 3D Validation Check |

|---|---|---|

| Chelating Ligands | May show correct donor atoms but incorrect bite angles. | Calculate the L1–M–L2 bite angle; compare to typical range (e.g., 85°-95° for many bidentate ligands). |

| Bulky Ligands (e.g., tBu, Ph) | Shows proper connectivity but misses steric shielding. | Calculate steric maps (e.g., using SambVca) or measure percent buried volume (%Vbur). |

| Macrocycles | Valid ring connectivity but incorrect ring conformation/planarity. | Check for strained dihedral angles and out-of-plane deviations. |

| Chiral Centers | May specify stereochemistry but can generate racemized or inverted 3D conformers. | Verify absolute configuration (R/S) matches the specified graph stereochemistry. |

Q4: What is a standard experimental protocol to systematically compare graph vs. 3D validity for a set of generated catalyst candidates? A4: Comparative Validity Assessment Protocol

Materials:

- Input: Set of AI-generated molecular structures (SMILES strings).

- Software: RDKit (or similar) for graph operations, Conformer generation toolkit (RDKit, OpenBabel, OMEGA), Quantum Chemistry package (xtb, Gaussian, ORCA) for optimization.

- Reference Data: CSD or ICSD for known catalyst geometries.

Method:

- Graph Validity: For each SMILES string, use RDKit to check for valency errors, unusual bond orders, and forbidden atom patterns. Record a binary valid/invalid flag.

- 3D Conformer Generation: For graph-valid molecules, generate an initial 3D conformer using the ETKDG method.

- Geometry Optimization: Refine the 3D structure using a force field (MMFF94s) or semi-empirical QM method (xtb GFN2).

- 3D Validity Metrics: Calculate the following for the optimized structure:

- Steric Strain: Total energy from the force field or QM calculation.

- Clash Score: Number of atom pairs within 70% of their combined van der Waals radii.

- Geometric Deviation: For known motifs, compute RMSD of key distances/angles to CSD averages.

- Thresholding: Define thresholds for each 3D metric (e.g., clash score > 5 = invalid). Compare final 3D validity outcome to the initial graph validity.
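The clash score defined in the method can be computed directly from Cartesian coordinates. The sketch below is an illustration under assumptions: the function name and input layout are hypothetical, the radii are Bondi van der Waals values for a few elements only, and bonded pairs are passed in explicitly and skipped, since covalently bonded atoms always sit well inside 70% of their combined radii.

```python
import itertools
import math

# Bondi van der Waals radii in Å (subset; extend for other elements).
VDW_RADII = {"H": 1.20, "C": 1.70, "N": 1.55, "O": 1.52}

def clash_score(atoms, bonded_pairs, scale=0.70):
    """Count non-bonded atom pairs closer than `scale` x (sum of vdW radii).

    `atoms` is a list of (element, (x, y, z)); `bonded_pairs` is an iterable
    of index pairs to exclude (bonded atoms, optionally 1-3 neighbours too).
    """
    excluded = {frozenset(pair) for pair in bonded_pairs}
    count = 0
    for (i, (el_i, pos_i)), (j, (el_j, pos_j)) in itertools.combinations(enumerate(atoms), 2):
        if frozenset((i, j)) in excluded:
            continue
        limit = scale * (VDW_RADII[el_i] + VDW_RADII[el_j])
        if math.dist(pos_i, pos_j) < limit:
            count += 1
    return count

# Hypothetical coordinates: an O-H and an N-H fragment, plus a stray O
# placed too close to the N-H fragment.
atoms = [
    ("O", (0.00, 0.00, 0.00)),
    ("H", (0.96, 0.00, 0.00)),
    ("N", (5.00, 0.00, 0.00)),
    ("H", (5.00, 1.01, 0.00)),
    ("O", (5.00, 0.00, 1.50)),
]
score = clash_score(atoms, bonded_pairs=[(0, 1), (2, 3)])
```

Here the stray oxygen clashes with both the nitrogen and its hydrogen, giving a score of 2, which would then be compared against whatever threshold the protocol sets.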

Q5: What are the essential computational tools and reagents for this field? A5: Research Reagent Solutions

| Item Name | Function/Description | Example/Provider |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for graph operations, SMILES parsing, and basic 3D conformer generation. | www.rdkit.org |

| CREST | Conformer Rotamer Ensemble Sampling Tool for robust, first-principles based conformer sampling. | Grimme Group, University of Bonn |

| xtb | Semi-empirical quantum chemistry program for fast geometry optimization and energy calculation. | Grimme Group, University of Bonn |

| Cambridge Structural Database (CSD) | Repository of experimental organic and metal-organic crystal structures for geometric reference data. | CCDC |

| SambVca | Web-based tool for calculating steric parameters of catalysts, like percent buried volume (%Vbur). | Cavallo Group |

| ETKDG Algorithm | Distance geometry-based method for generating statistically good 3D conformers from 2D graphs. | Implemented in RDKit |

Workflow & Relationship Diagrams

Title: Validity Assessment Workflow: Graph vs. 3D Conformer

Title: Complementary Roles of Graph and 3D Validity Checks

Key Databases and Benchmarks for Valid Catalyst Structures (e.g., OCELOT, Catalysis-Hub)

Troubleshooting Guides and FAQs

FAQ 1: I have downloaded a catalyst structure from OCELOT, but my DFT calculation fails with convergence errors. What could be wrong?

- Answer: This is often due to initial geometries with unrealistic bond lengths or angles. OCELOT contains computationally generated structures that may not be fully relaxed. Protocol: Always perform a preliminary geometry optimization using a low-level method (e.g., GFN-xTB) before starting high-level DFT calculations. Check for atoms placed impossibly close (<0.5 Å) and manually adjust.

FAQ 2: When comparing my calculated adsorption energy for a reaction on Catalysis-Hub to the referenced value, I find a large discrepancy (>0.5 eV). How should I proceed?

- Answer: Systematically verify your computational setup against the benchmark. Protocol: 1) Confirm you are using the exact functional, basis set/pseudopotential, and dispersion correction cited. 2) Ensure your slab model has the same Miller index, thickness, and cell size. 3) Check the adsorbate placement and coverage. 4) Replicate the referenced energy referencing method (e.g., which gas-phase energies are used for H₂, O₂).
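Step 4 of this protocol is a frequent source of large discrepancies, and a short worked example makes the effect concrete. The sketch below computes an O* adsorption energy with two common gas-phase references, one half of O₂ versus H₂O minus H₂. The function names and all total energies are hypothetical placeholders, not values from Catalysis-Hub.

```python
def e_ads_o_vs_o2(e_slab_o, e_slab, e_o2):
    """O* adsorption energy referenced to 1/2 O2(g), in eV."""
    return e_slab_o - e_slab - 0.5 * e_o2

def e_ads_o_vs_water(e_slab_o, e_slab, e_h2o, e_h2):
    """O* adsorption energy referenced to H2O(g) - H2(g), in eV."""
    return e_slab_o - e_slab - (e_h2o - e_h2)

# Hypothetical DFT total energies (eV).
e_slab, e_slab_o = -300.00, -305.50
e_o2, e_h2o, e_h2 = -9.86, -14.22, -6.77

ads_o2_ref = e_ads_o_vs_o2(e_slab_o, e_slab, e_o2)
ads_water_ref = e_ads_o_vs_water(e_slab_o, e_slab, e_h2o, e_h2)
reference_shift = ads_water_ref - ads_o2_ref
```

With these inputs the two conventions differ by about 2.5 eV for the same slab calculation, so a mismatch in referencing alone can easily exceed the 0.5 eV discrepancy described in the question.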

FAQ 3: How do I handle a "structure invalid" flag for a generated metal-organic framework (MOF) catalyst when checking against the Cambridge Structural Database (CSD)?

- Answer: The flag typically indicates unrealistic metal-linker bonds. Protocol: 1) Use the CSD Python API to query known M-L bond distances for your metal and linker type. 2) Calculate the root-mean-square deviation (RMSD) of your generated structure's bonds from the CSD-derived distribution. 3) Apply a constrained optimization, forcing bonds within the typical range (mean ± 3σ) before further validation.

Key Databases and Benchmarks: Quantitative Comparison

| Database/Benchmark Name | Primary Content | Key Metrics Provided | Update Frequency | Access Method |

|---|---|---|---|---|

| OCELOT | AI-generated, potentially novel inorganic crystal and catalyst structures. | Formation energy, site diversity, synthetic accessibility score. | Ongoing (model updates) | Python library (ocelot.chemist.org) |

| Catalysis-Hub | Experimentally & computationally derived surface reaction energies & barriers. | Adsorption energies, activation barriers, turnover frequencies. | Weekly | Web interface & API (www.catalysis-hub.org) |

| Cambridge Structural Database (CSD) | Experimentally determined 3D structures of organic & metal-organic crystals. | Bond lengths, angles, torsions, coordination geometries. | Quarterly | Web interface & API (www.ccdc.cam.ac.uk) |

| Materials Project | Computed properties of known and predicted inorganic materials. | Formation energy, band gap, elastic tensor, surface energies. | Biannual | Web interface & API (materialsproject.org) |

| NOMAD | A repository for raw & processed computational materials science data. | Input files, output files, parsed properties (energies, forces). | Continuous | Web interface & API (nomad-lab.eu) |

Experimental Protocol: Validating a Generated Catalyst Structure

This protocol integrates key databases to assess the molecular validity of a computationally generated catalyst structure.

1. Initial Structure Acquisition & Pre-screening

- Input: A candidate catalyst structure (e.g., a bimetallic surface or MOF) from a generative model.

- Tools: OCELOT library, Pymatgen/ASE libraries.

- Method:

a. Calculate the basic stability descriptor, formation_energy_per_atom, using Pymatgen's EFormation analyzer.

b. Filter out candidates with positive formation energy (>50 meV/atom) as likely unstable.

2. Geometric Validation Against Known Structures

- Tools: CSD Python API (csd-python-api), CCDC's Molecule tools.

- Method:

a. For each molecular fragment or building block, perform a substructure search in the CSD.

b. Extract all matching bond distances and angles to create a probability distribution.

c. For your generated structure, compute the Z-score for each bond: (d_generated - d_mean_CSD) / d_stdev_CSD.

d. Flag any bond with |Z-score| > 3 as a "geometric outlier" for manual inspection.
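The Z-score test in this step can be sketched with the standard library once the reference distances have been exported from the CSD. No CSD API call is made here; the function names, the bond labels, and every distance below are hypothetical stand-ins for real query results.

```python
import statistics

def bond_z_scores(generated, reference):
    """Z-score of each generated bond length against its reference distribution.

    `generated` maps a bond label to one distance (Å); `reference` maps the
    same label to a list of experimental distances (Å).
    """
    z_scores = {}
    for label, distance in generated.items():
        ref = reference[label]
        z_scores[label] = (distance - statistics.mean(ref)) / statistics.stdev(ref)
    return z_scores

def geometric_outliers(z_scores, limit=3.0):
    """Labels of bonds whose |Z-score| exceeds `limit`."""
    return sorted(label for label, z in z_scores.items() if abs(z) > limit)

# Hypothetical reference samples and generated-structure values (Å).
reference = {
    "Zn-O": [1.95, 1.98, 2.00, 2.02, 2.05],
    "Zn-N": [2.05, 2.08, 2.10, 2.12, 2.15],
}
generated = {"Zn-O": 2.20, "Zn-N": 2.13}

z = bond_z_scores(generated, reference)
outliers = geometric_outliers(z)
```

The Z-score implicitly assumes a roughly normal, unimodal distribution. Bond types with bimodal CSD distributions (for example, mixed spin states or coordination numbers) are better split into separate reference sets before scoring.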

3. Functional Property Benchmarking

- Tools: Catalysis-Hub API, Automated Computational Workflow (e.g., FireWorks).

- Method:

a. Identify a key probe reaction (e.g., CO oxidation, O₂ reduction).

b. Query Catalysis-Hub for this reaction on similar material classes to get benchmark adsorption energies (E_ads_bench ± stdev).

c. Perform DFT calculations on your generated structure using the exact same functional, settings, and gas-phase references as the benchmark data.

d. Calculate the deviation: ΔE = E_ads_calculated - E_ads_bench. Validate if |ΔE| is within 2 standard deviations of the benchmark spread.

4. Final Validity Scoring

- Generate a composite validity score (e.g., 0-1) weighted from: i) Formation energy (40%), ii) Geometric Z-score distribution (30%), iii) Property deviation ΔE (30%). Structures scoring above 0.7 proceed to advanced characterization.
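The weighting scheme above can be written as a short function. The protocol does not specify how each component is mapped onto a 0-1 scale, so this sketch assumes the three sub-scores have already been normalized (for instance, the geometry sub-score could be the fraction of bonds with |Z-score| ≤ 3). The names and example values are hypothetical.

```python
# Weights taken from the protocol: 40% / 30% / 30%.
WEIGHTS = {"formation": 0.40, "geometry": 0.30, "property": 0.30}

def clamp01(value):
    """Clip a sub-score into the [0, 1] interval."""
    return max(0.0, min(1.0, value))

def composite_validity(sub_scores, weights=WEIGHTS):
    """Weighted sum of pre-normalized sub-scores (each assumed in [0, 1])."""
    return sum(weight * clamp01(sub_scores[name]) for name, weight in weights.items())

def proceeds(sub_scores, threshold=0.7):
    """True if the composite score is above the protocol's 0.7 cut-off."""
    return composite_validity(sub_scores) > threshold

# Hypothetical normalized sub-scores for one candidate.
candidate = {"formation": 0.9, "geometry": 0.8, "property": 0.5}
score = composite_validity(candidate)
advance = proceeds(candidate)
```

A weighted sum lets a strong formation-energy score compensate for a weak property score. If any single failure should be disqualifying, a hard filter per component applied before scoring would be the stricter design.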

Workflow Diagram: Catalyst Validation Protocol

Research Reagent Solutions Toolkit

| Item Name | Function in Validation Protocol | Example Source / Specification |

|---|---|---|

| CSD Python API | Programmatic access to query and analyze millions of experimental crystal structures for geometric validation. | Cambridge Crystallographic Data Centre (CCDC) |

| Catalysis-Hub API | Retrieves benchmark reaction energies and barriers for specific materials and adsorbates to calibrate calculations. | Catalysis-Hub.org |

| Pymatgen Library | Python library for analyzing materials data, crucial for calculating formation energies and structural manipulation. | Materials Virtual Lab |

| ASE (Atomic Simulation Environment) | Python library for setting up, running, and analyzing results from electronic structure calculations (DFT). | https://wiki.fysik.dtu.dk/ase/ |

| GFN-xTB Code | Fast semi-empirical quantum method for pre-optimizing generated structures and checking for obvious instability. | Grimme Group, University of Bonn |

| VASP / Quantum ESPRESSO | High-accuracy DFT software for computing electronic energies and properties for final benchmarking. | Commercial / Open-Source |

| FireWorks Workflow Manager | Automates and manages the sequence of computational jobs (pre-opt, DFT, analysis) for high-throughput validation. | Materials Project Team |

Building Validity In: Generative AI Models and Post-Generation Correction Techniques

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My Valence-Aware Graph Neural Network (V-GNN) fails to generate chemically valid molecular graphs, often producing atoms with impossible valences (e.g., pentavalent carbon). What are the primary debugging steps? A1: This typically indicates a failure in the constraint enforcement layer. Follow this protocol:

- Constraint Logging: Implement logging for the valence calculation at each node before and after the hard constraint projection step (e.g., the integer programming or rule-based correction).

- Check Allowed States: Verify the predefined "allowed connectivity" matrix (e.g., based on atom type and formal charge) is complete and correct for your periodic table scope. A missing entry for a common oxidation state will cause failures.

- Sanity Check on Small Graphs: Test the model's generation step on a trivial, single-atom seed. It should only propose attachments that satisfy that atom's valence rules.

Q2: During autoregressive generation with valency constraints, my model becomes exceptionally slow after ~20 steps. How can I improve inference speed? A2: The combinatorial explosion of the validity check is likely the cause. Implement these solutions:

- Cached Valence Tracking: Maintain a dynamic vector of remaining valence capacity for every node in the partial graph, updated after each action, rather than recomputing from scratch.

- Pruned Action Space: At each step, pre-filter the action mask to eliminate actions that would immediately violate valence rules based on the cached valence state, before scoring by the policy network.

- Approximate Projection: For the V-GNN, consider switching from an exact integer solver to a fast, approximate iterative projection for inference, reserving the exact solver for training or final validation.
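The cached valence tracking and pruned action space described above can be sketched in plain Python. This is a minimal illustration, not part of any particular V-GNN implementation: the `MAX_VALENCE` table, the `PartialGraph` class, and the `(node, element, order)` action layout are all assumptions introduced here.

```python
from dataclasses import dataclass, field

# Assumed maximum valences for a few neutral atoms (illustrative only).
MAX_VALENCE = {"C": 4, "N": 3, "O": 2, "H": 1}


@dataclass
class PartialGraph:
    """Partial molecular graph with a cached remaining-valence vector."""
    elements: list = field(default_factory=list)
    remaining: list = field(default_factory=list)  # cached capacity per node
    bonds: dict = field(default_factory=dict)      # (i, j) -> bond order

    def add_atom(self, element):
        self.elements.append(element)
        self.remaining.append(MAX_VALENCE[element])
        return len(self.elements) - 1

    def add_bond(self, i, j, order):
        # Constant-time cache update instead of recomputing all valences.
        self.bonds[(min(i, j), max(i, j))] = order
        self.remaining[i] -= order
        self.remaining[j] -= order

    def valid_attachments(self, candidates=("C", "N", "O"), orders=(1, 2, 3)):
        """Return (node, new_element, order) actions that respect valence."""
        actions = []
        for node, capacity in enumerate(self.remaining):
            if capacity == 0:
                continue  # saturated node: all of its actions are pruned
            for element in candidates:
                for order in orders:
                    if order <= capacity and order <= MAX_VALENCE[element]:
                        actions.append((node, element, order))
        return actions


g = PartialGraph()
c0 = g.add_atom("C")
o1 = g.add_atom("O")
g.add_bond(c0, o1, 2)  # C=O: carbon keeps 2 free valences, oxygen is saturated
mask = g.valid_attachments()
```

Only the actions returned by `valid_attachments` would then be scored by the policy network, so the cost per step grows with the number of unsaturated nodes rather than with a full validity re-check of the graph.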

Q3: How do I quantitatively evaluate whether my "valid" generated catalyst structures are also realistic and not just formally valid? A3: Formal valence correctness is a minimum bar. Use this multi-metric validation protocol:

Table 1: Key Metrics for Evaluating Generated Catalyst Structures

| Metric Category | Specific Metric | Target/Threshold for Realism | Tool/Library |

|---|---|---|---|

| Geometric | Ring Strain Estimation (via RDKit) | Low strain energy (< 20 kcal/mol for key rings) | RDKit MMFF94 or UFF |

| Steric | Vina Score (Docking) | Negative score indicating favorable binding | AutoDock Vina |

| Electronic | Partial Charge Range (Gasteiger) | Charges within typical bounds for the element and hybridization | RDKit ComputeGasteigerCharges |

| Stability | DFT-based Single-Point Energy | Relative energy within ~50 kcal/mol of known stable conformers | ORCA, Gaussian |

Experimental Protocol for Metric Calculation:

- Input: Generate 1000 formally valid SMILES strings from your model.

- Sanitization: Use RDKit (`Chem.SanitizeMol`) with `catchErrors=True` to filter any remaining valence errors.

- Conformer Generation: For the sanitized set, generate a single 3D conformer using RDKit's `EmbedMolecule` with the ETKDGv3 parameter set.

- Metric Pipeline: Pass the 3D conformers through the sequential analysis in Table 1, starting with fast geometric/steric checks before more costly electronic/stability calculations.

Q4: When training an autoregressive model with a hard validity reward, the policy collapses to a few safe actions. How can I maintain diversity? A4: This is a classic exploration-exploitation issue with sparse rewards.

- Curriculum Learning: Start training on small, simple graphs (e.g., C1-C10 chains) where the chance of valid action is high, then gradually increase the maximum allowed size and complexity.

- Entropy Regularization: Add a strong entropy bonus term (`β * H(π)`) to the policy gradient loss during early training to encourage action diversity.

- Reward Shaping: Don't just reward complete validity. Add intermediate rewards for subgraphs that are locally valid (e.g., correct functional groups) to provide a denser learning signal.
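As a small illustration of the entropy bonus, the sketch below computes H(π) for a discrete action distribution and folds it into a scalar loss. It is written as a loss to be minimized, so the bonus enters with a negative sign. The function names and the value of β are assumptions for illustration; a real training loop would use the autograd tensors of its deep learning framework.

```python
import math


def entropy(probs):
    """Shannon entropy H(pi) = -sum(p * log p) of an action distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def regularized_loss(policy_loss, probs, beta):
    """Subtract the entropy bonus so that minimizing the loss favors diversity."""
    return policy_loss - beta * entropy(probs)


uniform = [0.25, 0.25, 0.25, 0.25]     # diverse policy
collapsed = [0.97, 0.01, 0.01, 0.01]   # policy stuck on one "safe" action

loss_uniform = regularized_loss(1.0, uniform, beta=0.1)
loss_collapsed = regularized_loss(1.0, collapsed, beta=0.1)
```

For equal policy loss, the collapsed distribution receives the higher total loss, which is the pressure that keeps the policy from converging prematurely on a few safe actions.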

Q5: What is the recommended hardware setup for training these large, constrained generative models on catalyst-sized molecules (≤ 100 heavy atoms)? A5: Memory is often the limiting factor. The following configuration is recommended:

Table 2: Recommended Research Reagent Solutions & Hardware

| Item / Reagent | Function / Specification | Notes for Catalyst Research |

|---|---|---|

| GPU (Training) | NVIDIA A100 80GB or H100 80GB | Essential for batch processing large graphs. The 80GB VRAM handles the dense adjacency matrices for ~100-atom systems. |

| CPU & RAM | 64-core CPU, 512GB DDR4 RAM | For parallel data preprocessing, feature extraction, and running validation chemistry pipelines (RDKit, DFT pre-optimization). |

| Software Library | PyTorch Geometric (PyG) or DGL | Use with custom MessagePassing layers that integrate valence checks. |

| Validity Solver | Gurobi or SCIP Optimizer | For the exact integer linear programming (ILP) projection in V-GNNs. Gurobi, which offers free academic licenses, is highly recommended for speed. |

| Conformer Generator | RDKit's ETKDGv3 | The standard for initial 3D coordinate generation from SMILES/ graphs. Critical for downstream steric validation. |

Visualizations

Valence-Aware GNN Generation Loop

Autoregressive Training & Generation Pipeline

Technical Support Center

Troubleshooting Guides

Issue 1: Generated SMILES strings are syntactically invalid or chemically impossible.

- Q: Why does my SMILES-based model frequently output strings like `C(C` or atoms with five explicit bonds?

- A: This is a common issue in autoregressive SMILES generation. The model may not have fully learned the strict grammar rules of SMILES or chemical valence constraints.

- Solution:

- Data Preprocessing: Ensure your training set contains only valid, canonical SMILES. Use toolkits like RDKit to filter and standardize input data.

- Rule-Based Post-Processing: Implement a post-generation validation step using RDKit's `Chem.MolFromSmiles()`. Discard or correct molecules that fail to parse.

- Constrained Generation: Employ algorithms like the Branch-and-Bound method during inference to ensure the generation process only produces tokens that lead to valid SMILES and obey valence rules.

Issue 2: 3D-generated molecules have unrealistic bond lengths, angles, or severe steric clashes.

- Q: My 3D coordinate model produces catalyst structures where atoms are overlapping or bonds are abnormally long/short. How can I fix this?

- A: This indicates the model's failure to learn the physical and geometric constraints of molecular systems.

- Solution:

- Energy Minimization: Pass all generated 3D structures through a force field (e.g., MMFF94, UFF) or a quick quantum mechanical (QM) minimization step using RDKit or ORCA. This will relax the geometry.

- Training Data Augmentation: Train your model on conformationally-relaxed and energy-minimized 3D structures, not raw crystal or DFT output data which may contain noise.

- Loss Function Modification: Incorporate a geometric penalty term (e.g., harmonic potential for bond lengths and angles) into your training loss function to steer the model towards physically plausible configurations.
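The geometric penalty term mentioned above can be illustrated with a harmonic bond-length potential. This is a minimal sketch in plain Python: the force constant `k`, the penalty `weight`, and the ideal length of 1.5 Å are assumed values, and a real model would compute the same quantity on differentiable tensors.

```python
import math


def harmonic_bond_penalty(coords, bonds, ideal_lengths, k=1.0):
    """Sum of k * (r - r0)**2 over bonded atom pairs (lengths in Å)."""
    penalty = 0.0
    for i, j in bonds:
        r = math.dist(coords[i], coords[j])
        penalty += k * (r - ideal_lengths[(i, j)]) ** 2
    return penalty


def total_loss(reconstruction_loss, coords, bonds, ideal_lengths, weight=0.1):
    """Task loss plus the weighted geometric penalty."""
    penalty = harmonic_bond_penalty(coords, bonds, ideal_lengths)
    return reconstruction_loss + weight * penalty


# A C-C bond stretched to 2.0 Å against an assumed ideal length of 1.5 Å.
stretched = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
relaxed = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)]
bonds = [(0, 1)]
ideal = {(0, 1): 1.5}

penalty_stretched = harmonic_bond_penalty(stretched, bonds, ideal)
penalty_relaxed = harmonic_bond_penalty(relaxed, bonds, ideal)
```

An analogous term over bond angles can be added in the same way, with the equilibrium values taken from the same reference data used for validation.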

Issue 3: Model generates chemically valid but catalytically inactive or unstable structures.

- Q: The molecules are valid, but they lack common catalytic motifs (e.g., specific ligand-metal coordination) or contain unstable functional groups.

- A: Validity does not guarantee functionality. The model may be learning general chemistry but not the specific constraints of catalyst design.

- Solution:

- Transfer Learning: Fine-tune a pre-trained generative model on a smaller, high-quality dataset of known catalyst structures relevant to your target reaction.

- Reinforcement Learning (RL): Use an RL framework with a reward function that scores generated structures not only for validity (using RDKit) but also for desired catalytic properties (e.g., presence of a metal coordination site, quantified via a scoring function or a simple surrogate model).

FAQs

Q: For improving validity specifically, which approach has a higher initial success rate: SMILES-based or 3D-based generation? A: SMILES-based generation typically yields a higher percentage of syntactically and chemically valid molecules (e.g., >90% with modern models) because it operates on a learned grammar. 3D coordinate generation often produces a lower initial validity rate concerning physical realism (e.g., <50% without refinement) due to the continuous and unconstrained nature of coordinate space. However, 3D methods directly address conformational validity, which SMILES ignores.

Q: What are the computational resource trade-offs between these methods? A: SMILES-based models are generally faster to train and sample from, as they deal with discrete sequences. 3D generation is more computationally intensive, requiring significant resources for both training (handling 3D point clouds or graphs) and post-processing (geometry optimization). See Table 1.

Q: How can I combine the strengths of both approaches in my catalyst design pipeline? A: A common hybrid pipeline is: 1) Use a SMILES-based model to generate a large pool of valid, candidate scaffold structures. 2) Convert the top candidates to 3D conformers. 3) Use a 3D refinement model or classical computational chemistry methods (e.g., DFT) to optimize the geometry and evaluate the catalytic site.

Q: What are the key metrics to track for validity in my experiments? A:

- For SMILES: Percentage of parseable SMILES (RDKit success rate), percentage of molecules with correct valence, and percentage of unique molecules.

- For 3D: Percentage of molecules without steric clashes (using van der Waals radius checks), average deviation of bond lengths/angles from ideal values (e.g., from the Cambridge Structural Database), and the energy strain after force field minimization.

Table 1: Comparison of SMILES vs. 3D Coordinate-Based Generation

| Aspect | SMILES-Based Generation | 3D Coordinate-Based Generation |

|---|---|---|

| Primary Validity Focus | Syntax & Chemical Valence (2D) | Geometric & Conformational Validity (3D) |

| Typical Initial Validity Rate* | High (e.g., 85-95%) | Lower (e.g., 10-50% before refinement) |

| Key Invalidity Artifacts | Incorrect syntax, invalid valence | Unphysical bond lengths/angles, steric clashes |

| Common Post-Processing | Rule-based filtering, validity checks | Force field/Energy minimization, clash removal |

| Training Data Complexity | Lower (1D sequences) | Higher (3D point clouds/graphs, often requiring aligned data) |

| Computational Cost (Training) | Lower | Significantly Higher |

| Implicit 3D Information | None | Explicit |

*Rates are illustrative and depend heavily on model architecture and training data.

Experimental Protocols

Protocol 1: Benchmarking Validity in SMILES-Based Generation Objective: To measure and compare the chemical validity rate of different SMILES generative models. Materials: See "Research Reagent Solutions" below. Procedure:

- Train or load a pre-trained generative model (e.g., a Transformer or RNN model) on a dataset of catalyst SMILES strings.

- Use the model to generate 10,000 novel SMILES strings.

- For each generated string, use RDKit's `Chem.MolFromSmiles()` function (with `sanitize=False`, so that this step tests syntax only) to attempt to parse it into a molecule object.

- Record a successful parse as a "valid" molecule.

- Further filter the valid molecules using RDKit's `SanitizeMol()` check to ensure correct valence.

- Calculate the percentage of parseable and sanitizable molecules out of the 10,000 generated.

Protocol 2: Assessing Geometric Validity in 3D Generation Objective: To quantify the geometric realism of molecules generated by a 3D coordinate model. Materials: See "Research Reagent Solutions" below. Procedure:

- Generate 1,000 molecular structures (atomic coordinates and types) using your 3D model.

- For each structure, use Open Babel to assign bond orders and create a preliminary molecular graph.

- Apply a quick force field minimization (e.g., using UFF in RDKit) with a step limit (e.g., 200 steps) to relax severe clashes.

- Calculate the following metrics for each minimized structure:

- Steric Clash Score: Count of atom pairs where the interatomic distance is less than 70% of the sum of their van der Waals radii.

- Bond Length Deviation: RMSD of all bond lengths compared to typical bond lengths from a reference database.

- Define a validity threshold (e.g., zero steric clashes and bond length RMSD < 0.2 Å). Calculate the percentage of generated molecules that meet this threshold.
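The two metrics and the validity threshold from Protocol 2 can be sketched as follows. The van der Waals radii are Bondi values for a few elements. Excluding directly bonded pairs from the clash count is an assumption made here (bonded atoms always sit closer than 70% of their summed radii), and the coordinates and reference length are made-up illustration data.

```python
import math
from itertools import combinations

# Bondi van der Waals radii in Å for a few elements.
VDW_RADII = {"H": 1.20, "C": 1.70, "N": 1.55, "O": 1.52}


def steric_clash_count(elements, coords, bonded_pairs, factor=0.70):
    """Count non-bonded pairs closer than factor * (sum of vdW radii)."""
    bonded = {frozenset(pair) for pair in bonded_pairs}
    clashes = 0
    for i, j in combinations(range(len(elements)), 2):
        if frozenset((i, j)) in bonded:
            continue  # assumption: bonded neighbors are not clashes
        limit = factor * (VDW_RADII[elements[i]] + VDW_RADII[elements[j]])
        if math.dist(coords[i], coords[j]) < limit:
            clashes += 1
    return clashes


def bond_length_rmsd(coords, bonded_pairs, reference_lengths):
    """RMSD between observed bond lengths and reference lengths (Å)."""
    squared = [
        (math.dist(coords[i], coords[j]) - reference_lengths[(i, j)]) ** 2
        for i, j in bonded_pairs
    ]
    return math.sqrt(sum(squared) / len(squared))


def is_geometrically_valid(elements, coords, bonded_pairs, reference_lengths,
                           max_rmsd=0.2):
    """Apply the example threshold: zero clashes and bond RMSD below 0.2 Å."""
    return (steric_clash_count(elements, coords, bonded_pairs) == 0
            and bond_length_rmsd(coords, bonded_pairs, reference_lengths) < max_rmsd)


elements = ["C", "O", "C"]
bonds = [(0, 1)]
reference = {(0, 1): 1.43}
clean = [(0.0, 0.0, 0.0), (1.43, 0.0, 0.0), (5.0, 0.0, 0.0)]
clashing = [(0.0, 0.0, 0.0), (1.43, 0.0, 0.0), (1.0, 1.0, 0.0)]

clean_ok = is_geometrically_valid(elements, clean, bonds, reference)
clash_ok = is_geometrically_valid(elements, clashing, bonds, reference)
```

The fraction of generated structures for which `is_geometrically_valid` returns `True` is the validity rate reported in the final step of the protocol.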

Diagrams

SMILES vs 3D Generation Workflow

Hybrid Validity Optimization Pipeline

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Validity Research |

|---|---|

| RDKit | Open-source cheminformatics toolkit. Core function: Parsing SMILES, checking chemical validity (sanitization), generating 3D conformers (ETKDG), force field minimization, and calculating molecular descriptors. |

| Open Babel | Chemical toolbox for format conversion and interoperation. Core function: Handling various chemical file formats, useful in pre-processing 3D data and intermediate file conversions. |

| PyTorch / TensorFlow | Deep learning frameworks. Core function: Building, training, and deploying generative models (e.g., LSTMs, Transformers for SMILES; GNNs for 3D graphs). |

| ORCA / Gaussian | Quantum chemistry software packages. Core function: Providing high-accuracy ground truth geometries and energies for training 3D models or for final validation of generated catalyst structures. |

| Cambridge Structural Database (CSD) | Repository of experimentally determined organic and metal-organic crystal structures. Core function: Source of ground-truth, physically realistic bond lengths, angles, and torsional angles for training and benchmarking 3D generative models. |

| MMFF94/UFF Force Fields | Molecular mechanics force fields. Core function: Rapid energy minimization and geometric validation of generated 3D structures to remove steric clashes and improve conformational realism. |

Within the broader thesis on "Improving molecular validity in generated catalyst structures research," a critical technical challenge is correcting chemically impossible or unstable structures produced by generative models. Post-hoc validity correction applies a suite of computational checks and fixes to ensure molecules obey valency rules, have plausible stereochemistry, and possess synthetically accessible functional groups. This guide details the implementation using RDKit, a core cheminformatics toolkit.

Troubleshooting Guides & FAQs

Q1: My correction script fails with "AtomValenceException." What does this mean and how do I fix it?

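A1: This RDKit exception indicates an atom exceeds its allowed number of covalent bonds (e.g., a carbon with 5 bonds). Note that the SANITIZE_CLEANUP step of SanitizeMol only normalizes a small set of known non-standard representations (e.g., a neutral pentavalent nitro nitrogen is converted to its charge-separated form); it does not repair arbitrary over-valent atoms. To diagnose the failure, parse the structure with sanitize=False and call Chem.DetectChemistryProblems to identify the offending atoms, then either correct the formal charge or bond order at those atoms or discard the molecule.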

Q2: After correction, my 3D conformer generation fails. Why? A2: Invalid or undefined stereochemistry is a common cause. Before generating 3D coordinates, ensure tetrahedral and double-bond stereochemistry is properly assigned.

Q3: How can I detect and remove unstable or highly reactive functional groups from generated catalysts? A3: Use a substructure search with predefined SMARTS patterns for undesirable groups (e.g., peroxides, reactive halides). The following table lists common patterns for catalyst stability filtering.

| Unstable Group | SMARTS Pattern | Rationale for Removal |

|---|---|---|

| Peroxide | [OX2][OX2] | Prone to hazardous decomposition. |

| Acid Halide | [CX3](=[OX1])[F,Cl,Br,I] | Highly reactive, moisture-sensitive. |

| Epoxide | [OX2]1[CX4][CX4]1 | May undergo uncontrolled ring-opening. |

| α-Halo Ketone | [CX3](=[OX1])[CX4][F,Cl,Br,I] | Alkylating agent, potential toxicity. |

Q4: What is the standard workflow for applying a full post-hoc correction pipeline? A4: A robust, sequential pipeline is recommended. The following diagram outlines the logical flow from a raw generated structure to a valid, sanitized molecule.

(Post-Hoc Validity Correction Workflow)

Experimental Protocol: Validity Correction for Generated Organocatalysts

This protocol is cited from benchmark studies in the thesis.

1. Input Preparation: Compile generated molecular structures (e.g., from an RNN or GAN) as a list of SMILES strings in a .smi file.

2. Initial Sanitization Script:

3. Success Metrics: Track the validity rate before and after correction.

| Correction Step | Valid Structures (%) | Average MW | Structures Removed |

|---|---|---|---|

| Pre-Correction | 65.2 | 348.7 | N/A |

| Post Sanitization | 89.5 | 346.1 | 8.1% (Unparseable) |

| Post Stability Filter | 85.3 | 341.9 | 4.2% (Unstable Groups) |

The Scientist's Toolkit: Research Reagent Solutions

| Item (Software/Library) | Primary Function | Relevance to Validity Correction |

|---|---|---|

| RDKit (2023.09.x+) | Open-source cheminformatics. | Core library for molecule I/O, sanitization, substructure filtering, and stereochemistry handling. |

| Open Babel / Pybel | Chemical file format conversion. | Useful for preprocessing structures from various generative model outputs (e.g., .xyz, .mol2). |

| molVS | Molecule validation and standardization. | Provides additional standardization rules (tautomer normalization, charge neutralization) post-RDKit. |

| Custom SMARTS Library | User-defined substructure patterns. | Essential for filtering catalyst-specific unstable moieties not in general-purpose filters. |

| Jupyter Notebook | Interactive computing environment. | Platform for developing, debugging, and visualizing the correction pipeline step-by-step. |

Incorporating Synthetic Accessibility (SA) and Retrosynthetic Scores

Troubleshooting Guide & FAQs

Q1: My generative model produces catalyst structures with high predicted activity but extremely low SA scores. How can I adjust my model to prioritize synthetic feasibility?

A: This is a common issue where the model learns the target property (activity) without the synthetic constraint. Implement a multi-objective optimization or a constrained generation protocol.

- Solution: Integrate the SA score directly into the loss function or as a post-generation filter. Use a weighted sum: `Total Loss = α * (Activity Loss) + β * (SA Loss)`, where β is gradually increased during training. Alternatively, use a reinforcement learning framework with the SA score as part of the reward.

- Protocol: Method for Weighted Loss Adjustment

- Pre-train your generative model (e.g., GNN, Transformer) on a broad chemical corpus.

- Fine-tune on your catalytic reaction data using a loss function focused on activity prediction.

- In a subsequent fine-tuning phase, incorporate the SA score loss. Start with β=0.1 and increase by 0.1 every 5 epochs until reaching β=0.5.

- Validate each epoch on a held-out set, monitoring both activity prediction RMSE and average SA score of generated structures.
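The β schedule in the protocol above (start at 0.1, add 0.1 every 5 epochs, stop at 0.5) can be written as a small helper. This is a sketch under the assumption that epochs are counted from zero within the SA fine-tuning phase; the function names and the default α = 1.0 are illustrative.

```python
def sa_weight(epoch, start=0.1, step=0.1, every=5, cap=0.5):
    """Return beta for a 0-indexed epoch of the SA fine-tuning phase."""
    # round() removes floating-point noise such as 0.30000000000000004.
    return min(cap, round(start + step * (epoch // every), 10))


def total_loss(activity_loss, sa_loss, beta, alpha=1.0):
    """Weighted sum: alpha * (Activity Loss) + beta * (SA Loss)."""
    return alpha * activity_loss + beta * sa_loss


schedule = [sa_weight(epoch) for epoch in (0, 4, 5, 10, 20, 100)]
```

With these defaults β reaches its final value of 0.5 at epoch 20 and stays there for the remainder of training.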

Q2: When using retrosynthetic scoring, what is a reasonable threshold for considering a generated catalyst "synthesizable"?

A: Thresholds depend on the specific retrosynthetic analysis tool (e.g., ASKCOS, AiZynthFinder, IBM RXN). The scores are not universally calibrated. You must establish a baseline using known, easily synthesized catalysts from your domain.

- Data Summary: Common Retrosynthetic Score Benchmarks

| Tool | Score Type | Typical Threshold for "Readily Synthesizable" | Notes |

|---|---|---|---|

| ASKCOS | Tree Score (0-1) | > 0.6 | Based on probability of reaction success at each step. |

| AiZynthFinder | Combined Score (0-1) | > 0.7 | Product of policy and feasibility probabilities. |

| IBM RXN | Reaction Confidence (0-1) | > 0.9 (per step) | Applied to each retrosynthetic step; a low-probability step breaks the route. |

- Protocol: Establishing a Domain-Specific Threshold

- Compile a reference set of 20-50 catalyst molecules known to be synthesized in 3-5 steps from commercial building blocks.

- Run batch retrosynthetic analysis on this set using your chosen tool.

- Calculate the 25th percentile of the resulting scores. Use this value as your initial conservative threshold (e.g., "Score ≥ X").

- Manually inspect the proposed routes for molecules scoring just above and below this threshold to calibrate.
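The percentile step of this protocol can be sketched with Python's standard library. The reference scores below are made-up placeholders, not output from any retrosynthesis tool, and the choice of the inclusive quantile method is an assumption.

```python
import statistics


def domain_threshold(reference_scores):
    """Return the 25th percentile of the reference-set scores."""
    first_quartile, _, _ = statistics.quantiles(
        reference_scores, n=4, method="inclusive"
    )
    return first_quartile


def is_synthesizable(score, threshold):
    """Apply the 'Score >= X' rule from the protocol."""
    return score >= threshold


# Hypothetical route scores for a small reference set of known catalysts.
reference_scores = [0.5, 0.6, 0.7, 0.8, 0.9]
threshold = domain_threshold(reference_scores)
```

Candidates scoring close to `threshold` on either side are the ones worth inspecting manually in the final calibration step.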

Q3: The computational cost of calculating SA and retrosynthetic scores for every generated structure is prohibitive. Are there efficient approximations?

A: Yes. Use fast, rule-based SA estimators during generation and reserve full retrosynthetic analysis for final candidate ranking.

- Strategy: Implement a two-stage filtering pipeline.

- Stage 1 (Fast Filter): Use a rapid SA scorer like RDKit's `SA_Score` (based on fragment contributions) or `SYBA` (a Bayesian classifier) to screen out egregiously complex structures in real-time during generation. Set a lenient cutoff (e.g., SA_Score < 6).

- Stage 2 (Deep Analysis): For the top N candidates (e.g., 100-200) that pass Stage 1 and have high activity scores, perform full retrosynthetic analysis. Use this to rank and prioritize candidates for experimental validation.
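The two-stage strategy can be sketched as a function that takes the scorers as callables, so that the expensive retrosynthetic call is only made for the shortlist. The function name, the scorer arguments, and all scores below are hypothetical; in practice `fast_sa` would wrap a fast SA estimator and `retro_score` a retrosynthesis tool.

```python
def two_stage_filter(candidates, fast_sa, activity, retro_score,
                     sa_cutoff=6.0, top_n=100):
    """Cheap SA screen first, expensive retrosynthesis only on the shortlist."""
    # Stage 1: lenient fast filter (lower SA score = easier to make).
    passed = [c for c in candidates if fast_sa(c) < sa_cutoff]
    # Keep only the top-N most active survivors.
    shortlist = sorted(passed, key=activity, reverse=True)[:top_n]
    # Stage 2: rank the shortlist by the expensive retrosynthetic score.
    return sorted(shortlist, key=retro_score, reverse=True)


# Toy data: all numbers are invented for illustration.
sa_scores = {"cat_A": 3.2, "cat_B": 7.5, "cat_C": 4.1, "cat_D": 5.0}
activities = {"cat_A": 0.90, "cat_B": 0.95, "cat_C": 0.70, "cat_D": 0.20}
retro_scores = {"cat_A": 0.55, "cat_C": 0.80}
expensive_calls = []


def expensive_retro(name):
    """Stand-in for a slow retrosynthesis call; records what was analyzed."""
    expensive_calls.append(name)
    return retro_scores[name]


ranked = two_stage_filter(list(sa_scores), sa_scores.get, activities.get,
                          expensive_retro, top_n=2)
```

In this toy run only two of the four candidates ever reach the expensive scorer, which is the point of the design: the cost of Stage 2 is bounded by `top_n` regardless of how many structures the generator produces.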

Q4: How do I handle cases where a promising catalyst has a poor SA score due to one complex subunit? Can I deconstruct it into simpler analogs?

A: This is a key application of retrosynthetic analysis in catalyst design. Use the retrosynthetic tree to identify the problematic substructure ("synthon") and propose simpler isosteric replacements.

- Protocol: Catalyst Simplification via Retrosynthesis

- Input the high-activity, low-SA catalyst into the retrosynthetic tool.

- Analyze the generated tree(s) to identify the earliest precursor that introduces the complex subunit.

- Isolate that subunit as the "complexity hotspot."

- Search a database (e.g., ChEMBL, Enamine) for commercially available fragments that mimic the key pharmacophoric features of the hotspot but with higher SA scores.

- Propose 2-3 simplified analog structures and re-run your activity prediction model to check for maintained performance.

Experimental Workflow for Molecular Validity

Title: Catalyst Generation & Synthetic Feasibility Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item / Tool | Function / Purpose |

|---|---|

| RDKit | Open-source cheminformatics toolkit; its Contrib directory provides the fast SA_Score implementation (`sascorer`) based on molecular fragment complexity. |

| SYBA (SYnthetic Bayesian Accessibility) | A fast, fragment-based classifier for estimating synthetic accessibility; useful for high-throughput initial filtering. |

| ASKCOS | A retrosynthetic planning software suite that evaluates synthesis pathways and provides a probabilistic "Tree Score". |

| AiZynthFinder | Open-source tool using a Monte Carlo tree search for retrosynthetic route finding; outputs a combined confidence score. |

| IBM RXN | Cloud-based platform using transformer models for retrosynthesis prediction and reaction condition recommendation. |

| Commercial Building Block Libraries (e.g., Enamine REAL, Mcule, MolPort) | Databases of readily purchasable chemical fragments; crucial for checking precursor availability in proposed routes. |

| Reinforcement Learning (RL) Framework (e.g., custom, OpenAI Gym) | Allows the generative model to be trained with a reward function combining property prediction and SA/retrosynthetic scores. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My generated transition-metal complex has unrealistic bond lengths or angles. What are the most common causes and solutions? A1: Unrealistic geometry typically stems from inadequate force field parameters or incorrect oxidation/coordination state assignment.

- Action: 1) Verify the assigned metal oxidation state matches your ligand set. 2) Use a specialized force field (e.g., UFF4MOF, GFN-FF) for geometry optimization before DFT. 3) Employ a conformation generator that respects metal coordination geometry (e.g., as implemented in RDKit's `ETKDGv3` with constraints).

Q2: During automated library generation, I encounter chemically impossible or highly strained coordination geometries. How can I filter these out programmatically? A2: Implement a multi-step steric and electronic validation filter.

- Action: 1) Apply a ligand cone angle and steric parameter (e.g., Tolman cone angle, Buried Volume %Vbur) threshold based on known complexes. 2) Use a rule-based filter to flag unrealistic denticity or coordination numbers for the metal center (e.g., no 8-coordinate Pt(II)). 3) Perform a quick semi-empirical (e.g., GFN2-xTB) single-point energy calculation to flag extreme outliers.

Q3: My DFT calculations on generated complexes fail to converge or yield unrealistic electronic energies. What initial checks should I perform? A3: This often points to incorrect spin state or problematic initial guess.

- Action: 1) Systematically check plausible spin states for the metal center. If the SCF still fails to converge, switch to a more robust convergence algorithm (e.g., SCF=QC in Gaussian, SlowConv in ORCA). 2) Ensure relativistic effects are accounted for with an effective core potential (e.g., SDD for 4d/5d metals) and that the basis set includes polarization functions for coordinating atoms. 3) Start with a cheaper method (e.g., PBE-D3/def2-SVP) to pre-optimize before the high-level calculation.

Q4: How can I ensure the generated metal-ligand bonds are valid (i.e., correctly classified as dative or covalent, with the correct bond order)? A4: This requires pre-defined bond connectivity rules and post-calculation analysis.

- Action: 1) In your generation algorithm, use a curated "ligand connection dictionary" that defines the specific donor atom and formal bond type for each ligand. 2) After geometry optimization, analyze the Mayer bond order or Wiberg bond index via wavefunction analysis to confirm bonding expectations.

Q5: What are the best practices for handling solvation and counterions in a high-throughput generation and screening pipeline? A5: Consistency in implicit and explicit solvation is key for valid comparisons.

- Action: 1) For generation, use a standardized protonation state model (e.g., at pH 7.4). 2) For charged complexes, always include an appropriate, explicitly modeled counterion placed via electrostatic potential during pre-optimization. 3) Use a consistent implicit solvation model (e.g., SMD, COSMO-RS) across all single-point energy calculations.

Experimental Protocols

Protocol 1: Initial Library Generation and Rule-Based Filtering

- Input: SMILES strings of metal precursors (e.g., `[Pd+2]`) and a curated list of ligand SMILES.

- Ligation: Use a combinatorial algorithm (e.g., Python script using RDKit) to create complexes via predefined coordination atoms. Assign initial coordination geometry (e.g., square planar for Pd(II)).

- Filtering: Apply SMARTS-based rules to remove complexes where ligand steric clash score exceeds threshold or metal coordination number is invalid.

- Output: A filtered set of 3D structures in `.sdf` format.

Protocol 2: Pre-Optimization and Validity Check

- Software: Employ molecular mechanics with metal-aware force field (UFF4MOF) via Open Babel or RDKit.

- Steps: Optimize generated 3D structure with constraints on metal-ligand distance ranges. Calculate ligand steric descriptors (e.g., `%Vbur` using SambVca 2.1 web tool).

- Validation: Check final geometry against Cambridge Structural Database (CSD) mined parameters (Table 1). Discard complexes outside 3σ of mean bond lengths.

Protocol 3: DFT-Level Single-Point Validation

- Method: Use ORCA 5.0.3. Employ `PBE0` functional with `D3(BJ)` dispersion correction.

- Basis Set: `def2-SVP` for all atoms, with `def2/J` auxiliary basis for Coulomb fitting.

- Solvation: Apply `CPCM(water)` solvation model. For charged systems, include explicit counterion.

- Calculation: Perform single-point energy, frequency (to confirm no imaginary frequencies), and NBO/Mulliken population analysis. Frequencies are only meaningful at a geometry optimized at the same level of theory, so re-optimize with this method first if the frequency check is required.

- Output: Final electronic energy, molecular orbitals, and bond order data for validity assessment.

Data Presentation

Table 1: Valid Geometric Parameter Ranges for Common Transition Metal Centers (Mined from the Cambridge Structural Database, filtered for R < 0.05 and no errors)

| Metal Center & Oxidation State | Common Coordination Geometry | Typical M-L Bond Length Range (Å)* | Allowed Coordination Numbers | Typical Spin States |

|---|---|---|---|---|

| Pd(II) | Square Planar | 1.95 - 2.05 (Pd-N); 2.25 - 2.40 (Pd-P) | 4, (5) | Singlet |

| Pt(II) | Square Planar | 1.95 - 2.10 (Pt-N); 2.25 - 2.35 (Pt-P) | 4 | Singlet |

| Ru(II) | Octahedral | 2.00 - 2.10 (Ru-Npyridine); 2.15 - 2.30 (Ru-Cl) | 6 | Singlet, Triplet |

| Fe(II) High-Spin | Octahedral | 2.10 - 2.20 (Fe-Namine) | 6, 5 | Quintet |

| Ir(III) | Octahedral | 2.00 - 2.10 (Ir-C); 2.05 - 2.15 (Ir-N) | 6 | Singlet, Triplet |

*L = donor atom from organic ligand.
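Ranges like those in Table 1 lend themselves to a simple rule-based filter. The sketch below encodes only the Pd(II) row and treats the parenthesized coordination number 5 as allowed; the `RULES` layout and the `check_complex` function are assumptions introduced for illustration, and the bond lists are made-up examples.

```python
# Pd(II) values copied from Table 1; other rows can be added the same way.
RULES = {
    ("Pd", 2): {
        "coordination_numbers": {4, 5},
        "bond_ranges": {"N": (1.95, 2.05), "P": (2.25, 2.40)},  # Å
    },
}


def check_complex(metal, oxidation_state, bonds):
    """Return a list of rule violations for one metal center.

    bonds is a list of (donor_element, bond_length_in_angstrom) tuples.
    """
    rules = RULES.get((metal, oxidation_state))
    if rules is None:
        return [f"no rules defined for {metal}({oxidation_state})"]

    problems = []
    if len(bonds) not in rules["coordination_numbers"]:
        problems.append(f"coordination number {len(bonds)} not allowed")
    for donor, length in bonds:
        bounds = rules["bond_ranges"].get(donor)
        if bounds is None:
            continue  # no reference range for this donor atom
        low, high = bounds
        if not low <= length <= high:
            problems.append(f"{metal}-{donor} = {length:.2f} Å outside {low}-{high} Å")
    return problems


good = check_complex("Pd", 2, [("N", 2.00), ("N", 2.02), ("P", 2.30), ("P", 2.32)])
bad = check_complex("Pd", 2, [("N", 2.00)] * 5 + [("P", 2.90)])
```

Returning the list of violations rather than a single boolean makes it easier to log why a generated complex was rejected.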

Table 2: Comparison of Methods for Pre-Optimization of Generated Complexes

| Method | Speed (Complexes/Hr) | Average RMSD vs. DFT Geometry (Å) | Handles Unusual Coordination? | Recommended Use Case |

|---|---|---|---|---|

| UFF (Generic) | 10,000 | 0.45 | Poor | Initial very fast screening |

| UFF4MOF | 2,500 | 0.15 | Good | General-purpose for MOFs/Organometallics |

| GFN-FF | 1,500 | 0.12 | Very Good | Diverse systems with unknown parameters |

| GFN2-xTB | 500 | 0.08 | Excellent | Final pre-filter before DFT |

The Scientist's Toolkit

Key Research Reagent Solutions & Essential Materials

| Item | Function/Description |

|---|---|

| RDKit (Open-Source Cheminformatics) | Core library for manipulating molecular structures, generating 3D conformers, and applying SMARTS-based chemical rules. |

| CREST & xTB (Semi-empirical Suite) | Conformer-rotamer ensemble sampling (CREST) and fast quantum chemical calculation (xTB) for pre-screening stability. |

| Cambridge Structural Database (CSD) | Repository of experimentally determined crystal structures to derive validation rules for bond lengths/angles. |

| ORCA / Gaussian (DFT Software) | High-level quantum chemistry packages for final electronic structure validation, spin-state, and bonding analysis. |

| SambVca Web Tool | Calculates steric maps and percent buried volume (%Vbur), critical for assessing ligand crowding. |

| Custom Python Validation Scripts | Scripts to automate the application of geometric and electronic filters across the generated library. |

| def2 Basis Set Family | Balanced basis sets (e.g., def2-SVP, def2-TZVP) with appropriate effective core potentials for transition metals. |

| COSMO-RS / SMD Solvation Models | Continuum solvation models to approximate the effect of solvent on complex stability and properties. |

Mandatory Visualizations

Diagram 1 Title: Automated Workflow for Valid Transition-Metal Complex Generation

Diagram 2 Title: Troubleshooting Unrealistic Complex Geometries

Diagnosing and Fixing Common Invalidity Issues in Generated Catalysts

Troubleshooting Unrealistic Bond Lengths and Angles in Metal-Ligand Interactions

Frequently Asked Questions (FAQs)

Q1: During my catalyst generation, my metal-ligand complexes show Co-N bond lengths of 3.2 Å, far beyond the typical 1.9-2.1 Å range. What is the primary cause? A1: Unrealistically long bonds often stem from incorrect force field parameterization or missing bond order assignments in the molecular builder software. The metal center may not be correctly recognized as coordinatively unsaturated, leading to a lack of defined bonds to donor atoms.

Q2: My generated metallocene catalyst shows a bent ligand geometry with a Cp-M-Cp angle of 120° instead of the expected 180°. How do I diagnose this? A2: This typically indicates a conflict between the hybridization state assigned to the metal and steric repulsion parameters. Check the metal's coordination number and oxidation state definitions in your modeling software. Improper torsional potentials around the metal-ligand pivot can also force unrealistic bending.

Q3: After energy minimization, my transition metal complex collapses, with bond angles deviating >30° from ideal crystal structure data. What should I check first? A3: First, verify the integrity of the initial coordination geometry. Then, scrutinize the non-bonded (van der Waals and electrostatic) parameters for the metal ion. Incorrect partial charges or missing polarization terms can lead to unrealistic collapse during minimization.

Q4: I am seeing inconsistent M-L-M' angles in my generated bimetallic catalyst. What are the key computational parameters to adjust? A4: Focus on the harmonic angle force constants (kθ) and equilibrium angles (θ0) in the force field for the M-L-M' term. Compare your set values against benchmarked databases. Also, ensure the metal atom types are correctly differentiated if the metals are different.

Q5: How can I prevent unrealistic bond lengths when using machine-learned potentials for high-throughput catalyst generation? A5: Ensure your training dataset for the ML potential includes diverse, high-quality crystallographic data with the specific metal and ligand types you are modeling. Regularly validate generated structures against a hold-out set of known, stable complexes. Implement a post-generation filter based on known bond length distributions.

Troubleshooting Guide

Step 1: Initial Validation Check

- Action: Compare generated bond lengths/angles against crystallographic databases (CSD, PDB).

- Protocol:

- Query the Cambridge Structural Database (CSD) for your specific metal-ligand motif.

- Extract mean and standard deviation for the bond lengths and angles in question.

- Calculate the Z-score: (Generated Value - Database Mean) / Database Standard Deviation.

- Flag any parameter with |Z-score| > 3.0 as a critical outlier.
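The Z-score check above can be sketched in a few lines of Python. This is a minimal illustration, not a CSD workflow: the list `reference_co_n` is a hypothetical stand-in for bond lengths returned by a database query, and the function names are invented for this example.

```python
from statistics import mean, stdev


def z_score(generated, reference):
    """Return (generated - mean) / sample standard deviation of the reference set."""
    sigma = stdev(reference)
    if sigma == 0:
        raise ValueError("reference values have zero spread")
    return (generated - mean(reference)) / sigma


def is_critical_outlier(generated, reference, threshold=3.0):
    """Flag a value whose |Z-score| exceeds the threshold (3.0 in Step 1)."""
    return abs(z_score(generated, reference)) > threshold


# Hypothetical Co-N distances in Å, standing in for a real CSD query result.
reference_co_n = [1.92, 1.95, 1.97, 1.98, 2.00, 2.01, 2.03, 2.05, 2.08]

for value in (2.02, 3.20):
    flag = "critical outlier" if is_critical_outlier(value, reference_co_n) else "ok"
    print(f"Co-N = {value:.2f} Å: {flag}")
```

The sample standard deviation is used here; with very small reference sets the Z-score becomes unreliable, so it is worth recording how many database hits the statistics rest on.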

Step 2: Force Field Parameter Audit

- Action: Systematically review and correct force field parameters.

- Protocol:

- Identify the atom types assigned to your metal and coordinating atoms.

- Locate the corresponding bond (kᵣ, r₀) and angle (kθ, θ₀) parameters in your force field file (e.g., .frcmod, .par, .lib).

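- Cross-reference these values with published parameters. General-purpose force fields such as the Merck Molecular Force Field (MMFF) or the Generalized Amber Force Field (GAFF) are a reasonable reference for the organic ligand atoms; terms involving the metal usually have to come from literature specific to your metal.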

- Replace incorrect parameters and ensure consistency across all defined terms.

Step 3: Electronic State Verification

- Action: Confirm the metal oxidation state and spin multiplicity.

- Protocol:

- Manually calculate the expected formal oxidation state based on ligand donor types and overall complex charge.

- Check if the software's assigned atomic partial charges are consistent with this state.

- For DFT-based optimizations, explicitly set the correct multiplicity and verify convergence of the wavefunction.

- Run a single-point electronic calculation to check for unusual charge distributions or spin contamination.
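The manual bookkeeping in this step is simple enough to script. The sketch below assumes the ionic (closed-shell ligand) counting convention, in which the formal oxidation state is the overall complex charge minus the summed ligand charges; the function names are illustrative only.

```python
def formal_oxidation_state(complex_charge, ligand_charges):
    """Formal metal oxidation state = overall charge minus summed ligand charges."""
    return complex_charge - sum(ligand_charges)


def spin_multiplicity(unpaired_electrons):
    """Multiplicity 2S + 1, where S = unpaired_electrons / 2."""
    if unpaired_electrons < 0:
        raise ValueError("number of unpaired electrons cannot be negative")
    return unpaired_electrons + 1


# [Fe(CN)6]4-: six cyanide ligands at -1 each, overall charge -4.
fe_state = formal_oxidation_state(-4, [-1] * 6)

# Cisplatin, Pt(NH3)2Cl2: two neutral ammines, two chlorides, neutral overall.
pt_state = formal_oxidation_state(0, [0, 0, -1, -1])

# A d6 ion such as Fe(II): low spin has 0 unpaired electrons, high spin has 4.
low_spin_d6 = spin_multiplicity(0)
high_spin_d6 = spin_multiplicity(4)

print(fe_state, pt_state, low_spin_d6, high_spin_d6)
```

Which spin state is the ground state still has to be decided from the ligand field or by comparing DFT energies; the helper only converts an electron count into the multiplicity keyword the QM input expects.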

Step 4: Constrained Refinement Protocol

- Action: Apply distance and angle restraints to guide the structure to a realistic geometry.

- Protocol:

- Define acceptable ranges for problematic bonds/angles based on Step 1 data.

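- Apply harmonic distance restraints with a force constant of 500-1000 kJ/mol·nm² during initial minimization. The two common unit systems are not interchangeable: 1000 kJ/mol·nm² is roughly 2.4 kcal/mol·Å², so convert the value if your engine works in kcal/mol·Å².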

- Gradually reduce the force constant over a series of minimization steps (e.g., 1000 → 500 → 100 → 0).

- Monitor the deviation from the target value at each stage to ensure stable refinement.
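To see why the deviation is monitored at each stage, consider a toy one-dimensional model. It is an analytic sketch under stated assumptions, not a real minimizer: the force field is reduced to a single harmonic bond term with hypothetical parameters `r_ff` and `k_ff`, and the restraint is a second harmonic term pulling toward the database target.

```python
def restrained_minimum(r_ff, k_ff, r_target, k_restraint):
    """Analytic minimum of 0.5*k_ff*(r - r_ff)**2 + 0.5*k_restraint*(r - r_target)**2."""
    return (k_ff * r_ff + k_restraint * r_target) / (k_ff + k_restraint)


def staged_release(r_ff, k_ff, r_target, schedule=(1000.0, 500.0, 100.0, 0.0)):
    """Return [(k_restraint, |r - r_target|)] for each stage of the release schedule."""
    report = []
    for k_restraint in schedule:
        r = restrained_minimum(r_ff, k_ff, r_target, k_restraint)
        report.append((k_restraint, abs(r - r_target)))
    return report


# Hypothetical values: the force field prefers 0.21 nm, the database target is 0.20 nm.
stages = staged_release(r_ff=0.21, k_ff=200.0, r_target=0.20)
for k_restraint, deviation in stages:
    print(f"k_restraint = {k_restraint:6.0f} kJ/mol/nm^2  deviation = {deviation:.4f} nm")
```

In this model the structure drifts back to the force field's own equilibrium value once the restraint reaches zero. If the same pattern appears in a real refinement, the restraints were masking a parameter problem, and the audit in Step 2 is the place to fix it.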

Data Presentation

Table 1: Typical Bond Length Ranges for Common Metal-Ligand Interactions

| Metal (M) | Ligand (L) | Typical M-L Bond Length (Å) | Common Source of Error |

|---|---|---|---|

| Fe (II/III) | N (porphyrin) | 1.98 - 2.05 | Incorrect metal hybridization (sp² vs sp³d²) |

| Pt (II) | N (amine) | 2.00 - 2.10 | Missing trans influence parameterization |

| Zn (II) | O (carboxylate) | 1.95 - 2.10 | Overestimated vdW radius for Zn |

| Ru (II) | Cl⁻ | 2.35 - 2.45 | Wrong partial charge on Cl in ionic complexes |

| Mg (II) | O (water) | 2.00 - 2.15 | Lack of explicit polarization model |

Table 2: Benchmarking Generated Structures Against the CSD

| Metric | Acceptable Threshold | Critical Failure Threshold | Corrective Action |

|---|---|---|---|

| Bond Length Z-score | \|Z\| < 2.0 | \|Z\| > 3.0 | Re-parameterize bond term |

| Angle Deviation | < 10° | > 25° | Re-parameterize angle term |

| Torsion Outlier | Match known conformer | Unobserved steric clash | Adjust torsional barrier |

| Coordination Number | Matches oxidation state | Under/Over coordination | Check ligand assignment |

Experimental Protocols

Protocol A: Crystallographic Database Validation for Generated Catalysts

- Export Coordinates: Save your generated catalyst structure as a .mol2 or .pdb file.

- CSD Query: Use the CSD Python API (csd-python-api) to perform a substructure search.

- Define Motif: Search using a simplified SMARTS pattern focusing on the metal and first-shell donors (e.g., "[Fe]~n1ccccc1" for an Fe-pyridine bond, where "~" matches any bond type).

- Filter Results: Restrict hits to structures with R-factors < 0.05 and no disorder.

- Statistical Analysis: Calculate the median and interquartile range for your bond/angle of interest. Plot your generated value against the distribution.
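The statistical step can be sketched with the Python standard library alone. The bond lengths below are hypothetical placeholders for values extracted from filtered CSD hits, and the 1.5 × IQR fence is a common convention assumed here as a numeric stand-in for plotting; the protocol itself does not prescribe it.

```python
from statistics import median, quantiles


def summarize(values):
    """Median, quartiles, and interquartile range of a list of measurements."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    return {"median": median(values), "q1": q1, "q3": q3, "iqr": q3 - q1}


def outside_fences(value, values, k=1.5):
    """True if value lies beyond q1 - k*IQR or q3 + k*IQR (assumed convention)."""
    stats = summarize(values)
    lower = stats["q1"] - k * stats["iqr"]
    upper = stats["q3"] + k * stats["iqr"]
    return value < lower or value > upper


# Hypothetical M-N distances in Å from database hits that passed the quality filter.
hits = [1.92, 1.95, 1.97, 1.98, 2.00, 2.01, 2.03, 2.05, 2.08]

print(summarize(hits))
print("generated 3.20 Å outside fences:", outside_fences(3.20, hits))
```

Median and IQR are less sensitive than mean and standard deviation to the occasional mis-refined crystal structure, which is one reason to prefer them when the hit list is small.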

Protocol B: Parameterization of a Novel Metal-Ligand Term for Force Fields

- Training Set: Compile 10-20 high-resolution crystal structures containing the M-L bond from the CSD.

- QM Target Data: Perform geometry optimization at the B3LYP/def2-SVP level (for 1st row TMs) or PBE0/LANL2DZ (for heavier metals) in a vacuum for each fragment.

- Derive Equilibrium Values: Calculate the average bond length (r₀) and angle (θ₀) from the QM-optimized fragments.

- Derive Force Constants:

- Perform a frequency calculation on the optimized fragment.

- For bond stiffness (kᵣ), use the harmonic approximation, which relates the force constant to the stretching wavenumber ν (in cm⁻¹), the speed of light c, and the reduced mass μ of the two bonded atoms: kᵣ = (2πcν)²μ.

- For angle stiffness (kθ), use the projected frequency from the Hessian matrix.

- Implement & Test: Add the new parameters to your force field library and run a minimization on a test complex not in the training set. Validate against QM geometry.
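The bond force constant expression in this protocol can be checked numerically. The sketch below treats the bond as an isolated two-body oscillator, which is a rough approximation for a metal-ligand stretch coupled to other modes. Carbon monoxide is used only as a sanity check because its stretching wavenumber (about 2143 cm⁻¹) is well known; the function names are illustrative.

```python
import math

C_CM_PER_S = 2.99792458e10      # speed of light in cm/s
AMU_KG = 1.66053906660e-27      # atomic mass unit in kg
AVOGADRO = 6.02214076e23        # 1/mol
J_PER_KCAL = 4184.0


def reduced_mass_amu(m1_amu, m2_amu):
    """Reduced mass of a two-body oscillator, in amu."""
    return m1_amu * m2_amu / (m1_amu + m2_amu)


def bond_force_constant(wavenumber_cm, m1_amu, m2_amu):
    """k = mu * (2*pi*c*wavenumber)**2, returned in N/m."""
    mu_kg = reduced_mass_amu(m1_amu, m2_amu) * AMU_KG
    omega = 2.0 * math.pi * C_CM_PER_S * wavenumber_cm  # angular frequency, rad/s
    return mu_kg * omega ** 2


def to_kcal_per_mol_A2(k_n_per_m):
    """Convert N/m (J/m^2) to kcal/mol/Å^2."""
    return k_n_per_m * 1e-20 * AVOGADRO / J_PER_KCAL


# Sanity check on CO (12C, 16O) with its fundamental stretch near 2143 cm^-1.
k_co = bond_force_constant(2143.0, 12.000, 15.995)
print(f"k(CO) = {k_co:.0f} N/m = {to_kcal_per_mol_A2(k_co):.0f} kcal/mol/Å^2")
```

Check the functional form of the target force field before pasting the value in: AMBER-style bond terms are written as K(r - r₀)² without the factor of one half, so the tabulated constant is half the harmonic kᵣ computed here.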

Diagrams

Title: Troubleshooting Workflow for Metal-Ligand Geometry

Title: Root Causes of Unrealistic Geometry

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Molecular Validation

| Item | Function in Troubleshooting |

|---|---|

| Cambridge Structural Database (CSD) | Provides empirical distributions of bond lengths and angles from validated crystal structures for benchmarking. |

| Merck Molecular Force Field (MMFF94) | A well-validated force field with broad parameter coverage for organic fragments; metal centers generally need additional parameters. |

| Generalized Amber Force Field (GAFF2) | A flexible force field for drug-like molecules; often used as a base for adding metal parameters. |

| Gaussian, ORCA, or PySCF | Quantum chemistry software for generating target QM geometries and energies to validate/derive force field parameters. |

| CSD Python API | Enables automated querying and statistical analysis of the CSD directly within modeling scripts. |

| Metal Center Parameter Builder (MCPB.py) | Builds bonded and charge parameters for metal centers from QM calculations, for use in molecular dynamics simulations (e.g., with AMBER). |

| Visualization Software (VMD, PyMOL) | Critical for visually inspecting distorted geometries and identifying steric clashes or mis-assignments. |

| Conformational Search Algorithm (e.g., CREST) | Systematically explores potential energy surfaces to identify if a distorted geometry is a trapped intermediate or an artifact. |

Resolving Steric Clashes and Conformational Strain in Complex Scaffolds

Troubleshooting Guides & FAQs

Q1: During catalyst design, my computational model suggests a low-energy conformation, but synthesis fails due to implausible bond angles in a fused ring system. What went wrong?

A: The discrepancy often arises from neglecting conformational strain in transition states. The computational model likely minimized the ground state, not the transition state geometry required for synthesis.

- Protocol: Employ a Constrained Systematic Conformational Search.

- Identify the fused ring core and define all rotatable bonds outside it as flexible.

- Apply torsional constraints to the ring system based on known crystallographic data (e.g., from the CSD) to maintain feasible bond angles.

- Perform a Monte Carlo or Molecular Dynamics simulation at 500-700 K to sample high-energy intermediates.

- Cluster results and re-optimize top clusters with DFT (e.g., ωB97X-D/def2-SVP level).

- Data: Typical strain energy thresholds for synthesizable scaffolds:

| Scaffold Type | Acceptable Strain Energy (kcal/mol) | Common Failure Point |

|---|---|---|

| Fused Alicyclic (6,6) | < 12 | Transannular H---H clashes |

| Fused Alicyclic (5,7) | < 20 | Inverted ring puckering |

| Bridged Bicyclic | < 25 | Bridgehead bond elongation > 0.05 Å |

| Metallocycle (Pd, Pt) | < 15 | M-L Bond angle distortion > 15° |

Q2: After introducing a bulky substituent to improve selectivity, molecular dynamics shows the catalyst collapsing into a non-productive pose. How can I rigidify the scaffold?

A: This is a classic steric clash issue. Strategic rigidification is key.

- Protocol: Scaffold Rigidification via in silico Propping.

- Run a short (10 ns) MD simulation of the collapsed structure in explicit solvent.

- Analyze the trajectory to identify the primary atomic pairs involved in the clash (VMD, PyMOL).

- Introduce a rigidity element: Use a scaffold-editing tool (e.g., RDKit, Schrödinger's CombiGlide) to:

- Option A: Replace a single bond with a double bond to restrict rotation.

- Option B: Insert a small alkyne spacer to push groups apart.

- Option C: Form a macrocycle or staple distant parts.

- Re-run MD and compare RMSD and radius of gyration to the original design. A successful rigidification reduces RMSD fluctuation by >40%.
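The final comparison can be sketched as follows. "RMSD fluctuation" is interpreted here as the standard deviation of the RMSD time series, which is an assumption; the two short series are hypothetical values standing in for trajectories analyzed in VMD or a similar tool.

```python
from statistics import pstdev


def fluctuation_reduction(rmsd_original, rmsd_rigidified):
    """Fractional drop in the standard deviation of the RMSD time series."""
    spread_original = pstdev(rmsd_original)
    if spread_original == 0:
        raise ValueError("original trajectory shows no RMSD fluctuation")
    return (spread_original - pstdev(rmsd_rigidified)) / spread_original


def rigidification_succeeded(rmsd_original, rmsd_rigidified, min_reduction=0.40):
    """Apply the >40% criterion from the protocol."""
    return fluctuation_reduction(rmsd_original, rmsd_rigidified) > min_reduction


# Hypothetical RMSD values in Å sampled along each trajectory.
original = [1.0, 2.0, 3.0, 2.0, 1.0, 3.0]
rigidified = [1.0, 1.2, 1.1, 1.0, 1.2, 1.1]

reduction = fluctuation_reduction(original, rigidified)
print(f"fluctuation reduced by {reduction:.0%}")
print("success:", rigidification_succeeded(original, rigidified))
```

Both trajectories should be aligned to the same reference frame and cover the same simulation length, otherwise the two spreads are not comparable.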

Q3: My DFT calculations show a favorable ΔG, but the catalyst is inactive. Could hidden steric clashes in the substrate-bound state be the cause?

A: Absolutely. The catalyst's apo state may be valid, but the substrate-bound state must be evaluated.

- Protocol: Binding Pose Strain Analysis.

- Dock the proposed substrate into your catalyst active site (Glide, GOLD, AutoDock Vina). Generate top 20 poses.

- For each pose, perform a Conformational Strain Energy Calculation:

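- Fully optimize the substrate in vacuo. Record energy $E_{\text{substrate}}^{\text{vacuum}}$.

- Fully optimize the catalyst in vacuo. Record energy $E_{\text{catalyst}}^{\text{vacuum}}$.

- Optimize the catalyst-substrate complex. Record $E_{\text{complex}}$.

- Calculate the strain: $\Delta E_{\text{strain}} = E_{\text{complex}} - \left[E_{\text{substrate}}^{\text{vacuum}} + E_{\text{catalyst}}^{\text{vacuum}}\right]$. Because this difference also includes the catalyst-substrate interaction energy, a positive value means that distortion outweighs any stabilizing contacts.

- Poses with $\Delta E_{\text{strain}}$ > 8-10 kcal/mol are likely artifacts and indicate severe steric incompatibility.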
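The bookkeeping for this protocol can be sketched as below. It implements the energy difference as written in the steps above, assumes all energies come from the same level of theory and are given in Hartree, and uses the upper end of the 8-10 kcal/mol window as the default cutoff. The pose names and energies are hypothetical.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509


def strain_kcal(e_complex, e_substrate, e_catalyst):
    """E_complex - (E_substrate + E_catalyst), converted from Hartree to kcal/mol."""
    return (e_complex - (e_substrate + e_catalyst)) * HARTREE_TO_KCAL_PER_MOL


def flag_strained_poses(pose_energies, e_substrate, e_catalyst, cutoff=10.0):
    """Return the names of poses whose energy difference exceeds the cutoff."""
    return [
        name
        for name, e_complex in pose_energies.items()
        if strain_kcal(e_complex, e_substrate, e_catalyst) > cutoff
    ]


# Hypothetical energies in Hartree for the relaxed fragments and two docked poses.
e_substrate_vacuum = -232.100
e_catalyst_vacuum = -1500.400
poses = {"pose_1": -1732.510, "pose_2": -1732.480}

flagged = flag_strained_poses(poses, e_substrate_vacuum, e_catalyst_vacuum)
print("poses above cutoff:", flagged)
```

Keeping the cutoff as a parameter makes it easy to rerun the screen at 8 kcal/mol and see which poses sit in the borderline window.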

Experimental Workflow for Molecular Validity

Title: Catalyst Validity Screening Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Resolving Steric/Strain Issues |

|---|---|

| GFN2-xTB Software | Fast, semi-empirical quantum method for initial conformational searches and identifying severe clashes in large systems. |

| Cambridge Structural Database (CSD) | Repository of experimental crystal structures to validate plausible bond lengths, angles, and torsions for novel scaffolds. |

| Conformer Generation Algorithm (ETKDG) | Distance geometry-based method (in RDKit) for generating diverse, realistic initial 3D conformers for screening. |

| Non-Covalent Interaction (NCI) Plot Index | Visualizes steric clashes (red isosurfaces) and stabilizing interactions (green) in DFT-optimized structures. |

| Molecular Mechanics Force Field (MMFF94) | Used for preliminary, high-throughput minimization and clash detection before more costly DFT calculations. |

| Density Functional Theory (ωB97X-D) | DFT functional including dispersion correction, essential for accurate final strain energy calculations. |

| Explicit Solvent MD Box (TIP3P Water) | Molecular dynamics in explicit solvent reveals solvation-driven collapse or clashes not seen in vacuo. |

| Torsional Drive Scan Scripts | Automated scripts (e.g., with Gaussian or ORCA) to systematically rotate bonds and map strain energy profiles. |

Addressing Unstable Oxidation States and Unlikely Coordination Geometries

Troubleshooting Guide & FAQs

Q1: During my DFT calculation of a transition metal catalyst, I get an "SCF convergence failure" error. The metal center has a proposed +1 oxidation state that is uncommon. How do I proceed? A: This often indicates an unrealistic electronic configuration. First, verify the plausibility of the +1 state for your metal in that ligand field, for example by consulting standard reduction potentials for the relevant redox couple.

- Protocol: As a preliminary chemical-intuition check, use the Lever electronic parameter (LEP) sum. Add up the tabulated parameters of all ligands in the complex. For an unlikely oxidation state, this sum will often fall outside the range in which the metal's common oxidation states are stabilized.