Beyond Trial-and-Error: How Reinforcement Learning is Revolutionizing Multi-Objective Catalyst Design for Drug Discovery

This article provides a comprehensive guide for researchers and drug development professionals on applying reinforcement learning (RL) to optimize catalysts across multiple, often competing, objectives like activity, selectivity, and stability.

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on applying reinforcement learning (RL) to optimize catalysts across multiple, often competing, objectives like activity, selectivity, and stability. We explore the foundational concepts of RL in a chemical context, detail practical methodologies and recent applications, address common challenges and optimization strategies, and compare RL's performance against traditional computational and experimental approaches. The synthesis offers a roadmap for integrating this powerful AI paradigm into next-generation catalyst discovery pipelines.

Reinforcement Learning 101 for Catalysis: Core Concepts and Why It's a Game-Changer

Defining the Multi-Objective Optimization Problem in Catalyst Design

Within the broader thesis on using reinforcement learning (RL) for multi-objective catalyst optimization, the precise definition of the optimization problem is the critical first step. This involves moving from a single metric (e.g., yield) to a multi-dimensional objective space where competing goals must be balanced. For catalytic systems, particularly in pharmaceutical development, this typically includes activity, selectivity, and stability.

Core Objectives and Quantitative Targets

The primary objectives are derived from both catalytic performance metrics and practical development constraints. Current literature and industrial targets emphasize the following:

Table 1: Typical Multi-Objective Targets in Heterogeneous Catalysis for Pharmaceutical Applications

| Objective | Metric | Target Range | Measurement Technique |

|---|---|---|---|

| Activity | Turnover Frequency (TOF) | > 10 s⁻¹ | Kinetic analysis of initial rates |

| Selectivity | % Desired Product (e.g., enantiomeric excess) | > 99% | GC/MS, HPLC, Chiral SFC |

| Stability | Time to 10% activity loss (T₉₀) | > 100 hours | Long-duration flow reactor test |

| Cost | Noble metal loading (wt%) | < 0.5% | X-ray fluorescence (XRF) |

| Environmental Impact | E-factor (kg waste/kg product) | < 25 | Process mass intensity calculation |

Mathematical Problem Formulation

The optimization problem is formally defined for an RL agent. The state (s) is the catalyst descriptor space (composition, structure, synthesis parameters). The action (a) is a modification to this space. The reward (R) is a scalar function of the multiple objectives.

Multi-Objective Reward Function:

R(s,a) = w₁ * f(Activity) + w₂ * g(Selectivity) + w₃ * h(Stability) - w₄ * i(Cost)

where wᵢ are weights defining the trade-off preference, and f, g, h, i are normalization functions scaling each objective to a comparable range (e.g., 0-1).
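As a minimal sketch, the weighted-sum reward above could be computed as follows. The min-max normalization, the bounds, and the default weights are illustrative assumptions, not values taken from a specific study.

```python
def minmax(value, lo, hi):
    """Scale value to [0, 1] given assumed bounds lo < hi, clipping outliers."""
    return min(1.0, max(0.0, (value - lo) / (hi - lo)))


def reward(activity, selectivity, stability, cost, weights=(0.4, 0.3, 0.2, 0.1)):
    """R = w1*f(Activity) + w2*g(Selectivity) + w3*h(Stability) - w4*i(Cost)."""
    w1, w2, w3, w4 = weights
    f = minmax(activity, 0.0, 20.0)       # TOF in s^-1 (assumed range)
    g = minmax(selectivity, 50.0, 100.0)  # % desired product (assumed range)
    h = minmax(stability, 0.0, 200.0)     # T90 in hours (assumed range)
    i = minmax(cost, 0.0, 1.0)            # noble metal loading in wt% (assumed range)
    return w1 * f + w2 * g + w3 * h - w4 * i


# Example catalyst sitting exactly at the Table 1 thresholds for TOF, T90, and loading
r = reward(activity=10.0, selectivity=99.0, stability=100.0, cost=0.5)
```

Changing the weights shifts which trade-off the agent is rewarded for, so in practice they would be tuned or swept rather than fixed once.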

The goal of the RL agent is to learn a policy π(a|s) that maximizes the expected cumulative reward over time, effectively navigating the Pareto frontier of optimal catalyst designs.
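A scalar reward fixes one trade-off in advance. To inspect the Pareto frontier itself, evaluated candidates can be filtered for non-dominance. The sketch below assumes every objective has already been normalized so that larger is better; the candidate scores are made up for illustration.

```python
def dominates(a, b):
    """True if a is at least as good as b on every objective and strictly better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(points):
    """Return the non-dominated subset, preserving input order."""
    return [p for p in points if not any(dominates(q, p) for q in points if q is not p)]


# (activity, selectivity, stability) scores in [0, 1]; hypothetical values
candidates = [(0.9, 0.4, 0.5), (0.6, 0.8, 0.7), (0.5, 0.7, 0.6), (0.3, 0.95, 0.2)]
front = pareto_front(candidates)
```

Here the third candidate is dropped because the second is better on all three objectives; the remaining three represent distinct trade-offs.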

Experimental Protocols for Objective Quantification

Protocol 4.1: High-Throughput Kinetic Screening for Activity & Selectivity

Objective: Simultaneously determine TOF and selectivity for catalyst libraries.

Materials: Automated liquid handling station, parallel pressure reactors, GC/MS with autosampler.

Procedure:

- Catalyst Array Preparation: Using an automated dispenser, prepare a 96-well plate with varied catalyst compositions (e.g., varying ratios of Pd, Pt, and support).

- Reaction Initiation: Under inert atmosphere, add 2 mL of substrate solution (0.1 M in appropriate solvent) to each well using the liquid handler.

- Kinetic Monitoring: Seal reactors, pressurize with H₂ (if applicable), and agitate at constant temperature (e.g., 80°C). At fixed time intervals (t=1, 5, 10, 30 min), automatically sample 100 µL from each well via robotic needle.

- Analysis: Inject samples into GC/MS. Quantify substrate and products using calibrated internal standards.

- Data Processing: Calculate initial rate (activity) and product distribution (selectivity) for each well.
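The data-processing step can be sketched as below, assuming the early-time points are still in the linear regime and that the number of active sites per well is known. The function names, the concentrations, and the site count are hypothetical.

```python
def initial_rate(times_s, conc_M):
    """Least-squares slope of product concentration vs. time (M/s) over early-time points."""
    n = len(times_s)
    t_mean = sum(times_s) / n
    c_mean = sum(conc_M) / n
    num = sum((t - t_mean) * (c - c_mean) for t, c in zip(times_s, conc_M))
    den = sum((t - t_mean) ** 2 for t in times_s)
    return num / den


def turnover_frequency(rate_M_per_s, volume_L, active_sites_mol):
    """TOF in s^-1: moles of product formed per second per mole of active sites."""
    return rate_M_per_s * volume_L / active_sites_mol


def selectivity_pct(desired_M, all_products_M):
    """Share of the desired product among all quantified products, in percent."""
    return 100.0 * desired_M / sum(all_products_M)


times = [60, 300, 600]              # s, i.e. the 1, 5, and 10 min samples
product = [0.0006, 0.0030, 0.0060]  # M, made-up GC/MS quantification
rate = initial_rate(times, product)
tof = turnover_frequency(rate, volume_L=0.002, active_sites_mol=2e-9)
sel = selectivity_pct(0.0060, [0.0060, 0.0002])
```

The 30 min sample is left out of the fit here on the assumption that conversion is no longer linear by then; that cutoff should be checked per reaction.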

Protocol 4.2: Accelerated Stability Assessment (T₉₀)

Objective: Measure catalyst deactivation time efficiently.

Materials: Fixed-bed flow reactor, online mass spectrometer, temperature-controlled furnace.

Procedure:

- Catalyst Loading: Pack 50 mg of catalyst (sieve fraction 150-200 µm) into a stainless-steel microreactor tube.

- Conditioning: Activate catalyst under H₂ flow (20 mL/min) at 300°C for 2 hours.

- Stability Run: Switch to reaction feed (e.g., 5% reactant in H₂) at specified temperature and pressure. Use online MS to monitor product concentration continuously.

- Termination: Run until product signal drops to 90% of its maximum steady-state value. Record total time as T₉₀.
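Extracting T₉₀ from the online MS trace can be sketched as follows. This assumes the maximum of the recorded trace is an acceptable stand-in for the maximum steady-state value and interpolates linearly between samples; the trace values are invented.

```python
def time_to_fraction(times_h, signal, fraction=0.9):
    """First time after the peak at which the signal falls to `fraction` of its maximum.

    Interpolates linearly between the two bracketing samples; returns None if the
    threshold is never reached during the run.
    """
    peak = max(signal)
    peak_idx = signal.index(peak)
    threshold = fraction * peak
    for k in range(peak_idx + 1, len(signal)):
        if signal[k] <= threshold:
            t0, t1 = times_h[k - 1], times_h[k]
            y0, y1 = signal[k - 1], signal[k]
            return t0 + (threshold - y0) * (t1 - t0) / (y1 - y0)
    return None


times = [0, 20, 40, 60, 80, 100, 120]                # hours on stream
signal = [0.80, 1.00, 0.98, 0.95, 0.92, 0.88, 0.85]  # normalized product signal
t90 = time_to_fraction(times, signal)
```

A noisy trace would usually be smoothed first, since a single low reading would otherwise end the run early.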

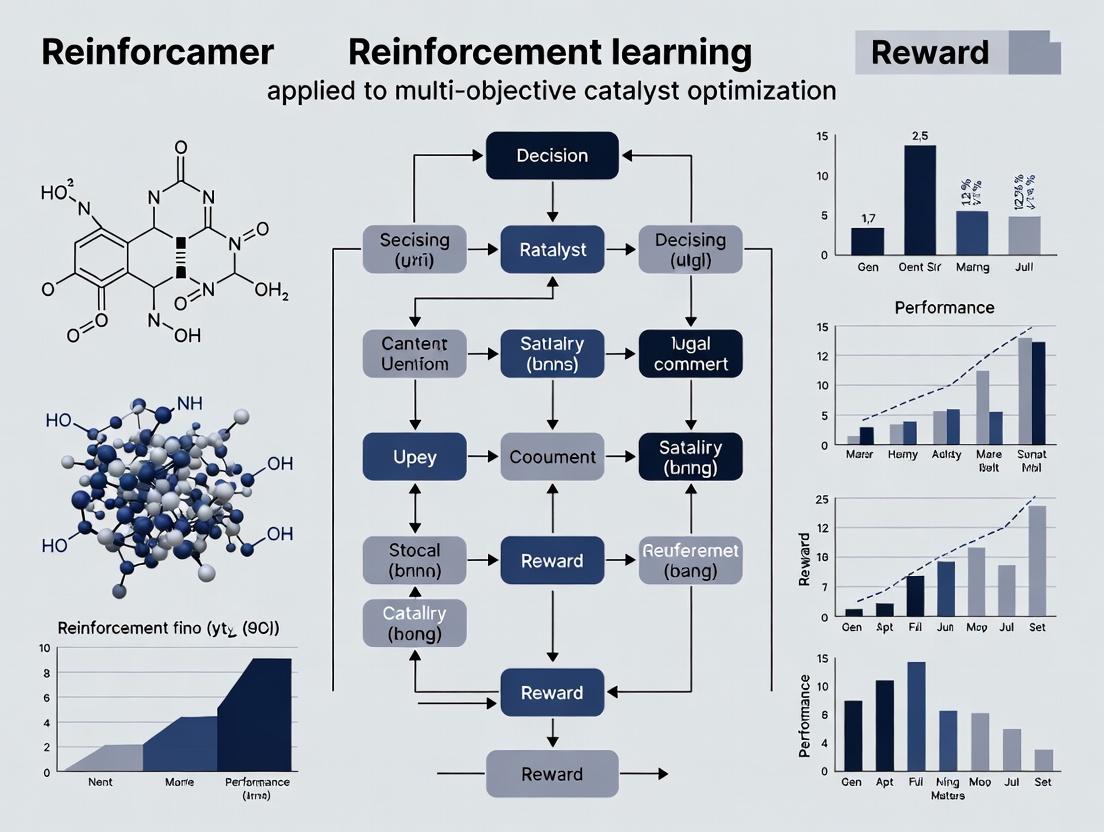

Visualizing the RL-Optimization Framework

Diagram Title: RL Workflow for Catalyst Multi-Objective Optimization

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Catalyst Design & Screening Experiments

| Item | Function | Example/Supplier (Informational) |

|---|---|---|

| Precursor Salts | Source of active metal components for catalyst synthesis. | Pd(OAc)₂, H₂PtCl₆·6H₂O (e.g., Sigma-Aldrich) |

| Porous Supports | Provide high surface area and stabilize metal nanoparticles. | γ-Al₂O₃, SiO₂, CeO₂, Carbon Black |

| High-Throughput Reactor Blocks | Enable parallel testing of up to 96 catalyst variants under controlled conditions. | Parr Instrument Company parallel reactor systems |

| Chiral Ligand Libraries | Induce enantioselectivity in asymmetric hydrogenation reactions. | Josiphos, BINAP derivative libraries |

| Internal Standards (GC/MS) | Enable accurate quantification of reaction conversion and selectivity. | Dodecane, Biphenyl, Chiral derivatizing agents |

| Automated Sorbent Cartridges | For rapid post-reaction purification before analysis in high-throughput workflows. | Silica or alumina cartridges for solid-phase extraction |

| Online Mass Spectrometer (MS) | Real-time monitoring of gas-phase products for kinetic and stability studies. | Hiden Analytical HPR-20 systems |

Application Notes: RL for Multi-Objective Catalyst Optimization

Reinforcement Learning (RL) provides a paradigm for automated chemical discovery by framing the search for optimal catalysts as a sequential decision-making problem. The agent (an algorithm) interacts with an environment (experimental or computational setup) by selecting actions (e.g., changing ligand, metal center, or reaction conditions) to maximize a cumulative reward signal encoding multiple, often competing, objectives (e.g., activity, selectivity, stability, cost).

Key RL Components in Chemical Context:

- Agent: Optimization algorithm (e.g., Deep Q-Network, Policy Gradient method).

- Environment: High-throughput experimentation (HTE) robot, computational simulator (DFT, molecular dynamics), or a hybrid.

- State (S_t): Representation of the current catalyst or reaction system (e.g., molecular descriptor vector, spectroscopic fingerprint, or a textual representation like SMILES).

- Action (A_t): A modification to the catalyst structure or conditions (e.g., "substitute phenyl group for methyl," "increase temperature by 10°C").

- Reward (R_{t+1}): A scalar, multi-objective function computed after the action. For example: R = w₁ * Yield + w₂ * Selectivity - w₃ * CatalystCost - w₄ * EnergyInput.

Table 1: Recent RL Applications in Catalyst Discovery (2022-2024)

| RL Algorithm | Catalyst Type / Reaction | Environment | Key Objectives (Reward Components) | Reported Performance vs. Baseline |

|---|---|---|---|---|

| Deep Q-Network (DQN) | Heterogeneous (CO2 hydrogenation) | Computational microkinetic model | Activity (TOF), Selectivity (to CH4) | Found 5 novel alloy candidates with >15% higher predicted selectivity than random search. |

| Proximal Policy Optimization (PPO) | Homogeneous (C-H activation) | HTE robotic platform | Yield, Turnover Number (TON), Ligand Cost | Achieved target yield (>90%) in 50% fewer experimental cycles than Bayesian optimization. |

| Soft Actor-Critic (SAC) | Enzyme (asymmetric synthesis) | Hybrid (ML-predicted ΔG & wet-lab validation) | Enantiomeric excess (ee), Reaction Rate, Solvent Greenness | Identified 3 mutant variants with ee >99% and 2x rate improvement over wild-type. |

| Multi-Objective DQN (MO-DQN) | Photocatalyst (water splitting) | Density Functional Theory (DFT) simulator | Band Gap, pH Stability, Abundance of Elements | Generated a Pareto front of 12 materials balancing stability and activity. |

Experimental Protocols

Protocol 2.1: Implementing an RL Agent for Computational Catalyst Screening

This protocol outlines steps for an *in silico* RL-driven search for heterogeneous catalysts.

Materials & Setup:

- Computational Environment: DFT software (e.g., VASP, Quantum ESPRESSO) or a pre-trained surrogate model for property prediction.

- Agent Code: Python with RL libraries (TensorFlow, PyTorch, RLlib, Stable-Baselines3).

- State Space Definition: A fixed-length feature vector per catalyst (e.g., elemental composition, coordination numbers, orbital occupation, known descriptors).

Procedure:

- Initialization: Define the initial catalyst pool (e.g., 100 bulk structures from a materials database). Set the state representation S_0.

- Action Selection: At iteration t, the agent, based on its current policy π(A_t|S_t), selects an action. Example actions: "Replace element X with Y from pre-defined list," or "Apply strain ±2%."

- Environment Step: The new candidate structure is generated and its properties (e.g., adsorption energy E_ads, activation barrier E_a) are computed via the simulator.

- Reward Calculation: Compute R_{t+1} = f(E_a, E_ads, selectivity metric). A common simple form: R = -α * E_a + β * ΔS. Terminate the episode if stability criteria fail.

- Agent Update: Store the transition (S_t, A_t, R_{t+1}, S_{t+1}) in the replay buffer. Sample a batch and update the agent's neural network parameters with a gradient-based optimizer so as to maximize expected cumulative reward.

- Iteration: Set S_t ← S_{t+1}. Repeat from the Action Selection step for a set number of episodes or until reward convergence.

- Validation: Perform full mechanistic DFT analysis on top 5-10 candidates identified by the RL agent.
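The loop in this protocol can be sketched in a few lines. To stay self-contained, the example swaps the neural network for tabular Q-learning and the DFT call for a lookup table of made-up barriers; `ELEMENTS`, `TOY_BARRIER_EV`, and `simulate` are hypothetical stand-ins, and the hyperparameters are arbitrary.

```python
import random
from collections import deque

ELEMENTS = ["Cu", "Ni", "Pd", "Pt"]                             # hypothetical substitution list
TOY_BARRIER_EV = {"Cu": 1.2, "Ni": 1.0, "Pd": 0.7, "Pt": 0.8}   # made-up E_a values


def simulate(element):
    """Stand-in for the DFT or surrogate call: reward R = -E_a (alpha = 1, no selectivity term)."""
    return -TOY_BARRIER_EV[element]


def train(steps=2000, alpha=0.1, gamma=0.5, epsilon=0.2, batch_size=8, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in ELEMENTS for a in ELEMENTS}  # Q(S_t, A_t) table
    buffer = deque(maxlen=500)                              # replay buffer
    state = "Cu"
    for _ in range(steps):
        # Action selection: epsilon-greedy over "replace the active metal with element a"
        if rng.random() < epsilon:
            action = rng.choice(ELEMENTS)
        else:
            action = max(ELEMENTS, key=lambda a: q[(state, a)])
        next_state, reward = action, simulate(action)       # environment step
        buffer.append((state, action, reward, next_state))  # store the transition
        # Agent update: replay a random batch with the Q-learning rule
        for s, a, r, s2 in rng.sample(list(buffer), min(batch_size, len(buffer))):
            target = r + gamma * max(q[(s2, b)] for b in ELEMENTS)
            q[(s, a)] += alpha * (target - q[(s, a)])
        state = next_state
    return q, buffer


q_table, replay = train()
```

With a real simulator, the table would be replaced by a function approximator and each `simulate` call would dominate the runtime, which is why the replay buffer reuses every computed transition several times.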

Protocol 2.2: RL-Driven High-Throughput Experimental Optimization

This protocol integrates an RL agent with an automated flow reactor system for homogeneous catalyst optimization.

Materials & Setup:

- HTE Platform: Automated liquid handler, parallel micro-batch or flow reactors, inline GC/HPLC for analysis.

- Control Software: Custom Python API to link RL agent commands to robotic instructions.

- Chemical Space: A set of tunable parameters: Ligand (L1-L20), Metal precursor (M1-M5), Additive (A1-A10), Concentration, Temperature, Pressure.

Procedure:

- Calibration: Run a small set of diverse pre-defined experiments to establish baseline performance and noise levels.

- Episode Definition: One episode = one complete catalytic reaction and analysis cycle.

- State Encoding: After each experiment, encode the result as a state vector: [Ligand_ID, Metal_ID, Additive_ID, Yield, Selectivity, TON].

- Action Space: The agent selects from a discrete set of modifications (e.g., "change ligand to L7," "increase temperature by 15°C").

- Robotic Execution: The action is translated into specific robotic instructions (volumes, positions, reactor settings). The reaction is executed and analyzed.

- Real-Time Reward: The analytical result is parsed. A composite reward is calculated, e.g., R = (Yield/100) + (ee/100) - (0.1 * CostIndex). CostIndex is based on ligand/metal price.

- Agent Learning: The agent updates its policy using the collected (state, action, reward) tuple. An ε-greedy or similar strategy balances exploration and exploitation.

- Loop: The next action is selected and executed. The cycle continues for a predetermined budget (e.g., 200 episodes).

- Hit Validation: Manually reproduce and characterize the top 3-5 catalyst formulations discovered.
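The reward and exploration steps of this protocol can be sketched as below. The analytical results are hard-coded placeholders for what the robot and inline HPLC would return, the agent is reduced to a running mean reward per ligand, and all names and numbers are hypothetical.

```python
import random


def composite_reward(yield_pct, ee_pct, cost_index):
    """R = (Yield/100) + (ee/100) - (0.1 * CostIndex), as in the Real-Time Reward step."""
    return yield_pct / 100 + ee_pct / 100 - 0.1 * cost_index


def epsilon_greedy(q_values, epsilon, rng):
    """Pick a random action with probability epsilon, otherwise the highest-valued one."""
    if rng.random() < epsilon:
        return rng.choice(list(q_values))
    return max(q_values, key=q_values.get)


rng = random.Random(42)
q_values = {"L3": 0.0, "L7": 0.0, "L12": 0.0}  # running mean reward per ligand
counts = {name: 0 for name in q_values}

# Hypothetical (yield %, ee %, cost index) results standing in for the HTE platform
fake_results = {"L3": (70, 80, 1.0), "L7": (92, 97, 2.0), "L12": (85, 60, 0.5)}

for episode in range(200):
    ligand = epsilon_greedy(q_values, epsilon=0.1, rng=rng)
    r = composite_reward(*fake_results[ligand])
    counts[ligand] += 1
    q_values[ligand] += (r - q_values[ligand]) / counts[ligand]  # incremental mean update
```

Real measurements are noisy, so the calibration runs from the first step are what tell you whether a reward difference between two ligands is meaningful.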

Diagrams

Title: RL Cycle in Catalyst Optimization

Title: Integrated Multi-Objective Catalyst Discovery Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for RL-Driven Catalyst Discovery

| Item / Reagent | Function / Role in RL Experiment |

|---|---|

| High-Throughput Experimentation (HTE) Robotic Platform | Serves as the physical "Environment." Automates the execution of actions (mixing, reacting, analyzing) with high reproducibility, enabling rapid data (state/reward) generation. |

| Modular Ligand & Precursor Libraries | Provides a well-defined, actionable chemical space. Each unique component is a discrete action the RL agent can select (e.g., "add ligand L12"). |

| In-line or At-line Analytical Instrument (e.g., UPLC, GC-MS) | Quantifies reaction outcomes (yield, ee, conversion) so the reward signal can be calculated immediately after the action is taken, closing the RL loop. |

| Computational Catalyst Simulator (e.g., DFT Code) | Serves as a virtual "Environment" for low-cost, high-speed initial exploration. Provides predicted properties for reward calculation before costly wet-lab experiments. |

| Surrogate Machine Learning Model (e.g., Graph Neural Network) | Can act as a fast, approximate simulator within the RL loop, predicting catalyst performance from structure to accelerate agent training. |

| RL Software Framework (e.g., Stable-Baselines3, Ray RLlib) | Provides pre-implemented, robust agent algorithms (PPO, SAC, DQN) that researchers can customize and deploy, avoiding the need to code from scratch. |

| Chemical Descriptor Software (e.g., RDKit, Matminer) | Translates chemical structures (SMILES, CIF files) into numerical state vectors (descriptors, fingerprints) that the RL agent can process. |

The search for novel, high-performance catalysts is a critical multi-objective optimization challenge in chemistry and materials science. Objectives typically include maximizing catalytic activity (e.g., turnover frequency, TOF), selectivity towards a desired product, and stability/lifetime, while minimizing cost. When using reinforcement learning for multi-objective catalyst optimization, the choice between Model-Free (MF) and Model-Based (MB) Reinforcement Learning (RL) paradigms is a fundamental strategic decision. This document provides application notes and protocols for implementing these paradigms in a virtual or robotic high-throughput experimentation (HTE) workflow for heterogeneous catalyst discovery.

Core Paradigms: Comparative Analysis

Model-Free RL learns an optimal policy (or value function) directly from interactions with the experimental environment (e.g., a robotic testing platform or simulation) without constructing an explicit model of the environment's dynamics. It is highly flexible and can discover complex, non-intuitive catalyst compositions.

Model-Based RL first learns a predictive model of the environment's dynamics (e.g., how catalyst composition and synthesis variables affect performance metrics). This model is then used for planning (e.g., via simulation) or to guide policy learning, often leading to higher sample efficiency.

Table 1: High-Level Comparison of RL Paradigms for Catalyst Search

| Feature | Model-Free RL | Model-Based RL |

|---|---|---|

| Core Principle | Learn policy/value directly from experience. | Learn a model of environment dynamics, then plan/learn. |

| Sample Efficiency | Lower; requires many experimental iterations. | Higher; can utilize simulated data from the model. |

| Computational Cost | Lower per sample, but needs many samples. | Higher per sample for model learning, but fewer samples needed. |

| Exploration Strategy | Primarily through policy stochasticity (e.g., ε-greedy). | Can use model uncertainty to drive targeted exploration. |

| Handling of Multi-Objective | Directly via reward shaping or Pareto-front methods. | Can simulate objectives separately within the model. |

| Best Suited For | High-fidelity simulators or very rapid HTE platforms. | Expensive, slow, or resource-intensive real-world experiments. |

| Common Algorithms | DQN, PPO, SAC, MO-PPO, MORL. | PETS, World Models, MuZero, PILCO. |

Table 2: Quantitative Performance Metrics from Recent Studies (2023-2024)

| Study Focus | RL Paradigm & Algorithm | Simulated/Real Exp. | Sample Efficiency (# Experiments to Reach Target) | Performance Gain vs. Random Search | Key Catalyst Search Objective |

|---|---|---|---|---|---|

| Pd-alloy ORR Catalyst | MF: Multi-Objective SAC | Simulation (DFT proxy) | ~3000 | 8x faster | Maximize activity, minimize Pd content |

| Methane Oxidation | MB: Gaussian Process Dyna (PETS) | Robotic HTE (Autolab) | < 100 | 15x faster | Light-off temperature & stability |

| CO2 Reduction (Cu-Zn) | MF: PPO with Reward Shaping | Simulation (Microkinetic Model) | ~5000 | 5x faster | Selectivity to C2+ products |

| Propane Dehydrogenation | MB: Probabilistic Ensemble Model | Real Fixed-Bed Reactor | ~50 | 20x faster | Propylene yield & stability over time |

Experimental Protocols

Protocol 3.1: Model-Free RL Workflow for Catalyst Optimization (e.g., using PPO)

Objective: To discover a bimetallic catalyst (M1-M2/support) that maximizes conversion (X) and selectivity (S) in a target reaction.

I. Reagent & Equipment Setup

- Robotic Synthesis Platform: For automated ink preparation, impregnation, or co-precipitation.

- High-Throughput Testing Reactor: 16- or 48-channel parallel fixed-bed or gas-flow reactor system with online GC/MS.

- RL Server: GPU-equipped workstation running the RL algorithm (Python, Ray RLlib, Stable-Baselines3).

II. Experimental Procedure

- State Space Definition: Digitally encode the catalyst as a state vector `s`, e.g., `[M1_amt, M2_amt, calcination_temp, support_type_encoded]`.

- Action Space Definition: Define actions as incremental changes to synthesis parameters, e.g., `ΔM1_amt ∈ [-0.5, 0.5 wt%]`, `Δcalcination_temp ∈ [-10, +10 °C]`.

- Reward Function Design: Construct a composite reward `R = w1*X + w2*S - w3*Cost(s)`, where the weights `w` are tuned or part of a multi-objective Pareto search.

- Initialization: Start with a small, diverse set of 10-20 catalyst formulations (from an initial policy or chosen at random).

- Iterative RL Loop:

a. Synthesis & Testing: The robotic platform prepares and tests the batch of catalysts defined by the current RL policy.

b. Data Ingestion: Performance metrics (X, S) are fed into the reward calculator.

c. Policy Update: The MF-RL agent (e.g., PPO) updates its policy network using the collected `(s, a, r, s')` transitions.

d. Action Proposal: The updated policy proposes a new batch of catalyst formulations for the next experiment.

- Termination: The loop continues until performance plateaus or the experimental budget is exhausted (e.g., 200 experimental cycles).
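A minimal sketch of how a proposed action might be applied to the state and scored, assuming the increments are clipped to the stated ranges and the resulting state is kept inside feasibility bounds. The bounds, the `M2_amt` increment limit, and the weights are assumptions made for illustration.

```python
# Assumed feasibility bounds for each synthesis parameter
STATE_BOUNDS = {"M1_amt": (0.0, 5.0), "M2_amt": (0.0, 5.0), "calcination_temp": (300.0, 700.0)}
# Largest allowed increment per step (M2_amt limit assumed equal to M1_amt)
MAX_DELTA = {"M1_amt": 0.5, "M2_amt": 0.5, "calcination_temp": 10.0}


def clip(value, lo, hi):
    return max(lo, min(hi, value))


def apply_action(state, action):
    """Return s' = s + a, with a clipped to the action range and s' to the state bounds."""
    next_state = dict(state)
    for name, delta in action.items():
        delta = clip(delta, -MAX_DELTA[name], MAX_DELTA[name])
        lo, hi = STATE_BOUNDS[name]
        next_state[name] = clip(state[name] + delta, lo, hi)
    return next_state


def composite_reward(conversion, selectivity, cost, weights=(0.5, 0.4, 0.1)):
    """R = w1*X + w2*S - w3*Cost(s), with X, S, and cost on a 0-1 scale."""
    w1, w2, w3 = weights
    return w1 * conversion + w2 * selectivity - w3 * cost


s = {"M1_amt": 1.0, "M2_amt": 0.2, "calcination_temp": 695.0}
s_next = apply_action(s, {"M1_amt": 0.8, "calcination_temp": 10.0})
r = composite_reward(conversion=0.6, selectivity=0.9, cost=0.3)
```

In the example, the oversized `M1_amt` increment is reduced to 0.5 wt% and the calcination temperature stops at the assumed 700 °C ceiling.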

III. Data Analysis

- Plot cumulative reward vs. experiment number.

- Perform post-hoc characterization (XRD, XPS, TEM) on top-performing catalysts identified by the RL agent to extract scientific insights.

Protocol 3.2: Model-Based RL Workflow for Catalyst Optimization (e.g., using PETS)

Objective: To efficiently optimize a tri-metallic catalyst for a slow, resource-intensive photocatalytic reaction.

I. Reagent & Equipment Setup

- Manual or Semi-Automated Synthesis: Due to complexity, synthesis may be manual but meticulously documented.

- Photoreactor with Online Analysis: Single or low-parallelism reactor with sensitive product detection (e.g., LC-MS).

- Probabilistic Model Server: Workstation for training dynamics models (e.g., using PyTorch).

II. Experimental Procedure

- Dynamics Model Training Phase:

a. Collect an initial, space-filling dataset `D0` of ~50 catalyst formulations and their performance.

b. Train an ensemble of probabilistic neural networks (PNNs) to model `f(s, a) → (s', r)`, where `s'` is the predicted performance.

c. Validate model predictions against a held-out test set of catalysts.

- Model-Predictive Control (MPC) Loop:

a. Planning: Given the current best catalyst state `s_t`, use the trained dynamics model to simulate the outcomes of thousands of possible actions `a` over a planning horizon (e.g., Cross-Entropy Method).

b. Action Selection: Select the action sequence `a_t` that maximizes the expected cumulative reward according to the model.

c. Real Experiment: Execute the first recommended action (e.g., change two metal ratios) in the lab, synthesize and test the new catalyst.

d. Model Update: Augment the dataset `D` with the new real result `(s_t, a_t, r_t, s_{t+1})`. Periodically retrain the dynamics model.

- Termination: Continue until performance targets are met or the model predictions become highly certain, indicating convergence.
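The planning step can be sketched with a small Cross-Entropy Method planner. The "ensemble" here is a toy stand-in (five slightly different quadratic reward surfaces), not a trained probabilistic network, and the state is reduced to two metal fractions; every name and number is hypothetical.

```python
import random


def make_ensemble(rng, n_models=5):
    """Toy stand-in for a trained ensemble: each member places the optimum slightly differently."""
    return [(0.6 + rng.gauss(0, 0.02), 0.3 + rng.gauss(0, 0.02)) for _ in range(n_models)]


def predicted_reward(state, ensemble):
    """Ensemble-mean reward on a made-up surface peaked near metal fractions (0.6, 0.3)."""
    return sum(-((state[0] - cx) ** 2 + (state[1] - cy) ** 2) for cx, cy in ensemble) / len(ensemble)


def rollout(state, flat_actions, ensemble):
    """Sum of predicted rewards when applying a flattened action sequence from `state`."""
    total = 0.0
    for k in range(0, len(flat_actions), 2):
        state = (state[0] + flat_actions[k], state[1] + flat_actions[k + 1])
        total += predicted_reward(state, ensemble)
    return total


def cem_plan(state, ensemble, rng, horizon=3, pop=200, n_elite=20, iters=5, max_step=0.1):
    """Cross-Entropy Method over action sequences; returns only the first action (MPC)."""
    dim = 2 * horizon
    mean, std = [0.0] * dim, [max_step] * dim
    for _ in range(iters):
        samples = [
            [max(-max_step, min(max_step, rng.gauss(m, s))) for m, s in zip(mean, std)]
            for _ in range(pop)
        ]
        samples.sort(key=lambda seq: rollout(state, seq, ensemble), reverse=True)
        elite = samples[:n_elite]
        # Refit the sampling distribution to the best sequences
        mean = [sum(col) / n_elite for col in zip(*elite)]
        std = [
            max(1e-3, (sum((x - m) ** 2 for x in col) / n_elite) ** 0.5)
            for col, m in zip(zip(*elite), mean)
        ]
    return mean[0], mean[1]


rng = random.Random(0)
ensemble = make_ensemble(rng)
first_action = cem_plan(state=(0.2, 0.2), ensemble=ensemble, rng=rng)
```

Only `first_action` would be sent to the lab; after the real result comes back, the model is updated and planning restarts from the new state.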

III. Data Analysis

- Analyze the learned dynamics model: which input features (synthesis parameters) does the model identify as most predictive?

- Compare the predicted vs. actual performance scatter plot to assess model fidelity.
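Alongside the scatter plot, model fidelity can be summarized numerically. The sketch below computes RMSE and the coefficient of determination on held-out points; the yield values are invented.

```python
def fidelity(actual, predicted):
    """Return RMSE and R^2 of model predictions against measured values."""
    n = len(actual)
    mean_actual = sum(actual) / n
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    return {"rmse": (ss_res / n) ** 0.5, "r2": 1.0 - ss_res / ss_tot}


measured_yield = [10.0, 20.0, 30.0, 40.0]   # hypothetical held-out experiments (%)
predicted_yield = [12.0, 18.0, 33.0, 39.0]  # hypothetical model predictions (%)
scores = fidelity(measured_yield, predicted_yield)
```

An R² that stays low after several retraining rounds suggests the planner is optimizing against an unreliable model.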

Visualizations

Title: Model-Free RL Closed-Loop Catalyst Search Workflow

Title: Model-Based RL with MPC for Catalyst Optimization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Computational Tools for RL-Driven Catalyst Search

| Item | Function & Relevance | Example/Specification |

|---|---|---|

| Robotic Liquid Handler | Enables reproducible, high-throughput synthesis of catalyst libraries by automating precursor dispensing. | Hamilton Microlab STAR, Chemspeed Technologies SWING. |

| Parallel Pressure Reactor | Allows simultaneous testing of multiple catalyst candidates under controlled, relevant conditions (T, P). | AMTEC SPR, Parr Multi-Reactors. |

| Online Gas Chromatograph (GC) | Provides rapid, quantitative analysis of reaction products for immediate reward calculation. | Compact GC systems (e.g., Interscience Trace 1300) coupled to each reactor channel. |

| DFT Simulation Software | Provides a surrogate environment for Model-Free RL training where real experiments are impossible. | VASP, Quantum ESPRESSO; used to calculate adsorption energies as activity proxies. |

| Microkinetic Modeling Package | Creates a simplified, computationally tractable model of surface reactions for MB-RL's dynamics model. | CatMAP, KMOS. |

| RL Algorithm Library | Provides tested implementations of MF and MB algorithms, reducing development time. | Stable-Baselines3 (MF), Ray RLlib (MF/MB), mbrl-lib (MB; MIT-licensed). |

| Probabilistic Deep Learning Framework | Essential for building the dynamics models (e.g., ensemble NN, Gaussian Processes) in MB-RL. | PyTorch with Pyro or GPyTorch, TensorFlow Probability. |

| Standard Metal Salt Precursors | High-purity, soluble salts for reproducible synthesis. | e.g., Tetrachloroplatinic acid (H2PtCl6), Palladium(II) nitrate hydrate, Chloroauric acid (HAuCl4). |

| High-Surface-Area Supports | Standardized supports to isolate active phase effects. | e.g., Gamma-Alumina (γ-Al2O3), Carbon black (Vulcan XC-72), Silica (SiO2). |

Catalyst optimization via reinforcement learning (RL) presents a unique challenge: the state and action spaces are inherently high-dimensional and continuous. A catalyst's "state" is a complex function of its composition, structure, surface morphology, and operating conditions, while an "action" may involve doping, thermal treatment, or morphology alteration. This document provides application notes and protocols for effectively representing these spaces within an RL framework for multi-objective optimization (e.g., activity, selectivity, stability).

Table 1: Common Catalyst State Descriptors and Their Typical Dimensionality

| Descriptor Category | Specific Descriptors | Raw Dimensions | Typical Reduced Dimensions (Post-Processing) | Data Source |

|---|---|---|---|---|

| Compositional | Elemental fractions, doping concentrations | 10-50 (for multi-metallics) | 3-10 (via PCA) | High-throughput experiment libraries |

| Structural | XRD patterns, EXAFS spectra | 1000-5000 points | 10-50 (via autoencoder) | Synchrotron datasets |

| Electronic | d-band center, Bader charges, DOS | 5-20 | 5-20 (often used directly) | DFT calculations |

| Morphological | Particle size distribution, surface area, facet ratios | 5-15 | 5-15 | TEM/N2 physisorption |

| Operational | Temperature, pressure, feed concentration | 3-10 | 3-10 | Reaction kinetics data |

Table 2: Performance of Dimensionality Reduction Techniques on Catalyst Data

| Technique | Avg. Variance Retained (%) | Avg. Reconstruction Error (MSE) | Computational Cost | Suitability for RL State Embedding |

|---|---|---|---|---|

| PCA | 75-90 | 0.05-0.15 | Low | Good for linear manifolds |

| t-SNE | N/A (non-linear) | N/A | Medium | Visualization only, not for RL state |

| UMAP | N/A (non-linear) | 0.02-0.08 | Medium | Excellent for preserving topology |

| Variational Autoencoder (VAE) | 85-95 | 0.01-0.05 | High (requires training) | Best for generative action sampling |

Experimental Protocols

Protocol 3.1: Constructing a Continuous Catalyst State Vector from Multi-Modal Data

Objective: Integrate heterogeneous characterization data into a fixed-length, continuous vector for RL state representation.

Materials:

- Catalyst samples (e.g., Pt-Pd-Au trimetallic nanoparticles on Al2O3).

- Characterization suite (XRD, XPS, BET, TEM).

- Computing workstation with Python (scikit-learn, TensorFlow/PyTorch).

Procedure:

- Data Acquisition: For each catalyst sample (`i`), collect:

  - XRD: Preprocess pattern (background subtract, normalize). Interpolate to fixed 2θ grid (e.g., 1000 points).

  - XPS: Extract elemental surface concentrations (at.%) for relevant elements (5-10 values).

  - BET/TEM: Record surface area (m²/g), average particle size (nm), and size distribution quartiles (3 values).

- Per-Modality Reduction:

  - Train a dedicated PCA model on XRD patterns from a reference library. Reduce each pattern to 20 principal components (PCs).

  - Use XPS and morphological data directly (no reduction, ~10-15 dimensions).

- State Vector Assembly: Concatenate reduced features into a single vector `s_i`: `s_i = [XRD_PC1, ..., XRD_PC20, XPS_Pt, XPS_Pd, XPS_Au, Surface_Area, Particle_Size, ...]` (total dimension: ~35-50).

- Normalization: Apply standard scaling (z-score) to each dimension across the entire dataset.
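The assembly and normalization steps can be sketched as below, assuming the XRD principal-component scores have already been computed elsewhere (only two per sample are shown to keep the example short). The dictionary keys and all values are hypothetical.

```python
from statistics import mean, pstdev


def assemble(sample):
    """Concatenate per-modality features into one flat list (same order for every sample)."""
    return (
        list(sample["xrd_pcs"])
        + list(sample["xps_at_pct"])
        + [sample["surface_area_m2_g"], sample["particle_size_nm"]]
    )


def zscore_columns(rows):
    """Standard-scale each column across the dataset; constant columns are left at zero."""
    columns = list(zip(*rows))
    mus = [mean(col) for col in columns]
    sigmas = [pstdev(col) or 1.0 for col in columns]
    return [[(x - m) / s for x, m, s in zip(row, mus, sigmas)] for row in rows]


samples = [
    {"xrd_pcs": [0.8, -0.1], "xps_at_pct": [60.0, 30.0, 10.0],
     "surface_area_m2_g": 150.0, "particle_size_nm": 3.2},
    {"xrd_pcs": [0.2, 0.4], "xps_at_pct": [40.0, 40.0, 20.0],
     "surface_area_m2_g": 120.0, "particle_size_nm": 4.5},
    {"xrd_pcs": [-1.0, -0.3], "xps_at_pct": [20.0, 50.0, 30.0],
     "surface_area_m2_g": 180.0, "particle_size_nm": 2.8},
]
raw = [assemble(s) for s in samples]
states = zscore_columns(raw)
```

The scaling statistics should be stored with the dataset so that catalysts synthesized later are normalized with the same means and standard deviations.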

Protocol 3.2: Defining and Parameterizing the Catalyst Action Space

Objective: Define a continuous, tractable action space for an RL agent to propose new catalyst formulations or treatments.

Materials: Catalyst precursor solutions, impregnation setup, calcination furnace, DFT software (e.g., VASP).

Procedure:

- Action Definition: An action `a_t` is defined as a vector modifying the current catalyst state.

  - For composition: `a_comp = [Δmol%_Pt, Δmol%_Pd, Δmol%_Au, Δmol%_Promoter]` (bounded between -1 and 1, representing relative changes).

  - For synthesis: `a_synth = [ΔImpregnation_Time(min), ΔCalcination_Temp(°C), ΔCalcination_Time(hr)]`.

- Action Validation via Simulation (Surrogate Model):

  - Before wet-lab experimentation, predict the outcome of action `a_t` from state `s_t`.

  - Input `s_t` and `a_t` into a pre-trained forward model (e.g., a neural network) that maps `(s_t, a_t)` to a predicted next state `s_{t+1}` and performance metrics (activity, selectivity).

  - Filter or penalize actions leading to predicted states outside physically plausible bounds (e.g., negative concentrations).

- Experimental Implementation: Execute the validated action via automated synthesis robots (e.g., liquid handling for impregnation, robotic furnace for thermal treatment).
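The validation step can be sketched as a filter-then-rank function. Here the composition action is interpreted as a change in mol% of at most ±1 per component, and `toy_surrogate` is a made-up scoring function standing in for the pre-trained forward model; both are assumptions.

```python
def apply_composition_action(composition, delta):
    """Apply mol% changes; reject negative amounts, then renormalize to 100 mol%."""
    proposed = {k: v + delta.get(k, 0.0) for k, v in composition.items()}
    if any(v < 0.0 for v in proposed.values()):
        return None
    total = sum(proposed.values())
    return {k: 100.0 * v / total for k, v in proposed.items()}


def screen_actions(composition, candidate_deltas, surrogate):
    """Keep physically plausible actions and rank them by the surrogate's predicted score."""
    kept = []
    for delta in candidate_deltas:
        next_comp = apply_composition_action(composition, delta)
        if next_comp is not None:
            kept.append((surrogate(next_comp), delta, next_comp))
    return sorted(kept, key=lambda item: item[0], reverse=True)


def toy_surrogate(comp):
    """Hypothetical forward model: made-up score favoring Pd-rich, Au-lean compositions."""
    return 0.02 * comp["Pd"] - 0.01 * comp["Au"]


current = {"Pt": 50.0, "Pd": 30.0, "Au": 19.5, "Promoter": 0.5}
candidates = [
    {"Pt": -1.0, "Pd": 1.0},
    {"Pt": 1.0, "Promoter": -1.0},  # would make the promoter content negative, so it is dropped
    {"Au": -1.0, "Pd": 1.0},
]
ranked = screen_actions(current, candidates, toy_surrogate)
```

Rejecting invalid actions outright is the simplest choice; the alternative named in the protocol, a reward penalty, keeps the agent's action space unchanged but requires tuning the penalty size.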

Visualizations

Diagram 1: RL Catalyst Optimization Workflow

(Title: RL Catalyst Optimization Loop)

Diagram 2: High-Dimensional State Vector Construction

(Title: Building a Unified Catalyst State Vector)

The Scientist's Toolkit: Key Research Reagent Solutions & Materials

Table 3: Essential Tools for Catalyst State/Action Space Research

| Item | Function in Context | Example Product/Model |

|---|---|---|

| High-Throughput Synthesis Robot | Enables precise execution of RL-proposed actions (composition, synthesis variables) in parallel. | Chemspeed Technologies SWING, Unchained Labs Freeslate. |

| Automated Characterization Suite | Rapid generation of multi-modal state data (XRD, XPS) with minimal latency for RL feedback. | Malvern Panalytical Empyrean XRD with automatic sample changer, Thermo Fisher Scientific ESCALAB Xi+ XPS. |

| DFT Simulation Software | Computes electronic structure descriptors (d-band center) to enrich state representation and predict action outcomes. | VASP, Quantum ATK, Gaussian. |

| Variational Autoencoder (VAE) Framework | Software library for building non-linear dimensionality reduction models to encode high-dimensional states. | PyTorch Lightning, TensorFlow Probability. |

| Reinforcement Learning Library | Provides state-of-the-art algorithms (SAC, PPO) capable of handling continuous action spaces. | Ray RLlib, Stable-Baselines3, Acme. |

| Catalyst Precursor Libraries | Well-characterized, stable metal salts and support materials for reproducible action implementation. | Sigma-Aldrich Catalyst Precursor Library, Strem Chemicals High-Purity Metal Salts. |

| Surrogate Model Training Platform | Cloud/GPU resources for training fast neural network models that predict catalyst performance from state-action pairs. | Google Cloud AI Platform, NVIDIA NGC containers with PyTorch/TensorFlow. |

A Practical Workflow: Implementing RL for Catalyst Optimization from Scratch

Within the broader topic of using reinforcement learning for multi-objective catalyst optimization, this protocol details the construction of a foundational Reinforcement Learning (RL) pipeline. The goal is to accelerate the discovery and optimization of heterogeneous catalysts (e.g., for CO₂ hydrogenation or methane reforming) by simulating catalyst-Environment interactions, in which an RL Agent learns to propose catalyst formulations (e.g., metal ratios, supports, dopants) that maximize multiple performance objectives (e.g., activity, selectivity, stability).

Foundational Components & Definitions

Table 1: Core RL Components in Catalyst Simulation

| Component | Role in Catalyst Optimization | Typical Instantiation |

|---|---|---|

| Agent | The learner/optimizer that proposes new catalyst configurations. | Neural network policy (e.g., PPO, SAC actor). |

| Environment | Simulator that evaluates a catalyst's performance. | DFT microkinetic model, kinetic Monte Carlo (kMC), or a surrogate model (e.g., ML predictor). |

| State (s) | Representation of the current catalyst and process conditions. | Vector of descriptors (e.g., composition, adsorption energies, temperature, pressure). |

| Action (a) | A modification to the catalyst or process. | Continuous: Changing dopant concentration. Discrete: Selecting a primary metal from a set. |

| Reward (R) | Quantitative feedback on catalyst performance. | Scalar function combining multiple objectives (e.g., R = α * Activity + β * Selectivity - γ * Cost). |

| Policy (π) | The Agent's strategy for selecting actions given a state. | Mapping from state space to action probabilities. |

Detailed Experimental Protocol: RL Pipeline Construction

Protocol 3.1: Environment Setup via Computational Catalyst Simulation

- Objective: Create a reproducible simulation environment that maps a catalyst descriptor vector to performance metrics.

- Materials:

- Software: Python, ASE (Atomic Simulation Environment), CatMAP, or custom kinetic code.

- Computational Resources: High-performance computing (HPC) cluster for DFT or local GPU for surrogate model inference.

- Procedure:

- Define State Space: Select a set of n catalyst descriptors (e.g., formation energy, d-band center, O and C adsorption energies) to form an n-dimensional state vector.

- Build Simulator Core: Implement a microkinetic model or a pre-trained machine learning model (e.g., neural network, Gaussian process) that takes the state vector and process conditions as input.

- Implement step(action) Function:

- Input: An action (catalyst modification).

- Process: Update the current state based on the action. Query the simulator core for new performance metrics (turnover frequency, selectivity).

- Output: (next_state, reward, done, info). done is True if performance targets are met or a step limit is exceeded.

- Validate: Run the environment with a set of known catalyst descriptors and verify outputs against established literature or DFT data.
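
The step(action) contract described in this protocol can be sketched as follows. This is a minimal illustration under stated assumptions, not the pipeline's actual code: `ToyCatalystEnv`, `surrogate_performance`, the two-descriptor state, the volcano-shaped response, the equal reward weights, and the target value are all hypothetical stand-ins for a real microkinetic or ML simulator core. The 4-tuple return follows the text; newer Gymnasium releases split `done` into `terminated` and `truncated`.

```python
import math
from typing import Dict, List, Tuple


def surrogate_performance(state: List[float]) -> Tuple[float, float]:
    """Hypothetical stand-in for the simulator core.

    Maps two scaled descriptors to a normalized activity and selectivity
    in [0, 1]. The volcano-like shapes are arbitrary illustrations.
    """
    e_c, e_o = state
    activity = math.exp(-((e_c - 0.6) ** 2) / 0.1)     # peak at e_c = 0.6
    selectivity = math.exp(-((e_o - 0.3) ** 2) / 0.2)  # peak at e_o = 0.3
    return activity, selectivity


class ToyCatalystEnv:
    """Gym-style environment: reset() -> state, step(action) -> 4-tuple."""

    def __init__(self, max_steps: int = 50, target_reward: float = 0.95):
        self.max_steps = max_steps
        self.target_reward = target_reward
        self.state: List[float] = [0.0, 0.0]
        self.steps = 0

    def reset(self) -> List[float]:
        self.state = [0.0, 0.0]
        self.steps = 0
        return list(self.state)

    def step(self, action: List[float]) -> Tuple[List[float], float, bool, Dict]:
        # Process: apply the modification, keeping descriptors in [0, 1].
        self.state = [min(1.0, max(0.0, s + a)) for s, a in zip(self.state, action)]
        self.steps += 1
        activity, selectivity = surrogate_performance(self.state)
        reward = 0.5 * activity + 0.5 * selectivity
        # done is True if the target is met or the step limit is reached.
        done = reward >= self.target_reward or self.steps >= self.max_steps
        info = {"activity": activity, "selectivity": selectivity}
        return list(self.state), reward, done, info
```

Validating such an environment against known catalysts, as the last step of the procedure requires, amounts to calling `step` with descriptor changes that reproduce reference systems and comparing `info` with the reference values.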

Protocol 3.2: Agent Architecture & Training Loop

- Objective: Implement and train an RL Agent to interact with the catalyst Environment.

- Materials:

- Libraries: RLlib, Stable-Baselines3, or custom PyTorch/TensorFlow.

- Hardware: GPU (e.g., NVIDIA V100 or A100) for accelerated neural network training.

- Procedure:

- Agent Initialization: Choose an RL algorithm suited for continuous/discrete action spaces (e.g., PPO for mixed, SAC for continuous). Initialize policy and value networks.

- Training Loop:

- Hyperparameter Tuning: Systematically vary learning rate, discount factor (γ), and reward scale. Use a hyperparameter optimization framework (e.g., Optuna).

Protocol 3.3: Multi-Objective Reward Engineering

- Objective: Design a reward function that balances competing catalyst optimization goals.

- Procedure:

- Identify Objectives: Define primary (e.g., reaction rate) and secondary (e.g., selectivity towards desired product, catalyst cost) objectives.

- Normalization: Scale each objective to a common range (e.g., 0-1) using theoretical maxima/minima or historical data.

- Weighted Sum Formulation: R = w₁·Norm(TOF) + w₂·Norm(Selectivity) - w₃·Norm(Noble_Metal_Loading).

- Constraint Handling: Add large negative reward penalties for physically implausible or unstable configurations (e.g., positive formation energy).

- Validation: Test the reward function on a small set of known optimal and sub-optimal catalysts to ensure it correlates with overall desired performance.
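
The normalization, weighted-sum, and constraint-handling steps above can be combined in a few lines. The sketch below is illustrative only: the function names, the normalization bounds (TOF up to 0.05 s⁻¹, loading up to 5 wt%), the weights, and the penalty value are assumptions chosen for the example, not values from the protocol.

```python
def normalize(value, lo, hi):
    """Min-max scale to [0, 1], clipping values outside [lo, hi]."""
    if hi <= lo:
        raise ValueError("hi must be greater than lo")
    return min(1.0, max(0.0, (value - lo) / (hi - lo)))


def catalyst_reward(tof, selectivity_pct, noble_loading_wt_pct, formation_energy_ev,
                    weights=(0.5, 0.4, 0.1), penalty=-10.0):
    """R = w1*Norm(TOF) + w2*Norm(Selectivity) - w3*Norm(Noble_Metal_Loading)."""
    # Constraint handling: a positive formation energy is treated as an
    # unstable configuration and receives a large negative reward.
    if formation_energy_ev > 0.0:
        return penalty
    w1, w2, w3 = weights
    return (w1 * normalize(tof, 0.0, 0.05)                    # assumed TOF range, s^-1
            + w2 * normalize(selectivity_pct, 0.0, 100.0)     # percent
            - w3 * normalize(noble_loading_wt_pct, 0.0, 5.0)) # assumed loading range


# Example: mid-range TOF, 80% selectivity, 1 wt% noble metal, stable phase.
example_reward = catalyst_reward(0.025, 80.0, 1.0, -0.5)
```

Running this function over a handful of known good and poor catalysts is one simple way to carry out the validation step before any agent is trained.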

Data Presentation & Validation

Table 2: Example RL Training Results for CO₂ Hydrogenation Catalyst Optimization

| Training Episode | Agent-Proposed Catalyst (e.g., Cu:Zn:Zr Ratio) | Simulated TOF (s⁻¹) | CH₃OH Selectivity (%) | Reward (w₁=0.5, w₂=0.5) |

|---|---|---|---|---|

| 0 (Baseline) | 1:1:1 | 0.005 | 65 | 0.65 |

| 500 | 3:1:2 | 0.012 | 78 | 0.85 |

| 1000 | 5:2:1 | 0.021 | 82 | 0.96 |

| 1500 | 4:1:3 | 0.018 | 95 | 1.02 |

| 2000 (Final) | 4:1:3 | 0.018 | 95 | 1.02 |

Visualizations

Title: RL Agent-Environment Interaction Loop for Catalyst Optimization

Title: Stepwise Protocol for Building the Catalyst RL Pipeline

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Computational Tools

| Item / Software | Function in Catalyst RL Pipeline | Example / Note |

|---|---|---|

| ASE (Atomic Simulation Environment) | Python library for setting up, running, and analyzing atomistic simulations. Often used to generate initial data for Environment models. | Used to calculate adsorption energies via DFT. |

| CatMAP | Microkinetic modeling package for heterogeneous catalysis. Can serve as the core of the Environment simulator. | Translates descriptor states (ΔE_ads) to activity/selectivity. |

| RLlib / Stable-Baselines3 | Scalable RL libraries providing high-quality implementations of algorithms (PPO, SAC, DQN). | Speeds up Agent development and training. |

| Optuna / Ray Tune | Hyperparameter optimization frameworks. Crucial for tuning Agent and reward function parameters. | Automates the search for optimal learning rates, network architectures. |

| Surrogate ML Model (e.g., GNN) | Fast approximate model of catalyst performance. Replaces expensive DFT/kMC in the Environment for faster training. | Trained on historical DFT data; predicts properties from composition/structure. |

| High-Performance Computing (HPC) Cluster | Provides the computational power for first-principles calculations used to validate final Agent proposals. | Essential for generating reliable training data and final validation. |

Designing Effective Multi-Objective Reward Functions (Pareto Frontiers)

Within the broader thesis on using reinforcement learning for multi-objective catalyst optimization, designing reward functions that accurately balance competing objectives is paramount. Catalysis research often involves trade-offs between activity, selectivity, stability, and cost. A Pareto-optimal approach, generating a frontier of non-dominated solutions, is essential for guiding experimental campaigns and computational searches in drug development and materials science.

Foundational Concepts

Multi-objective reinforcement learning (MORL) plays a central role in scientific optimization problems. Key paradigms include:

- Single-policy methods: Use a scalarized reward function (weighted sum of objectives) to converge to a single point on the Pareto front.

- Multi-policy methods: Aim to approximate the entire Pareto frontier, often using population-based algorithms or multiple training runs with varied preferences.

Current research emphasizes reward function design that ensures Pareto-compliant behavior, where improving an agent's scalarized reward corresponds to moving toward the Pareto frontier. Challenges include handling objectives of different scales, sparse rewards, and dynamic preferences.

Table 1: Common Multi-Objective Scalarization Methods

| Method | Formula (for objectives J₁, J₂) | Key Property | Best Use Case |

|---|---|---|---|

| Linear Scalarization | R = w₁J₁ + w₂J₂ | Reaches only Pareto-optimal points on the convex hull of the front; misses concave regions. | Known, convex objective spaces. |

| Chebyshev (Tchebycheff) | R = -maxᵢ wᵢ·abs(Jᵢ* - Jᵢ), with J* the ideal point | Can find any Pareto-optimal point. | General use, non-convex frontiers. |

| Hypervolume Indicator | Measures volume dominated wrt reference point. | Directly optimizes for coverage and convergence. | Indicator-based evolutionary algorithms (e.g., SMS-EMOA); benchmarking fronts from NSGA-II or MOEA/D. |

| Thresholded Objectives | R = Σᵢ f(Jᵢ > Tᵢ) | Encourages satisfying hard constraints. | Mandatory minimum performance levels. |
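
The practical difference between linear and Chebyshev scalarization is easiest to see on a small non-convex front. The sketch below uses three made-up, mutually non-dominated candidates and a Chebyshev reward written as a negative distance to an assumed ideal point (1, 1), so that higher is better; the function names and data are hypothetical.

```python
def linear_scalarization(objectives, weights):
    """Weighted sum of objectives (all maximized)."""
    return sum(w * j for w, j in zip(weights, objectives))


def chebyshev_scalarization(objectives, weights, ideal):
    """Negative weighted Chebyshev distance to the ideal point (higher is better)."""
    return -max(w * abs(z - j) for w, j, z in zip(weights, objectives, ideal))


# Three mutually non-dominated candidates; C sits in a concave region
# of the front (0.4 + 0.4 < 1.0).
candidates = {"A": (1.0, 0.0), "B": (0.0, 1.0), "C": (0.4, 0.4)}
weights = (0.5, 0.5)
ideal = (1.0, 1.0)

best_linear = max(candidates, key=lambda k: linear_scalarization(candidates[k], weights))
best_chebyshev = max(
    candidates, key=lambda k: chebyshev_scalarization(candidates[k], weights, ideal)
)
```

With equal weights the linear reward prefers an extreme point (A or B score 0.5, C scores 0.4), and no choice of non-negative weights makes C the linear optimum. The Chebyshev reward selects the balanced candidate C.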

Application Notes for Catalyst Optimization

Note 1: Reward Shaping for Chemical Objectives

- Activity (Yield/TOF): Dense reward shaped as incremental improvement toward target. Use logarithmic scaling if spans orders of magnitude.

- Selectivity: Reward proportional to desired product fraction. Can be combined with a penalty for byproducts.

- Stability (Catalytic Lifetime): Sparse reward for maintaining activity over a defined number of cycles/time steps.

- Cost/LCA Score: Negative reward proportional to the expense or environmental impact of materials/processes.

Note 2: Handling Conflicting Objectives (e.g., Activity vs. Stability)

- Activity versus stability is a common Pareto trade-off in catalysis. The reward function must not allow trivial maximization of one at the total expense of the other.

- Protocol: Use a constrained-optimization approach in which stability is a threshold objective and activity is maximized subject to that constraint.
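
One way to express the threshold approach from Note 2 is a reward that ignores activity until the stability constraint is satisfied. This is a sketch under assumptions: both quantities are taken to be normalized to [0, 1], and the threshold (0.8) and penalty scale are arbitrary illustrative values.

```python
def constrained_reward(activity, stability, stability_threshold=0.8, penalty_scale=5.0):
    """Maximize activity subject to stability >= stability_threshold.

    Infeasible candidates get a negative reward proportional to the
    shortfall, so the agent still receives a gradient toward feasibility.
    Feasible candidates are ranked by activity alone.
    """
    shortfall = max(0.0, stability_threshold - stability)
    if shortfall > 0.0:
        return -penalty_scale * shortfall
    return activity
```

Because every infeasible candidate scores below zero and every feasible one scores at or above zero, a highly active but unstable catalyst can never outrank a stable one.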

Experimental Protocols

Protocol 1: Benchmarking Reward Functions with a Known Catalyst Simulator

- Objective: Evaluate the efficacy of different scalarization methods in discovering Pareto-optimal catalyst formulations (e.g., varying metal ratio, support, ligand).

- Materials: Computational catalyst model (e.g., microkinetic model, DFT-based surrogate), RL library (RLlib, Stable-Baselines3), multi-objective optimization library (PyGMO, pymoo).

- Method:

- Define state space (catalyst descriptors), action space (composition changes), and environment (simulator).

- Implement 3-4 reward functions (e.g., Linear, Chebyshev, Hypervolume-based).

- Train a PPO or SAC agent with each reward function for a fixed number of episodes.

- Record all evaluated catalysts and their performance vectors (activity, selectivity).

- Post-training, compute the hypervolume indicator of the discovered non-dominated set relative to a predefined reference point.

- Analysis: Compare the hypervolume and spread of solutions from each reward function. The superior function yields a larger hypervolume with well-distributed points.
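
For two objectives, the non-dominated filter and the hypervolume indicator used in this benchmark can be computed directly. The sketch below assumes both objectives are maximized and that performance vectors are stored as tuples; libraries such as pymoo provide general implementations for more objectives, so this is only meant to make the metric concrete.

```python
def non_dominated(points):
    """Return the points not dominated by any other (both objectives maximized)."""
    front = []
    for p in points:
        dominated = any(
            q[0] >= p[0] and q[1] >= p[1] and (q[0] > p[0] or q[1] > p[1])
            for q in points
        )
        if not dominated:
            front.append(p)
    return front


def hypervolume_2d(front, reference):
    """Area dominated by the front and bounded below by the reference point."""
    # Sweep from the largest first objective downward; each point adds the
    # rectangle between the previous second-objective level and its own.
    pts = sorted(set(front), key=lambda p: p[0], reverse=True)
    area, prev_y = 0.0, reference[1]
    for x, y in pts:
        if x > reference[0] and y > prev_y:
            area += (x - reference[0]) * (y - prev_y)
            prev_y = y
    return area


# Hypothetical (activity, selectivity) vectors recorded during training.
evaluated = [(3.0, 1.0), (2.0, 2.0), (1.0, 3.0), (1.0, 1.0), (2.0, 1.5)]
front = non_dominated(evaluated)
hv = hypervolume_2d(front, reference=(0.0, 0.0))
```

The reference point must be fixed before the comparison and shared by all reward functions, otherwise the hypervolumes are not comparable.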

Protocol 2: Iterative Human-in-the-Loop Preference Elicitation

- Objective: Refine the Pareto frontier based on expert scientist feedback, aligning computational search with practical laboratory feasibility.

- Method:

- Initial RL run produces a candidate Pareto frontier.

- Present a diverse subset of 5-10 catalyst proposals from the frontier to domain experts.

- Experts rank proposals or provide pair-wise preferences based on tacit knowledge (e.g., synthetic accessibility, predicted toxicity).

- Use preference-based reinforcement learning (PbRL) or Bayesian optimization to update the reward function, incorporating expert preferences.

- Retrain the RL agent or guide the search with the updated reward model.

- Repeat for 2-3 cycles to converge on a practically relevant Pareto frontier.

Visualizations

Title: RL Agent Interaction with Pareto Frontier

Title: MORL Catalyst Optimization Protocol

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for MORL Catalyst Research

| Item | Function in Research | Example/Supplier (Illustrative) |

|---|---|---|

| High-Throughput Experimentation (HTE) Robot | Physically validates RL-proposed catalyst libraries, generating ground-truth multi-objective data. | Chemspeed, Unchained Labs |

| Microkinetic Modeling Software | Provides a simulated environment for RL agent training before costly real-world testing. | CATKINAS, KineticsTM |

| Multi-Objective Optimization Library | Benchmarks RL results and computes Pareto frontiers/hypervolume. | PyGMO, pymoo, Platypus |

| Reinforcement Learning Framework | Implements and trains agents (e.g., PPO, SAC) with custom reward functions. | RLlib (Ray), Stable-Baselines3 |

| Catalyst Characterization Suite | Provides state descriptors (e.g., particle size, oxidation state) for the RL state space. | XRD, XPS, TEM instruments |

| Computational Chemistry Suite | Calculates objective proxies (e.g., binding energies for activity/selectivity) via DFT. | VASP, Gaussian, Quantum ESPRESSO |

This application note details a case study on using reinforcement learning (RL) for the discovery of selective hydrogenation catalysts, framed within a multi-objective catalyst optimization thesis. Selective hydrogenation is critical for fine chemical and pharmaceutical synthesis, where achieving high selectivity for a desired product over competing reactions is paramount. Traditional catalyst discovery is slow and costly. This study demonstrates an RL-driven closed-loop system that integrates computational prediction, robotic synthesis, and high-throughput testing to rapidly identify optimal multi-metallic catalyst formulations for the selective hydrogenation of alkynes to alkenes.

Application Notes

RL Framework and Agent Training

The core of the system is a Deep Q-Network (DQN) agent. The agent's state space is defined by catalyst descriptors: elemental composition (e.g., Pd, Cu, Ag, Au ratios), support material (e.g., Al2O3, C), and synthesis conditions (precursor concentration). The action space is the modification of these parameters within a defined step size. The reward function (R) is a weighted multi-objective sum:

R = w1 * (Selectivity) + w2 * (Conversion) - w3 * (Cost of Noble Metals)

where weights (w1, w2, w3) are tuned to prioritize selectivity while maintaining activity and cost-effectiveness.

The agent was trained over 50 episodes, with each episode comprising 20 experimental cycles. Exploration (ε-greedy) started at 80% and decayed to 10%.
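
The exploration schedule can be illustrated with a short sketch. The text gives only the start (80%) and end (10%) values, so the linear decay over 50 episodes used here is an assumption, as are the function names.

```python
import random


def epsilon_for_episode(episode, n_episodes=50, eps_start=0.80, eps_end=0.10):
    """Linearly interpolate epsilon from eps_start (episode 0) to eps_end (last episode)."""
    if n_episodes < 2:
        return eps_end
    frac = min(1.0, max(0.0, episode / (n_episodes - 1)))
    return eps_start + frac * (eps_end - eps_start)


def epsilon_greedy(q_values, epsilon, rng=random):
    """Pick a random action with probability epsilon, otherwise the greedy one."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda i: q_values[i])
```

An exponential decay would fit the same endpoints; which schedule works better depends on how quickly the Q-value estimates become reliable.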

Key Quantitative Results

The RL-optimized catalyst was compared against a standard Lindlar catalyst (Pd/Pb-CaCO3) and a randomly screened library.

Table 1: Performance Comparison for Phenylacetylene to Styrene Hydrogenation

| Catalyst Formulation (Pd-based) | Selectivity (%) @ 90% Conversion | Turnover Frequency (h⁻¹) | Normalized Cost Index |

|---|---|---|---|

| Lindlar (Baseline) | 85 ± 3 | 450 | 1.00 |

| Best Random Screen (PdCu/Al2O3) | 88 ± 4 | 520 | 0.75 |

| RL-Optimized (PdCuAg/Au-doped C) | 96 ± 2 | 610 | 0.65 |

Table 2: RL Training Metrics (Averaged over Last 10 Episodes)

| Metric | Value |

|---|---|

| Average Reward per Episode | 82.4 |

| Steps to Converge on Optimal Candidate | 14 |

| Exploration Rate (Final) | 0.10 |

Experimental Protocols

Protocol 1: RL-Driven Catalyst Synthesis Workflow

Objective: To prepare catalyst candidates as directed by the RL agent’s action output. Materials: See "Scientist's Toolkit" below. Procedure:

- Precursor Solution Preparation: Based on the agent-specified composition, calculate volumes of 10 mM metal salt solutions (e.g., PdCl2, Cu(NO3)2, AgNO3, HAuCl4).

- Wet Impregnation: Add the calculated precursor mix to 100 mg of the specified support material (e.g., mesoporous carbon) in a 4 mL vial. Add deionized water to the incipient wetness point.

- Drying & Calcination: Sonicate for 10 min, then dry in an oven at 120°C for 2 hours. Transfer to a tube furnace and calcine under static air at 300°C for 2 hours (ramp rate: 5°C/min).

- Reduction: Switch to 5% H2/Ar flow (50 sccm) and reduce at 200°C for 1 hour.

- Passivation: Cool to room temperature under Ar, then expose to 1% O2/Ar for 30 minutes to passivate the surface.

- Transfer: The prepared catalyst is automatically weighed and transferred to the reaction robotics platform.
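
The volume calculation in the first step is simple arithmetic that the liquid handler needs for every candidate. The sketch below assumes the agent's composition has already been translated into metal loadings expressed as weight percent relative to the support mass; that convention, the function name, and the example loading are assumptions rather than details from the protocol.

```python
# Standard atomic masses in g/mol.
MOLAR_MASS = {"Pd": 106.42, "Cu": 63.546, "Ag": 107.868, "Au": 196.967}


def precursor_volumes_ul(loadings_wt_pct, support_mass_mg=100.0, conc_mM=10.0):
    """Volume of each metal salt stock solution (in microliters).

    loadings_wt_pct: dict of metal symbol -> target loading, wt% of support mass.
    conc_mM: stock concentration in mmol/L of metal.
    """
    volumes = {}
    for metal, wt_pct in loadings_wt_pct.items():
        metal_mass_mg = support_mass_mg * wt_pct / 100.0
        micromol = metal_mass_mg / MOLAR_MASS[metal] * 1000.0  # mg / (g/mol) = mmol
        volume_ml = micromol / conc_mM                         # 1 mM = 1 umol/mL
        volumes[metal] = volume_ml * 1000.0
    return volumes


# Example: 1 wt% Pd on 100 mg of support from a 10 mM stock.
example_volumes = precursor_volumes_ul({"Pd": 1.0})
```

For the example, 1 mg of Pd corresponds to about 9.4 µmol, or roughly 940 µL of the 10 mM stock.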

Protocol 2: High-Throughput Hydrogenation Screening

Objective: To evaluate catalyst performance (conversion and selectivity) and feed data into the RL state. Procedure:

- Reaction Setup: In a 96-well parallel pressure reactor array, add 5 mL of 10 mM phenylacetylene in toluene to each well containing 2 mg of catalyst.

- Reaction Execution: Seal the array, purge with H2 three times, and pressurize to 5 bar H2. Heat to 50°C with stirring at 1000 rpm for 30 minutes.

- Quenching & Analysis: Rapidly cool the array to 10°C. Take a 100 µL aliquot from each well, dilute, and analyze by automated GC-MS.

- Data Processing: Calculate conversion of phenylacetylene and selectivity to styrene. Transmit these values, along with the catalyst descriptor set, to the RL agent to update the state and compute the reward.
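
The data-processing step reduces each chromatogram to two numbers. A minimal sketch, assuming calibrated concentrations in consistent units and 1:1 stoichiometry between phenylacetylene consumed and styrene formed (the function name and example values are hypothetical):

```python
def conversion_and_selectivity(c0_substrate, c_substrate, c_product):
    """Return (conversion, selectivity) as fractions.

    conversion  = substrate consumed / substrate fed
    selectivity = desired product formed / substrate consumed
    """
    if c0_substrate <= 0:
        raise ValueError("initial substrate concentration must be positive")
    consumed = c0_substrate - c_substrate
    conversion = consumed / c0_substrate
    selectivity = c_product / consumed if consumed > 0 else 0.0
    return conversion, selectivity


# Example: 10 mM feed, 1 mM phenylacetylene left, 8.64 mM styrene found.
conv, sel = conversion_and_selectivity(10.0, 1.0, 8.64)
```

In the example, conversion is 90% and selectivity to styrene is 96%; the remaining 4% of the consumed substrate went to other products such as ethylbenzene.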

Visualizations

Title: RL-Driven Catalyst Discovery Closed Loop

Title: Multi-Objective Reward Function Pathway

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials

| Item/Reagent | Function in Protocol | Example Specification/Note |

|---|---|---|

| Metal Salt Precursors | Source of active metal components. | 10 mM aqueous solutions of PdCl2, Cu(NO3)2, AgNO3, HAuCl4. |

| Functionalized Support Materials | High-surface-area carrier for metal dispersion. | Mesoporous Carbon, γ-Al2O3, TiO2 (200-400 m²/g). |

| Parallel Pressure Reactor Array | Enables high-throughput catalytic testing under controlled conditions. | 96-well, glass-lined, with individual magnetic stirring. |

| Automated GC-MS System | For rapid, quantitative analysis of reaction mixtures. | Fast GC column (<5 min run time), robotic autosampler. |

| Robotic Liquid Handler | Precise dispensing of precursors and reagents for synthesis. | Capable of handling µL to mL volumes with inert atmosphere. |

| Tube Furnace with Gas Control | For controlled catalyst calcination and reduction. | Programmable, with multiple gas lines (Air, H2/Ar). |

| Substrate Solution | Standardized reaction feedstock for consistent screening. | 10 mM Phenylacetylene in anhydrous, inhibitor-free Toluene. |

Application Notes and Protocols

Within the context of advancing multi-objective catalyst optimization research, integrating Reinforcement Learning (RL) with high-throughput experimentation (HTE) and lab automation creates a closed-loop, autonomous discovery pipeline. This paradigm accelerates the exploration of complex chemical spaces by using experimental data to directly train and refine RL policies that guide subsequent experiments toward optimal, multi-property targets (e.g., activity, selectivity, stability).

1. Core Autonomous Experimentation Workflow Protocol

Protocol Title: Closed-Loop RL-Driven Catalyst Screening and Optimization Objective: To autonomously explore a multi-dimensional catalyst composition space (e.g., ratios of metals, dopants, supports) to maximize a composite reward function.

Materials & Setup:

- Automated Synthesis Platform: Liquid-handling robotics or automated impregnation system for reproducible catalyst precursor preparation.

- High-Throughput Characterization: Automated physisorption/chemisorption analyzer, robotic XRD, or rapid-throughput spectroscopy.

- Automated Catalytic Testing Reactor: Parallel or rapid serial microreactor system with integrated online GC/MS or MS for product analysis.

- Centralized Data Lake: A structured database (e.g., SQL/NoSQL) with automatic ingestion from all instruments, tagged with unique experiment IDs.

- RL Agent Server: Compute node running the RL algorithm (e.g., customized OpenAI Gym environment), interfaced with the data lake and experiment planner.

Detailed Procedure:

- Parameterization & Initialization: Define the search space (e.g., continuous variables: Metal A loading 0.1-5 wt%, Metal B loading 0.1-5 wt%; discrete variables: support type [Al2O3, SiO2, TiO2]). Formulate a normalized, multi-objective reward function R = w₁·Yield + w₂·Selectivity - w₃·Cost.

- Initial Seed Dataset Generation: Execute a space-filling Design of Experiments (DoE, e.g., Sobol sequence) of 20-50 experiments using the automated platforms (Steps 3-5) to provide initial data for RL model pre-training.

- Automated Synthesis: The robotic platform prepares catalyst samples according to the specified composition, followed by standardized calcination/reduction protocols.

- Automated Characterization & Testing: Samples are transferred (manually or via robotic arm) to characterization stations and finally to the testing reactor. Performance data is automatically parsed and stored.

- RL Agent Cycle:

a. State Representation: The agent receives the current state s_t, defined as the set of all experimental data from the last completed batch.

b. Action Selection: The agent’s policy network proposes the next batch of experimental conditions a_t (catalyst compositions) to test.

c. Experiment Execution: The proposed actions are queued and executed via the automated platforms (Steps 3-4).

d. Reward Calculation & Update: Upon completion, rewards r_t are computed from the new data. The agent updates its policy using the collected transition (s_t, a_t, r_t, s_{t+1}), typically via an off-policy algorithm like Soft Actor-Critic (SAC) or a Bayesian optimization-inspired approach.

- Termination: The loop (Step 5) continues until a performance threshold is met or the experimental budget (number of experiments) is exhausted. The system outputs the Pareto front of optimal catalyst compositions.
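
The seed dataset in the procedure above needs a space-filling design over a mixed continuous/discrete search space. The procedure names a Sobol sequence; the sketch below substitutes a Latin hypercube built with the standard library only, which serves the same purpose but is a different technique. The function name, the dictionary layout, and the round-robin support assignment are assumptions.

```python
import random


def latin_hypercube_seed(n, bounds, supports, seed=0):
    """Generate n seed experiments.

    bounds:   list of (low, high) tuples, one per continuous variable.
    supports: list of discrete support options, assigned round-robin so
              each appears about equally often.
    """
    rng = random.Random(seed)
    columns = []
    for lo, hi in bounds:
        # One sample per equal-width stratum, then shuffle the strata so
        # the variables are not correlated across experiments.
        col = [lo + (hi - lo) * (i + rng.random()) / n for i in range(n)]
        rng.shuffle(col)
        columns.append(col)
    return [
        {
            "loadings_wt_pct": [col[i] for col in columns],
            "support": supports[i % len(supports)],
        }
        for i in range(n)
    ]


# 20 seed experiments over two metal loadings (0.1-5 wt%) and three supports.
design = latin_hypercube_seed(
    20, [(0.1, 5.0), (0.1, 5.0)], ["Al2O3", "SiO2", "TiO2"]
)
```

If SciPy is available, its quasi-Monte Carlo module offers Sobol sampling directly and would match the procedure more literally.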

2. Protocol for Adaptive Multi-Objective Reward Shaping

Protocol Title: Dynamic Weight Adjustment for RL-Guided Pareto Front Exploration Objective: To dynamically adjust the weights in the multi-objective reward function, enabling guided exploration of the Pareto front.

Procedure:

- Initialize multiple RL agents in parallel, each with a different static set of weights [w₁, w₂, w₃] in its reward function.

- After each batch of experiments, aggregate all data from all agents.

- Perform a global Pareto front analysis.

- Identify underexplored regions of the Pareto front (gaps between solutions).

- Dynamically spawn new RL agents with reward weights biased to target those gaps, or re-weight existing agents' objectives.

- Continue the main loop, allowing agents with successful policies (high cumulative reward) to propose more experiments.
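
The gap-targeting step can be made concrete for two objectives. The sketch below finds the widest gap between neighbouring points on the current front and returns linear reward weights normal to the segment joining them, so that a new agent is rewarded for candidates lying between the two. The function name and the example front are hypothetical, and because the weights are linear they can only pull toward convex regions; a concave gap would need a Chebyshev-style reward instead.

```python
import math


def weights_for_largest_gap(front):
    """front: list of (j1, j2) tuples, both maximized and mutually non-dominated.

    Returns ((w1, w2), (a, b)), where a and b are the two neighbouring
    points that bound the largest gap.
    """
    pts = sorted(front)  # ascending j1, therefore descending j2
    if len(pts) < 2:
        raise ValueError("need at least two points on the front")
    a, b = max(zip(pts, pts[1:]), key=lambda ab: math.dist(ab[0], ab[1]))
    d1 = b[0] - a[0]  # gain in objective 1 from a to b
    d2 = a[1] - b[1]  # loss in objective 2 from a to b
    total = d1 + d2
    # (d2, d1) is normal to the segment a-b, so a and b score equally and
    # any point beyond the segment scores higher.
    return (d2 / total, d1 / total), (a, b)


current_front = [(0.0, 1.0), (0.2, 0.9), (0.9, 0.2), (1.0, 0.0)]
new_weights, gap = weights_for_largest_gap(current_front)
```

For the example front the widest gap lies between (0.2, 0.9) and (0.9, 0.2), and the new agent receives equal weights.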

Quantitative Data Summary

Table 1: Performance Comparison of Optimization Methods for Catalyst Discovery

| Method | Avg. Experiments to Target | Pareto Front Coverage (AUC) | Material/Time Cost Saved vs. Grid Search | Key Algorithm(s) Used |

|---|---|---|---|---|

| Full Grid Search | ~5000 (Exhaustive) | 100% (Baseline) | 0% | N/A |

| Traditional DoE + RSM | ~200-500 | ~60-75% | ~60-90% | Polynomial Regression |

| Bayesian Optimization | ~100-300 | ~70-85% | ~85-95% | Gaussian Process (GP) |

| RL (Off-policy) | ~50-150 | ~80-95% | ~95-98% | Soft Actor-Critic (SAC), TD3 |

| RL (Multi-Agent) | ~80-200 | ~90-98% | ~90-97% | Multi-Objective SAC, Q-learning |

Table 2: Exemplar Reagent Solutions for Heterogeneous Catalyst RL-HTE

| Reagent/Material | Function in RL-HTE Pipeline |

|---|---|

| Precursor Solutions (e.g., H₂PtCl₆, Rh(NO₃)₃) | Standardized, robotically dispensable sources of active metal components for precise compositional control. |

| Modular Catalyst Supports (e.g., γ-Al₂O₃ pellets, TiO₂ powders) | Uniform, high-surface-area substrates enabling reproducible synthesis and testing. |

| Automated Microreactor Cartridges | Standardized, disposable reaction vessels for high-throughput, parallelized activity testing. |

| Internal Analytical Standards (e.g., 1% Ne in He, deuterated solvents) | Ensures data fidelity and enables cross-batch calibration of GC/MS or MS detection. |

| Solid-Phase Extraction (SPE) Plates | For automated, high-throughput post-reaction quench and clean-up of liquid-phase catalytic mixtures. |

Visualizations

Title: Autonomous RL-Driven Experimentation Loop

Title: Multi-Agent RL for Pareto Front Exploration

Overcoming Roadblocks: Solutions for Sparse Rewards, Exploration, and Data Efficiency

Tackling the Sparse/Delayed Reward Problem in Chemical Reactions

Within the thesis "Using Reinforcement Learning for Multi-Objective Catalyst Optimization," a central computational challenge is the sparse/delayed reward problem. In chemical reaction optimization, an RL agent (e.g., selecting catalyst formulations, reactants, or conditions) often only receives a meaningful reward—such as final yield, selectivity, or turnover number—after a full experimental cycle. This sparse feedback, devoid of intermediate guidance, drastically slows learning and requires prohibitively many real-world experiments. These Application Notes detail protocols and strategies to mitigate this problem, enabling more efficient RL-driven discovery.

Core Strategies and Quantitative Comparisons

Recent research has focused on three primary strategies to address reward sparsity in chemical RL: reward shaping, model-based RL, and hierarchical RL. The quantitative efficacy of these approaches, based on recent literature, is summarized below.

Table 1: Comparison of Strategies for Mitigating Sparse/Delayed Rewards in Chemical Reaction RL

| Strategy | Key Mechanism | Reported Efficiency Gain* (vs. Baseline RL) | Key Limitations | Representative Application |

|---|---|---|---|---|

| Reward Shaping | Provides auxiliary, informative rewards (e.g., intermediate spectroscopic signals). | 2-5x reduction in required experiments | Requires domain knowledge to design non-cheatable rewards. | Optimizing Pd-catalyzed C-N coupling using in-situ IR yield estimates as intermediate reward. |

| Model-Based RL | Learns a forward model of reaction dynamics to generate "imagined" rollouts and rewards. | 5-20x reduction in experiments | Model bias/error can lead to exploitation of inaccuracies. | Flow reactor optimization for photocatalytic C–C coupling using a probabilistic neural network model. |

| Hierarchical RL | Uses a meta-policy to set sub-goals (e.g., reach intermediate X), with lower-level policies achieving them. | 3-10x reduction in experiments | Increased algorithmic complexity. | Multi-step synthesis planning where each step is a sub-task with its own reward. |

| Inverse Reinforcement Learning | Infers a dense reward function from expert demonstrations (e.g., prior literature data). | N/A (enables initialization) | Dependent on quality and breadth of demonstration data. | Inferring cost functions for solvent selection from historical reaction databases. |

*Efficiency gain typically measured in number of experimental iterations required to reach a target performance threshold.

Detailed Experimental Protocols

Protocol 3.1: Reward Shaping Using In-Situ Spectroscopic Monitoring

Objective: To provide dense, intermediate rewards for an RL agent optimizing a palladium-catalyzed Suzuki-Miyaura cross-coupling reaction. Materials: See Scientist's Toolkit (Section 5). Procedure:

- Setup Automated Flow Reactor: Configure a continuous flow system with an in-line FTIR spectrometer cell. The system must allow for computer-controlled adjustment of key parameters (e.g., temperature, residence time, reagent stoichiometry).

- Define State & Action Spaces:

- State (s): Current reaction conditions (T, t, [Pd], [Base]) + real-time FTIR peak ratio (Aryl-Boronic Acid / Biaryl Product).

- Action (a): Discrete or continuous adjustments to T (±5°C), t (±10%), or stoichiometry (±5%).

- Design Reward Function:

- Intermediate Reward (r_t): r_t = α * Δ[Product]_IR, where Δ[Product]_IR is the change in the normalized product IR peak area since the last action.

- Final Reward (r_T): r_T = β * Final GC Yield + γ * Selectivity.

- Total Reward: r = r_t + r_T, with r_T added only at the final step of the run.

- Agent Training: Initialize a policy gradient (e.g., REINFORCE) or actor-critic (e.g., DDPG) agent. For each episode (full reaction run):

- The agent interacts with the flow system every t minutes.

- It observes state s_t, takes action a_t, and receives shaped reward r_t.

- After a fixed number of steps or upon reaction completion, the agent receives r_T and updates its policy.

- Validation: Run the final optimized policy for n=3 replicates and compare yield/selectivity to a traditionally optimized (e.g., DoE) control.
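
The reward design in this protocol can be sketched as a function that turns one run's IR trace and final analytics into a per-step reward sequence. The function name, the coefficient defaults, and the example trace are illustrative assumptions; yield and selectivity are taken as fractions.

```python
def shaped_rewards(product_ir_areas, final_yield, selectivity,
                   alpha=1.0, beta=1.0, gamma=0.5):
    """Per-step rewards for one reaction run.

    product_ir_areas: normalized product peak area observed before the first
                      action and after each subsequent action.
    The terminal reward (beta * yield + gamma * selectivity) is added to
    the last step only.
    """
    rewards = [
        alpha * (curr - prev)
        for prev, curr in zip(product_ir_areas, product_ir_areas[1:])
    ]
    terminal = beta * final_yield + gamma * selectivity
    if rewards:
        rewards[-1] += terminal
    else:
        rewards = [terminal]
    return rewards


# Example run: three actions, final GC yield 80%, selectivity 90%.
run_rewards = shaped_rewards([0.0, 0.2, 0.5, 0.6], final_yield=0.8, selectivity=0.9)
```

Because the intermediate terms are differences, they telescope: their sum equals α times the net change in product signal over the run, so the agent cannot accumulate shaping reward by driving the signal up and down repeatedly.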

Protocol 3.2: Model-Based RL with Probabilistic Ensemble Models

Objective: To reduce physical experiments by using a learned dynamics model for agent pre-training and simulation. Materials: Access to a historical dataset of ~100-500 prior experiments for the reaction class of interest. Procedure:

- Dynamics Model Training:

- Data: Assemble dataset D = {(s_t, a_t, s_{t+1}, r_t)}.

- Architecture: Train a probabilistic ensemble of 5 neural networks to predict (Δs_{t+1}, r_t). Each network outputs a Gaussian distribution to capture uncertainty.

- Loss: Negative log-likelihood of the observed state transitions and rewards.

- Agent Training in Model (Dyna-Style):

- Real Experiment Loop: The agent performs k real experiments, collecting data to augment dataset D and retrain the ensemble model.

- Model Rollouts: Between real experiments, the agent conducts M simulation episodes using the learned model. Starting from a random state from D, it takes actions according to its current policy, using the model's predictions (with uncertainty-aware sampling) to generate simulated trajectories and rewards.

- Policy Update: The agent updates its policy (e.g., using SAC or PPO) on both real and simulated data.

- Iterative Optimization: Repeat the cycle of (model retraining -> model rollouts -> policy update -> real experiments) until target performance is met. The model's uncertainty estimates can be used to guide exploration in the real system (e.g., via Upper Confidence Bound).
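
Two small pieces of this protocol can be written down without committing to a neural network library: the Gaussian negative log-likelihood that each ensemble member minimizes, and the way member predictions are merged into one mean and one uncertainty. The sketch below is scalar and framework-free for clarity; the function names are hypothetical, and treating the ensemble as a uniform mixture of Gaussians is a common choice rather than something the protocol specifies.

```python
import math


def gaussian_nll(y, mu, sigma):
    """Negative log-likelihood of observation y under N(mu, sigma^2)."""
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    return 0.5 * math.log(2.0 * math.pi * sigma ** 2) + (y - mu) ** 2 / (2.0 * sigma ** 2)


def ensemble_moments(members):
    """Mean and standard deviation of a uniform mixture of Gaussians.

    members: list of (mu, sigma) predictions, one per ensemble member.
    The variance combines each member's own noise estimate (sigma^2)
    with the disagreement between member means.
    """
    n = len(members)
    mean = sum(mu for mu, _ in members) / n
    second_moment = sum(sigma ** 2 + mu ** 2 for mu, sigma in members) / n
    variance = max(0.0, second_moment - mean ** 2)
    return mean, math.sqrt(variance)


# Five hypothetical member predictions of the reward for one candidate.
predictions = [(0.70, 0.05), (0.74, 0.04), (0.66, 0.06), (0.72, 0.05), (0.68, 0.05)]
mean_reward, reward_std = ensemble_moments(predictions)
```

The combined standard deviation is the quantity that an Upper Confidence Bound rule would add to the mean when choosing which real experiment to run next.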

Visualizations of Key Methodologies

Diagram 1: Reward Shaping Workflow for Chemical RL

Diagram 2: Model-Based RL Cycle for Chemistry

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Implementing RL with Dense Reward Feedback

| Item / Reagent | Function / Role in Protocol | Example Product / Specification |

|---|---|---|

| Automated Flow Chemistry System | Enables precise, robotic control of reaction parameters (flow rate, T, P) and rapid iteration. | Vapourtec R-Series, Syrris Asia Flow System. |

| In-Line Spectroscopic Analyzer | Provides real-time, non-destructive data for intermediate reward shaping (FTIR, UV-Vis, Raman). | Mettler Toledo ReactIR (Flow Cell), Ocean Insight Spectrometers. |

| Liquid Handling Robot | For automated preparation of catalyst/reagent libraries in batch optimization tasks. | Hamilton ML STAR, Chemspeed Technologies SWING. |

| Reaction Data Management Software | Logs all experimental parameters and outcomes, creating the essential dataset for RL. | Titian Mosaic, CDD Vault, Benchling. |

| Probabilistic Machine Learning Library | Facilitates the construction of uncertainty-aware dynamics models for model-based RL. | PyTorch with torch.distributions, TensorFlow Probability. |

| High-Throughput Analytics | Provides the final, high-fidelity reward signals (yield, selectivity). | UHPLC/MS (Agilent, Waters), GC/MS (Shimadzu). |

| Reinforcement Learning Framework | Provides algorithms (PPO, SAC) and environment interfaces for agent development. | OpenAI Gym/Gymnasium, Ray RLlib, Stable-Baselines3. |

| Modular Catalysis Kits | Well-characterized ligand/metal precursor libraries for efficient exploration space definition. | Sigma-Aldrich Catalyst Kits, Strem Screening Libraries. |

Application Notes

In the context of multi-objective catalyst optimization, the chemical space of potential materials is combinatorially vast. Reinforcement Learning (RL) provides a principled framework to navigate this space by treating the sequential selection and testing of candidate catalysts as a Markov Decision Process. The core challenge is the exploration-exploitation (E/E) dilemma: allocating resources between testing novel, high-risk compositions (exploration) and refining known, promising candidates to meet multiple objectives like activity, selectivity, and stability (exploitation).

Table 1: Key Quantitative Metrics in RL-Driven Catalyst Discovery

| Metric | Typical Target Range | Role in Balancing E/E |

|---|---|---|

| Prediction Uncertainty (σ) | 0.05-0.5 eV (for energy) | High σ triggers exploration; low σ triggers exploitation. |

| Acquisition Function Value | User-defined scale (e.g., UCB κ=2-4) | Quantifies the trade-off between mean reward (μ) and uncertainty (κ*σ). |

| Pareto Front Size | 10-50 non-dominated candidates | Defines the current optimal set for multi-objective exploitation. |

| Sample Efficiency Gain | 2x-10x over random search | Measures the effectiveness of the RL policy. |

| Regret (Simple / Cumulative) | Minimization goal | Quantifies the opportunity cost of exploration. |

Protocols

Protocol 1: Setting Up the RL Agent and Environment for Catalyst Optimization

Objective: Initialize an RL loop for closed-loop, multi-property catalyst discovery. Materials: See "Scientist's Toolkit" below. Procedure:

- Environment Definition:

- Define the state (S) as the complete characterization data of the tested catalyst set (e.g., composition descriptors, synthesis conditions, measured properties).

- Define the action (A) space as the set of all possible next experiments (e.g., "synthesize Co₀.₇Fe₀.₂Mn₀.₁O_x at 500°C").

- Define the reward (R) as a scalar function of multiple objectives. A common approach is a weighted sum: R = w₁*Activity_Normalized + w₂*Selectivity_Normalized - w₃*Cost_Normalized, where wᵢ are weights.

- Agent Initialization:

- Choose an RL algorithm that matches the action space (e.g., Deep Q-Network (DQN) for discrete actions, or a policy-gradient method such as PPO or SAC for continuous or very large action spaces).

- Initialize a surrogate model (e.g., Gaussian Process or Neural Network) to predict rewards and uncertainties for untested actions.

- Set the acquisition function (e.g., Upper Confidence Bound, UCB: μ + κ*σ) to govern the E/E trade-off. A high κ promotes exploration.

- Initial Data Seeding:

- Run 20-50 random experiments (pure exploration) to create an initial dataset for training the initial surrogate model.

- Iteration Loop:

- The agent uses the current policy to select the next action (candidate) by optimizing the acquisition function.

- Execute the action in the lab (synthesis, characterization, testing).

- Observe the new state and multi-objective reward.

- Append the new (state, action, reward, next state) tuple to the replay buffer.

- Retrain the surrogate model and update the RL agent policy every N iterations (e.g., N=10).
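The reward definition and the acquisition step of this protocol can be sketched in a few lines. This is an illustration under stated assumptions, not the protocol's reference implementation: the weight values, the candidate names other than the one quoted above, and the `toy_predictions` table standing in for a trained surrogate are all hypothetical.

```python
def scalarized_reward(activity, selectivity, cost, weights=(0.5, 0.3, 0.2)):
    """R = w1*activity + w2*selectivity - w3*cost; inputs pre-normalized to [0, 1].

    The default weights are placeholders, not recommended values.
    """
    w1, w2, w3 = weights
    return w1 * activity + w2 * selectivity - w3 * cost

def select_next(candidates, predict, kappa=2.0):
    """Pick the untested candidate maximizing UCB = mu + kappa * sigma."""
    def ucb(candidate):
        mu, sigma = predict(candidate)
        return mu + kappa * sigma
    return max(candidates, key=ucb)

# Hypothetical surrogate output: candidate -> (predicted mean reward, uncertainty)
toy_predictions = {
    "Co0.7Fe0.2Mn0.1Ox": (0.60, 0.05),
    "Co0.5Fe0.5Ox": (0.55, 0.10),
    "Ni0.3Mn0.7Ox": (0.40, 0.30),
}
predict = toy_predictions.__getitem__

print(select_next(toy_predictions, predict, kappa=0.0))  # pure exploitation
print(select_next(toy_predictions, predict, kappa=2.0))  # uncertainty bonus applied
```

With κ = 0 the agent picks the candidate with the highest predicted mean; with κ = 2 the poorly characterized candidate wins because its uncertainty bonus outweighs its lower mean. In a real loop, `predict` would wrap the retrained surrogate model.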

Protocol 2: Implementing a Multi-Objective Adaptive E/E Strategy

Objective: Dynamically adjust the exploration parameter (κ) based on learning progress.

Procedure:

- Monitor Performance:

- Track the moving average of rewards and the rate of discovery of new Pareto-optimal candidates over a window of the last 20 iterations.

- Adjust κ Dynamically:

- If Pareto front growth rate < threshold (e.g., 1 new candidate in 20 runs): Increase κ by 10-20% to force more exploration of uncharted regions.

- If the reward stagnates (moving-average change < 1%) for more than 30 runs while the Pareto front is still growing: slightly decrease κ to focus on local exploitation (refinement) around high-performing candidates.

- If a high-reward candidate is found: Temporarily reduce κ for the next 5-10 actions to perform local exploitation (e.g., gradient-based search in descriptor space).

- Cycle Validation:

- Every 50 iterations, run 3-5 validation experiments on the current Pareto-optimal candidates to ensure reward predictions remain accurate and to prevent agent overfitting to model biases.
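The monitoring and adjustment rules above can be sketched as follows. This is a hedged illustration: the step sizes, the κ bounds, and the choice to give the Pareto-growth rule priority over the stagnation rule are assumptions made here, since the protocol does not specify how the rules interact.

```python
def dominates(a, b):
    """True if a is no worse than b on every objective and better on at least one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(points):
    """Return the non-dominated subset of objective tuples (all maximized)."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

def adjust_kappa(kappa, new_pareto_count, stagnant_runs,
                 min_new=1, up=0.15, down=0.05, bounds=(0.5, 6.0)):
    """Apply the adaptive rules; `up`, `down`, and `bounds` are illustrative values."""
    if new_pareto_count < min_new:
        kappa *= 1 + up      # front stalled over the window: explore more
    elif stagnant_runs > 30:
        kappa *= 1 - down    # reward plateau: refine locally
    low, high = bounds
    return min(max(kappa, low), high)

# Hypothetical (activity, selectivity) pairs, both normalized and maximized
observed = [(0.9, 0.2), (0.5, 0.5), (0.4, 0.4), (0.2, 0.9)]
print(pareto_front(observed))
print(adjust_kappa(2.0, new_pareto_count=0, stagnant_runs=0))
```

Clamping κ to a fixed range is a design choice that keeps repeated increases from driving the agent into purely random search.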

Visualizations

Figure: RL Closed-Loop for Catalyst Optimization Workflow

Figure: Agent Strategies for Navigating Chemical Space

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions & Computational Tools

| Item/Category | Function/Role in E/E Balance | Example/Notes |

|---|---|---|

| High-Throughput (HT) Synthesis Robot | Enables rapid execution of exploration actions (synthesis). | Fluidics-based platforms for automated precursor dispensing. |

| HT Characterization Suite | Provides fast state (property) evaluation for feedback. | Parallel photoreactors, GC/MS autosamplers, physisorption analyzers. |

| Chemical Descriptor Software | Encodes catalysts into numerical state vectors for the RL agent. | Dragon, RDKit; computes compositional, structural, & electronic features. |

| RL/ML Library | Core engine for the agent's policy and surrogate models. | TensorFlow, PyTorch, Stable-Baselines3, GPyTorch (for GPs). |

| Multi-Objective Optimization Lib | Manages the Pareto front for exploitation targeting. | pymoo, DEAP (for NSGA-II, etc.). |

| Acquisition Function Module | Directly implements the E/E trade-off logic. | Custom code or BoTorch for functions like UCB, Expected Improvement. |

| Laboratory Information Management System (LIMS) | Central replay buffer; logs all (state, action, reward) tuples. | Enables reproducible policy training and data provenance. |

Application Notes

Within multi-objective catalyst optimization research, sample inefficiency remains a primary barrier to deploying Reinforcement Learning (RL). Each experimental cycle (e.g., synthesizing and testing a novel catalyst formulation) is costly and time-consuming. This document details protocols for integrating transfer learning and priors to drastically reduce the number of required experimental samples.

1. Leveraging Transfer Learning from Simulation to Physical Experiments

The core strategy involves pre-training RL agents in high-fidelity computational simulations before fine-tuning with physical lab data.

Key Quantitative Data:

Table 1: Impact of Transfer Learning on Experimental Sample Efficiency

| Approach | Total Physical Samples Required for Target Performance | Reduction vs. Baseline | Key Simulation Parameters |

|---|---|---|---|

| Baseline RL (No Transfer) | 500 - 700 | 0% | N/A |

| Policy Transfer from DFT/MD Sim | 150 - 200 | ~70% | Density Functional Theory (DFT) accuracy; ~10⁶ simulation steps. |

| Domain Adaptation via Dynamics Randomization | 80 - 120 | ~85% | Randomized adsorption energies (±0.2 eV), reaction barriers (±0.15 eV). |

| Multi-Task Pre-training on Related Catalytic Families | 100 - 150 | ~75% | Pre-training on 3-5 related reaction networks (e.g., CO₂ reduction pathways). |

Protocol 1: Simulation-to-Reality Transfer for Catalyst Discovery

Objective: Pre-train an RL agent to optimize a catalyst descriptor space (e.g., composition, morphology) for target objectives (activity, selectivity, stability) using simulation proxies, then adapt to physical electrochemical testing.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- High-Fidelity Simulation Environment Setup:

- Implement a reward function R_sim = w₁*TOF_sim + w₂*Selectivity_sim - w₃*DeactivationRate_sim, where weights (wᵢ) reflect the multi-objective balance.

- Use DFT-calculated parameters (e.g., adsorption energies, activation barriers) to build a microkinetic model for the target reaction (e.g., Oxygen Evolution Reaction).

- Introduce randomized physical parameter distributions (dynamics randomization) to bridge the simulation-reality gap.

- Pre-training Phase:

- Train an RL agent (e.g., Soft Actor-Critic) in the simulation environment for a minimum of 5x10⁵ steps.

- Save the policy network (π_sim) and the learned state-value function.

- Fine-Tuning Phase with Physical Experiments:

- Initialize the physical experiment RL agent with the weights from π_sim.

- Freeze the initial layers of the actor-network to preserve foundational feature representations.

- Replace the final simulation-specific layers and train only these, plus the critic network, using sparse physical reward signals.

- Employ a prioritized experience replay buffer, heavily weighting initial physical experiments.
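The dynamics-randomization step in the simulation setup can be illustrated with a small sampler. This is a sketch under assumptions: the uniform distribution, the parameter names, and the nominal values are hypothetical, while the ±0.2 eV and ±0.15 eV spreads follow the ranges quoted in the transfer-learning table.

```python
import random

def randomize_parameters(nominal, spreads, rng):
    """Draw one perturbed parameter set.

    Each value is shifted uniformly within +/- its spread (eV). The uniform
    distribution is an assumption; a Gaussian would also be a reasonable choice.
    """
    return {name: value + rng.uniform(-spreads[name], spreads[name])
            for name, value in nominal.items()}

rng = random.Random(0)  # seeded so a pre-training run can be reproduced
nominal = {"E_ads_OH": 0.80, "E_barrier": 0.65}  # hypothetical DFT values in eV
spreads = {"E_ads_OH": 0.20, "E_barrier": 0.15}  # adsorption energy, reaction barrier

# One fresh parameter draw per simulated episode
for episode in range(3):
    params = randomize_parameters(nominal, spreads, rng)
    print(episode, {k: round(v, 3) for k, v in params.items()})
```

Resampling the parameters at the start of every episode forces the policy to perform well across a band of plausible physics instead of overfitting to a single DFT estimate, which is the intent behind bridging the simulation-reality gap.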

2. Incorporating Expert Priors into the RL Loop

Integrating domain knowledge as priors guides exploration towards promising regions of the catalyst design space.

Key Quantitative Data:

Table 2: Effect of Prior Integration on RL Convergence

| Prior Type | Integration Method | Convergence Acceleration | Risk of Premature Convergence |

|---|---|---|---|

| Descriptor Bounds | Action space constraints | 30-40% Faster | Low |

| Physical Knowledge Models | Reward shaping (+ R_prior) | 50-60% Faster | Medium |

| Spectral/Fingerprint Data | Auxiliary prediction tasks | 40-50% Faster | Low |

| Human Expert Ranking | Pre-training via Behavior Cloning | 60-70% Faster | High (Requires Regularization) |

Protocol 2: Reward Shaping with Physicochemical Priors

Objective: Incorporate known scaling relationships (e.g., Sabatier principle, Brønsted-Evans-Polanyi relations) to shape the reward signal and penalize physically implausible catalyst candidates.

Procedure:

- Prior Model Formulation:

- Encode the prior as an additional reward component: R_total = R_experimental + λ * R_prior.

- For OER, R_prior could be a Gaussian function of the adsorption free energy difference (ΔG_O - ΔG_OH), peaking near the volcano-plot optimum for this descriptor (~1.6 eV).

- The scaling factor λ starts high (e.g., 0.7) and is annealed to 0.1 over the experiment to gradually shift from prior-guided to data-driven optimization.

- RL Loop Integration:

- At each RL step, calculate R_prior based on the candidate catalyst's computed descriptors (from a fast surrogate model or database lookup).

- Add λ * R_prior to the sparse experimental reward (which may be 0 for several steps until characterization is complete).

- Update the agent's policy using this combined reward, effectively biasing exploration toward descriptor regions favored by the prior.
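The shaped reward and its annealing schedule can be written out as a short sketch. The Gaussian width, the linear schedule, and the function names are assumptions made for illustration; the protocol specifies only the start and end values of λ and the Gaussian form. The descriptor optimum is passed in as a parameter and is not fixed here.

```python
import math

def prior_reward(descriptor_ev, optimum_ev, width_ev=0.3):
    """Gaussian prior: 1.0 at the descriptor optimum, decaying away from it.

    `width_ev` is a hypothetical tolerance, not a value from the protocol.
    """
    return math.exp(-0.5 * ((descriptor_ev - optimum_ev) / width_ev) ** 2)

def annealed_lambda(step, total_steps, lam_start=0.7, lam_end=0.1):
    """Linearly anneal the prior weight from lam_start to lam_end (assumed schedule)."""
    frac = min(max(step / total_steps, 0.0), 1.0)
    return lam_start + frac * (lam_end - lam_start)

def shaped_reward(r_experimental, descriptor_ev, optimum_ev, step, total_steps):
    """R_total = R_experimental + lambda * R_prior."""
    lam = annealed_lambda(step, total_steps)
    return r_experimental + lam * prior_reward(descriptor_ev, optimum_ev)

OPTIMUM_EV = 1.0  # placeholder; substitute the optimum used for your descriptor
for step in (0, 50, 100):
    # Experimental reward is 0.0 here to mimic steps awaiting characterization
    print(step, round(shaped_reward(0.0, 1.1, OPTIMUM_EV, step, 100), 4))
```

Early in the campaign the prior dominates the signal, so candidates near the descriptor optimum are favored even before any measurement arrives; by the final step the prior contributes at most 0.1 to the reward.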

Visualizations

Figure: Sim-to-Real RL Transfer Workflow for Catalysis.

Figure: Integration of Expert Priors via Reward Shaping.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for RL-Driven Catalyst Optimization

| Item / Solution | Function in Protocol |

|---|---|

| High-Throughput Electrochemical Workstation | Automated, parallelized testing of catalyst activity (current density), stability (chronoamperometry), and selectivity (product detection). |

| Inkjet-based Catalyst Deposition System | Precise, automated synthesis of catalyst libraries on electrode arrays from precursor inks, enabling rapid sample preparation. |

| On-line Mass Spectrometry (MS) / Gas Chromatography (GC) | Real-time or rapid cyclic measurement of reaction products for selectivity calculation, a critical reward signal component. |

| DFT Simulation Software (e.g., VASP, Quantum ESPRESSO) | Generates high-fidelity data for pre-training (adsorption energies, activation barriers) and calculating prior rewards. |

| Open Catalyst Library Datasets | Provides pre-computed descriptor data for related materials, enabling multi-task pre-training and warm-starting priors. |

| Modular RL Framework (e.g., Ray RLlib, custom PyTorch) | Flexible platform for implementing custom environments, reward functions, and network architectures for transfer learning. |

Within the broader thesis on using reinforcement learning for multi-objective catalyst optimization, a primary constraint is the prohibitive computational cost of high-fidelity simulations, such as Density Functional Theory (DFT) for catalyst property prediction. This Application Note details protocols for integrating surrogate models and multi-fidelity optimization to manage these costs while maintaining robust design cycles for catalytic materials and drug-like molecule discovery.

Table 1: Computational Cost & Accuracy of Model Fidelities in Catalyst Screening

| Fidelity Level | Example Method | Avg. Time per Evaluation | Typical Error vs. Experiment | Primary Use Case |

|---|---|---|---|---|

| Low | Quantitative Structure-Property Relationship (QSPR), Force Fields | < 1 sec | High (20-50%) | Initial large-scale screening, RL policy pre-training |

| Medium | Semi-empirical Methods (e.g., PM7, DFTB), Coarse-grained MD | 1 min - 1 hr | Moderate (10-25%) | Intermediate refinement, multi-fidelity model building |

| High | Ab Initio (DFT), Molecular Dynamics (all-atom) | 10 hrs - days | Low (1-10%) | Final validation, high-quality data generation for surrogates |

Table 2: Surrogate Model Performance Comparison for Catalytic Property Prediction

| Surrogate Model Type | Training Data Size Required | Prediction Speed | Key Advantage | Typical R² Score (on test set) |

|---|---|---|---|---|

| Gaussian Process (GP) | Small-Medium (100-1k samples) | Fast | Uncertainty quantification | 0.70 - 0.90 |

| Graph Neural Network (GNN) | Large (>10k samples) | Very Fast | Natural encoding of molecular structure | 0.80 - 0.95 |

| Random Forest (RF) | Medium-Large | Very Fast | Handles diverse feature types, robust | 0.75 - 0.90 |

| Multi-fidelity Deep Neural Net | Mixed-fidelity datasets | Fast | Leverages low-fidelity data efficiently | 0.85 - 0.98 |

Experimental Protocols

Protocol 3.1: Building a Multi-Fidelity Dataset for Catalyst Optimization

Objective: To create a structured dataset combining computational results of varying fidelities for a target catalytic property (e.g., adsorption energy, activation barrier).

Materials: See Scientist's Toolkit (Section 5).

Procedure:

- Define Design Space: Specify the chemical space (e.g., set of dopant metals, support oxides, ligand environments).

- Stratified Sampling: Perform Latin Hypercube Sampling (LHS) across the design space to select ~10,000 candidate structures.

- High-Fidelity Data Generation (Seed Data):

- Randomly select 100-200 candidates from the LHS set.

- Perform high-fidelity DFT calculations (see Protocol 3.2) for target properties.

- Quality Control: Check that each calculation meets the energy convergence criteria; re-run non-converged calculations with adjusted parameters.

- Medium/Low-Fidelity Data Generation:

- For a larger subset (e.g., 2,000 candidates), run semi-empirical PM7 or DFTB calculations.

- For the entire LHS set, calculate low-fidelity descriptors (e.g., CoMFA fields, Dragon descriptors, simple group contributions).

- Data Alignment & Storage: Store all results in a structured database (e.g., SQL, Parquet), linking each candidate structure to its properties at each fidelity level via a unique identifier.
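The sampling and data-alignment steps of this protocol can be sketched with the standard library alone. This is a minimal illustration, assuming a two-dimensional continuous design space; the dimension names, bounds, sample counts, and identifier format are hypothetical, and a production workflow would more likely use an established sampler and a real database.

```python
import random

def latin_hypercube(n_samples, bounds, rng):
    """One sample per stratum in every dimension, with strata shuffled independently."""
    columns = []
    for low, high in bounds:
        strata = list(range(n_samples))
        rng.shuffle(strata)
        width = (high - low) / n_samples
        # Place each point at a random position inside its assigned stratum
        columns.append([low + (s + rng.random()) * width for s in strata])
    return list(zip(*columns))

rng = random.Random(42)
# Hypothetical design space: dopant fraction and calcination temperature (deg C)
bounds = [(0.0, 0.5), (300.0, 700.0)]
samples = latin_hypercube(10, bounds, rng)

# One record per candidate; results at every fidelity attach to the same identifier
dataset = {f"cand-{i:05d}": {"x": x, "low": None, "medium": None, "high": None}
           for i, x in enumerate(samples)}

# Random subset to send to high-fidelity DFT as seed data
seed_ids = rng.sample(sorted(dataset), 3)
print(seed_ids)
```

Keying every fidelity level to one identifier is what lets a multi-fidelity model later learn the correction between cheap and expensive results for the same structure.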

Protocol 3.2: High-Fidelity DFT Calculation for Catalytic Adsorption Energy