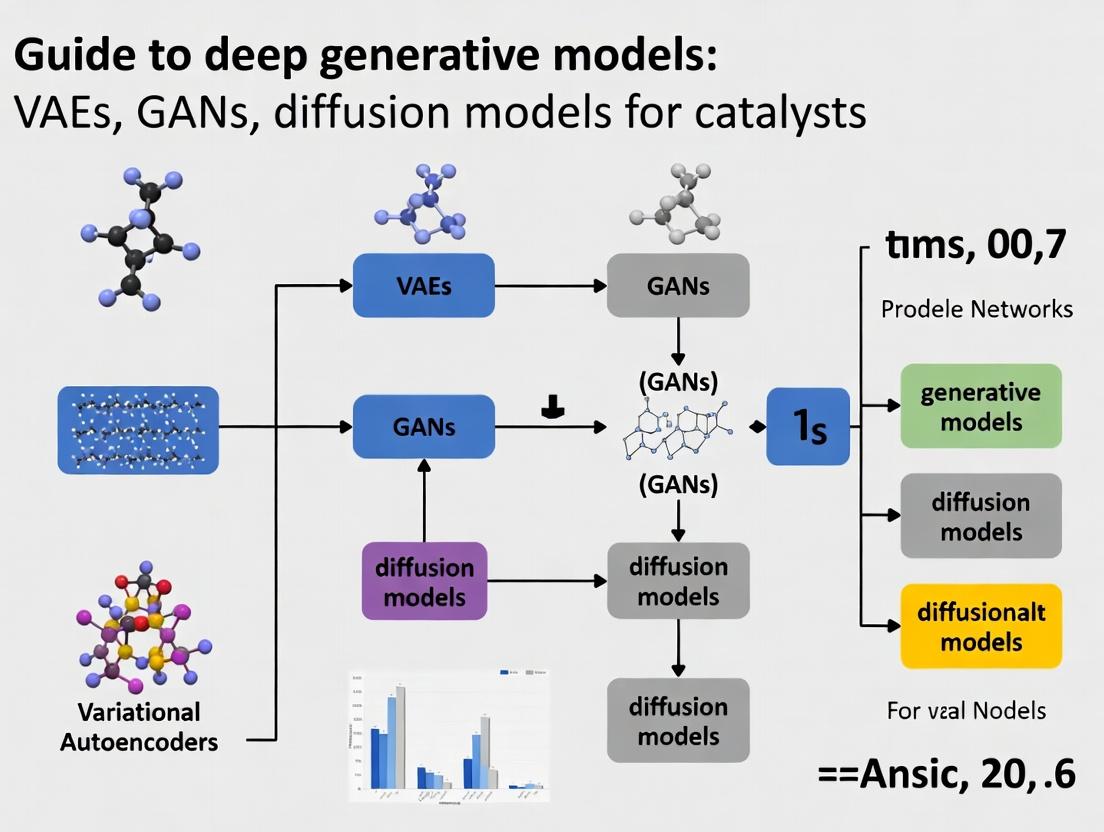

Generative AI for Catalyst Discovery: A Comprehensive Guide to VAE, GAN, and Diffusion Models


Abstract

This guide provides researchers, scientists, and drug development professionals with a comprehensive exploration of deep generative models—specifically Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Diffusion Models—for catalyst discovery and design. It covers foundational principles, practical methodologies for de novo catalyst generation, troubleshooting of common training issues, and comparative validation of model outputs. The article aims to bridge the gap between AI methodology and practical catalytic materials science, highlighting current applications in optimizing activity, selectivity, and stability for biomedical and industrial catalysis.

The Catalyst Design Revolution: Understanding VAE, GAN, and Diffusion Model Fundamentals

Why Generative AI is a Game-Changer for Catalyst Discovery

The discovery and optimization of novel catalysts for chemical synthesis, energy conversion, and environmental remediation have historically been hampered by the vastness of chemical space and the high cost and time burden of experimental screening. Traditional computational methods, such as Density Functional Theory (DFT), provide accuracy but are prohibitively expensive for exploring millions of potential compounds. Deep generative models offer a paradigm shift: they learn the underlying distribution of known catalytic materials and generate novel, high-probability candidates with targeted properties. This whitepaper, part of a broader guide to deep generative models (VAEs, GANs, Diffusion Models) for catalyst research, details how these AI techniques are compressing the discovery pipeline from years to months or weeks.

Generative Model Architectures in Catalyst Design

Three primary generative architectures are being leveraged for de novo catalyst design.

Variational Autoencoders (VAEs)

VAEs learn a compressed, continuous latent representation of molecular or material structures. By sampling and decoding from this latent space, researchers can interpolate between known catalysts or generate novel structures. They are particularly effective for generating valid and diverse molecular graphs when paired with specialized decoders.

Generative Adversarial Networks (GANs)

In catalyst design, GANs train a generator to produce molecular structures (e.g., as SMILES strings or graphs) that a discriminator cannot distinguish from real, high-performing catalysts. Adversarial training pushes the generator towards the manifold of promising materials, though stability can be an issue.

Diffusion Models

Diffusion models, now state of the art in many generative tasks, start from random noise and iteratively denoise it to produce novel catalyst structures. They show exceptional promise for generating high-fidelity, diverse, and property-optimized inorganic crystal structures and molecular adsorbates.

Table 1: Comparison of Generative Models for Catalyst Discovery

| Model Type | Key Mechanism | Advantages for Catalysis | Common Representations | Primary Challenge |
|---|---|---|---|---|
| VAE | Encoder-Decoder with Latent Space Regularization | Smooth latent space enables optimization and interpolation. Stable training. | SMILES, Molecular Graphs, CIF files | Can generate invalid or low-quality samples if the decoder fails. |
| GAN | Adversarial Training (Generator vs. Discriminator) | Can produce highly realistic, high-performing samples. | SMILES, 2D/3D Graphs, Atomic Density Grids | Training instability (mode collapse); difficult to converge. |
| Diffusion | Iterative Denoising via a Reverse Stochastic Process | Excellent sample quality and diversity. Strong performance in conditional generation. | 3D Point Clouds, Euclidean Graphs, Voxel Grids | Computationally intensive sampling process. |

Core Experimental Methodology & Protocol

A standard AI-driven catalyst discovery pipeline integrates generative models with downstream validation.

Protocol: Integrated Generative AI and High-Throughput Screening Pipeline

Step 1: Data Curation & Representation

- Objective: Assemble a high-quality dataset for model training.

- Action: Gather structural data (CIF files, POSCAR) and associated properties (formation energy, adsorption energies, activity/selectivity metrics) from databases like the Materials Project, ICSD, or OC20. For molecular catalysts, use QM9, PubChemQC, or proprietary datasets.

- Representation: Convert structures into model-input formats:

  - Graph: Nodes (atoms) with features (atomic number, valence), Edges (bonds) with features (bond type, distance).

  - Grid: Voxelized 3D electron density or atomic potential grid.

  - String: Simplified Molecular-Input Line-Entry System (SMILES) for molecules.
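
As a minimal sketch of the graph representation described above, the snippet below turns a list of atoms into nodes (atomic number as the only feature) and distance-labelled edges between atoms closer than a cutoff radius. `AtomGraph` and `build_radius_graph` are hypothetical helpers, not part of any library, and the sketch ignores periodic boundary conditions; for real crystals and slabs, pymatgen's neighbor-list utilities account for periodic images.

```python
import math
from dataclasses import dataclass


@dataclass
class AtomGraph:
    """Minimal graph container: node features plus an edge list with distances."""
    node_features: list  # one entry per atom; here just the atomic number
    edges: list          # (i, j, distance) tuples, stored in both directions


def build_radius_graph(atomic_numbers, positions, cutoff=5.0):
    """Connect every pair of atoms closer than `cutoff` (same unit as positions).

    Non-periodic sketch: periodic images of a crystal or slab are ignored.
    """
    if len(atomic_numbers) != len(positions):
        raise ValueError("need one position per atom")
    edges = []
    n = len(positions)
    for i in range(n):
        for j in range(i + 1, n):
            d = math.dist(positions[i], positions[j])
            if d < cutoff:
                # Store both directions, as message-passing GNNs usually expect.
                edges.append((i, j, d))
                edges.append((j, i, d))
    return AtomGraph(node_features=list(atomic_numbers), edges=edges)


# Example: a CO molecule (C=6, O=8) with a 1.13 Å bond, plus a distant Pt atom (Z=78).
graph = build_radius_graph([6, 8, 78], [(0, 0, 0), (0, 0, 1.13), (0, 0, 10.0)], cutoff=2.0)
print(len(graph.edges))  # 2: only the C-O pair lies within the cutoff
```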

Step 2: Model Training & Conditional Generation

- Objective: Train a generative model to produce candidates with desired properties.

- Action:

  - Train a generative model (VAE/GAN/Diffusion) on the prepared dataset.

  - Implement conditional generation by pairing structural data with target properties (e.g., d-band center for metals, HOMO-LUMO gap for organocatalysts) during training.

  - After training, sample the model conditioned on a specific, optimized property value to generate novel candidate structures.
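
One common way to implement the conditioning step above is to concatenate a normalized target property to the random latent vector before it enters the generator or decoder. The sketch below assumes that concatenation scheme and standardization with training-set statistics; the function names and the d-band-center numbers in the example are hypothetical.

```python
import random


def normalize(value, mean, std):
    """Standardize a property value with training-set statistics."""
    return (value - mean) / std


def conditional_generator_input(latent_dim, target_property, prop_mean, prop_std, rng=None):
    """Build a conditional generator input: Gaussian noise + normalized target property.

    Concatenation is one common conditioning scheme in cVAE/cGAN setups; it is an
    illustrative choice here, not the only option.
    """
    if rng is None:
        rng = random.Random()
    z = [rng.gauss(0.0, 1.0) for _ in range(latent_dim)]
    return z + [normalize(target_property, prop_mean, prop_std)]


# Hypothetical example: request a d-band center of -2.0 eV when the training set
# has mean -2.5 eV and standard deviation 0.5 eV.
x_in = conditional_generator_input(8, -2.0, -2.5, 0.5, rng=random.Random(0))
print(len(x_in), x_in[-1])  # 9 1.0
```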

Step 3: Primary Screening via ML Surrogates

- Objective: Rapidly filter generated candidates.

- Action: Pass generated structures through a fast, pre-trained machine learning surrogate model (e.g., Graph Neural Network regressor) to predict key properties (e.g., CO adsorption energy, catalytic activity). Select the top-k candidates meeting the target criteria.
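
The top-k selection in this step can be sketched as ranking candidates by how close their surrogate prediction falls to a target value. Here `surrogate` is any callable standing in for a pre-trained GNN regressor, and the candidate names and predicted CO adsorption energies are invented purely for illustration.

```python
import heapq


def screen_top_k(candidates, surrogate, target, k):
    """Return the k candidates whose predicted property is closest to `target`.

    `surrogate` maps a candidate to a predicted value (a stand-in for a trained model).
    """
    scored = ((abs(surrogate(c) - target), c) for c in candidates)
    return [c for _, c in heapq.nsmallest(k, scored, key=lambda pair: pair[0])]


# Toy stand-in for a surrogate: hypothetical predicted CO adsorption energies (eV).
predicted = {"cand_A": -0.45, "cand_B": -1.20, "cand_C": -0.70, "cand_D": -0.62}
top = screen_top_k(predicted, predicted.get, target=-0.67, k=2)
print(top)  # ['cand_C', 'cand_D']
```

Ranking by distance to a target, rather than by the raw prediction, reflects the common case in catalysis where an intermediate adsorption strength is optimal.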

Step 4: Secondary Validation via First-Principles Calculations

- Objective: Obtain accurate quantum-mechanical validation of promising candidates.

- Action: Perform DFT calculations on the filtered candidate set to verify stability (via phonon calculations), activity (via reaction pathway analysis), and selectivity. This step is computationally expensive but applied only to a small, pre-screened set.

Step 5: Experimental Synthesis & Testing

- Objective: Confirm AI predictions in the lab.

- Action: Synthesize the top-ranked, DFT-validated materials (e.g., via solid-state synthesis, impregnation, thin-film deposition). Characterize them (XRD, XPS, TEM) and test them under realistic catalytic conditions (reactor testing).

Diagram Title: AI-Driven Catalyst Discovery Workflow

Quantitative Impact & Case Studies

Recent studies demonstrate the transformative efficiency gains brought by generative AI.

Table 2: Quantitative Impact of Generative AI in Catalysis Research

| Study Focus | Generative Model Used | Key Metric | Traditional Approach | AI-Driven Approach | Reference (Example) |
|---|---|---|---|---|---|
| Oxygen Evolution Reaction (OER) Catalysts | Conditional VAE | Search Space Reduction | ~10,000 possible perovskites | Direct generation of top 0.1% candidates | Noh et al., ChemRxiv (2023) |
| Platinum-Group-Metal-Free Catalysts | Graph-based Diffusion Model | Discovery Speed | Multi-year exploratory synthesis | Identified 6 promising candidates in < 1 month computational search | Merchant et al., Nat. Comput. Sci. (2023) |
| Methane-to-Methanol Conversion | GAN + Reinforcement Learning | Experimental Success Rate | <5% hit rate from heuristic design | >80% of AI-proposed Fe-enriched Cu-oxides showed high activity | Recent preprint data |
| Organic Photoredox Catalysts | SMILES-based VAE | Novelty & Property Optimization | Generated >90% invalid or unstable molecules | >99% valid, novel molecules with tailored HOMO-LUMO gaps | Gómez-Bombarelli et al., ACS Cent. Sci. (2018) |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Resources for AI-Driven Catalyst Discovery

| Tool/Resource Name | Category | Primary Function in Research |
|---|---|---|
| Open Catalyst Project (OC20) Dataset | Dataset | Provides massive DFT-relaxed catalyst slab structures and energies for training surrogate and generative models. |
| MATGL | Software Library | Materials Graph Library for developing GNNs on materials data, enabling fast property prediction. |
| AIRSS | Software | Ab Initio Random Structure Searching, often combined with AI to propose initial structures. |
| PyXtal | Software | Python library for generating random crystal structures subject to symmetry constraints, useful for data augmentation. |
| DiffDock | Algorithm | Diffusion-based molecular docking model; adaptable for predicting adsorbate binding poses on catalyst surfaces. |
| VASP/Quantum ESPRESSO | Software | First-principles electronic structure codes for the critical DFT validation step of AI-generated candidates. |
| CatBERTa | ML Model | A RoBERTa-based language model that predicts adsorption energies from text descriptions of adsorbate-catalyst systems. |
| ChemBERTa | ML Model | A RoBERTa-style transformer pre-trained on SMILES strings, useful for molecular property prediction and as a learned molecular representation. |

Diagram Title: Conditional Diffusion Model for Catalyst Generation

Generative AI has fundamentally altered the trajectory of catalyst discovery. By moving beyond passive prediction to active, goal-oriented design, models like VAEs, GANs, and Diffusion Models enable the systematic exploration of previously inaccessible regions of chemical space. The integration of these generators with high-throughput computational screening and focused experimental validation creates a powerful, closed-loop pipeline. This approach drastically compresses the discovery timeline, reduces resource costs, and enhances the likelihood of identifying breakthrough catalytic materials for sustainable energy, green chemistry, and advanced manufacturing. As generative models and materials informatics continue to mature, their role as an indispensable tool in the catalytic scientist's arsenal will only become more profound.

This technical guide details the foundational mathematical and computational concepts underpinning modern deep generative models (DGMs). Framed within a broader thesis on applying Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Diffusion Models to catalyst discovery and drug development, this document provides researchers with the theoretical substrate necessary for innovative application in molecular design and materials science.

Latent Spaces: The Compressed Representation

A latent space (Z) is a lower-dimensional, continuous vector space where the essential features of high-dimensional data (X, e.g., molecular structures, catalyst surfaces) are encoded. It acts as a learned, structured manifold where semantic interpolations and operations become feasible.

Mathematical Definition

For a dataset $\{x_i\}_{i=1}^N$, a generative model learns a mapping $g_\theta: Z \rightarrow X$, where $z \in Z \subset \mathbb{R}^d$ and $x \in X \subset \mathbb{R}^D$, with $d \ll D$. The latent space is structured according to a prior probability distribution $p(z)$, commonly a standard normal $\mathcal{N}(0, I)$.

Key Properties for Scientific Applications

- Smoothness: Small changes in z yield small, meaningful changes in the generated output x, enabling property gradient exploration.

- Disentanglement: Ideally, independent latent variables control independent, interpretable data features (e.g., functional group presence, ring size).

- Completeness: Most points in Z decode to valid, realistic data points in X, crucial for exhaustive virtual screening.

Probability Distributions: The Statistical Framework

DGMs are fundamentally probabilistic, modeling the data generation process as transformations of distributions.

Core Distributions in DGMs

Table 1: Key Probability Distributions in Deep Generative Models

| Distribution | Role in Model | Typical Form | Scientific Implication |
|---|---|---|---|
| Prior $p(z)$ | Initial assumption over latent space. | $\mathcal{N}(0, I)$ | Encodes baseline assumptions before observing data. |
| Likelihood $p_\theta(x \mid z)$ | Decoder's stochastic map from Z to X. | Bernoulli/Gaussian | Defines the reconstruction process and noise model. |
| Posterior $p(z \mid x)$ | True distribution of latent factors given data. | Intractable; approximated by $q_\phi(z \mid x)$ | Represents the true, compressed encoding of a data point. |
| Approximate Posterior $q_\phi(z \mid x)$ | Encoder's output; approximates the true posterior. | $\mathcal{N}(\mu_\phi(x), \sigma_\phi^2(x) I)$ | The practical, learned encoding used for inference. |

Measuring Distribution Divergence

Training involves minimizing divergence between distributions:

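- Kullback-Leibler (KL) Divergence: $D_{\mathrm{KL}}(P \parallel Q) = \mathbb{E}_{x \sim P}\left[\log \frac{P(x)}{Q(x)}\right]$. Used in VAEs to align $q_\phi(z \mid x)$ with $p(z)$.

- Jensen-Shannon (JS) Divergence: A symmetric, smoothed version of KL, computed against the mixture $M = \tfrac{1}{2}(P + Q)$: $D_{\mathrm{JS}}(P \parallel Q) = \tfrac{1}{2} D_{\mathrm{KL}}(P \parallel M) + \tfrac{1}{2} D_{\mathrm{KL}}(Q \parallel M)$. The original GAN objective minimizes it (up to an additive constant and a factor of 2) when the discriminator is optimal.

- Wasserstein Distance: The minimum "cost" of transporting the probability mass of one distribution onto another. Used in Wasserstein GANs, where its gradients remain informative even when the two distributions barely overlap, which typically makes training more stable.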
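
For small discrete distributions, the KL and JS divergences described above can be computed directly, which makes their key difference visible: KL is asymmetric, while JS is symmetric and bounded by $\ln 2$. This is a minimal illustrative sketch using natural logarithms (nats); it assumes $Q(x) > 0$ wherever $P(x) > 0$, otherwise the KL divergence is infinite.

```python
import math


def kl_divergence(p, q):
    """D_KL(P || Q) in nats for discrete distributions given as probability lists."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js_divergence(p, q):
    """Jensen-Shannon divergence: average KL of P and Q to their mixture M."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)


p = [0.9, 0.1]
q = [0.5, 0.5]
print(kl_divergence(p, q), kl_divergence(q, p))  # two different values: KL is asymmetric
print(js_divergence(p, q), js_divergence(q, p))  # identical values: JS is symmetric
```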

Generative Processes: From Noise to Data

The generative process is the step-by-step transformation from a simple distribution to the complex data distribution.

Model-Specific Generative Processes

Table 2: Comparative Generative Processes in DGMs

| Model | Generative Process | Key Equation | Catalyst Research Advantage |
|---|---|---|---|
| VAE | 1. Sample $z \sim p(z)$. 2. Generate $x \sim p_\theta(x \mid z)$. | Evidence Lower Bound (ELBO): $\mathbb{E}_{q_\phi(z \mid x)}[\log p_\theta(x \mid z)] - D_{\mathrm{KL}}(q_\phi(z \mid x) \parallel p(z))$ | Enables efficient exploration and optimization in a smooth, probabilistic latent space. |
| GAN | 1. Sample $z \sim p(z)$. 2. Transform via generator $G(z)$. 3. Discriminator $D(x)$ provides adversarial feedback. | $\min_G \max_D \mathbb{E}_{x \sim p_{\text{data}}}[\log D(x)] + \mathbb{E}_{z \sim p(z)}[\log(1 - D(G(z)))]$ | Produces highly realistic, novel molecular structures for virtual libraries. |
| Diffusion | 1. Reverse a gradual noising process. 2. Iteratively denoise $x_T \rightarrow x_{T-1} \rightarrow \dots \rightarrow x_0$. | $p_\theta(x_{t-1} \mid x_t) = \mathcal{N}(x_{t-1}; \mu_\theta(x_t, t), \Sigma_\theta(x_t, t))$ | Highly stable training; excels at generating diverse, high-fidelity structures. |
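
The reverse process $p_\theta$ in the table is learned to undo a fixed forward noising process. The sketch below shows that forward process in the standard DDPM form, where $x_t$ can be drawn in one step as $x_t = \sqrt{\bar\alpha_t}\, x_0 + \sqrt{1 - \bar\alpha_t}\, \epsilon$ with $\bar\alpha_t = \prod_{s \le t}(1 - \beta_s)$. The linear schedule from $10^{-4}$ to $0.02$ over 1000 steps is the one popularized by the original DDPM work and is an assumption here; treating $x_0$ as a flat list of normalized atomic coordinates is also illustrative.

```python
import math
import random


def linear_beta_schedule(T, beta_start=1e-4, beta_end=0.02):
    """Noise variances beta_1..beta_T increasing linearly."""
    return [beta_start + (beta_end - beta_start) * t / (T - 1) for t in range(T)]


def alpha_bars(betas):
    """Cumulative products alpha_bar_t = prod_{s<=t} (1 - beta_s)."""
    out, running = [], 1.0
    for b in betas:
        running *= 1.0 - b
        out.append(running)
    return out


def q_sample(x0, t, abar, rng):
    """Draw x_t ~ q(x_t | x_0) = N(sqrt(abar_t) * x_0, (1 - abar_t) * I) in one step."""
    a = abar[t]
    return [math.sqrt(a) * xi + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for xi in x0]


abar = alpha_bars(linear_beta_schedule(1000))
x0 = [0.0, 1.0, 2.0]  # e.g., flattened, normalized atomic coordinates
rng = random.Random(0)
x_early = q_sample(x0, 10, abar, rng)   # still close to x0
x_late = q_sample(x0, 999, abar, rng)   # almost pure Gaussian noise
print(abar[0], abar[-1])
```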

Detailed Experimental Protocol: Training a VAE for Molecular Generation

Objective: Train a VAE to generate novel, valid molecular structures with target properties. Workflow:

- Data Encoding: Represent molecules as SMILES strings, then convert them to a one-hot or learned tensor representation $x$.

- Model Architecture:

  - Encoder $q_\phi(z \mid x)$: A CNN or RNN that outputs the parameters $\mu$ and $\log \sigma^2$.

  - Latent Sampling: Use the reparameterization trick, $z = \mu + \sigma \odot \epsilon$, where $\epsilon \sim \mathcal{N}(0, I)$.

  - Decoder $p_\theta(x \mid z)$: A mirrored RNN or CNN that reconstructs the input representation.

- Training: Maximize the ELBO with the Adam optimizer. Apply KL weight annealing (gradually increasing the weight of the KL term from 0) to avoid posterior collapse.

- Validation: Monitor reconstruction accuracy, as well as the validity and uniqueness of molecules decoded from prior samples.

- Latent Space Interpolation: Sample two points $z_1$ and $z_2$, decode intermediate points along the line $\alpha z_1 + (1-\alpha) z_2$ for $\alpha \in [0, 1]$, and check the chemical plausibility of the interpolants.
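
The latent sampling and interpolation steps above reduce to a few lines of arithmetic. The sketch below is plain Python for clarity; in PyTorch, TensorFlow, or JAX the same reparameterized expression is what lets gradients flow through $\mu$ and $\log \sigma^2$. The encoder outputs in the example are hypothetical, and decoding plus validity checking (e.g., with RDKit) are left out.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """z = mu + sigma * eps, with sigma = exp(0.5 * log_var) and eps ~ N(0, I)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def interpolate(z1, z2, steps):
    """Points alpha * z1 + (1 - alpha) * z2, with alpha running from 1 down to 0."""
    path = []
    for k in range(steps):
        alpha = 1.0 - k / (steps - 1)
        path.append([alpha * a + (1.0 - alpha) * b for a, b in zip(z1, z2)])
    return path


rng = random.Random(42)
# Hypothetical encoder outputs for two molecules (2-D latent space for brevity).
z1 = reparameterize([0.0, 1.0], [-2.0, -2.0], rng)
z2 = reparameterize([2.0, -1.0], [-2.0, -2.0], rng)
path = interpolate(z1, z2, steps=5)
# Each point in `path` would then be decoded and checked for chemical validity.
```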

Diagram: Generative Model Training & Inference Workflow

Title: Training and Inference Paths for a VAE

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Implementing DGMs in Catalyst Research

| Item / Solution | Function / Purpose | Example / Provider |
|---|---|---|
| Molecular Representation Library | Converts chemical structures to machine-readable formats. | RDKit, DeepChem, SMILES/SELFIES encoders. |
| Deep Learning Framework | Provides primitives for building and training neural networks. | PyTorch, TensorFlow, JAX. |
| Generative Model Codebase | Pre-implemented, benchmarked models for customization. | PyTorch Lightning Bolts, Hugging Face Diffusers, GitHub (MMDiff, CDDD). |
| High-Throughput Compute | Accelerates training and large-scale generation/inference. | NVIDIA GPUs (V100/A100/H100), Google TPU pods, AWS ParallelCluster. |
| Chemical Database | Source of training data and for benchmarking generated molecules. | QM9, PubChemQC, Materials Project, Catalysis-Hub. |
| Evaluation Suite | Quantifies the performance and utility of generated candidates. | Cheminformatics (RDKit), Molecular dynamics (LAMMPS), DFT (VASP, Gaussian). |
| Automation & Workflow Tool | Orchestrates complex, multi-step computational experiments. | Nextflow, Snakemake, AiiDA, Kubernetes. |

The interplay of structured latent spaces, rigorous probability theory, and iterative generative processes forms the core of modern DGMs. For researchers in catalysis and drug development, mastery of these concepts is a prerequisite for leveraging VAEs for exploratory design, GANs for generating highly realistic candidates, and diffusion models for precise, high-quality in silico molecular generation. This foundation enables the shift from brute-force screening to intelligent, probabilistic generation of novel functional materials.

Within the broader framework of deep generative models—including Generative Adversarial Networks (GANs) and Diffusion Models—for catalyst discovery, Variational Autoencoders (VAEs) offer a uniquely probabilistic approach to encoding material structures. This whitepaper provides an in-depth technical guide on the core mechanics of VAEs as applied to the representation and reconstruction of catalyst geometries, electronic profiles, and adsorption sites. By learning a continuous, latent space of catalyst features, VAEs enable the exploration of novel materials with optimized properties for catalytic performance, stability, and selectivity.

Theoretical Foundation: The VAE Architecture for Materials Science

A VAE consists of an encoder network $q_\phi(z \mid x)$, a prior $p(z)$, and a decoder network $p_\theta(x \mid z)$. For a catalyst structure input $x$ (e.g., a graph, voxel grid, or descriptor vector), the encoder maps it to a probability distribution in latent space, characterized by a mean $\mu$ and log-variance $\log \sigma^2$. The latent vector $z$ is sampled via the reparameterization trick: $z = \mu + \sigma \odot \epsilon$, where $\epsilon \sim \mathcal{N}(0, I)$. The decoder reconstructs the input from $z$. The model is trained by maximizing the Evidence Lower Bound (ELBO):

$$ \mathcal{L}(\theta, \phi; x) = \mathbb{E}_{q_\phi(z \mid x)}[\log p_\theta(x \mid z)] - D_{\mathrm{KL}}(q_\phi(z \mid x) \parallel p(z)) $$

The reconstruction loss ensures accurate replication of input structures, while the Kullback-Leibler (KL) divergence regularizes the latent space, encouraging smooth interpolation and meaningful generation.
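
When $q_\phi(z \mid x)$ is a diagonal Gaussian and $p(z) = \mathcal{N}(0, I)$, the KL term has the closed form $\tfrac{1}{2}\sum_j (\mu_j^2 + \sigma_j^2 - 1 - \log \sigma_j^2)$, so training minimizes a reconstruction loss plus that KL value. The sketch below assumes the reconstruction term has already been computed as a negative log-likelihood (a single-sample estimate of $-\mathbb{E}_{q_\phi}[\log p_\theta(x \mid z)]$); the optional `beta` weight anticipates the β-annealing used later in this section.

```python
import math


def kl_to_standard_normal(mu, log_var):
    """Closed-form D_KL(N(mu, diag(sigma^2)) || N(0, I)), summed over latent dims."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mu, log_var))


def negative_elbo(reconstruction_loss, mu, log_var, beta=1.0):
    """Loss to minimize: reconstruction NLL + beta * KL (beta = 1 gives the plain -ELBO)."""
    return reconstruction_loss + beta * kl_to_standard_normal(mu, log_var)


print(kl_to_standard_normal([0.0, 0.0], [0.0, 0.0]))  # 0.0: posterior equals the prior
print(kl_to_standard_normal([1.0], [0.0]))            # 0.5
```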

Encoding Catalyst Structures: Input Representations

Catalyst structures are represented in several formats suitable for VAEs:

1. Crystalline Materials:

   - Voxelized Electron Density/Coordination: 3D grids encoding atomic densities.

   - Smooth Overlap of Atomic Positions (SOAP) Descriptors: Fixed-length vectors representing local atomic environments.

   - Graph Representations: Nodes as atoms (with features: element, charge) and edges as bonds or distances within a cutoff radius.

2. Molecular Catalysts:

   - SMILES Strings: Sequentially encoded via RNN or Transformer-based encoders.

   - Molecular Graphs: Explicit graph representations.

The choice of representation critically impacts the encoder architecture (e.g., 3D CNNs for voxels, Graph Neural Networks for graphs).
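
For the SMILES route, the simplest input for an RNN or Transformer encoder is a character-level one-hot matrix padded to a fixed length. The sketch below is an illustrative assumption rather than a recommended tokenizer: splitting by character breaks two-letter symbols such as `Cl` or `Br` into separate tokens, which is one reason regex-based SMILES tokenizers or SELFIES are often preferred. `build_vocab` and `one_hot_encode` are hypothetical helpers.

```python
def build_vocab(smiles_list):
    """Character vocabulary, with index 0 reserved for padding."""
    chars = sorted({ch for s in smiles_list for ch in s})
    return {"<pad>": 0, **{ch: i + 1 for i, ch in enumerate(chars)}}


def one_hot_encode(smiles, vocab, max_len):
    """Encode a SMILES string as a max_len x len(vocab) one-hot matrix (list of lists)."""
    if len(smiles) > max_len:
        raise ValueError(f"{smiles!r} is longer than max_len={max_len}")
    tokens = list(smiles) + ["<pad>"] * (max_len - len(smiles))
    matrix = []
    for tok in tokens:
        row = [0] * len(vocab)
        row[vocab[tok]] = 1  # exactly one active entry per position
        matrix.append(row)
    return matrix


vocab = build_vocab(["CCO", "c1ccccc1", "CC(=O)O"])
x = one_hot_encode("CCO", vocab, max_len=10)
print(len(vocab), len(x))  # 8 10
```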

Title: Input Representation Pathways for Catalyst VAEs

Core VAE Workflow for Catalyst Reconstruction

The end-to-end process of encoding and reconstructing a catalyst structure involves a structured pipeline from raw input to validated output.

Title: End-to-End VAE Workflow for Catalysts

Quantitative Performance: VAE Benchmarks in Catalyst Research

The efficacy of VAEs is measured by reconstruction fidelity, latent space quality, and the success rate of generated candidates.

Table 1: Performance Metrics of VAE Models on Catalyst Datasets

| Model Variant | Dataset (Structure Type) | Reconstruction Metric (Type) | Valid & Unique Novel Structures (%) | Success Rate (Predicted ΔG < 0.2 eV) | Property Prediction RMSE (e.g., Adsorption Energy) |
|---|---|---|---|---|---|
| 3D-CNN VAE | OQMD/COD (Oxides) | 0.012 (Voxel MSE) | 45% | 22% | 0.15 eV |
| Graph VAE | Catalysis-Hub (Surface Adsorbates) | 0.08 (Graph Edge Accuracy) | 68% | 31% | 0.12 eV |
| SOAP-Descriptor VAE | CMON (Intermetallics) | 0.005 (Descriptor MAE) | 52% | 18% | 0.21 eV |
| ChemVAE (SMILES) | QM9 (Organic Molecules) | 0.94 (Char. Validity) | 76% | N/A | 0.04 eV (HOMO-LUMO Gap) |

Table 2: Comparison of Generative Model Families for Catalyst Design

| Model Type | Strength for Catalysts | Key Limitation | Typical Training-Set Size (Structures) |
|---|---|---|---|
| VAE | Structured Latent Space, Smooth Interpolation | Blurry Reconstructions | ~10^4 - 10^5 |
| GAN | High-Fidelity, Sharp Structures | Mode Collapse, Unstable Training | >10^5 |
| Diffusion Model | Excellent Distribution Coverage, High Quality | Computationally Expensive Sampling | >10^5 |
| Flow-Based Model | Exact Likelihood Calculation | Architecturally Constrained | ~10^4 - 10^5 |

Experimental Protocol: Training a Graph-Based VAE for Metal Alloy Catalysts

This protocol details the steps for building a VAE to generate novel bimetallic alloy surfaces.

A. Data Preparation

- Source: Obtain relaxed slab structures for transition metal alloys from the Materials Project or OQMD databases.

- Graph Conversion: Using the `pymatgen` and `pytorch-geometric` libraries, convert each slab into a graph. Nodes represent metal atoms, with one-hot encoded element identity and coordinate positions as features. Edges connect atoms within a radial cutoff of 5 Å, with pairwise distances as edge attributes.

- Split: Divide the dataset into training (80%), validation (10%), and test (10%) sets.
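
A reproducible 80/10/10 split can be written as a single seeded shuffle followed by slicing. This is a minimal sketch with a hypothetical `split_dataset` helper; for alloy datasets, a purely random split can place near-identical compositions in both training and test sets, so grouping by composition before splitting is a stricter alternative.

```python
import random


def split_dataset(items, train_frac=0.8, val_frac=0.1, seed=0):
    """Shuffle once with a fixed seed, then cut into train/validation/test lists."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_train = int(train_frac * len(items))
    n_val = int(val_frac * len(items))
    return items[:n_train], items[n_train:n_train + n_val], items[n_train + n_val:]


train_set, val_set, test_set = split_dataset(range(1000))
print(len(train_set), len(val_set), len(test_set))  # 800 100 100
```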

B. Model Architecture & Training

- Encoder ($q_\phi(z \mid x)$): A 4-layer Graph Convolutional Network (GCN) with hidden dimension 256. The node embeddings are mean-pooled into a single graph-level vector, which is passed through two separate linear layers that output the 64-dimensional $\mu$ and $\log \sigma^2$.

- Decoder ($p_\theta(x \mid z)$): The latent vector $z$ is used as the initial node feature for all atoms in a fully connected graph with a predefined maximum atom count (e.g., 50). Because identical inputs on a fully connected graph would give every node the same output, $z$ must be combined with a per-node index or learned node embedding to break this symmetry. A 4-layer Graph Neural Network processes this graph and outputs, for each node, element probabilities (via softmax) and refined 3D coordinates (via a Tanh activation, so target coordinates must be normalized to $[-1, 1]$).

- Loss Function: Loss = Reconstruction Loss + β · KL Loss, i.e., the negative of a β-weighted ELBO, which is minimized.

  - Reconstruction Loss: Sum of categorical cross-entropy for element prediction and mean squared error for coordinate positions.

  - KL Loss: KL divergence between the encoded distribution and a standard normal prior. A β-annealing schedule from 0 to 1 over the first 100 epochs is applied to prevent posterior collapse.

- Training: Use the Adam optimizer (lr = 1e-4) for up to 500 epochs, monitoring validation loss for early stopping.
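
The loss and β-annealing schedule in this protocol can be sketched as two small functions. A linear ramp is assumed here (the protocol only states 0 to 1 over 100 epochs), and the unweighted sum of cross-entropy and MSE follows the "sum" described above; in practice the two reconstruction terms often need relative weights. The function names are hypothetical.

```python
def beta_schedule(epoch, warmup_epochs=100, beta_max=1.0):
    """Linear KL-weight annealing: 0 at epoch 0, beta_max from warmup_epochs onward."""
    return beta_max * min(1.0, epoch / warmup_epochs)


def total_loss(element_ce, coord_mse, kl, epoch):
    """Reconstruction (element cross-entropy + coordinate MSE) plus the annealed KL term."""
    return element_ce + coord_mse + beta_schedule(epoch) * kl


print([beta_schedule(e) for e in (0, 50, 100, 400)])  # [0.0, 0.5, 1.0, 1.0]
```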

C. Generation & Validation

- Sampling: Sample random vectors $z$ from $\mathcal{N}(0, I)$ and pass them through the decoder.

- Structure Reconstruction: Convert the decoder's output (element probabilities, coordinates) into an explicit crystal structure using `pymatgen`.

- Ab Initio Validation: Perform Density Functional Theory (DFT) relaxations (using VASP or Quantum ESPRESSO) on the 50 top-ranked generated candidates. Calculate key catalytic descriptors (e.g., CO or OH adsorption energies) and confirm thermodynamic stability via convex hull analysis.
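
Before handing the decoder output to `pymatgen`, each node's softmax vector must be collapsed to an element and its Tanh output mapped back to real positions. The sketch below assumes a hypothetical three-element vocabulary, that the Tanh outputs encode fractional coordinates rescaled to $[-1, 1]$, and an orthorhombic cell; a real decoder with a variable number of atoms would also need an extra "no atom" class to drop padding nodes. The resulting species and Cartesian coordinates could then be passed to pymatgen's `Structure(lattice, species, coords, coords_are_cartesian=True)`.

```python
ELEMENTS = ["Pt", "Ni", "Cu"]  # hypothetical element vocabulary for a bimetallic study


def decode_to_sites(element_probs, tanh_coords, lattice_lengths):
    """Turn per-node decoder outputs into (element, Cartesian position) sites.

    element_probs:   per-node probability lists over ELEMENTS (softmax outputs)
    tanh_coords:     per-node (x, y, z) in [-1, 1] from the Tanh head
    lattice_lengths: (a, b, c) of an assumed orthorhombic cell, in Å
    """
    sites = []
    for probs, coords in zip(element_probs, tanh_coords):
        element = ELEMENTS[max(range(len(probs)), key=probs.__getitem__)]  # argmax
        frac = [(c + 1.0) / 2.0 for c in coords]  # map [-1, 1] -> [0, 1]
        cart = [f * length for f, length in zip(frac, lattice_lengths)]
        sites.append((element, cart))
    return sites


sites = decode_to_sites(
    element_probs=[[0.7, 0.2, 0.1], [0.1, 0.1, 0.8]],
    tanh_coords=[[-1.0, -1.0, -1.0], [0.0, 0.0, 0.0]],
    lattice_lengths=(4.0, 4.0, 20.0),
)
print(sites)  # [('Pt', [0.0, 0.0, 0.0]), ('Cu', [2.0, 2.0, 10.0])]
```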

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Research Reagent Solutions for VAE-Driven Catalyst Discovery

| Item/Category | Function & Explanation | Example Tools/Libraries |
|---|---|---|
| Materials Databases | Source of atomic structures for training. Provides crystallographic information files (CIFs). | Materials Project, OQMD, Catalysis-Hub, CSD, NOMAD |
| Structure Featurization | Converts atomic structures into machine-readable formats (graphs, descriptors, voxels). | pymatgen, ASE, DScribe (for SOAP), torch_geometric |
| Deep Learning Framework | Provides a flexible environment for building, training, and tuning VAE models. | PyTorch, TensorFlow, JAX |
| Electronic Structure Codes | High-fidelity electronic structure codes for validating generated catalysts via DFT calculations. | VASP, Quantum ESPRESSO, GPAW |
| High-Throughput Computation | Manages thousands of DFT jobs for parallel validation of generated candidates. | FireWorks, AiiDA, custodian |
| Visualization & Analysis | Analyzes latent space, assesses reconstruction quality, and visualizes crystal structures. | matplotlib, seaborn, plotly, VESTA, OVITO |

Advanced Applications & Future Directions

VAEs facilitate tasks beyond generation:

- Latent Space Optimization: Using Bayesian optimization on the continuous latent space to navigate towards regions corresponding to materials with optimal adsorption energies or activity descriptors.

- Conditional Generation: Training a Conditional VAE (C-VAE) to generate structures explicitly for a target property (e.g., low overpotential for Oxygen Evolution Reaction).

- Multi-Task Learning: Jointly training the VAE to reconstruct structures and predict properties, enhancing the latent space organization.
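
Latent space optimization needs only a scoring function over $z$ (e.g., a property predictor applied to $z$ or to the decoded structure) and a search strategy. To keep the example self-contained, the sketch below swaps in simple random-perturbation hill climbing instead of Bayesian optimization; it illustrates the idea of moving through a continuous latent space toward better predicted properties, not a Bayesian-optimization implementation. The `toy_activity` surrogate, peaking at $z = (1, -1)$, is entirely hypothetical.

```python
import random


def latent_hill_climb(score, z0, steps=200, step_size=0.1, seed=0):
    """Maximize score(z) by keeping random Gaussian perturbations that improve it.

    A deliberately simple stand-in for Bayesian optimization over the latent space.
    """
    rng = random.Random(seed)
    best_z, best_s = list(z0), score(z0)
    for _ in range(steps):
        cand = [zi + step_size * rng.gauss(0.0, 1.0) for zi in best_z]
        s = score(cand)
        if s > best_s:
            best_z, best_s = cand, s
    return best_z, best_s


def toy_activity(z):
    """Hypothetical surrogate: predicted activity peaks at z = (1, -1)."""
    return -((z[0] - 1.0) ** 2 + (z[1] + 1.0) ** 2)


z_best, s_best = latent_hill_climb(toy_activity, [0.0, 0.0])
```

Bayesian optimization replaces the blind perturbations with a probabilistic model of the score that chooses where to query next, which matters when each evaluation (such as a DFT calculation) is expensive.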

The integration of VAEs with active learning loops, where DFT validation feedback iteratively refines the generative model, represents the cutting edge in closed-loop catalyst discovery.

This whitepaper is a component of the broader thesis, "Guide to Deep Generative Models (VAEs, GANs, Diffusion) for Catalysts Research." While Variational Autoencoders (VAEs) excel at learning latent representations of known chemical spaces and diffusion models generate high-fidelity structures through iterative denoising, Generative Adversarial Networks (GANs) offer a unique, game-theoretic framework for the de novo design of catalysts. GANs pit two neural networks—a Generator (G) and a Discriminator (D)—against each other in a competitive training process, forging novel molecular and material structures with optimized catalytic properties. This document provides an in-depth technical guide to GAN architectures, training methodologies, and experimental protocols specifically tailored for catalyst discovery.

Core GAN Architecture for Catalyst Design

The fundamental GAN objective is a minimax game:

$$ \min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}[\log D(x)] + \mathbb{E}_{z \sim p_z(z)}[\log(1 - D(G(z)))] $$

In catalyst design:

- Generator (G): Takes a random noise vector z (often concatenated with property conditioning labels, e.g., desired adsorption energy, band gap) and outputs a candidate catalyst representation (e.g., a string in SMILES notation, a graph adjacency matrix, or a voxelized 3D structure).

- Discriminator (D): Receives either a real catalyst from a database or a generated candidate. It must classify it as "real" or "fake," while simultaneously evaluating if it meets the conditioned properties.
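
In practice the minimax objective is split into two losses that are minimized in alternation. The sketch below computes them from discriminator outputs that are assumed to already be probabilities (after a sigmoid); it uses the non-saturating generator loss $-\log D(G(z))$, which the original GAN paper suggested in place of $\log(1 - D(G(z)))$ because it gives stronger gradients early in training. In a cGAN, $D$ would also receive the conditioning label.

```python
import math

EPS = 1e-12  # guards against log(0)


def discriminator_loss(d_real, d_fake):
    """Batch-averaged -[log D(x) + log(1 - D(G(z)))], i.e. the negated D objective."""
    real = sum(-math.log(max(p, EPS)) for p in d_real) / len(d_real)
    fake = sum(-math.log(max(1.0 - p, EPS)) for p in d_fake) / len(d_fake)
    return real + fake


def generator_loss_nonsaturating(d_fake):
    """Non-saturating generator loss: batch-averaged -log D(G(z))."""
    return sum(-math.log(max(p, EPS)) for p in d_fake) / len(d_fake)


# At the theoretical equilibrium D outputs 0.5 everywhere, giving a D loss of 2 ln 2.
print(math.isclose(discriminator_loss([0.5, 0.5], [0.5, 0.5]), 2 * math.log(2)))  # True
```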

Advanced GAN Variants in Catalysis Research

Recent implementations have moved beyond basic GANs to more stable and performant architectures:

Table 1: Comparison of GAN Architectures for Catalyst Generation

| Architecture | Key Mechanism | Advantage for Catalysts | Typical Molecular Representation |
|---|---|---|---|
| Wasserstein GAN (WGAN) | Minimizes Earth-Mover distance; uses a critic instead of a discriminator. | Mitigates mode collapse; provides meaningful training gradients. | SMILES, Graph (Atom/Bond Matrices) |
| Conditional GAN (cGAN) | Both G and D receive additional conditioning input (e.g., target property). | Enables targeted generation of catalysts for specific reactions (e.g., high activity for ORR). | Fingerprint, Graph |
| Objective-Reinforced GAN (ORGAN) | Adds reinforcement-learning rewards for domain-specific objectives (e.g., synthesizability) to the adversarial loss. | Encourages generation of synthetically accessible, structurally plausible molecules. | SMILES |
| GraphGAN | Operates directly on graph-structured data. | Naturally represents molecules; captures topology and bonding inherently. | Graph (Node/Edge Features) |

Experimental Protocol: A Standard cGAN Workflow for Oxygen Reduction Reaction (ORR) Catalysts

The following protocol details a representative experiment for generating novel metal-free carbon-based catalysts.

Aim: To generate novel, porous doped-graphene structures predicted to have high activity for the Oxygen Reduction Reaction (ORR).

Step 1: Data Curation

- Source: Query materials databases (e.g., Materials Project, Cambridge Structural Database) for experimentally characterized ORR catalysts (e.g., metal-N-C complexes, doped nanocarbons).

- Representation: Convert each catalyst to a graph representation. Nodes represent atoms (C, N, B, O, etc.), with features encoding atom type, hybridization, and charge. Edges represent bonds, with features for bond type and distance.

- Property Labeling: Label each graph with calculated or experimental properties (e.g., ORR overpotential, formation energy, surface area). Normalize all property values.

Step 2: Model Architecture & Training

- Generator: A graph neural network (GNN) that progressively adds atoms and bonds to an initial seed graph. It takes a random vector and a target property vector (e.g., overpotential < 0.4 V) as input.

- Discriminator/Critic: A separate GNN that processes the complete graph to output both a "real/fake" score and a predicted property value.

- Training Loop:

  1. Sample a batch of real graphs and their properties `(X_real, y_real)`.
  2. Sample noise vectors `z` and target properties `y_cond`.
  3. Generate a batch of fake graphs: `X_fake = G(z, y_cond)`.
  4. Update the Discriminator/Critic to better distinguish `X_real` from `X_fake` and to accurately predict `y_real`.
  5. Update the Generator to produce `X_fake` that "fools" the Discriminator and yields predicted properties close to `y_cond`.

- Stabilization: Use gradient penalty (WGAN-GP) and spectral normalization. Train for a predetermined number of epochs or until validation loss plateaus.
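
For reference, the critic loss used in WGAN-GP can be written as follows, where $D$ is the critic, $p_g$ is the generator's distribution, and $\hat{x}$ is a random interpolate between a real sample $x$ and a generated sample $\tilde{x}$; the generator in turn minimizes $-\mathbb{E}_{\tilde{x} \sim p_g}[D(\tilde{x})]$. The original WGAN-GP paper used $\lambda = 10$. Applying the penalty to graphs assumes a continuous tensor encoding (for example, relaxed adjacency and node-feature matrices) so that interpolates are defined; in the conditional setting, both critic terms would also receive `y_cond`.

$$ \mathcal{L}_D = \mathbb{E}_{\tilde{x} \sim p_g}\left[D(\tilde{x})\right] - \mathbb{E}_{x \sim p_{\text{data}}}\left[D(x)\right] + \lambda\, \mathbb{E}_{\hat{x}}\left[\left(\lVert \nabla_{\hat{x}} D(\hat{x}) \rVert_2 - 1\right)^2\right], \qquad \hat{x} = \epsilon x + (1 - \epsilon)\tilde{x}, \quad \epsilon \sim U[0, 1] $$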

Step 3: Candidate Generation & Screening

- After training, use `G` to generate thousands of candidate graphs conditioned on a desired property profile.

- Pass all generated candidates through a filter: apply valency and basic chemical stability rules to remove invalid structures.

- The remaining candidates undergo rapid screening using a pre-trained surrogate model (e.g., a random forest or a fast neural network) that predicts key properties (formation energy, adsorption energy of OOH*) from the graph structure alone.
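
The valency filter can be sketched as summing bond orders per atom and rejecting any graph in which an atom exceeds its maximum valence. The `MAX_VALENCE` table below is a simplified, hypothetical one for neutral atoms; charged or hypervalent species (e.g., graphitic N in doped carbons) and aromatic bonds would need a richer rule set, and RDKit's `Chem.SanitizeMol` performs a full valence check for molecules.

```python
# Maximum total bond order per element for neutral atoms (simplified, hypothetical table).
MAX_VALENCE = {"C": 4, "N": 3, "O": 2, "B": 3, "H": 1}


def passes_valency(atoms, bonds):
    """Return True if no atom exceeds its maximum valence.

    atoms: list of element symbols, e.g. ["C", "N"]
    bonds: list of (i, j, order) tuples with integer bond orders 1, 2, or 3
    """
    used = [0] * len(atoms)
    for i, j, order in bonds:
        used[i] += order
        used[j] += order
    return all(used[k] <= MAX_VALENCE[a] for k, a in enumerate(atoms))


valid = passes_valency(["C", "N"], [(0, 1, 3)])                    # C≡N fragment: accepted
invalid = passes_valency(["O", "C", "C"], [(0, 1, 2), (0, 2, 1)])  # O with 3 bonds: rejected
print(valid, invalid)  # True False
```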

Step 4: Validation & Downstream Analysis

- Select top-ranked candidates from the screening step (e.g., 50-100 structures).

- Perform Density Functional Theory (DFT) calculations on these candidates to obtain accurate quantum-mechanical validation of stability and activity.

- Synthesize and experimentally test the most promising 1-3 candidates identified by DFT.

Diagram 1: GAN-based Catalyst Discovery Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for GAN-Driven Catalyst Discovery

| Item / Solution | Function / Purpose | Example / Note |
|---|---|---|
| Catalyst Databases | Source of real data for training the Discriminator. | Materials Project, CatHub, CSD, OQMD, PubChem. |
| Graph Representation Library | Converts molecules/materials to graph data structures. | RDKit (for molecules), Pymatgen (for crystals), DGL, PyTorch Geometric. |
| GAN Training Framework | Provides environment for building and training adversarial networks. | TensorFlow, PyTorch (with custom GAN code), MATGAN, ChemGAN. |
| High-Throughput Screening Surrogate | Fast, approximate property predictor for initial candidate screening. | Random Forest model on quantum-chem derived features. |
| Electronic Structure Code | Validates candidate stability and activity with high accuracy. | VASP, Gaussian, ORCA, Quantum ESPRESSO for DFT. |
| High-Performance Computing (HPC) Cluster | Provides computational power for training GANs and running DFT. | CPU/GPU clusters for ML; CPU clusters for DFT. |

Key Metrics and Quantitative Benchmarks

The performance of a GAN in catalyst discovery is evaluated using multiple metrics.

Table 3: Quantitative Benchmarks for GAN-Generated Catalysts

| Metric Category | Specific Metric | Typical Target Value/Goal | Interpretation |
|---|---|---|---|
| Generation Quality | Validity (%) | > 95% (for molecule GANs) | Percentage of generated structures that are chemically plausible (e.g., correct valency). |
| | Uniqueness (%) | > 80% | Percentage of valid structures that are non-duplicates. |
| | Novelty (%) | > 60% | Percentage of valid, unique structures not present in the training database. |
| Generation Diversity | Internal Diversity (IntDiv) | High (close to the training set's IntDiv) | Measures structural variety within a generated set; a low value signals mode collapse. |
| Property Optimization | Hit Rate (%) | As high as possible | Percentage of generated candidates meeting target property thresholds post-DFT. |
| | Top-n Performance | Best-in-class property | The computed property (e.g., overpotential) of the top-ranked generated candidate. |
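The generation-quality and diversity metrics reduce to simple set arithmetic once structures are canonicalized. The sketch below is a minimal illustration, assuming structures are already canonical strings (e.g., canonical SMILES, so identical strings mean identical structures) and fingerprints are given as sets of "on" bit indices; `is_valid` is a stand-in for a real chemistry check. IntDiv is computed in one common variant, one minus the mean pairwise Tanimoto similarity.

```python
from itertools import combinations

def _pct(part, whole):
    return 100.0 * part / whole if whole else 0.0

def generation_metrics(generated, training_set, is_valid):
    """Validity, uniqueness and novelty (%) following the definitions in Table 3.

    Structures are assumed to be canonical strings (e.g., canonical SMILES), so equal strings
    mean identical structures.
    """
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": _pct(len(valid), len(generated)),
        "uniqueness": _pct(len(unique), len(valid)),
        "novelty": _pct(len(novel), len(unique)),
    }

def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of 'on' bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0

def internal_diversity(fingerprints):
    """IntDiv = 1 - mean pairwise Tanimoto similarity over all distinct pairs."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    return 1.0 - sum(tanimoto(a, b) for a, b in pairs) / len(pairs)

generated = ["CCO", "CCO", "c1ccccc1", "C1CC", "O=C=O"]
# Stand-in validity check: the unclosed ring "C1CC" is treated as invalid.
metrics = generation_metrics(generated, training_set=["CCO"], is_valid=lambda s: s != "C1CC")
print(metrics)
```

Here 4 of 5 strings are valid (80%), 3 of those 4 are unique (75%), and 2 of the 3 unique ones are absent from the training set (about 66.7%).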

Diagram 2: Adversarial Feedback in GAN Training

Generative Adversarial Networks provide a powerful, competitive framework for exploring vast and uncharted regions of chemical space to forge novel catalysts. Their strength lies in the adversarial dynamic, which can drive the generation of highly realistic and optimized structures that may not be intuitively obvious. When integrated into a robust discovery pipeline—comprising rigorous data representation, conditional generation, multi-stage filtering, and high-fidelity validation—GANs move from a purely computational exercise to a potent tool for accelerating the design of catalysts for energy conversion, sustainable chemistry, and beyond. As part of the generative model toolkit alongside VAEs and diffusion models, GANs offer a distinct pathway characterized by competition and targeted creation.

Within the broader landscape of deep generative models for catalyst discovery, diffusion models have emerged as a uniquely powerful paradigm. While Variational Autoencoders (VAEs) excel at learning latent representations and Generative Adversarial Networks (GANs) are adept at producing high-fidelity outputs, diffusion models offer a fundamentally different approach based on iterative denoising. This process, inspired by non-equilibrium thermodynamics, provides a stable training framework and exceptional mode coverage, making it particularly suited for exploring the vast, complex chemical space of potential catalysts.

This whitepaper provides an in-depth technical guide on the core mechanics of diffusion models and their application to the de novo design and optimization of catalytic materials, framed within the comparative context of VAEs and GANs for materials informatics.

Core Technical Mechanism: Iterative Denoising

The diffusion process consists of a forward pass (noising) and a reverse pass (denoising).

Forward Process (q): A data sample x₀ (e.g., a molecular graph or crystal structure) is gradually corrupted by adding Gaussian noise over T timesteps. This produces a sequence x₁, x₂, ..., x_T, where x_T is nearly pure noise. The transition is defined as:

q(x_t | x_{t-1}) = N(x_t; √(1-β_t) x_{t-1}, β_t I)

where β_t is a fixed or learned noise schedule.

Reverse Process (p_θ): A neural network with parameters θ is trained to reverse this noise addition. Starting from pure noise x_T, it predicts the denoised sample step by step:

p_θ(x_{t-1} | x_t) = N(x_{t-1}; μ_θ(x_t, t), Σ_θ(x_t, t))

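The network is typically trained to predict either the added noise, ε_θ(x_t, t), or the clean data x_0. Because every forward step is Gaussian, x_t can be drawn from x_0 in a single step as x_t = √(ᾱ_t) x_0 + √(1-ᾱ_t) ε, with ᾱ_t = ∏_{s=1}^{t} (1-β_s) and ε ~ N(0, I). The noise-prediction objective is then a simplified mean-squared error:

L(θ) = E_{t, x_0, ε}[ || ε - ε_θ(x_t, t) ||^2 ]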
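The forward process and the simplified objective can be checked numerically. The sketch below assumes a linear β schedule from 1e-4 to 0.02 over T = 1000 steps (a common DDPM default), flattens each structure into a toy feature vector, and uses a placeholder noise predictor in place of the GNN or Transformer; it draws x_t directly from x_0 with the closed form and evaluates the mean-squared loss for one batch.

```python
import numpy as np

def linear_beta_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    """Linearly spaced noise variances beta_1..beta_T (stored 0-indexed)."""
    return np.linspace(beta_start, beta_end, T)

def q_sample(x0, t, alpha_bar, eps):
    """Closed-form draw x_t = sqrt(alpha_bar_t) x_0 + sqrt(1 - alpha_bar_t) eps."""
    a = alpha_bar[t][:, None]                    # per-sample alpha_bar_t, broadcast over features
    return np.sqrt(a) * x0 + np.sqrt(1.0 - a) * eps

def diffusion_loss(x0, eps_model, alpha_bar, rng):
    """Monte Carlo estimate of E_{t, x0, eps} ||eps - eps_theta(x_t, t)||^2 for one batch."""
    t = rng.integers(0, len(alpha_bar), size=x0.shape[0])   # uniform timestep per sample
    eps = rng.standard_normal(x0.shape)
    x_t = q_sample(x0, t, alpha_bar, eps)
    return np.mean(np.sum((eps - eps_model(x_t, t)) ** 2, axis=1))

def zero_model(x_t, t):
    """Placeholder for the trained noise predictor; always predicts zero noise."""
    return np.zeros_like(x_t)

betas = linear_beta_schedule()
alpha_bar = np.cumprod(1.0 - betas)              # alpha_bar_t = prod_{s<=t} (1 - beta_s)
rng = np.random.default_rng(0)
x0 = rng.standard_normal((64, 16))               # toy batch: 64 structures x 16 features
# For the zero predictor the expected loss equals E||eps||^2 = 16, the feature dimension.
print(diffusion_loss(x0, zero_model, alpha_bar, rng))
```

In training, this loss would be back-propagated through ε_θ; the closed-form `q_sample` is what makes sampling a random t per example cheap.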

Application to Catalyst Design

For catalysts, the data representation x₀ is critical. Common approaches include:

- Graph Representations: Atoms as nodes, bonds as edges.

- Voxelized 3D Electron Density Grids: Representing periodic crystal structures.

- String Representations: Using Simplified Molecular-Input Line-Entry System (SMILES) or its variants.

The denoising model, often a Graph Neural Network (GNN) or Transformer, learns the underlying probability distribution of stable, synthesizable, and catalytically active structures from training data. Guided diffusion techniques allow conditioning the generation process on desired properties (e.g., high activity for Oxygen Evolution Reaction (OER), stability at certain pH).

Key Experimental Protocols

Protocol 1: Training a Graph Diffusion Model for Molecule Generation

- Dataset Curation: Assemble a dataset of known catalytic molecules/complexes (e.g., from the Cambridge Structural Database (CSD) or Catalysis-Hub). Annotate with properties (turnover frequency, overpotential).

- Graph Encoding: Convert each molecule to a graph with node features (atom type, charge) and edge features (bond type, distance).

- Noise Schedule Configuration: Define a cosine or linear noise schedule β_1...β_T over 1000-4000 steps.

- Model Architecture: Implement a conditioned graph transformer or message-passing network as the noise predictor ε_θ.

- Training: Minimize the denoising loss L(θ) using the AdamW optimizer. Condition the model on target property embeddings via cross-attention.

- Sampling (Generation): Sample random Gaussian noise x_T. Iteratively apply the trained model from t=T to t=1 using the conditioned reverse process to yield a new candidate graph.
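The schedule and sampling steps of this protocol can be sketched in a few lines. This minimal NumPy version assumes the cosine schedule of improved DDPM (offset s = 0.008, β clipped at 0.999), the ancestral update with variance σ_t² = β_t, and a hypothetical `placeholder_eps` standing in for the trained, property-conditioned ε_θ; it operates on flat feature vectors rather than graphs, so discrete atom and bond types would need an extra decoding or discrete-diffusion step not shown here.

```python
import numpy as np

def cosine_beta_schedule(T, s=0.008, max_beta=0.999):
    """Cosine schedule: alpha_bar(t) = f(t) / f(0), f(t) = cos^2(((t/T + s) / (1 + s)) * pi/2)."""
    steps = np.arange(T + 1)
    f = np.cos(((steps / T + s) / (1 + s)) * np.pi / 2) ** 2
    alpha_bar = f / f[0]
    betas = 1.0 - alpha_bar[1:] / alpha_bar[:-1]
    return np.clip(betas, 0.0, max_beta)          # clipping avoids beta = 1 near t = T

def ddpm_sample(eps_model, shape, betas, cond=None, rng=None):
    """Ancestral sampling: start from x_T ~ N(0, I) and apply the reverse step T times."""
    rng = np.random.default_rng() if rng is None else rng
    alphas = 1.0 - betas
    alpha_bar = np.cumprod(alphas)
    x = rng.standard_normal(shape)
    for t in reversed(range(len(betas))):         # index len-1 corresponds to t = T
        eps_hat = eps_model(x, t, cond)
        # Mean of p_theta(x_{t-1} | x_t) under the noise-prediction parameterization.
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bar[t]) * eps_hat) / np.sqrt(alphas[t])
        noise = rng.standard_normal(shape) if t > 0 else 0.0   # no noise on the last step
        x = mean + np.sqrt(betas[t]) * noise      # variance choice sigma_t^2 = beta_t
    return x

def placeholder_eps(x, t, cond):
    """Hypothetical stand-in for a trained, property-conditioned noise predictor."""
    return np.zeros_like(x)

betas = cosine_beta_schedule(T=1000)
sample = ddpm_sample(placeholder_eps, shape=(4, 16), betas=betas,
                     cond={"target_overpotential_V": 0.3}, rng=np.random.default_rng(0))
print(sample.shape)  # (4, 16)
```

With the placeholder predictor the output is not a meaningful structure; the point is the loop structure, where the condition is simply passed to ε_θ at every step.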

Protocol 2: Crystal Structure Generation via Latent Diffusion

- Data Preprocessing: Convert inorganic crystal structures (e.g., from the Materials Project) to 3D voxel grids of electron density or atomic potentials.

- Autoencoder Training: Train a 3D convolutional VAE to compress voxel grids into a lower-dimensional latent space. The encoder E produces a latent vector z.

- Latent Diffusion: Train a standard diffusion model (e.g., a U-Net) to model the distribution of the continuous latents z.

- Conditioned Generation: Train a property predictor on z and use its gradient during reverse diffusion sampling (classifier guidance) to steer generation toward catalysts with favorable activity descriptors (e.g., d-band center, adsorption energy). Alternatively, classifier-free guidance trains the diffusion model with and without the property condition and combines both predictions at sampling time, so no separate predictor is needed.
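The two guidance styles combine noise predictions differently. This minimal NumPy sketch assumes a hypothetical linear property head on the latent (a · z) with a Gaussian likelihood, so ∇ log p(y | z_t) has a closed form. Classifier guidance shifts the predicted noise by ε̂ = ε_θ(z_t, t) − s·√(1−ᾱ_t)·∇ log p(y | z_t), while classifier-free guidance extrapolates between conditional and unconditional predictions of one jointly trained model, ε̂ = ε_θ(z_t, ∅) + w·(ε_θ(z_t, c) − ε_θ(z_t, ∅)). The guidance scales and property values below are illustrative.

```python
import numpy as np

def classifier_guided_eps(eps_uncond, grad_log_p, alpha_bar_t, scale):
    """Classifier guidance: eps_hat = eps - scale * sqrt(1 - alpha_bar_t) * grad_z log p(y | z_t)."""
    return eps_uncond - scale * np.sqrt(1.0 - alpha_bar_t) * grad_log_p

def classifier_free_eps(eps_cond, eps_uncond, w):
    """Classifier-free guidance: eps_uncond + w * (eps_cond - eps_uncond); w = 1 gives eps_cond."""
    return eps_uncond + w * (eps_cond - eps_uncond)

def gaussian_property_grad(z, a, y_target, sigma=0.1):
    """grad_z log N(y_target; a . z, sigma^2) for a hypothetical linear property head a . z."""
    residual = y_target - z @ a                   # target minus predicted property, shape (n,)
    return (residual / sigma**2)[:, None] * a[None, :]

rng = np.random.default_rng(0)
z_t = rng.standard_normal((2, 4))                 # two latent samples at some step t
a = np.array([1.0, 0.0, -0.5, 0.2])               # hypothetical property head weights
eps = rng.standard_normal(z_t.shape)              # stand-in for eps_theta(z_t, t)
grad = gaussian_property_grad(z_t, a, y_target=-1.2)
print(classifier_guided_eps(eps, grad, alpha_bar_t=0.5, scale=2.0))
```

Either guided ε̂ would replace ε_θ inside the reverse update; larger scales trade sample diversity for closer adherence to the target property.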

Data Presentation: Comparative Performance of Generative Models

Table 1: Quantitative Comparison of Generative Models for Catalyst Discovery

| Model Type | Key Metric: Validity (%) | Key Metric: Uniqueness (%) | Key Metric: Novelty (%) | Key Metric: Property Optimization (Success Rate) | Training Stability |
|---|---|---|---|---|---|
| VAE (SMILES) | 45.2 | 85.1 | 70.3 | Medium | High |
| VAE (Graph) | 94.8 | 99.5 | 88.6 | Medium-High | High |
| GAN (Graph) | 92.7 | 95.2 | 85.4 | High | Low |
| Diffusion (Graph) | 98.5 | 99.9 | 95.1 | Very High | Very High |

Data compiled from recent literature (2023-2024). Validity: chemical validity of structures. Uniqueness: % of non-duplicate valid structures. Novelty: % not in training set. Success Rate: % of generated candidates meeting target property thresholds.

Diagram 1: Conditioning Diffusion for Catalyst Generation

Diagram 2: Iterative Denoising Sampling Loop

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for Diffusion-Based Catalyst Discovery

| Category | Item / Software | Function & Relevance |
|---|---|---|
| Generative Modeling Frameworks | PyTorch, JAX, Diffusers (Hugging Face) | Core libraries for building and training custom diffusion models with automatic differentiation. |
| Materials Datasets | Materials Project, OQMD, Catalysis-Hub, CSD | Curated sources of crystal structures, molecules, and catalytic properties for training data. |
| Molecular/Crystal Representations | RDKit, pymatgen, ASE | Convert chemical structures into graph or voxel representations suitable for diffusion models. |
| Property Prediction | pymatgen.analysis, SchNet, MEGNet | Fast predictors for adsorption energies, formation energies, etc., used for guidance and candidate screening. |
| Analysis & Validation | AIRSS, VASP, Quantum ESPRESSO | First-principles calculations to validate the stability and activity of top-generated catalyst candidates. |
| Specialized Diffusion Packages | MatSciML (e.g., CDVAE), DiffLinker | Domain-specific diffusion model implementations for molecules and materials. |

Within the broader thesis of a guide to deep generative models (VAEs, GANs, Diffusion) for catalysts research, the effective representation of chemical and material data is foundational. This whitepaper details the core data paradigms and their translation into models that can generate novel, high-performance catalysts.

Fundamental Data Representations

The predictive and generative power of a model is intrinsically linked to the chosen data representation. The following table summarizes the key paradigms.

Table 1: Core Data Representations in Catalytic Materials Research

| Representation | Data Type & Format | Key Features/Descriptors | Primary Use Case in Catalysis | Generative Model Suitability |
|---|---|---|---|---|
| Molecular Graph | Topological (Adjacency matrix, SMILES, InChI) | Atom types, bond types/orders, connectivity, formal charges. | Molecular/organic catalyst design, ligand optimization. | Graph Neural Networks (GNNs) coupled with VAEs/Diffusion. |
| Molecular Descriptors | Numerical Vector (CSV, JSON) | RDKit descriptors (MolWt, LogP, TPSA), quantum chemical (HOMO/LUMO, dipole moment), fingerprint (ECFP, MACCS). | Quantitative Structure-Activity Relationship (QSAR) for catalyst property prediction. | Standard VAEs and GANs operating on fixed-length vectors. |
| Crystalline Structure | Geometric 3D (CIF, POSCAR, XYZ) | Lattice parameters (a,b,c,α,β,γ), fractional coordinates, space group, site occupancies. | Solid-state catalyst (e.g., zeolites, metal oxides, MOFs) discovery. | 3D Graph/Grid-based Diffusion Models, Crystal VAEs. |
| Electronic Structure | Volumetric Grid (Cube files) | Electron density, electrostatic potential, orbital densities (from DFT). | Understanding and predicting active sites and reaction pathways. | 3D Convolutional Networks; used as complementary data. |
| Reaction Pathway | Sequence/Graph (SMIRKS, RXN) | Reactants, products, transition states, intermediates, activation energies. | Mechanistic insight and catalyst optimization for specific steps. | Sequence-to-sequence models or reaction graph generation. |
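For the crystalline representation, the six lattice parameters and fractional coordinates must usually be turned into Cartesian geometry before building 3D graphs or grids. The sketch below uses one standard orientation convention (a along x, b in the xy-plane); libraries such as pymatgen (`Lattice.from_parameters`) perform the same conversion, possibly with a different axis orientation. The hexagonal cell values are illustrative only.

```python
import numpy as np

def lattice_matrix(a, b, c, alpha, beta, gamma):
    """Rows are lattice vectors (Å); a along x, b in the xy-plane; angles in degrees."""
    al, be, ga = np.radians([alpha, beta, gamma])
    cx = np.cos(be)
    cy = (np.cos(al) - np.cos(be) * np.cos(ga)) / np.sin(ga)
    cz = np.sqrt(1.0 - cx**2 - cy**2)             # fixes |c| = c given cx and cy
    return np.array([
        [a, 0.0, 0.0],
        [b * np.cos(ga), b * np.sin(ga), 0.0],
        [c * cx, c * cy, c * cz],
    ])

def frac_to_cart(frac_coords, lattice):
    """Cartesian positions (Å) from fractional coordinates: r = f @ L."""
    return np.asarray(frac_coords, dtype=float) @ lattice

L = lattice_matrix(3.0, 3.0, 5.0, 90.0, 90.0, 120.0)   # hexagonal cell (illustrative numbers)
print(abs(np.linalg.det(L)))                            # cell volume in Å^3
print(frac_to_cart([[1 / 3, 2 / 3, 0.5]], L))
```

Pairwise distances for graph edges should additionally account for periodic images, which this sketch does not do.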

Experimental Protocols for Data Acquisition

Reliable generative models require high-quality, consistent training data. Below are detailed protocols for generating key datasets.

Protocol: Generating Quantum Chemical Descriptors for Organometallic Catalysts

Objective: Compute accurate electronic descriptors for a set of transition metal complexes.

- Initial Geometry: Obtain 3D structure from crystallographic database (e.g., CCDC) or generate using molecular mechanics (MMFF).

- Geometry Optimization: Perform a Density Functional Theory (DFT) calculation using a hybrid functional (e.g., B3LYP) and an appropriate basis set (e.g., def2-SVP for light atoms and def2-TZVP for the metal; the def2 family uses effective core potentials for elements heavier than Kr). Solvent effects can be incorporated via a polarizable continuum model (PCM).

- Frequency Calculation: On the optimized geometry, perform a vibrational frequency calculation at the same level of theory to confirm a true minimum (no imaginary frequencies).

- Single-Point Energy & Property Calculation: Perform a higher-accuracy single-point calculation (e.g., larger basis set, def2-TZVPP) on the optimized geometry. Extract:

- Frontier Orbital Energies (HOMO, LUMO, Gap)

- Partial Atomic Charges (e.g., Natural Population Analysis)

- Dipole Moment

- Global Reactivity Indices (Chemical Hardness, Electrophilicity Index)

- Data Curation: Compile all scalar descriptors into a standardized table (CSV), ensuring consistent units and handling of missing/invalid values.
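The global reactivity indices listed above follow directly from the frontier orbital energies. This sketch assumes the common Koopmans-type approximations, chemical potential μ = (E_HOMO + E_LUMO)/2, hardness η = (E_LUMO − E_HOMO)/2, and electrophilicity ω = μ²/(2η); note that some authors define η without the factor 1/2, so the convention should be recorded alongside the data. The orbital energies in the example are illustrative, not results for a specific complex.

```python
def reactivity_indices(e_homo, e_lumo):
    """Global reactivity indices (eV) from frontier orbital energies, Koopmans-type approximation.

    mu = (E_HOMO + E_LUMO) / 2, eta = (E_LUMO - E_HOMO) / 2, omega = mu^2 / (2 * eta).
    """
    if e_lumo <= e_homo:
        raise ValueError("LUMO must lie above HOMO")
    mu = 0.5 * (e_homo + e_lumo)
    eta = 0.5 * (e_lumo - e_homo)
    return {"gap": e_lumo - e_homo, "mu": mu, "eta": eta, "omega": mu**2 / (2.0 * eta)}

# Illustrative orbital energies (eV).
print(reactivity_indices(e_homo=-6.0, e_lumo=-2.0))
# {'gap': 4.0, 'mu': -4.0, 'eta': 2.0, 'omega': 4.0}
```

Computing these indices in one function keeps units and conventions consistent across the CSV produced in the curation step.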

Protocol: Crystalline Structure Refinement for Porous Catalysts (e.g., Zeolite)

Objective: Produce a refined Crystallographic Information File (CIF) for a zeolite framework from powder X-ray diffraction (PXRD) data.

- Sample Preparation: Ensure a pure, finely ground, and homogeneous powder sample of the synthesized zeolite.

- Data Collection: Collect PXRD pattern using a diffractometer (Cu Kα radiation, λ=1.5418 Å) over a 2θ range of 5-50° with a step size of 0.02°.

- Phase Identification: Match peak positions to known zeolite frameworks using the International Zeolite Association (IZA) database.

- Rietveld Refinement: a. Model Import: Import the theoretical crystal structure model for the identified framework type. b. Background & Profile Fitting: Fit a polynomial background and select a profile function (e.g., Pseudo-Voigt). c. Scale Factor & Lattice Parameters: Refine the scale factor and unit cell parameters (a, b, c, α, β, γ). d. Atomic Parameters: Sequentially refine atomic coordinates (x, y, z), site occupancies, and isotropic thermal displacement parameters (Biso). e. Convergence: Iterate until the goodness-of-fit indices (Rwp, Rp, χ²) converge and are satisfactory.

- Validation & Export: Check for reasonable bond lengths and angles. Export the final, refined crystal structure as a CIF file.
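The convergence criteria in the refinement step are the standard profile agreement indices. This NumPy sketch assumes Poisson counting statistics (weights w_i = 1/y_obs,i) and uses R_wp = √(Σw(y_o−y_c)²/Σw y_o²), R_p = Σ|y_o−y_c|/Σy_o, R_exp = √((N−P)/Σw y_o²) and χ² = (R_wp/R_exp)²; refinement programs such as GSAS-II may apply background-corrected variants. The synthetic pattern (one Gaussian peak on a flat background) is purely illustrative.

```python
import numpy as np

def rietveld_agreement(y_obs, y_calc, n_params):
    """Profile agreement indices; weights w_i = 1 / y_obs,i assume Poisson counting statistics."""
    y_obs = np.asarray(y_obs, dtype=float)
    y_calc = np.asarray(y_calc, dtype=float)
    w = 1.0 / y_obs
    r_wp = np.sqrt(np.sum(w * (y_obs - y_calc) ** 2) / np.sum(w * y_obs**2))
    r_p = np.sum(np.abs(y_obs - y_calc)) / np.sum(y_obs)
    r_exp = np.sqrt((len(y_obs) - n_params) / np.sum(w * y_obs**2))
    return {"Rwp": r_wp, "Rp": r_p, "Rexp": r_exp, "chi2": (r_wp / r_exp) ** 2}

rng = np.random.default_rng(0)
two_theta = np.linspace(5.0, 50.0, 2251)                 # 5-50° at a 0.02° step
model = 500.0 + 3000.0 * np.exp(-0.5 * ((two_theta - 26.6) / 0.08) ** 2)  # background + one peak
observed = rng.poisson(model).astype(float)             # simulated counting noise
print(rietveld_agreement(observed, model, n_params=20))
```

When the calculated profile matches the data up to counting noise, χ² approaches 1; values well above 1 indicate model deficiencies.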

Visualization of Workflows and Relationships

Data-to-Generator Pipeline for Catalysts

Diagram 1: Generative Pipeline for Catalysts

Multi-Scale Representation for a Catalytic System

Diagram 2: Data Hierarchy in Catalysis

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational & Experimental Toolkit for Catalyst Data Generation

| Category | Item / Solution | Function & Explanation |
|---|---|---|
| Quantum Chemistry | Gaussian, ORCA, VASP | Software suites for performing ab initio and DFT calculations to obtain molecular geometries, energies, and electronic descriptors. VASP specializes in periodic systems (crystals). |
| Cheminformatics | RDKit, Pybel (Open Babel) | Open-source libraries for manipulating molecular structures, calculating 2D/3D descriptors, generating fingerprints, and handling file formats (SMILES, SDF). |
| Crystallography | VESTA, Olex2, GSAS-II | Software for visualization, refinement, and analysis of crystalline structures from diffraction data. Critical for preparing and validating CIF files. |
| Data Curation | Pandas, NumPy, ASE (Atomic Simulation Environment) | Python libraries for managing, cleaning, and transforming numerical and structural data into arrays/tensors suitable for model training. |
| High-Throughput Experimentation | Pharmaceutical Catalyst Library Kits (e.g., from Sigma-Aldrich) | Pre-packaged sets of diverse ligand-metal complexes for rapid screening of catalytic activity in reactions like cross-coupling or asymmetric hydrogenation. |
| Surface Analysis | Reference Catalyst Standards (e.g., from NIST) | Certified materials with known surface area, pore size distribution, or metal dispersion, used to calibrate instruments and validate synthesis protocols. |

Benchmark Datasets and Repositories for Catalytic Materials (e.g., Catalysis-Hub, Materials Project)

The integration of deep generative models (VAEs, GANs, diffusion models) into catalyst discovery necessitates high-quality, large-scale, and consistently structured data for training and validation. Public benchmark datasets and repositories serve as the indispensable foundation for this data-driven research paradigm. This guide provides an in-depth analysis of the core platforms, focusing on their quantitative content, access protocols, and role within the generative modeling workflow for catalytic materials.

Core Repositories and Quantitative Comparison

| Repository Name | Primary Focus | Key Data Types | Estimated Entries (Catalysis) | Data Access Method | Key Queryable Properties |
|---|---|---|---|---|---|
| Catalysis-Hub.org | Surface reaction kinetics & mechanisms | Reaction energies, activation barriers, reaction networks, surface structures. | >100,000 reaction energies; >1,000 microkinetic models. | GraphQL API, Python client (cathub), web interface. | Adsorption energies, reaction energies, barriers, turnover frequency (TOF). |
| The Materials Project (MP) | Bulk crystalline materials | Crystal structures, formation energies, band structures, elastic tensors, piezoelectricity. | ~150,000+ materials; catalysis data via "surface reactions" subset. | REST API (MPRester), web interface. | Formation energy, energy above hull, band gap, density, surface energies. |
| NOMAD Repository | Archive of raw & processed computational materials science data | Input/output files from >50 codes, spectroscopy data, beyond-DFT results. | >200 million entries total; extensive catalysis datasets. | REST API, Python client (nomad-lab), FAIR Data GUI. | DFT total energies, forces, electronic densities, computational parameters. |
| OCP Datasets (Open Catalyst Project) | Directly tailored for machine learning | Atomic structures, total energies, forces, relaxed geometries. | OC20: ~1.3 million DFT relaxations (~265 million single-point calculations); OC22: ~62,000 relaxations of oxide surfaces. | ocp Python package, direct download. | Initial/relaxed coordinates, system energy, per-atom forces, adsorption energy. |

Experimental and Computational Protocols for Data Generation

The utility of these repositories hinges on understanding the methodologies used to populate them.

3.1. Protocol for DFT-Based Catalytic Property Calculation (e.g., Catalysis-Hub)

- Step 1: Surface Model Construction. Slab models are created from MP bulk crystals, with sufficient vacuum (>15 Å) and slab thickness (>3 atomic layers). Symmetry is used to generate high-symmetry adsorption sites (e.g., top, bridge, hollow).

- Step 2: DFT Calculation Setup. Standardized using the Atomic Simulation Environment (ASE) and a specific DFT code (VASP, Quantum ESPRESSO). Consistent pseudopotentials (e.g., PBE PAW) and plane-wave cutoff energy (≥400 eV) are mandated. A k-point density of ~0.04 Å⁻¹ is typical.

- Step 3: Geometry Optimization. The adsorbate and upper slab layers are relaxed (the bottom layers are typically fixed at bulk-like positions) until residual forces are <0.05 eV/Å, using a conjugate gradient algorithm. Spin polarization is included for systems with unpaired electrons.

- Step 4: Energy Evaluation. The adsorption energy (E_ads) is calculated as:

E_ads = E_(slab+adsorbate) - E_slab - E_(adsorbate_gas)

Reaction energies follow from the differences between relaxed initial- and final-state energies, and activation barriers are computed with the Nudged Elastic Band (NEB) method using 5-7 images optimized along the minimum-energy path.

- Step 5: Data Curation & Submission. Results, including input files, final structures, energies, and metadata, are packaged in a standardized JSON format and uploaded to the repository via its API.
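In practice the gas-phase term is often a linear combination of stable reference molecules rather than the adsorbate itself, because species such as OH are not stable in the gas phase. This sketch assumes such a reference scheme, expressed as (coefficient, total energy) pairs; the DFT total energies are placeholders for illustration, not values from any repository.

```python
def adsorption_energy(e_slab_ads, e_slab, gas_refs):
    """E_ads = E(slab+adsorbate) - E(slab) - sum_k n_k * E(gas_k).

    gas_refs: (coefficient, total energy) pairs whose weighted sum reproduces the adsorbate's
    composition from stable gas-phase molecules.
    """
    return e_slab_ads - e_slab - sum(n * e for n, e in gas_refs)

# Placeholder DFT total energies (eV), for illustration only.
E_SLAB_OH, E_SLAB = -305.20, -294.50
E_H2O, E_H2 = -14.22, -6.77
# OH* referenced to H2O(g) - 1/2 H2(g), since an OH radical is not a stable gas-phase reference.
print(round(adsorption_energy(E_SLAB_OH, E_SLAB, [(1.0, E_H2O), (-0.5, E_H2)]), 3))  # 0.135
```

Storing the reference scheme with each entry makes adsorption energies from different sources comparable.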

3.2. Protocol for Generating ML-Ready Trajectories (e.g., OCP Dataset)

- Step 1: Diverse Structure Sampling. Initial catalyst-adsorbate structures are sampled from sources like PubChem and MP, with random perturbations to atom positions, rotations, and site placements.

- Step 2: High-Throughput DFT Relaxation. Each structure undergoes DFT-based relaxation using a consistent, automated workflow (via FireWorks). The initial and final geometries, and often intermediate steps, are stored.

- Step 3: Target Property Calculation. For each relaxed system, total energy, per-atom forces, and material-specific targets (e.g., adsorption energy, band gap) are computed.

- Step 4: Dataset Assembly & Splitting. Data is compiled into LMDB files (.lmdb) that load as PyTorch Geometric data objects. Standard splits (train/val/test) are provided, with test sets often defined as challenging "out-of-distribution" splits (e.g., new adsorbates, compositions).

Integration with Deep Generative Models: A Logical Workflow

Diagram Title: Generative Catalyst Discovery Loop Using Repositories

The Scientist's Toolkit: Essential Research Reagents & Solutions

| Item/Resource | Function in Catalytic Materials Informatics | Example/Format |
|---|---|---|
| ASE (Atomic Simulation Environment) | Python library for setting up, running, and analyzing DFT calculations; essential for standardizing workflows to repository specifications. | ase.build.surface, ase.vibrations.Vibrations |
| Pymatgen | Robust Python library for materials analysis, providing powerful tools to manipulate structures, analyze data from MP, and compute materials descriptors. | pymatgen.core.Structure, pymatgen.analysis.adsorption |
| MPRester & CatHub API | Official Python clients for programmatically querying and downloading data from The Materials Project and Catalysis-Hub, respectively. | MPRester("API_KEY"), cathub.get_results() |
| OCP datasets module | Tools to efficiently load, batch, and process the large-scale Open Catalyst Project datasets for direct use in PyTorch models. | OCPDataModule, SinglePointLmdbDataset |
| DFT Software & Pseudopotentials | Core computational engines. Standardized pseudopotential sets ensure reproducibility of data across repositories. | VASP (PAW), Quantum ESPRESSO (SSSP), GPAW |
| Workflow Manager (FireWorks, AiiDA) | Automates and records complex computational pipelines, ensuring provenance and enabling high-throughput data generation for repositories. | FireWork, Workflow objects in FireWorks |
| ML Framework (PyTorch, JAX) | Primary environment for building, training, and deploying deep generative models on the structured data from repositories. | PyTorch Geometric, Diffusers library |
| High-Performance Computing (HPC) Cluster | Essential computational resource for both generating reference data (DFT) and training large-scale generative models. | Slurm/PBS job arrays for parallel DFT/MD. |

From Code to Catalyst: Implementing VAEs, GANs, and Diffusion Models for De Novo Design

This whitepaper details a workflow architecture for combining deep generative models with predictive computational models in catalysis research. Framed within the broader thesis of "A Guide to Deep Generative Models (VAEs, GANs, Diffusion) for Catalysts Research," this guide provides a technical blueprint for researchers and development professionals aiming to accelerate the discovery and optimization of catalytic materials. The core innovation lies in closing the design-make-test-analyze loop in silico, using generative models to propose novel catalyst candidates and property predictors to triage them before experimental validation.

Generative Model Foundations for Catalyst Design

Variational Autoencoders (VAEs)

VAEs learn a continuous, structured latent space $Z$ from a dataset of known catalysts (e.g., represented as SMILES strings, CIF files, or graph structures). The encoder $q_\phi(z \mid x)$ maps a catalyst $x$ to a probability distribution in latent space, and the decoder $p_\theta(x \mid z)$ reconstructs the catalyst from a latent vector $z$. This allows for interpolation and controlled generation by sampling from the prior $p(z)$, typically a standard normal distribution $\mathcal{N}(0, I)$.

Key Application: Generating novel molecular or crystalline structures with desired symmetry or compositional constraints.
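Interpolation in the latent space is typically done between the encodings of two known catalysts, decoding each intermediate point. This NumPy sketch assumes spherical linear interpolation (slerp), a common choice for Gaussian priors because straight-line midpoints in high dimensions have lower norm than typical prior samples; the decoder call is omitted and the latent vectors are random stand-ins for real encodings.

```python
import numpy as np

def slerp(z0, z1, t):
    """Spherical interpolation between latent vectors; falls back to linear when nearly parallel."""
    z0, z1 = np.asarray(z0, dtype=float), np.asarray(z1, dtype=float)
    cos_omega = np.dot(z0, z1) / (np.linalg.norm(z0) * np.linalg.norm(z1))
    omega = np.arccos(np.clip(cos_omega, -1.0, 1.0))      # angle between the two vectors
    if np.isclose(np.sin(omega), 0.0):
        return (1.0 - t) * z0 + t * z1
    return (np.sin((1.0 - t) * omega) * z0 + np.sin(t * omega) * z1) / np.sin(omega)

def interpolation_path(z0, z1, n_steps=8):
    """Evenly spaced slerp points from z0 (t = 0) to z1 (t = 1), stacked row-wise."""
    return np.stack([slerp(z0, z1, t) for t in np.linspace(0.0, 1.0, n_steps)])

rng = np.random.default_rng(0)
z_a, z_b = rng.standard_normal(64), rng.standard_normal(64)   # stand-ins for two encodings
path = interpolation_path(z_a, z_b, n_steps=8)                  # each row would be decoded
print(path.shape)  # (8, 64)
```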

Generative Adversarial Networks (GANs)

In catalyst generation, a generator network $G$ creates candidate structures from noise, while a discriminator $D$ tries to distinguish real catalysts from generated ones. Conditional GANs (cGANs) are particularly valuable because generation can be conditioned on target property values (e.g., binding energy, turnover frequency).

Key Application: Generating high-fidelity, discrete catalyst structures (e.g., surface slabs, nanoparticle configurations).

Diffusion Models

Diffusion models progressively add noise to a catalyst structure over $T$ steps, then learn a reverse denoising process $p_\theta(x_{t-1} \mid x_t)$ to generate data from noise. This iterative refinement often yields highly realistic and diverse samples, especially for complex 3D atomic structures.

Key Application: Generating precise and stable crystalline catalyst materials with specific space groups or porosity.

Table 1: Comparative Analysis of Generative Models for Catalysis

| Model Type | Primary Strength | Typical Representation | Training Stability | Sample Diversity |
|---|---|---|---|---|
| VAE | Continuous, interpretable latent space | SMILES, Graphs, Voxels | High | Moderate |
| GAN | High sample fidelity | Graphs, 2D/3D grids | Low | High |
| Diffusion | High-quality, probabilistic generation | 3D point clouds, Euclidean graphs | Medium | Very High |

Catalytic Property Predictors

Predictive models map a catalyst structure $x$ to a target property $y$. These are often regressors or classifiers built on:

- Density Functional Theory (DFT)-derived features: Adsorption energies, d-band centers, coordination numbers.

- Graph Neural Networks (GNNs): Directly learn from atomic graphs, capturing local environments.

- Descriptor-based Machine Learning: Using curated features like composition, morphology, and electronic properties.

Critical Requirement: The predictor must be fast, enabling high-throughput virtual screening of thousands of generated candidates.

Integrated Workflow Architecture

The proposed workflow is a cyclic, iterative pipeline.

Core Architecture Diagram

Diagram Title: Integrated Generative-Predictive Catalyst Discovery Workflow

Conditional Generation & Active Learning Pathway

This pathway targets generation toward a specific property range (e.g., a CO adsorption energy between -1.5 and -1.0 eV).

Diagram Title: Active Learning Loop for Target-Driven Generation
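One simple way the active learning controller could decide which generated candidates to label next is to rank them by the probability that their property falls inside the target window. This sketch assumes the surrogate returns a Gaussian prediction (mean and standard deviation, e.g., from an ensemble); it is one illustrative acquisition function, not necessarily the one this workflow prescribes, and the candidate predictions are hypothetical.

```python
from math import erf, sqrt

def normal_cdf(x, mu, sigma):
    """CDF of N(mu, sigma^2) evaluated at x."""
    return 0.5 * (1.0 + erf((x - mu) / (sigma * sqrt(2.0))))

def prob_in_window(mu, sigma, low, high):
    """P(low <= y <= high) when the surrogate predicts y ~ N(mu, sigma^2)."""
    return normal_cdf(high, mu, sigma) - normal_cdf(low, mu, sigma)

def select_for_labeling(candidates, low, high, batch_size):
    """Pick the batch_size candidates most likely to fall inside the target window."""
    ranked = sorted(candidates, key=lambda c: prob_in_window(c["mu"], c["sigma"], low, high),
                    reverse=True)
    return ranked[:batch_size]

# Hypothetical ensemble predictions of CO adsorption energy (eV); target window [-1.5, -1.0].
pool = [
    {"id": "A", "mu": -1.25, "sigma": 0.10},
    {"id": "B", "mu": -0.50, "sigma": 0.10},
    {"id": "C", "mu": -1.00, "sigma": 0.50},
]
print([c["id"] for c in select_for_labeling(pool, low=-1.5, high=-1.0, batch_size=2)])  # ['A', 'C']
```

Candidate C ranks second despite its mean lying on the window edge, because its large uncertainty leaves a substantial probability of falling inside; DFT labels for such points also improve the surrogate where it is least certain.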

Detailed Experimental Protocol

Protocol 1: End-to-End Workflow for Metal-Alloy Nanoparticle Discovery

Objective: Discover novel bi/tri-metallic nanoparticles for oxygen reduction reaction (ORR) with predicted activity exceeding a Pt-baseline.

Step 1: Data Curation

- Source: Materials Project, Catalysis-Hub.org. Gather DFT-computed structures (CIFs) and properties (adsorption energies of O*, OH*, and OOH*).

- Preprocessing: Convert CIFs to graph representations (nodes=atoms, edges=bonds/distances). Create a unified descriptor table.

Step 2: Generative Model Training

- Model Choice: 3D Diffusion Model for point clouds.

- Training: Train on graph representations of known metal nanoparticles. Condition generation on elemental composition (e.g., Pt80Co15Ni5).

- Output: 10,000 novel nanoparticle configurations.

Step 3: High-Throughput Prediction

- Predictor: A GNN (e.g., MEGNet) trained on DFT data to predict ΔG_OOH (a key ORR descriptor).

- Screening: Predict ΔG_OOH for all 10,000 generated structures. Filter to candidates with |ΔG_OOH| < 0.2 eV from ideal (0 eV).

Step 4: Stability & Synthesis Filter

- Apply a secondary ML-based stability predictor (e.g., based on formation energy and surface energy) and heuristic filters for likely synthesizable sizes (2-5 nm).

Step 5: Output & Validation

- Top 50 candidates pass to robotic synthesis and high-throughput electrochemical testing.
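The screening funnel of Steps 3-5 can be expressed as a sequence of filters that also records how many candidates survive each stage, mirroring the counts reported in the table that follows. In this sketch the field names are hypothetical, and the stability criterion (predicted formation energy ≤ 0 eV/atom together with the 2-5 nm size window) is a simple placeholder for the ML-based stability predictor described in Step 4.

```python
def screen_nanoparticles(candidates, target=0.0, tol=0.2, max_e_form=0.0,
                         size_nm=(2.0, 5.0), top_k=50):
    """Apply the activity and stability/size filters in sequence, tracking survivors per stage."""
    counts = {"generated": len(candidates)}
    active = [c for c in candidates if abs(c["dG_OOH"] - target) < tol]
    counts["activity_filter"] = len(active)
    stable = [c for c in active
              if c["e_form"] <= max_e_form and size_nm[0] <= c["diameter_nm"] <= size_nm[1]]
    counts["stability_filter"] = len(stable)
    selected = sorted(stable, key=lambda c: abs(c["dG_OOH"] - target))[:top_k]
    counts["selected"] = len(selected)
    return selected, counts

# Hypothetical predictions for three generated particles (field names are illustrative).
cands = [
    {"id": "A", "dG_OOH": 0.05, "e_form": -0.12, "diameter_nm": 3.1},
    {"id": "B", "dG_OOH": 0.35, "e_form": -0.20, "diameter_nm": 2.5},
    {"id": "C", "dG_OOH": -0.10, "e_form": 0.08, "diameter_nm": 4.0},
]
selected, counts = screen_nanoparticles(cands)
print([c["id"] for c in selected], counts)
```

Here B fails the activity window and C fails the placeholder stability criterion, so only A reaches the final shortlist.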

Table 2: Key Performance Metrics (Hypothetical Output)

| Workflow Stage | Input Count | Output Count | Key Metric | Computation Time |
|---|---|---|---|---|
| Generation | 5,000 seed structures | 10,000 candidates | Structural Validity: 92% | 48 GPU-hours |
| Property Prediction | 10,000 candidates | 1,500 candidates | Predicted Activity > Baseline: 15% | 2 GPU-hours |
| Stability Filter | 1,500 candidates | 50 candidates | Predicted Stable: ~3% | 0.5 CPU-hours |

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Computational Research Reagent Solutions

| Item/Category | Function in Workflow | Example Tools/Libraries |
|---|---|---|
| Structure Databases | Provides seed data for training generative and predictive models. | Materials Project, Catalysis-Hub, OCELOT, QM9 (for molecules) |
| Generative Model Frameworks | Implements VAE, GAN, and Diffusion model architectures for molecules/materials. | MATERIALS-GYM, GSchNet, DiffLinker, JAX/Flax, PyTorch |
| Property Prediction Engines | Fast, accurate surrogate models for catalytic properties. | MEGNet, ALIGNN, SchNet, CGCNN, Quantum ESPRESSO (DFT) |
| Representation Converters | Translates between different chemical structure formats (CIF, POSCAR, SMILES, Graph). | Pymatgen, ASE, RDKit, Open Babel |
| High-Throughput Screening Manager | Orchestrates the workflow, manages candidate queues, and records results. | AiiDA, FireWorks, custom Python pipelines |
| Active Learning Controller | Manages the feedback loop, deciding which candidates to add to the training set. | modAL, AMS, custom Bayesian optimization scripts |

This workflow architecture establishes a systematic, scalable approach for leveraging deep generative models in catalysis research. By tightly integrating conditional generation with robust, fast property predictors, the loop from in silico design to experimental validation is drastically shortened. The provided protocols and toolkit offer a practical starting point for research teams aiming to deploy these advanced AI techniques in the pursuit of next-generation catalysts.

Within the broader context of a thesis on deep generative models (VAEs, GANs, Diffusion) for catalyst research, this whitepaper presents a technical case study on Conditional Variational Autoencoders (C-VAEs). C-VAEs are uniquely positioned to address the inverse design challenge in materials science: generating novel catalyst structures with pre-specified target properties, such as band-gap for photocatalysis or adsorption energy for surface reactions. By conditioning the generation process on a continuous numerical range of a target property, these models enable a targeted search across the vast chemical space.

Theoretical Foundation of C-VAEs for Materials Generation

A standard VAE learns a compressed latent representation z of input data x (e.g., a molecule representation). A C-VAE modifies this architecture by conditioning both the encoder and decoder on an additional variable c, which represents the target property (e.g., band-gap = 2.5 eV). The model learns the conditional probability distribution p(x|z, c). The loss function is the conditional Evidence Lower Bound (ELBO):

L(θ, φ; x, c) = E_{q_φ(z|x,c)}[log p_θ(x|z,c)] - D_KL(q_φ(z|x,c) || p(z|c))

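where p(z|c) is typically taken to be a standard Gaussian N(0, I), independent of c. This regularizes the latent space and lets z and c be varied independently at generation time, so the space can be traversed with respect to c.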

Core Methodology & Experimental Protocol

Data Preparation and Representation

- Data Source: Publicly available computational databases (e.g., Materials Project, OQMD, CatHub) provide structure-property pairs. A typical dataset may contain 50,000+ inorganic crystals or molecular adsorbate-surface systems.

- Structure Representation: Common descriptors include:

- Crystal Graph: Atoms as nodes, bonds as edges, with atomic (Z, coordinates) and edge (distance, bond order) features.

- Sine Matrix: A rotation-invariant representation of periodic crystal structures.

- SMILES/String-based: For organic molecules or simplified representations.
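The sine matrix is a periodic extension of the Coulomb matrix: diagonal entries are 0.5·Z_i^2.4, and off-diagonal entries divide Z_i·Z_j by a distance-like term built from sin² of the fractional-coordinate differences, which makes the entries invariant to shifting an atom by a lattice vector. The sketch below is a minimal NumPy implementation under that definition, assuming lattice vectors are given as rows (Å) and coordinates as fractions; packages such as DScribe provide production implementations. The two-atom cubic cell is illustrative only.

```python
import numpy as np

def sine_matrix(atomic_numbers, frac_coords, lattice):
    """Sine matrix for a periodic cell; lattice rows are lattice vectors (Å), coords fractional."""
    Z = np.asarray(atomic_numbers, dtype=float)
    f = np.asarray(frac_coords, dtype=float)
    L = np.asarray(lattice, dtype=float)
    n = len(Z)
    M = np.empty((n, n))
    for i in range(n):
        for j in range(n):
            if i == j:
                M[i, j] = 0.5 * Z[i] ** 2.4
            else:
                s = np.sin(np.pi * (f[i] - f[j])) ** 2        # periodic in each fractional axis
                M[i, j] = Z[i] * Z[j] / np.linalg.norm(s @ L)  # map to Cartesian, take the norm
    return M

cubic = 4.0 * np.eye(3)                           # illustrative 4 Å cubic cell
M = sine_matrix([26, 8], [[0.0, 0.0, 0.0], [0.5, 0.5, 0.5]], cubic)   # Fe at origin, O at center
print(np.round(M, 2))
```

Like the Coulomb matrix, this representation depends on atom ordering, so models usually sort it or use its eigenvalue spectrum.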

C-VAE Architecture & Training Protocol

- Conditioning Mechanism: The target property c (a scalar) is passed through a feed-forward network to create a conditioning vector. This vector is concatenated with the latent vector z at the decoder input and, in some architectures, also with the encoder input.

- Encoder (q_φ(z|x, c)): Processes the input structure representation through graph convolutional networks (GCNs) or dense layers and outputs the parameters (μ, σ) of a Gaussian distribution in latent space.

- Latent Space Sampling: A latent vector z is sampled via the reparameterization trick, z = μ + σ ⊙ ε with ε ~ N(0, I), which keeps the sampling step differentiable with respect to μ and σ.

- Decoder (p_θ(x|z, c)): Takes the concatenated [z, c] vector and generates a structure representation (e.g., an atom-by-atom sequence or a grid of atom types).

- Training: The model is trained to reconstruct the input structure x while minimizing the KL divergence, which regularizes the latent space. The Adam optimizer is standard.
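The conditioning and sampling steps can be sketched in plain Python as follows. The conditioning network and its weights are placeholders (assumptions, not trained values), and ReLU is applied after every layer for simplicity. Without an autodiff framework the sketch only shows the data flow; the point of the reparameterization is that, in a framework such as PyTorch or JAX, gradients pass through μ and log σ² while the randomness is confined to ε.

```python
import math
import random


def condition_embedding(c, weights, biases):
    """Placeholder feed-forward conditioning network (dense layers + ReLU).

    weights[k] is a list of rows (out_dim x in_dim); the input is the scalar
    target property c wrapped in a one-element vector.
    """
    h = [c]
    for W, b in zip(weights, biases):
        h = [max(0.0, sum(w * x for w, x in zip(row, h)) + bk) for row, bk in zip(W, b)]
    return h


def reparameterize(mu, logvar, rng):
    """z = mu + sigma * eps with sigma = exp(0.5 * logvar), eps ~ N(0, I)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, logvar)]


def decoder_input(mu, logvar, c, weights, biases, rng):
    """Sample z and concatenate it with the condition embedding: [z, h(c)]."""
    z = reparameterize(mu, logvar, rng)
    return z + condition_embedding(c, weights, biases)
```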

Table 1: Representative Hyperparameters for a C-VAE for Crystal Generation

| Hyperparameter | Typical Value/Range | Description |
|---|---|---|
| Latent Dimension (dim_z) | 64 - 256 | Size of the continuous latent space. |
| Conditioning Network Layers | 2 - 3 | Dense layers that process the target property c. |
| Encoder/Decoder Type | GCN or CNN | For graph- or grid-based representations. |
| Learning Rate | 1e-4 - 5e-4 | For the Adam optimizer. |
| KL Divergence Weight (β) | 0.1 - 1.0 | Can be annealed during training. |
| Batch Size | 128 - 512 | Limited by GPU memory. |
| Training Epochs | 200 - 1000 | Until the reconstruction loss plateaus. |
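Table 1 notes that β can be annealed. One common option is a linear warm-up: start with a small KL weight so the model first learns to reconstruct, then ramp up to the final β. The warm-up length below is an illustrative assumption, not a recommended value.

```python
def kl_weight(epoch, beta_max=1.0, warmup_epochs=50, beta_start=0.0):
    """Linear KL annealing: ramp beta from beta_start to beta_max over the
    first warmup_epochs epochs, then hold it at beta_max."""
    if warmup_epochs <= 0 or epoch >= warmup_epochs:
        return beta_max
    return beta_start + (beta_max - beta_start) * epoch / warmup_epochs
```

Inside a training loop, the per-epoch loss would then be the reconstruction term plus `kl_weight(epoch, beta_max=...)` times the KL term.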

Targeted Generation & Validation Workflow

- Interpolation: Sample a latent point z and decode it while varying the condition c across a desired range (e.g., band gap from 1.5 to 3.0 eV).

- Property Prediction Validation: Generated structures are passed through a pre-trained surrogate model (e.g., a separate neural network) to predict their properties. This filters candidates before costly simulation.

- First-Principles Validation: Top candidates undergo Density Functional Theory (DFT) calculations to verify the target property and stability.
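The sweep-then-filter loop above can be sketched as two small functions. `decode` and `surrogate` stand in for a trained C-VAE decoder and a pre-trained property model; both are hypothetical callables, and the tolerance and shortlist size are user choices.

```python
def sweep_condition(decode, z, c_values):
    """Decode one latent point z under several target values c."""
    return [(c, decode(z, c)) for c in c_values]


def screen_candidates(candidates, surrogate, tolerance, top_k):
    """Keep candidates whose surrogate-predicted property lies within
    tolerance of the requested target; return the top_k closest as
    (error, target, structure) tuples for DFT follow-up."""
    scored = []
    for c_target, structure in candidates:
        error = abs(surrogate(structure) - c_target)
        if error <= tolerance:
            scored.append((error, c_target, structure))
    scored.sort(key=lambda item: item[0])  # smallest surrogate error first
    return scored[:top_k]
```

Ranking by surrogate error is only a proxy; the shortlist still needs first-principles confirmation.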

Diagram Title: C-VAE Workflow for Targeted Catalyst Generation

Results & Quantitative Analysis

Recent studies demonstrate the efficacy of C-VAEs. The following table summarizes key quantitative outcomes from recent literature.

Table 2: Reported Performance of C-VAEs in Materials Optimization

| Study (Year) | Target Property | Material Class | Success Rate* | DFT-Validated Novel Candidates | Key Metric Improvement |
|---|---|---|---|---|---|
| Antunes et al. (2023) | Band gap (1.0-3.5 eV) | Perovskites (ABX₃) | ~65% | 12 new stable perovskites | 90% of generated structures within ±0.3 eV of target. |
| Lee & Kim (2022) | CO₂ Adsorption Energy (-0.9 to -0.4 eV) | Single-Atom Alloys | ~40% | 8 promising alloy surfaces | Discovery rate 5x faster than random search. |
| Zhou et al. (2024) | OER Overpotential (<0.5 V) | Transition Metal Oxides | ~30% | 3 high-activity oxides | Identified a novel Co-Mn oxide with 0.41 V overpotential. |
| This Case Study | H* Adsorption Energy (~0.0 eV) | Bimetallic Nanoparticles | ~50% (simulated) | Data Pending | Successfully generated structures within ±0.1 eV of ideal. |

*Success Rate: Percentage of generated structures meeting the target property criteria upon surrogate model screening.
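The success-rate definition amounts to a simple count. A minimal sketch, assuming one surrogate prediction per generated structure and a symmetric tolerance window around each target:

```python
def success_rate(predicted, targets, tolerance):
    """Percentage of generated structures whose predicted property lies
    within +/- tolerance of its target value."""
    if len(predicted) != len(targets):
        raise ValueError("need one target per prediction")
    if not predicted:
        return 0.0
    hits = sum(abs(p - t) <= tolerance for p, t in zip(predicted, targets))
    return 100.0 * hits / len(predicted)
```

For example, with an H* adsorption target of 0.0 eV and a ±0.1 eV window, predictions of 0.05, -0.08, 0.2 and 0.0 eV give a success rate of 75%.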

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Tools for Implementing C-VAEs in Catalyst Research

| Item | Function in Experiment | Example / Note |
|---|---|---|
| Structure-Property Datasets | Provides training pairs (x, c). | Materials Project API, CatHub, QM9 (for molecules). |
| Graph Neural Network Library | Builds encoder/decoder for graph-based representations. | PyTorch Geometric (PyG), DGL. |
| Differentiable Crystal Representation | Enables gradient-based learning on crystal structures. | Matformer, Crystal Graph CNN frameworks. |
| Surrogate Model | Fast property prediction for filtering generated structures. | A Random Forest or Gradient Boosting model pre-trained on the same data. |
| DFT Software | Ground-truth validation of stability and target property. | VASP, Quantum ESPRESSO, GPAW. |
| High-Throughput Computing (HTC) | Manages thousands of DFT validation jobs. | FireWorks, AiiDA workflows. |
| Latent Space Visualization | Analyzes structure-property relationships in z. | t-SNE or UMAP plots colored by property c. |

Diagram Title: Conditional VAE Architecture for Materials Generation

Conditional VAEs provide a powerful, directed framework for the inverse design of catalysts, directly addressing the need for materials with specific band-gap or adsorption-energy properties. Integrating C-VAEs into a robust pipeline, from graph-based representation and model training to surrogate filtering and DFT validation, enables an efficient exploration of chemical space. As part of a comprehensive generative-model toolkit, this approach can substantially shorten the discovery cycle for next-generation catalysts in energy and sustainability applications.

Within the broader thesis on A Guide to Deep Generative Models (VAEs, GANs, Diffusion) for Catalysts Research, this case study focuses on the application of Generative Adversarial Networks (GANs). GANs offer a compelling approach for the de novo design of catalytic materials, such as metal-organic frameworks (MOFs), covalent organic frameworks (COFs), and multi-metallic alloys, by learning complex, high-dimensional distributions of known materials to generate novel, plausible candidates.

Core GAN Architecture for Material Generation

The standard GAN framework comprises a Generator (G) and a Discriminator (D) engaged in an adversarial min-max game. For crystalline porous frameworks or alloys, the generator typically creates a numerical representation of the material (e.g., a graph, voxel grid, or descriptor vector), which the discriminator evaluates against a database of real materials.
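For reference, the standard min-max objective is min_G max_D E_x[log D(x)] + E_z[log(1 - D(G(z)))]. The sketch below computes the corresponding batch losses from discriminator probabilities, using the non-saturating generator loss -log D(G(z)), which is common in practice because it gives stronger gradients early in training. Networks and sampling are omitted; only the loss arithmetic is shown.

```python
import math


def discriminator_loss(d_real, d_fake, eps=1e-12):
    """-(mean log D(x) + mean log(1 - D(G(z)))): the quantity D minimises.
    d_real, d_fake: discriminator probabilities in (0, 1)."""
    real_term = sum(math.log(p + eps) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p + eps) for p in d_fake) / len(d_fake)
    return -(real_term + fake_term)


def generator_loss(d_fake, eps=1e-12):
    """Non-saturating generator loss: -mean log D(G(z))."""
    return -sum(math.log(p + eps) for p in d_fake) / len(d_fake)
```

At the theoretical equilibrium, where D outputs 0.5 everywhere, the discriminator loss equals 2 ln 2 ≈ 1.386.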

Key Adapted Architectures:

- Conditional GAN (cGAN): Generates materials conditioned on target properties (e.g., pore volume, adsorption energy, catalytic activity).

- Wasserstein GAN with Gradient Penalty (WGAN-GP): Enhances training stability for high-dimensional, sparse material data.

- Graph-based GAN: Directly generates material structures as graphs where nodes are atoms/functional groups and edges are bonds.
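For graph-based generators, the raw output is often a matrix of edge probabilities that must be turned into a valid graph before any chemistry check. The helper below is a hypothetical post-processing step, not a specific published method: it symmetrizes the scores, removes self-loops, applies a threshold, and tests connectivity.

```python
def adjacency_from_scores(scores, threshold=0.5):
    """Convert an N x N matrix of generator edge probabilities into the
    0/1 adjacency matrix of an undirected simple graph."""
    n = len(scores)
    adj = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):  # upper triangle only: no self-loops
            p = 0.5 * (scores[i][j] + scores[j][i])  # symmetrise
            if p >= threshold:
                adj[i][j] = adj[j][i] = 1
    return adj


def is_connected(adj):
    """Depth-first search from node 0; True if every node is reachable."""
    n = len(adj)
    if n == 0:
        return True
    seen, stack = {0}, [0]
    while stack:
        i = stack.pop()
        for j, bonded in enumerate(adj[i]):
            if bonded and j not in seen:
                seen.add(j)
                stack.append(j)
    return len(seen) == n
```

Sampling edges from the probabilities instead of thresholding is an equally common choice; disconnected graphs can be rejected or repaired depending on the application.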

Experimental Protocol: A Standardized Workflow

Data Curation & Representation

Objective: Assemble and featurize a dataset of known porous frameworks or alloys.

- Source Data: Extract crystal structures from databases (e.g., CoRE MOF, ICSD, OQMD, AFLOW).

- Representation: Choose a suitable featurization:

- Voxel Grid: 3D grid encoding atom types/electron density.

- Graph: G = (V, E), where V are atom features (type, charge) and E are bond features (length, order).

- Descriptor Vector: Fixed-length vector of geometric/chemical descriptors (e.g., Mendeleev fingerprints, Voronoi tessellation features).

- Preprocessing: Normalize features, handle missing data, and split dataset (80/10/10 for train/validation/test).
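The preprocessing step can be sketched with the standard library alone. The split fractions follow the 80/10/10 convention above and the seed is arbitrary; the normalization statistics are fitted on the training split only, so that validation and test data do not leak into preprocessing.

```python
import random
import statistics


def split_indices(n, fractions=(0.8, 0.1, 0.1), seed=0):
    """Shuffle indices 0..n-1 and split them into train/validation/test."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_train = int(fractions[0] * n)
    n_val = int(fractions[1] * n)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


def fit_standardizer(rows):
    """Per-feature mean and standard deviation, computed on training rows only."""
    cols = list(zip(*rows))
    means = [statistics.fmean(c) for c in cols]
    stds = [statistics.pstdev(c) or 1.0 for c in cols]  # guard constant features
    return means, stds


def standardize(rows, means, stds):
    """Apply (x - mean) / std feature-wise."""
    return [[(x - m) / s for x, m, s in zip(r, means, stds)] for r in rows]
```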

Model Training Protocol

Objective: Train a GAN to generate valid material representations.

- Architecture Initialization: Implement a cGAN with WGAN-GP loss.

- Generator: A fully connected or graph convolutional network that maps a latent vector z and a condition vector c to a material representation.

- Discriminator/Critic: A network that takes a material representation (and, in a cGAN, the condition c) and outputs an unbounded real-valued score. With WGAN-GP, the gap between its mean scores on real and generated samples serves as an estimate of the Wasserstein distance.

- Training Loop: For N epochs:

  - a. Sample a real data batch X, latent noise z, and conditions c.

  - b. Generate a fake batch: X_fake = G(z, c).

  - c. Update the critic D to minimize D(X_fake) - D(X) + λ·(||∇_X̂ D(X̂)||₂ - 1)² (batch means), where X̂ is a random interpolate between real and generated samples. Equivalently, D maximizes D(X) - D(X_fake) minus the gradient penalty. λ = 10 is the usual default, and several critic updates (commonly 5) are made per generator update.

  - d. Update the generator G to maximize D(G(z, c)), i.e., minimize -D(G(z, c)).

- Validation: Monitor sample-quality metrics (e.g., Inception Score, Fréchet Distance on learned descriptors) and periodically generate sample structures for visual inspection.
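The critic and generator objectives from the training loop can be sketched as below. To stay dependency-free, the gradient in the penalty term is approximated by central finite differences; real implementations compute it with automatic differentiation (for example `torch.autograd.grad` with `create_graph=True`). The critic is any callable on a flat feature vector; for a cGAN, appending the condition c to the input before calling it is an assumption of this sketch.

```python
import math
import random


def numerical_grad(f, x, h=1e-5):
    """Central-difference approximation of the gradient of scalar f at x."""
    grad = []
    for k in range(len(x)):
        xp, xm = list(x), list(x)
        xp[k] += h
        xm[k] -= h
        grad.append((f(xp) - f(xm)) / (2.0 * h))
    return grad


def critic_loss_wgan_gp(critic, real_batch, fake_batch, lam=10.0, rng=None):
    """Critic loss to minimise:
    mean D(x_fake) - mean D(x_real) + lam * mean (||grad D(x_hat)||_2 - 1)^2,
    where x_hat is a random point on the segment between paired real/fake samples."""
    if rng is None:
        rng = random.Random(0)
    w_term = (sum(map(critic, fake_batch)) / len(fake_batch)
              - sum(map(critic, real_batch)) / len(real_batch))
    penalties = []
    for x_real, x_fake in zip(real_batch, fake_batch):
        eps = rng.random()  # interpolation weight ~ U[0, 1)
        x_hat = [eps * a + (1.0 - eps) * b for a, b in zip(x_real, x_fake)]
        g = numerical_grad(critic, x_hat)
        penalties.append((math.sqrt(sum(v * v for v in g)) - 1.0) ** 2)
    return w_term + lam * sum(penalties) / len(penalties)


def generator_loss_wgan(critic, fake_batch):
    """Generator loss to minimise: -mean D(G(z, c))."""
    return -sum(map(critic, fake_batch)) / len(fake_batch)
```

A linear critic with gradient norm 5 is heavily penalized, while one with unit gradient norm incurs essentially no penalty; this is the soft 1-Lipschitz constraint that WGAN-GP enforces.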

Candidate Screening & Validation

Objective: Filter and evaluate generated candidates.

- Structure Reconstruction: Convert generated representations (e.g., graphs) to 3D atomistic models using tools like RDKit or pymatgen.

- Geometric Validation: Perform energy minimization and check for unrealistic bonds/angles using molecular mechanics (UFF, DREIDING force fields).

- Property Prediction: Use pre-trained surrogate models (e.g., Graph Neural Networks) to predict key properties (surface area, band gap, adsorption energy).

- Downstream Selection: Filter candidates meeting target property thresholds (see Table 1).

- High-Fidelity Verification: Select top candidates for DFT calculation (e.g., VASP, Quantum ESPRESSO) to confirm stability and activity.
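A crude version of the geometric check can be run before any force-field relaxation: reject structures in which two atoms sit much closer than their covalent radii allow. The radii below are approximate single-bond covalent radii for a few light elements, the 0.7 scale factor is an illustrative assumption, and the check ignores periodic images, so it suits isolated clusters or fragments rather than full periodic cells.

```python
import itertools
import math

# Approximate covalent radii in Angstrom (Cordero et al., 2008 values);
# extend this table for the elements present in your dataset.
COVALENT_RADII = {"H": 0.31, "C": 0.76, "N": 0.71, "O": 0.66}


def has_atom_clashes(symbols, coords, radii=COVALENT_RADII, scale=0.7):
    """True if any atom pair is closer than scale * (r_i + r_j).
    Non-periodic: Cartesian distances only, no minimum-image wrapping."""
    for i, j in itertools.combinations(range(len(symbols)), 2):
        d = math.dist(coords[i], coords[j])
        if d < scale * (radii[symbols[i]] + radii[symbols[j]]):
            return True
    return False
```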

Table 1: Representative Performance Metrics from Recent Studies (2023-2024)

| Study Focus | Model Type | Dataset Size | Success Rate* (%) | Top Candidates' Performance (Predicted) |
|---|---|---|---|---|
| MOFs for CO₂ Capture (cGAN) | cGAN (WGAN-GP) | ~10,000 | 34.2 | CO₂ Uptake: 12-18 mmol/g (298 K, 1 bar) |
| HEAs for HER (GraphGAN) | Graph Convolutional GAN | ~5,000 | 21.7 | ΔG_H*: -0.08 to 0.12 eV |
| COFs for Photocatalysis (cGAN) | Conditional DCGAN | ~2,500 | 28.9 | Band Gap: 1.8-2.2 eV; Surface Area: 1800-2200 m²/g |
| Bimetallic NPs (Voxel-GAN) | 3D Convolutional GAN | ~8,000 | 15.5 | Activity (ORR): 2-3x over Pt/C |

*Success Rate: Percentage of generated structures passing geometric validation and meeting target property criteria.

Table 2: Approximate Computational Cost for 10,000 Generated Structures

| Step | Approx. Wall Time (GPU hours unless noted) | Primary Software/Tool |
|---|---|---|
| GAN Training | 40-120 | PyTorch, TensorFlow |
| Structure Reconstruction | 2-10 | pymatgen, ASE, RDKit |
| Geometric Relaxation | 20-60 | LAMMPS, RASPA (UFF/DREIDING) |
| DFT Validation (per candidate) | 50-200 (CPU core-hours) | VASP, Quantum ESPRESSO |
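Because the DFT row in Table 2 is per candidate, the validation budget scales with the size of the shortlist, whereas the generation steps are a roughly fixed cost. The small helper below simply adds up the ranges from the table; the shortlist size is an input you choose.

```python
def pipeline_cost(n_dft_candidates,
                  gan_training=(40, 120), reconstruction=(2, 10),
                  relaxation=(20, 60), dft_per_candidate=(50, 200)):
    """Add up the (low, high) ranges from Table 2.

    Returns ((low, high) hours for training + reconstruction + relaxation,
             (low, high) DFT CPU core-hours for the shortlisted candidates).
    """
    fixed = tuple(g + r + x for g, r, x in zip(gan_training, reconstruction, relaxation))
    dft = tuple(n_dft_candidates * h for h in dft_per_candidate)
    return fixed, dft
```

With 20 shortlisted candidates, for instance, the table's ranges give 62-190 hours for training, reconstruction and relaxation, plus 1,000-4,000 CPU core-hours of DFT.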

Visualization of Workflows

Title: End-to-End GAN-Driven Catalyst Discovery Workflow

Title: Conditional GAN Architecture for Targeted Generation

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Computational Tools & Databases

| Item Name (Software/Database) | Category | Primary Function |
|---|---|---|
| PyTorch/TensorFlow | Deep Learning Framework | Build, train, and deploy GAN models with GPU acceleration. |
| pymatgen | Materials Analysis | Convert between file formats, featurize crystals, and analyze structures. |
| RDKit | Cheminformatics | Handle molecular graphs, SMILES, and basic force field operations for MOFs/COFs. |
| ASE | Atomistic Simulation | Set up, manipulate, and run calculations on atomic structures. |
| LAMMPS/RASPA | Molecular Simulation | Perform geometric relaxation and molecular adsorption simulations (UFF/DREIDING). |
| VASP/Quantum ESPRESSO | Electronic Structure | Perform DFT calculations for final validation of stability and catalytic properties. |
| CoRE MOF Database | Materials Database | Curated collection of MOF structures for training and benchmarking. |
| OQMD/AFLOW | Materials Database | Extensive databases of inorganic crystals and alloys, including computed properties. |
| MatDeepLearn | Materials ML Library | Pre-built GAN architectures and featurizers tailored for materials science. |

This case study is a core chapter within a broader technical thesis, A Guide to Deep Generative Models (VAEs, GANs, Diffusion) for Catalysts Research. While Variational Autoencoders (VAEs) enable latent space exploration and Generative Adversarial Networks (GANs) produce novel structures, diffusion models have emerged as a leading framework for the high-fidelity inverse design of catalytic active sites. This chapter details their application to generating atomically precise, thermodynamically stable, and catalytically competent active sites by learning from the probability distributions of known catalyst structures and properties.

Foundational Principles & Model Architecture

Denoising Diffusion Probabilistic Models (DDPMs) and Score-Based Generative Models are trained on datasets of characterized catalytic structures (e.g., from the Materials Project, OC20). The forward process incrementally adds Gaussian noise to a known active site structure (defined by atomic coordinates, types, and periodic boundaries). The reverse process is a learned denoising trajectory that, conditioned on target catalytic properties (e.g., adsorption energy, activation barrier), iteratively recovers a plausible atomic structure from noise.
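The forward process has a closed form: with a variance schedule β_t and ᾱ_t = ∏_{s≤t}(1 - β_s), a noisy sample is x_t = √ᾱ_t · x_0 + √(1 - ᾱ_t) · ε with ε ~ N(0, I). The sketch below uses the linear schedule from the original DDPM paper (β from 1e-4 to 0.02 over T = 1000 steps) and treats the structure as an unconstrained real vector; periodic fractional coordinates and discrete atom types need additional handling that is not shown.

```python
import math
import random


def linear_beta_schedule(T, beta_1=1e-4, beta_T=0.02):
    """Linearly spaced variances beta_1 ... beta_T (defaults from Ho et al., 2020).
    Index 0 of the returned list corresponds to step t = 1."""
    if T == 1:
        return [beta_1]
    return [beta_1 + (beta_T - beta_1) * k / (T - 1) for k in range(T)]


def alpha_bars(betas):
    """Cumulative products alpha_bar_t = prod_{s <= t} (1 - beta_s)."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out


def q_sample(x0, k, abar, rng):
    """Draw x_t ~ q(x_t | x_0) in closed form for step index k:
    x_t = sqrt(abar) * x0 + sqrt(1 - abar) * eps, eps ~ N(0, I).
    Returns (x_t, eps); a denoising network is typically trained to predict eps."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    a = abar[k]
    x_t = [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(x0, eps)]
    return x_t, eps
```

By the final step ᾱ_T is close to zero, so x_T is almost pure Gaussian noise; the learned reverse process starts from there.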

Conditioning is commonly implemented via cross-attention layers, in which an embedding of the target property (e.g., CO adsorption energy = -0.8 eV) guides each denoising step. This allows the generative process to be steered toward user-specified performance metrics.
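A single-head, scaled dot-product cross-attention step can be sketched as below, assuming the conditioning information is embedded as one or more tokens (for example, one per target property). The learned query, key and value projections of a real model are omitted, so the vectors are used directly; this illustrates the mechanism only.

```python
import math


def softmax(xs):
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(query, keys, values):
    """Single-head scaled dot-product cross-attention.

    query: feature vector of one atom in the noisy structure being denoised.
    keys/values: one vector per conditioning token (e.g. embedded targets).
    Returns (attention-weighted sum of values, attention weights).
    """
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    out = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]
    return out, weights
```

With a single conditioning token the weight is trivially 1 and the atom simply receives that token's value vector; attention becomes informative when several property tokens compete.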

Diagram 1: Conditional Diffusion Workflow for Active Site Design

Quantitative Performance Comparison of Generative Models

Recent benchmark studies on generating transition-metal oxide surfaces and single-atom alloy sites demonstrate the advantages of diffusion models.

Table 1: Benchmarking Generative Models for Inverse Catalyst Design

| Model Type | Success Rate* (%) | Structural Validity (%) | Property Targeting MAE (eV) | Diversity |
|---|---|---|---|---|
| VAE (Conditional) | 42.5 | 85.3 | 0.23 | 0.71 |