Harnessing Partial Least Squares (PLS) Regression for Accurate QSAR Modeling in Catalyst Activity Prediction: A Comprehensive Guide for Researchers


Abstract

This article provides a comprehensive exploration of Partial Least Squares (PLS) regression within Quantitative Structure-Activity Relationship (QSAR) modeling, specifically for predicting catalyst activity. Tailored for researchers, scientists, and drug development professionals, we begin by establishing the fundamental connection between molecular descriptors and catalytic performance. We then detail the methodological workflow for constructing robust PLS models, from descriptor calculation and data preprocessing to component selection and model training. The guide further addresses critical troubleshooting and optimization techniques to enhance model performance and interpretability. Finally, we examine rigorous validation protocols and comparative analyses with other machine learning methods, equipping practitioners with the knowledge to develop reliable, predictive models that accelerate catalyst discovery and optimization in biomedical and industrial applications.

From Molecular Structures to Catalytic Performance: The Foundational Role of PLS in QSAR

Application Notes: QSAR-PLS for Catalytic Activity Prediction

Quantitative Structure-Activity Relationship (QSAR) modeling, particularly using Partial Least Squares (PLS) regression, is a pivotal computational method for the rational design and discovery of novel catalysts. Within the context of advanced thesis research, the application focuses on correlating molecular descriptors of catalyst structures with their experimentally determined activity metrics (e.g., turnover frequency, yield, selectivity). PLS is favored for its ability to handle collinear descriptors and datasets where the number of variables exceeds the number of observations.

Core Application Principle: A predictive model is built by projecting the predictor variables (catalyst descriptors, X) and the response variables (activity data, Y) onto a new latent variable space. The projection is chosen to maximize the covariance between the molecular structure and the catalytic performance.

Key Advantages in Catalyst Design:

- Accelerated Screening: Enables virtual screening of large catalyst libraries, prioritizing synthesis and testing.

- Mechanistic Insight: Identifies which structural features (steric, electronic, topological) most significantly influence activity.

- Property Optimization: Guides the synthetic modification of catalyst scaffolds to enhance multiple performance parameters simultaneously.

Experimental Protocols

Protocol 1: Dataset Curation and Descriptor Calculation

Objective: To assemble a consistent, high-quality dataset for PLS model development.

Materials: (See "Scientist's Toolkit" below)

- Data Source: Compile catalytic activity data (e.g., log(TOF)) from published literature or in-house experiments for a homogeneous series of catalysts.

- Structure Standardization: Use a cheminformatics toolkit (e.g., RDKit) to generate 3D molecular structures from catalyst SMILES strings. Perform geometry optimization using a semi-empirical method (e.g., PM6).

- Descriptor Calculation: Compute a suite of molecular descriptors:

- Electronic: HOMO/LUMO energies, Mulliken charges, dipole moment.

- Steric: Sterimol parameters (B1, B5, L), molar volume.

- Topological: Molecular connectivity indices, Wiener index.

- Data Curation: Remove duplicates and compounds with ambiguous activity data. Log-transform activity values if necessary to reduce skew and bring the distribution closer to normal.

Protocol 2: PLS Model Development and Validation

Objective: To construct, validate, and interpret a robust QSAR-PLS model.

Methodology:

- Data Division: Randomly split the dataset into a training set (70-80%) for model building and a test set (20-30%) for external validation.

- Descriptor Preprocessing: Autoscale (mean-centering and unit variance scaling) all descriptor values in the training set. Apply the same scaling parameters to the test set.

- PLS Regression:

- Use the NIPALS algorithm to perform PLS regression on the training set.

- Determine the optimal number of latent variables (LVs) via 5- or 10-fold cross-validation on the training set, minimizing the cross-validated prediction error (e.g., RMSE_CV).

- Model Validation:

- Internal Validation: Report Q² (cross-validated R²), RMSE_CV, and R² for the training set.

- External Validation: Predict the test set using the model. Report R²_test, RMSE_test, and the slope of the experimental vs. predicted plot.

- Interpretation: Analyze the Variable Importance in Projection (VIP) scores. Descriptors with VIP > 1.0 are considered most influential. Examine the PLS loadings plot to understand the contribution of each original descriptor to the latent variables.
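The data division and preprocessing steps of this protocol can be sketched as follows. This is a minimal illustration with NumPy on synthetic data; the function name `split_and_autoscale` and its parameters are assumptions made for the example, not part of any named package.

```python
import numpy as np

def split_and_autoscale(X, y, test_fraction=0.25, seed=0):
    """Random split, then autoscale X with training-set statistics only."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(len(y))
    n_test = max(1, int(round(test_fraction * len(y))))
    test_idx, train_idx = idx[:n_test], idx[n_test:]

    # Scaling parameters come from the training set alone.
    mean = X[train_idx].mean(axis=0)
    std = X[train_idx].std(axis=0, ddof=1)
    std[std == 0] = 1.0  # guard against constant descriptors

    X_train = (X[train_idx] - mean) / std
    X_test = (X[test_idx] - mean) / std  # same parameters, no refitting
    return X_train, X_test, y[train_idx], y[test_idx], mean, std

# Synthetic stand-in for a descriptor matrix (40 catalysts, 6 descriptors).
rng = np.random.default_rng(1)
X = rng.normal(loc=5.0, scale=2.0, size=(40, 6))
y = rng.normal(size=40)

X_train, X_test, y_train, y_test, mean, std = split_and_autoscale(X, y)
print(X_train.shape, X_test.shape)
```

Keeping `mean` and `std` alongside the model matters because the same parameters are needed later when new candidates are predicted.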

Protocol 3: Prospective Catalyst Prediction

Objective: To use the validated model for predicting the activity of novel, unsynthesized catalyst candidates.

Methodology:

- Design a virtual library of candidate catalysts based on core scaffold modifications.

- Calculate the same set of molecular descriptors for each virtual candidate.

- Apply the pre-processing scaling parameters (from Protocol 2) to these new descriptor values.

- Use the finalized PLS model to predict the catalytic activity for each candidate.

- Rank candidates by predicted activity and select the top tier for synthetic validation.
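As a rough sketch of the last three steps, the snippet below applies stored scaling parameters and a regression coefficient vector to new descriptor rows and ranks the candidates. All numbers, candidate names, and the helper `rank_candidates` are hypothetical; in practice the coefficients would come from the fitted PLS model.

```python
import numpy as np

def rank_candidates(names, X_new, mean, std, coef, y_mean):
    """Scale new descriptors with training parameters, predict, and rank."""
    X_scaled = (np.asarray(X_new, dtype=float) - mean) / std
    y_pred = X_scaled @ coef + y_mean
    order = np.argsort(-y_pred)  # highest predicted activity first
    return [(names[i], float(y_pred[i])) for i in order]

# Hypothetical training-set parameters for two descriptors.
mean = np.array([30.0, -1.5])
std = np.array([5.0, 0.5])
coef = np.array([0.4, -0.2])   # coefficients on the autoscaled descriptors
y_mean = 2.0                   # mean of the training activities, e.g. log(TOF)

names = ["cand_A", "cand_B", "cand_C"]
X_new = [[35.0, -1.5], [30.0, -2.0], [25.0, -1.0]]

ranking = rank_candidates(names, X_new, mean, std, coef, y_mean)
for name, pred in ranking:
    print(f"{name}: predicted activity {pred:.2f}")
```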

Data Presentation

Table 1: Representative PLS Model Performance Metrics for Pd-Catalyzed Cross-Coupling Reactions

| Model ID | # Catalysts | # Descriptors | # Latent Vars | R² (Training) | Q² (CV) | R² (Test) | RMSE (Test) |

|---|---|---|---|---|---|---|---|

| PLS_CC01 | 45 | 15 | 3 | 0.92 | 0.83 | 0.85 | 0.28 |

| PLS_CC02 | 38 | 12 | 2 | 0.88 | 0.79 | 0.80 | 0.35 |

| PLS_ASYMM | 52 | 18 | 4 | 0.95 | 0.87 | 0.89 | 0.22 |

Table 2: Key Molecular Descriptors and VIP Scores from Model PLS_CC01

| Descriptor Category | Descriptor Name | Interpretation | VIP Score |

|---|---|---|---|

| Electronic | LUMO Energy | Electron affinity of the catalyst | 1.45 |

| Steric | B5 (Max Sterimol) | Largest ligand width | 1.82 |

| Steric | % Vbur (Metal) | Buried volume around metal center | 1.78 |

| Electronic | Natural Charge (Pd) | Charge on palladium atom | 1.21 |

| Topological | Wiener Index | Molecular branching complexity | 0.98 |

Visualizations

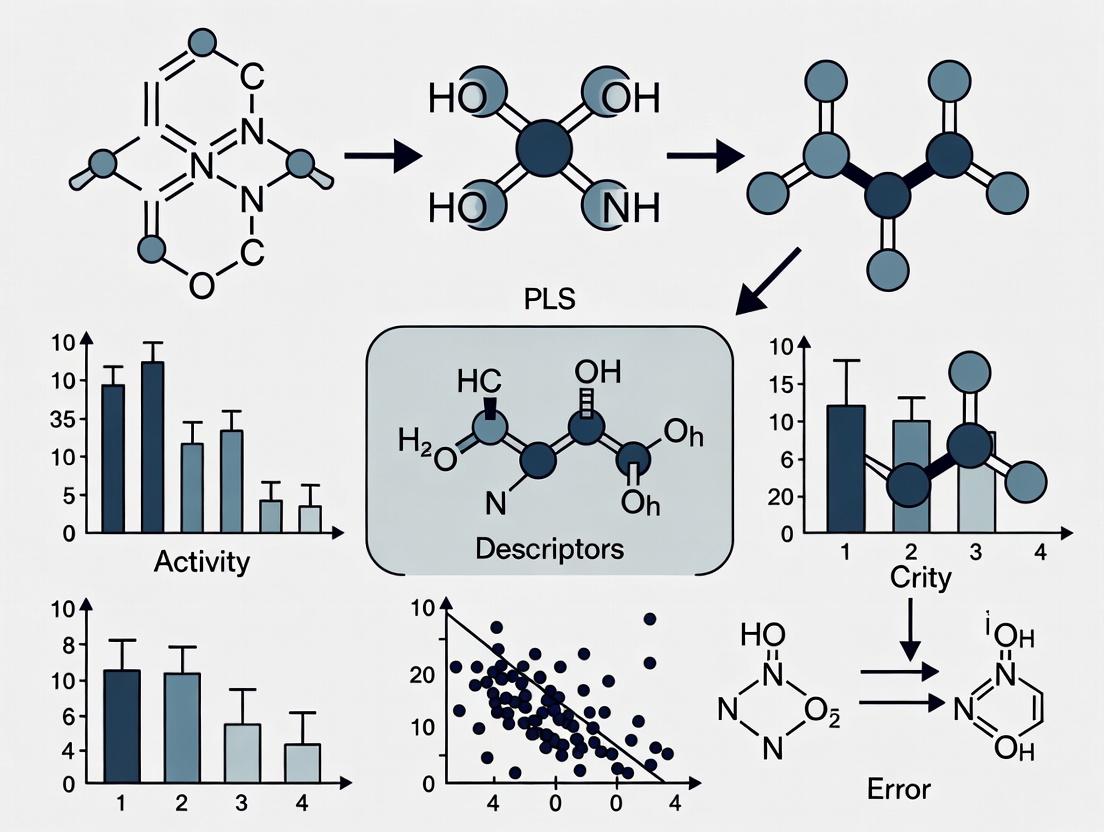

QSAR-PLS Modeling Workflow for Catalysts

PLS Regression Core Concept

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for QSAR-PLS Catalyst Studies

| Item/Category | Function & Relevance in Protocol |

|---|---|

| Cheminformatics Suite (RDKit, OpenBabel) | Open-source libraries for molecule manipulation, descriptor calculation, and fingerprint generation. Core to Protocol 1. |

| Quantum Chemistry Software (Gaussian, ORCA, xTB) | Calculates accurate electronic structure descriptors (HOMO/LUMO, charges) from optimized 3D geometries. Essential for Protocol 1. |

| Statistical/PLS Software (SIMCA, R pls, Python scikit-learn) | Provides algorithms for PLS regression, cross-validation, and calculation of VIP scores. Central to Protocol 2. |

| Curated Catalysis Database (CAS, Reaxys) | Source for literature-derived catalytic activity data to build initial datasets. Used in Protocol 1. |

| Molecular Modeling & Visualization (Avogadro, PyMOL) | For constructing, visualizing, and preparing 3D catalyst structures prior to computation. Supports Protocol 1. |

| Standardized Activity Metric (e.g., log(TOF), %ee, Yield) | A consistent, quantitative measure of catalyst performance to serve as the dependent variable (Y) in the model. |

Within Quantitative Structure-Activity Relationship (QSAR) modeling for catalyst prediction, particularly using Partial Least Squares (PLS) regression, the precise definition of catalyst "activity" is foundational. PLS models correlate molecular descriptors with experimental endpoints, making the choice of metric critical for model relevance and predictive power. This Application Note details key catalytic metrics and standardized protocols for their measurement, framing them as essential inputs for robust QSAR-PLS research in catalyst design.

Key Quantitative Metrics for Catalyst Activity

Catalyst performance is multi-faceted. The following table summarizes the core quantitative metrics used to define activity for QSAR model development.

Table 1: Core Metrics for Defining Catalyst Activity

| Metric | Formula / Definition | Typical Unit | Relevance to QSAR-PLS Modeling |

|---|---|---|---|

| Turnover Frequency (TOF) | (Moles of product) / (Moles of catalyst * Time) | s⁻¹, h⁻¹ | Primary activity endpoint; directly relates to the intrinsic activity of the catalytic site. |

| Turnover Number (TON) | (Moles of product) / (Moles of catalyst) | Dimensionless | Describes total productivity before deactivation; critical for stability correlation. |

| Conversion (%) | (Moles of reactant consumed) / (Initial moles of reactant) * 100 | % | Standard reaction progress metric; often used as a secondary or conditional endpoint. |

| Selectivity (%) | (Moles of desired product) / (Moles of reactant converted) * 100 | % | Key performance indicator; can be modeled as a separate PLS Y-variable. |

| Activation Energy (Eₐ) | Determined from Arrhenius plot (ln(k) vs. 1/T) | kJ mol⁻¹ | Mechanistic descriptor; a valuable higher-level activity parameter for QSAR. |

| Catalyst Stability (Half-life, t₁/₂) | Time for activity (e.g., TOF) to decrease to 50% of initial value | h, min | Deactivation metric; often a target for predictive model optimization. |
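To illustrate the Arrhenius entry in the table, the sketch below recovers Eₐ from the slope of ln(k) versus 1/T, since ln(k) = ln(A) − Eₐ/(RT). The rate constants are synthetic, generated from an assumed Eₐ of 50 kJ mol⁻¹, and the function name is illustrative.

```python
import numpy as np

R_GAS = 8.314462618  # gas constant, J mol^-1 K^-1

def activation_energy_kj(temps_K, rate_constants):
    """Fit ln(k) vs 1/T; the slope equals -Ea/R."""
    inv_T = 1.0 / np.asarray(temps_K, dtype=float)
    ln_k = np.log(np.asarray(rate_constants, dtype=float))
    slope, intercept = np.polyfit(inv_T, ln_k, 1)
    ea_kj = float(-slope * R_GAS / 1000.0)
    prefactor = float(np.exp(intercept))
    return ea_kj, prefactor

# Synthetic rate constants from an assumed Ea = 50 kJ/mol and A = 1e6 s^-1.
temps = np.array([298.0, 308.0, 318.0, 328.0])
k_syn = 1.0e6 * np.exp(-50_000.0 / (R_GAS * temps))

ea_kj, prefactor = activation_energy_kj(temps, k_syn)
print(f"Ea = {ea_kj:.2f} kJ/mol")
```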

Detailed Experimental Protocols for Endpoint Determination

Protocol 2.1: Kinetic Analysis for TOF/TON Determination (Exemplar: Hydrogenation Catalyst)

Objective: To measure initial TOF and final TON for a homogeneous hydrogenation catalyst under standardized conditions.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Reactor Setup: In a nitrogen glovebox, charge a dry, stirred Parr reactor with the substrate (e.g., 10.0 mmol styrene) and internal standard (e.g., n-dodecane, 1.0 mmol).

- Catalyst Introduction: Add a precise amount of catalyst stock solution (targeting 0.01 mmol catalyst) using a gas-tight syringe.

- Pressurization & Initiation: Seal the reactor, remove from glovebox, and purge 3x with H₂ (50 psi). Pressurize to the target H₂ pressure (e.g., 30 psi). Start stirring (1200 rpm) and data logging—this marks time zero.

- Kinetic Sampling: At regular intervals (e.g., 0, 30, 60, 120, 300, 600 s), withdraw a small aliquot (~0.1 mL) via the sample loop into a pre-cooled vial, immediately quenching via exposure to air or a quenching agent.

- Analysis: Quantify substrate and product concentrations in each aliquot via GC-FID using the internal standard method.

- Calculation:

- Plot moles of product vs. time.

- TOF: Calculate the slope of the initial linear region (first 10-15% conversion) divided by the moles of catalyst. Report as mol product (mol catalyst)⁻¹ s⁻¹.

- TON: Calculate total moles of product at reaction completion (or after a fixed time) divided by moles of catalyst.
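The calculation step can be sketched in plain Python as below. The kinetic data are made up to match the example loadings in the procedure (10.0 mmol substrate, 0.01 mmol catalyst), and conversion is approximated as product/substrate, which assumes 1:1 stoichiometry and no side products.

```python
def initial_rate_tof(times_s, product_mol, catalyst_mol, substrate_mol,
                     max_conversion=0.15):
    """Initial TOF (s^-1): least-squares slope over the low-conversion region."""
    # Keep only points inside the initial region (assumed 1:1 stoichiometry).
    points = [(t, n) for t, n in zip(times_s, product_mol)
              if n / substrate_mol <= max_conversion]
    if len(points) < 2:
        raise ValueError("need at least two points in the initial region")
    t_mean = sum(t for t, _ in points) / len(points)
    n_mean = sum(n for _, n in points) / len(points)
    sxy = sum((t - t_mean) * (n - n_mean) for t, n in points)
    sxx = sum((t - t_mean) ** 2 for t, _ in points)
    return (sxy / sxx) / catalyst_mol

def turnover_number(final_product_mol, catalyst_mol):
    """TON: total moles of product per mole of catalyst."""
    return final_product_mol / catalyst_mol

# Made-up kinetic trace: time in s, product in mol.
times_s = [0, 30, 60, 120, 300, 600]
product_mol = [0.0, 3.0e-4, 6.0e-4, 1.2e-3, 2.8e-3, 4.9e-3]

tof = initial_rate_tof(times_s, product_mol,
                       catalyst_mol=1.0e-5, substrate_mol=1.0e-2)
ton = turnover_number(product_mol[-1], catalyst_mol=1.0e-5)
print(f"TOF = {tof:.3f} s^-1, TON = {ton:.0f}")
```

Only the first four points fall below 15% conversion here, so the later, slower part of the trace does not bias the initial rate.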

Protocol 2.2: Determination of Selectivity in a Parallel/Sequential Reaction

Objective: To quantify chemoselectivity for a catalyst transforming a multi-functional substrate.

Procedure:

- Perform reaction as per Protocol 2.1, but using a substrate with multiple reactive sites (e.g., an unsaturated aldehyde).

- Ensure analytical method (e.g., GC-MS or HPLC) resolves all potential products (e.g., saturated aldehyde, unsaturated alcohol, saturated alcohol).

- At a specific conversion level (e.g., 50%), analyze the product mixture.

- Calculation: For each product i, Selectivity (%) = (Moles of product i) / (Total moles of all products) * 100. Report the selectivity profile versus conversion.
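A minimal sketch of the selectivity calculation, using the product-based definition given in the last step. The product amounts are invented for illustration.

```python
def selectivity_profile(product_moles):
    """Selectivity (%) of each product relative to the sum of all products."""
    total = sum(product_moles.values())
    if total <= 0:
        raise ValueError("no product detected")
    return {name: 100.0 * n / total for name, n in product_moles.items()}

# Invented amounts (mmol) at a fixed conversion for an unsaturated aldehyde.
products_mmol = {
    "saturated aldehyde": 4.0,
    "unsaturated alcohol": 0.75,
    "saturated alcohol": 0.25,
}

profile = selectivity_profile(products_mmol)
for name, value in profile.items():
    print(f"{name}: {value:.1f}%")
```

Repeating this at several conversion levels gives the selectivity-versus-conversion profile that the protocol asks for.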

Visualizing the Role of Metrics in QSAR-PLS Workflow

Pathway from Descriptor to Activity Prediction

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Catalytic Activity Assays

| Item | Function & Specification |

|---|---|

| High-Pressure Parallel Reactor System | Enables simultaneous kinetic studies of multiple catalysts under controlled temperature and pressure (e.g., 100 psi H₂). Essential for generating consistent TOF data. |

| Inert Atmosphere Glovebox | Provides O₂/H₂O-free environment for synthesis and handling of air-sensitive catalysts and reagents. |

| Internal Standard Solution | Precisely prepared, inert compound (e.g., n-alkane for GC) added to reaction aliquots for accurate quantitative analysis. |

| Quenching Agent Solution | Stops catalytic reaction instantly upon sampling (e.g., aqueous phosphine scavenger for metal complexes, acid for base catalysts). |

| Calibrated Gas Manifold | Delivers precise and repeatable pressures of reactive gases (H₂, CO, O₂) to the reactor. |

| Certified Substrate Library | A collection of purified, characterized substrates for testing catalyst scope and selectivity trends. |

| Stable Catalyst Stock Solution | A standardized solution of the catalyst in degassed solvent, enabling precise, volumetric dispensing for reproducible loading. |

Application Notes

Within the framework of a broader thesis on Quantitative Structure-Activity Relationship (QSAR) modeling for catalyst activity prediction using Partial Least Squares (PLS) regression, the selection and interpretation of molecular descriptors are paramount. These numerical representations of molecular structure are the fundamental input variables that define the chemical space for PLS analysis. Their proper application directly governs model predictive accuracy, interpretability, and domain of applicability.

Electronic Descriptors in Redox Catalysis: For predicting the activity of transition metal catalysts in oxidation reactions, electronic descriptors quantify ligand effects on the metal center. Key descriptors include the calculated Highest Occupied Molecular Orbital (HOMO) and Lowest Unoccupied Molecular Orbital (LUMO) energies of the metal-ligand complex, which correlate with electron-donating/accepting ability and redox potentials. Hammett constants (σ) of substituents on ligand frameworks are empirically derived electronic parameters that successfully predict rate enhancements in palladium-catalyzed cross-coupling reactions within PLS models.

Steric Descriptors in Asymmetric Catalysis: Steric bulk dictates enantioselectivity in chiral catalysis. The Tolman Cone Angle, while originally for phosphines, can be adapted via computational chemistry to estimate the spatial occupancy of any ligand. More advanced, computation-driven steric descriptors like the Sterimol parameters (B1, B5, L) provide a multi-dimensional representation of ligand shape. In PLS models for predicting enantiomeric excess (%ee) in asymmetric hydrogenation, these parameters are critical for capturing non-linear steric interactions between substrate and catalyst.

Topological Descriptors in Heterogeneous & Enzyme-like Catalysis: Topological indices encode molecular connectivity and branching. The Wiener Index (sum of all shortest path lengths between atoms) and Zagreb Indices have shown utility in PLS models predicting the activity of zeolite catalysts for hydrocarbon cracking, correlating with pore accessibility and molecular diffusion. For bio-inspired catalysts, the Kier & Hall connectivity indices capture aspects of molecular shape and flexibility that relate to substrate binding affinity, analogous to enzyme-substrate complementarity.
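As a concrete illustration of a topological index, the sketch below computes the Wiener Index of a hydrogen-suppressed molecular graph by breadth-first search. The adjacency-list representation and the two small carbon skeletons are chosen for the example; cheminformatics toolkits provide their own routines for real molecules.

```python
from collections import deque

def wiener_index(adjacency):
    """Sum of shortest-path lengths over all unordered atom pairs."""
    total = 0
    for source in adjacency:
        dist = {source: 0}
        queue = deque([source])
        while queue:  # breadth-first search from this atom
            node = queue.popleft()
            for neighbor in adjacency[node]:
                if neighbor not in dist:
                    dist[neighbor] = dist[node] + 1
                    queue.append(neighbor)
        total += sum(dist.values())
    return total // 2  # each pair was counted from both ends

# Carbon skeletons only (hydrogens suppressed).
n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
isobutane = {0: [1, 2, 3], 1: [0], 2: [0], 3: [0]}

print(wiener_index(n_butane))   # 10
print(wiener_index(isobutane))  # 9
```

The branched isomer has the lower value, which is why the index is often read as a measure of compactness or branching.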

Table 1: Key Descriptor Classes and Their Correlations in Catalyst QSAR

| Descriptor Class | Example Descriptors | Typical Physical Correlation | Common Catalyst System Application |

|---|---|---|---|

| Electronic | HOMO/LUMO energy, Hammett constant (σ), Natural Population Analysis (NPA) charge | Redox potential, Lewis acidity/basicity, σ-donation/π-backdonation | Transition metal redox catalysts, Cross-coupling catalysts |

| Steric | Tolman Cone Angle, Sterimol (B1, B5, L), Fractional Steric Occupancy | Enantioselectivity, regioselectivity, turnover frequency (TOF) | Chiral phosphine/amine ligands, N-Heterocyclic Carbenes (NHCs) |

| Topological | Wiener Index, Kier & Hall Connectivity Indices (⁰χ, ¹χ), Balaban J Index | Molecular accessibility, diffusion limitations, substrate binding | Zeolites, Metal-Organic Frameworks (MOFs), Macrocyclic complexes |

Experimental Protocols

Protocol 1: Generation of Electronic Descriptors via DFT Calculation

This protocol outlines the steps to compute key electronic descriptors for a series of organic ligands or metal complexes.

- Structure Optimization: Using Gaussian 16 or ORCA software, perform a geometry optimization and frequency calculation on each molecular structure. Employ a functional such as B3LYP and a basis set like 6-31G(d) for organic molecules, or LANL2DZ for transition metals. Confirm the absence of imaginary frequencies for a true minimum.

- Single-Point Energy Calculation: On the optimized geometry, run a more accurate single-point energy calculation using a larger basis set (e.g., def2-TZVP) and include solvation effects via a model like SMD (Solvation Model based on Density) if relevant to the catalytic reaction medium.

- Descriptor Extraction: Analyze the resulting checkpoint or output file using Multiwfn software.

- Extract HOMO and LUMO orbital energies (in eV).

- Perform Natural Bond Orbital (NBO) analysis to obtain partial charges on key atoms (e.g., metal center, donor atoms).

- Calculate the molecular dipole moment.

- Data Compilation: Tabulate the calculated descriptors (HOMO, LUMO, charges, dipole moment) for each compound in the series.

Protocol 2: Experimental Determination & Validation of Steric Parameters via Solid-State Analysis

This protocol details an experimental method to derive steric parameters complementary to computational ones, using X-ray crystallography.

- Crystallization: Grow single crystals of representative metal-ligand complexes from the series under study (e.g., [M(L)X₂] where M = Pd, Ni).

- X-ray Diffraction Data Collection: Mount a suitable crystal on a diffractometer (e.g., Bruker D8 VENTURE). Collect a full sphere of diffraction data at low temperature (e.g., 100 K) using Mo Kα radiation.

- Structure Solution and Refinement: Solve the structure using intrinsic phasing (SHELXT) and refine with least-squares methods (SHELXL or Olex2). Achieve a final R1 value < 0.05.

- Metric Analysis: Using the refined CIF file, measure key geometric parameters:

- Metal-Donor Bond Lengths: For consistency.

- Percent Buried Volume (%V_bur): Use the SambVca 2.1 web tool. Define the metal center, its coordination sphere, and a standard radius (often 3.5 Å). Calculate the volume occupied by the ligand, expressed as a percentage of the total sphere volume.

- Solid Angle: Calculate the ligand solid angle (in steradians) from the metal center.

- Correlation: Use the experimentally derived %V_bur as a robust steric descriptor in the PLS-QSAR model.

The Scientist's Toolkit

Table 2: Essential Research Reagents and Materials for Descriptor-Driven QSAR

| Item | Function in Descriptor Acquisition/Validation |

|---|---|

| Gaussian 16 / ORCA Software | Industry-standard suites for performing Density Functional Theory (DFT) calculations to derive electronic and computed steric descriptors. |

| Multiwfn Software | A multifunctional wavefunction analyzer for post-processing DFT results to extract precise electronic descriptors (orbital energies, charges, electrostatic potentials). |

| SambVca 2.1 Web Tool | A specialized platform for calculating the steric parameter Percent Buried Volume (%V_bur) from 3D molecular structures or crystallographic data. |

| Bruker D8 VENTURE Diffractometer | A high-performance single-crystal X-ray diffractometer for obtaining precise 3D molecular geometries needed for experimental steric and topological analysis. |

| Olex2 Software | An integrated software for the solution, refinement, and analysis of small-molecule crystal structures, enabling the extraction of metrical parameters. |

| RDKit or PaDEL-Descriptor Software | Open-source cheminformatics libraries capable of calculating thousands of molecular descriptors, including topological indices, directly from 2D molecular structures. |

Visualizations

PLS-QSAR Workflow for Catalyst Design

PLS Model Relates Descriptors to Activity

Why PLS? Addressing Collinearity and High-Dimensional Data in Chemical Datasets

Within a broader thesis on Quantitative Structure-Activity Relationship (QSAR) modeling for catalyst activity prediction, selecting a robust statistical method is paramount. Multivariate datasets in catalysis and drug development, characterized by hundreds of molecular descriptors or spectral features, frequently suffer from high intercorrelation (collinearity) and the "small n, large p" problem (more predictors than samples). Traditional multiple linear regression (MLR) gives unstable or undefined coefficient estimates under these conditions. Partial Least Squares (PLS) regression emerges as the dominant technique: it projects the predictor (X) and response (Y) variables onto a new, lower-dimensional space of latent variables (components) that maximizes the covariance between them, and thereby handles both collinearity and high dimensionality.

Core Theoretical Advantages of PLS in Chemical Data Analysis

PLS offers specific solutions to the challenges inherent in chemical datasets:

- Collinearity Management: By extracting orthogonal latent components, PLS eliminates issues of non-invertible matrices and unstable coefficient estimates common in MLR.

- Dimensionality Reduction: PLS performs simultaneous dimensionality reduction on both predictor (X) and response (Y) matrices, focusing on directions relevant to predicting Y.

- Noise Filtering: The model prioritizes variance in X correlated with Y, often treating uncorrelated variance as noise, leading to more robust predictions.

- Interpretability Tools: It provides key outputs like Variable Importance in Projection (VIP) scores and loadings plots to interpret which original variables drive the model.

Application Notes: PLS in Catalyst QSAR Modeling

The following notes illustrate the practical application of PLS within a catalyst design workflow.

Dataset Characteristics & Preprocessing

A typical catalyst dataset involves molecular descriptors (e.g., topological, electronic, geometric) for a series of organometallic complexes and their corresponding catalytic activity (e.g., turnover frequency, yield).

Table 1: Representative Dataset Structure for Catalyst QSAR

| Catalyst ID | Descriptor 1 (e.g., %VBur) | Descriptor 2 (e.g., ESP Min) | ... | Descriptor p (e.g., LogP) | Activity (Y, e.g., TOF) |

|---|---|---|---|---|---|

| Cat-01 | 12.5 | -0.45 | ... | 3.2 | 1500 |

| Cat-02 | 18.7 | -0.38 | ... | 4.1 | 850 |

| ... | ... | ... | ... | ... | ... |

| Cat-n | 15.3 | -0.51 | ... | 3.8 | 2100 |

Preprocessing Protocol:

- Data Cleaning: Remove descriptors with >20% missing values. Impute remaining missing values using column mean or k-nearest neighbors.

- Scaling: Center and scale all X-variables to unit variance (autoscaling). Center Y-variable.

- Training/Test Split: Perform a stratified or random split (e.g., 80/20) to maintain activity distribution across sets.
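The data cleaning step can be sketched with NumPy as follows. The 20% threshold comes from the protocol; the function name and the small matrix are illustrative, and column-mean imputation is shown rather than k-nearest neighbors. In a real workflow the imputation means would be computed on the training set only.

```python
import numpy as np

def clean_descriptors(X, max_missing=0.20):
    """Drop descriptors with too many missing values, mean-impute the rest."""
    X = np.asarray(X, dtype=float)
    missing_frac = np.isnan(X).mean(axis=0)
    keep = missing_frac <= max_missing  # removes columns with >20% missing
    X_kept = X[:, keep].copy()
    col_means = np.nanmean(X_kept, axis=0)
    rows, cols = np.where(np.isnan(X_kept))
    X_kept[rows, cols] = col_means[cols]
    return X_kept, keep

nan = np.nan
X_raw = np.array([
    [12.5, 1.0, 0.3],
    [18.7, 2.0, nan],
    [15.3, nan, 0.5],
    [14.1, 3.0, nan],
    [16.0, 6.0, 0.4],
])

X_clean, kept_mask = clean_descriptors(X_raw)
print(kept_mask)   # third descriptor (40% missing) is dropped
print(X_clean)
```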

Model Building, Validation, and Interpretation

Protocol: Building a Validated PLS Model

- Component Number Determination: Use k-fold cross-validation (e.g., k=7) on the training set. The optimal number of Latent Variables (LVs) is the one that minimizes the Root Mean Square Error of Cross-Validation (RMSECV).

- Model Training: Fit the PLS model with the optimal number of LVs on the entire training set.

- Performance Assessment:

- Training: Calculate R²Y and RMSEE.

- Test Set Prediction: Predict Y for the held-out test set. Calculate R²pred (the external prediction coefficient, sometimes reported as an external Q²) and RMSEP.

- Statistical Validation: Perform Y-permutation testing (minimum 100 permutations) to rule out chance correlation. Regress the R²Y values of the permuted models against the correlation between the permuted and original Y vectors; the intercept of this line should be < 0.05.

- Interpretation:

- VIP Scores: Variables with VIP > 1.0 are considered significant contributors.

- Loadings Plots: Inspect the plot of weights for LV1 vs. LV2 to understand variable relationships.

Table 2: Model Performance Metrics (Hypothetical Catalyst Dataset)

| Model Stage | # LVs | R²Y | Q² / R²Pred | RMSEE | RMSEP | Permutation R² Intercept |

|---|---|---|---|---|---|---|

| Training (CV) | 4 | 0.89 | 0.82 (Q²) | 0.15 | - | - |

| Test Set | 4 | - | 0.80 (R²Pred) | - | 0.18 | 0.03 |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for PLS-Based QSAR Research

| Item / Reagent | Function in PLS-QSAR Workflow |

|---|---|

| Molecular Modeling Suite (e.g., Schrödinger, Open Babel) | Generates 3D structures and calculates initial molecular descriptors for catalyst libraries. |

| Descriptor Calculation Software (e.g., Dragon, RDKit) | Computes a wide array of topological, electronic, and constitutional descriptors from molecular structures. |

| Chemometrics Platform (e.g., SIMCA, JMP) | Provides optimized, validated algorithms for PLS modeling, VIP calculation, and advanced diagnostics. |

| Programming Environment (Python/R with scikit-learn/pls, ropls) | Offers flexible, scriptable environments for custom data preprocessing, model building, and automation. |

| Y-Randomization Script | A custom or built-in routine to perform permutation testing for model validity assessment. |

| Standardized Catalyst Test Bed | A reliable and reproducible experimental assay (e.g., specific cross-coupling reaction) for generating accurate activity (Y) data. |

Workflow and Relationship Diagrams

Title: PLS-QSAR Modeling Workflow

Title: PLS vs. MLR Problem-Solution Logic

Theoretical Foundation and Key Mathematical Equations

Partial Least Squares (PLS) regression is a bilinear factor model that relates a matrix of predictor variables (X) to a matrix of response variables (Y) by projecting them onto a new, lower-dimensional space of Latent Variables (LVs), also called components. The core objective is to maximize the covariance between the latent structures of X and Y, rather than merely explaining the variance within X (as in PCA).

The fundamental PLS model equations are:

X = T Pᵀ + E

Y = U Qᵀ + F

Where:

- T (n × A) and U (n × A) are the X- and Y-score matrices, containing the coordinates of the n observations on the A latent variables.

- P (p × A) and Q (m × A) are the X- and Y-loading matrices, representing the contributions of the original variables to the LVs.

- E (n × p) and F (n × m) are the residual matrices.

- A is the number of latent components, optimally selected via cross-validation.

The scores T and U are connected by an inner relation: U = T B + H, where B is a diagonal matrix of regression weights and H is a residual matrix. The most common PLS algorithm (NIPALS) iteratively extracts these latent vectors by solving an eigenvector problem maximizing cov(t, u).
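For a single response (PLS1), the NIPALS extraction can be sketched in a few lines of NumPy. This is an illustrative implementation on synthetic data, not the code of any particular package. With one y-column the inner iteration converges in a single pass, so no convergence loop appears. The symbols W, P, T, and q follow the notation above, with q holding the y-loadings.

```python
import numpy as np

def pls1_nipals(X, y, n_components):
    """PLS1 via NIPALS: sequential extraction of weights, scores, loadings."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xa, ya = X - x_mean, y - y_mean  # mean-centred working copies
    n, p = Xa.shape
    W = np.zeros((p, n_components))
    P = np.zeros((p, n_components))
    T = np.zeros((n, n_components))
    q = np.zeros(n_components)
    for a in range(n_components):
        w = Xa.T @ ya                 # direction of maximal covariance with y
        w /= np.linalg.norm(w)
        t = Xa @ w                    # X-scores
        tt = t @ t
        p_a = Xa.T @ t / tt           # X-loadings
        q[a] = ya @ t / tt            # y-loading
        Xa = Xa - np.outer(t, p_a)    # deflate X
        ya = ya - q[a] * t            # deflate y
        W[:, a], P[:, a], T[:, a] = w, p_a, t
    coef = W @ np.linalg.solve(P.T @ W, q)  # coefficients in X units
    return {"W": W, "P": P, "T": T, "q": q, "coef": coef,
            "x_mean": x_mean, "y_mean": y_mean}

# Synthetic, deliberately collinear descriptors.
rng = np.random.default_rng(0)
X = rng.normal(size=(25, 5))
X[:, 4] = X[:, 0] + 0.01 * rng.normal(size=25)
y = X @ np.array([1.0, -0.5, 0.0, 0.0, 1.0]) + 0.1 * rng.normal(size=25)

model = pls1_nipals(X, y, n_components=2)
y_hat = (X - model["x_mean"]) @ model["coef"] + model["y_mean"]
print("score orthogonality:", float(model["T"][:, 0] @ model["T"][:, 1]))
```

The score vectors come out mutually orthogonal, which is what lets PLS cope with the near-duplicate descriptor columns in this example.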

Dimensionality Reduction and Model Optimization in QSAR

In QSAR, X typically comprises hundreds or thousands of molecular descriptors (e.g., topological, electronic, geometrical). PLS reduces this high-dimensional, collinear space to a few orthogonal LVs that are predictive of the catalytic activity or biological response (Y).

Table 1: Key Model Optimization Metrics and Their Optimal Values

| Metric | Formula/Description | Optimal Target (for a robust QSAR model) |

|---|---|---|

| Optimal LV Count (A) | Determined by k-fold Cross-Validation (CV). | Minimizes the CV Predicted Residual Sum of Squares (PRESS). Avoids overfitting (too many LVs) and underfitting (too few). |

| R²Y (Cumulative) | Proportion of Y-variance explained by the model. | > 0.6 (context-dependent; higher is generally better). |

| Q² (Cumulative) | Proportion of Y-variance predictable by CV (e.g., leave-one-out, 5-fold). | > 0.5 is acceptable; > 0.7 is good. Must not be significantly lower than R²Y. |

| Root Mean Square Error (RMSE) | √( Σ(yᵢ - ŷᵢ)² / n ) | As low as possible. RMSE of calibration should be close to RMSE of CV. |

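| Variable Importance in Projection (VIP) | VIPⱼ = √( p · Σₐ(SSₐ · wⱼₐ²) / Σₐ SSₐ ), where p is the number of descriptors, wⱼₐ is the normalized weight of descriptor j on LV a, and SSₐ is the Y-variance explained by LV a. | Descriptor j with VIP > 1.0 is considered influential. |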

The optimal number of LVs is the most critical parameter, ensuring the model captures the underlying signal while filtering noise.
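The VIP formula in the table can be written out as a short function. The sketch assumes a single-response model and takes the weight matrix W, score matrix T, and y-loadings q as inputs, with SSₐ computed as qₐ² · tₐᵀtₐ. The tiny arrays are hand-made so the result is easy to check and do not come from a fitted model.

```python
import numpy as np

def vip_scores(W, T, q):
    """VIP_j = sqrt(p * sum_a(SS_a * w_ja^2) / sum_a(SS_a)) for PLS1."""
    W = np.asarray(W, dtype=float)
    T = np.asarray(T, dtype=float)
    q = np.asarray(q, dtype=float)
    p = W.shape[0]
    ss = q ** 2 * np.sum(T ** 2, axis=0)        # Y-variance explained per LV
    W_norm = W / np.linalg.norm(W, axis=0)      # unit-length weight vectors
    return np.sqrt(p * (W_norm ** 2 @ ss) / ss.sum())

# Hand-made example: 3 descriptors, 2 latent variables.
W = np.array([[1.0, 0.0],
              [0.0, 1.0],
              [0.0, 0.0]])
T = np.array([[2.0, 0.0],
              [0.0, 1.0]])
q = np.array([1.0, 1.0])

vip = vip_scores(W, T, q)
print(np.round(vip, 3))
```

Because the squared VIP values always sum to the number of descriptors, a value of 1.0 corresponds to average importance, which is the rationale behind the VIP > 1.0 rule of thumb.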

PLS Latent Variable Extraction and Model Building Workflow

Experimental Protocol: Building a Predictive PLS-QSAR Model for Catalyst Activity

This protocol outlines the steps for developing a validated PLS model to predict catalytic activity from molecular descriptor data.

Protocol 1: PLS-QSAR Model Development and Validation

Objective: To construct a validated PLS regression model predicting catalyst turnover frequency (TOF) from a set of computed molecular descriptors.

Materials & Software:

- Molecular dataset (minimum n=20-30 catalysts).

- Computational chemistry software (e.g., Gaussian, RDKit) for descriptor calculation.

- Statistical software with PLS capability (e.g., SIMCA, R pls package, Python scikit-learn).

- Y-response data (e.g., experimentally determined TOF or % yield).

Procedure:

Data Preparation:

- Calculate a wide range of relevant molecular descriptors (constitutional, topological, electronic, steric) for all catalyst structures in the dataset.

- Compile experimental activity data (Y-matrix, e.g., log(TOF)).

- Combine into a single data frame: rows = catalysts, columns = descriptors + activity.

Pre-processing and Division:

- Descriptor (X) Scaling: Center and scale (autoscale) all descriptors to unit variance.

- Response (Y) Scaling: Center the activity data.

- Dataset Splitting: Randomly divide the data into a Training Set (~70-80%) for model building and a Test Set (~20-30%) for external validation. Ensure both sets span the activity range.

Model Training & LV Optimization (on Training Set):

- Perform PLS regression on the training set.

- Use 5- or 10-fold cross-validation on the training set.

- Extract the PRESS (Predicted Residual Sum of Squares) plot or Q² values for different numbers of LVs.

- Select the optimal number of LVs (A) as the point where Q² is maximized or where adding another LV does not significantly decrease PRESS.

Model Evaluation:

- Record key statistics for the model with A LVs: R²X(cum), R²Y(cum), Q²(cum).

- Examine the VIP scores. Identify descriptors with VIP > 1.0 as major contributors.

- Analyze the loading plots (p[1] vs. p[2]) to interpret the influence of original variables on the LVs.

External Validation (on Test Set):

- Use the finalized model (with A LVs) to predict the activity of the test set compounds.

- Calculate key external validation metrics:

- R²pred (or R²test): Coefficient of determination between predicted and observed Y for the test set.

- RMSEP: Root Mean Square Error of Prediction.
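The two external validation metrics can be computed as in the sketch below. Here R²pred is taken relative to the mean of the observed test values; some authors use the training-set mean instead, so the reference mean is left as an optional argument. The numbers are invented.

```python
import math

def external_metrics(y_obs, y_pred, reference_mean=None):
    """Return (R2_pred, RMSEP) for a held-out test set."""
    n = len(y_obs)
    if reference_mean is None:
        reference_mean = sum(y_obs) / n  # assumption: test-set mean
    ss_res = sum((o - p) ** 2 for o, p in zip(y_obs, y_pred))
    ss_tot = sum((o - reference_mean) ** 2 for o in y_obs)
    r2_pred = 1.0 - ss_res / ss_tot
    rmsep = math.sqrt(ss_res / n)
    return r2_pred, rmsep

# Invented observed and predicted log(TOF) values for four test catalysts.
y_obs = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.5, 2.0, 2.5, 4.0]

r2_pred, rmsep = external_metrics(y_obs, y_pred)
print(f"R2_pred = {r2_pred:.2f}, RMSEP = {rmsep:.3f} log units")
```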

Table 2: Example Model Performance Output

| Dataset | No. of LVs (A) | R²Y(cum) | Q²(cum) | RMSE (Calibration) | R²_pred (Test) | RMSEP (Test) |

|---|---|---|---|---|---|---|

| Catalyst Set A | 3 | 0.89 | 0.72 | 0.15 log units | 0.81 | 0.22 log units |

| Catalyst Set B | 4 | 0.92 | 0.68 | 0.18 log units | 0.65 | 0.31 log units |

PLS-QSAR Model Development and Validation Protocol

The Scientist's Toolkit: Essential Reagents & Software for PLS-QSAR Research

Table 3: Key Research Reagent Solutions and Computational Tools

| Item Name | Type | Function/Brief Explanation |

|---|---|---|

| Molecular Modeling Suite (e.g., Gaussian, Schrödinger, RDKit) | Software | Calculates quantum chemical (e.g., HOMO/LUMO energies, charges) and molecular descriptors (e.g., molecular weight, logP, topological indices) for the X-matrix. |

| Statistical Software with PLS (e.g., SIMCA, JMP, R pls, Python scikit-learn) | Software | Performs the core PLS regression, cross-validation, score/loading plot generation, and calculation of VIPs and model metrics. |

| Curated Catalyst/Bioactivity Database (e.g., internal library, PubChem, CAS) | Data Source | Provides the initial set of molecular structures and associated experimental response data (Y-matrix) for model training and testing. |

| Descriptor Pre-processing Script | Custom Code/Module | Automates the critical steps of data cleaning, imputation (if needed), centering, and scaling (autoscaling) to prepare the X-matrix for PLS analysis. |

| Validation Metric Calculator | Custom Code/Module | Computes standardized external validation parameters (R²_pred, RMSEP, etc.) to adhere to OECD QSAR validation principles. |

Building a Robust PLS-QSAR Model: A Step-by-Step Methodological Workflow

The development of robust Quantitative Structure-Activity Relationship (QSAR) models using Partial Least Squares (PLS) regression for predicting catalyst activity is fundamentally dependent on the quality of the underlying dataset. This protocol details the systematic curation and preparation of a high-quality, chemically diverse dataset suitable for training and validating such models, with a focus on heterogeneous catalysis. The principles ensure data integrity, minimize bias, and enhance model generalizability.

Data Acquisition and Initial Curation Protocol

Source Identification and Data Harvesting

Objective: To gather raw catalyst performance data from authoritative, publicly accessible repositories.

Protocol:

- Primary Source Querying:

- Access the Catalysis-Hub.org API (https://api.catalysis-hub.org/) using the Python requests library. Filter for reactions of interest (e.g., CO2 hydrogenation, methane oxidation) and associated catalyst materials (e.g., transition metals on oxide supports).

- Query the NIST Catalysis Database (https://srdata.nist.gov/catalysis/) for well-characterized catalyst systems and standardized turnover frequency (TOF) or activation energy (Ea) data.

- Search PubMed and arXiv for recent publications containing structured catalyst data tables using keywords: "catalyst dataset," "turnover frequency," "activation energy," "[Your Target Reaction]."

- Data Extraction:

- For API-based sources, parse JSON responses to extract fields: catalyst_composition, reaction_conditions (T, P), performance_metric (TOF, selectivity, Ea), and characterization_methods.

- For literature sources, employ tabula-py (for PDFs) or manual entry into a structured .csv template.

- Initial Data Logging: Record all harvested data in a raw master table (Raw_Data_Log.csv) with mandatory source URL/DOI and extraction timestamp.

Data Cleaning and Standardization

Objective: To transform heterogeneous raw data into a consistent, machine-readable format.

Protocol:

- Unit Standardization:

- Convert all temperature values to Kelvin (K).

- Convert all pressure values to bar.

- Convert all rate-based metrics (TOF) to a common unit (e.g., s⁻¹ or mol·molₘᵉₜₐₗ⁻¹·s⁻¹).

- Composition Parsing: Use the ChemForm Python library to parse and standardize catalyst compositional strings (e.g., "Pt3Sn" -> "Pt₃Sn", "5 wt% Pd/Al2O3" -> "Pd(5)/Al₂O₃").

- Missing Data Flagging: For entries missing critical descriptors (e.g., surface area, particle size) or performance metrics, flag with "NA"; do not impute at this stage.

- Deduplication: Identify and merge duplicate entries from multiple sources, retaining the source with the most complete characterization data.

Table 1: Standardized Data Schema for Catalyst Entries

| Field Name | Data Type | Description | Example |

|---|---|---|---|

| Catalyst_ID | String | Unique identifier | CAT_2024_001 |

| Bulk_Composition | String | Standardized formula | Co₃O₄ |

| Support | String | Standardized formula | γ-Al₂O₃ |

| Dopant | String | Standardized formula | Ce (2 at%) |

| Synthesis_Method | String | Controlled vocabulary | Co-precipitation |

| Surface_Area | Float (m²/g) | BET surface area | 120.5 |

| Reaction | String | Controlled vocabulary | CO2_Hydrogenation |

| Temperature | Float (K) | Reaction temperature | 573.15 |

| Pressure | Float (bar) | Reaction pressure | 10.0 |

| TOF | Float (s⁻¹) | Turnover Frequency | 0.045 |

| Selectivity | Float (%) | Product selectivity | 85.2 |

| E_Activation | Float (kJ/mol) | Activation Energy | 65.3 |

| Source_DOI | String | Data provenance | 10.1021/acscatal.3c01245 |
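The unit standardization step that feeds the Temperature, Pressure, and TOF fields of Table 1 can be sketched with pandas. The raw column names (`T_value`, `T_unit`, and so on) and the unit labels are assumptions made for this example; a real raw log would need its own mapping.

```python
import pandas as pd

# Conversion tables to the target units of the standardized schema.
KELVIN_OFFSET = {"K": 0.0, "C": 273.15}
TO_BAR = {"bar": 1.0, "atm": 1.01325, "kPa": 0.01, "MPa": 10.0}
TO_PER_SECOND = {"s-1": 1.0, "min-1": 1.0 / 60.0, "h-1": 1.0 / 3600.0}


def standardize_units(raw: pd.DataFrame) -> pd.DataFrame:
    """Convert temperature to K, pressure to bar, and TOF to s^-1."""
    out = pd.DataFrame(index=raw.index)
    # Unrecognized unit labels map to NaN, so such rows surface as missing data.
    out["Temperature"] = raw["T_value"] + raw["T_unit"].map(KELVIN_OFFSET)
    out["Pressure"] = raw["P_value"] * raw["P_unit"].map(TO_BAR)
    out["TOF"] = raw["TOF_value"] * raw["TOF_unit"].map(TO_PER_SECOND)
    return out


# Two hypothetical raw entries reporting the same conditions in different units.
raw_log = pd.DataFrame({
    "T_value": [300.0, 573.15], "T_unit": ["C", "K"],
    "P_value": [1.0, 10.0], "P_unit": ["MPa", "bar"],
    "TOF_value": [162.0, 0.045], "TOF_unit": ["h-1", "s-1"],
})
clean = standardize_units(raw_log)
print(clean)
```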

Descriptor Calculation and Feature Engineering Protocol

Atomic and Structural Descriptor Calculation

Objective: To generate quantitative descriptors encoding catalyst composition and structural properties for PLS input.

Protocol:

- Bulk Elemental Descriptors: For each elemental component in the catalyst (active metal, support, dopant), calculate using pymatgen:

- Atomic number, atomic radius, electronegativity (Pauling), group, period.

- Ionic radii for common oxidation states.

- Surface Property Estimation:

- Metal Dispersion (D): Estimate using average particle size (from TEM) via formula D ≈ (100 * (Number of surface atoms)) / (Total number of atoms). For spherical particles, use established geometric models.

- Active Site Count: Calculate as (Metal_Loading * D) / (Atomic_Weight_of_Metal).

- Reaction Condition Descriptors: Include log(P) and 1/T (inverse temperature) as explicit descriptors to capture condition-dependent performance trends.

Table 2: Key Calculated Descriptor List for PLS Modeling

| Descriptor Category | Specific Descriptor | Calculation Method/Source | Relevance to Activity |

|---|---|---|---|

| Elemental | Avg. Metal Electronegativity | Weighted mean from pymatgen | Adsorption strength |

| Elemental | d-band Center (Estimation) | From elemental identity & coordination (tabular values) | Electronic structure proxy |

| Structural | Estimated Metal Dispersion | From particle size model or chemisorption | Active site availability |

| Structural | Support Ionicity Index | Electronegativity difference (Support - O) | Support-metal interaction |

| Condition | Reduced Temperature | T / T_melting (active phase) | Sintering/stability factor |

| Condition | Reaction Thermodynamic Drive | ΔG of reaction at T (from NIST-JANAF) | Kinetic driving force |

Data Quality Validation and Outlier Management Protocol

Consistency and Thermodynamic Plausibility Check

Objective: To identify and investigate physicochemically implausible data points.

Protocol:

- Arrhenius Consistency: For datasets reporting rate (or TOF) at multiple temperatures, perform linear regression of ln(TOF) vs. 1/T. Data series with R² < 0.85 should be flagged for review.

- Elemental Balance: Verify that reactant and product stoichiometries align with the reported selectivity data.

- Activity-Site Correlation: Plot TOF vs. Estimated_Dispersion. Identify points with extremely high TOF at very low dispersion (or vice versa) as potential outliers for source data re-examination.
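The Arrhenius consistency check can be sketched with NumPy alone. The helper name `arrhenius_check` and both example series are illustrative assumptions; the 0.85 threshold is the one stated in the protocol.

```python
import numpy as np

R_GAS = 8.314462618e-3  # gas constant in kJ/(mol*K)


def arrhenius_check(temps_K, tofs, r2_min=0.85):
    """Fit ln(TOF) vs 1/T; return (R2, apparent Ea in kJ/mol, flag_for_review)."""
    x = 1.0 / np.asarray(temps_K, dtype=float)
    y = np.log(np.asarray(tofs, dtype=float))
    slope, intercept = np.polyfit(x, y, 1)
    fitted = slope * x + intercept
    r2 = 1.0 - np.sum((y - fitted) ** 2) / np.sum((y - y.mean()) ** 2)
    ea = -slope * R_GAS  # slope of the Arrhenius plot equals -Ea/R
    return float(r2), float(ea), bool(r2 < r2_min)


# Series 1: ideal Arrhenius behaviour generated with Ea = 65.3 kJ/mol.
T = np.array([500.0, 525.0, 550.0, 575.0, 600.0])
tof_ideal = 1e5 * np.exp(-65.3 / (R_GAS * T))
r2_ok, ea_ok, flag_ok = arrhenius_check(T, tof_ideal)

# Series 2: erratic values that should be flagged for review.
r2_bad, ea_bad, flag_bad = arrhenius_check([500, 520, 540, 560],
                                           [0.01, 0.5, 0.02, 0.3])
print(r2_ok, ea_ok, flag_ok, r2_bad, flag_bad)
```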

Statistical Outlier Detection

Objective: To identify points that may disproportionately influence PLS model parameters.

Protocol:

- Descriptor Space Outliers: Using the scaled descriptor matrix (X), calculate the leverage (the diagonal of the hat matrix) for each sample. Samples with leverage > (3 * number_of_descriptors) / number_of_samples are considered high-leverage points.

- Activity Space Outliers: After an initial PLS model, analyze studentized residuals. Samples with absolute studentized residuals > 3 are flagged.

- Expert Review: All statistically flagged points undergo manual review against original literature before exclusion to differentiate true outliers from valuable edge-case data.
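The leverage rule for descriptor-space outliers can be sketched as below. This assumes NumPy and an autoscaled descriptor matrix with more samples than descriptors; the function names and the synthetic data are hypothetical. When descriptors outnumber samples, every leverage computed this way equals 1, so in that setting leverage is usually computed from the PLS scores instead.

```python
import numpy as np


def leverages(X_scaled):
    """Diagonal of the hat matrix H = X (X^T X)^+ X^T."""
    X = np.asarray(X_scaled, dtype=float)
    # The pseudo-inverse keeps the computation stable for collinear descriptors.
    return np.diag(X @ np.linalg.pinv(X))


def flag_high_leverage(X_scaled, factor=3.0):
    """Flag samples whose leverage exceeds factor * p / n."""
    n, p = np.asarray(X_scaled).shape
    h = leverages(X_scaled)
    return h > factor * p / n, h


# Synthetic descriptors: 50 samples, 3 descriptors, sample 0 made extreme.
rng = np.random.default_rng(1)
X_raw = rng.normal(size=(50, 3))
X_raw[0] = [10.0, 10.0, 10.0]
X_auto = (X_raw - X_raw.mean(axis=0)) / X_raw.std(axis=0, ddof=1)

flags, h = flag_high_leverage(X_auto)
print("flagged samples:", np.flatnonzero(flags))
```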

Dataset Splitting and Final Assembly Protocol

Rational Splitting for QSAR Model Development

Objective: To create training, validation, and test sets that ensure chemical space coverage and prevent data leakage.

Protocol:

- Chemical Space Clustering: Perform k-means clustering (using sklearn) on the scaled elemental and structural descriptors (excluding condition descriptors).

- Stratified Split: Allocate clusters to training (≈70%), validation (≈15%), and test (≈15%) sets, ensuring each set contains representatives from all major clusters (i.e., catalyst types).

- Temporal Hold-Out: If data spans publication years, enforce that the test set contains only the most recent ~2 years of data to simulate prospective prediction.
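The clustering and stratified allocation steps can be sketched with scikit-learn. This is one possible reading of the protocol, in which each cluster is sampled proportionally into the three sets; the function name, the four-cluster choice, and the synthetic descriptors are assumptions. The temporal hold-out is not covered here.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler


def cluster_stratified_split(X_struct, n_clusters=4, seed=0):
    """Return (train_idx, val_idx, test_idx, labels) with a ~70/15/15 split."""
    Xs = StandardScaler().fit_transform(X_struct)
    labels = KMeans(n_clusters=n_clusters, n_init=10,
                    random_state=seed).fit_predict(Xs)
    idx = np.arange(len(Xs))
    # First cut: 70% training, 30% held back, stratified by cluster label.
    train_idx, rest_idx = train_test_split(
        idx, test_size=0.30, stratify=labels, random_state=seed)
    # Second cut: split the held-back 30% evenly into validation and test.
    val_idx, test_idx = train_test_split(
        rest_idx, test_size=0.50, stratify=labels[rest_idx], random_state=seed)
    return train_idx, val_idx, test_idx, labels


# Synthetic structural descriptors: four well-separated catalyst "types".
rng = np.random.default_rng(2)
centers = np.array([[0, 0, 0], [10, 0, 0], [0, 10, 0], [0, 0, 10]], dtype=float)
X_struct = np.vstack([c + rng.normal(size=(30, 3)) for c in centers])

train_idx, val_idx, test_idx, labels = cluster_stratified_split(X_struct)
print(len(train_idx), len(val_idx), len(test_idx))
```

Stratification requires at least two members of every cluster in each split, so very small clusters would need to be merged or assigned by hand.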

Final Assembly:

- Produce three finalized .csv files: Training_Set.csv, Validation_Set.csv, Test_Set.csv.

- Each file includes all standardized data (Table 1) and calculated descriptors (Table 2).

- A companion Metadata_README.txt documents all curation steps, version, and exclusion rationales.

Diagram Title: Catalyst Data Curation and Splitting Workflow

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

Table 3: Key Resources for Catalyst Data Curation and QSAR Preparation

| Item/Resource | Function/Application in Protocol | Example/Note |

|---|---|---|

| Python Libraries | | |

| pymatgen | Core library for parsing compositions, calculating elemental properties, and estimating structural descriptors. | Enables automatic generation of "Elemental Descriptors" (Table 2). |

| scikit-learn | Essential for k-means clustering (dataset splitting), PLS model prototyping, and statistical outlier detection. | Used for StandardScaler, PLSRegression, and KMeans. |

| ChemForm | Specialized library for standardizing and validating chemical formula strings. | Converts diverse compositional notations into a canonical form. |

| Data Sources | | |

| Catalysis-Hub.org API | Primary source for computed and experimental catalytic data with structured JSON output. | Query using reaction SMILES or catalyst formula. |

| NIST Catalysis Database | Source for carefully validated, benchmarked catalytic performance data. | Critical for thermodynamic data (ΔG) and reliable activation energies. |

| PubChem/PyMOL | For obtaining molecular structures of reactants/products to calculate additional molecular descriptors if needed. | |

| Computational Tools | | |

| Jupyter Notebook | Interactive environment for developing and documenting the entire data curation pipeline. | Ensures reproducibility. All steps should be scripted. |

| Pandas & NumPy | Foundational libraries for data manipulation, filtering, and table operations on the master dataset. | Used to create and manage tables like Table 1. |

| Git/GitHub | Version control for the curation scripts and iterative versions of the assembled dataset. | Mandatory for collaborative projects and tracking changes. |

Descriptor Calculation, Screening, and Pre-processing (Scaling, Centering)

Within the framework of a thesis on Quantitative Structure-Activity Relationship (QSAR) modeling for catalyst activity prediction using Partial Least Squares (PLS) regression, the initial phase of descriptor management is foundational. PLS is adept at handling collinear, noisy, and high-dimensional data, making it a mainstay in chemoinformatics. However, its performance is critically dependent on the quality and treatment of the molecular descriptors input. This protocol details the systematic workflow for calculating descriptors, screening for relevance and redundancy, and pre-processing data through scaling and centering to optimize PLS model robustness, interpretability, and predictive power for catalytic activity.

Descriptor Calculation: Protocol & Application Notes

Objective: Generate a comprehensive numerical representation of catalyst molecular structures.

Experimental Protocol:

- Structure Standardization: Prepare 3D molecular structures of all catalysts in the dataset using software like Open Babel or RDKit. Apply steps: Add hydrogens, generate tautomers, optimize geometry using MMFF94 or similar force field, and minimize energy.

- Descriptor Suite Selection: Calculate a diverse set of descriptors using dedicated packages. Common categories include:

- Constitutional: Atom counts, molecular weight.

- Topological: Connectivity indices (e.g., Kier & Hall indices).

- Geometrical: Moments of inertia, molecular surface area.

- Electronic: Partial charges, HOMO/LUMO energies (requires semi-empirical or DFT calculations).

- Quantum Chemical: (For catalyst studies) Descriptors from DFT outputs (e.g., Fukui indices, d-band center for metal complexes).

Key Software/Tools: RDKit, PaDEL-Descriptor, Dragon, Gaussian/GAMESS (for quantum chemical).

Descriptor Screening & Filtering

Objective: Reduce descriptor dimensionality by removing irrelevant, noisy, or redundant variables.

Experimental Protocol:

- Variance Threshold: Remove descriptors with variance below a threshold (e.g., 0.01) as they contain minimal information.

- Collinearity Check: Calculate a pairwise correlation matrix (e.g., Pearson's r). For highly correlated descriptor pairs (|r| > 0.9), retain one based on higher correlation with the target activity or simpler interpretability.

- Relevance to Target: Rank descriptors based on univariate statistical significance (e.g., p-value from ANOVA) with the catalytic activity. Filter out descriptors with p-value > 0.05 (or a FDR-corrected threshold).
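The first two screening filters can be sketched with pandas. The helper name `screen_descriptors` and the toy descriptor table are assumptions; the univariate p-value filter is omitted to keep the example dependency-free. To avoid information leaking from the test set, any filter that looks at the activity values would normally be run on the training set only.

```python
import numpy as np
import pandas as pd


def screen_descriptors(X: pd.DataFrame, y: pd.Series,
                       var_min: float = 0.01, r_max: float = 0.9) -> pd.DataFrame:
    """Apply a variance threshold, then prune collinear descriptors."""
    X = X.loc[:, X.var() > var_min]          # drop near-constant columns
    target_r = X.corrwith(y).abs()           # |r| of each descriptor with activity
    pair_r = X.corr().abs()                  # pairwise |r| between descriptors
    keep = []
    # Visit descriptors from most to least activity-correlated and keep a
    # descriptor only if it is not highly correlated with one already kept.
    for name in target_r.sort_values(ascending=False).index:
        if all(pair_r.loc[name, kept] <= r_max for kept in keep):
            keep.append(name)
    return X[keep]


# Toy table: one constant column and one near-duplicate pair.
rng = np.random.default_rng(3)
a, b = rng.normal(size=50), rng.normal(size=50)
X_toy = pd.DataFrame({
    "const": np.ones(50),
    "a": a,
    "a_copy": a + rng.normal(scale=0.01, size=50),
    "b": b,
})
y_toy = pd.Series(2.0 * a + b + rng.normal(scale=0.1, size=50))

X_kept = screen_descriptors(X_toy, y_toy)
print(list(X_kept.columns))
```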

Table 1: Example Post-Screening Descriptor Metrics

| Descriptor ID | Category | Variance | Max Correlation with Others | p-value (vs. Activity) | Retained (Y/N) |

|---|---|---|---|---|---|

| MW | Constitutional | 245.7 | 0.12 | 0.03 | Y |

| ALogP | Physicochemical | 0.08 | 0.95 (with SLogP) | 0.01 | Y* |

| SLogP | Physicochemical | 0.09 | 0.95 (with ALogP) | 0.02 | N |

| HOMO_Energy | Electronic | 0.45 | -0.32 | 0.87 | N |

| BalabanJ | Topological | 1.22 | 0.15 | 0.005 | Y |

*ALogP retained over SLogP due to slightly better p-value.

Pre-processing: Scaling and Centering

Objective: Standardize descriptor distributions to meet PLS assumptions and ensure model stability.

Experimental Protocol:

- Centering: Subtract the mean of each descriptor column from every value in that column. This centers the data around zero for each variable.

- Formula: ( X_{centered} = X - \bar{X} )

- Scaling: Choose a method based on data distribution and goal.

- Unit Variance (Auto-scaling): Divide centered data by the standard deviation of each descriptor. This gives all variables equal weight.

- Formula: ( X_{scaled} = \frac{X - \bar{X}}{\sigma_X} )

- Pareto Scaling: Divide centered data by the square root of the standard deviation. A compromise between no scaling and unit variance.

- Range Scaling: Scale to a predefined range, e.g., [0,1] or [-1,1].

- Apply to Splits: Crucial: Calculate mean and standard deviation (or other scaling parameters) only from the training set. Apply these same parameters to transform the validation/test sets to prevent data leakage.
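The rule of fitting scaling parameters on the training set and reusing them on other splits can be sketched as follows. The helper names `fit_scaler` and `apply_scaler` and the random data are assumptions; only auto-scaling and Pareto scaling from the list above are shown.

```python
import numpy as np


def fit_scaler(X_train, method="auto"):
    """Learn centering and scaling parameters from the training set only."""
    X_train = np.asarray(X_train, dtype=float)
    mean = X_train.mean(axis=0)
    sd = X_train.std(axis=0, ddof=1)
    sd = np.where(sd == 0, 1.0, sd)  # leave constant columns unscaled
    if method == "auto":
        divisor = sd                 # unit variance
    elif method == "pareto":
        divisor = np.sqrt(sd)        # square root of the standard deviation
    else:
        raise ValueError(f"unknown scaling method: {method}")
    return mean, divisor


def apply_scaler(X, params):
    """Transform any split with parameters learned on the training set."""
    mean, divisor = params
    return (np.asarray(X, dtype=float) - mean) / divisor


rng = np.random.default_rng(4)
X_train = rng.normal(loc=5.0, scale=[1.0, 20.0, 0.1], size=(40, 3))
X_test = rng.normal(loc=5.0, scale=[1.0, 20.0, 0.1], size=(10, 3))

auto_params = fit_scaler(X_train, "auto")
X_train_auto = apply_scaler(X_train, auto_params)
X_test_auto = apply_scaler(X_test, auto_params)   # reuses training mean and SD
X_train_pareto = apply_scaler(X_train, fit_scaler(X_train, "pareto"))
```

The transformed test set will generally not have exactly zero mean or unit variance, which is expected: it is expressed in the training set's coordinate system.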

Table 2: Comparison of Pre-processing Methods for PLS

| Method | Formula | Best For | Impact on PLS |

|---|---|---|---|

| Mean Centering | ( X - \bar{X} ) | All models, removes intercept bias. | Essential first step. |

| Unit Variance | ( (X - \bar{X}) / \sigma ) | Descriptors with different units; assumes equal importance. | Prevents variables with large magnitude from dominating. |

| Pareto Scaling | ( (X - \bar{X}) / \sqrt{\sigma} ) | Situations where moderate variable importance differences are expected. | Reduces impact of high variance variables less drastically than unit variance. |

| Min-Max [0,1] | ( (X - X_{min})/(X_{max} - X_{min}) ) | Bounded ranges or image/data pixel intensity. | Sensitive to outliers; use cautiously. |

Title: QSAR Descriptor Processing Workflow for PLS

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Software for Descriptor Processing

| Item | Category | Function/Benefit |

|---|---|---|

| RDKit | Open-source Software | Core library for cheminformatics; enables molecular standardization, 2D/3D descriptor calculation, and fingerprint generation within Python scripts. |

| PaDEL-Descriptor | Software | Standalone tool for calculating >1875 2D and 3D molecular descriptors and fingerprints directly from structure files. |

| Open Babel | Software | Toolkit for interconverting chemical file formats and performing basic structure manipulations (e.g., protonation, energy minimization). |

| Dragon | Commercial Software | Industry-standard software for calculating a very extensive suite (>5000) of molecular descriptors. |

| Python/R + scikit-learn/pls | Programming/Stats | Essential environments for implementing custom screening scripts, statistical filters, and performing PLS regression with built-in scaling. |

| Gaussian 16 | Quantum Chemistry Software | Used for advanced descriptor calculation (e.g., electronic, quantum chemical) via DFT, which can be critical for catalyst activity QSAR. |

| Jupyter Notebook/Lab | Development Environment | Provides an interactive platform for documenting the entire descriptor processing pipeline, ensuring reproducibility. |

| Matplotlib/Seaborn | Visualization Library | Used to generate correlation matrices, distribution plots of descriptors pre/post-scaling, and VIP score plots from PLS. |

Within the context of Quantitative Structure-Activity Relationship (QSAR) modeling for catalyst activity prediction, Partial Least Squares (PLS) regression is a cornerstone technique for analyzing high-dimensional data with collinear predictors. A critical step in developing a robust and predictive PLS model is determining the optimal number of latent components. An under-fitted model (too few components) fails to capture essential structural information, while an over-fitted model (too many components) models noise, leading to poor generalization. This protocol details cross-validation (CV) strategies, framed within catalyst QSAR research, to identify this optimal number.

Core Cross-Validation Strategies: Protocol & Application

The following table summarizes the primary CV methods used for component selection, with their respective protocols detailed subsequently.

Table 1: Comparison of Cross-Validation Strategies for PLS Component Selection

| Strategy | Key Principle | Optimal For | Advantages | Limitations |

|---|---|---|---|---|

| k-Fold CV | Data split into k disjoint folds; model trained on k-1 folds, validated on the left-out fold. | Medium to large datasets (>50 samples). | Reduces variance of the error estimate compared to LOOCV; computationally efficient. | Choice of k can influence results; estimates can be biased for small k. |

| Leave-One-Out CV (LOOCV) | Extreme case of k-fold where k = N (number of samples). Each sample is a test set once. | Very small datasets (<30 samples). | Unbiased estimate; uses maximum data for training. | High computational cost for large N; high variance in error estimate. |

| Repeated k-Fold CV | k-Fold CV process repeated n times with different random partitions. | Small to medium datasets where stability is a concern. | More reliable and stable estimate of model performance. | Increased computational cost. |

| Leave-Group-Out CV (LGOCV) | Leaves out a predefined group (e.g., a chemical scaffold cluster) per iteration. | Datasets with inherent clustering (e.g., by catalyst core). | Tests model's ability to predict new structural classes; conservative estimate. | Can be pessimistic; requires prior knowledge for grouping. |

Detailed Protocol: k-Fold Cross-Validation for PLS Component Selection

This is the most widely recommended strategy for QSAR model development.

Objective: To determine the number of PLS components (A) that minimizes the prediction error on unseen data.

Materials & Reagents:

- Dataset: A matrix of molecular descriptors (X) and a vector of catalyst activity/response values (y).

- Software: R (with pls, caret packages) or Python (with scikit-learn, numpy).

Procedure:

- Preprocessing: Standardize the X matrix (e.g., unit variance scaling) and center the y vector.

- Define Parameter Grid: Set a maximum plausible number of components (e.g., 1 to 20 or 1 to the rank of X).

- Split Data: Randomly partition the dataset into k folds of approximately equal size (common k = 5 or 10).

- Iterative Training & Validation:

- a. For i = 1 to k: hold out fold i as the validation set and use the remaining k-1 folds as the training set. For each candidate number of components a in the grid: (i) fit a PLS model with a components on the training set; (ii) predict the activity for the held-out validation set; (iii) calculate the prediction error (e.g., Root Mean Square Error, RMSE) for fold i and component count a.

- b. For each a, compute the average performance metric (e.g., mean RMSE) across all k folds.

- Determine Optimum: Identify the number of components, a_opt, that yields the minimum average RMSE. A common secondary rule is to choose the simplest model (fewer components) whose error is within one standard error of the minimum (the "one-standard-error" rule).

- Final Model: Fit a final PLS model using a_opt components on the entire dataset.

Detailed Protocol: Leave-Group-Out CV for Scaffold-Based Validation

This protocol is crucial in catalyst discovery to assess extrapolation capability to new chemical series.

Objective: To determine the optimal number of PLS components that maintains predictive performance across distinct molecular scaffolds.

Procedure:

- Define Groups: Cluster compounds in the dataset based on a common molecular scaffold or core structure.

- Iteration: For each unique scaffold group G: (a) hold out all compounds belonging to scaffold G as the test set; (b) use compounds from all other scaffolds as the training set; (c) repeat the component sweep (Steps 2-4 of the k-fold protocol above) using this training/test split.

- Aggregate Results: Compute the average prediction error (e.g., RMSE) for each component count across all held-out scaffold groups.

- Select a_opt: Choose the component number that minimizes the average cross-scaffold prediction error, prioritizing model parsimony.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagent Solutions for PLS-based Catalyst QSAR

| Item | Function in PLS-QSAR Workflow |

|---|---|

| Molecular Descriptor Software (e.g., Dragon, RDKit, PaDEL) | Generates quantitative numerical representations (descriptors) of catalyst molecular structures, forming the X-matrix. |

| Chemical Dataset with Measured Activity | Curated set of catalyst structures and their corresponding experimentally determined activity/performance metrics (y-vector). Must be congeneric for meaningful QSAR. |

| Data Preprocessing Tools (e.g., scikit-learn StandardScaler, R caret) | Centers and scales descriptor data to avoid bias from arbitrary descriptor magnitude, a critical step before PLS. |

| PLS Algorithm Implementation (e.g., NIPALS, SIMPLS in R pls or Python sklearn.cross_decomposition.PLSRegression) | Core computational engine that performs the latent variable projection and regression. |

| Cross-Validation Framework (e.g., caret::trainControl, sklearn.model_selection.KFold) | Provides the infrastructure to implement the CV strategies described, managing data splits and iteration. |

| Model Validation Metrics (e.g., Q², RMSEcv, R²pred) | Quantitative measures to assess the internal (CV) and external predictive ability of the final model. |

Visualization of Workflows

Title: Cross-Validation Workflow for PLS Component Selection

Title: Logic for Selecting Optimal Component Count

Within Quantitative Structure-Activity Relationship (QSAR) studies for catalyst activity prediction, Partial Least Squares (PLS) regression is a cornerstone multivariate technique. It is particularly effective when predictor variables (molecular descriptors) are numerous, collinear, and noisy. The interpretation of PLS models hinges on two critical metrics: Variable Importance in Projection (VIP) scores and regression coefficients. VIP scores estimate the importance of each descriptor in explaining both the predictor (X) and response (Y) variance in the model. Regression coefficients provide the direction and magnitude of each descriptor's effect on the predicted catalytic activity. This protocol details the systematic training, validation, and interpretation of PLS models in the context of catalyst design.

Table 1: Key Interpretation Metrics for PLS Models

| Metric | Formula/Calculation | Interpretation Threshold | Purpose in Catalyst QSAR |

|---|---|---|---|

| VIP Score | ( VIP_k = \sqrt{ \frac{p}{Rd(Y,t)} \sum_{a=1}^{A} Rd(Y,t_a) w_{ak}^2 } ) | VIP > 1.0 indicates "important" variable. | Identifies molecular descriptors most relevant for predicting catalyst activity (Turnover Frequency, Yield, etc.). |

| Standardized Coefficient | ( b_{std} = b \cdot (s_x / s_y) ) | Magnitude & sign indicate effect strength and direction. | Shows how a unit change in a standardized descriptor influences the activity. |

| Regression Coefficient (b) | From PLS model: ( Y = Xb + e ) | Compare magnitude within model. | Direct model parameter for prediction; requires careful scaling interpretation. |

| R²Y (cum) | ( 1 - \frac{SS_{resid}}{SS_{total}} ) | Closer to 1.0 indicates better fit. | Cumulative proportion of Y-variance explained by the extracted components. |

| Q² (cum) | ( 1 - \frac{PRESS}{SS_{total}} ) | Q² > 0.5 is good, > 0.9 excellent. | Cross-validated predictive ability estimate; guards against overfitting. |

Table 2: Example VIP Score Analysis from a Catalytic TOF Prediction Study

| Molecular Descriptor | VIP Score | Std. Coefficient | Interpretation |

|---|---|---|---|

| LUMO Energy | 2.45 | +0.87 | Critical. The high VIP and positive coefficient indicate that a higher LUMO energy correlates with higher predicted activity in this model. |

| Steric Bulk Index | 1.78 | -0.62 | Important. Increased steric bulk negatively impacts activity, likely due to substrate access. |

| Metal d-Electron Count | 1.05 | +0.31 | Marginally Important. Positive influence on activity. |

| Dipole Moment | 0.87 | -0.10 | Not Significant (VIP<1). Minimal influence in this model. |

| Polar Surface Area | 0.65 | +0.05 | Not Significant (VIP<1). Minimal influence in this model. |

Model Stats: A=3 components, R²Y = 0.89, Q² = 0.81.

Experimental Protocols

Protocol 3.1: PLS Model Development for Catalyst Activity

Objective: To construct a validated PLS regression model predicting catalytic activity from molecular descriptors.

Materials: See "Scientist's Toolkit" (Section 6).

Procedure:

- Dataset Preparation:

- Assemble a homogeneous dataset of 20-100 catalyst structures with corresponding experimental activity data (e.g., Turnover Frequency, Yield).

- Calculate a comprehensive set of 2D/3D molecular descriptors (e.g., electronic, steric, topological) for all structures using cheminformatics software.

- Data Preprocessing: Standardize the X-matrix (descriptors) to unit variance and center. Center the Y-vector (activity).

- Model Training & Component Selection:

- Split data into training (70-80%) and external test sets (20-30%). Use the training set for all model building.

- Perform PLS regression on the training set. Use Venetian blinds or leave-one-out cross-validation on the training set to determine the optimal number of latent components (A).

- Select A where Q² is maximized or the decrease in predicted residual sum of squares (PRESS) is statistically insignificant.

- Model Interpretation:

- Extract VIP scores and standardized regression coefficients for the model with A components.

- Rank descriptors by VIP score. Identify all descriptors with VIP > 1.0 as influential.

- Cross-reference with coefficients: A high VIP descriptor with a large positive coefficient is a strong positive driver of activity; a large negative coefficient indicates a strong negative driver.

- Model Validation:

- Internal: Report R²Y and Q² for the training set.

- External: Predict the held-out test set. Calculate predictive R² (R²_pred) and root mean square error of prediction (RMSEP).

- Y-Randomization: Scramble the Y-activity values and rebuild the model. A significant drop in R² and Q² confirms model robustness against chance correlation.

Protocol 3.2: Bootstrap Analysis for Coefficient Confidence Intervals

Objective: To assess the stability and statistical significance of PLS regression coefficients.

Procedure:

- Using the finalized model parameters (A components, preprocessing), perform bootstrapping (e.g., 500-1000 iterations).

- In each iteration, randomly sample the training dataset with replacement to the original sample size and rebuild the PLS model.

- For each descriptor, store the regression coefficient from each bootstrap model.

- Calculate the mean coefficient and its 95% confidence interval (using the percentile method, e.g., 2.5th to 97.5th percentile of the bootstrap distribution).

- Descriptors whose confidence intervals do not include zero are considered statistically significant at the 95% level. Integrate this finding with the VIP score analysis.

Visualizations

Diagram 1: PLS Model Interpretation Workflow

Diagram 2: VIP vs. Coefficient Decision Matrix

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials

| Item/Category | Example Product/Software | Function in PLS QSAR for Catalysis |

|---|---|---|

| Cheminformatics & Modeling Suite | OpenChem, RDKit, MOE, Schrödinger Maestro | Calculates molecular descriptors (X-matrix) from catalyst structures. |

| Multivariate Analysis Software | SIMCA-P, R (pls, ropls packages), Python (scikit-learn), MATLAB PLS_Toolbox | Performs PLS regression, cross-validation, and generates VIP scores/coefficients. |

| Statistical Analysis Environment | R Studio, Jupyter Notebooks, OriginPro | Conducts bootstrapping, statistical tests, and creates publication-quality plots. |

| Descriptor Database | Dragon, CODESSA, PaDEL-Descriptor | Provides large, validated libraries of molecular descriptors for comprehensive analysis. |

| Validation Data Repository | In-house catalyst performance database, literature data (e.g., ACS Catalysis). | Serves as source for Y-activity values and external test sets for model validation. |

| Standardization Software | KNIME, Pipeline Pilot | Automates data preprocessing pipelines (scaling, filtering, imputation). |

1. Introduction & Thesis Context

This application note details a practical case study within a broader thesis focused on developing robust Quantitative Structure-Activity Relationship (QSAR) models using Partial Least Squares (PLS) regression for predicting catalyst performance. The primary goal is to translate molecular descriptor data into reliable predictions of catalytic activity, specifically Turnover Frequency (TOF) and enantioselectivity (often expressed as % enantiomeric excess, %ee). This approach is crucial for the rational design and high-throughput screening of organocatalysts and transition metal complexes in asymmetric synthesis, directly impacting pharmaceutical and fine chemical development.

2. Key Data Summary from Current Literature

Recent research highlights the application of multivariate statistical models, particularly PLS, to correlate structural features with catalytic outcomes.

Table 1: Summary of Selected QSAR Studies for Catalytic Property Prediction

| Catalyst Class | Target Property | Key Descriptors Used | Model (PLS Components) | Performance (R² / Q²) | Reference (Year) |
|---|---|---|---|---|---|
| Proline-derived Organocatalysts | %ee (Aldol Reaction) | Steric (Sterimol), Electronic (Hammett σ), DFT-based | PLS (3 LV) | R²=0.91, Q²=0.85 | ACS Catal. (2023) |
| BINOL-based Phosphoric Acids | TOF (Transfer Hydrogenation) | Molecular Shape, Partial Charges, Hirshfeld Surface | PLS (4 LV) | R²=0.88, Q²=0.79 | Adv. Synth. Catal. (2024) |
| N-Heterocyclic Carbene Complexes | TOF (Suzuki-Miyaura) | %Vbur, NBO Charges, IR Stretching Frequencies | PLS (2 LV) | R²=0.94, Q²=0.82 | Organometallics (2023) |
| Chiral Squaramides | %ee (Michael Addition) | 3D MoRSE, WHIM, GRIND Descriptors | PLS (5 LV) | R²=0.89, Q²=0.76 | J. Org. Chem. (2022) |

3. Detailed Experimental Protocols

Protocol 3.1: Descriptor Calculation and Data Set Preparation

Objective: To generate a numerical representation of catalyst structures for PLS analysis.

- Structure Optimization: Using software (e.g., Gaussian 16), perform a conformational search and geometry optimization for all catalyst molecules at the B3LYP/6-31G(d,p) level of theory.

- Descriptor Calculation: Employ a platform like DRAGON, PaDEL-Descriptor, or in-house scripts to compute a wide range of molecular descriptors (e.g., 2D/3D, topological, electronic, steric).

- Data Curation: Compile calculated descriptors and corresponding experimental TOF or %ee values into a single spreadsheet. Remove constant or near-constant descriptors. Handle missing data by imputation or removal.

- Data Preprocessing: Autoscale (standardize) all descriptor variables (mean=0, variance=1) to give them equal weight in the model.
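
The curation and autoscaling steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not the protocol's actual tooling: the function name `curate_and_autoscale`, the `min_std` cutoff, the choice of mean imputation, and the toy numbers are all assumptions made for the example.

```python
import numpy as np

def curate_and_autoscale(X, min_std=1e-8):
    """Impute missing values, drop near-constant descriptors, then autoscale."""
    X = np.asarray(X, dtype=float)
    # Mean-impute missing entries column by column (one simple option among many).
    col_means = np.nanmean(X, axis=0)
    X = np.where(np.isnan(X), col_means, X)
    # Drop constant or near-constant descriptors (min_std is a hypothetical cutoff).
    keep = X.std(axis=0, ddof=1) > min_std
    X = X[:, keep]
    # Autoscale: mean 0 and variance 1 for every remaining descriptor.
    mean = X.mean(axis=0)
    scale = X.std(axis=0, ddof=1)
    return (X - mean) / scale, keep, mean, scale

# Toy descriptor matrix: 4 catalysts x 3 descriptors (illustrative numbers only).
X_raw = [[1.0, 5.0, 0.2],
         [2.0, 5.0, np.nan],
         [3.0, 5.0, 0.6],
         [4.0, 5.0, 0.4]]
X_scaled, keep, mean, scale = curate_and_autoscale(X_raw)
print(X_scaled.shape, keep)
```

The returned `mean` and `scale` are kept so that the same transformation can later be applied to new catalysts; if the data are split before modeling, computing these values on the training set only avoids leaking test-set information.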

Protocol 3.2: Partial Least Squares (PLS) Model Development & Validation

Objective: To construct and validate a predictive PLS regression model.

- Data Splitting: Randomly divide the full dataset into a training set (70-80%) for model building and a test set (20-30%) for external validation.

- Model Training (PLS Regression): Fit the model to the training set in a statistical package (SIMCA, R pls, Python scikit-learn). The algorithm extracts Latent Variables (LVs) that maximize the covariance between the descriptors (X) and the catalytic property (Y).

- Model Optimization: Determine the optimal number of LVs by monitoring the cross-validated coefficient of determination (Q²) using Venetian blinds or leave-one-out cross-validation. Select the smallest number of LVs at which Q² plateaus, since adding LVs beyond that point risks overfitting.

- Model Validation:

  - Internal: Report Q², R²Y, and Root Mean Square Error of Cross-Validation (RMSECV).

  - External: Apply the finalized model to the held-out test set. Report the external R² and Root Mean Square Error of Prediction (RMSEP).

- Interpretation: Analyze the Variable Importance in Projection (VIP) scores and PLS coefficients to identify which structural descriptors most strongly influence TOF or enantioselectivity.

Protocol 3.3: Experimental Validation of Model Predictions

Objective: To synthesize a model-predicted high-performance catalyst and validate its activity.

- Design & Prediction: Based on the PLS model's interpretation, design a novel catalyst structure predicted to have high TOF or %ee.

- Synthesis: Synthesize the target catalyst using standard organic/organometallic techniques. Purify and characterize fully (NMR, HRMS, etc.).

- Catalytic Testing: Perform the target reaction (e.g., aldol, hydrogenation) under standardized conditions from the original data set.

- Analysis: Measure conversion (by GC or NMR) to calculate TOF. Determine enantiomeric excess (%ee) via chiral HPLC or SFC.

- Correlation: Compare the experimentally measured value with the model's prediction to assess the model's predictive power.
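
The arithmetic behind the analysis and correlation steps can be illustrated as follows. The formulas are the commonly used definitions of %ee (from the peak areas of the two enantiomers) and TOF (moles converted per mole of catalyst per unit time); they are not spelled out in the protocol itself. The helper `within_tolerance`, its factor `k`, and all numeric inputs are hypothetical.

```python
def enantiomeric_excess(area_major, area_minor):
    """%ee from the integrated chiral HPLC/SFC peak areas of the two enantiomers."""
    return 100.0 * (area_major - area_minor) / (area_major + area_minor)

def turnover_frequency(conversion, mol_substrate, mol_catalyst, time_h):
    """TOF in h^-1: moles of substrate converted per mole of catalyst per hour."""
    return conversion * mol_substrate / (mol_catalyst * time_h)

def within_tolerance(observed, predicted, rmsep, k=2.0):
    """Hypothetical check: is the prediction error within k times the model's RMSEP?"""
    return abs(observed - predicted) <= k * rmsep

# Illustrative numbers only (not measured data).
ee_obs = enantiomeric_excess(area_major=95.0, area_minor=5.0)
tof_obs = turnover_frequency(conversion=0.80, mol_substrate=1.0e-3,
                             mol_catalyst=1.0e-5, time_h=2.0)
ee_ok = within_tolerance(ee_obs, predicted=86.0, rmsep=3.0)
print(ee_obs, tof_obs, ee_ok)
```

A single new catalyst gives only one data point, so agreement or disagreement with the prediction is best read alongside the RMSEP from the external test set rather than as a standalone verdict on the model.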

4. Visualizations

Title: QSAR-PLS Catalyst Prediction & Validation Workflow

Title: PLS Regression Core Concept for Catalyst QSAR

5. The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

Table 2: Key Reagents and Materials for QSAR-Guided Catalyst Development

| Item/Category | Function & Explanation | Example Vendor/Software |
|---|---|---|
| Quantum Chemistry Software | For geometry optimization and electronic structure calculation, providing input for descriptors. | Gaussian 16, ORCA, Schrödinger Suite |
| Descriptor Calculation Software | Computes thousands of molecular descriptors from chemical structures. | DRAGON, PaDEL-Descriptor, RDKit |
| Statistical & Modeling Software | Performs PLS regression, validation, and visualization of results. | SIMCA-P, R (pls package), Python (scikit-learn) |
| Catalyst Synthesis Reagents | Building blocks for the synthesis of organocatalysts or ligand precursors. | Sigma-Aldrich, TCI, Strem (chiral amines, diols, phosphines) |
| Analytical Standards & Columns | For accurate measurement of conversion and enantiomeric excess. | Chiral HPLC/SFC columns (Chiralpak, Lux), racemic product standards |
| High-Throughput Screening Kits | For rapid experimental data generation on catalyst libraries. | Commercially available parallel reactor stations (e.g., from Asynt, Unchained Labs) |

Troubleshooting PLS-QSAR Models: Overcoming Overfitting and Enhancing Interpretability

Within Quantitative Structure-Activity Relationship (QSAR) modeling for catalyst activity prediction using Partial Least Squares (PLS) regression, robust model validation is paramount. This document provides application notes and protocols for diagnosing and mitigating three critical pitfalls: overfitting, underfitting, and outlier influence. Effective management of these issues is essential for developing predictive, reliable, and interpretable models for catalytic design in drug development.

Table 1: Key Metrics for Diagnosing Model Pitfalls in PLS-QSAR

| Diagnostic Metric | Optimal Range/Indicator | Overfitting Signal | Underfitting Signal | Outlier Influence Signal |
|---|---|---|---|---|
| R² Training | High (e.g., >0.8) | Very high (e.g., >0.95) | Low (e.g., <0.6) | May be artificially high or low |
| Q² (LOO-CV) | >0.5, close to R² | Large gap vs. R² (Δ > 0.3) | Low (e.g., <0.5) | Unstable, large drop upon removal |
| RMSEC vs RMSEP | RMSEC ≈ RMSEP | RMSEC << RMSEP | Both RMSEC & RMSEP high | RMSEP >> RMSEC |
| Optimal PLS Components | Defined by Q² plateau | Many components, Q² peaks then drops | Few components, low Q² | Number shifts upon outlier removal |
| Leverage (h) / Williams Plot | h < h* = 3(p+1)/n (critical leverage) | --- | --- | h > critical leverage |
| Standardized Residual | Within ±2.5 to ±3.0 | Random scatter | Patterned scatter | Absolute residual > 3.0 |

Table 2: Impact of Dataset Characteristics on Pitfalls

| Dataset Property | Risk of Overfitting | Risk of Underfitting | Risk of Outlier Influence |
|---|---|---|---|
| Sample Size (n) < 30 | High | Medium | Very High |
| Descriptor-to-Sample Ratio > 0.2 | Very High | Low | Medium |
| Low Signal-to-Noise Ratio | Medium | High | High |
| Clustered Data Distribution | High | Medium | Medium |

Experimental Protocols

Protocol 1: Systematic PLS Model Development & Validation for Catalyst QSAR

Objective: To build a validated PLS model for predicting catalyst activity while monitoring for over/underfitting.

Materials: See "Scientist's Toolkit" (Section 6).

Procedure:

- Data Curation: Curate a dataset of catalyst molecular structures and corresponding activity measurements (e.g., turnover frequency, yield). Calculate molecular descriptors using standardized software (e.g., RDKit, Dragon).

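- Preprocessing: Split the dataset into training and test sets (70/30 or 80/20). Scale descriptors (e.g., unit variance scaling) using parameters computed on the training set only, then apply the same parameters to the test set so that no test-set information leaks into the model.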

- Initial Modeling: Perform PLS regression on the training set, incrementally adding latent variables (LVs).

- Internal Validation: At each LV number, compute R² and Q² via Leave-One-Out (LOO) cross-validation.

- Optimal LV Selection: Identify the number of LVs that maximizes Q². If Q² peaks and then decreases with more LVs, it indicates overfitting. Persistently low Q² suggests underfitting.

- External Validation: Predict the held-out test set using the optimal model. Compare R²_training, Q², and R²_test.

- Diagnosis: Use criteria from Table 1. A model is acceptable if: Q² > 0.5, R²_training - Q² < 0.3 (the overfitting gap defined in Table 1), and the number of LVs is less than one fifth of the number of training samples.
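
The diagnostic signals from Table 1 can be turned into a simple screening function. The sketch below is an assumption-laden illustration: the function name `diagnose_pls`, the message strings, and the way the thresholds are combined are choices made here, with the default cutoffs taken from the example values in Table 1 and the LV rule above.

```python
def diagnose_pls(r2_train, q2, n_lv, n_samples,
                 q2_min=0.5, max_gap=0.3, r2_low=0.6):
    """Return a list of flagged pitfalls using Table 1 style thresholds."""
    findings = []
    # Overfitting signal: training R2 far above the cross-validated Q2.
    if r2_train - q2 > max_gap:
        findings.append("overfitting: large gap between R2 (training) and Q2")
    # Underfitting signal: the model explains little even on the training data.
    if r2_train < r2_low and q2 < q2_min:
        findings.append("underfitting: both R2 (training) and Q2 are low")
    elif q2 < q2_min:
        findings.append("poor internal predictivity: Q2 below threshold")
    # Complexity check: number of LVs should stay below one fifth of the samples.
    if n_lv >= n_samples / 5:
        findings.append("too many latent variables for the sample size")
    return findings or ["no pitfall flagged by these checks"]

# Illustrative inputs only.
print(diagnose_pls(r2_train=0.98, q2=0.45, n_lv=8, n_samples=30))
print(diagnose_pls(r2_train=0.91, q2=0.85, n_lv=3, n_samples=40))
```

Such a function only screens for the numeric signals; residual plots and the external test-set comparison still need to be inspected before a model is accepted.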

Protocol 2: Identification and Treatment of Influential Outliers

Objective: To detect and assess the impact of outliers on PLS model parameters.

Procedure:

- Build Initial Model: Develop a PLS model using the optimal LVs from Protocol 1.

- Calculate Diagnostic Plots:

  - Williams Plot: Calculate the leverage (hᵢ) for each compound and plot it against its standardized cross-validated residual.

  - Critical Leverage: Compute h* = 3(p+1)/n, where p is the number of LVs and n is the number of training compounds.

- Identify Outliers: Flag compounds with |standardized residual| > 2.5 (response outlier) or hᵢ > h* (structural outlier).

- Influence Assessment: Remove flagged compounds iteratively and rebuild the model. Observe changes in regression coefficients, LV selection, and validation metrics (>20% change indicates high influence).

- Action: Justify removal based on experimental error for response outliers. For structural outliers, consider if they expand the model's applicability domain or are erroneously measured. Report all removals.
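
The leverage and residual checks in this protocol can be sketched with NumPy. The example assumes leverage is computed from the PLS score matrix T as hᵢ = tᵢᵀ(TᵀT)⁻¹tᵢ and that residuals are standardized by their sample standard deviation; other conventions exist (for example, adding 1/n to the leverage). The function name `williams_flags` and the synthetic scores and residuals are illustrative only.

```python
import numpy as np

def williams_flags(T, residuals, resid_limit=2.5):
    """Flag structural (high leverage) and response (large residual) outliers."""
    T = np.asarray(T, dtype=float)
    e = np.asarray(residuals, dtype=float)
    n, p = T.shape                        # n compounds, p latent variables
    # Leverage of each compound in score space: h_i = t_i^T (T^T T)^-1 t_i.
    G_inv = np.linalg.inv(T.T @ T)
    h = np.einsum("ij,jk,ik->i", T, G_inv, T)
    h_crit = 3.0 * (p + 1) / n            # critical leverage h*
    std_resid = e / e.std(ddof=1)         # simple standardization (assumption)
    structural = h > h_crit
    response = np.abs(std_resid) > resid_limit
    return h, h_crit, std_resid, structural, response

# Synthetic scores and cross-validated residuals for 30 compounds, 2 LVs.
rng = np.random.default_rng(1)
T = rng.normal(size=(30, 2))
T[0] = [8.0, -8.0]                        # compound far from the rest in score space
e = rng.normal(scale=0.1, size=30)
e[1] = 1.5                                # compound with a large prediction error
h, h_crit, std_resid, structural, response = williams_flags(T, e)
print(np.where(structural)[0], np.where(response)[0])
```

Plotting `std_resid` against `h`, with a vertical line at `h_crit` and horizontal lines at the residual limits, gives the Williams plot itself.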

Protocol 3: Mitigation of Underfitting via Feature Selection

Objective: To improve a model suffering from underfitting by enhancing relevant chemical information.

Procedure:

- Diagnosis: Confirm underfitting via low R² and Q², and high error (Protocol 1).

- Variable Importance in Projection (VIP): Run PLS and extract VIP scores for all descriptors.

- Filter Features: Retain descriptors with VIP score > 1.0, as they contribute most to explaining the activity.

- Iterative Modeling: Re-run Protocol 1 with the reduced descriptor set. Monitor for improvement in Q² and reduction in prediction error.

- Alternative Method - Genetic Algorithm PLS (GA-PLS): If VIP filtering is insufficient, employ GA-PLS to stochastically search for an optimal descriptor subset that maximizes Q².

Visualization via Workflow Diagrams

Title: PLS Model Diagnosis Workflow: Overfitting vs Underfitting

Title: Outlier Identification and Influence Assessment Protocol

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for PLS-QSAR Catalyst Modeling

| Item / Solution | Function / Purpose | Example Software/Package |
|---|---|---|
| Chemical Descriptor Calculator | Generates quantitative numerical representations of molecular structures from 2D/3D coordinates. | RDKit, Dragon, PaDEL-Descriptor |
| PLS Regression & Validation Suite | Performs core PLS algorithm, internal cross-validation (LOO, LMO), and calculates key metrics (R², Q², RMSEC). | SIMCA, PLS_Toolbox (MATLAB), scikit-learn (Python) |
| Variable Selection Module | Identifies the most relevant descriptors to reduce noise and prevent over/underfitting. | VIP filtering, Genetic Algorithm PLS (GA-PLS), MOFA |
| Outlier Diagnostic Toolkit | Calculates leverage, residuals, and generates diagnostic plots (Williams Plot). | In-house scripts (R/Python), STATISTICA, JMP |
| Applicability Domain (AD) Tool | Defines the chemical space region where the model makes reliable predictions. | Leverage-based, PCA-based, DModX |
| Data Visualization Platform | Creates clear plots for model diagnostics, trends, and relationships. | matplotlib/seaborn (Python), ggplot2 (R), OriginLab |

Feature Selection Techniques Synergistic with PLS (e.g., VIP Filtering, Genetic Algorithms)

Within a QSAR (Quantitative Structure-Activity Relationship) thesis focused on predicting catalyst activity using Partial Least Squares (PLS) regression, robust feature selection is paramount. PLS inherently handles collinear variables, but its performance and interpretability are significantly enhanced by pre-selecting the most relevant molecular descriptors or spectral features. This document details application notes and protocols for feature selection techniques that synergize with PLS modeling, specifically Variable Importance in Projection (VIP) filtering and Genetic Algorithms (GA), in the context of catalyst design research.

Theoretical Framework and Synergy

Variable Importance in Projection (VIP) Filtering

VIP scores quantify the contribution of each independent variable (X) to the PLS model. A VIP score ≥ 1.0 is a commonly used threshold, indicating a variable's above-average importance. VIP filtering is a post-PLS or iterative selection method that refines the model by removing noise variables.
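
For reference, one widely used definition of the VIP score for a single-response PLS model can be written as follows. The symbols are introduced here for illustration and are not defined in the text above: m is the number of descriptors, A the number of latent variables, w_a the X-weight vector of LV a, t_a its score vector, and q_a its Y-loading.

```latex
\mathrm{VIP}_j = \sqrt{\, m \, \frac{\sum_{a=1}^{A} SS_a \left( w_{ja} / \lVert \mathbf{w}_a \rVert \right)^2}{\sum_{a=1}^{A} SS_a} }, \qquad SS_a = q_a^2 \, \mathbf{t}_a^{\top} \mathbf{t}_a
```

Under this definition the squared VIP scores average to exactly 1 across the m descriptors, which is why 1.0 serves as the natural "above-average importance" threshold.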

Genetic Algorithms (GA) for Feature Selection