Machine Learning for Catalyst Optimization: A Data-Driven Approach to Balancing Performance and Economic Viability

Abstract

This article explores the transformative role of machine learning (ML) in streamlining the discovery and optimization of catalysts, with a specific focus on integrating techno-economic criteria for sustainable and cost-effective chemical processes. We cover foundational ML concepts and the critical need for data-driven approaches in heterogeneous catalysis. The article details methodologies like artificial neural networks (ANNs) and ensemble models for predicting catalyst performance and process yields, demonstrating their application in reactions such as VOC oxidation and biofuel production. Furthermore, it addresses troubleshooting and optimization strategies, including feature importance analysis and hyperparameter tuning, to enhance model reliability. Finally, we discuss validation frameworks and comparative techno-economic assessments, highlighting how ML accelerates the development of high-performance, economically viable catalysts for industrial applications.

The Foundation of ML in Catalysis: Core Concepts and the Economic Imperative

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What are the most effective machine learning models for predicting catalytic activity, and how do I choose one?

Machine learning model selection depends on your specific data characteristics and prediction goals. Based on current research, the following models have demonstrated strong performance in heterogeneous catalysis applications:

- Artificial Neural Networks (ANNs) are particularly effective for modeling the non-linear relationships common in catalytic processes and have been successfully used to predict hydrocarbon conversion in VOC oxidation studies [1].

- Random Forests (RF) and other ensemble methods provide high interpretability and have been used for feature attribution, aiding in the design of catalysts like transition metal phosphides [2].

- Generative Adversarial Networks (GANs) are emerging as powerful tools for exploring uncharted material spaces and generating novel candidate catalyst structures by learning from existing datasets [2] [3].

For initial projects, start with Random Forests or ANNs for property prediction. For generative design of new catalyst materials, explore GANs or Variational Autoencoders (VAEs) [3].

Q2: My ML model's predictions do not align with my experimental results. What could be wrong?

Misalignment between prediction and experiment is a common challenge. We recommend investigating the following areas:

- Check for Data Outliers: Use statistical methods like Principal Component Analysis (PCA) and feature importance analysis (e.g., SHAP) to detect and understand outliers in your training data that may be skewing the model [2].

- Re-evaluate Feature Selection: The electronic structure of a catalyst, particularly its d-band descriptors (d-band center, d-band filling, and d-band width), is critical for determining adsorption energies and catalytic performance. Ensure your model includes these key physical and electronic descriptors [2].

- Verify Data Consistency: Manually extracting catalyst data from the literature is tedious and error-prone. Inconsistent or non-standardized training data is a known hurdle for reliable inverse catalyst design [2].
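As a rough illustration of the d-band descriptors mentioned above, the sketch below computes the center (first moment), width, and filling from a projected density of states. The Gaussian DOS is synthetic, the function names are hypothetical, and the width is taken here as the square root of the second central moment, which is one common convention and an assumption on our part.

```python
import math

def trapezoid(y, x):
    """Integrate y(x) on an ascending grid with the trapezoidal rule."""
    return sum((x[i + 1] - x[i]) * (y[i] + y[i + 1]) / 2.0 for i in range(len(x) - 1))

def d_band_descriptors(energies, dos):
    """Return (center, width, filling) of a d-projected DOS.

    energies: ascending grid in eV, measured relative to the Fermi level (E_F = 0).
    dos: d-projected density of states on the same grid.
    """
    norm = trapezoid(dos, energies)
    center = trapezoid([e * d for e, d in zip(energies, dos)], energies) / norm
    second = trapezoid([(e - center) ** 2 * d for e, d in zip(energies, dos)], energies) / norm
    width = math.sqrt(second)  # assumed convention: sqrt of second central moment
    # Filling: fraction of d states lying at or below the Fermi level.
    occ_e = [e for e in energies if e <= 0.0]
    filling = trapezoid(dos[:len(occ_e)], occ_e) / norm
    return center, width, filling

# Synthetic example: Gaussian d-band centered 2 eV below E_F with sigma = 1 eV.
energies = [(i - 1000) * 0.01 for i in range(1501)]  # -10 eV to +5 eV
dos = [math.exp(-((e + 2.0) ** 2) / 2.0) for e in energies]
center, width, filling = d_band_descriptors(energies, dos)
```

In practice the DOS would come from a DFT calculation rather than an analytic curve; the same three numbers can then be added as feature columns.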

Q3: How can I integrate economic criteria into my machine learning-driven catalyst optimization?

You can directly incorporate economic objectives into the optimization framework. One demonstrated approach involves:

- Developing a highly accurate predictive model (e.g., an Artificial Neural Network) for your target catalytic performance metric, such as hydrocarbon conversion [1].

- Using this model as a "digital twin" within an optimization loop. The optimization algorithm (e.g., Compass Search) is then tasked with finding input variables that minimize a combined cost function, which includes both the catalyst cost and the energy consumption required to achieve a target conversion level [1]. Studies show that in such optimizations, catalyst cost often dominates the objective function, leading to the selection of the most economically viable option [1].
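The optimization loop described above can be sketched with a basic compass (coordinate pattern) search. This is a minimal version under stated assumptions: `combined_cost` is a hypothetical smooth stand-in for the ANN-derived cost, and all coefficients are invented for illustration, not taken from [1].

```python
def compass_search(f, x0, step=1.0, tol=1e-6, max_iter=100_000):
    """Minimize f by polling +/- step along each coordinate.

    Moves to the first improving trial point; halves the step when no
    coordinate direction improves; stops once the step falls below tol.
    """
    x = list(x0)
    fx = f(x)
    for _ in range(max_iter):
        if step < tol:
            break
        improved = False
        for i in range(len(x)):
            for sign in (1.0, -1.0):
                trial = list(x)
                trial[i] += sign * step
                f_trial = f(trial)
                if f_trial < fx:
                    x, fx, improved = trial, f_trial, True
                    break
            if improved:
                break
        if not improved:
            step *= 0.5
    return x, fx

def combined_cost(x):
    """Hypothetical objective: catalyst cost plus energy needed to hit the target conversion."""
    loading_g, calcination_c = x
    if loading_g <= 0.0:
        return float("inf")  # non-physical loading
    catalyst_cost = 12.0 * loading_g  # assumed cost per gram
    # Assumed surrogate: less catalyst or off-optimum calcination needs more energy.
    energy_cost = 5.0 + 2.0 / loading_g + 1e-4 * (calcination_c - 450.0) ** 2
    return catalyst_cost + energy_cost

best_x, best_cost = compass_search(combined_cost, [1.0, 600.0])
```

With a real surrogate, `combined_cost` would call the trained network instead of the closed-form expression used here.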

Troubleshooting Common Experimental Issues

Issue: Poor Reproducibility in Catalyst Synthesis and Performance

| Potential Cause | Diagnostic Check | Corrective Action |

|---|---|---|

| Inconsistent Precipitant Mixing | Review synthesis protocol for stirring rate, time, and addition method. | Ensure continuous stirring for a fixed period (e.g., 1 hour) at room temperature during precursor precipitation [1]. |

| Incomplete Washing of Precipitate | Check the pH of the washing liquor. | Wash the precipitate with distilled water multiple times until the washing liquor achieves a near-neutral pH [1]. |

| Variation in Calcination Conditions | Verify furnace temperature calibration and atmosphere control. | Calcine the precursor in a furnace under a static air atmosphere, ensuring consistent temperature and duration across all batches [1]. |

Issue: Low Contrast in Data Visualization and Diagrams

Adhering to accessibility guidelines is crucial for creating clear and inclusive figures for publications and presentations.

- Requirement: Ensure the contrast ratio between text/foreground color and background color is at least 4.5:1 [4].

- Solution: Validate each foreground/background color pair with a contrast checker before finalizing figures, and adjust any pair that falls below the 4.5:1 threshold.
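A contrast check can also be scripted. The sketch below assumes the 4.5:1 figure refers to the WCAG contrast-ratio definition (relative luminance of sRGB colors); the function names are our own.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to its linear value (WCAG formula)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(hex_color):
    """Relative luminance of a '#RRGGBB' color."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)

def contrast_ratio(fg, bg):
    """Contrast ratio between two colors, from 1 (identical) to 21 (black on white)."""
    l1, l2 = relative_luminance(fg), relative_luminance(bg)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)

def passes_threshold(fg, bg, threshold=4.5):
    """True if the color pair meets the given minimum contrast ratio."""
    return contrast_ratio(fg, bg) >= threshold
```

For example, `passes_threshold("#767676", "#FFFFFF")` is true, while the slightly lighter gray `#777777` on white falls just short.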

Research Reagent Solutions for Cobalt-Based Catalyst Synthesis

The table below details key reagents used in the synthesis of Co₃O₄ catalysts via precipitation, as cited in ML-guided research [1].

| Research Reagent | Function in Synthesis | Example Source & Purity |

|---|---|---|

| Cobalt Nitrate Hexahydrate (Co(NO₃)₂·6H₂O) | Primary cobalt precursor providing Co²⁺ ions for precipitation. | Sigma-Aldrich, 98% [1] |

| Oxalic Acid Dihydrate (H₂C₂O₄·2H₂O) | Precipitating agent yielding a cobalt oxalate (CoC₂O₄) precursor. | Alfa Aesar, 98% [1] |

| Sodium Carbonate (Na₂CO₃) | Precipitating agent yielding a cobalt carbonate (CoCO₃) precursor. | Sigma-Aldrich, 99% [1] |

| Sodium Hydroxide (NaOH) | Precipitating agent yielding a cobalt hydroxide (Co(OH)₂) precursor. | Chimie-plus Laboratory, 99% [1] |

| Ammonium Hydroxide (NH₄OH) | Precipitating agent yielding a cobalt hydroxide (Co(OH)₂) precursor. | Chimie-plus Laboratory, 25-28% [1] |

| Urea (CO(NH₂)₂) | Precipitant precursor; decomposes in aqueous solution to facilitate precipitation of CoCO₃. | Not specified [1] |

Experimental Protocols & Workflows

Protocol 1: Synthesis of Co₃O₄ Catalysts via Precipitation [1]

- Solution Preparation: Prepare 100 mL of an aqueous solution of your chosen precipitating agent (e.g., 0.22 M oxalic acid).

- Precipitation: Under continuous stirring, add the precipitant solution to 100 mL of an aqueous Co(NO₃)₂·6H₂O solution (0.2 M). Continue stirring for 1 hour at room temperature.

- Separation & Washing: Separate the resulting precipitate by centrifugation. Wash with distilled water multiple times until the washing liquor reaches a near-neutral pH.

- Hydrothermal Aging (Optional): Transfer the precipitate to a Teflon-lined autoclave and heat in an oven at 80 °C for 24 hours.

- Drying & Calcination: Harvest the solid by centrifugation, wash, and dry at 80 °C overnight. Finally, calcine the dried precursor in a furnace under static air.
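The quantities in this protocol are easy to check numerically. The sketch below converts the stated concentrations and volumes into moles and masses to weigh, and shows that 0.22 M oxalic acid against 0.2 M cobalt nitrate is a 10% molar excess of precipitant (assuming the 1:1 Co²⁺:C₂O₄²⁻ stoichiometry of CoC₂O₄). The helper names are hypothetical, and the masses ignore reagent purity.

```python
# Molar masses in g/mol, computed from standard atomic weights.
MOLAR_MASS = {
    "cobalt_nitrate_hexahydrate": 291.03,  # Co(NO3)2·6H2O
    "oxalic_acid_dihydrate": 126.07,       # H2C2O4·2H2O
}

def moles(conc_mol_per_l, volume_ml):
    """Amount of solute in mol for a given molarity and volume."""
    return conc_mol_per_l * volume_ml / 1000.0

def mass_to_weigh(compound, conc_mol_per_l, volume_ml):
    """Mass in grams needed to prepare the solution (purity not corrected)."""
    return moles(conc_mol_per_l, volume_ml) * MOLAR_MASS[compound]

n_cobalt = moles(0.2, 100)     # 0.020 mol Co2+
n_oxalate = moles(0.22, 100)   # 0.022 mol oxalate
molar_excess = n_oxalate / n_cobalt - 1.0  # fractional excess of precipitant

m_cobalt_salt = mass_to_weigh("cobalt_nitrate_hexahydrate", 0.2, 100)  # about 5.82 g
m_oxalic_acid = mass_to_weigh("oxalic_acid_dihydrate", 0.22, 100)      # about 2.77 g
```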

Protocol 2: Machine Learning Workflow for Catalyst Optimization with Economic Criteria [1]

- Data Collection: Compile a dataset of various catalysts and their key properties (e.g., composition, surface area, d-band electronic features) and performance metrics (e.g., conversion, selectivity) [1] [2].

- Model Training & Selection: Train multiple supervised learning models (e.g., 600 ANN configurations, Random Forests) to map catalyst features to performance. Use techniques like k-fold cross-validation to select the best-performing model [1].

- Economic Objective Function Definition: Define an optimization function that combines catalyst cost and operational energy consumption required to meet a target performance (e.g., 97.5% conversion) [1].

- Optimization: Use an optimization algorithm (e.g., Compass Search, Bayesian Optimization) with the trained ML model to find the input catalyst properties that minimize the economic objective function [1] [2].
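The model-selection step can be illustrated with a small k-fold cross-validation loop. This is a sketch only: the two candidate "models" (a mean predictor and a one-feature least-squares line) are simple placeholders for the ANN and Random Forest candidates, and the data are synthetic.

```python
import math
import random

def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_idx, test_idx) pairs for k shuffled, non-overlapping folds."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i in range(k):
        train = [j for m, fold in enumerate(folds) if m != i for j in fold]
        yield train, folds[i]

def rmse(y_true, y_pred):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(y_true, y_pred)) / len(y_true))

def cross_val_rmse(fit, X, y, k=5, seed=0):
    """Average test RMSE over k folds; fit(X, y) must return a predict(x) callable."""
    scores = []
    for train, test in k_fold_indices(len(X), k, seed):
        predict = fit([X[i] for i in train], [y[i] for i in train])
        scores.append(rmse([y[i] for i in test], [predict(X[i]) for i in test]))
    return sum(scores) / len(scores)

def fit_mean(X, y):
    mu = sum(y) / len(y)
    return lambda x: mu

def fit_line(X, y):
    """Ordinary least squares on the first feature only."""
    n = len(X)
    mx, my = sum(x[0] for x in X) / n, sum(y) / n
    slope = sum((x[0] - mx) * (yi - my) for x, yi in zip(X, y)) / sum((x[0] - mx) ** 2 for x in X)
    return lambda x: my + slope * (x[0] - mx)

# Synthetic data: conversion rising linearly with temperature (illustrative only).
X = [[float(t)] for t in range(200, 400, 10)]
y = [0.4 * x[0] - 60.0 for x in X]
scores = {name: cross_val_rmse(fit, X, y) for name, fit in {"mean": fit_mean, "line": fit_line}.items()}
best = min(scores, key=scores.get)
```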

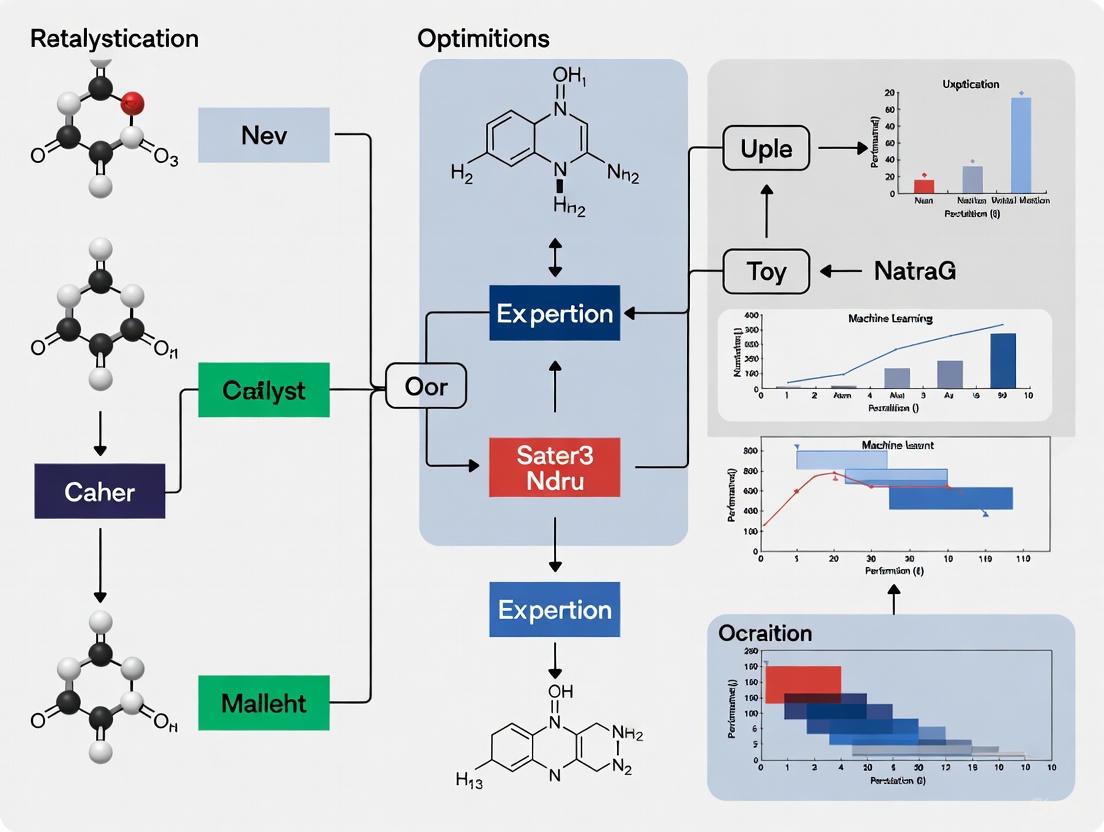

Workflow Visualization

ML-Driven Catalyst Design and Optimization

Electronic Structure Descriptors in Catalyst Design

Troubleshooting Guide: Techno-Economic Analysis in ML-Guided Catalyst Development

Frequently Asked Questions (FAQs)

1. What is the primary goal of integrating techno-economic analysis (TEA) with machine learning (ML) in catalyst development?

The primary goal is to translate research gains into potential economic and commercial advances by evaluating production costs based on current performance and established improvement targets. This helps in assessing the potential economic feasibility of a process configuration and determining the potential for near-, mid-, and long-term deployment success [5].

2. Our ML model for catalyst performance shows high accuracy, but the economic projections are unrealistic. What could be wrong?

This common issue often arises from a disconnect between the model's input variables and real-world economic constraints. Ensure your optimization framework includes both catalyst costs and energy consumption as minimization targets. For instance, in cobalt-based catalyst optimization for VOC oxidation, the analysis selected the cheapest catalyst when economic criteria were properly integrated, showing practically negligible influence of energy cost [1].

3. Which ML algorithms are most effective for catalyst optimization with economic criteria?

Artificial Neural Networks (ANNs) are particularly effective due to the nonlinear nature of chemical processes. Research has successfully used hundreds of ANN configurations alongside supervised regression algorithms to model hydrocarbon conversion and optimize input variables to minimize both catalyst costs and energy consumption for target conversion rates [1].

4. How can we validate that our ML-guided catalyst design is economically viable?

Use a structured optimization analysis that employs your best-performing neural networks to simultaneously minimize catalyst costs and energy consumption for reaching target conversion levels (e.g., 97.5% conversion). Compare your results with existing commercial catalysts and published results to validate economic viability [1].

5. What are the key techno-economic criteria to consider when evaluating catalysts for VOC oxidation?

The essential criteria include catalyst costs, energy consumption required to achieve target conversion (e.g., 97.5%), and the combined cost of catalyst and energy. The optimization should select variables that minimize these factors while maintaining performance [1].

Common Experimental Issues & Solutions

| Problem | Symptoms | Possible Causes | Solution Steps |

|---|---|---|---|

| Economic Model Mismatch | ML predictions don’t align with cost projections; optimal catalyst is commercially unviable. | Input variables lack economic weighting; energy costs not properly quantified. | 1. Integrate catalyst cost and energy consumption directly into the optimization objective function [1]. 2. Use an optimization algorithm such as Compass Search to minimize combined costs [1]. 3. Validate against known commercial catalyst economic data. |

| Poor Model Generalization | Model works on training data but fails with new catalyst compositions or conditions. | Overfitting; insufficient feature diversity in training data; inadequate validation. | 1. Increase dataset size with diverse catalyst properties [1]. 2. Implement ensemble methods combining multiple ML algorithms [1]. 3. Use automated ML (AutoML) for robust feature engineering and model selection [6]. |

| Inconclusive Optimization | Optimization analysis fails to identify a clear "best" catalyst based on properties. | Conflicting property-performance relationships; inadequate physical property characterization. | 1. Expand characterization to include more intrinsic properties (e.g., electronic structure, morphology) [1]. 2. Apply explainable AI to identify key performance factors [1]. 3. Cross-reference ML results with traditional kinetic studies. |

| Automated ML Pipeline Failure | Automated ML jobs fail without clear error messages; pipeline runs stall. | Resource constraints; data formatting issues; software dependency conflicts. | 1. Check child job logs and std_log.txt for detailed error traces [7]. 2. For pipeline runs, identify failed nodes marked in red for specific error messages [7]. 3. Verify data preprocessing and normalization steps in the AutoML pipeline [6]. |

Experimental Protocol: ML-Guided Catalyst Development with TEA

This protocol outlines the methodology for developing and evaluating cobalt-based catalysts for VOC oxidation, integrating machine learning with techno-economic analysis, as derived from recent research [1].

1. Catalyst Preparation via Precipitation

- Materials: Cobalt nitrate hexahydrate (Co(NO₃)₂·6H₂O); precipitating agents (oxalic acid, sodium carbonate, sodium hydroxide, ammonium hydroxide, urea).

- Procedure:

- Prepare 100 mL aqueous solution of precipitating agent (e.g., 0.22 M H₂C₂O₄·2H₂O).

- Add this solution to 100 mL of Co(NO₃)₂·6H₂O (0.2 M) solution under continuous stirring for 1 hour at room temperature.

- Separate the precipitate by centrifugation and wash with distilled water repeatedly until washing liquor reaches near-neutral pH.

- Transfer the precipitate to a Teflon-lined autoclave and hydrothermally treat at 80°C for 24 hours.

- Recover the precursor by centrifugation, wash, and dry overnight at 80°C.

- Calcine the dried precursor in a static air atmosphere to obtain the final Co₃O₄ catalyst.

2. Data Collection for ML Modeling

- Performance Data: Collect hydrocarbon conversion data for target VOCs (toluene, propane) across different experimental conditions.

- Catalyst Properties: Characterize physical and chemical properties including surface area, morphology, electronic structure, and composition.

- Economic Parameters: Quantify catalyst preparation costs (precursor, energy consumption) and operational energy requirements.

3. Machine Learning Model Development

- Algorithm Selection: Train multiple models, focusing on Artificial Neural Networks (ANNs) for their nonlinear modeling capabilities. Complement with other supervised regression algorithms.

- Model Training: Develop hundreds of ANN configurations using custom software. Utilize Scikit-Learn, TensorFlow, or PyTorch for algorithm implementation.

- Validation: Use k-fold cross-validation and hold-out testing to ensure model robustness and prevent overfitting.

4. Techno-Economic Optimization

- Framework Development: Create an optimization framework using the best-performing neural networks.

- Objective Function: Minimize combined costs including catalyst cost and energy consumption required for 97.5% VOC conversion.

- Optimization Algorithm: Implement algorithms such as Compass Search to identify optimal input variables balancing performance and economics.
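One way to evaluate the objective function above is to ask the performance model for the temperature at which conversion reaches 97.5%, then add an energy term to the catalyst cost. The sketch below does this by bisection. The sigmoidal light-off curve stands in for the trained ANN, and every catalyst name, cost, and light-off temperature is a made-up placeholder rather than data from [1].

```python
import math

def conversion(temp_c, t50_c, width_c=15.0):
    """Hypothetical light-off curve (% conversion) standing in for the trained model."""
    return 100.0 / (1.0 + math.exp(-(temp_c - t50_c) / width_c))

def temperature_for_target(predict, target=97.5, lo=100.0, hi=600.0, tol=1e-6):
    """Bisection for the lowest temperature reaching the target.

    Assumes predict(T) increases monotonically with T on [lo, hi].
    """
    if predict(lo) >= target or predict(hi) < target:
        raise ValueError("target conversion is not bracketed by [lo, hi]")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if predict(mid) < target:
            lo = mid
        else:
            hi = mid
    return hi

# Placeholder catalysts: light-off temperature (°C) and cost (arbitrary units).
catalysts = {
    "cat_oxalate": {"t50_c": 230.0, "cost": 9.0},
    "cat_carbonate": {"t50_c": 245.0, "cost": 4.0},
    "cat_hydroxide": {"t50_c": 260.0, "cost": 5.5},
}
ENERGY_COST_PER_DEG = 0.01  # assumed cost units per °C of operating temperature

def combined_cost(props):
    t_target = temperature_for_target(lambda t: conversion(t, props["t50_c"]))
    return props["cost"] + ENERGY_COST_PER_DEG * t_target

costs = {name: combined_cost(p) for name, p in catalysts.items()}
best = min(costs, key=costs.get)
```

With these invented numbers the cheapest catalyst wins even though it is not the most active, which mirrors the cost-dominated outcome reported in [1].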

Research Reagent Solutions

| Reagent / Material | Function in Catalyst Synthesis | Key Economic Considerations |

|---|---|---|

| Cobalt Nitrate Hexahydrate | Primary cobalt source forming precipitate with various agents. | Significant cost driver; use slight excess of cheaper precipitating agent to maximize yield and minimize cobalt loss [1]. |

| Oxalic Acid | Precipitating agent forming cobalt oxalate precursor. | Cheaper than cobalt nitrate; enables stoichiometrically controlled, thermodynamically favorable reaction [1]. |

| Sodium Carbonate | Precipitating agent forming cobalt carbonate precursor. | Cost-effective option; helps minimize overall catalyst manufacturing costs [1]. |

| Urea | Precipitant precursor generating carbonate ions in situ. | Low-cost agent; enables complete precipitation of cobalt ions to optimize material utilization [1]. |

| Sodium Hydroxide | Precipitating agent forming cobalt hydroxide precursor. | Economical choice; ensures high yield of precursor through complete conversion of Co²⁺ [1]. |

Techno-Economic Optimization Data Framework

| Optimization Variable | Impact on Catalyst Performance | Impact on Economics | ML Modeling Approach |

|---|---|---|---|

| Precursor Type | Determines catalyst morphology, surface area, and active sites. | Major cost factor; selection prioritizes cheapest effective option. | Categorical variable in ANN models; optimized for cost vs. performance. |

| Calcination Temperature | Affects crystallinity, surface area, and catalytic activity. | Energy-intensive step; higher temperatures increase manufacturing costs. | Continuous variable; optimized to balance activity gains with energy costs. |

| Metal Loading | Directly influences active site density and conversion efficiency. | Impacts material costs; optimal loading minimizes cobalt usage. | Numerical variable with constraint-based optimization. |

| Surface Area | Correlates with accessibility of active sites. | Not a direct cost factor, but influences activity required for energy efficiency. | Target property predicted by ML models from synthesis conditions. |

| Energy Consumption (97.5% Conversion) | Determines operating temperature and conditions. | Major operational expense; minimized in techno-economic optimization. | Key output variable in ANN models; directly included in cost minimization. |

Integrated Workflow for ML-Guided Catalyst Design with TEA

The diagram below illustrates the integrated methodology for combining machine learning with techno-economic analysis in catalyst development.

Troubleshooting Guides & FAQs

Algorithm Selection and Performance

Q: My ML model for predicting catalyst yield is achieving high accuracy on training data but performs poorly on new experimental data. What is the cause and how can I resolve this?

A: This is a classic case of overfitting, where the model has memorized noise in the training data instead of learning the underlying pattern. Solutions include:

- Data Augmentation: Increase your dataset size and diversity. In catalysis, this can be done through techniques like varying precipitating agents (e.g., H₂C₂O₄, Na₂CO₃, NaOH) during catalyst synthesis to create a more robust training set [1].

- Algorithm Choice: Switch to or incorporate ensemble methods like Random Forest, which builds multiple decision trees and aggregates their results, making it less prone to overfitting than a single complex model [8].

- Validation Technique: Implement rigorous validation protocols, such as training on one set of catalyst compositions (e.g., Co-C₂O₄) and testing on entirely different ones, to ensure generalizability [1].
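The group-wise validation idea in the last bullet can be sketched as a leave-one-group-out split, where all samples made with one precipitant are held out together. The group labels below are hypothetical.

```python
def leave_one_group_out(groups):
    """Yield (held_out_group, train_idx, test_idx) for each distinct group label."""
    for held_out in sorted(set(groups)):
        train = [i for i, g in enumerate(groups) if g != held_out]
        test = [i for i, g in enumerate(groups) if g == held_out]
        yield held_out, train, test

# Hypothetical labels: precipitant family used to synthesize each sample.
groups = ["oxalate", "oxalate", "carbonate", "hydroxide", "carbonate", "hydroxide"]
splits = list(leave_one_group_out(groups))
```

Because no sample from the held-out family appears in training, the test error reflects performance on genuinely unseen catalyst types rather than on near-duplicates.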

Q: How do I choose the right machine learning algorithm for my specific catalytic optimization problem?

A: The choice depends on your data size, type, and the problem's nature.

- For Small Datasets or Linear Relationships: Start with simpler models like Linear Regression or Multiple Linear Regression (MLR). These are interpretable and can effectively model well-behaved systems, such as using DFT-calculated descriptors to predict activation energies [8].

- For Complex, Non-linear Relationships: Use Artificial Neural Networks (ANNs) or Gradient Boosting methods (e.g., XGBoost). ANNs excel at capturing the non-linear nature of chemical processes, making them highly efficient for modeling catalyst performance [1].

- For Categorical Classification or High-Dimensional Data: Random Forest is a robust choice, as it can handle hundreds of molecular descriptors and provides insights into feature importance [8].

- For Generative Catalyst Design: To explore beyond existing catalyst libraries, use Generative Adversarial Networks (GANs) or Variational Autoencoders (VAEs). These can generate novel catalyst structures by learning from broad reaction databases [2] [9].

Data and Feature Management

Q: What are the most critical electronic-structure descriptors for predicting adsorption energies in alloy catalysts, and how can I validate their importance?

A: d-band electronic descriptors are fundamental for predicting adsorption energies of key intermediates like C, O, N, and H [2] [10].

- Key Descriptors: The most influential are d-band center (average energy of d-electron states), d-band filling, d-band width, and the d-band upper edge relative to the Fermi level [2].

- Validation with SHAP: Use SHapley Additive exPlanations (SHAP) analysis to quantify the contribution of each descriptor to your model's predictions. For instance, studies show that d-band filling is often critical for predicting the adsorption energies of C, O, and N, while the d-band center is more important for H adsorption [2]. SHAP values explain what the model has learned rather than proving causation, so confirm the highlighted descriptors against physical reasoning or targeted calculations.
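To make the attribution idea concrete, the sketch below computes exact Shapley values for a tiny model by enumerating feature coalitions, with absent features replaced by a baseline value. This is the underlying game-theoretic calculation, not the `shap` library's estimators, and the linear "adsorption energy" model and its coefficients are purely illustrative.

```python
from itertools import combinations
from math import factorial

def shapley_values(model, x, baseline):
    """Exact Shapley attribution of model(x) - model(baseline) to each feature.

    Cost grows exponentially with the number of features, so this is only
    practical for a handful of descriptors.
    """
    n = len(x)

    def value(coalition):
        # Features outside the coalition are held at their baseline value.
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi

def toy_model(z):
    """Hypothetical linear surrogate: inputs are (d-band center, filling, width)."""
    center, filling, width = z
    return 0.5 * center - 1.2 * filling + 0.1 * width

x = [-1.8, 0.9, 3.0]         # sample to explain
baseline = [-2.5, 0.8, 3.5]  # reference point, e.g. dataset averages
phi = shapley_values(toy_model, x, baseline)
```

For a linear model each attribution reduces to the coefficient times the feature's deviation from the baseline, and the attributions sum to the difference between the two predictions.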

Q: My dataset is limited and lacks standardization, which hinders model training. What strategies can I use?

A: This is a common challenge in catalysis informatics.

- Leverage Pre-trained Models: Use models that have been pre-trained on large, diverse reaction databases like the Open Reaction Database (ORD). These models can then be fine-tuned on your smaller, specific dataset, significantly improving performance and generalization [9].

- Data Cleaning and Preprocessing: Perform data cleaning to remove duplicates, correct errors, and ensure consistency. For feature engineering, apply Principal Component Analysis (PCA) to reduce the dimensionality of your descriptor set, which helps to eliminate noise and redundancy [2] [10].

- Utilize Specialized Databases: For heterogeneous catalysis, consult specialized databases like CatApp and Catalysis-Hub.org, which provide standardized datasets of reaction and activation energies [10].
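Two of the preprocessing steps above, duplicate removal and putting descriptors on a common scale (a usual precursor to PCA), can be sketched with the standard library alone. The record fields and values are hypothetical.

```python
import math

def deduplicate(records, key_fields):
    """Keep the first record for each unique combination of key fields."""
    seen, unique = set(), []
    for rec in records:
        key = tuple(rec[f] for f in key_fields)
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique

def standardize(values):
    """Z-score a list of numbers (population standard deviation)."""
    mean = sum(values) / len(values)
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))
    if std == 0.0:
        return [0.0 for _ in values]  # constant column carries no information
    return [(v - mean) / std for v in values]

# Hypothetical literature-extracted records, one of them entered twice.
records = [
    {"catalyst": "A", "temp_c": 250, "surface_area": 40.0},
    {"catalyst": "B", "temp_c": 250, "surface_area": 55.0},
    {"catalyst": "A", "temp_c": 250, "surface_area": 40.0},
    {"catalyst": "C", "temp_c": 300, "surface_area": 70.0},
]
clean = deduplicate(records, key_fields=("catalyst", "temp_c"))
scaled_area = standardize([r["surface_area"] for r in clean])
```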

Economic and Experimental Integration

Q: How can I integrate economic criteria, like catalyst cost and energy consumption, into the ML-driven optimization process?

A: You can frame this as a multi-objective optimization problem.

- Define Cost Functions: Quantify your economic targets. For example, define an objective function that minimizes both the catalyst cost (based on raw material prices) and the energy consumption required to achieve a target conversion (e.g., 97.5%) [1].

- Apply Optimization Algorithms: Use optimization frameworks like Bayesian Optimization or the Compass Search algorithm. These algorithms can use your trained ML model (e.g., an ANN) as a digital surrogate to efficiently navigate the variable space and find catalyst compositions that balance performance with economic constraints [1]. The optimization will typically select the cheapest viable catalyst unless energy costs are prohibitive [1].
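When the two objectives are kept separate rather than summed, a Pareto filter shows which candidates are worth considering at all; a weighted sum then picks one of them. The sketch below uses invented (catalyst cost, energy cost) pairs and hypothetical function names.

```python
def pareto_front(candidates):
    """Names of candidates not dominated in (catalyst_cost, energy_cost), both minimized."""
    front = []
    for name, (cost, energy) in candidates.items():
        dominated = any(
            c2 <= cost and e2 <= energy and (c2 < cost or e2 < energy)
            for other, (c2, e2) in candidates.items()
            if other != name
        )
        if not dominated:
            front.append(name)
    return front

def weighted_choice(candidates, names, w_cost=1.0, w_energy=1.0):
    """Pick the candidate minimizing a weighted sum of the two objectives."""
    return min(names, key=lambda n: w_cost * candidates[n][0] + w_energy * candidates[n][1])

# Invented values in arbitrary cost units: (catalyst cost, energy cost).
candidates = {"A": (4.0, 3.2), "B": (9.0, 2.8), "C": (5.5, 3.4), "D": (6.0, 3.0)}
front = pareto_front(candidates)
choice = weighted_choice(candidates, front)
```

Here candidate C is dropped because A is better on both counts, and equal weights select A, the cheapest catalyst.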

Key Machine Learning Algorithms: Performance and Applications

Table 1: Comparison of Key ML Algorithms in Catalysis Research

| Algorithm | Primary Use Case | Key Advantages | Common Catalytic Applications | Reported Performance Metrics |

|---|---|---|---|---|

| Linear Regression [8] | Regression (Continuous output) | Simple, interpretable, fast, good baseline model. | Modeling power-law rate expressions; predicting activation energies from DFT descriptors. | R² = 0.93 for predicting C–O bond cleavage activation energies [8]. |

| Random Forest [8] | Classification & Regression | Robust to overfitting, handles high-dimensional data, provides feature importance. | Classifying catalyst performance; predicting reaction yields; analyzing ligand steric/electronic effects. | Can achieve full classification performance for catalyst evaluation [2]. |

| Artificial Neural Networks (ANNs) [1] | Regression & Classification | Captures complex, non-linear relationships; high accuracy for chemical processes. | Digital twins for catalyst performance; predicting VOC oxidation conversion; modeling adsorption energies. | Used to optimize input variables to minimize catalyst cost and energy consumption [1]. |

| Generative Adversarial Networks (GANs) [2] | Generative Design | Explores uncharted material space; generates novel catalyst candidates. | Identifying and classifying potential catalysts by analyzing electronic structures. | Used with Bayesian optimization to refine predictions and discover new materials [2]. |

| Variational Autoencoders (VAEs) [9] | Generative & Predictive Design | Generates novel catalysts conditioned on reaction components; can predict performance. | Inverse design of catalysts for given reactants and products; yield prediction. | Competitive RMSE and MAE in yield prediction across various reaction classes [9]. |

Experimental Protocols for Key Workflows

Protocol: Building a Predictive Model for Catalyst Performance

This protocol outlines the steps for creating an ML model to predict catalyst activity based on experimental data, incorporating economic optimization.

1. Data Curation

- Source Data: Compile a dataset of catalyst properties and their performance metrics. Data can be sourced from in-house experiments, high-throughput testing, or specialized databases like CatApp [10].

- Feature Selection: Identify and calculate relevant features. For alloy catalysts, this includes electronic-structure descriptors (d-band center, width, filling) and compositional/structural features [2] [10].

- Economic Data: Incorporate cost data for catalyst precursors (e.g., Co(NO₃)₂·6H₂O, H₂C₂O₄, NaOH) and energy costs for calcination processes [1].

2. Model Training and Validation

- Data Splitting: Split the dataset into a training set (~80%) and a test set (~20%). The test set must not be used for model adjustment to ensure an unbiased evaluation of generalizability [10].

- Algorithm Training: Train multiple algorithms (e.g., ANN, Random Forest) on the training set. For ANNs, explore different architectures (layers, nodes) and activation functions (ReLU, sigmoid) [1] [11].

- Model Validation: Evaluate the best-performing model on the held-out test set using metrics like Root Mean Squared Error (RMSE), Mean Absolute Error (MAE), or R² [9].
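The split and the three metrics named above are short enough to write out directly. This is a plain-Python sketch with hypothetical function names; libraries such as Scikit-Learn provide equivalent utilities.

```python
import math
import random

def train_test_split_indices(n_samples, test_fraction=0.2, seed=0):
    """Shuffle sample indices and return (train_idx, test_idx)."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    n_test = max(1, round(n_samples * test_fraction))
    return idx[n_test:], idx[:n_test]

def rmse(y_true, y_pred):
    """Root mean squared error."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(y_true, y_pred)) / len(y_true))

def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(a - b) for a, b in zip(y_true, y_pred)) / len(y_true)

def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((a - b) ** 2 for a, b in zip(y_true, y_pred))
    ss_tot = sum((a - mean) ** 2 for a in y_true)
    return 1.0 - ss_res / ss_tot

train_idx, test_idx = train_test_split_indices(10)

# Small worked example with made-up values.
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.5, 2.0, 2.5, 4.0]
metrics = {"rmse": rmse(y_true, y_pred), "mae": mae(y_true, y_pred), "r2": r2(y_true, y_pred)}
```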

3. Optimization and Analysis

- Economic Optimization: Use an optimization algorithm (e.g., Compass Search) with the validated ML model to find input variables that minimize a combined cost function (catalyst cost + energy cost) for a target conversion [1].

- Feature Importance: Perform SHAP analysis on the model to identify which descriptors (e.g., d-band filling) are most critical for predictive accuracy, aiding in fundamental understanding and future feature selection [2].

Protocol: High-Throughput Screening with Pre-trained MLFFs

This protocol uses pre-trained Machine Learning Force Fields (MLFFs) for rapid screening of catalyst candidates, as applied in CO₂ to methanol conversion studies [12].

1. Search Space Definition

- Select a set of metallic elements (e.g., Co, Ni, Cu, Zn, Pt, Rh) that are relevant to your target reaction and available in MLFF training databases like the Open Catalyst Project (OC20) [12].

- Compile a list of stable single metals and bimetallic alloys from materials databases (e.g., Materials Project) for these elements.

2. Adsorption Energy Calculation

- Use pre-trained MLFFs (e.g., Equiformer V2 from OCP) to rapidly calculate adsorption energies for key reaction intermediates (e.g., *H, *OH, *OCHO) across multiple surface facets and binding sites of each candidate material [12].

- This step can be over 10,000 times faster than direct DFT calculations, enabling the generation of hundreds of thousands of data points.

3. Descriptor Creation and Candidate Selection

- Create Adsorption Energy Distributions (AEDs): For each catalyst, aggregate the calculated adsorption energies into a distribution (AED) that captures the energetic landscape across its various surface sites [12].

- Unsupervised Learning: Use clustering algorithms (e.g., Hierarchical Clustering) and similarity metrics (e.g., Wasserstein distance) to compare the AEDs of new candidates to those of known high-performing catalysts [12].

- Propose new catalyst candidates (e.g., ZnRh, ZnPt₃) based on their similarity to effective benchmarks.
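The similarity comparison can be illustrated with the one-dimensional Wasserstein distance, which for two samples of equal size is the mean absolute difference between their sorted values. The sketch below is restricted to that equal-size case, and the adsorption energies and candidate names are invented.

```python
def wasserstein_1d(a, b):
    """1D Wasserstein-1 distance between two equal-size samples."""
    if len(a) != len(b):
        raise ValueError("this sketch only handles samples of equal size")
    return sum(abs(x - y) for x, y in zip(sorted(a), sorted(b))) / len(a)

# Invented adsorption energy samples (eV) for a benchmark and two candidates.
reference = [-0.6, -0.4, -0.5, -0.3]
candidates = {
    "cand_1": [-0.55, -0.45, -0.35, -0.5],
    "cand_2": [-1.2, -1.0, -0.9, -1.1],
}
distances = {name: wasserstein_1d(aed, reference) for name, aed in candidates.items()}
ranking = sorted(distances, key=distances.get)  # most similar first
```

Real AEDs contain many more points and unequal sample sizes, for which a general implementation (for example from SciPy) would be the more appropriate tool.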

Visualization of Workflows

General ML Workflow for Catalyst Design

Reaction-Conditioned Generative Model (CatDRX)

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational and Experimental Reagents for ML-Driven Catalyst Research

| Reagent / Resource | Type | Function / Application | Example Use Case |

|---|---|---|---|

| Cobalt Nitrate (Co(NO₃)₂·6H₂O) [1] | Chemical Precursor | Common cobalt source for precipitation synthesis of Co₃O₄ catalysts. | Used with precipitants like oxalic acid or sodium carbonate to create diverse catalyst precursors for ML modeling. |

| Precipitating Agents (H₂C₂O₄, Na₂CO₃, NaOH) [1] | Chemical Modifier | Determines the morphology and properties of the catalyst precursor during synthesis. | Creating a varied dataset of catalysts for training ML models to understand the impact of synthesis route on performance. |

| Open Catalyst Project (OCP) MLFFs [12] | Computational Tool | Pre-trained ML force fields for rapid and accurate calculation of adsorption energies. | High-throughput screening of nearly 160 metallic alloys for CO₂ to methanol conversion by generating adsorption energy distributions (AEDs). |

| SHAP (SHapley Additive exPlanations) [2] | Analysis Framework | Explains the output of any ML model by quantifying the contribution of each input feature. | Identifying that d-band filling is a more critical descriptor than d-band center for predicting O adsorption energy on a specific alloy set. |

| Materials Project Database [12] [10] | Data Resource | Open-access database of computed crystal structures and properties for inorganic materials. | Sourcing a list of stable, experimentally observed crystal structures for single metals and bimetallic alloys to define a search space. |

| CatDRX Framework [9] | Generative AI Model | A reaction-conditioned VAE for generating novel catalyst structures and predicting their performance. | Inverse design of catalysts for a specific reaction by inputting desired reactants and products, then generating optimized catalyst structures. |

Troubleshooting Guide: Machine Learning for Catalyst Optimization

This guide addresses common challenges researchers face when applying Machine Learning (ML) to catalyst optimization, helping you identify and resolve issues with data, models, and economic integration.

FAQ 1: Why does my ML model fail to predict catalyst activity accurately?

- Problem: The model's predictions do not align with experimental results, showing high error rates.

- Diagnosis: This often stems from non-standardized or low-quality data, or the use of inappropriate model descriptors that do not capture the critical factors influencing catalytic activity [1] [13] [14].

- Resolution:

- Audit Your Data: Ensure your dataset includes consistent and comprehensive catalyst properties. The table below outlines key properties and their relevance [1] [14].

- Verify Data Sources: Use standardized databases like the Catalyst Property Database (CPD) to acquire and benchmark data, ensuring consistency in measurements and conditions [14].

- Re-evaluate Descriptors: Employ feature selection techniques or AI-driven descriptor discovery methods (e.g., SISSO) to identify the most relevant physical and chemical properties for your specific catalytic reaction [15].

FAQ 2: How can I integrate economic criteria into my catalyst optimization workflow?

- Problem: The ML model identifies a high-performance catalyst, but its synthesis is prohibitively expensive for industrial application.

- Diagnosis: The optimization function is solely based on performance metrics (e.g., activity, selectivity) and does not include techno-economic constraints [1] [16].

- Resolution:

- Define a Cost Function: Develop a function that combines catalyst cost (precursor materials, synthesis) and operational energy consumption [1].

- Implement Multi-Objective Optimization: Use the ML model not just to maximize conversion, but to find the optimal trade-off between performance and cost. For instance, an optimization framework can be set up to minimize the combined cost of the catalyst and energy required to achieve a target conversion (e.g., 97.5%) [1].

- Screen for Cost-Effective Precursors: Prioritize cheaper precipitating agents and synthesis routes during the data generation and candidate screening phase [1].
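One way to make the performance/cost trade-off in this resolution concrete is to keep only the candidates that are not dominated on both criteria. The sketch below is a minimal illustration, not the method of [1]: the function name `pareto_front` and the candidate costs and conversions are hypothetical values chosen for the example.

```python
import numpy as np

def pareto_front(cost, conversion):
    """Return indices of candidates that no other candidate dominates.

    A candidate is dominated when another one is at least as cheap AND at
    least as active, and strictly better on one of the two criteria.
    """
    cost = np.asarray(cost, dtype=float)
    conversion = np.asarray(conversion, dtype=float)
    keep = []
    for i in range(len(cost)):
        no_worse = (cost <= cost[i]) & (conversion >= conversion[i])
        strictly_better = (cost < cost[i]) | (conversion > conversion[i])
        if not np.any(no_worse & strictly_better):
            keep.append(i)
    return keep

# Hypothetical candidates: cost in arbitrary units, predicted conversion in %
cost = [10.0, 12.0, 8.0, 15.0, 9.0]
conversion = [97.5, 99.0, 90.0, 98.0, 97.5]
front = pareto_front(cost, conversion)
print(front)
```

Candidates left on the front are the ones worth discussing with process engineers; everything else is both more expensive and no more active than some alternative.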

FAQ 3: Why is the experimental performance of my ML-predicted catalyst poor?

- Problem: A catalyst, predicted by the ML model to be high-performing, shows low activity or stability in lab experiments.

- Diagnosis: This is the "synthesis gap". The model may not account for how synthesis conditions (precursor, temperature, atmosphere) affect the final catalyst's composition, structure, and morphology [13].

- Resolution:

- Include Synthesis Parameters: Expand your feature set to include synthesis variables such as calcination temperature, precipitant type, and solvent [1] [13].

- Optimize Synthesis with ML: Use ML not only for initial screening but also to optimize the synthesis conditions for a given catalyst composition. This creates a critical feedback loop from characterization to synthesis [13].

- Characterize the Output: Perform physical characterization (e.g., microscopy, spectroscopy) on the synthesized catalyst to confirm it matches the intended design and use this data to refine the ML model [13].

Critical Data Tables for Catalyst Optimization

Table 1: Key Catalytic Properties and Performance Descriptors

This table summarizes intrinsic catalyst properties that serve as critical data inputs for effective ML models.

| Property | Description | Relevance to ML Model |

|---|---|---|

| Composition | Elemental and phase composition (e.g., Co3O4, H-ZSM-5) | Determines fundamental catalytic activity and is a primary feature for screening [1] [17] [16]. |

| Surface Area | Total accessible surface area (m²/g) | Often correlates with activity; a key parameter for reactivity models [17]. |

| Acid Site Density | Concentration and strength of acid sites | Critical descriptor for reactions like dehydration and cracking [17] [16]. |

| Morphology | Particle size, shape, and crystal facet | Influences exposure of active sites and reaction pathways [13]. |

| Adsorption Energy | Energy of reactant binding to the catalyst surface | A fundamental quantum-mechanical descriptor for activity and selectivity [14] [15]. |

| Conversion & Selectivity | Reaction-specific performance metrics (%, X, S) | The primary target outputs (labels) for supervised learning models [1] [16]. |

Table 2: Key Process Parameters and Economic Criteria

This table outlines critical process variables and economic factors that must be integrated with catalyst properties for a holistic optimization.

| Parameter | Description | Relevance to ML Model |

|---|---|---|

| Temperature | Reaction temperature (°C) | A dominant variable; optimization finds the balance between activity and energy cost [1] [17]. |

| Catalyst Concentration | Catalyst loading (wt.%) | Impacts reaction rate and process economics; optimized to reduce material use [17]. |

| Feedstock Composition | Type and purity of reactants (e.g., VOC type, plastic type) | A key feature for generalizing models across different feedstocks [17] [14]. |

| Precursor Cost | Cost of catalyst raw materials | A direct input for techno-economic optimization functions [1]. |

| Synthesis Conditions | Calcination temperature, precipitating agent | Features that bridge the "synthesis gap" between design and real-world performance [1] [13]. |

| Energy Consumption | Energy required for conversion | A key cost metric to be minimized alongside catalyst cost [1]. |

Experimental Protocols

Protocol 1: Machine Learning-Guided Optimization of Cobalt-Based Catalysts for VOC Oxidation

This methodology details the integration of artificial neural networks (ANNs) with economic criteria for catalyst optimization [1].

- Dataset Curation: Collect a consistent dataset from controlled experiments. Data should include:

- Input Features: Catalyst properties (composition, surface area), synthesis parameters (precipitant type, calcination temperature), and process conditions (reaction temperature).

- Output Label: Hydrocarbon conversion (e.g., for toluene and propane) [1].

- Model Training and Selection:

- Train a large number of ANN configurations (e.g., 600) and other supervised learning algorithms (e.g., from Scikit-Learn) on the dataset.

- Select the best-performing model based on predictive accuracy on a held-out test set [1].

- Economic Optimization:

- Define an objective function that combines the cost of the catalyst and the energy required to achieve a target conversion (e.g., 97.5%).

- Use an optimization algorithm (e.g., Compass Search) with the trained ANN to find the input variable values (catalyst properties and process parameters) that minimize this total cost [1].
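The economic optimization step can be sketched as a derivative-free compass (coordinate pattern) search over a cost objective. Everything below is an assumption made for illustration: `predict_conversion` is a hypothetical analytic stand-in for the trained ANN, the two variables (catalyst loading and reaction temperature), the prices, and the penalty weight are invented, and the search is a generic textbook variant rather than the implementation used in [1].

```python
import numpy as np

TARGET = 97.5  # target conversion (%), as in the protocol

def predict_conversion(x):
    """Hypothetical surrogate standing in for the trained ANN.

    x = (catalyst loading in g, reaction temperature in degC).
    """
    loading, temp = x
    rate = loading * np.exp((temp - 250.0) / 40.0)
    return 100.0 * (1.0 - np.exp(-rate))

def total_cost(x, cat_price=5.0, energy_price=0.01, penalty=1e3):
    """Catalyst cost + energy cost, penalized when the target is missed."""
    loading, temp = x
    shortfall = max(0.0, TARGET - predict_conversion(x))
    return cat_price * loading + energy_price * temp + penalty * shortfall

def compass_search(f, x0, step, bounds, tol=1e-4, max_iter=10_000):
    """Try +/- one step along each coordinate; halve the steps when stuck."""
    x = np.array(x0, dtype=float)
    step = np.array(step, dtype=float)
    lower, upper = np.array(bounds, dtype=float).T
    fx = f(x)
    for _ in range(max_iter):
        improved = False
        for i in range(x.size):
            for sign in (1.0, -1.0):
                trial = x.copy()
                trial[i] = np.clip(trial[i] + sign * step[i], lower[i], upper[i])
                f_trial = f(trial)
                if f_trial < fx:
                    x, fx, improved = trial, f_trial, True
        if not improved:
            step *= 0.5
            if np.all(step < tol):
                break
    return x, fx

x0 = [3.0, 300.0]
best_x, best_cost = compass_search(
    total_cost, x0, step=[0.5, 20.0], bounds=[(0.1, 5.0), (150.0, 400.0)]
)
print(best_x, best_cost, predict_conversion(best_x))
```

Because the penalty makes the objective non-smooth along the target-conversion boundary, a compass search can stall before reaching the true constrained optimum; restarting from several initial points is a cheap safeguard.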

Protocol 2: Response Surface Methodology for Optimizing Catalytic Pyrolysis

This protocol uses a Design of Experiments (DOE) approach to efficiently optimize process parameters for oil yield from plastic waste [17].

- Experimental Design:

- Select key factors: e.g., catalyst type (natural zeolite, Al₂O₃, SiO₂), temperature (350–450 °C), and catalyst concentration (wt.%).

- Create an experimental design matrix (e.g., an L9 orthogonal array) to systematically explore the factor space with a minimal number of experiments [17].

- Execution and Analysis:

- Run experiments according to the design matrix, keeping other parameters (heating rate, residence time) constant.

- Measure the response variable (oil yield). Use RSM to fit a regression model (e.g., a quadratic polynomial) to the data, establishing a quantitative relationship between factors and the response [17].

- Process Optimization:
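The regression and optimization steps of this protocol can be sketched with a second-order polynomial fit followed by a search over the fitted surface. This is a minimal sketch under stated assumptions: it uses a 3x3 full-factorial design over two factors instead of the L9 array mentioned above, the yields come from an assumed quadratic function (`assumed_yield`) rather than from measurements in [17], and the factors are standardized before the polynomial expansion, which mirrors the coded variables commonly used in RSM.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures, StandardScaler

# Hypothetical design: temperature (degC) x catalyst concentration (wt.%)
temps = np.array([350.0, 400.0, 450.0])
concs = np.array([5.0, 10.0, 15.0])
T, C = np.meshgrid(temps, concs)
X = np.column_stack([T.ravel(), C.ravel()])

def assumed_yield(t, c):
    """Synthetic oil yield (%) used only to generate example data."""
    return 70.0 - 0.004 * (t - 410.0) ** 2 - 0.2 * (c - 11.0) ** 2

y = assumed_yield(X[:, 0], X[:, 1])

# Quadratic response surface: linear, squared, and interaction terms
rsm = make_pipeline(
    StandardScaler(),
    PolynomialFeatures(degree=2, include_bias=False),
    LinearRegression(),
)
rsm.fit(X, y)

# Locate the predicted optimum on a fine grid inside the studied region
grid_t, grid_c = np.meshgrid(np.linspace(350, 450, 101), np.linspace(5, 15, 101))
grid = np.column_stack([grid_t.ravel(), grid_c.ravel()])
pred = rsm.predict(grid)
best_t, best_c = grid[np.argmax(pred)]
print(best_t, best_c, pred.max())
```

With real data the fit will not be exact, so the predicted optimum should be confirmed by a follow-up experiment at or near the suggested conditions.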

Workflow Visualization

ML-Driven Catalyst Optimization Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in Catalyst Research |

|---|---|

| Cobalt Nitrate (Co(NO₃)₂·6H₂O) | A common precursor for synthesizing cobalt oxide-based catalysts (e.g., Co₃O₄) for reactions like VOC oxidation [1]. |

| Precipitating Agents (e.g., Oxalic Acid, Na₂CO₃, Urea) | Used in co-precipitation synthesis to form catalyst precursors (e.g., cobalt oxalate, carbonate). The choice of precipitant influences the final catalyst's morphology and activity [1]. |

| Zeolites (Natural and Synthetic, e.g., ZSM-5) | Solid acid catalysts with high surface area and hydrothermal stability. Used in pyrolysis and cracking reactions to improve oil yield and selectivity [17]. |

| Alumina (Al₂O₃) and Silica (SiO₂) | Common catalyst supports or active components. Provide high surface area and can be tuned for acidity. Used as catalysts in pyrolysis optimization studies [17]. |

| High/Low-Density Polyethylene (HDPE/LDPE) | Model feedstocks for catalytic pyrolysis experiments, representing a significant portion of plastic waste streams [17]. |

Frequently Asked Questions

Q1: What are the most common data-related mistakes in machine learning for catalysis, and how can I avoid them?

The most common data-related mistakes include insufficient understanding of the data, inadequate data preprocessing, and data leakage. To avoid these, conduct thorough exploratory data analysis (EDA) to understand feature distributions and relevance. Always handle missing values and scale numerical features, ensuring these steps are fitted only on the training data to prevent data leakage. Utilizing pipelines can automate and standardize this process, ensuring consistency [18].
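A minimal sketch of the leakage-safe pattern described in this answer, using scikit-learn's `Pipeline` machinery. The data are synthetic and the choice of median imputation and ridge regression is an assumption for illustration; the point is that the imputer and scaler are re-fitted inside each cross-validation fold, on the training portion only.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.linear_model import Ridge
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)

# Synthetic descriptors and target (illustrative only)
X = rng.normal(size=(60, 4))
y = X @ np.array([1.5, -2.0, 0.5, 0.0]) + rng.normal(scale=0.1, size=60)

# Inject about 5% missing values to mimic incomplete characterization data
X[rng.random(X.shape) < 0.05] = np.nan

# Imputation and scaling live inside the pipeline, so each CV fold fits
# them on its own training split and never sees the validation rows
model = make_pipeline(SimpleImputer(strategy="median"), StandardScaler(), Ridge(alpha=1.0))
scores = cross_val_score(model, X, y, cv=5, scoring="r2")
print(scores.mean())
```

Fitting the scaler or imputer on the full dataset before splitting would let validation statistics influence training, which tends to make the cross-validation score look better than it should.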

Q2: My ML model for catalyst performance is not generalizing well. What should I check in my dataset?

Poor generalization often stems from data quality issues or a lack of representative features. First, verify your dataset for missing values, outliers, and inconsistent scaling. Second, ensure your feature set adequately captures the physicochemical properties of the catalysts. Techniques like Automatic Feature Engineering (AFE) can systematically generate and select relevant features from a library of elemental properties, which is particularly useful for small datasets common in catalysis [19].

Q3: How can I perform meaningful catalyst optimization with limited data?

When working with small datasets, leverage feature engineering and selection techniques tailored for limited data. The AFE method generates numerous higher-order features through mathematical operations on primary physicochemical descriptors and selects the most informative subset for the specific catalytic reaction. This approach, combined with simple, robust regression models like Huber regression, helps avoid overfitting and captures essential trends without requiring large amounts of data [19].

Q4: What is the role of economic criteria in machine learning-guided catalyst design?

Machine learning models can be integrated with techno-economic analysis to optimize catalyst properties not just for performance, but also for cost and energy consumption. An optimization framework can use trained neural networks to minimize both catalyst costs and the energy required to achieve a target conversion, helping to identify commercially viable catalysts [1].

Troubleshooting Guides

Problem: Inadequate Feature Set Leading to Poor Model Performance

Issue: The model fails to capture the underlying structure-property relationships, resulting in low predictive accuracy for catalyst performance.

Solution: Implement systematic feature engineering.

- Step 1: Construct a Primary Feature Library. Assemble a wide range of general physicochemical properties (e.g., elemental descriptors, atomic properties) for all catalyst components from available databases [19].

- Step 2: Apply Commutative Operations. Account for elemental composition and notational invariance by computing features using operations like maximum, minimum, and weighted average across the catalyst's components [19].

- Step 3: Synthesize Higher-Order Features. Generate a large pool of candidate features by creating nonlinear functions and products of the primary features to capture complex, combinatorial interactions [19].

- Step 4: Select an Optimal Feature Subset. Use a supervised learning objective (like minimizing cross-validation error) to select a small, powerful set of features from the large pool. This automates the testing of numerous hypotheses [19].
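Steps 1 to 3 can be sketched in a few lines. This is an illustrative toy, not the AFE implementation of [19]: the property library `ELEMENT_PROPS` is a hypothetical two-property table (Pauling electronegativity and atomic number), and the function names and the set of transformations are assumptions.

```python
from itertools import combinations

import numpy as np

# Hypothetical mini library of elemental properties (Step 1)
ELEMENT_PROPS = {
    "Co": {"electronegativity": 1.88, "atomic_number": 27},
    "Ni": {"electronegativity": 1.91, "atomic_number": 28},
    "Cu": {"electronegativity": 1.90, "atomic_number": 29},
    "Mn": {"electronegativity": 1.55, "atomic_number": 25},
}

def primary_features(composition):
    """Step 2: order-independent (commutative) aggregates over the elements."""
    elements = list(composition)
    weights = np.array([composition[e] for e in elements], dtype=float)
    weights /= weights.sum()
    feats = {}
    for prop in ("electronegativity", "atomic_number"):
        vals = np.array([ELEMENT_PROPS[e][prop] for e in elements], dtype=float)
        feats[f"max_{prop}"] = float(vals.max())
        feats[f"min_{prop}"] = float(vals.min())
        feats[f"avg_{prop}"] = float(weights @ vals)
    return feats

def higher_order_features(feats):
    """Step 3: nonlinear transforms of each feature plus pairwise products."""
    funcs = {"id": lambda v: v, "sq": np.square, "sqrt": np.sqrt, "log": np.log}
    transformed = {
        f"{fname}({key})": float(func(value))
        for key, value in feats.items()
        for fname, func in funcs.items()
    }
    products = {
        f"{a}*{b}": transformed[a] * transformed[b]
        for a, b in combinations(transformed, 2)
    }
    return {**transformed, **products}

primary = primary_features({"Co": 0.7, "Ni": 0.3})
candidates = higher_order_features(primary)
print(len(primary), len(candidates))
```

Even this tiny library yields hundreds of candidate features from six primary ones, which is why the supervised selection in Step 4 is needed before fitting a model.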

The workflow for this solution is outlined in the diagram below.

Problem: Model Performs Well on Training Data but Fails on New Catalyst Formulations (Overfitting)

Issue: The model has high accuracy on its training data but shows a significant drop in performance when predicting the performance of unseen catalysts, indicating overfitting.

Solution: Adopt a robust validation framework and simplify the model.

- Step 1: Re-assess Your Data. Ensure your dataset is large and diverse enough to be representative of the chemical space you are exploring. If not, consider active learning strategies to acquire more informative data points [19].

- Step 2: Use Strong Validation Techniques. Employ leave-one-out cross-validation (LOOCV) or repeated cross-validation to get a more realistic estimate of model performance on small datasets [19].

- Step 3: Simplify or Regularize the Model. Prefer simpler, more interpretable models such as Huber regression, a linear model whose loss function is robust to outliers and whose low capacity limits overfitting [19].

- Step 4: Tune Hyperparameters. Use methods like Bayesian optimization to find the optimal hyperparameters for your model, which can help prevent both overfitting and underfitting [20] [21].
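Steps 2 and 3 can be combined in a short scikit-learn sketch: leave-one-out cross-validation around a Huber regressor. The dataset is synthetic (30 samples, two of them deliberately corrupted to act as outliers), so the numbers only illustrate the mechanics.

```python
import numpy as np
from sklearn.linear_model import HuberRegressor
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import LeaveOneOut, cross_val_predict
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)

# Small synthetic dataset, typical in size for catalysis studies
X = rng.normal(size=(30, 3))
y = 2.0 * X[:, 0] - 1.0 * X[:, 1] + rng.normal(scale=0.1, size=30)
y[[3, 17]] += 5.0  # two corrupted measurements acting as outliers

model = make_pipeline(StandardScaler(), HuberRegressor())

# Each sample is predicted by a model trained on the other 29
loo_pred = cross_val_predict(model, X, y, cv=LeaveOneOut())
mae = mean_absolute_error(y, loo_pred)
span = y.max() - y.min()
print(f"LOOCV MAE = {mae:.3f} ({100 * mae / span:.1f}% of target span)")
```

Reporting the error relative to the span of the target, and ideally next to the experimental uncertainty, makes it easier to judge whether the model is accurate enough to guide experiments.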

Experimental Protocols & Data Presentation

Protocol: Automatic Feature Engineering (AFE) for Catalyst Discovery

This protocol is adapted from methodologies that use AFE to design catalysts without prior knowledge of the target catalysis [19].

- Dataset Curation: Compile a dataset of catalyst compositions and their corresponding performance metrics (e.g., yield, conversion temperature). For a supported multi-element catalyst, this includes the elemental composition of each sample.

- Primary Feature Assignment: For each catalyst in the dataset, compute a set of primary features by applying commutative operations (e.g., maximum, minimum, weighted average) to a library of physicochemical properties for the constituent elements.

- Higher-Order Feature Synthesis: Generate a large number of compound features (often 10³–10⁶) by applying mathematical functions to the primary features and creating products of these functions. This accounts for nonlinearity and complex interactions.

- Feature Selection and Model Building: Use a feature selection wrapper to find the combination of features that minimizes the prediction error in cross-validation using a simple, robust regression algorithm like Huber regression.

- Model Validation: Validate the final model rigorously using leave-one-out cross-validation and report the mean absolute error (MAE) relative to the experimental error and the span of the target variable.

Quantitative Comparison of Common ML Algorithms in Catalysis

The table below summarizes the characteristics of algorithms commonly used in catalysis research, helping you select an appropriate one [22] [1] [8].

| Algorithm | Best Use Case in Catalysis | Key Advantages | Common Performance Metrics |

|---|---|---|---|

| Linear Regression / Huber Regression | Establishing baseline models; small datasets with engineered features. | Simple, interpretable, robust to overfitting (especially Huber). | R², Mean Absolute Error (MAE) |

| Artificial Neural Networks (ANNs) | Modeling complex, non-linear relationships in high-dimensional data. | High predictive power for large, well-structured datasets. | R², MAE, Root Mean Square Error (RMSE) |

| Random Forest | Rapid screening and prediction of catalytic activity; handling diverse descriptor types. | Handles non-linearity well; provides feature importance scores. | R², MAE, F1-score (for classification) |

| Support Vector Machines (SVM) | Modeling with a clear margin of separation in descriptor space. | Effective in high-dimensional spaces. | R², MAE |

Protocol: ML-Guided Catalyst Optimization with Economic Criteria

This protocol details a methodology for optimizing catalysts based on both performance and economic factors [1].

- Data Collection & Model Training: Collect a dataset of catalysts with measured performance (e.g., conversion of VOCs like toluene). Train a high-accuracy predictive model, such as an ensemble of Artificial Neural Networks (ANNs), to map catalyst properties to performance.

- Define Optimization Objective: Formulate an objective function that combines catalyst cost and the energy consumption required to achieve a target performance level (e.g., 97.5% conversion).

- Run Optimization Algorithm: Use an optimization algorithm (e.g., Compass Search) to find the values of the input variables (catalyst properties) that minimize the objective function.

- Validation: Compare the catalyst identified by the optimization process against known commercial or literature catalysts to validate its practicality and superiority.

The following diagram illustrates this integrated optimization workflow.

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in Experiment | Technical Notes |

|---|---|---|

| Cobalt Nitrate Hexahydrate (Co(NO₃)₂·6H₂O) | Common precursor for synthesizing cobalt oxide (Co₃O₄) catalysts. | Provides the source of active cobalt metal. High purity (e.g., 98%) is recommended for reproducible results [1]. |

| Precipitating Agents (e.g., Oxalic Acid, Sodium Carbonate, Urea) | Used in co-precipitation synthesis to form insoluble cobalt precursors (oxalate, carbonate, hydroxide). | The choice of precipitating agent influences the morphology, surface area, and ultimately the catalytic activity of the final Co₃O₄ [1]. |

| Feature Engineering Library (e.g., XenonPy) | A curated collection of physicochemical properties for elements. | Serves as the foundational database for generating primary features in Automatic Feature Engineering (AFE) [19]. |

| Scikit-Learn Python Library | Provides a wide array of machine learning algorithms and preprocessing tools. | Essential for implementing regression models, feature selection, and creating preprocessing pipelines [1] [8]. |

Methodologies and Real-World Applications: From Prediction to Optimization

Workflow of an ML-Guided Catalyst Design Project

The general workflow for a machine learning-guided catalyst design project follows a structured sequence from data collection to final catalyst selection and validation. This process integrates computational methods, machine learning models, and experimental validation to efficiently discover and optimize new catalytic materials [1] [10].

Essential Research Reagent Solutions

Table 1: Key research reagents, computational tools, and their functions in ML-guided catalyst design.

| Item Name | Type/Class | Primary Function in Workflow |

|---|---|---|

| Cobalt Nitrate Hexahydrate (Co(NO₃)₂·6H₂O) [1] | Chemical Precursor | Source of cobalt cations for synthesizing cobalt-based oxide catalysts. |

| Various Precipitating Agents (Na₂CO₃, NaOH, H₂C₂O₄, etc.) [1] | Chemical Reagent | Initiate precipitation to form catalyst precursors (e.g., CoCO₃, Co(OH)₂, CoC₂O₄). |

| Scikit-Learn [1] | Software Library | Provides accessible Python implementations of eight major supervised regression ML algorithms for building predictive models. |

| TensorFlow / PyTorch [1] | Software Library | Enables the creation and training of complex models like Artificial Neural Networks (ANNs). |

| Atomic Simulation Environment (ASE) [10] | Computational Tool | Provides modules for high-throughput ab initio simulations, including geometry optimization and transition-state search. |

| Python Materials Genomics (pymatgen) [10] | Computational Tool | A robust library for materials analysis, useful for automating simulation tasks and analyzing crystal structures. |

| Open Catalyst Project (OCP) MLFF [12] | Pre-trained Model | Provides machine-learned force fields for rapid, quantum-accurate calculation of adsorption energies, accelerating screening. |

| Materials Project Database [10] [12] | Online Database | Provides open-source access to computed properties of known and predicted inorganic crystals for initial data sourcing. |

Detailed Experimental Protocols

Protocol: Catalyst Synthesis via Precipitation and Calcination

This protocol details the synthesis of cobalt-based catalysts (e.g., Co₃O₄), as described in recent ML-guided research [1].

Precipitation:

- Prepare a 100 mL aqueous solution of the selected precipitating agent (e.g., 0.22 M Na₂CO₃, 0.44 M NaOH).

- In a separate container, prepare a 100 mL aqueous solution of Co(NO₃)₂·6H₂O (0.2 M).

- Add the precipitating agent solution to the cobalt nitrate solution under continuous stirring for 1 hour at room temperature.

- Separate the resulting precipitate by centrifugation.
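As a worked check of the quantities in the precipitation step, the short calculation below converts the stated concentrations and volumes into masses to weigh out and into base-to-cobalt mole ratios. The molar masses are standard values rounded to two decimals; the helper name `grams_needed` is just for this example.

```python
# Molar masses in g/mol (standard atomic weights, rounded)
MOLAR_MASS = {
    "Co(NO3)2.6H2O": 291.03,
    "Na2CO3": 105.99,
    "NaOH": 40.00,
}

def grams_needed(compound, molarity, volume_ml):
    """Mass of solid required for a solution of given molarity and volume."""
    return MOLAR_MASS[compound] * molarity * volume_ml / 1000.0

# Concentrations (mol/L) from the protocol, each prepared as 100 mL
recipe = {"Co(NO3)2.6H2O": 0.20, "Na2CO3": 0.22, "NaOH": 0.44}
masses = {c: grams_needed(c, m, 100.0) for c, m in recipe.items()}

# Mole ratio of precipitating agent to cobalt (equal volumes, so the
# ratio of molarities equals the ratio of moles)
ratio_carbonate = recipe["Na2CO3"] / recipe["Co(NO3)2.6H2O"]
ratio_hydroxide = recipe["NaOH"] / recipe["Co(NO3)2.6H2O"]

for compound, grams in masses.items():
    print(f"{compound}: {grams:.2f} g")
print(f"Na2CO3:Co = {ratio_carbonate:.2f}, NaOH:Co = {ratio_hydroxide:.2f}")
```

This gives roughly 5.82 g of cobalt nitrate hexahydrate, 2.33 g of Na₂CO₃, and 1.76 g of NaOH. The ratios of 1.1 and 2.2 are consistent with about a 10% excess over the 1:1 and 2:1 stoichiometries of CoCO₃ and Co(OH)₂, respectively.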

Washing:

- Wash the precipitate with distilled water multiple times until the washing liquor reaches a near-neutral pH.

- This step removes residual ions and soluble by-products like nitric acid or sodium nitrate [1].

Hydrothermal Aging (Optional):

- Transfer the washed precipitate into a Teflon-lined stainless-steel autoclave.

- Seal the autoclave and place it in an oven at 80 °C for 24 hours.

Drying and Calcination:

- Harvest the solid via centrifugation and dry it overnight in an oven at 80 °C.

- Finally, calcine the dried precursor in a furnace under a static air atmosphere to obtain the final metal oxide catalyst.

Protocol: Building an ML Model for Catalyst Performance Prediction

This protocol outlines the steps for developing a machine learning model to predict catalytic activity, such as hydrocarbon conversion [1] [10].

Dataset Construction:

- Source Data: Compile a dataset from experimental results (e.g., conversion rates, reaction conditions) and/or theoretical calculations (e.g., adsorption energies from DFT or MLFFs) [1] [12].

- Data Cleaning: Perform data cleaning to remove duplicates, correct errors, and ensure consistency. This is a critical foundation for effective model training [10].

Feature Engineering:

- Define Descriptors: Identify and calculate relevant catalyst descriptors. These can be intrinsic properties (e.g., elemental properties, d-band center) or structural features [10].

- Example: For CO₂ to methanol conversion, key descriptors might be derived from the adsorption energy distributions (AEDs) of critical intermediates like *H, *OH, and *OCHO across different catalyst facets [12].

Model Training and Validation:

- Algorithm Selection: Test multiple supervised regression algorithms (e.g., Artificial Neural Networks (ANNs), Support Vector Machines (SVMs), Random Forests) to identify the best performer [1].

- Data Splitting: Reserve a portion of the data (e.g., ~20%) as a test set that is never used for model training or adjustment [10].

- Training: Train several hundred model configurations (e.g., 600 ANN configurations) to ensure robustness [1].

- Validation: Evaluate the final model's performance on the held-out test set to assess its generalization ability [10].
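The training and validation steps above can be sketched with scikit-learn as follows. The data come from a synthetic benchmark generator (`make_friedman1`), the three candidate models and their settings are assumptions, and a single configuration per algorithm stands in for the several hundred configurations mentioned in the protocol. Model selection uses cross-validation on the training split only, so the held-out 20% is touched once, at the end.

```python
import numpy as np
from sklearn.datasets import make_friedman1
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import cross_val_score, train_test_split
from sklearn.neural_network import MLPRegressor
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

# Synthetic nonlinear regression data standing in for a catalyst dataset
X, y = make_friedman1(n_samples=300, noise=0.5, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

candidates = {
    "ANN": make_pipeline(
        StandardScaler(),
        MLPRegressor(hidden_layer_sizes=(32,), max_iter=2000, random_state=0),
    ),
    "SVM": make_pipeline(StandardScaler(), SVR(C=10.0)),
    "RandomForest": RandomForestRegressor(n_estimators=200, random_state=0),
}

# Compare candidates by 5-fold CV on the training split only
cv_mae = {
    name: -cross_val_score(
        model, X_train, y_train, cv=5, scoring="neg_mean_absolute_error"
    ).mean()
    for name, model in candidates.items()
}
best_name = min(cv_mae, key=cv_mae.get)

# Refit the winner on all training data, then score once on the test set
best_model = candidates[best_name].fit(X_train, y_train)
test_mae = mean_absolute_error(y_test, best_model.predict(X_test))
baseline_mae = float(np.mean(np.abs(y_test - y_train.mean())))
print(best_name, round(test_mae, 3), round(baseline_mae, 3))
```

Comparing the test error against a trivial baseline (here, always predicting the training mean) is a quick sanity check that the model has learned something beyond the average.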

Protocol: High-Throughput Screening Using Adsorption Energy Distributions (AEDs)

This advanced protocol uses pre-trained models for large-scale computational screening [12].

Search Space Definition:

- Select metallic elements that are relevant to the target reaction (e.g., CO₂ to methanol) and available in the training data of force field models (e.g., the OC20 database). Example elements include Cu, Ni, Zn, Pt, Rh [12].

- Search materials databases (e.g., Materials Project) for stable crystal structures of these metals and their bimetallic alloys.

Surface and Adsorbate Configuration:

- Generate multiple surface facets for each material (e.g., Miller indices from -2 to 2).

- Identify the most stable surface termination for each facet.

- Engineer surface-adsorbate configurations for key reaction intermediates.

AED Calculation with MLFF:

- Use a pre-trained Machine Learning Force Field (MLFF), such as the OCP equiformer_V2, to rapidly optimize the thousands of surface-adsorbate configurations and calculate their adsorption energies [12].

- Aggregate these energies into an Adsorption Energy Distribution (AED) for each material, which serves as a comprehensive descriptor of its catalytic property landscape.

Candidate Identification:

- Use unsupervised machine learning (e.g., hierarchical clustering) and statistical analysis to compare the AEDs of new materials against those of known high-performing catalysts [12].

- Propose candidate materials (e.g., ZnRh, ZnPt₃) with AEDs similar to effective catalysts for experimental testing.
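The candidate identification step can be illustrated with SciPy's hierarchical clustering. This is a sketch under several assumptions: the adsorption energies are random samples rather than MLFF output, the material names are placeholders, and AEDs are compared as histograms with Euclidean distance and average linkage, which may differ from the distance measure used in [12].

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage

rng = np.random.default_rng(0)

# Hypothetical adsorption energies (eV) per material; in practice these
# would come from MLFF relaxations over many facets and binding sites
samples = {
    "reference_catalyst": rng.normal(-0.50, 0.2, 500),
    "candidate_A": rng.normal(-0.55, 0.2, 500),
    "candidate_B": rng.normal(-1.50, 0.2, 500),
    "candidate_C": rng.normal(-1.45, 0.2, 500),
}

# Represent each AED as a normalized histogram on a shared energy grid
bins = np.linspace(-3.0, 1.0, 41)
aeds = np.array(
    [np.histogram(e, bins=bins, density=True)[0] for e in samples.values()]
)

# Agglomerative clustering of the AED vectors, cut into two clusters
Z = linkage(aeds, method="average", metric="euclidean")
labels = dict(zip(samples, fcluster(Z, t=2, criterion="maxclust")))

# Materials that share a cluster with the known good catalyst
similar = [
    name
    for name, lab in labels.items()
    if lab == labels["reference_catalyst"] and name != "reference_catalyst"
]
print(similar)
```

In a real screen the number of clusters would not be known in advance, so inspecting the dendrogram or cutting the tree at a distance threshold is usually more appropriate than fixing two clusters.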

Techno-Economic Optimization Framework

Integrating economic criteria is a crucial final step in the ML-guided design process. An optimization framework can be developed to minimize both catalyst costs and the energy consumption required to achieve a target conversion (e.g., 97.5% VOC oxidation) [1]. This analysis often reveals that the cheapest catalyst compatible with performance targets is the most economically viable option, as the influence of energy cost can be practically negligible compared to catalyst cost [1].

Table 2: Key optimization variables and economic criteria for catalyst selection.

| Optimization Variable | Description | Economic Consideration |

|---|---|---|

| Catalyst Cost | Cost of precursor materials and synthesis. | Often the dominant factor in optimization; the cheapest effective catalyst is typically selected [1]. |

| Energy Consumption | Energy required to achieve target conversion (e.g., reactor temperature). | Can have a "practically negligible influence" on total cost compared to catalyst cost in some analyses [1]. |

| Hydrocarbon Conversion | Target performance metric (e.g., 97.5% conversion). | A fixed constraint in the optimization problem; the system is optimized to meet this target at minimum cost [1]. |

Troubleshooting Guide & FAQs

Q1: My model performs well on training data but poorly on new, unseen catalyst compositions. What is happening and how can I fix it?

A: This is a classic sign of overfitting [21].

- Solutions: [21]

- Apply regularization techniques (L1/L2) to penalize overly complex models.

- Use cross-validation during training to get a better estimate of real-world performance.

- Simplify the model or collect more training data, especially for underrepresented regions of the catalyst space.

- For neural networks, use dropout layers.

Q2: My model fails to capture clear patterns in the catalyst data, even on the training set. What should I do?

A: This indicates underfitting [21].

- Solutions: [21]

- Increase model complexity (e.g., use a deeper neural network, or a more powerful algorithm).

- Add more relevant features or descriptors based on domain knowledge of catalysis [10].

- Reduce regularization, as you may be overly penalizing the model.

- Perform hyperparameter tuning to find a better model configuration.

Q3: I am getting inaccurate predictions for adsorption energies, even when using a pre-trained ML force field. What could be the cause?

A: This is often a problem of data quality or domain mismatch.

- Solutions:

- Benchmark your setup: As done in recent studies, explicitly validate the MLFF's predictions for a few key materials and adsorbates against DFT calculations to establish the expected error bar (e.g., MAE of ~0.16 eV) [12].

- Check adsorbate compatibility: Ensure the adsorbates you are testing (e.g., *OCHO) were included or are well-represented in the original training data for the MLFF [12].

- Validate across materials: Test the MLFF's accuracy across different types of materials (pure metals, alloys) in your study, as performance can vary [12].
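The benchmarking and per-material validation suggested above amount to computing the mean absolute error between MLFF and DFT adsorption energies, overall and per material class. The sketch below uses made-up energies purely to show the bookkeeping; none of the numbers come from [12].

```python
import numpy as np

# Hypothetical adsorption energies in eV for six benchmark systems
dft = np.array([-0.50, -1.20, 0.30, -0.80, -2.10, -0.10])
mlff = np.array([-0.40, -1.35, 0.20, -0.60, -2.00, -0.35])
material_class = np.array(["metal", "metal", "metal", "alloy", "alloy", "alloy"])

abs_err = np.abs(mlff - dft)
overall_mae = abs_err.mean()

# Break the error down by material class to spot domain mismatch
mae_by_class = {
    cls: abs_err[material_class == cls].mean() for cls in np.unique(material_class)
}
print(f"overall MAE = {overall_mae:.3f} eV", mae_by_class)
```

If one class shows a clearly larger error than the others, predictions for that class deserve extra DFT spot checks before they are used for ranking candidates.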

Q4: How can I identify which catalyst features (descriptors) are most important for my model's predictions?

A: This falls under feature importance analysis and model explainability.

- Solutions: [21]

- Use built-in feature importance analysis from algorithms like Random Forest.

- Apply model-agnostic explainability tools like SHAP (SHapley Additive exPlanations) or LIME to understand how each descriptor impacts individual predictions [21].

- This not only builds trust in the model but can also provide scientific insights into the key factors governing catalytic performance [10].
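A minimal sketch of the first two options using only scikit-learn: the built-in impurity-based importances of a Random Forest, and model-agnostic permutation importance computed on held-out data (used here in place of SHAP or LIME to avoid extra dependencies). The descriptor names and the synthetic target are placeholders.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)

# Placeholder descriptors; the target depends strongly on the first,
# weakly on the second, and not at all on the last two
names = ["descriptor_a", "descriptor_b", "descriptor_c", "noise"]
X = rng.normal(size=(300, 4))
y = 3.0 * X[:, 0] + 1.0 * X[:, 1] + rng.normal(scale=0.1, size=300)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)
rf = RandomForestRegressor(n_estimators=200, random_state=0).fit(X_train, y_train)

# Built-in importances (computed from the training data)
builtin = dict(zip(names, rf.feature_importances_))

# Permutation importance: drop in test score when one column is shuffled
perm = permutation_importance(rf, X_test, y_test, n_repeats=10, random_state=0)
ranking = [names[i] for i in np.argsort(perm.importances_mean)[::-1]]
print(ranking)
```

Permutation importance on a held-out set is generally the safer of the two when descriptors are correlated or have very different cardinalities, since impurity-based scores are derived from the training data alone.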

Q5: My high-throughput screening suggests a catalyst should be active, but experimental validation fails. Why might this happen?

A: This is a common challenge in computational materials science.

- Potential Reasons and Solutions:

- Synthesizability: The predicted material may not be stable or synthesizable under realistic conditions. Integrate stability metrics into the screening criteria [10] [3].

- Missing Descriptors: The model may be missing crucial descriptors related to the reaction environment, catalyst stability under operating conditions, or selectivity, leading to an incomplete picture of performance [10].

- Data Drift: The experimental conditions (e.g., pressure, impurities) may differ from the idealized conditions used in the computational training data. Ensure your training data is as representative as possible of real-world conditions [23].

Frequently Asked Questions (FAQs)

Q1: For a catalyst design project with tabular data containing categorical features (e.g., precipitant type, catalyst support) and numerical properties, which algorithm is most suitable out-of-the-box?

A1: For heterogeneous data mixing categorical and numerical features, CatBoost is often the most suitable choice. It natively handles categorical features without requiring extensive pre-processing (e.g., one-hot encoding), which prevents information loss and reduces training time [24]. While ANNs can be effective, they typically require careful data scaling and encoding, and may need larger datasets to perform well [24]. Random Forest also handles mixed data types robustly, but may not always achieve the same peak accuracy as well-tuned boosted algorithms [25].

Q2: My dataset for predicting catalyst activity is relatively small (~1000 data points). Will a complex model like XGBoost overfit?

A2: With a small dataset, the risk of overfitting is high for any complex model. However, this can be mitigated. Tree-based ensembles like Random Forest and XGBoost are non-parametric and can generalize well if properly regularized [25]. XGBoost incorporates regularization parameters directly into its objective function to combat overfitting [25]. For very small datasets, a carefully tuned Random Forest or a simpler model might be a more robust starting point. Using ANNs with small data is generally not advised unless using specific data-efficient architectures [26].

Q3: I am under tight computational constraints and need a model that trains quickly. What are my best options?

A3: LightGBM is explicitly designed for fast training and is often significantly faster than XGBoost and CatBoost [27]. Random Forest training can also be efficient because trees are built independently and in parallel [25]. ANNs, especially deeper architectures, often require the most computational resources and time for training [24]. CatBoost can be faster than XGBoost on some tasks, but its performance is highly dependent on hyper-parameter tuning [24].

Q4: In catalyst optimization, I need to understand which features (e.g., surface area, binding energy) are most important. Which models provide the best interpretability?

A4: Tree-based algorithms (Random Forest, XGBoost, CatBoost) are excellent for feature importance analysis. They can quantitatively rank features based on their contribution to model predictions, for example through impurity-based (Gini) importance or permutation importance [10]. This is invaluable for catalyst design to identify key descriptors. While there are methods to interpret ANNs (e.g., SHAP, LIME), they are generally less intuitive and direct than the built-in importance metrics from tree-based models.

Troubleshooting Guides

Problem 1: Poor Model Generalization (Overfitting)

Symptoms: High accuracy on training data, but significantly lower accuracy on validation/test data.

Solutions:

- For XGBoost/CatBoost/LightGBM:

- Increase Regularization: Tune hyperparameters like `reg_lambda` (L2) and `reg_alpha` (L1) regularization in XGBoost, or `l2_leaf_reg` in CatBoost [25].

- Reduce Model Complexity: Decrease `max_depth` and increase `min_data_in_leaf` (LightGBM and CatBoost; the XGBoost analogue is `min_child_weight`).

- Use Stochastic Boosting: Lower the learning rate and increase the number of estimators. Use row subsampling (`subsample`) and column subsampling (`colsample_bytree`/`colsample_bylevel`) during training [25].

- For Random Forest:

- For ANN:

- Apply L1/L2 regularization or Dropout layers.

- Reduce the number of layers and neurons per layer (network complexity).

- Ensure you have a sufficiently large dataset or use data augmentation techniques.
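As a starting point, the boosting-related settings above can be collected into one configuration. This is a sketch under stated assumptions: the parameter names follow XGBoost's scikit-learn interface (where `min_child_weight` plays a role similar to `min_data_in_leaf`), while every numeric value and both example scores are illustrative, not taken from the cited studies.

```python
# Illustrative starting values only; tune them on your own validation data.
xgb_regularized_params = {
    "n_estimators": 1000,     # more trees to compensate for the lower learning rate
    "learning_rate": 0.03,    # smaller steps per boosting round
    "max_depth": 4,           # shallower trees reduce model complexity
    "min_child_weight": 5,    # require more evidence before splitting a leaf
    "subsample": 0.8,         # row subsampling (stochastic boosting)
    "colsample_bytree": 0.8,  # column subsampling per tree
    "reg_lambda": 5.0,        # L2 regularization
    "reg_alpha": 0.5,         # L1 regularization
}

def generalization_gap(train_score, val_score):
    """Training minus validation score; a large positive gap suggests overfitting."""
    return train_score - val_score

# Hypothetical R² scores, for illustration
gap = generalization_gap(train_score=0.99, val_score=0.82)
print(f"Train/validation gap: {gap:.2f}")
```

If XGBoost is installed, the dictionary can be unpacked into `xgboost.XGBRegressor(**xgb_regularized_params)`; tracking the gap before and after the change shows whether the regularization is helping.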

Problem 2: Handling Class Imbalance in Catalyst Classification

Scenario: You are classifying catalysts as "high-performance" vs. "low-performance," but the positive class is rare (e.g., only 1-5% of your data).

Solutions:

- Algorithm Choice: XGBoost and CatBoost often perform well on imbalanced data, especially when combined with sampling techniques [28].

- Data-Level Techniques: Use oversampling methods like SMOTE (Synthetic Minority Oversampling Technique) or ADASYN to generate synthetic samples for the minority class. A 2025 study found that XGBoost combined with SMOTE consistently achieved the highest F1 score across varying imbalance levels, from moderate to extreme [28].

- Algorithm-Level Techniques: Use the `scale_pos_weight` parameter in XGBoost, the `class_weights` parameter in CatBoost, or the `class_weight` parameter in Scikit-learn to assign higher costs to misclassifying the minority class.
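The weights themselves are simple ratios of class counts. The sketch below computes the commonly used negative-to-positive ratio for `scale_pos_weight` and the "balanced" weighting heuristic, n_samples / (n_classes × class count); the 5% positive rate is an assumed example matching the scenario above.

```python
from collections import Counter

def negative_to_positive_ratio(labels):
    """Ratio of negative (0) to positive (1) samples, a common scale_pos_weight choice."""
    counts = Counter(labels)
    return counts[0] / counts[1]

def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * n) for cls, n in counts.items()}

# Assumed example: 5 high-performance catalysts out of 100
labels = [1] * 5 + [0] * 95
spw = negative_to_positive_ratio(labels)
weights = balanced_class_weights(labels)
print(f"scale_pos_weight: {spw}")
print(f"balanced class weights: {weights}")
```

With this split, the positive class receives a weight of 10 and the negative class roughly 0.53, so each rare high-performance sample counts about 19 times as much as a common one.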

Problem 3: Long Training Times for Large Datasets

Symptoms: Model training takes impractically long, slowing down the research cycle.

Solutions:

- Choose a Faster Algorithm: Switch to LightGBM, which uses histogram-based techniques and grows trees leaf-wise; on large datasets it is often reported to be 7 or more times faster than XGBoost [27].

- Utilize Approximate Methods: For XGBoost, set `tree_method='approx'` or `tree_method='hist'` to speed up training.

- Leverage Parallel Processing: Ensure you are using all available CPU cores. Most tree-based algorithms (RF, XGBoost, LightGBM) have built-in support for parallel training. CatBoost is efficient on GPUs for large datasets [24].

- For ANN: Utilize GPU acceleration (e.g., with CUDA) and frameworks like TensorFlow or PyTorch.
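The speed-up from histogram-based training comes from evaluating far fewer split candidates per feature. The sketch below illustrates the idea with simple equal-width binning; it is a simplification for intuition only and does not reproduce the binning scheme of LightGBM or XGBoost. The temperature values are invented.

```python
def bin_feature(values, n_bins=16):
    """Map continuous values to integer bin indices using equal-width bins."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / n_bins
    if width == 0:
        return [0 for _ in values]
    # Clamp the maximum value into the last bin
    return [min(int((v - lo) / width), n_bins - 1) for v in values]

# Hypothetical calcination temperatures: 1000 distinct values
temps = [300 + 0.37 * i for i in range(1000)]
bins = bin_feature(temps, n_bins=16)

# An exact method considers a split between every pair of adjacent distinct values;
# a histogram method only considers splits between adjacent bins.
exact_candidates = len(set(temps)) - 1
hist_candidates = len(set(bins)) - 1
print(f"exact split candidates: {exact_candidates}")
print(f"histogram split candidates: {hist_candidates}")
```

The trade-off is resolution: coarser bins train faster but can miss a threshold that falls inside a bin, so the bin count is itself a tunable parameter.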

Quantitative Performance Comparison

The table below summarizes a quantitative comparison of different algorithms from a study on intrusion detection in wireless sensor networks, providing concrete metrics for comparison [29].

Table 1: Algorithm Performance Metrics Comparison [29]

| Algorithm | R² | MAE | MSE | RMSE |
|---|---|---|---|---|
| CatBoost (with PSO) | 0.9998 | 0.6298 | 0.6018 | 0.7758 |
| XGBoost | 0.9992 | 1.0916 | 1.6319 | 1.2775 |
| LightGBM | 0.9989 | 1.2607 | 2.1271 | 1.4585 |
| Random Forest (RF) | 0.9988 | 1.3372 | 2.3281 | 1.5258 |
| Decision Tree (DT) | 0.9976 | 1.7846 | 4.6347 | 2.1528 |
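For reference, the four metrics in the table can be computed as follows. This is a minimal pure-Python sketch with made-up values; note that RMSE is the square root of MSE, which is consistent with the table rows (for example, √0.6018 ≈ 0.7758).

```python
import math

def regression_metrics(y_true, y_pred):
    """Return R², MAE, MSE, and RMSE for paired true and predicted values."""
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    mae = sum(abs(r) for r in residuals) / n
    mse = sum(r * r for r in residuals) / n
    rmse = math.sqrt(mse)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    ss_res = mse * n                                    # residual sum of squares
    r2 = 1.0 - ss_res / ss_tot
    return {"R2": r2, "MAE": mae, "MSE": mse, "RMSE": rmse}

# Made-up values for illustration
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.5, 2.0, 2.5, 4.0]
metrics = regression_metrics(y_true, y_pred)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```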

Experimental Protocol for Catalyst Optimization

The following workflow is adapted from a machine learning-guided study on cobalt-based catalyst design for VOC oxidation [1].

Objective: To model hydrocarbon conversion and optimize input variables to minimize both catalyst costs and energy consumption for achieving a target conversion (e.g., 97.5%) [1].

Data Collection & Preprocessing:

- Data Source: Collect data from experimental synthesis and characterization of catalysts. For the referenced study, this included five Co₃O₄ catalysts prepared via precipitation using different precipitants (e.g., H₂C₂O₄, Na₂CO₃, NaOH) [1].

- Feature Engineering: Define input features, which can include intrinsic catalyst properties (e.g., surface area, particle size), synthesis conditions, and economic/energy cost criteria [1].

- Data Splitting: Split the dataset into training, validation, and test sets.
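The splitting step can be sketched as below. The 70/15/15 proportions and the fixed seed are assumptions chosen for illustration; the referenced study's actual split is not stated here.

```python
import random

def train_val_test_split(rows, val_frac=0.15, test_frac=0.15, seed=0):
    """Shuffle a copy of the rows and cut it into train, validation, and test sets."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    n_test = int(round(len(shuffled) * test_frac))
    n_val = int(round(len(shuffled) * val_frac))
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test

# Placeholder records; in practice each row would hold features and a measured conversion
rows = list(range(100))
train, val, test = train_val_test_split(rows)
print(len(train), len(val), len(test))
```

Fixing the seed keeps the split reproducible, so that differences between models reflect the algorithms rather than a different partition of the data.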

Model Training and Validation:

- Train Multiple Algorithms: Fit a suite of supervised regression algorithms. The referenced study trained 600 Artificial Neural Network (ANN) configurations and 8 other supervised regression algorithms (e.g., from Scikit-Learn) for robust comparison [1].

- Hyperparameter Tuning: Use optimization techniques like Grid Search, Random Search, or Bayesian Optimization to tune hyperparameters for each algorithm type. Particle Swarm Optimization (PSO) can also be used for metaheuristic optimization [29].
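Grid Search, the simplest of the tuning strategies listed, can be sketched as an exhaustive loop over a parameter grid. In this illustration `toy_validation_rmse` is a hypothetical stand-in for "train the model with these settings and return the validation RMSE"; the grid values are likewise assumptions.

```python
import itertools

def grid_search(objective, grid):
    """Evaluate every combination in the grid and return the lowest-scoring one."""
    names = list(grid)
    best_params, best_score = None, float("inf")
    for combo in itertools.product(*(grid[name] for name in names)):
        params = dict(zip(names, combo))
        score = objective(params)
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score

def toy_validation_rmse(params):
    """Hypothetical validation error with its minimum at max_depth=4, learning_rate=0.1."""
    return (params["max_depth"] - 4) ** 2 + 10 * (params["learning_rate"] - 0.1) ** 2 + 1.0

grid = {"max_depth": [2, 4, 6, 8], "learning_rate": [0.01, 0.1, 0.3]}
best_params, best_score = grid_search(toy_validation_rmse, grid)
print(best_params, best_score)
```

The cost grows multiplicatively with each added parameter (12 evaluations here), which is why Random Search or Bayesian Optimization is usually preferred once the grid becomes large.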

Model Selection & Optimization:

- Performance Evaluation: Select the best-performing model based on metrics like R², MAE, MSE, or RMSE on the validation set (see Table 1 for examples).

- Framework for Optimization: Develop an optimization application (e.g., in Excel-VBA or Python) that uses the best-trained model (e.g., the top-performing ANN) [1].

- Cost Minimization: Use an optimization algorithm (e.g., Compass Search) to find the input variable values that minimize a combined cost function, balancing catalyst cost and energy consumption required to reach the target conversion [1].
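The cost-minimization step can be illustrated with a basic compass (coordinate pattern) search. This is a sketch under loudly stated assumptions, not the implementation from the referenced study: `required_temperature` is a hypothetical stand-in for inverting the trained surrogate to find the temperature that reaches the target conversion, and all cost coefficients are invented.

```python
def compass_search(f, x0, step=1.0, tol=1e-6, max_iter=10_000):
    """Minimize f by probing +/- step along each coordinate; halve step when stuck."""
    x, fx = list(x0), f(x0)
    for _ in range(max_iter):
        if step < tol:
            break
        improved = False
        for i in range(len(x)):
            for direction in (1.0, -1.0):
                candidate = x[:]
                candidate[i] += direction * step
                f_candidate = f(candidate)
                if f_candidate < fx:
                    x, fx, improved = candidate, f_candidate, True
        if not improved:
            step *= 0.5
    return x, fx

ENERGY_PRICE = 0.1  # hypothetical cost per degree of operating temperature

def required_temperature(x):
    """Hypothetical: temperature needed to reach the target conversion."""
    surface_area, particle_size = x
    return 250.0 + 4000.0 / surface_area + 2.0 * particle_size

def catalyst_cost(x):
    """Hypothetical: higher surface area and finer particles cost more to make."""
    surface_area, particle_size = x
    return 0.3 * surface_area + 50.0 / particle_size

def combined_cost(x):
    """Catalyst cost plus energy cost; non-physical inputs are rejected."""
    if x[0] <= 0 or x[1] <= 0:
        return float("inf")
    return catalyst_cost(x) + ENERGY_PRICE * required_temperature(x)

best_x, best_cost = compass_search(combined_cost, [50.0, 10.0], step=5.0)
print(f"surface area: {best_x[0]:.2f}, particle size: {best_x[1]:.2f}, cost: {best_cost:.2f}")
```

Compass search needs only function evaluations, not gradients, which makes it a natural fit when the objective wraps a trained neural network. For this toy objective the analytic optimum is a surface area of √(400/0.3) and a particle size of √(50/0.2), which the search should approach closely.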

Workflow Diagram for Catalyst ML Optimization

[Diagram: the core machine learning workflow for catalyst optimization, integrating the key stages from data preparation to final deployment.]

Research Reagent Solutions for Catalyst ML

This table details key computational "reagents" – the algorithms, software, and data tools – essential for building ML models in catalyst design.

Table 2: Essential Research Reagents for Catalyst ML [1] [26] [10]

| Research Reagent | Function / Purpose | Example Use Case in Catalyst ML |
|---|---|---|
| Artificial Neural Networks (ANNs) | Powerful nonlinear function approximators for complex, high-dimensional data. | Digital twin for predicting catalyst performance (e.g., styrene production, VOC conversion) [1]. |
| Tree-Based Ensembles (RF, XGBoost, etc.) | Robust, interpretable models for tabular data, handling mixed data types and implicit feature selection. | Predicting adsorption energies or catalytic activity from elemental and structural descriptors [25] [10]. |
| Gaussian Process Regression (GPR) | Provides uncertainty estimates alongside predictions, ideal for active learning and guiding data acquisition. | Initial exploratory phase for learning potential energy surfaces and identifying novel reaction pathways [26]. |
| Scikit-Learn Library | Comprehensive Python library offering a unified interface for many ML algorithms and preprocessing tools. | Rapid prototyping and benchmarking of various supervised regression algorithms (SVM, RF, etc.) [1]. |
| Atomic Simulation Environment (ASE) | Open-source Python package for setting up, controlling, and analyzing atomistic simulations. | High-throughput DFT calculations to generate training data for ML models (energies, forces, structures) [10]. |
| CatApp / Catalysis-Hub | Specialized databases for catalytic surfaces, providing reaction/activation energies from DFT calculations. | Source of standardized data for training ML models on adsorption energies and reaction mechanisms [10]. |

Troubleshooting Guide: Common Experimental Challenges in Catalyst Testing

Table 1: Troubleshooting Common Catalyst Preparation and Performance Issues

| Problem Observed | Potential Causes | Recommended Solutions |
|---|---|---|
| Low VOC Conversion Efficiency | Catalyst fouling (coking), improper calcination temperature, low surface area, or precursor contamination. | Inspect for pressure drops across the catalyst bed indicating fouling; clean or replace the catalyst [30]. Verify calcination temperature and time; ensure thorough washing of precipitated precursors to neutral pH [1]. |
| Poor Catalyst Selectivity (Undesired Byproducts) | Incorrect cobalt oxidation state, unfavorable coordination environment, or presence of competing reaction pathways [31]. | Use operando techniques to monitor the cobalt oxidation state (Co(III)/Co(II) ratio) under reaction conditions; pre-oxidize catalyst at high temperature (e.g., 600°C in oxygen) to establish active spinel phase [31]. |
| Catalyst Deactivation Over Time | Sintering of active phases, leaching of cobalt species, poisoning by agents like silicon, phosphorus, lead, or zinc [32] [33]. | Characterize catalyst morphology changes; be aware of Co₃O₄'s instability in acidic conditions [33]. Perform a complete analysis of the waste stream composition to exclude poisoning agents [32]. |
| High Operational Cost in Scaling | Energy-intensive operating temperatures, expensive catalyst precursors, or low catalyst lifetime. | Optimize input variables (e.g., catalyst properties) using neural networks to minimize combined catalyst and energy costs [1]. Consider heat recovery systems (recuperative or regenerative) to reduce fuel usage [34]. |
| Irreproducible Synthesis Results | Inconsistent precipitation rates, washing, drying, or calcination procedures [1]. | Standardize synthesis protocol: strict control of precipitant concentration, stirring time (1 hour), room temperature precipitation, and calcination under static air [1]. |

Frequently Asked Questions (FAQs) on Catalyst Optimization

Q1: What are the key physical properties of cobalt-based catalysts that most significantly impact their performance in VOC oxidation?

A1: Machine learning analysis of cobalt-based catalysts has shown that optimization frameworks can identify the key properties that minimize cost and energy consumption for achieving high VOC conversion (e.g., 97.5%) [1]. Modeling with hundreds of artificial neural networks (ANNs) helps map features like electronic structure and atomic/physical characteristics to performance, allowing researchers to prioritize the most critical characterization techniques and intrinsic properties during development [1].

Q2: How can machine learning be practically integrated into our catalyst development workflow?

A2: A practical ML-guided workflow involves: (1) building a defined dataset of various catalysts and their properties; (2) identifying key features such as electronic structure and physical characteristics; and (3) using ML tools like artificial neural networks (ANNs) or Scikit-Learn algorithms to detect patterns and develop performance models [1]. Automated ML processes can build better models, understand mechanisms, and offer new insights, ultimately correlating and optimizing catalyst properties based on both economic and energy criteria [1].

Q3: Our catalyst shows good initial activity but degrades quickly. What are the common causes?

A3: Rapid deactivation is often linked to catalyst fouling or structural changes under reaction conditions. Studies using operando transmission electron microscopy (OTEM) have revealed that cobalt oxide catalysts undergo a complex network of solid-state processes, including exsolution, diffusion, and defect formation, which can distort the catalyst lattice and degrade performance [31]. Additionally, the catalyst can be poisoned by specific agents in the gas stream; thus, a full stream analysis is recommended [32].

Q4: From a techno-economic perspective, what are the major cost drivers for a catalytic oxidation system?

A4: The total cost of ownership for a catalytic oxidizer includes both capital and ongoing operational costs. A primary operational cost is utility consumption, which is why catalytic oxidizers are designed to operate at lower temperatures (650°F to 1000°F, roughly 343°C to 538°C) to reduce fuel use [34] [32]. Furthermore, catalyst cost and lifetime are significant factors. ML-guided optimization studies aim to select catalysts that balance initial cost with the energy consumption required to meet conversion targets, often finding that the cheapest catalyst has a dominant influence on overall cost [1].

Experimental Protocols & Workflow

Detailed Methodology: Catalyst Synthesis and Evaluation

Protocol 1: Preparation of Co₃O₄ Catalysts via Precipitation [1]

- Precipitation: Add 100 mL of an aqueous precipitant solution (e.g., 0.22 M oxalic acid, 0.22 M sodium carbonate, or 0.44 M sodium hydroxide) to 100 mL of a 0.2 M aqueous solution of Co(NO₃)₂·6H₂O under continuous stirring for 1 hour at room temperature.

- Aging and Harvesting: Transfer the obtained precipitate to a Teflon-lined autoclave and age it in an oven at 80 °C for 24 hours. Subsequently, harvest the precipitate at room temperature via centrifugation.

- Washing: Wash the precipitate repeatedly with distilled water until the washing liquor achieves a near-neutral pH. This step is critical to remove residual ions and byproducts like nitrate salts.

- Drying and Calcination: Dry the washed solid overnight at 80 °C. Finally, calcine the precursor in a furnace under a static air atmosphere to obtain the final Co₃O₄ catalyst.
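The quantities in the precipitation step imply a consistent excess of precipitant, which a short calculation makes explicit. The sketch below assumes idealized precipitate stoichiometries (CoC₂O₄, CoCO₃, and Co(OH)₂, i.e., 1, 1, and 2 mol of precipitant per mol of Co²⁺); the phases actually formed may differ, and the salt mass is derived here from the stated volume and concentration rather than quoted from the protocol.

```python
MOLAR_MASS_CO_NITRATE_HEXAHYDRATE = 291.03  # g/mol for Co(NO3)2·6H2O

def moles(volume_l, molarity):
    """Amount of solute in mol for a solution volume (L) and concentration (mol/L)."""
    return volume_l * molarity

# 100 mL of 0.2 M cobalt nitrate solution
cobalt_mol = moles(0.100, 0.2)
salt_mass_g = cobalt_mol * MOLAR_MASS_CO_NITRATE_HEXAHYDRATE

# precipitant: (molarity, assumed mol of precipitant consumed per mol Co2+)
precipitants = {
    "oxalic acid": (0.22, 1),       # assumed product: CoC2O4
    "sodium carbonate": (0.22, 1),  # assumed product: CoCO3
    "sodium hydroxide": (0.44, 2),  # assumed product: Co(OH)2
}

excess = {}
for name, (molarity, stoich) in precipitants.items():
    supplied = moles(0.100, molarity)  # 100 mL of precipitant solution
    required = stoich * cobalt_mol
    excess[name] = supplied / required - 1.0

print(f"Co(NO3)2·6H2O needed: {salt_mass_g:.2f} g")
for name, frac in excess.items():
    print(f"{name}: {frac:.0%} excess")
```

Under these assumptions each precipitant is supplied in about 10% molar excess, which explains why the NaOH concentration is double that of the other two.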

Protocol 2: Machine Learning-Guided Performance Optimization [1]

- Data Collection: Compile a dataset from experimental results, including catalyst properties and their corresponding performance in VOC oxidation (e.g., conversion of toluene and propane).

- Model Fitting: Fit the conversion datasets to a large number of Artificial Neural Network (ANN) configurations (e.g., 600) using custom software or libraries like TensorFlow/PyTorch. Alternatively, test supervised regression algorithms from Scikit-Learn.

- Variable Optimization: Develop an optimization framework using the best-performing neural networks. Apply algorithms like Compass Search to optimize input variables (catalyst properties) with the objective of minimizing both catalyst costs and the energy consumption required to reach a target conversion (e.g., 97.5%).

Machine Learning Optimization Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Materials for Cobalt Catalyst Research

| Item | Function / Relevance in Research | Example from Literature |

|---|---|---|

| Cobalt Nitrate Hexahydrate (Co(NO₃)₂·6H₂O) | Common cobalt precursor salt for precipitation synthesis. | Used as the Co²⁺ source in all precipitation reactions [1]. |