Overcoming Mode Collapse in GANs: A Guide for Stable Catalyst Materials Discovery

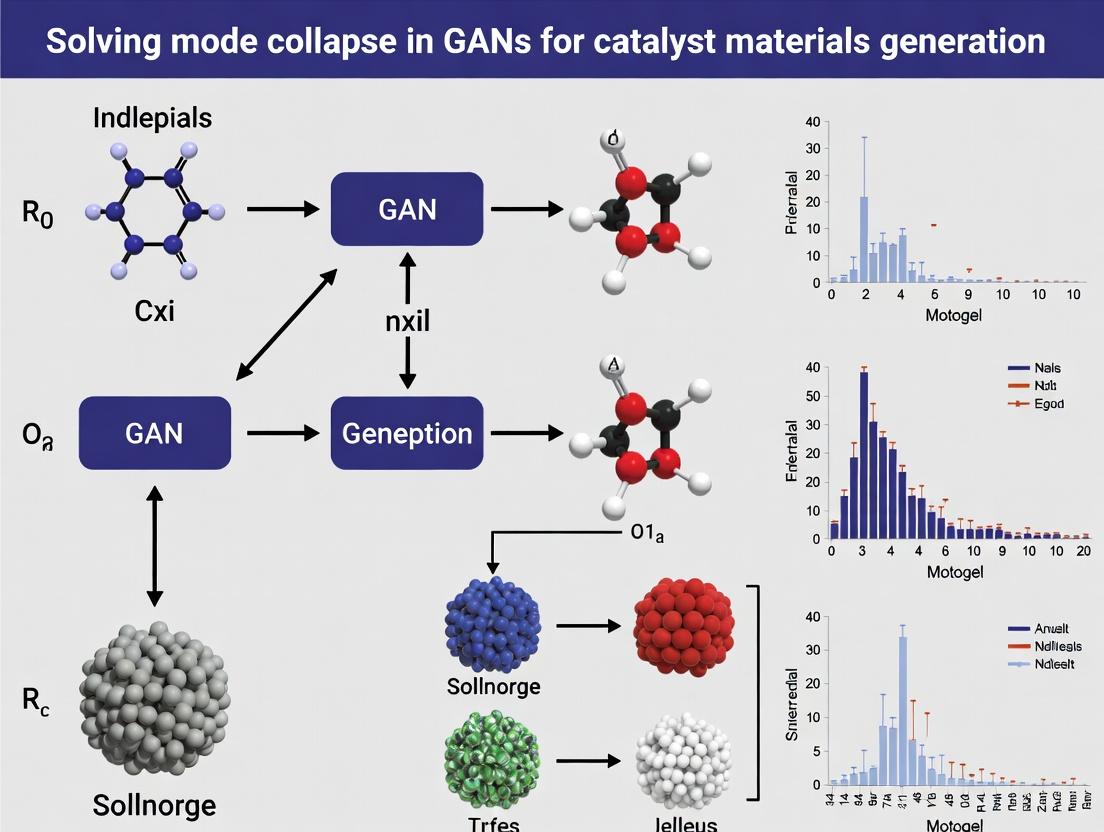

This article provides a comprehensive guide for researchers and material scientists on solving mode collapse in Generative Adversarial Networks (GANs) for catalyst generation.

Abstract

This article provides a comprehensive guide for researchers and material scientists on solving mode collapse in Generative Adversarial Networks (GANs) for catalyst generation. We first explore the foundational challenge of mode collapse and its detrimental impact on material diversity. We then detail cutting-edge methodological solutions, including architectural and training innovations. A practical troubleshooting section addresses common implementation pitfalls. Finally, we present validation frameworks and comparative analyses of leading techniques, concluding with the implications for accelerating the discovery of novel, high-performance catalytic materials.

Understanding Mode Collapse: The Fundamental Barrier to Diverse Catalyst Discovery with GANs

What is Mode Collapse in GANs? A Conceptual Breakdown for Material Scientists.

Article Context

This article serves as a technical support center for researchers working on thesis projects about solving mode collapse in GANs for catalyst materials generation. The following guides and FAQs are designed to help scientists diagnose and troubleshoot common GAN failures during materials discovery workflows.

Conceptual Breakdown & Troubleshooting Guides

FAQ 1: What is mode collapse in the context of generating catalyst materials?

Answer: Mode collapse occurs when the Generative Adversarial Network (GAN) produces a limited variety of output structures, repeatedly generating very similar or identical candidate materials. Instead of exploring the vast compositional and structural space (e.g., diverse metal alloys, perovskite families, or MOF topologies), the generator "collapses" to a few modes it finds easy to fool the discriminator with. For catalyst research, this means your GAN might propose the same doped graphene structure or a single type of active site repeatedly, ignoring other potentially superior catalysts.

FAQ 2: How can I experimentally detect mode collapse in my materials GAN?

Answer: Monitor these key failure signs during training:

- Low Diversity in Descriptors: Calculated material descriptors (e.g., formation energy, band gap, d-band center, porosity) cluster tightly in a small region of the parameter space.

- High Fréchet Distance: The Fréchet Inception Distance (FID) or its materials-specific equivalent remains high or increases, indicating poor distribution matching between generated and real data.

- Generator Loss Crashes: The generator loss drops precipitously and remains very low while the discriminator loss rises, indicating the generator has found outputs that consistently fool the discriminator, whose feedback is no longer informative.

Quantitative Detection Metrics Table

| Metric | Healthy GAN Indication | Mode Collapse Indication | Measurement Interval |

|---|---|---|---|

| Descriptor Variance | High variance across key features (e.g., Ehull, element count). | Low variance; generated samples are statistically similar. | Every 1000 training iterations. |

| FID (or custom metric) | Score decreases steadily and converges to a low value. | Score plateaus at a high value or becomes unstable. | Every epoch. |

| Generator Loss | Oscillates within a stable range. | Drops to near zero and stays there. | Every iteration/batch. |
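The descriptor-variance check in the table can be sketched as follows. This is a minimal illustration, assuming descriptors are already available as NumPy arrays of shape (samples, features); the function name `variance_ratio` and the synthetic data are hypothetical stand-ins, not part of any library.

```python
import numpy as np

def variance_ratio(real_desc, gen_desc, eps=1e-12):
    """Per-descriptor ratio of generated variance to real variance.

    Values near 1 suggest comparable spread; values near 0 suggest
    that generated samples are clustered (possible mode collapse).
    """
    real_var = np.var(real_desc, axis=0)
    gen_var = np.var(gen_desc, axis=0)
    return gen_var / (real_var + eps)

# Synthetic stand-ins for descriptor matrices (assumption: 3 descriptors)
rng = np.random.default_rng(0)
real = rng.normal(size=(500, 3))
healthy = rng.normal(size=(500, 3))            # spread similar to real data
collapsed = 0.05 * rng.normal(size=(500, 3))   # tightly clustered samples

print("healthy  :", variance_ratio(real, healthy))
print("collapsed:", variance_ratio(real, collapsed))
```

Logging this ratio at the same interval as the table suggests (for example every 1000 iterations) gives a cheap early warning before more expensive metrics are computed.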

FAQ 3: What are the main experimental protocols to mitigate mode collapse?

Answer: Implement these methodologies in your training pipeline:

Protocol 1: Mini-batch Discrimination

- Objective: Allow the discriminator to look at multiple data samples in combination.

- Procedure: Modify the discriminator network to compute a feature vector for each sample in a mini-batch. Compute the L1 distance between these vectors for every pair of samples, map each distance to a similarity (commonly exp(-distance)), and sum the similarities for each sample. Concatenate this summary statistic to the discriminator's intermediate features for that sample.

- Expected Outcome: The discriminator can identify if the generator is producing low-diversity batches, providing stronger gradients to encourage variety.
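One possible PyTorch sketch of such a layer follows. It uses the common formulation in which pairwise L1 distances are mapped through exp(-distance) before being summed; the class name, the tensor `T`, and the sizes `out_features` and `kernel_dim` are illustrative assumptions rather than a fixed API.

```python
import torch
import torch.nn as nn

class MinibatchDiscrimination(nn.Module):
    """Appends per-sample batch-similarity features to the input features."""

    def __init__(self, in_features, out_features, kernel_dim):
        super().__init__()
        self.out_features = out_features
        self.kernel_dim = kernel_dim
        # Learned projection tensor, stored flat as (in, out * kernel)
        self.T = nn.Parameter(0.1 * torch.randn(in_features, out_features * kernel_dim))

    def forward(self, x):
        # x: (batch, in_features) -> m: (batch, out_features, kernel_dim)
        m = (x @ self.T).view(-1, self.out_features, self.kernel_dim)
        # Pairwise L1 distances between samples: (batch, batch, out_features)
        l1 = (m.unsqueeze(0) - m.unsqueeze(1)).abs().sum(dim=3)
        # Sum similarities over the batch, removing the self-term exp(0) = 1
        o = torch.exp(-l1).sum(dim=0) - 1.0
        # Concatenate the diversity features to the original features
        return torch.cat([x, o], dim=1)

layer = MinibatchDiscrimination(in_features=8, out_features=4, kernel_dim=3)
features = torch.randn(5, 8)
print(layer(features).shape)  # 8 original + 4 diversity features per sample
```

When every sample in the batch is identical, each diversity feature equals the batch size minus one, which is the signal the discriminator can use to flag a collapsed batch.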

Protocol 2: Wasserstein Loss with Gradient Penalty (WGAN-GP)

- Objective: Use a more stable loss function that correlates with sample quality.

- Procedure:

- Replace standard GAN loss with the Wasserstein (Earth-Mover) distance.

- Remove logarithms from the loss functions.

- Enforce the critic's 1-Lipschitz constraint with a gradient penalty term. (The original WGAN clipped critic weights to a small range such as [-0.01, 0.01]; WGAN-GP replaces clipping with the penalty.)

- The critic loss for a batch is:

L = E[D(x_fake)] - E[D(x_real)] + λ * E[(||∇_x̂ D(x̂)||₂ - 1)²], where x̂ are random interpolates between real and fake samples.

- Expected Outcome: Smoother, more stable training with a loss value that tracks generation quality.
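A minimal PyTorch sketch of the critic loss with gradient penalty, assuming samples are flat feature vectors of shape (batch, features). The function names `gradient_penalty` and `critic_loss` and the toy critic are illustrative, not taken from a library.

```python
import torch
import torch.nn as nn

def gradient_penalty(critic, real, fake, lam=10.0):
    """lam * E[(||grad_x_hat D(x_hat)||_2 - 1)^2] on random interpolates."""
    eps = torch.rand(real.size(0), 1, device=real.device)
    # Interpolate between real and fake samples; track gradients w.r.t. x_hat
    x_hat = (eps * real.detach() + (1 - eps) * fake.detach()).requires_grad_(True)
    scores = critic(x_hat)
    grads, = torch.autograd.grad(
        outputs=scores,
        inputs=x_hat,
        grad_outputs=torch.ones_like(scores),
        create_graph=True,  # keep the graph so the penalty itself is differentiable
    )
    grad_norm = grads.reshape(grads.size(0), -1).norm(2, dim=1)
    return lam * ((grad_norm - 1) ** 2).mean()

def critic_loss(critic, real, fake, lam=10.0):
    """L = E[D(x_fake)] - E[D(x_real)] + gradient penalty."""
    fake = fake.detach()  # the critic update must not backpropagate into the generator
    return critic(fake).mean() - critic(real).mean() + gradient_penalty(critic, real, fake, lam)

# Toy usage with a small MLP critic (assumption: 16-dimensional descriptors)
critic = nn.Sequential(nn.Linear(16, 32), nn.LeakyReLU(0.2), nn.Linear(32, 1))
real, fake = torch.randn(8, 16), torch.randn(8, 16)
loss = critic_loss(critic, real, fake)
loss.backward()
```

The generator is then updated separately by minimizing `-critic(generator(z)).mean()`.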

Protocol 3: Unrolled GANs (Conceptual)

- Procedure: The generator updates its parameters based on the discriminator's future state. The discriminator is "unrolled" for K steps during generator training, simulating its reaction to the new generator.

- Use Case: Effective for periodic or discrete material structures where mode collapse is severe.

FAQ 4: How do I adapt these solutions for a catalyst discovery pipeline?

Answer: Integrate domain-specific knowledge:

- Curriculum Learning: Start training on a simpler, more uniform subset of your catalyst database (e.g., single-metal oxides) before gradually introducing complexity (alloys, doped systems, multicomponent perovskites).

- Feature Engineering: Augment the discriminator's input with physically meaningful conditional vectors (e.g., target adsorption energy, desired stability, synthesis constraints). This guides the generator towards diverse yet relevant regions of chemical space.

- Validation Set: Hold out a distinct class of catalysts (e.g., sulfides) from your training set. Periodically, use the generator to create candidates for this held-out class. Failure to generate any plausible candidates is a strong sign of mode collapse, not just overfitting.

Experimental Workflow Diagram

Diagram Title: GAN Training & Mode Collapse Mitigation Workflow

Logical Diagram: GAN Architecture & Collapse

Diagram Title: GAN Training Loop & Mode Collapse State

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in GANs for Materials | Example / Note |

|---|---|---|

| WGAN-GP Loss Function | Replaces standard GAN loss to improve training stability and mitigate collapse. Provides meaningful loss gradients. | Custom PyTorch implementation; the gradient penalty term (λ=10) is typically computed with torch.autograd.grad. |

| Mini-batch Discrimination Layer | Enables discriminator to assess sample diversity within a batch, penalizing repetitive outputs. | Custom PyTorch/TF layer appended to the discriminator network. |

| Spectral Normalization | Regularization technique applied to discriminator weights to control its Lipschitz constant, stabilizing training. | Applied as a wrapper to each layer in the discriminator/critic. |

| Training Dataset (Real) | Curated set of known catalyst structures and properties. The "ground truth" for the discriminator. | e.g., OQMD, Materials Project, ICSD, or proprietary DFT data. |

| Material Descriptor Library | Set of quantifiable features (e.g., SOAP, Coulomb matrix, Ewald sum) to numerically assess sample diversity. | Used in metrics like FID or Jensen-Shannon divergence. |

| Conditional Vector (c) | Auxiliary input (e.g., target property, space group) guiding the generator to produce specific material classes. | Concatenated with noise vector z as input to Generator. |

Technical Support Center

Troubleshooting Guide: GAN Mode Collapse in Catalyst Discovery

Issue 1: Generator produces repetitive, low-diversity catalyst candidates.

- Problem: The generator network collapses to producing only a few, chemically similar structures, failing to explore the vast high-dimensional composition/coordination space.

- Diagnosis: Monitor the diversity metrics (e.g., formula uniqueness, fingerprint Tanimoto similarity) of generated samples over training epochs. A rapid drop and plateau indicates mode collapse.

- Solution: Implement a mini-batch discrimination layer in the discriminator to give it access to multiple data points simultaneously, allowing it to detect and penalize lack of diversity.

Issue 2: Discriminator becomes too strong, causing gradient vanishing.

- Problem: Training loss shows the discriminator rapidly reaching near-zero loss, after which the generator fails to learn (gradients become negligible).

- Diagnosis: Check the discriminator's accuracy. If it approaches 100% early in training, it's overpowering the generator.

- Solution: Apply label smoothing (using soft labels like 0.9 and 0.1 instead of 1 and 0) for discriminator targets to prevent over-confident predictions. Alternatively, use Wasserstein GAN with Gradient Penalty (WGAN-GP) loss, which provides more stable gradients.

Issue 3: Generated catalysts are chemically invalid or unstable.

- Problem: The GAN proposes catalyst structures with incorrect valences, unrealistic bond lengths, or high formation energies.

- Diagnosis: Integrate a rule-based or ML-based validator into the generation loop. Calculate the percentage of generated structures that pass basic chemical validity checks.

- Solution: Use a reinforcement learning (RL) reward wrapper around the generator, where the reward function includes penalties for chemical invalidity and bonuses for predicted stability/activity.

Frequently Asked Questions (FAQs)

Q1: What specific metrics should I track to diagnose mode collapse in my catalyst GAN? A: Track both adversarial and domain-specific metrics.

Table 1: Key Metrics for Diagnosing Mode Collapse in Catalyst GANs

| Metric Category | Specific Metric | Target Value/Behavior | Measurement Frequency |

|---|---|---|---|

| Adversarial | Discriminator Loss | Should oscillate, not converge to zero. | Every epoch |

| Adversarial | Generator Loss | Should show downward trend with oscillations. | Every epoch |

| Diversity | Inception Score (IS)* | Higher is better, indicating recognizable & diverse classes. | Every 100 epochs |

| Diversity | Frechet Distance (FD) | Lower distance to reference data indicates closer distribution. | Every 100 epochs |

| Chemical | Unique Valid Structures (%) | Should increase and stabilize at a high value (e.g., >80%). | Every epoch |

| Chemical | Average Formation Energy | Should trend toward the distribution of known stable catalysts. | Every 50 epochs |

*Note: IS is often adapted using a proxy classifier trained on known catalyst classes. FD is calculated using features from a materials property predictor network.
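As an illustration of the adapted Inception Score, the sketch below computes exp(E[KL(p(y|x) || p(y))]) from class probabilities. It assumes a proxy classifier has already produced a (samples, classes) probability matrix; the function name and the toy inputs are hypothetical.

```python
import numpy as np

def inception_score(probs, eps=1e-12):
    """probs: (n_samples, n_classes) class probabilities p(y|x) from a proxy classifier."""
    p_y = probs.mean(axis=0, keepdims=True)  # marginal class distribution p(y)
    kl = np.sum(probs * (np.log(probs + eps) - np.log(p_y + eps)), axis=1)
    return float(np.exp(kl.mean()))

# Confident predictions spread evenly over 4 catalyst classes
diverse = np.eye(4)
# Every generated sample assigned to the same class (collapsed)
collapsed = np.tile(np.array([1.0, 0.0, 0.0, 0.0]), (4, 1))

print(inception_score(diverse))
print(inception_score(collapsed))
```

The score is bounded above by the number of classes, so a value close to 1 means the classifier sees essentially one class among the generated samples.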

Q2: How can I incorporate prior domain knowledge (e.g., Sabatier principle, d-band theory) to guide the GAN and prevent nonsensical exploration? A: Use a conditional GAN (cGAN) or a hybrid model. Provide the generator and discriminator with conditional vectors encoding key principles (e.g., desired adsorption energy ranges, target element identities, coordination number constraints). This reduces the effective search space and anchors exploration in physically meaningful regions.

Q3: My workflow is computationally expensive. What's a minimal protocol to test if a new anti-collapse technique is working? A: Follow this reduced-scale experimental protocol:

- Data: Select a constrained dataset (e.g., perovskite oxides ABO3 with 20 known compositions).

- Baseline Model: Train a standard Deep Convolutional GAN (DCGAN) on elemental fractions and lattice parameters for 1000 epochs. Record diversity metrics.

- Intervention Model: Train an identical DCGAN architecture but integrate the proposed technique (e.g., add spectral normalization) for 1000 epochs.

- Evaluation: Compare the two models using the Fréchet Distance between generated and training data distributions in a learned feature space from a small property predictor. A significantly lower FD for the intervention model indicates success.

Q4: Are there specific neural network architectures more resilient to mode collapse for materials generation? A: Yes, recent evidence points to transformer-based architectures and diffusion models as being less prone to mode collapse than traditional GANs for structured data generation. However, for rapid screening, a Wasserstein GAN with Gradient Penalty (WGAN-GP) using a relatively simple multilayer perceptron (MLP) is a robust and computationally efficient starting point.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for a GAN-based Catalyst Discovery Pipeline

| Item | Function in the Experiment | Example/Specification |

|---|---|---|

| Reference Dataset | Provides the real data distribution for the discriminator to learn. | Materials Project API data, ICSD, OQMD. Filtered for specific reaction (e.g., OER). |

| Descriptor Suite | Encodes crystal structures into numerical vectors for the neural network. | Sine Coulomb Matrix, Ewald Sum Matrix, Site Fingerprints (using libraries like matminer). |

| Stability Validator | Filters generated candidates by basic chemical viability. | PyDiatools for symmetry, pymatgen for structure analysis, ML formation energy predictor. |

| Property Predictor | Provides rapid screening for target properties (activity, selectivity). | Pre-trained graph neural network (e.g., MEGNet) or a simple ridge regression model on derived features. |

| Anti-Collapse Module | Algorithmic component to enforce diversity. | Mini-batch discrimination layer, Spectral Normalization, or a pre-trained contrastive learning encoder for diversity reward. |

Experimental Workflow Diagram

Title: GAN Training Loop for Catalyst Discovery

Mode Collapse Mitigation Strategies Diagram

Title: Strategies to Solve GAN Mode Collapse

Technical Support Center: Troubleshooting Mode Collapse in Catalyst GANs

FAQ 1: What are the primary indicators of mode collapse in my catalyst generation experiment? A: Key indicators include:

- Low Diversity in Output: Generated catalyst structures are nearly identical despite varying random noise input vectors.

- High Fréchet Inception Distance (FID) or low Inception Score (IS): Quantitative metrics show poor diversity and quality compared to your real training dataset.

- Generator Loss Convergence to a Low, Stable Value: This may indicate the generator has "found" a single successful mode to fool the discriminator, rather than exploring the full data distribution.

- Discriminator Loss Reaching Zero: This suggests the discriminator has become too strong. It separates real from generated samples trivially, so the generator receives vanishing gradients.

FAQ 2: My GAN generates plausible but repetitive perovskite structures. How can I force exploration of other compositions? A: This is a classic sign of partial mode collapse. Implement the following protocol:

- Immediately calculate diversity metrics (see Table 1).

- Switch to or modify a GAN architecture designed for mode diversity:

- Use a Wasserstein GAN with Gradient Penalty (WGAN-GP) to stabilize training.

- Implement Mini-batch Discrimination (add a feature to the discriminator that allows it to assess the diversity of an entire batch).

- Introduce Historical Averaging of the network parameters, or Experience Replay (periodically showing the discriminator previously generated samples).

- Revise your training data: Ensure your training set of known catalysts is itself diverse and representative of the chemical space you wish to explore.

FAQ 3: How do I quantitatively measure mode collapse to track the effectiveness of my interventions? A: Use the following metrics on a held-out validation set of real catalyst structures.

Table 1: Key Quantitative Metrics for Assessing Mode Collapse

| Metric | Formula/Description | Ideal Range | Interpretation for Catalysts |

|---|---|---|---|

| Fréchet Inception Distance (FID) | Distance between feature vectors of real and generated data using a pre-trained network (e.g., on material fingerprints). | Lower is better. Absolute values depend on the feature extractor, so image-domain thresholds (e.g., <50) do not transfer directly; compare runs that use the same extractor. | Measures similarity in feature space. A high FID suggests poor quality/diversity. |

| Inception Score (IS) | exp( E_{x~p_G} [ KL( p(y\|x) ‖ p(y) ) ] ) | Higher is better. | Assesses both quality (clear prediction by classifier) and diversity (marginal distribution p(y) has high entropy). |

| Nearest Neighbor Analysis | Ratio of the average nearest-neighbor distance within the generated set to the average nearest-neighbor distance within the real set. | ~1.0 | A ratio <<1 indicates generated samples are tightly clustered (collapse). |
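The nearest-neighbor ratio can be sketched in a few lines of NumPy. Here the ratio is taken as generated over real, so values well below 1 indicate clustering; the function names and synthetic feature matrices are illustrative assumptions.

```python
import numpy as np

def mean_nn_distance(x):
    """Average Euclidean distance from each sample to its nearest other sample."""
    d = np.linalg.norm(x[:, None, :] - x[None, :, :], axis=-1)
    np.fill_diagonal(d, np.inf)  # exclude self-distances
    return d.min(axis=1).mean()

def nn_ratio(real, gen):
    """Generated-set NN distance divided by real-set NN distance."""
    return mean_nn_distance(gen) / mean_nn_distance(real)

rng = np.random.default_rng(0)
real = rng.normal(size=(200, 4))
healthy = rng.normal(size=(200, 4))
collapsed = 0.01 * rng.normal(size=(200, 4))

print(nn_ratio(real, healthy))    # close to 1
print(nn_ratio(real, collapsed))  # far below 1
```

The pairwise distance matrix is O(n²) in memory, so for large sample sets a subsample or a tree-based neighbor search would be a reasonable substitute.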

Experimental Protocol: Calculating FID for Catalyst Ensembles

- Feature Extraction: Use a pre-trained graph neural network (e.g., one trained on the Materials Project database) to convert each catalyst structure (real and generated) into a 512-dimensional feature vector.

- Calculate Statistics: Compute the mean (μr, μg) and covariance (Σr, Σg) of the feature vectors for the real data and generated data sets.

- Compute FID: FID = ‖μr - μg‖² + Tr(Σr + Σg - 2(Σr Σg)^(1/2))

- Repeat this calculation every 1000 generator iterations during training to plot FID over time.
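The statistics and formula in this protocol can be sketched with NumPy alone. The trace of the matrix square root is obtained from the eigenvalues of Σr Σg, which are real and non-negative for valid covariance matrices; the function name and the synthetic 8-dimensional features are assumptions for illustration.

```python
import numpy as np

def frechet_distance(feat_r, feat_g):
    """FID = ||mu_r - mu_g||^2 + Tr(S_r + S_g - 2 (S_r S_g)^(1/2))."""
    mu_r, mu_g = feat_r.mean(axis=0), feat_g.mean(axis=0)
    sigma_r = np.cov(feat_r, rowvar=False)
    sigma_g = np.cov(feat_g, rowvar=False)
    # Tr((S_r S_g)^(1/2)) equals the sum of square roots of the eigenvalues of S_r S_g
    eigvals = np.linalg.eigvals(sigma_r @ sigma_g)
    tr_sqrt = np.sqrt(np.clip(eigvals.real, 0.0, None)).sum()
    diff = mu_r - mu_g
    return float(diff @ diff + np.trace(sigma_r) + np.trace(sigma_g) - 2.0 * tr_sqrt)

rng = np.random.default_rng(0)
feats = rng.normal(size=(500, 8))       # stand-in for GNN feature vectors
print(frechet_distance(feats, feats))        # approximately 0 for identical sets
print(frechet_distance(feats, feats + 3.0))  # mean shift of 3 in 8 dims: 8 * 9 = 72
```

With 512-dimensional features, the number of samples should comfortably exceed the feature dimension, otherwise the covariance estimates are rank-deficient and the score becomes noisy.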

FAQ 4: What are the most effective algorithmic fixes for mode collapse in materials GANs? A: Based on current literature, the following methodologies show high efficacy:

Table 2: Algorithmic Interventions and Protocols

| Intervention | Implementation Protocol | Expected Outcome |

|---|---|---|

| WGAN-GP | 1. Remove log loss. 2. Use linear output in Discriminator (Critic). 3. Add gradient penalty term λ(‖∇D(x̂)‖₂ - 1)² to loss. 4. Train Critic more (e.g., 5x) than Generator per iteration. | Stabilized training, improved gradient flow, better coverage of data modes. |

| Mini-batch Discrimination | 1. In Discriminator, compute a feature matrix for each sample in the batch. 2. Calculate L1 distances between samples. 3. Output a diversity feature appended to the discriminator's intermediate features. | Discriminator can reject batches with low diversity, forcing generator to produce varied outputs. |

| Unrolled GANs | 1. For the generator update, compute the discriminator's loss on "unrolled" future states (k steps ahead). 2. Optimize generator against this future-aware discriminator. | Prevents generator from over-optimizing for the current, weak discriminator state. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for Catalyst GAN Research

| Item | Function in Experiment |

|---|---|

| Curated Catalyst Datasets (e.g., from Materials Project, Catalysis-Hub) | Provides the real, structured training data (e.g., CIF files, formation energies, adsorption energies) for the GAN. |

| Graph Neural Network (GNN) Featurizer (e.g., MEGNet, SchNet) | Converts atomic structures into graph representations or feature vectors for the discriminator and for FID calculation. |

| Differentiable Crystal Graph Generator | A neural network architecture (Generator) that builds crystal structures from noise, often operating on latent graph representations. |

| WGAN-GP or PacGAN Framework Code | The core training algorithm modified to penalize mode collapse. Often requires custom implementation in PyTorch/TensorFlow. |

| High-Throughput DFT Calculation Queue (e.g., using VASP, Quantum ESPRESSO) | Used to validate the stability and activity of novel catalyst candidates generated by the GAN. |

Visualizations of GAN Training and Collapse

Title: Standard GAN Training Loop for Catalysts

Title: Mode Collapse in Catalyst GANs

Title: WGAN-GP Training with Diversity Check

Troubleshooting Guides & FAQs

FAQ 1: How can I tell if my generative model is suffering from mode collapse for catalyst discovery? Mode collapse in catalyst generation is characterized by the model producing a very limited variety of proposed material compositions or structures, despite being trained on a diverse dataset. Key indicators include:

- Low Diversity in Output: The generator repeatedly proposes the same or very similar candidates (e.g., minor variations of Pt-based alloys) while ignoring other promising classes (e.g., perovskites, metal-organic frameworks).

- Discriminator Overconfidence: The discriminator loss rapidly approaches zero, indicating it can trivially distinguish all generated samples from real data, a sign the generator is not learning the full data distribution.

- Stagnant Metric Scores: While Inception Score (IS) may remain high if the collapsed mode is a "safe" candidate, the Fréchet Distance (FID) and specifically the Precision/Recall for Distributions metrics will show poor coverage of the real catalyst space.

FAQ 2: What are the most effective quantitative metrics to track during training to detect early signs of mode collapse? Relying on a single metric is insufficient. Monitor the following suite of metrics, ideally summarized per training epoch:

| Metric | Formula/Description | Healthy Range Indicator | Mode Collapse Warning Sign |

|---|---|---|---|

| Fréchet Inception Distance (FID) | Measures distance between real and generated feature distributions. Use a materials-centric feature extractor. | Steady decrease, then plateau. | Stops improving or increases sharply. |

| Precision & Recall (Distribution) | Precision: Quality of generated samples. Recall: Coverage of real data modes. | Both values are high and in balance (e.g., ~0.6+). | High Precision but very low Recall (<0.3). |

| Number of Unique Samples | Count of chemically distinct outputs (using fingerprint similarity < 0.9). | Increases and stabilizes at a high fraction of batch size. | Plateaus at a very low number (<10% of batch). |

| Discriminator Output Variance | Variance of discriminator predictions on generated data. | Maintains moderate variance. | Variance collapses to near zero. |
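The "Number of Unique Samples" row can be illustrated with a greedy count over binary fingerprints, treating two samples as duplicates when their Tanimoto similarity reaches 0.9. The helper names and the toy fingerprints are hypothetical; real fingerprints would come from a featurizer.

```python
import numpy as np

def tanimoto(a, b):
    """Tanimoto similarity between two binary fingerprints."""
    inter = np.logical_and(a, b).sum()
    union = np.logical_or(a, b).sum()
    return inter / union if union else 1.0

def count_unique(fingerprints, threshold=0.9):
    """Greedy count of samples whose similarity to all kept samples is below threshold."""
    kept = []
    for fp in fingerprints:
        if all(tanimoto(fp, k) < threshold for k in kept):
            kept.append(fp)
    return len(kept)

fps = np.array([
    [1, 1, 0, 0],
    [1, 1, 0, 0],   # duplicate of the first fingerprint
    [0, 0, 1, 1],
], dtype=bool)
print(count_unique(fps))  # 2
```

Dividing the result by the batch size gives the fraction that the table compares against the 10% warning level.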

FAQ 3: What experimental protocol can I run to definitively confirm mode collapse? Protocol: Latent Space Interpolation and Property Distribution Analysis.

- Sample Generation: Generate 1000 candidate materials from random latent vectors z.

- Feature Calculation: Compute a definitive feature set for each candidate (e.g., using Magpie descriptors, SOAP vectors, or Morgan fingerprints).

- Dimensionality Reduction: Use UMAP or t-SNE to project both real training data and generated samples into a shared 2D/3D embedding.

- Visual & Quantitative Analysis:

- Visual: Plot the projections. A healthy model shows generated samples (blue) overlapping with all clusters of real data (red). Mode collapse shows generated samples concentrated in one small cluster.

- Quantitative: Calculate the percentage of real data clusters (defined via DBSCAN) that contain at least one generated sample. Coverage < 40% strongly suggests collapse.
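The quantitative coverage step might look like the sketch below. It assumes cluster labels for the real data have already been computed (for example by DBSCAN, with -1 marking noise) and counts a cluster as covered when a generated sample lies within `radius` of one of its points; the function name and the `radius` parameter are illustrative choices.

```python
import numpy as np

def cluster_coverage(real, real_labels, gen, radius):
    """Fraction of real clusters with at least one generated sample within `radius`."""
    d = np.linalg.norm(gen[:, None, :] - real[None, :, :], axis=-1)
    nearest = d.argmin(axis=1)                         # nearest real point per generated sample
    close = d[np.arange(len(gen)), nearest] <= radius  # ignore far-away generated samples
    hit = set(real_labels[nearest[close]].tolist())
    clusters = set(real_labels[real_labels >= 0].tolist())  # drop the noise label -1
    return len(hit & clusters) / len(clusters)

# Three well-separated real clusters in a 2D embedding
real = np.array([[0.0, 0.0], [0.1, 0.0], [10.0, 10.0], [10.1, 10.0], [20.0, 0.0], [20.1, 0.0]])
labels = np.array([0, 0, 1, 1, 2, 2])

collapsed_gen = np.array([[0.05, 0.0], [0.02, 0.01]])
healthy_gen = np.array([[0.05, 0.0], [10.0, 10.05], [20.0, 0.05]])

print(cluster_coverage(real, labels, collapsed_gen, radius=0.5))  # one of three clusters
print(cluster_coverage(real, labels, healthy_gen, radius=0.5))    # all three clusters
```

Using the clustering's own neighborhood radius for `radius` keeps the coverage definition consistent with how the clusters were found.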

Experimental Workflow for Diagnosing Mode Collapse

FAQ 4: My model has collapsed. What are my immediate mitigation steps? Immediate interventions to test:

- Switch or Tune the Loss Function: Replace standard minimax loss with Wasserstein loss (WGAN-GP) or add a uniqueness penalty term to the generator loss that penalizes similarity between generated samples in a batch.

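- Adjust Training Dynamics: Rebalance the two networks. If the discriminator is overpowering the generator, give it fewer updates per cycle or a lower learning rate. If you are using a Wasserstein critic, train the critic for several steps per generator step so that its estimate stays reliable.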

- Implement Mini-Batch Discrimination: Enable the discriminator to look at multiple samples simultaneously, helping it detect a lack of diversity.

- Inject Noise: Add small amounts of noise to the discriminator's input or the latent vector z.
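A uniqueness penalty of the kind mentioned in the first step could be sketched as follows in PyTorch. It assumes the generator output (or a differentiable featurization of it) is a (batch, features) tensor; the function name and the inverse-mean-distance form are one illustrative choice among several.

```python
import torch

def diversity_penalty(batch, lam=1.0, eps=1e-8):
    """lam / (mean pairwise Euclidean distance within the batch)."""
    n = batch.size(0)
    diff = batch.unsqueeze(0) - batch.unsqueeze(1)          # (n, n, features)
    dist = torch.sqrt(diff.pow(2).sum(dim=-1) + eps)        # eps keeps the gradient finite
    off_diag = ~torch.eye(n, dtype=torch.bool, device=batch.device)
    return lam / dist[off_diag].mean()

torch.manual_seed(0)
spread = torch.randn(16, 4)
tight = 0.01 * spread  # same pattern, collapsed to a small region

print(diversity_penalty(spread).item())
print(diversity_penalty(tight).item())  # much larger penalty
```

The term would be added to the generator loss with a small weight `lam`, tuned so that it does not dominate the adversarial signal.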

Mitigation Strategies & Their Target

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Catalyst GAN Research | Example/Note |

|---|---|---|

| Wasserstein GAN with Gradient Penalty (WGAN-GP) | A stable GAN architecture that provides meaningful loss gradients, reducing the risk of collapse. | Replaces discriminator with a critic; enforces 1-Lipschitz constraint via gradient penalty. |

| Precision & Recall for Distributions (PRD) | Metrics to separately quantify the quality (precision) and coverage (recall) of generated catalysts. | Python library prdc available. Critical for diagnosing partial collapse. |

| Mathematical Descriptor Libraries (Magpie, matminer) | Provides fixed-length feature vectors for inorganic materials, enabling FID and diversity calculations. | Converts crystal structure or composition into numerical descriptors. |

| Structural Fingerprints (SOAP, CM) | Atom-centered density correlations providing detailed structural similarity metrics for diversity checks. | More rigorous than composition-only checks. Use DScribe library. |

| Uniqueness/Diversity Loss Term | A penalty added to generator loss to directly encourage variation in outputs. | e.g., λ * (1 / pairwise_distance(fingerprints_of_batch)) |

| Mini-Batch Discrimination Layer | A discriminator layer that allows it to compare a sample to others in the batch, detecting similarity. | Usually added as a custom layer (PyTorch/TF); not part of the core libraries. |

| Jupyter Notebooks with rdkit/pymatgen | Essential environment for scripting analysis pipelines, computing descriptors, and visualizing molecules/crystals. | Enables rapid prototyping of diagnostic protocols. |

Advanced Architectures & Training Strategies for Robust Catalyst GANs

Troubleshooting Guides & FAQs

FAQ: Training Instability and Mode Collapse

Q1: During catalyst material generation, my GAN produces only a few repeating, unrealistic molecular structures instead of a diverse set. What is the primary cause and immediate fix? A1: This is classic mode collapse. The generator finds a few samples that reliably fool the discriminator and stops exploring. Immediate fixes:

- Switch your loss function: Replace the standard minimax (log loss) with a Least Squares GAN (LSGAN) loss. This penalizes samples that lie far from the decision boundary even when they are classified correctly, which keeps generator gradients from vanishing and encourages broader coverage.

- Apply gradient penalty: Implement Wasserstein GAN with Gradient Penalty (WGAN-GP). It enforces a Lipschitz constraint via a gradient norm penalty, leading to more stable and diverse training.

- Protocol: Add a gradient penalty term to your WGAN loss: λ * (||∇_x̂ D(x̂)||₂ - 1)², where x̂ is a random interpolation between real and generated samples, and λ is typically 10.

Q2: My generator loss collapses to zero while the discriminator/critic loss remains high. The generated outputs are poor. What's wrong? A2: This indicates a training imbalance. The generator has found outputs that exploit the current discriminator/critic, so the adversarial feedback no longer carries useful information about sample quality or diversity.

- Solution: Apply Spectral Normalization to the discriminator/critic. This technique constrains the Lipschitz constant of the network by normalizing the spectral norm of each layer's weight matrix, which keeps its gradients bounded and its feedback to the generator smooth.

- Protocol: Wrap each convolutional/linear layer in your discriminator with spectral normalization. Most modern deep learning libraries offer a one-line implementation (e.g., PyTorch's torch.nn.utils.spectral_norm).
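A short sketch of the wrapping step using PyTorch's `torch.nn.utils.spectral_norm`. The critic architecture, layer sizes, and the `make_critic` helper are assumptions for illustration only.

```python
import torch
import torch.nn as nn
from torch.nn.utils import spectral_norm

def make_critic(in_dim, hidden=64):
    """MLP critic with spectral normalization applied to every linear layer."""
    return nn.Sequential(
        spectral_norm(nn.Linear(in_dim, hidden)),
        nn.LeakyReLU(0.2),
        spectral_norm(nn.Linear(hidden, hidden)),
        nn.LeakyReLU(0.2),
        spectral_norm(nn.Linear(hidden, 1)),  # linear output, no sigmoid
    )

critic = make_critic(in_dim=16)
scores = critic(torch.randn(8, 16))
print(scores.shape)
```

The wrapper re-estimates the largest singular value with a power iteration on each training-mode forward pass, so no extra step is needed in the training loop.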

Q3: How do I quantitatively choose between WGAN-GP, LSGAN, and Spectral Normalization for my catalyst dataset? A3: The choice depends on your dataset size and desired stability. Use the following comparative metrics from recent literature on molecular generation:

Table 1: Comparative Performance of Advanced GAN Stabilization Techniques

| Technique | Core Mechanism | Key Hyperparameter(s) | Inception Score (↑) (on Molecular Benchmarks)* | Frechet Distance (↓) (on Molecular Benchmarks)* | Training Stability | Recommended For |

|---|---|---|---|---|---|---|

| WGAN-GP | Wasserstein distance + gradient penalty | Penalty coefficient (λ=10), Critic iterations (n_critic=5) | 8.21 ± 0.15 | 28.4 ± 1.2 | Very High | Smaller, complex datasets (e.g., rare-earth catalysts) |

| LSGAN | Least squares loss function | None critical | 7.95 ± 0.18 | 35.7 ± 2.1 | High | General use, easier implementation |

| Spectral Norm GAN | Weight matrix spectral normalization | Learning rate (often lower, e.g., 2e-4) | 8.05 ± 0.13 | 32.8 ± 1.8 | High | Very deep networks or when mode collapse is severe |

*Representative values from studies on QM9 and ZINC250k molecular datasets. Higher Inception Score (IS) and lower Frechet Distance (FD) indicate better diversity and fidelity.

Q4: When implementing WGAN-GP for generating porous catalyst structures, my training becomes extremely slow. How can I optimize it? A4: The gradient penalty computation requires a backward pass on interpolated samples, increasing cost.

- Optimization Protocol:

- Compute penalty on a subset: Apply the gradient penalty not on the full batch, but on a random subset (e.g., 25-50%).

- Use one-sided penalty: Implement the one-sided variant (max(0, ||∇_x̂ D(x̂)||₂ - 1))², which is theoretically justified and penalizes only gradient norms above 1.

- Adjust critic iterations: Reduce n_critic (e.g., from 5 to 3 or 1) and monitor the Wasserstein distance estimate; it should roughly correlate with sample quality.

Q5: I've stabilized training, but how do I quantitatively evaluate if the generated catalyst materials are truly novel and valid? A5: Stability is a means to an end. For catalyst generation, you must also assess chemical validity and novelty.

- Experimental Validation Protocol:

- Internal Validity: Use a rule-based or ML-based validator (e.g., RDKit's SanitizeMol or a pretrained property predictor) to check the percentage of generated samples that are chemically plausible. Target >90%.

- Uniqueness: Compute the fraction of unique, valid structures from a large sample (e.g., 10,000).

- Novelty: Check the valid, unique structures against known material databases (e.g., Materials Project, COD). A high novelty rate is desired for discovery.

- Property Distribution: Compare the distributions of key quantum chemical properties (e.g., HOMO-LUMO gap, formation energy) between generated and real datasets using metrics like the Earth Mover's Distance (EMD).
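The validity, uniqueness, and novelty steps can be sketched with plain Python sets. This assumes each candidate has a canonical string identifier (for example a reduced formula) and that `is_valid` is a user-supplied check; both the function name and the placeholder validator below are hypothetical.

```python
def generation_report(generated, known, is_valid):
    """Return validity, uniqueness, and novelty fractions for generated candidates."""
    valid = [g for g in generated if is_valid(g)]
    unique = set(valid)
    novel = unique - set(known)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }

# Toy example: identifiers are formulas; the validator is a placeholder
# that only rejects empty strings (a real one would check chemistry).
generated = ["LaNiO3", "LaNiO3", "SrTiO3", "", "BaZrO3"]
known = {"SrTiO3"}
report = generation_report(generated, known, is_valid=lambda f: bool(f))
print(report)
```

In this toy case four of five candidates are valid, three of those four are unique, and two of the three unique candidates are absent from the known set.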

Workflow & Relationship Diagrams

GAN Stabilization Decision Workflow

GAN Loss Function Logical Relationships

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for Stable Catalyst GAN Experiments

| Item / Solution | Function in the Experiment | Example / Specification |

|---|---|---|

| Stabilized GAN Codebase | Foundation for implementing WGAN-GP, LSGAN, and Spectral Normalization. | PyTorch-GAN library, or custom implementations from recent papers (e.g., pytorch-gan-collections). |

| Molecular/Crystal Structure Dataset | Real, clean data for training the discriminator and benchmarking. | QM9, Materials Project API, OMDB, or proprietary catalyst datasets (e.g., transition metal complexes). |

| Chemical Validation Suite | To filter and evaluate the validity of generated catalyst structures. | RDKit (for organic molecules), pymatgen/pymatgen.io.ase (for crystals), internal rule sets. |

| Descriptor/Property Calculator | To translate generated structures into quantitative metrics for evaluation. | RDKit descriptors, DFT calculators (VASP, Quantum ESPRESSO), or fast ML surrogate models. |

| High-Performance Compute (HPC) Node with GPU | To handle the computational load of training GANs and optional property validation. | NVIDIA A100/V100 GPU, 32+ GB RAM. Essential for large-scale 3D crystal generation. |

| Visualization & Analysis Toolkit | To inspect generated structures, loss curves, and metric distributions. | VESTA (for crystals), Matplotlib/Seaborn, TensorBoard/Weights & Biases for training logs. |

Technical Support Center

Troubleshooting Guide

Issue 1: Generator Produces Identical or Near-Identical Catalyst Structures

- Symptoms: Low diversity in generated candidates, repetitive active site motifs, convergence to a single output.

- Diagnosis: Classic mode collapse in the GAN. The generator has found a single output that reliably fools the discriminator, halting exploration.

- Solution A - Implement Mini-batch Discrimination: Integrate a mini-batch discrimination layer into the discriminator.

- Action: Modify your discriminator network. After an intermediate layer, compute a matrix of distances (e.g., L1) for a specific feature `f(x_i)` across all samples in the mini-batch. Output a per-sample diversity feature vector `o(x_i)` summarizing its similarity to the batch, and concatenate it to the input of the discriminator's next layer.

- Protocol: See "Experimental Protocol 1: Implementing Mini-batch Discrimination" below.

- Solution B - Apply Feature Matching: Add a feature matching loss term to the generator's objective.

- Action: Instead of solely maximizing the discriminator's output, train the generator to match the statistics (e.g., mean) of intermediate feature representations in the discriminator for real and generated data.

- Protocol: See "Experimental Protocol 2: Implementing Feature Matching" below.

Issue 2: Training Instability with New Diversity Layers

- Symptoms: Loss oscillations, NaN values, failure to converge after implementing mini-batch discrimination.

- Diagnosis: The additional complexity or scale of diversity features can destabilize the adversarial balance.

- Solution: Adjust hyperparameters. Reduce the learning rate by a factor of 2-5. Scale down the output of the mini-batch discrimination layer (`o(x_i)`) using a small multiplicative weight (e.g., 0.1) before concatenation. Ensure gradient clipping is applied.

Issue 3: Generated Catalysts are Diverse but Non-Physically Plausible

- Symptoms: Unrealistic bond lengths, coordination numbers, or formation energies despite high structural diversity.

- Diagnosis: The diversity-enforcing mechanisms are working but are insufficiently constrained by physical/chemical rules.

- Solution: Augment the discriminator's input or loss function. Incorporate a pre-trained physics-informed model (e.g., a DFT-based property predictor) as a regularization term. The discriminator should penalize structures with high predicted energy above the convex hull.

Frequently Asked Questions (FAQs)

Q1: Should I use mini-batch discrimination, feature matching, or both? A1: They address mode collapse differently. Mini-batch discrimination provides the discriminator with batch-level context, while feature matching stabilizes generator training. They are complementary. For catalyst generation, we recommend starting with feature matching for stability, and adding mini-batch discrimination if diversity remains low. See Table 1 for a comparison.

Q2: What is the computational overhead of these methods? A2: See Table 1.

Table 1: Computational & Performance Comparison

| Method | Training Time Increase | Memory Overhead | Primary Benefit | Best For |
|---|---|---|---|---|
| Mini-batch Discrimination | ~10-15% | Moderate (batch matrix) | Explicit diversity enforcement | Severe mode collapse |
| Feature Matching | ~5-10% | Low | Training stability | Oscillating/unstable training |
| Combined | ~15-25% | Moderate | Stability + Diversity | Complex, multi-property spaces |

Q3: How do I integrate these into my existing catalyst GAN pipeline? A3: See the experimental protocols below. The key is modular insertion: Feature matching modifies the generator loss function. Mini-batch discrimination inserts a new layer module into the discriminator architecture.

Q4: How do I quantitatively evaluate the diversity of generated catalysts? A4: Use a combination of metrics:

- Internal Diversity: Compute the average pairwise dissimilarity (e.g., Euclidean distance in a learned material descriptor space like SOAP) within a large batch of generated samples. Higher is better.

- Coverage: Measure the fraction of real data distribution (e.g., from a test set of known catalysts) that is represented within a radius in descriptor space by at least one generated sample.

- Property Space Span: Calculate the range (max-min) or standard deviation of key predicted properties (e.g., adsorption energy, band gap) across generated samples.
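The three metrics above can be sketched in a few lines of NumPy. This is a minimal illustration, assuming each structure has already been converted to a fixed-length descriptor vector (for example an averaged SOAP vector); the function names and the `radius` threshold are hypothetical choices, not part of any library.

```python
import numpy as np

def pairwise_distances(a, b):
    """Euclidean distance between every row of `a` and every row of `b`."""
    diff = a[:, None, :] - b[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))

def internal_diversity(gen):
    """Mean pairwise distance among generated descriptors (higher = more diverse)."""
    n = len(gen)
    d = pairwise_distances(gen, gen)
    # Self-distances on the diagonal are zero, so divide by the n*(n-1) off-diagonal pairs.
    return float(d.sum() / (n * (n - 1)))

def coverage(real, gen, radius):
    """Fraction of real samples with at least one generated sample within `radius`."""
    d = pairwise_distances(real, gen)
    return float((d.min(axis=1) <= radius).mean())

def property_span(props):
    """Range (max - min) and standard deviation of a predicted property."""
    props = np.asarray(props, dtype=float)
    return float(props.max() - props.min()), float(props.std())
```

The `radius` used for coverage is descriptor-dependent and has to be calibrated on real data, for instance from typical nearest-neighbour distances within the test set.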

Experimental Protocols

Experimental Protocol 1: Implementing Mini-batch Discrimination

- Input: Let `f(x_i) ∈ R^A` be an intermediate feature vector for sample `i` in the discriminator.

- Transformation: Apply a learnable tensor `T ∈ R^(A×B×C)` to produce a matrix `M_i ∈ R^(B×C)` for each sample.

- Cross-sample Comparison: For a mini-batch of size `n`, compute the L1-distance between the rows of `M_i` and `M_j` for all `j ≠ i`, and apply a negative exponential: `c_b(x_i, x_j) = exp(-||M_{i,b} - M_{j,b}||_1)`.

- Diversity Feature: For sample `i` and row `b`, sum over all other samples: `o(x_i)_b = ∑_{j=1, j≠i}^n c_b(x_i, x_j)`.

- Concatenation: The vector `o(x_i) ∈ R^B` is concatenated to the discriminator's feature layer, providing batch context.
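The forward computation of Protocol 1 can be sketched with NumPy as follows. This is only an illustration of the arithmetic: in a real discriminator `T` would be a trainable parameter inside a deep-learning framework, and the function name `minibatch_features` is a hypothetical helper.

```python
import numpy as np

def minibatch_features(f, T):
    """Compute o(x_i) for every sample in a batch.

    f: (n, A) intermediate discriminator features.
    T: (A, B, C) tensor (learnable in a real model, fixed here).
    Returns o with shape (n, B).
    """
    M = np.einsum("na,abc->nbc", f, T)                 # M_i for each sample, (n, B, C)
    l1 = np.abs(M[:, None] - M[None, :]).sum(axis=-1)  # row-wise L1 distances, (n, n, B)
    c = np.exp(-l1)                                    # c_b(x_i, x_j)
    # Sum over j, then subtract the j = i term, which is exp(0) = 1.
    return c.sum(axis=1) - 1.0

def append_batch_context(f, T):
    """Concatenate o(x_i) to the features passed to the next layer."""
    return np.concatenate([f, minibatch_features(f, T)], axis=1)
```

For a fully collapsed batch (identical samples) every entry of `o(x_i)` equals `n - 1`, while well-separated samples give values near zero, which is the signal the discriminator can exploit.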

Experimental Protocol 2: Implementing Feature Matching

- Forward Pass: During generator training, pass a batch of real data `{x_real}` and generated data `{x_gen}` through the discriminator.

- Feature Extraction: Extract the activations from an intermediate layer `l` of the discriminator for both batches, `f(x_real)` and `f(x_gen)`.

- Loss Calculation: Compute the feature matching loss `L_FM` as the squared L2 distance between the batch means of these features: `L_FM = ||E[f(x_real)] - E[f(x_gen)]||_2^2`.

- Generator Update: Modify the generator's total loss to be `L_G_total = L_G_original + λ * L_FM`, where `λ` is a weighting hyperparameter (typical range: 0.1 to 1.0).
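A minimal NumPy sketch of the loss in Protocol 2 is shown below. It assumes the intermediate activations have already been extracted as arrays of shape `(batch, features)`; the function names are illustrative, and a real implementation would compute the same quantity on framework tensors so that gradients reach the generator.

```python
import numpy as np

def feature_matching_loss(f_real, f_gen):
    """L_FM = || E[f(x_real)] - E[f(x_gen)] ||_2^2, with expectations taken over the batch."""
    diff = f_real.mean(axis=0) - f_gen.mean(axis=0)
    return float(np.sum(diff ** 2))

def generator_total_loss(l_g_original, l_fm, lam=0.5):
    """L_G_total = L_G_original + lambda * L_FM."""
    return l_g_original + lam * l_fm
```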

Diagrams

Title: GAN Training with Mini-batch Discrimination

Title: Feature Matching Loss Calculation Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for Catalyst GAN Research

| Item/Component | Function in Experiment | Key Considerations for Catalyst Research |
|---|---|---|
| Graph/Structure Encoder (e.g., CGCNN, SchNet) | Converts atomic structure (graph) into a latent representation. | Must be invariant to rotations/translations. Critical for capturing local coordination. |
| Conditioning Vector | A latent vector encoding target properties (e.g., high activity for specific reaction). | Enables targeted generation. Can include descriptors like adsorption energy, d-band center. |
| Differentiable Crystallographic Sampler | Converts generator's output into a valid 3D atomic structure (e.g., via fractional coordinates). | Must enforce periodic boundary conditions for bulk/surface catalysts. |
| Physics-Informed Validator | A pre-trained model (e.g., ML potential, property predictor) to assess physical plausibility. | Used to filter or penalize unrealistic generations (e.g., high-energy structures). |
| Material Descriptor (e.g., SOAP, ACSF) | Quantitative fingerprint of local atomic environments for diversity/metric calculation. | Used in mini-batch distance calculation and final diversity evaluation. |
| Stabilizing Optimizer (e.g., AdamW) | Optimizer for training the GAN networks. | Use with gradient clipping. Lower learning rates (~1e-5) are often needed for stability with feature matching. |

Technical Support Center

Troubleshooting Guides

Issue: Mode Collapse in Catalyst Candidate Generation

- Problem: The generator produces a limited variety of molecular structures, repeatedly outputting similar or identical catalyst candidates, failing to explore the full chemical space.

- Root Cause (Thesis Context): In the broader thesis on solving mode collapse for catalyst materials generation, this often stems from the discriminator becoming too strong too quickly, providing gradients that are uninformative for the generator to improve.

- Solution:

- Apply Gradient Penalty (WGAN-GP): Implement a Wasserstein GAN with Gradient Penalty to stabilize training and provide better gradients.

- Adjust Information Term Weight (InfoGAN): If using InfoGAN, incrementally increase the lambda parameter for the mutual information term to prevent it from overpowering the adversarial loss early on.

- Mini-batch Discrimination: Integrate a mini-batch discrimination layer into the discriminator to allow it to assess diversity across samples.

Issue: Poor Correlation Between Latent Codes and Material Properties

- Problem: In Conditional or InfoGAN setups, varying the structured latent codes (e.g., for bond length, electronegativity) does not result in predictable changes in the generated catalyst's properties.

- Root Cause: The generator is ignoring the latent codes, potentially due to an improperly balanced loss function or insufficient training data for certain property values.

- Solution:

- Validate Code Conditioning: Isolate the generator's conditional input and verify that the gradient from the conditional loss is propagating correctly.

- Strengthen the Q-Network (InfoGAN): Enhance the auxiliary Q-network's architecture or increase the weight of the mutual information loss to enforce a stronger link between codes and output features.

- Stratified Data Sampling: Ensure your training batches contain balanced examples across the range of desired conditional properties.

Issue: Unphysical or Invalid Molecular Structures Generated

- Problem: The generator outputs structures with incorrect valencies, unrealistic bond angles, or chemically impossible atom placements.

- Root Cause: The adversarial training process lacks explicit rules of chemistry; it only learns from the data distribution provided.

- Solution:

- Post-generation Validity Filter: Implement a rule-based or machine learning-based validator to filter outputs.

- Incorporating Validity Rewards: Use a reinforcement learning (RL) framework where the generator receives a negative reward for generating invalid structures, integrating this penalty into the GAN loss.

Frequently Asked Questions (FAQs)

Q1: For catalyst generation, should I use Conditional GAN (CGAN) or InfoGAN? A: The choice depends on your control objective.

- Use Conditional GAN (CGAN) when you have a known, labeled property (e.g., target activity > 5.0, specific crystal system) you want to condition the generation on. The condition `y` is an explicit input.

- Use InfoGAN when you want to discover and control interpretable, latent factors (e.g., continuous codes for porosity, discrete codes for symmetry type) in an unsupervised manner. It learns these representations during training.

Q2: How do I quantitatively measure mode collapse in my catalyst GAN experiments? A: Track these metrics during training:

- Inception Score (IS) / Modified IS: Measures both quality and diversity of generated samples. Requires a pre-trained classifier for your material domain.

- Frechet Distance (FD): Calculates the distance between feature distributions of real and generated data in a pretrained network's latent space. Lower FD indicates better distribution matching.

- Number of Unique Valid Structures: A direct count of chemically valid and distinct catalysts generated over a large sample set.

Table: Quantitative Metrics for Assessing GAN Performance in Catalyst Generation

| Metric Name | Optimal Value | What it Measures | Interpretation for Catalyst Research |
|---|---|---|---|
| Inception Score (IS) | Higher is better | Quality & Diversity | High score suggests diverse, classifiable (e.g., by structure type) catalysts. |
| Frechet Distance (FD) | Lower is better | Distribution Similarity | Low FD means generated catalysts' feature distribution closely matches the real dataset. |
| Percent Valid Structures | ~100% | Chemical Plausibility | Percentage of generated candidates that obey chemical rules. Critical for downstream screening. |
| Property Prediction RMSE | Lower is better | Property Control Accuracy | Root Mean Square Error between target and predicted properties of generated structures. |

Q3: What is a detailed experimental protocol for training a Conditional GAN for perovskite catalyst generation? A: Protocol: CGAN Training for Perovskites with Target Formation Energy.

- Data Preparation:

  - Curate a dataset of perovskite structures (e.g., ABX₃) with associated calculated formation energies (E_f).

  - Represent structures as 2D pixel maps (for CNN-based GAN) or as graphs (for Graph Neural Network-based GAN).

  - Normalize E_f values to a [-1, 1] range. This normalized value is your condition label `y`.

- Model Architecture:

  - Generator (G): Takes a noise vector `z` and condition `y` as input. Use fully connected or convolutional layers. The condition `y` is typically concatenated with `z` at the input and/or at several hidden layers.

  - Discriminator (D): Takes either a real or generated structure and the condition `y` as input. It must learn to judge if the structure is real and matches the provided condition.

- Training Loop:

  - For N training iterations:

    - Sample a mini-batch of real data `(X_real, y_real)`.

    - Sample noise `z` and a target condition `y_target`.

    - Generate fake data: `X_fake = G(z, y_target)`.

    - Update Discriminator `D` to maximize `D(X_real, y_real) - D(X_fake, y_target)`.

    - Update Generator `G` to maximize `D(G(z, y_target), y_target)`.

  - Use techniques like label smoothing and one-sided label noise for stability.

- Validation:

  - Periodically generate samples for a range of `y_target` conditions.

  - Use a separate property predictor model to evaluate if the generated structures exhibit the target E_f.

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for a Catalyst Material GAN Pipeline

| Item / Reagent | Function in the Experiment |
|---|---|
| Crystallographic Database (e.g., Materials Project, ICSD) | Source of real, stable material structures for training the discriminator. Provides the "ground truth" distribution. |
| Density Functional Theory (DFT) Software (e.g., VASP, Quantum ESPRESSO) | Computes the target material properties (formation energy, band gap, adsorption energy) for the training dataset and for validating generated candidates. |
| Graph Representation Library (e.g., Pymatgen, RDKit) | Converts atomic structures into machine-readable formats (graphs, descriptors, fingerprints) suitable for neural network input. |
| Differentiable Validity Checker | A neural network or differentiable function that assesses chemical validity, allowing gradient-based correction during generation. |
| WGAN-GP or Spectral Normalization | Algorithmic "reagents" applied to the training loop to enforce Lipschitz continuity, preventing mode collapse and gradient vanishing/exploding. |

Experimental Workflow & Logical Diagrams

Title: Workflow for Conditional GAN in Catalyst Generation

Title: InfoGAN Architecture for Unsupervised Code Discovery

Troubleshooting Guides & FAQs

Q1: My GAN training loss converges quickly to a constant value, and the generator outputs nearly identical material structures. What is happening? A: This is a classic symptom of mode collapse. The generator has found one or a few outputs that reliably fool the discriminator, halting meaningful exploration. Recommended steps:

- Immediate Check: Inspect your minibatch discrimination layer. Ensure the `num_kernels` and `dim_per_kernel` parameters are correctly set for your catalyst descriptor dimensionality (e.g., 128 and 16 respectively). Values that are too low fail to provide sufficient minibatch statistics.

- Protocol Adjustment: Implement or increase the strength of the Gradient Penalty (WGAN-GP). The critical hyperparameter `lambda_gp` is often set to 10.

- Data Review: Verify the diversity of your training set. Use PCA on the feature vectors of your real catalyst data to confirm a multi-modal distribution.

Q2: After implementing WGAN-GP, training becomes unstable and slow. What can I optimize? A: WGAN-GP requires more discriminator (critic) steps per generator step and careful tuning.

- Hyperparameter Table:

| Parameter | Recommended Range | Function |
|---|---|---|
| Critic Iterations per Generator Step (`n_critic`) | 3 - 5 | Balances training stability and speed. |
| Batch Size | 64 - 128 | Larger batches improve gradient penalty estimation. |
| Learning Rate (`lr`) | 1e-4 - 5e-4 | Lower than standard Adam rates are typical. |
| Optimizer Beta1 (`beta1`) | 0.0, 0.5 | Lowering Beta1 improves stability for WGAN-GP. |

- Protocol: Use the Adam optimizer with `beta1=0.5` (or RMSProp, which has no `beta1`). Start with `n_critic=5`, batch size=64, `lr=2e-4`. Monitor the critic's loss; it should oscillate around a value rather than diverge.

Q3: How do I quantitatively measure mode collapse for catalyst data during training? A: Rely on multiple metrics, not just loss. Implement the following periodic evaluation protocol:

- Inception Score (IS) / Fréchet Distance Adaptation: While standard IS uses an image classifier, for catalyst materials, use a pre-trained property predictor (e.g., activity, stability) as your "inception network." Calculate statistics on generated samples.

- Precision & Recall for Distributions: Use the k-nearest neighbors method. For 10,000 generated (`S_g`) and real (`S_r`) samples:

  - Precision: Fraction of `S_g` samples that fall within the manifold (in feature space) of `S_r`.

  - Recall: Fraction of `S_r` samples that fall within the manifold of `S_g`.

- Metric Tracking Table (Example from a Training Run):

| Epoch | Generator Loss | Critic Loss | Precision | Recall | Predicted Property Diversity (Std Dev) |
|---|---|---|---|---|---|
| 10k | -1.23 | 0.45 | 0.05 | 0.90 | 0.12 |
| 20k | -0.85 | 0.21 | 0.65 | 0.75 | 0.58 |
| 30k | -0.78 | 0.18 | 0.82 | 0.80 | 0.87 |

With the definitions above, high precision combined with low recall indicates mode collapse (realistic samples that cover only a few real modes), while low precision indicates unrealistic samples; both should converge towards high values.
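The k-nearest-neighbour estimate of precision and recall can be sketched as follows. This is a simplified NumPy illustration, assuming feature vectors are already available as arrays; `k=3` and the function names are assumptions, and the dense distance matrices are only practical for a few thousand samples at a time.

```python
import numpy as np

def _dists(a, b):
    """Euclidean distances between rows of `a` and rows of `b`."""
    return np.sqrt(((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1))

def knn_radii(x, k):
    """Distance from each sample to its k-th nearest neighbour within `x`."""
    d = np.sort(_dists(x, x), axis=1)
    return d[:, k]  # column 0 is the sample itself (distance 0)

def manifold_fraction(query, ref, k=3):
    """Fraction of `query` points lying inside the k-NN ball of at least one `ref` point."""
    radii = knn_radii(ref, k)
    d = _dists(query, ref)
    return float((d <= radii[None, :]).any(axis=1).mean())

def precision_recall(real, gen, k=3):
    precision = manifold_fraction(gen, real, k)  # generated samples inside the real manifold
    recall = manifold_fraction(real, gen, k)     # real samples inside the generated manifold
    return precision, recall
```

In this estimate, a generator that places all samples inside one real cluster scores high precision and low recall, which is the signature of collapse under these definitions.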

Q4: My generated catalyst candidates are chemically invalid or contain unrealistic bond lengths. How can the GAN learn chemical constraints? A: The GAN needs explicit guidance on chemical rules.

- Solution: Integrate a Rule-Based Discriminator or a Validity Penalty. Append an auxiliary network to the discriminator that predicts a "validity score" based on known chemical rules (e.g., coordination number ranges, electronegativity differences).

- Protocol: The generator's loss function becomes: `G_loss = -D_fake + lambda_validity * ValidityPenalty(G(z))`. Start with `lambda_validity=0.1` and increase as needed.

- Pre-processing: Ensure your data representation (e.g., graph, voxel, descriptor) inherently encodes spatial/chemical relationships, such as using Coulomb Matrices or Smooth Overlap of Atomic Positions (SOAP) descriptors.
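The loss combination above can be illustrated with a toy penalty. The sketch below assumes a hypothetical rule (coordination numbers must lie in an allowed range, here 2 to 12) and plain Python numbers; in actual training the penalty must be a differentiable function of the generator output, so this only shows how the terms are combined.

```python
def validity_penalty(coordination_numbers, allowed=(2, 12)):
    """Mean violation of a hypothetical coordination-number range (0.0 means fully valid)."""
    lo, hi = allowed
    violations = [max(lo - cn, 0) + max(cn - hi, 0) for cn in coordination_numbers]
    return sum(violations) / len(violations)

def generator_loss(d_fake_mean, penalty, lambda_validity=0.1):
    """G_loss = -D_fake + lambda_validity * ValidityPenalty(G(z))."""
    return -d_fake_mean + lambda_validity * penalty
```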

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Mode-Collapse-Resistant GAN for Catalysts |
|---|---|
| WGAN-GP Framework | Replaces traditional GAN loss; uses Earth Mover distance and gradient penalty to enforce Lipschitz constraint, enabling stable training and better coverage of data modes. |
| Minibatch Discrimination Layer | Allows the discriminator to compare a sample to an entire batch, providing the generator with a gradient based on within-batch diversity, combating collapse. |
| Spectral Normalization | Applied to discriminator weights to control its Lipschitz constant. A simpler, often more stable alternative to gradient penalty. |
| Chemistry-Aware Feature Descriptor (e.g., SOAP, ACSF) | Encodes atomic structure into a fixed-length vector that preserves chemical environmental information, providing a meaningful latent space for generation. |
| Pre-Trained Property Predictor | Acts as an evaluation network for adapted metrics (FID, Precision/Recall) and can be used as an auxiliary task for conditional generation. |
| Curriculum Learning Scheduler | Gradually increases the complexity of the generation task (e.g., starting with simple molecules) to stabilize early training. |

Key Methodologies & Visualizations

Anti-Collapse GAN Training & Eval Workflow

Loss Behavior: Standard GAN vs WGAN-GP

Debugging and Fine-Tuning GANs for Reliable Catalyst Generation

Troubleshooting Guides & FAQs

Q1: During data preprocessing for our catalyst materials dataset, the generated structures are physically unrealistic. What could be the cause? A: This is often due to improper normalization or scaling of atomic coordinate and lattice parameter data. Catalyst materials data often contains mixed units (Ångströms for coordinates, eV for energies). Failing to separately scale these heterogeneous feature sets can corrupt the physical relationships the GAN must learn. A common protocol is Min-Max scaling per feature type (e.g., coordinates scaled to [0,1], formation energies scaled to [-1,1]). Ensure your preprocessing pipeline does not violate periodic boundary conditions when applying augmentations like random rotations to crystal structures.
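Per-feature-type Min-Max scaling can be sketched as below. This is a minimal NumPy example under the assumption that coordinates and formation energies are stored in separate arrays; the function names are illustrative, and the stored minimum and maximum are needed to map generated values back to physical units.

```python
import numpy as np

def minmax_scale(x, lo=0.0, hi=1.0):
    """Scale `x` linearly to [lo, hi]; also return (x_min, x_max) for the inverse map."""
    x = np.asarray(x, dtype=float)
    x_min, x_max = float(x.min()), float(x.max())
    if x_max == x_min:  # constant feature: place it at the middle of the target range
        return np.full_like(x, (lo + hi) / 2.0), (x_min, x_max)
    return lo + (x - x_min) * (hi - lo) / (x_max - x_min), (x_min, x_max)

def minmax_inverse(x_scaled, bounds, lo=0.0, hi=1.0):
    """Map scaled values back to the original units."""
    x_min, x_max = bounds
    return x_min + (np.asarray(x_scaled, dtype=float) - lo) * (x_max - x_min) / (hi - lo)
```

Scaling each feature type with its own call (for example `lo=0, hi=1` for coordinates and `lo=-1, hi=1` for formation energies) keeps the heterogeneous units from being mixed in one normalization.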

Q2: How do I choose initial learning rates for the Generator (G) and Discriminator (D) to prevent immediate mode collapse? A: In catalyst generation, the discriminator often becomes too strong too quickly. Use a differential learning rate where D's LR is lower than G's LR. A recommended starting point from recent literature is:

- Generator (G) LR: 1e-4

- Discriminator (D) LR: 1e-5

These should be tuned using a small grid search. Monitor the loss ratio; if D loss converges to zero rapidly, the two learning rates are imbalanced.

Q3: Our GAN generates only a few, repeated catalyst prototypes despite varied training data. How can we diagnose network imbalance? A: This is classic mode collapse. Diagnose by tracking the following metrics during training:

Table 1: Key Metrics for Diagnosing GAN Imbalance

| Metric | Calculation/Description | Healthy Range | Indication of Imbalance |
|---|---|---|---|
| D Loss | Binary cross-entropy | Oscillates, does not go to 0 | D loss → 0: D too strong |
| G Loss | Binary cross-entropy | Oscillates, shows trends | G loss → high constant: G failing |
| Loss Ratio | log(D_loss / G_loss) | Stays within [-1, 1] | Ratio < -1 (D loss much smaller than G loss): D dominant; Ratio > 1: G dominant |
| Inception Score (IS)* | Calculated using a property classifier | Steady increase | Plateau or drop indicates collapse |
| Fréchet Distance* | Distance in feature space between real & fake batches | Should decrease | Sharp increase indicates distribution shift |

* Requires a pre-trained neural network regressor/classifier trained on real catalyst data to predict a key property (e.g., adsorption energy).
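The loss-ratio check can be automated with a small helper. This sketch assumes both losses are positive binary cross-entropy values; the threshold of 1 follows the healthy range in the table. Because a D loss approaching zero drives `log(D_loss / G_loss)` strongly negative, the helper treats values below -1 as a dominant discriminator. The function names are hypothetical.

```python
import math

def loss_ratio(d_loss, g_loss, eps=1e-12):
    """log(D_loss / G_loss); eps guards against division by zero."""
    return math.log((d_loss + eps) / (g_loss + eps))

def diagnose_balance(d_loss, g_loss, bound=1.0):
    """Classify the adversarial balance from one pair of loss values."""
    r = loss_ratio(d_loss, g_loss)
    if r < -bound:
        return "D dominant"
    if r > bound:
        return "G dominant"
    return "balanced"
```

Applying it to a moving average of the losses rather than single iterations avoids reacting to the normal oscillation of a healthy run.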

Q4: What are concrete experimental protocols to mitigate mode collapse in our catalyst GAN project? A: Protocol 1: Implement Mini-batch Discrimination.

- Methodology: Modify the Discriminator to receive information about an entire batch of samples, not just one at a time. Append side information (the output of a learned tensor applied to the batch) to the D's feature vector for each sample. This allows D to detect if a batch contains very similar (collapsed) samples, providing a gradient signal for G to diversify.

- Implementation: Add a mini-batch discrimination layer in the middle of D before the final classification layer.

Protocol 2: Use Two-Time Scale Update Rule (TTUR) with Gradient Penalty.

- Methodology: Apply the TTUR (see Q2) combined with a Wasserstein loss with Gradient Penalty (WGAN-GP). This stabilizes training by enforcing a Lipschitz constraint on D via a penalty on the gradient norm, preventing overly confident D predictions that drive collapse.

- Steps:

  - Use the Wasserstein loss objective.

  - After the forward pass of a batch of real and fake data, sample interpolated points uniformly along straight lines connecting paired real and fake samples in data space (the critic's input space, not the generator's latent space).

  - Calculate the gradient of D's outputs with respect to these interpolated samples.

  - Add a loss term: `Lambda * (||gradient||_2 - 1)^2`. Lambda is typically set to 10.

  - Update G and D with their separate learning rates.
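The interpolation and penalty steps can be sketched numerically. The example below uses a toy critic `D(x) = tanh(x · w)` whose input gradient is known in closed form; this toy critic is an assumption made purely so the example runs without an autograd framework. In a real pipeline the gradient would come from automatic differentiation of the actual critic.

```python
import numpy as np

def interpolate(x_real, x_fake, rng):
    """Sample points uniformly on the lines between paired real and fake samples."""
    eps = rng.uniform(0.0, 1.0, size=(x_real.shape[0], 1))
    return eps * x_real + (1.0 - eps) * x_fake

def toy_critic_grad(x, w):
    """Gradient of the toy critic D(x) = tanh(x @ w) with respect to x, shape (n, d)."""
    s = np.tanh(x @ w)
    return (1.0 - s ** 2)[:, None] * w[None, :]

def gradient_penalty(grads, lam=10.0):
    """lam * mean over the batch of (||grad||_2 - 1)^2."""
    norms = np.linalg.norm(grads, axis=1)
    return float(lam * np.mean((norms - 1.0) ** 2))

# Usage with random stand-in data (not real catalyst descriptors).
rng = np.random.default_rng(0)
x_real, x_fake = rng.normal(size=(8, 4)), rng.normal(size=(8, 4))
x_hat = interpolate(x_real, x_fake, rng)
gp = gradient_penalty(toy_critic_grad(x_hat, rng.normal(size=4)))
```

The penalty is added to the critic loss only; the generator loss is unchanged.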

Protocol 3: Periodic Validation with a Physical Property Predictor.

- Methodology: Every n training iterations, sample a batch from G and evaluate it using an external, pre-trained neural network that predicts a critical catalyst property (e.g., CO adsorption energy, formation energy). Plot the distribution of this property versus the training data distribution.

- Success Criterion: The generated property distribution should maintain similar variance and mode coverage as the training data. A collapsing distribution signals onset of mode collapse.

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Catalyst GAN Experiments

| Item | Function in Experiment |
|---|---|
| OQMD (Open Quantum Materials Database) | Primary source of clean, DFT-verified crystal structures and formation energies for training data. |
| ASE (Atomic Simulation Environment) | Python library for manipulating, filtering, and applying symmetry operations to crystal structure data during preprocessing. |
| pymatgen | Used for featurization of crystals (converting structures to descriptors like Coulomb matrices or Sine matrices) as GAN input. |
| WGAN-GP Loss Function | A key "reagent" in the loss function space, providing more stable gradients than vanilla GAN loss, crucial for handling sparse catalyst data. |
| Pre-trained Property Predictor | A separately trained CNN on catalyst properties. Acts as a validation "assay" to quantify the physical realism of generated materials. |
| Learning Rate Scheduler (Cosine Annealing) | Dynamically adjusts LR during training to help escape local minima that can lead to collapsed modes. |

Visualizations

Title: Catalyst Data Preprocessing Workflow

Title: Balanced vs. Collapsed GAN Training Dynamics

Title: Protocol for Validating Against Mode Collapse

Troubleshooting Guides & FAQs

Q1: During GAN training for catalyst generation, my Inception Score (IS) plateaus while FID worsens. What does this indicate and how should I proceed? A: This discrepancy typically signals mode collapse with poor sample quality. A high IS suggests the generator is producing a few "confident" but similar catalyst structures, while a high FID indicates these samples are far from the real catalyst distribution.

- Troubleshooting Steps:

- Verify Dataset: Ensure your training set of known catalyst materials is diverse and preprocessed consistently.

- Adjust Loss Weights: For WGAN-GP or similar, slightly increase the gradient penalty coefficient (e.g., from 10 to 50) to improve critic stability.

- Implement Mini-batch Discrimination: This helps the discriminator detect similarity between samples, providing a gradient signal to escape collapse.

- Schedule Learning Rates: Apply a gradual decay to the generator's learning rate after initial stable periods.

Q2: My calculated FID score is anomalously low (near zero) early in training. Is this good? A: No, this is a common red flag. It often means the generator is replicating training samples (overfitting) or the features extracted are not meaningful for catalyst diversity.

- Troubleshooting Steps:

- Inspect Generated Samples: Use t-SNE or PCA to visually compare generated and real catalyst feature vectors (e.g., from a materials property predictor). Look for overlapping clusters.

- Change Feature Extractor: For catalyst domains, the standard Inception network may be unsuitable. Fine-tune a network on a relevant catalyst property database (e.g., from the Materials Project) to extract features before calculating FID.

- Add Noise: Introduce small stochastic variations to the generator's input vector (z) each epoch.

Q3: How do I choose between IS and FID for monitoring catalyst diversity in my specific experiment? A: Use them conjunctively as they measure complementary aspects. See the table below.

| Metric | What It Measures | Strength for Catalysts | Weakness for Catalysts | Recommended Use |
|---|---|---|---|---|
| Inception Score (IS) | Quality & diversity within generated set. High IS = recognizable, diverse classes. | Fast to compute. Good for tracking emergence of distinct catalyst classes (e.g., metal-organic frameworks vs. perovskites). | Requires a relevant classifier. Insensitive to mode collapse if each mode is "sharp". | Early-stage training monitor. Pair with a classifier trained on catalyst types. |
| Fréchet Inception Distance (FID) | Distance between generated and real data distributions in feature space. Lower FID = closer distributions. | More sensitive to mode collapse and overall sample quality. Correlates well with human judgment of material plausibility. | Requires a large sample size (>5k) for stability. Computationally heavier. Sensitive to feature extractor choice. | Primary metric for final model evaluation and checkpoint selection. |

Q4: What is a practical protocol for implementing IS/FID in a catalyst GAN pipeline? A: Follow this detailed methodology:

- Step 1: Feature Model Preparation

- Train or select a classifier (for IS) and feature extractor (for FID) on a curated dataset of catalyst materials (e.g., CataNet-2024). Use features from the penultimate layer.

- Step 2: Sample Generation & Feature Extraction

- At training checkpoint k, generate 10,000 catalyst structures using the generator G_k.

- For each generated structure and a held-out set of 10,000 real catalysts, compute the feature vector f using your pre-trained model.

- For IS, also compute the classifier predictions p(y|x).

- Step 3: Score Calculation

- IS: Calculate the exponentiated mean KL divergence: `exp(E_x[ KL( p(y|x) || p(y) ) ])`. Use base e. Higher is better.

- FID: Calculate the Fréchet Distance: `||μ_r - μ_g||^2 + Tr(Σ_r + Σ_g - 2*sqrt(Σ_r*Σ_g))`. Lower is better. (μ=mean, Σ=covariance matrix, r=real, g=generated).

- Step 4: Visualization & Logging

- Plot IS (↑) and FID (↓) vs. training iterations. A healthy training run shows IS rising and FID falling concurrently.
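The two scores from Step 3 can be computed with NumPy as sketched below. The sketch assumes classifier probabilities and feature vectors (with at least two feature dimensions) are already available as arrays. The trace of the matrix square root is obtained from the eigenvalues of `Σ_r Σ_g`, which is one of several possible numerical routes; function names are illustrative.

```python
import numpy as np

def inception_score(probs, eps=1e-12):
    """exp(E_x[KL(p(y|x) || p(y))]) for `probs` of shape (n_samples, n_classes)."""
    p_y = probs.mean(axis=0, keepdims=True)  # marginal class distribution p(y)
    kl = (probs * (np.log(probs + eps) - np.log(p_y + eps))).sum(axis=1)
    return float(np.exp(kl.mean()))

def frechet_distance(feat_r, feat_g):
    """||mu_r - mu_g||^2 + Tr(S_r + S_g - 2 sqrt(S_r S_g)) for (n, d) feature arrays."""
    mu_r, mu_g = feat_r.mean(axis=0), feat_g.mean(axis=0)
    cov_r = np.cov(feat_r, rowvar=False)
    cov_g = np.cov(feat_g, rowvar=False)
    # Tr(sqrt(S_r S_g)) equals the sum of square roots of the eigenvalues of S_r S_g,
    # which are real and non-negative for covariance matrices up to rounding error.
    eig = np.linalg.eigvals(cov_r @ cov_g)
    tr_sqrt = np.sqrt(np.clip(eig.real, 0.0, None)).sum()
    mean_term = ((mu_r - mu_g) ** 2).sum()
    return float(mean_term + np.trace(cov_r) + np.trace(cov_g) - 2.0 * tr_sqrt)
```

As a sanity check, identical class predictions for every sample give an IS of 1, and comparing a feature set with itself gives a distance of approximately 0.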

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Catalyst GAN Research |
|---|---|
| Pre-trained Graph Neural Network (GNN) | Serves as the feature extractor for FID, converting catalyst molecular graphs or crystal structures into meaningful latent vectors. |
| Catalyst-Specific Classifier | A neural network trained to categorize catalyst types (e.g., homogeneous, heterogeneous, enzyme). Essential for calculating a relevant Inception Score. |
| Curated Catalyst Database (e.g., CataNet, NOMAD) | Provides the real data distribution for FID calculation and GAN training. Must be cleaned and featurized (e.g., using SOAP or Coulomb matrices). |
| Gradient Penalty Regularizer (λ) | A hyperparameter in WGAN-GP to enforce Lipschitz constraint, stabilizing training and mitigating mode collapse. |
| Mini-batch Discrimination Layer | A network module added to the discriminator to allow it to compare across samples, providing diversity signals to the generator. |

Workflow & Pathway Diagrams

Title: GAN Catalyst Diversity Evaluation Workflow

Title: Mode Collapse Diagnosis & Mitigation Pathway

Technical Support Center: Troubleshooting Mode Collapse in GANs for Catalyst Generation

FAQs & Troubleshooting Guides

Q1: My GAN consistently generates the same few, unrealistic catalyst structures. What are the primary tuning knobs to address this mode collapse?

A1: Mode collapse often stems from an imbalance between the generator (G) and discriminator (D). Your primary tuning knobs are:

- Noise Vector (z) Dimensionality: A higher-dimensional latent space encourages exploration of diverse structures.

- Learning Rate Ratio (LRG / LRD): A ratio that is too high can cause G to "overpower" D, leading to collapse.

- Mini-batch Discrimination: A technical feature to help D detect similarities between samples.

- Gradient Penalty (e.g., WGAN-GP): Stabilizes training by enforcing a Lipschitz constraint.

Q2: How do I quantitatively decide if increasing the noise vector dimensionality is improving exploration?

A2: Track the following metrics over training epochs. Improvement is indicated by an increase in diversity metrics without a severe drop in fidelity metrics.

| Metric | Formula/Description | Target Trend for Improved Exploration | Typical Baseline for Catalyst GANs |

|---|---|---|---|

| Inception Score (IS) | Exp( E_x[ KL(p(y|x) || p(y)) ] ) | Increase (but can be fooled) | 2.5 - 4.5 (domain-dependent) |

| Fréchet Distance (FD) | Distance between real & fake feature distributions | Decrease (indicates better fidelity) | Lower is better, no fixed range |

| Number of Unique Samples | % of unique structural fingerprints in a batch | Increase | Aim for >70% uniqueness in a batch |

| Coverage | % of real data modes captured by generated data | Increase | Target >80% coverage |
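
The diversity and fidelity metrics in the table can be sketched with NumPy as follows. This is a minimal illustration, not a reference implementation: the function names, the rounding tolerance `tol`, and the definition of coverage as "fraction of real samples with a generated neighbour within `radius`" are assumptions made for this example, and the inputs are assumed to be fixed-length fingerprint vectors (e.g., flattened SOAP or Coulomb-matrix features).

```python
import numpy as np

def uniqueness(fingerprints, tol=1e-3):
    """Fraction of fingerprints that remain distinct after binning to `tol`."""
    fp = np.asarray(fingerprints, dtype=float)
    binned = np.round(fp / tol).astype(np.int64)
    return len(np.unique(binned, axis=0)) / len(fp)

def coverage(real, fake, radius):
    """Fraction of real samples with at least one generated sample within `radius`.

    Assumption: a real sample counts as "covered" by a Euclidean-distance test.
    """
    real = np.asarray(real, dtype=float)
    fake = np.asarray(fake, dtype=float)
    # Pairwise distances, shape (n_real, n_fake).
    dists = np.linalg.norm(real[:, None, :] - fake[None, :, :], axis=-1)
    return float(np.mean(dists.min(axis=1) <= radius))

def frechet_distance(real, fake):
    """Frechet distance between Gaussians fitted to the two feature sets."""
    real = np.asarray(real, dtype=float)
    fake = np.asarray(fake, dtype=float)
    mu_r, mu_f = real.mean(axis=0), fake.mean(axis=0)
    cov_r = np.atleast_2d(np.cov(real, rowvar=False))
    cov_f = np.atleast_2d(np.cov(fake, rowvar=False))
    # Tr((cov_r cov_f)^(1/2)) is the sum of square roots of the product's eigenvalues.
    eigvals = np.linalg.eigvals(cov_r @ cov_f).real
    trace_sqrt = np.sqrt(np.clip(eigvals, 0.0, None)).sum()
    mean_term = np.sum((mu_r - mu_f) ** 2)
    return float(mean_term + np.trace(cov_r) + np.trace(cov_f) - 2.0 * trace_sqrt)
```

Logging all three values at regular checkpoints makes it easier to see whether a change in noise dimensionality raises uniqueness and coverage without pushing the Fréchet distance up.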

Q3: What is a robust experimental protocol for tuning the Generator and Discriminator learning rates?

A3: Follow this systematic grid search protocol:

- Fix a Baseline: Start with a known stable architecture (e.g., DCGAN or WGAN-GP).

- Set Variable Ranges: Define a logarithmic grid for LRG and LRD (e.g., [1e-5, 2e-5, 5e-5, 1e-4, 2e-4]).

- Hold Noise Constant: Use a fixed, moderately high noise dimension (e.g., 128) for this search.

- Run Short Experiments: Train each (LRG, LRD) pair for a fixed number of epochs (e.g., 5000).

- Evaluate: Calculate FD and Coverage on a held-out validation set of known catalyst structures.

- Analyze: Plot FD vs. Coverage. The optimal region is the Pareto front—low FD and high Coverage.
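
The grid search and Pareto analysis above can be sketched in plain Python. The callback `train_and_eval` is hypothetical: it stands for whatever routine trains one short run at a given (LRG, LRD) pair and returns the FD and Coverage measured on the held-out set.

```python
from itertools import product

LR_GRID = [1e-5, 2e-5, 5e-5, 1e-4, 2e-4]

def run_grid(train_and_eval, lr_grid=LR_GRID):
    """Evaluate every (lr_g, lr_d) pair.

    `train_and_eval(lr_g, lr_d)` is a user-supplied (hypothetical) callback
    returning a tuple (fd, coverage) for one short training run.
    """
    results = []
    for lr_g, lr_d in product(lr_grid, lr_grid):
        fd, cov = train_and_eval(lr_g, lr_d)
        results.append({"lr_g": lr_g, "lr_d": lr_d, "fd": fd, "coverage": cov})
    return results

def pareto_front(results):
    """Keep runs that no other run beats on both FD (lower) and coverage (higher)."""
    front = []
    for r in results:
        dominated = any(
            o["fd"] <= r["fd"]
            and o["coverage"] >= r["coverage"]
            and (o["fd"] < r["fd"] or o["coverage"] > r["coverage"])
            for o in results
        )
        if not dominated:
            front.append(r)
    return front
```

Runs on the returned front are the candidates worth retraining for longer; everything else is beaten on both axes by at least one other setting.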

Q4: After tuning, my GAN explores diverse structures but they are chemically invalid (poor fidelity). How can I recover fidelity?

A4: This indicates over-exploration. Implement a fidelity recovery protocol:

- Introduce a Validity Critic: Add a secondary, pre-trained network that predicts chemical stability or formation energy, providing an additional loss signal to G.

- Apply Gentle Regularization: Increase the gradient penalty weight in steps (e.g., λ from 10 toward ~50 in WGAN-GP) or add a small L2 regularization to G.

- Perform Annealed Noise Scaling: Gradually reduce the standard deviation of the input noise vector over later training epochs.

- Fine-tune with a Lower LRG: Once diverse modes are found, reduce LRG by 10x for 1000-2000 epochs to refine structures.
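
Annealed noise scaling can be expressed as a simple schedule. The sketch below assumes a linear decay that starts halfway through training; the function name, the start point, and the end value of 0.7 are illustrative choices, not values taken from this guide.

```python
def noise_std(epoch, total_epochs, anneal_start=0.5, std_start=1.0, std_end=0.7):
    """Standard deviation to apply to the latent noise at a given epoch.

    Constant at `std_start` until `anneal_start * total_epochs`, then a linear
    decay that reaches `std_end` at the final epoch. All defaults are assumptions.
    """
    start = anneal_start * total_epochs
    if epoch <= start:
        return std_start
    frac = min(1.0, (epoch - start) / (total_epochs - start))
    return std_start + frac * (std_end - std_start)
```

In a training loop, a standard-normal noise vector would be multiplied by `noise_std(epoch, total_epochs)` before being passed to G.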

Visual Workflows & Relationships

Title: GAN Mode Collapse Troubleshooting Workflow

Title: Learning Rate Ratio Effects on GAN Training

The Scientist's Toolkit: Key Research Reagents & Solutions

| Item | Function in Catalyst GAN Experiments | Example/Note |

|---|---|---|

| Wasserstein GAN with Gradient Penalty (WGAN-GP) | Training stability framework. Replaces binary cross-entropy with Earth Mover's distance and adds a penalty on gradient norm. | Critical default choice to mitigate mode collapse. Penalty weight (λ) typically = 10. |

| Structural Fingerprint (e.g., Coulomb Matrix, SOAP) | Numerical representation of atomic structure. Used to calculate diversity and fidelity metrics like Coverage and FD. | SOAP (Smooth Overlap of Atomic Positions) is often preferred for periodicity in catalysts. |

| Mini-batch Discrimination Layer | Added to the Discriminator to allow it to assess an entire batch of samples, helping detect mode collapse. | Especially useful in earlier GAN architectures (e.g., DCGAN). |

| Learning Rate Scheduler (Cyclic) | Periodically varies the learning rate within a band to help escape training plateaus and saddle points. | Can be applied to either G or D, but caution is required to maintain balance. |

| Validity Prediction Network | Pre-trained surrogate model (e.g., a graph neural network) that predicts a catalyst property (e.g., adsorption energy). Guides G towards physically plausible structures. | Acts as a regularizer for fidelity. Often fine-tuned alongside the GAN. |

| Noise Vector Sampler | Defines the distribution of the latent space input (z). Typically a Gaussian or Uniform distribution. | Exploration can be nudged by slightly increasing the variance of the distribution. |

Technical Support Center

Troubleshooting Guides

Issue 1: Generator produces identical, non-diverse catalyst structures (Mode Collapse).

- Symptoms: The Generator (G) outputs a very limited set of perovskite (e.g., only SrTiO₃) or spinel structures, regardless of the input noise vector. Discriminator (D) loss rapidly approaches zero.

- Diagnosis: D becomes too strong too quickly, providing no useful gradient for G to learn from. G finds a single "fooling" sample and collapses.

- Solution Steps:

- Implement Wasserstein Loss with Gradient Penalty (WGAN-GP): Replace the standard GAN loss. This provides stable gradients.

- Adjust Training Ratio: Under WGAN-GP, update D several times per G update (e.g., n_critic = 5, a 5:1 D:G ratio). The gradient penalty keeps D's gradients informative even when D is well trained. If you stay with the standard GAN loss instead, reduce how often D is updated so that it does not outpace G.

- Add Mini-batch Discrimination: Modify the final layer of D to look at multiple samples in a batch, helping it detect lack of diversity.

- Introduce Historical Averaging: Penalize G for parameters that drift too far from their historical average.
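
A mini-batch discrimination layer can be sketched in PyTorch as below. It follows the commonly described formulation (project features through a learned tensor, compare samples with an L1 distance, append the similarity statistics to the features). The class name, the initialisation scale, and the feature sizes are assumptions for illustration, and the input is assumed to be a flat feature vector per sample from an earlier layer of D.

```python
import torch
import torch.nn as nn

class MinibatchDiscrimination(nn.Module):
    """Appends per-sample similarity statistics computed across the batch."""

    def __init__(self, in_features, out_features, kernel_dim):
        super().__init__()
        self.out_features = out_features
        self.kernel_dim = kernel_dim
        # Learned projection tensor T, stored flattened as (A, B*C).
        self.T = nn.Parameter(torch.randn(in_features, out_features * kernel_dim) * 0.1)

    def forward(self, features):
        # M has shape (N, B, C): one B x C matrix per sample.
        m = (features @ self.T).view(-1, self.out_features, self.kernel_dim)
        # L1 distance between every pair of samples, per row b: shape (N, N, B).
        l1 = (m.unsqueeze(0) - m.unsqueeze(1)).abs().sum(dim=3)
        # Sum similarities to all other samples; subtract the self-term exp(0) = 1.
        similarity = torch.exp(-l1).sum(dim=0) - 1.0
        # Output shape (N, A + B): original features plus batch statistics.
        return torch.cat([features, similarity], dim=1)
```

When every sample in a batch is identical, each appended statistic equals N - 1, its maximum, which gives D a direct signal that G has collapsed.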

Issue 2: Generated catalysts are chemically invalid or violate Pauling's rules.

- Symptoms: Structures have unrealistic ionic coordination (e.g., Ti⁴⁺ in a tetrahedral site with excessive local charge imbalance).

- Diagnosis: G's latent space is not constrained by physical/chemical rules.

- Solution Steps:

- Incorporate a Validity Classifier: Pre-train a separate neural network to predict chemical stability from structural descriptors. Use its prediction as an auxiliary loss for G.

- Use Conditional GANs (cGAN): Condition generation on target properties (e.g., band gap > 2.5 eV, formation energy < 0 eV). This guides the search space.

- Post-processing with a Rule-Based Filter: Implement a script that rejects generated structures failing basic geometric and electrostatic validation checks.
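
A rule-based post-processing filter might look like the following NumPy sketch. It covers only two checks, overall charge neutrality and a minimum interatomic distance, and it makes several assumptions: the function names and the 1.0 Å threshold are illustrative, oxidation states are supplied by the caller, and the minimum-image wrap is only reliable for cells that are close to orthogonal. A dedicated library such as Pymatgen would be the better choice for production screening.

```python
import numpy as np

def min_image_distances(frac_coords, lattice):
    """Pairwise distances (in the lattice's length unit) under periodic boundaries.

    `lattice` holds the three lattice vectors as rows. The simple rounding-based
    wrap assumes a near-orthogonal cell.
    """
    frac = np.asarray(frac_coords, dtype=float)
    diff = frac[:, None, :] - frac[None, :, :]
    diff -= np.round(diff)  # wrap each fractional difference into [-0.5, 0.5]
    cart = diff @ np.asarray(lattice, dtype=float)
    dists = np.linalg.norm(cart, axis=-1)
    np.fill_diagonal(dists, np.inf)  # ignore self-distances
    return dists

def passes_basic_checks(frac_coords, lattice, oxidation_states,
                        min_dist=1.0, charge_tol=1e-6):
    """Reject structures that are charged overall or have overlapping atoms."""
    if abs(sum(oxidation_states)) > charge_tol:
        return False
    return bool(min_image_distances(frac_coords, lattice).min() >= min_dist)
```

For example, cubic SrTiO₃ with formal charges +2, +4, and -2 passes both checks, while a structure with two atoms placed 0.2 Å apart across a cell boundary is rejected.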

Issue 3: Training is highly unstable and losses oscillate wildly.

- Symptoms: Generator and Discriminator losses do not converge but show large, regular oscillations.

- Diagnosis: The optimization process is unstable, often due to high learning rates or poorly balanced network capacities.

- Solution Steps:

- Apply Spectral Normalization: Enforce a Lipschitz constraint on both G and D by normalizing the weight matrices in each layer. This is more stable than weight clipping.

- Reduce Optimizer Momentum: Switch from Adam to RMSprop, or use Adam with a reduced beta1 (e.g., 0.5 instead of 0.9).

- Two-Timescale Update Rule (TTUR): Use separate learning rates for D and G instead of a shared one (e.g., lr_D = 1e-4, lr_G = 4e-4). TTUR is more commonly applied with the higher rate on D, so compare both directions on your dataset.
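
To make the mechanism behind spectral normalization concrete, the NumPy sketch below estimates the largest singular value of a weight matrix by power iteration and divides the matrix by it. The function name and iteration count are assumptions for illustration. In practice, PyTorch provides `torch.nn.utils.spectral_norm`, which wraps a layer and refreshes the estimate during training rather than iterating to convergence as done here.

```python
import numpy as np

def spectral_normalize(weight, n_iter=50, seed=0):
    """Return (weight / sigma, sigma), with sigma the estimated top singular value."""
    w = np.asarray(weight, dtype=float)
    rng = np.random.default_rng(seed)
    u = rng.normal(size=w.shape[0])
    u /= np.linalg.norm(u)
    for _ in range(n_iter):
        # Alternate between right and left singular vector estimates.
        v = w.T @ u
        v /= np.linalg.norm(v)
        u = w @ v
        u /= np.linalg.norm(u)
    sigma = float(u @ w @ v)
    return w / sigma, sigma
```

After normalization the layer's linear map has a spectral norm of about 1, which bounds how much that layer can stretch its input.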

FAQs

Q1: What are the first diagnostic checks when I suspect mode collapse?

A1: Run these checks:

- Visualize Outputs: Plot 100 generated structures in a 2D t-SNE or PCA projection alongside your training data. Collapse is indicated by a tight, single cluster.

- Monitor Losses: Plot D and G losses. A rapidly falling then flat D loss with a rising G loss is a classic sign.

- Calculate Metrics: Compute the Fréchet Inception Distance (FID) or, for materials, the Validity Rate using a pretrained property predictor. A stable, low FID or high validity rate indicates healthy training.
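
The visual check can be backed by a number. The sketch below fits a PCA on the training fingerprints with NumPy, projects both sets onto the first two components, and compares their spread. The function names and the idea of summarising collapse as a variance ratio are assumptions made for this example, not an established metric.

```python
import numpy as np

def pca_project(real, fake, n_components=2):
    """Fit PCA axes on `real`, then project both sets onto them."""
    real = np.asarray(real, dtype=float)
    fake = np.asarray(fake, dtype=float)
    mean = real.mean(axis=0)
    # Rows of vt are the principal axes of the centred real data.
    _, _, vt = np.linalg.svd(real - mean, full_matrices=False)
    axes = vt[:n_components].T
    return (real - mean) @ axes, (fake - mean) @ axes

def spread_ratio(real_2d, fake_2d):
    """Total projected variance of generated samples relative to real samples."""
    return float(fake_2d.var(axis=0).sum() / real_2d.var(axis=0).sum())
```

A ratio close to zero corresponds to the tight single cluster described above. The two projected arrays can also be passed directly to a scatter plot for visual inspection.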

Q2: My GAN generates good structures, but they lack novel, high-performing catalysts. Why?

A2: Your GAN is likely replicating the training data distribution. To discover novel, high-performing candidates, you need to search the latent space. Use a genetic algorithm or Bayesian optimization on G's input noise vector (z), using a target property predictor (e.g., for oxygen evolution reaction activity) as the fitness function.
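
A latent-space search of this kind can be sketched as a small elitist evolutionary loop in NumPy. Everything named here is hypothetical: `generator` stands for the trained G mapping a noise vector to a structure representation, `predictor` for a property model returning a scalar fitness (higher is better), and the population size, elite count, and mutation scale are arbitrary starting values.

```python
import numpy as np

def evolve_latents(generator, predictor, z_dim=128, pop_size=32,
                   n_elite=8, sigma=0.1, n_generations=20, seed=0):
    """Search noise vectors z that maximise predictor(generator(z)).

    Returns the best z found and the best fitness per generation.
    """
    rng = np.random.default_rng(seed)
    pop = rng.standard_normal((pop_size, z_dim))
    history = []
    for _ in range(n_generations):
        fitness = np.array([predictor(generator(z)) for z in pop])
        order = np.argsort(fitness)[::-1]  # best first
        history.append(float(fitness[order[0]]))
        elite = pop[order[:n_elite]]
        # Children are mutated copies of randomly chosen elites; elites survive unchanged.
        parents = elite[rng.integers(0, n_elite, size=pop_size - n_elite)]
        children = parents + sigma * rng.standard_normal(parents.shape)
        pop = np.vstack([elite, children])
    fitness = np.array([predictor(generator(z)) for z in pop])
    best = int(np.argmax(fitness))
    history.append(float(fitness[best]))
    return pop[best], history
```

Because the elites are carried over unchanged, the best fitness never decreases between generations. Candidates found this way still need the DFT validation step, since the search can exploit errors in the property predictor.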

Q3: How much training data do I need to stabilize a GAN for oxide catalysts?

A3: While GANs are data-hungry, data augmentation and transfer learning can help. A minimum viable dataset is ~5,000 unique, relaxed structures from sources like the Materials Project. Augment this with symmetry operations and small perturbations. Pre-training D as an autoencoder on a larger unlabeled dataset can also improve performance.

Q4: Are there specific architectures better suited for crystal graph generation?

A4: Yes. Standard CNNs/MLPs treat structures as images/voxels, losing geometric information. Consider:

- Graph Neural Network (GNN)-based GANs: Represent the crystal structure as a graph (atoms as nodes, bonds as edges). This respects periodic boundaries and is invariant to rotation/translation.

- Variational Autoencoder (VAE)-GAN Hybrids: The VAE provides a structured latent space, which can be more easily sampled and interpolated than a standard GAN's noise vector.

Table 1: Performance of GAN Stabilization Techniques on a Perovskite Oxide Dataset (ABO₃)

| Technique | Avg. Structural Validity Rate (%) | Avg. Formation Energy (MAE, eV/atom) | Diversity (FID Score) | Training Stability (Epochs to Convergence) |

|---|---|---|---|---|

| Standard GAN (Baseline) | 12.5 | 0.45 | 85.2 | Did not converge |

| + WGAN-GP | 58.7 | 0.28 | 42.1 | ~35k |

| + WGAN-GP + Spectral Norm | 74.3 | 0.21 | 28.5 | ~25k |

| + cGAN + Validity Classifier | 92.1 | 0.15 | 18.9 | ~15k |

Table 2: Key Hyperparameters for a Stabilized GAN (cGAN with WGAN-GP)

| Parameter | Generator (G) | Discriminator (D) | Common |

|---|---|---|---|

| Learning Rate | 4e-4 | 1e-4 | - |

| Optimizer | Adam (beta1=0.5, beta2=0.9) | Adam (beta1=0.5, beta2=0.9) | - |

| Batch Size | - | - | 64 |

| Noise Vector (z) Dim | 128 | - | - |

| Condition (y) Dim | 10 (e.g., target band gap, A-site element) | 10 | - |

| Gradient Penalty Weight (λ) | - | - | 10 |

| n_critic (D updates per G update) | - | - | 5 |

Experimental Protocols

Protocol 1: Implementing WGAN-GP for a Crystal Graph GAN

- Modify Loss Function: Replace the standard GAN loss (binary cross-entropy) with the Wasserstein loss. D minimizes L_D = E[D(G(z))] - E[D(x)] + λ * GP, where GP is the gradient penalty, and G minimizes L_G = -E[D(G(z))].

- Compute Gradient Penalty (GP): For each batch, sample a random interpolation x_hat between a real sample x and a generated sample G(z): x_hat = ε*x + (1-ε)*G(z), where ε ~ U(0,1). Compute the gradient of the discriminator's output w.r.t. x_hat: gradients = ∇_x_hat D(x_hat). The penalty is GP = E[(||gradients||₂ - 1)²], averaged over the batch.

- Update Discriminator: Calculate the D loss, L_D = E[D(G(z))] - E[D(x)] + λ*GP. Update D weights n_critic times per training iteration.

- Update Generator: Calculate the G loss, L_G = -E[D(G(z))]. Update G weights once.
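
The protocol above can be sketched in PyTorch as follows. This is a minimal illustration under stated assumptions: samples are fixed-size feature tensors (interpolating between variable-size crystal graphs needs extra care), the function names are chosen for this example, and `train_iteration` reuses one real batch for all critic steps, whereas a full pipeline would usually draw a fresh batch each time.

```python
import torch
import torch.nn as nn

def gradient_penalty(critic, real, fake):
    """GP = mean over the batch of (||grad_x_hat D(x_hat)||_2 - 1)^2."""
    # One epsilon per sample, broadcast over the remaining dimensions.
    eps = torch.rand(real.size(0), *([1] * (real.dim() - 1)), device=real.device)
    x_hat = (eps * real + (1.0 - eps) * fake).detach().requires_grad_(True)
    scores = critic(x_hat)
    # create_graph=True so the penalty itself can be backpropagated.
    (grads,) = torch.autograd.grad(outputs=scores.sum(), inputs=x_hat, create_graph=True)
    grad_norm = grads.flatten(start_dim=1).norm(2, dim=1)
    return ((grad_norm - 1.0) ** 2).mean()

def critic_loss(critic, real, fake, lam=10.0):
    """L_D = E[D(G(z))] - E[D(x)] + lam * GP."""
    fake = fake.detach()  # do not backpropagate into G during the critic step
    gp = gradient_penalty(critic, real, fake)
    return critic(fake).mean() - critic(real).mean() + lam * gp

def generator_loss(critic, fake):
    """L_G = -E[D(G(z))]."""
    return -critic(fake).mean()

def train_iteration(G, D, opt_g, opt_d, real, z_dim, n_critic=5, lam=10.0):
    """One iteration: n_critic critic updates, then one generator update."""
    for _ in range(n_critic):
        z = torch.randn(real.size(0), z_dim, device=real.device)
        loss_d = critic_loss(D, real, G(z), lam)
        opt_d.zero_grad()
        loss_d.backward()
        opt_d.step()
    z = torch.randn(real.size(0), z_dim, device=real.device)
    loss_g = generator_loss(D, G(z))
    opt_g.zero_grad()
    loss_g.backward()
    opt_g.step()
    return loss_d.item(), loss_g.item()
```

A quick sanity check is to pass a linear critic with a known weight vector: its gradient norm is constant, so the penalty should equal (norm - 1)² exactly.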

Protocol 2: Generating and Validating a Novel Catalyst

- Condition Specification: Define your target property vector y (e.g., [Band_Gap=3.2, Stability_Phase='Perovskite', A_Site_Element='La']).

- Sampling: Sample a random noise vector z from a normal distribution. Concatenate z and y as input to the trained Generator G.

- Structure Decoding: The Generator outputs a candidate crystal structure (e.g., as a CIF file or a set of fractional coordinates).

- DFT Validation (Mandatory): Perform first-principles Density Functional Theory (DFT) calculations on the top-N generated candidates:

- Relaxation: Fully relax the ionic positions and cell volume.

- Property Calculation: Calculate the formation energy, electronic band structure, and predicted catalytic activity descriptor (e.g., O p-band center for oxides).

- Stability Check: Confirm thermodynamic stability via a convex hull analysis.
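
The condition-specification and sampling steps can be illustrated with a short NumPy sketch. The encoding is an assumption made for this example: the phase and A-site vocabularies, the band-gap scaling constant, and the function names are hypothetical, chosen so that the condition vector has 10 entries and the noise vector 128.

```python
import numpy as np

# Hypothetical vocabularies; a real project would derive these from its dataset.
PHASES = ["Perovskite", "Spinel", "Rutile"]
A_SITES = ["La", "Sr", "Ba", "Ca", "Y", "Nd"]

def one_hot(value, vocabulary):
    """One-hot vector for `value`; raises ValueError if it is not in the vocabulary."""
    vec = np.zeros(len(vocabulary))
    vec[vocabulary.index(value)] = 1.0
    return vec

def encode_condition(band_gap_ev, phase, a_site, max_gap_ev=6.0):
    """Condition vector y: scaled band gap (1) + phase (3) + A-site (6) = 10 entries."""
    return np.concatenate(
        [[band_gap_ev / max_gap_ev], one_hot(phase, PHASES), one_hot(a_site, A_SITES)]
    )

def generator_input(condition, z_dim=128, rng=None):
    """Concatenate a fresh standard-normal noise vector z with the condition y."""
    rng = np.random.default_rng() if rng is None else rng
    z = rng.standard_normal(z_dim)
    return np.concatenate([z, condition])
```

Calling `generator_input(encode_condition(3.2, "Perovskite", "La"))` yields a 138-entry vector. Sampling it repeatedly with the same condition gives different candidates for the same target properties.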

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in GAN for Catalysts |

|---|---|

| PyTorch/TensorFlow (Deep Learning Frameworks) | Provides the core environment for building, training, and evaluating GAN models with GPU acceleration. |

| Pymatgen (Python Materials Genomics) | Used to process, featurize, and validate crystal structures. Converts between file formats and calculates structural descriptors. |

| Materials Project API | Primary source for obtaining training data: thousands of relaxed, calculated crystal structures and their properties. |

| ASE (Atomic Simulation Environment) | Interfaces with DFT codes (VASP, Quantum ESPRESSO) for the essential validation and property calculation of generated candidates. |

| DGL-LifeSci or PyTorch Geometric | Libraries for implementing Graph Neural Network (GNN) architectures, which are ideal for representing crystal structures. |

| WandB (Weights & Biases) | Tracks hyperparameters, loss functions, and generated samples in real-time, crucial for diagnosing instability. |

Visualizations

Title: Mode Collapse Diagnostic Flowchart

Title: Stabilized Catalyst Generation & Validation Pipeline

Benchmarking Success: Validating and Comparing GANs for Catalytic Material Design

Troubleshooting Guides & FAQs

Q1: During catalyst GAN training, my generated samples show extremely low structural diversity. All output molecules look nearly identical. What is wrong and how can I fix it?

A: This is a classic symptom of mode collapse. Implement quantitative diversity metrics to diagnose.