The Catalyst Descriptor Dilemma: Balancing Speed and Accuracy for Drug Discovery Breakthroughs


Abstract

This article examines the critical tradeoff between computational speed and predictive accuracy in catalyst descriptor models, a cornerstone of modern computer-aided drug development and materials science. We provide a foundational understanding of descriptor types and their inherent precision-cost relationships. Methodological approaches for building efficient models are explored, followed by practical troubleshooting and optimization strategies to navigate this tradeoff in real-world applications. Finally, we discuss rigorous validation frameworks and comparative analyses to assess model performance. This comprehensive guide equips researchers with the knowledge to strategically select and optimize descriptor models to accelerate innovation while maintaining scientific rigor.

Understanding the Core Tradeoff: What Are Catalyst Descriptors and Why Does the Speed-Accuracy Dilemma Exist?

Technical Support Center: Troubleshooting Guide & FAQs

This support center addresses common computational and experimental issues encountered when defining and calculating catalyst descriptors, framed within the research context of balancing model accuracy with computational speed.

Frequently Asked Questions (FAQs)

Q1: My DFT calculation for adsorption energy is failing to converge. What are the primary stability levers to adjust? A: Convergence failures in Density Functional Theory (DFT) calculations usually originate in either the electronic (SCF) loop or the ionic relaxation. Follow this protocol:

- Tighten the electronic convergence criterion: Gradually decrease EDIFF (e.g., from 1E-4 to 1E-5 or 1E-6 eV).

- Improve the initial geometry: Use a pre-optimized structure from a faster method (e.g., PM6, PM7).

- Modify the smearing width (SIGMA): For metallic systems, increase SIGMA (e.g., to 0.2 eV) to improve orbital occupancy convergence.

- Use a better initial guess: Read wavefunctions from a previous, similar calculation (ISTART = 1).

Thesis Context: Over-tightening convergence criteria (Step 1) maximizes accuracy but severely impacts speed. Start with looser criteria and tighten only as necessary for descriptor stability.

Q2: How do I choose between a simple descriptor (e.g., d-band center) and a complex one (e.g., Machine Learning (ML)-derived feature) for high-throughput screening? A: The choice is dictated by the target fidelity of your screening stage.

- Primary Screening (1,000+ candidates): Use ultra-fast descriptors: Pauling Electronegativity, Atomic Radius, Bulk Coordination Number. Accuracy is secondary to speed.

- Secondary Screening (100-1,000 candidates): Use intermediate descriptors: d-band center from a simplified slab model, generalized coordination number (GCN), scaling relations. This is the core tradeoff zone.

- Tertiary Validation (<50 candidates): Use high-accuracy descriptors: DFT-calculated adsorption energies at full coverage, activation barriers (NEB), ab-initio molecular dynamics (AIMD) stability checks.

Thesis Context: The optimal research pipeline uses a cascade of models, where speed-focused models filter candidates for accuracy-focused models.

Q3: My ML model for catalytic activity overfits to the training data despite using a large descriptor set. How can I improve generalizability? A: Overfitting indicates your model complexity is too high for your data. Implement this workflow:

- Feature Selection: Apply LASSO (L1) regularization or recursive feature elimination to identify the 5-10 most physically meaningful descriptors.

- Data Augmentation: Use the Open Catalyst Project (OC20) dataset or apply scaling relations to synthetically generate more training points.

- Simplify the Model: Switch from a deep neural network to a gradient-boosted tree (e.g., XGBoost) for smaller datasets (<10,000 points).

- Validation: Ensure you are using a strict temporal or compositional hold-out test set, not random cross-validation.

Thesis Context: Increasing descriptor complexity does not guarantee better model performance. Parsimonious models with key physical descriptors often offer the best speed/accuracy balance.

Q4: I need to calculate the Turnover Frequency (TOF) descriptor. What is the minimal experimental dataset required for a reliable microkinetic model (MKM)? A: A reliable MKM for TOF requires kinetic data across a range of conditions. Follow this experimental protocol:

Protocol: Data Collection for Microkinetic Modeling

- Measure reaction rates at a minimum of three different temperatures (e.g., 200°C, 225°C, 250°C) to obtain apparent activation energy.

- Measure reaction orders for each reactant by varying partial pressures (e.g., 0.2, 0.5, 1.0 atm) while holding others constant at a single temperature.

- Perform catalyst characterization post-reaction: Use XRD or TEM to confirm stability, and XPS to confirm oxidation state.

- Input data into MKM software (e.g., CatMAP, Kinetics). The model must simultaneously fit all data from steps 1 and 2. The calculated TOF is your activity descriptor.

Thesis Context: Experimental descriptor determination (like TOF) is high-accuracy but low-speed, serving as the ultimate benchmark for computational descriptor accuracy.
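
Before the data from steps 1 and 2 go into a full microkinetic model, a quick sanity check is to extract the apparent activation energy and reaction order by linear regression. The sketch below is only an illustration: the rate values are synthetic (generated from an assumed 80 kJ/mol barrier and half-order kinetics), and the helper names `fit_line`, `apparent_activation_energy`, and `reaction_order` are hypothetical, not part of CatMAP or any other package.

```python
import math

R = 8.314462618  # gas constant, J/(mol*K)

def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def apparent_activation_energy(temps_c, rates):
    """Apparent Ea in kJ/mol from the slope of ln(rate) vs. 1/T."""
    inv_t = [1.0 / (t + 273.15) for t in temps_c]
    slope, _ = fit_line(inv_t, [math.log(r) for r in rates])
    return -slope * R / 1000.0

def reaction_order(pressures_atm, rates):
    """Apparent reaction order from the slope of ln(rate) vs. ln(p)."""
    slope, _ = fit_line([math.log(p) for p in pressures_atm],
                        [math.log(r) for r in rates])
    return slope

# Synthetic data for illustration only (not measurements).
temps_c = [200.0, 225.0, 250.0]
rates_t = [math.exp(-80000.0 / (R * (t + 273.15))) for t in temps_c]
pressures = [0.2, 0.5, 1.0]
rates_p = [p ** 0.5 for p in pressures]

ea = apparent_activation_energy(temps_c, rates_t)   # kJ/mol
order = reaction_order(pressures, rates_p)
```

With only three points per fit, the regression cannot reveal curvature, so real data that deviate from a straight line are a hint that the rate-determining step changes across the measured range.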

Table 1: Accuracy vs. Speed Trade-off for Common Catalyst Descriptors

| Descriptor Category | Example Descriptors | Typical Calculation Time | Typical Error vs. Experiment | Best Use Case |
|---|---|---|---|---|
| Empirical / Simple | Pauling Electronegativity, Ionic Radius | Seconds | > 0.5 eV | Initial Trend Screening |
| Geometric | Coordination Number, Generalized CN (GCN) | Minutes | ~0.2 - 0.3 eV | Extended Surface Screening |
| Electronic (DFT-Lite) | d-band center (simplified slab), Bader Charge | Hours | ~0.1 - 0.2 eV | Focused Metal/Alloy Study |
| Electronic (DFT-High) | Full Adsorption Energy, Activation Barrier (NEB) | Days to Weeks | < 0.1 eV | Final Candidate Validation |
| Machine Learning | SOAP, Graph Neural Net (GNN) Features | Minutes (after training) | Variable (0.05 - 0.3 eV) | High-throughput Virtual Screening |

Table 2: Troubleshooting DFT Convergence Parameters (VASP)

| Parameter | Symbol (VASP) | Recommended Value for Start | Value for High Accuracy | Trade-off Impact |
|---|---|---|---|---|
| Electronic Convergence | EDIFF | 1E-4 eV | 1E-6 eV | Major Speed Impact |
| Ionic Convergence | EDIFFG | -0.05 eV/Å | -0.01 eV/Å | Major Speed Impact |
| Smearing Width | SIGMA | 0.2 eV | 0.05 eV | Stability vs. Accuracy |
| Plane-Wave Cutoff | ENCUT | 1.3*max(ENMAX) | 1.5*max(ENMAX) | Major Speed Impact |
| k-point Spacing | KSPACING | 0.5 Å⁻¹ | 0.2 Å⁻¹ | Major Speed Impact |
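
One way to keep the "start" and "high accuracy" columns of Table 2 from drifting apart across a project is to store them as named presets and render the INCAR text from them. This is a minimal sketch under stated assumptions: `PRESETS` and `render_incar` are hypothetical names, the values are copied from the table, and ENCUT is left out because it depends on the ENMAX of the pseudopotentials actually in use.

```python
# Values copied from Table 2. ENCUT is deliberately omitted: it must be
# derived from the ENMAX values of the chosen pseudopotentials.
PRESETS = {
    "start": {
        "EDIFF": "1E-4", "EDIFFG": "-0.05", "SIGMA": "0.2", "KSPACING": "0.5",
    },
    "high_accuracy": {
        "EDIFF": "1E-6", "EDIFFG": "-0.01", "SIGMA": "0.05", "KSPACING": "0.2",
    },
}

def render_incar(preset, overrides=None):
    """Return INCAR-style 'TAG = value' lines for a preset plus overrides."""
    params = dict(PRESETS[preset])
    params.update(overrides or {})
    return "\n".join(f"{tag} = {value}" for tag, value in params.items()) + "\n"

# Loose first pass that restarts from existing wavefunctions (ISTART = 1).
incar_text = render_incar("start", {"ISTART": "1"})
```

Running the loose preset first and switching to `high_accuracy` only for shortlisted structures mirrors the "start loose, tighten as needed" advice given for convergence problems.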

Experimental & Computational Workflows

Diagram 1: Catalyst Descriptor Development Pipeline

Diagram 2: Troubleshooting DFT Convergence

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational & Experimental Materials

| Item Name | Category | Function / Purpose |
|---|---|---|
| VASP / Quantum ESPRESSO | Software | Ab-initio DFT code for calculating electronic structure descriptors (d-band, adsorption energy). |
| CatMAP / ASE (Atomic Simulation Environment) | Software | Python libraries for constructing microkinetic models and high-throughput descriptor calculation workflows. |
| OC20 (Open Catalyst 2020) Dataset | Data | > 1 million DFT relaxations for training ML models on catalyst surfaces, bridging speed (ML) and accuracy (DFT). |
| Standard Redox Catalyst Library (e.g., Strem Chemicals) | Experimental | Well-characterized metal complexes (e.g., Ir, Ru, Pd) for benchmarking experimental vs. computed descriptors. |
| High-Throughput Reactor System (e.g., Amtech, HEL) | Equipment | Allows parallel testing of catalyst candidates under identical conditions, generating fast experimental activity descriptors. |
| XPS/UPS Reference Samples (e.g., Au, Cu, Graphite) | Experimental | Calibration standards for aligning experimental binding energy (a descriptor) with computed Fermi levels. |

Technical Support Center

Troubleshooting Guide: Common Computational & Fidelity Issues

Issue 1: Model Predictions are Inaccurate Despite High-Complexity Descriptors

- Symptoms: High-fidelity predictions on training set but poor generalization to unseen catalyst data; overfitting.

- Possible Cause: Descriptor complexity (e.g., many-body interaction terms, high-dimensional fingerprints) has exceeded the available training data, capturing noise.

- Solution:

- Implement rigorous cross-validation (e.g., leave-one-cluster-out for catalysts).

- Apply regularization techniques (L1/Lasso, L2/Ridge) during model training.

- Reduce descriptor dimensionality using principal component analysis (PCA) or feature selection based on physical relevance.

- Augment training data with computational data from lower-fidelity methods.

Issue 2: Descriptor Calculation is Prohibitively Slow for High-Throughput Screening

- Symptoms: Workflow bottleneck is descriptor generation (e.g., quantum-mechanical charge density analysis), not model inference.

- Possible Cause: Using electronic-structure-level descriptors for a screening task requiring millions of data points.

- Solution:

- Implement a multi-fidelity screening approach (see Workflow Diagram).

- Cache frequently calculated descriptors for common molecular fragments or catalyst cores.

- Switch to lower-cost descriptor families (e.g., composition-based, geometric) for initial screening, reserving complex descriptors for final candidates.

Issue 3: Inconsistent Results Between Different Descriptor Software Packages

- Symptoms: The same molecular structure yields different descriptor values (e.g., different connectivity fingerprints) depending on the toolkit used.

- Possible Cause: Differences in algorithmic implementation, normalization, or underlying parameterizations.

- Solution:

- Standardize all input structures using a consistent force-field minimization protocol.

- For the project, commit to a single software package (e.g., RDKit, Dragon) for a given descriptor type.

- Document all software versions and calculation parameters in metadata.

- Validate descriptor generation on a small set of benchmark structures.

Frequently Asked Questions (FAQs)

Q1: How do I quantitatively decide between a simple and a complex descriptor for my catalyst design project? A: Conduct a Pareto front analysis. For a representative subset of your data, plot the predictive accuracy (e.g., R², MAE) against the computational cost (CPU-seconds) for multiple descriptor families. The optimal descriptor lies on the Pareto front, representing the best accuracy for a given cost. See Table 1 for a simplified example.
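
A Pareto front over (cost, error) pairs takes only a few lines to compute. The sketch below is illustrative: the function name `pareto_front` is hypothetical, the numbers are example values rather than benchmark results, and the `slow_but_poor` entry is an invented option added to show what a dominated descriptor looks like.

```python
def pareto_front(points):
    """Return names of options that no other option beats on both axes.

    Each point is (name, cost, error); lower is better for cost and error.
    """
    front = []
    for name, cost, err in points:
        dominated = any(
            (c <= cost and e <= err) and (c < cost or e < err)
            for _, c, e in points
        )
        if not dominated:
            front.append(name)
    return front

# (descriptor family, seconds per compound, MAE); illustrative numbers only.
candidates = [
    ("compositional", 0.01, 1.85),
    ("2d_fingerprint", 0.3, 0.92),
    ("3d_geometric", 5.0, 0.65),
    ("slow_but_poor", 20.0, 0.80),  # hypothetical dominated option
    ("electronic", 600.0, 0.41),
]
front = pareto_front(candidates)
```

Any descriptor family that drops off the front, like the invented `slow_but_poor` entry here, can be removed from the pipeline without losing either speed or accuracy.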

Q2: Can I combine simple and complex descriptors into a single model? A: Yes, this is a common strategy. You can create a hierarchical or "delta-learning" model. A fast model using simple descriptors makes an initial prediction, and a secondary model, using complex descriptors, learns the correction term to achieve higher fidelity. This often optimizes the speed/accuracy trade-off.
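
The delta-learning idea can be sketched with two one-variable linear fits: a baseline on the cheap descriptor and a correction fitted to the baseline's residuals using the expensive descriptor. Everything below is an assumption made for illustration: the data are synthetic, and a real workflow would use multi-dimensional descriptors, a proper regressor, and held-out data.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def mae(predicted, true):
    """Mean absolute error."""
    return sum(abs(p - t) for p, t in zip(predicted, true)) / len(true)

# Synthetic training data: the target depends on both descriptors.
cheap = [0.0, 1.0, 2.0, 3.0, 4.0]
expensive = [1.0, 0.0, 1.0, 0.0, 1.0]
target = [2.0 * c + 0.5 * e for c, e in zip(cheap, expensive)]

# Stage 1: fast baseline model on the cheap descriptor only.
a, b = fit_line(cheap, target)
baseline = [a * c + b for c in cheap]

# Stage 2: correction model learns the residual from the expensive descriptor.
residual = [t - p for t, p in zip(target, baseline)]
c_slope, c_intercept = fit_line(expensive, residual)
corrected = [p + c_slope * e + c_intercept for p, e in zip(baseline, expensive)]

baseline_mae = mae(baseline, target)
corrected_mae = mae(corrected, target)
```

In a screening setting the correction model would be evaluated only for candidates that pass the baseline filter, which is where the speed benefit comes from.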

Q3: What is the most common mistake in setting up descriptor-based machine learning for catalysis? A: Neglecting domain-aware train/test splits. Random splitting can lead to data leakage and optimistic performance. Always split data by catalyst family, core metal, or synthesis batch to ensure the model's ability to generalize to truly novel compounds is tested.
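
A domain-aware split assigns whole groups, never individual records, to the test set. The sketch below uses only the standard library; the function name `group_split`, the `metal` field, and the toy records are hypothetical. scikit-learn's group-based splitters serve the same purpose in a real pipeline.

```python
import random

def group_split(records, group_key, test_fraction=0.2, seed=0):
    """Split records so that no group appears in both train and test."""
    groups = sorted({r[group_key] for r in records})
    rng = random.Random(seed)
    rng.shuffle(groups)
    n_test = max(1, round(len(groups) * test_fraction))
    test_groups = set(groups[:n_test])
    train = [r for r in records if r[group_key] not in test_groups]
    test = [r for r in records if r[group_key] in test_groups]
    return train, test

# Toy records: two catalysts per core metal (illustrative only).
metals = ["Pd", "Pd", "Ni", "Ni", "Ru", "Ru", "Ir", "Ir", "Cu", "Cu"]
records = [{"id": i, "metal": m} for i, m in enumerate(metals)]

train, test = group_split(records, "metal")
```

Because the held-out metal never appears during training, the test error estimates performance on a genuinely unseen catalyst family rather than on near-duplicates of training points.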

Q4: Are there standardized benchmark datasets for evaluating descriptor performance in catalysis? A: Yes, several have emerged. Common benchmarks include the CatApp dataset for surface adsorption energies, the QM9 dataset for organic molecule properties (as a proxy for ligand space), and Open Catalyst Project datasets for reaction energies on surfaces. Using these allows for direct comparison with published literature.

Data Presentation

Table 1: Comparative Analysis of Descriptor Families for Catalytic Turnover Frequency (TOF) Prediction

Example data based on a hypothetical ligand-screening study for a hydrogenation reaction.

| Descriptor Family | Avg. Calc. Time per Compound (s) | Mean Absolute Error (MAE) [log(TOF)] | Required Input Data | Best Use Case |
|---|---|---|---|---|
| Compositional (e.g., Stoichiometry) | < 0.01 | 1.85 | Chemical Formula | Ultra-fast preliminary filtering of implausible elements. |
| 1D & 2D Molecular (e.g., RDKit Fingerprints) | 0.1 - 0.5 | 0.92 | 2D Molecular Structure | High-throughput virtual screening of ligand libraries. |
| 3D Geometric (e.g., SOAP, Coulomb Matrix) | 2 - 10 | 0.65 | Relaxed 3D Geometry (FF) | Structure-activity modeling where shape is key. |
| Electronic (e.g., DFT-derived Charges) | 300 - 1000+ | 0.41 | DFT-Optimized Geometry & Electronic Structure | High-fidelity prediction for lead optimization. |

Experimental Protocols

Protocol 1: Benchmarking Descriptor Performance for a Regression Task

Objective: To evaluate the accuracy/computational cost trade-off for different descriptor families in predicting catalyst activity.

Materials: See "The Scientist's Toolkit" below.

Method:

- Dataset Curation: Assemble a consistent dataset of catalyst structures and corresponding target property (e.g., adsorption energy, reaction barrier).

- Descriptor Calculation: For each catalyst in the dataset, calculate descriptors using the specified software/packages for each family (Compositional, 2D, 3D, Electronic). Record the precise wall-clock time for each calculation.

- Model Training & Validation: For each descriptor set:

  a. Split data into training (70%), validation (15%), and test (15%) sets using a scaffold split based on catalyst core.

  b. Train a standardized model (e.g., a Random Forest or a Kernel Ridge Regression model) on the training set. Tune hyperparameters on the validation set.

  c. Evaluate the final model on the held-out test set. Record performance metrics (MAE, R²).

- Analysis: Plot the test set MAE (y-axis) against the average descriptor calculation time (x-axis) on a log-log scale to visualize the Pareto front.

Protocol 2: Implementing a Multi-Fidelity Screening Workflow

Objective: To efficiently screen a large catalyst library by strategically applying high-cost descriptors only to promising candidates.

Method:

- First-Pass Filter: Calculate low-cost descriptors (e.g., 2D fingerprints) for the entire virtual library (e.g., 1M compounds). Train a fast model or apply simple heuristic rules to filter down to a shortlist (e.g., 10k compounds).

- Second-Pass Evaluation: For the shortlist, calculate medium-cost descriptors (e.g., 3D geometric descriptors from force-field geometries). Train a more accurate model to rank candidates, selecting a few hundred top performers.

- Final Validation: For the top candidates, perform high-fidelity descriptor calculation (e.g., DFT-based features) and prediction using the most accurate, pre-trained model. Output the final ranked list of 10-50 candidates for experimental validation. (See the Multi-Fidelity Screening Workflow Diagram).
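
The three passes above amount to repeatedly scoring a shortlist and keeping the top fraction. The sketch below shows that control flow on a toy library; the scoring functions are stand-ins (integer division of a made-up `quality` value at decreasing coarseness), and `screening_funnel` and `make_scorer` are hypothetical names. The `calls` counter makes the cost saving visible: the most expensive scorer runs on 50 candidates instead of 1,000.

```python
def screening_funnel(library, stages):
    """Apply (score_fn, keep_n) stages in order; higher score is better."""
    shortlist = list(library)
    for score_fn, keep_n in stages:
        shortlist = sorted(shortlist, key=score_fn, reverse=True)[:keep_n]
    return shortlist

# Count how often each (stand-in) descriptor model is evaluated.
calls = {"cheap": 0, "medium": 0, "accurate": 0}

def make_scorer(name, resolution):
    """Build a toy scorer; a coarser resolution mimics a cheaper descriptor."""
    def score(candidate):
        calls[name] += 1
        return candidate["quality"] // resolution
    return score

# Toy library of 1,000 candidates with a made-up quality value.
library = [{"id": i, "quality": i} for i in range(1000)]
stages = [
    (make_scorer("cheap", 100), 200),    # first-pass filter
    (make_scorer("medium", 10), 50),     # second-pass evaluation
    (make_scorer("accurate", 1), 10),    # final validation
]
final = screening_funnel(library, stages)
```

The funnel recovers the true top ten here only because the cheap scores are coarse but never misleading; with noisy low-cost descriptors, the keep counts at the early stages need to be generous enough to avoid discarding good candidates.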

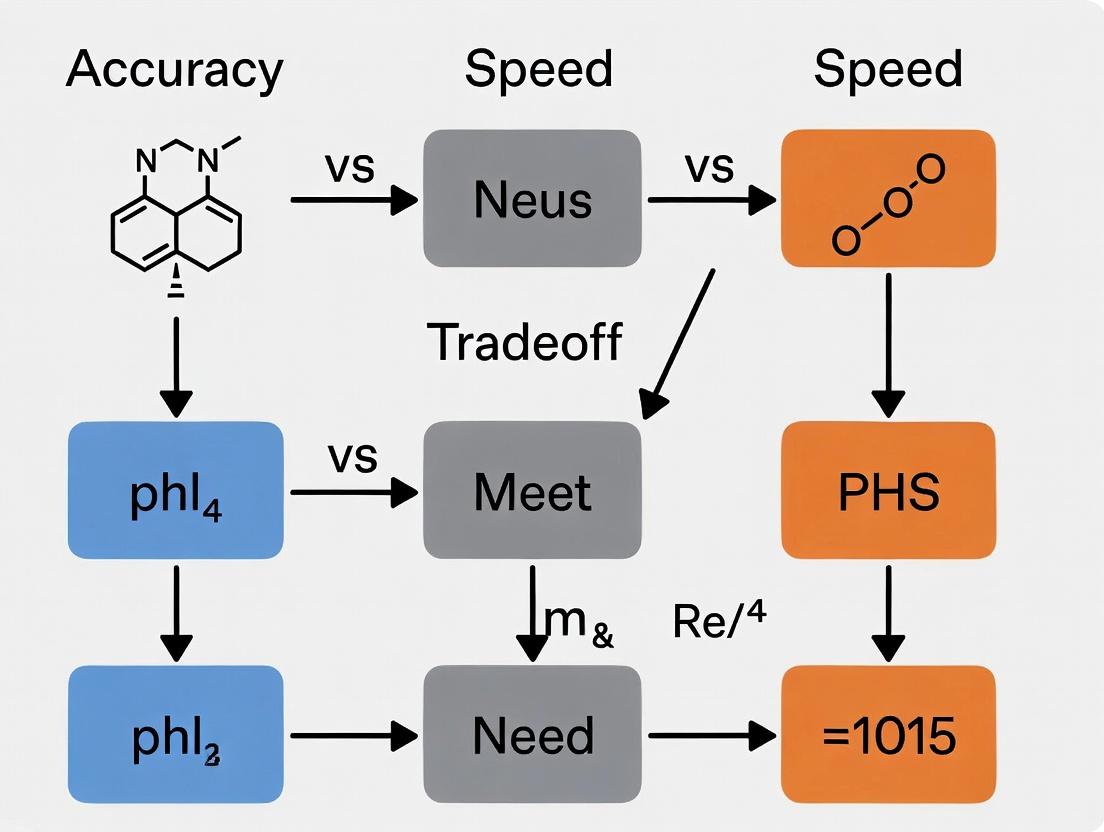

Visualizations

Title: Multi-Fidelity Catalyst Screening Workflow

Title: Logical Flow of the Descriptor Complexity Trade-Off

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in Descriptor-Based Catalyst Research |
|---|---|
| RDKit | Open-source cheminformatics toolkit for generating 2D molecular descriptors, fingerprints, and basic 3D conformers. Essential for high-throughput ligand screening. |
| Dragon | Commercial software offering a very large and diverse set of molecular descriptors (5000+), useful for comprehensive feature space exploration. |
| DScribe / librascal | Python libraries specifically designed for creating atomistic structure descriptors like SOAP, ACSF, and MBTR, crucial for 3D geometric modeling. |
| Atomic Simulation Environment (ASE) | Python framework for setting up, running, and analyzing electronic structure calculations, which are the source of highest-fidelity descriptors. |
| Quantum ESPRESSO / VASP | Electronic structure calculation software (DFT) used to compute ground-state energies, electron densities, and other quantum-mechanical properties that serve as input for complex descriptors. |
| OCP Datasets | Large-scale, curated datasets (e.g., OC20) of catalyst structures and properties, providing essential benchmarks for training and testing models. |
| scikit-learn | The fundamental Python library for machine learning model training, hyperparameter tuning, and validation (train/test splits, cross-validation). |

Technical Support & Troubleshooting Center

This support center addresses common issues encountered in computational drug and catalyst development, framed within the research context of optimizing the accuracy vs. speed tradeoff in catalyst descriptor models.

FAQs & Troubleshooting Guides

Q1: During virtual screening, my molecular docking run yields an excessively high number of false-positive hits with unrealistic binding affinities. What could be the cause? A: This is often a scoring function issue. Many fast scoring functions (e.g., empirical, force-field-based) prioritize speed over accuracy. To troubleshoot:

- Verify Parameterization: Ensure the scoring function parameters are appropriate for your target class (e.g., GPCR vs. kinase).

- Post-Processing: Apply a consensus scoring approach. Use 2-3 different scoring functions and only retain hits ranked highly by all.

- Solvent & Entropy: Check if your protocol implicitly or explicitly accounts for solvent effects and entropic contributions. Their omission speeds up calculations but cripples accuracy.

- Experimental Protocol: Implement a re-docking and cross-docking validation. Re-dock a known co-crystallized ligand to validate pose reproduction (RMSD < 2.0 Å). Cross-docking tests with multiple protein conformations assess model robustness.
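
Consensus scoring reduces to intersecting the top-ranked sets from each scoring function. The sketch below assumes the docking convention that a lower (more negative) score is better; the function name `consensus_hits`, the compound IDs, and all scores are made up for illustration and do not come from any real docking run.

```python
def consensus_hits(score_tables, top_n):
    """Return compounds ranked in the top_n by every scoring function.

    Each table maps compound ID -> score, where lower means better.
    """
    keep = None
    for scores in score_tables:
        top = set(sorted(scores, key=scores.get)[:top_n])
        keep = top if keep is None else keep & top
    return keep if keep is not None else set()

# Made-up scores from three hypothetical scoring functions.
fast_docking = {"c0": -9.0, "c1": -8.5, "c2": -8.0, "c3": -6.0, "c4": -5.0, "c5": -4.0}
empirical = {"c0": -7.0, "c1": -3.0, "c2": -7.5, "c3": -8.0, "c4": -2.0, "c5": -1.0}
force_field = {"c0": -50.0, "c1": -20.0, "c2": -45.0, "c3": -10.0, "c4": -15.0, "c5": -60.0}

hits = consensus_hits([fast_docking, empirical, force_field], top_n=3)
```

Ranks are compared rather than raw scores because different scoring functions report values on incompatible scales.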

Q2: My machine learning model for reaction yield prediction performs well on training data but fails on new substrate scopes. How can I improve its generalizability? A: This indicates overfitting, often due to descriptor choice in the speed-accuracy tradeoff.

- Descriptor Audit: Simple, fast descriptors (e.g., count-based fingerprints) may lack chemical nuance. Test more informative, but computationally heavier, descriptors (e.g., quantum mechanical partial charges, steric maps).

- Data Scope: Ensure your training set encompasses a broad and representative chemical space. Use clustering algorithms on descriptors to identify underrepresented regions.

- Regularization: Increase regularization hyperparameters (L1/L2) to penalize model complexity.

- Experimental Protocol: Employ a rigorous train-validation-test split stratified by key reaction components. Implement k-fold cross-validation and monitor learning curves for signs of overfitting.

Q3: When using catalyst descriptors for high-throughput design, the computational cost of generating the descriptors becomes the bottleneck. How can I speed this up? A: This is the core speed-accuracy tradeoff. Solutions involve pre-computation or model simplification.

- Descriptor Tiering: Implement a multi-stage screening funnel. Use ultra-fast, low-resolution descriptors (e.g., elemental properties) for initial filtering, then apply accurate, slow descriptors (e.g., DFT-derived steric/electronic maps) only to shortlisted candidates.

- Look-Up Databases: Use pre-computed descriptor databases (e.g., for common ligand fragments) instead of calculating on-the-fly.

- Surrogate Models: Train a fast machine learning model (like a neural network) to predict high-accuracy descriptor values from low-cost inputs.

- Hardware/Software: Utilize GPU-accelerated quantum chemistry software (e.g., GPU-enabled DFT) and parallelize computations across clusters.

Q4: My cheminformatics pipeline for library enumeration is failing due to invalid chemical structures or valency errors. Where should I check? A: This is typically a rule-based issue in the reaction SMARTS or enumeration engine.

- Validate Reaction SMARTS: Parse the pattern with RDKit's ReactionFromSmarts function (set useSmiles=True only if the templates are written as SMILES rather than SMARTS), and test the transformation on a small set of known substrates.

- Sanitization Steps: Ensure your workflow includes chemical sanitization steps (e.g., RDKit's SanitizeMol) post-enumeration to catch valency errors.

- Error Logging: Implement structured error logging to capture and analyze failing substructures, which often reveal problematic SMARTS patterns.

Q5: The predicted optimal catalyst from my design algorithm performs poorly in the actual lab experiment. What are the systematic reconciliation steps? A: This discrepancy highlights the gap between in silico models and real-world complexity.

- Model Feedback Loop: Ensure your model incorporates not just intrinsic activity descriptors, but also stability and decomposition descriptors under reaction conditions.

- Condition Parameters: Verify that your computational model's assumptions (temperature, solvent, concentration) match the experimental conditions. Small changes can drastically alter the rate-determining step.

- Experimental Protocol:

- Control Experiment: Re-run the reaction with a known, reliable catalyst to confirm experimental setup fidelity.

- Characterization: Perform post-reaction analysis (e.g., NMR, MS) on the reaction mixture to detect catalyst decomposition or unknown poisoning species.

- Microkinetic Modeling: Incorporate the proposed catalyst into a microkinetic model that includes side reactions to predict practical, not just theoretical, performance.

Quantitative Data: Accuracy vs. Speed in Descriptor Models

Table 1: Comparison of Common Catalyst/Molecular Descriptors

| Descriptor Type | Example(s) | Relative Speed (Arb. Units) | Typical Use Case | Key Limitation |
|---|---|---|---|---|
| 1D/Count-Based | Molecular Weight, Atom Counts, Crippen LogP | 1000 (Fastest) | High-throughput initial filtering | Lacks stereochemical & 3D information. |
| 2D/Topological | Morgan Fingerprints (ECFP), Path-based Fingerprints | 100 | Similarity search, QSAR, initial VS | Cannot distinguish conformers or stereoisomers. |
| 3D/Geometric | Pharmacophore Features, Steric Bulk Parameters (e.g., Tolman Cone Angle) | 10 | Docking, Conformation-sensitive tasks | Dependent on input conformation; slower generation. |
| Quantum Mechanical (QM) | DFT-derived Charges (NBO), Frontier Orbital Energies (HOMO/LUMO), Steric Maps (%Vbur) | 1 (Slowest) | Catalyst design, Mechanism study, High-accuracy scoring | Computationally expensive; not for ultra-large libraries. |

Table 2: Performance Tradeoffs in Virtual Screening Methodologies

| Screening Method | Approx. Compounds/Day* | Avg. Enrichment Factor (EF1%) | Typical Scenario |
|---|---|---|---|
| Ligand-Based Similarity (e.g., Tanimoto on ECFP4) | 1,000,000+ | 5 - 15 | When active reference ligands are known. Prioritizes speed. |
| Structure-Based Docking (Fast Scoring, e.g., Vina) | 100,000 - 500,000 | 8 - 20 | When a protein structure is available. Balanced approach. |
| Structure-Based Docking (Precise Scoring, e.g., MM/GBSA) | 100 - 1,000 | 15 - 30+ | For final lead optimization on a small, focused library. Prioritizes accuracy. |
| AI/ML-Based Prediction (Pre-trained model) | 1,000,000+ | Variable; can be very high | When high-quality, large training sets exist for the target. |

* Throughput estimates depend heavily on hardware and software implementation.

Experimental Protocols

Protocol 1: Validating a Virtual Screening Workflow (Target: Kinase Inhibitor Discovery)

- Data Curation: Compile a benchmark dataset (e.g., DUD-E) containing known actives and decoys for a specific kinase target.

- Protein Preparation: From a crystal structure (PDB), remove water, add hydrogens, assign protonation states, and generate receptor grids.

- Screening: Run the entire dataset through the docking software (e.g., Glide SP mode for speed, XP for accuracy).

- Analysis: Calculate the Enrichment Factor (EF) at 1% of the screened database and plot the Receiver Operating Characteristic (ROC) curve.

- Tradeoff Analysis: Repeat steps 3-4 with a faster, less precise scoring function and a slower, more precise one. Compare computational time vs. EF/ROC AUC.
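
The enrichment factor in the analysis step is the hit rate among the top-ranked fraction divided by the hit rate of the whole database. The sketch below assumes lower docking scores are better; the function name `enrichment_factor` and the benchmark (1,000 compounds, 20 actives, 4 of them in the top 10) are invented purely to make the arithmetic checkable.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """EF at the given fraction; labels are 1 for actives, 0 for decoys."""
    n = len(scores)
    n_top = max(1, int(round(n * fraction)))
    order = sorted(range(n), key=lambda i: scores[i])  # best (lowest) first
    hits_top = sum(labels[i] for i in order[:n_top])
    total_actives = sum(labels)
    return (hits_top / n_top) / (total_actives / n)

# Invented benchmark: compound i has score i, so index order is rank order.
scores = [float(i) for i in range(1000)]
active_ids = {0, 1, 2, 3} | {100 + 50 * k for k in range(16)}
labels = [1 if i in active_ids else 0 for i in range(1000)]

ef1 = enrichment_factor(scores, labels, fraction=0.01)  # (4/10) / (20/1000)
```

Repeating this calculation for the fast and the precise scoring function, alongside the measured wall time of each run, gives the two axes needed for the trade-off comparison.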

Protocol 2: Training a Reaction Yield Prediction Model

- Dataset Assembly: Gather a consistent dataset from literature (e.g., Buchwald-Hartwig couplings) with SMILES for reactants, catalyst, ligand, and reported yield.

- Descriptor Calculation: Compute molecular descriptors for all components. Balance choice: fast fingerprints vs. QM descriptors.

- Feature Engineering: Create a combined feature vector for each reaction. May include categorical one-hot encoding for solvent/base.

- Model Training: Split data (80/10/10 train/validation/test). Train a model (e.g., Random Forest, Gradient Boosting, or Neural Net). Use validation set for hyperparameter tuning.

- Validation: Evaluate on the held-out test set. Report key metrics: Mean Absolute Error (MAE), R². Critically, analyze failure cases for chemical insights.

Diagrams

Title: Decision Funnel for Catalyst Design: Balancing Speed and Accuracy

Title: Virtual Screening & Experimental Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational & Experimental Resources

| Item/Reagent | Function/Role in Development |
|---|---|
| RDKit | Open-source cheminformatics toolkit for molecule manipulation, descriptor calculation, and fingerprint generation. Essential for preprocessing. |
| Schrödinger Suite, AutoDock Vina, GOLD | Docking software for predicting ligand binding modes and affinities to target proteins. Core of structure-based screening. |
| Gaussian, ORCA, PySCF | Quantum chemistry software for computing high-accuracy electronic structure descriptors (e.g., orbital energies, electrostatic potentials). |
| Catalyst Library (e.g., BIDP, MCF) | Commercially available, diverse sets of phosphine/ligand structures for high-throughput experimentation (HTE) in catalysis. |
| HEPES or TRIS Buffer | Common biological buffers for maintaining pH in enzymatic assays during validation of virtual screening hits. |
| LC-MS with ELSD/CAD | Liquid Chromatography-Mass Spectrometry with Evaporative Light Scattering/Charged Aerosol Detection for reaction monitoring and yield determination without standard curves. |
| Multi-well Reaction Blocks | Hardware for parallel synthesis and catalyst testing, enabling rapid experimental validation of computational predictions. |

Technical Support Center: Troubleshooting & FAQs

Q1: My DFT-based descriptor calculation is taking over 72 hours per catalyst candidate. Is this expected, and how can I triage the issue? A: Yes, this is often expected for high-accuracy electronic structure descriptors (e.g., d-band center, formation energy). First, verify your computational parameters. High accuracy settings inherently increase time.

Triage Steps:

- Check Convergence Settings: Tightening convergence criteria (energy, force, electron density) for higher accuracy increases the number of SCF cycles and ionic steps, and therefore the wall time.

- Verify k-point Density: A denser k-mesh for Brillouin zone integration, critical for accuracy in periodic systems, increases calculation time roughly in proportion to the number of irreducible k-points, which grows as O(n³) for an n × n × n bulk mesh.

- Monitor Functional/Basis Set: Hybrid functionals (HSE06) or large basis sets (def2-TZVP) are more accurate but significantly slower than GGA-PBE or smaller sets.

Experimental Protocol for Baseline Timing:

- Objective: Establish a speed vs. accuracy baseline.

- Method:

- Select a standard test structure (e.g., fcc Pt(111) slab).

- Run single-point energy calculations with incrementally higher accuracy settings.

- Record wall time and key output descriptor values.

- Analysis: Plot accuracy (e.g., ΔE vs. converged value) against compute time. The curve will show a sharp increase in time for marginal gains beyond a threshold.

Q2: When using ML-potential accelerated descriptors, I get fast but unreliable results for novel alloy compositions. How do I debug this? A: This indicates an out-of-distribution (OOD) problem for the machine-learned potential (MLP) or descriptor model. High-accuracy descriptors require generalized, robust models, which are slower to train and evaluate.

Troubleshooting Guide:

- Check Training Domain: Compare the elemental composition and geometry of your novel alloy to the training data of the MLP. Large deviations mean OOD.

- Perform Uncertainty Quantification: Use models that provide uncertainty estimates (e.g., ensemble, Gaussian process). High uncertainty flags unreliable predictions.

- Validate with Sparse High-Accuracy Points: Re-calculate descriptors for 5-10% of your candidates using the slower, high-accuracy method (e.g., DFT) to validate MLP outputs.
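
Ensemble-based uncertainty quantification can be sketched as the spread of predictions across independently trained models. In the example below the prediction values, the alloy labels, and the 0.1 eV cutoff are all invented for illustration; `flag_uncertain` is a hypothetical helper, and the appropriate cutoff has to be calibrated against DFT spot checks for each project.

```python
from statistics import mean, pstdev

def flag_uncertain(ensemble_predictions, max_std):
    """Summarize ensemble predictions and flag candidates with a large spread.

    ensemble_predictions maps candidate -> list of per-model predictions (eV).
    """
    report = {}
    for name, preds in ensemble_predictions.items():
        spread = pstdev(preds)
        report[name] = {
            "mean": mean(preds),
            "std": spread,
            "needs_dft": spread > max_std,  # send to the slow, accurate method
        }
    return report

# Invented adsorption-energy predictions from a four-member ensemble.
predictions = {
    "in_domain_alloy": [-0.52, -0.50, -0.51, -0.49],
    "novel_alloy": [-0.10, -0.85, 0.30, -0.40],
}
report = flag_uncertain(predictions, max_std=0.1)
```

A small spread does not prove a prediction is correct, since all ensemble members can share the same blind spot, which is why sparse validation against the high-accuracy method remains part of the workflow.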

Q3: My high-throughput screening pipeline is bottlenecked by the computation of charge-density-derived descriptors. Are there proven approximations? A: Yes, but all involve a controlled trade-off. The fundamental bottleneck is that accurate electron density resolution requires fine grids and expensive computations.

FAQs on Approximations:

| Approximation Method | Typical Speed Gain | Expected Accuracy Drop (Quantitative) | Best Use Case |
|---|---|---|---|
| Reduced DFT Precision (e.g., cut-off energy) | 2x - 5x | Formation energy error: ±0.05 eV/atom | Preliminary filtering of vast spaces (>10⁶ compounds) |
| Linear Scaling DFT | 10x - 100x (for large systems) | Band edge error: ±0.1 eV | Large nanostructures or complex interfaces |
| Semi-empirical Methods (e.g., GFN-xTB) | 100x - 1000x | Reaction barrier error: ±0.3 eV | Pre-optimization and molecular dynamics sampling |
| Low-fidelity ML Descriptors | 1000x+ | Compositional property error: ±10% variance | Extremely early-stage prioritization |

Protocol for Implementing Approximations:

- Define an acceptable error margin for your target property (e.g., ∆Predictive Error < 0.1 eV).

- Run a calibration set of 50-100 known materials with both high-accuracy and approximate methods.

- Compute the root-mean-square error (RMSE) and maximum absolute error. If errors are within margin, the approximation is valid for your specific study.
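
The acceptance test in the last step can be written as a small function that returns both error measures and a pass/fail flag. The sketch below uses four invented energy values in place of the 50-100 calibration materials the protocol calls for, and it assumes that "within margin" means both the RMSE and the maximum absolute error stay at or below the margin; `check_approximation` is a hypothetical name.

```python
import math

def check_approximation(reference, approximate, margin):
    """Return (rmse, max_abs_error, is_valid) for paired values in eV."""
    errors = [a - r for a, r in zip(approximate, reference)]
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    max_abs = max(abs(e) for e in errors)
    # Assumption: both error measures must respect the margin.
    return rmse, max_abs, (rmse <= margin and max_abs <= margin)

# Invented calibration values (eV): high-accuracy vs. approximate method.
reference = [-1.20, -0.85, -0.40, 0.10]
approximate = [-1.15, -0.90, -0.48, 0.12]

rmse, max_abs, is_valid = check_approximation(reference, approximate, margin=0.1)
```

Checking the maximum error alongside the RMSE matters for screening, because a single badly approximated candidate can be wrongly promoted or discarded even when the average error looks acceptable.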

Supporting Visualizations

Title: The High-Accuracy Descriptor Computation Bottleneck

Title: Multi-Tier Screening Workflow to Manage Bottleneck

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in Descriptor Computation | Notes on Speed/Accuracy Trade-off |
|---|---|---|
| VASP / Quantum ESPRESSO | First-principles DFT software for calculating electronic-structure descriptors. | Higher accuracy settings (INCAR, .in) directly increase compute time. Essential for final-tier validation. |
| GPUMD / LAMMPS (with ML Potentials) | Molecular dynamics with ML potentials for rapid structural sampling and descriptor extraction. | Speed gain is massive (1000x), but accuracy is limited by the potential's training domain. |
| DScribe / ASAP | Python libraries for generating atomic-structure descriptors (e.g., SOAP, ACSF). | Very fast, but descriptors may lack explicit electronic information critical for catalysis. |
| CATLAS Database | Pre-computed materials database containing descriptors for known and hypothetical compounds. | Eliminates calculation time entirely for included materials, but cannot be used for truly novel systems. |
| SISSO | Software for identifying compact, physically interpretable descriptors from large feature spaces. | Reduces subsequent evaluation time, but the initial feature calculation and SISSO search are computationally intensive. |

Building Efficient Models: Methodologies for Balancing Descriptor Performance in Practice

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions

Q1: In a multi-stage catalyst screening workflow, my initial fast descriptor filter eliminates all candidates, leaving no compounds for the accurate model. What could be wrong? A: This is often caused by an excessively stringent threshold on the fast descriptor. The fast stage is designed for high recall, not high precision.

- Troubleshooting Steps:

- Check Threshold Value: Compare your threshold against values reported in literature for similar descriptors (see Table 1). Temporarily disable the threshold and examine the distribution of fast descriptor scores for your library.

- Verify Descriptor Calculation: Ensure the fast descriptor is being computed correctly for all compounds. A systematic error (e.g., failed conformational analysis) could yield non-physical values.

- Calibrate with a Hold-Out Set: Use a small, known set of active/inactive catalysts to calibrate the cutoff. The threshold should pass >95% of known actives in this set.
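The hold-out calibration step can be sketched as follows. This is a minimal illustration, assuming that a higher fast-descriptor score means a more promising candidate and that a compound passes when its score is at or above the cutoff; the function names and scores are hypothetical.

```python
import math

def calibrate_threshold(active_scores, target_recall=0.95):
    """Largest cutoff that still passes at least target_recall of known actives."""
    if not active_scores:
        raise ValueError("need at least one known active")
    ranked = sorted(active_scores, reverse=True)
    # Number of actives that must pass; the epsilon guards against float round-up.
    n_keep = max(1, math.ceil(target_recall * len(ranked) - 1e-9))
    return ranked[n_keep - 1]

def recall_at(threshold, active_scores):
    """Fraction of known actives with score >= threshold."""
    return sum(s >= threshold for s in active_scores) / len(active_scores)

# Illustrative fast-descriptor scores for ten known actives (not real data).
known_actives = [0.90, 0.80, 0.75, 0.70, 0.65, 0.60, 0.55, 0.50, 0.45, 0.20]
t1 = calibrate_threshold(known_actives, target_recall=0.90)
print(t1, recall_at(t1, known_actives))
```

If lower scores are better for your descriptor, negate the scores before calibrating.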

Q2: The computational cost of my hierarchical workflow is higher than expected, negating the speed benefits. How can I optimize it? A: This indicates inefficiency in the workflow orchestration or descriptor cost profiling.

- Troubleshooting Steps:

- Profile Each Stage: Measure the exact CPU time for the fast descriptor (FD) and accurate descriptor (AD) calculation per molecule. Your FD should be at least 100x, and ideally 1000x, faster than your AD for the workflow to be beneficial.

- Implement Batch Processing: Ensure the slower AD stage is only run on large, batched subsets from the fast filter to minimize overhead.

- Review Data Transfer: Check for unnecessary I/O or data reformatting between stages. The workflow should pass only compound identifiers and minimal critical data.

Q3: The final prediction accuracy of my multi-stage model is worse than using the accurate descriptor alone on the entire library. Why does this happen? A: This suggests the fast descriptor is filtering out compounds that the accurate descriptor would correctly identify as active ("false negatives" at the filter stage).

- Troubleshooting Steps:

- Analyze Filter Errors: Run the accurate descriptor on a random sample of compounds rejected by the fast filter. If any are true positives, your filter is too aggressive.

- Assess Descriptor Orthogonality: The fast and accurate descriptors should capture complementary chemical information. Calculate correlation metrics between them; high correlation may mean the filter adds no value, while very low correlation may mean it misses key features. Aim for moderate correlation.

- Consider a Two-Stage ML Model: Instead of a hard filter, use the fast descriptor as a feature in a preliminary ML classifier trained to maximize recall.

Q4: How do I choose the optimal number of stages in a hierarchical workflow for catalyst discovery? A: The optimal number balances marginal screening cost against marginal improvement in accuracy.

- Methodology:

- Start with a clear profile of descriptor costs (time, resources) and accuracies (see Table 1).

- Construct a cost-accuracy curve. Add stages only if the reduction in compounds for the next, costlier stage saves more time than the cost of running the additional filter.

- Typically, 2-3 stages are optimal. A common pattern is: Stage 1: Ultra-fast 2D fingerprint or count-based filter (e.g., MACCS keys, MolWt, logP). Stage 2: Moderate-cost 3D or electronic descriptor (e.g., DFTB partial charges, Crippen logP). Stage 3: High-accuracy, expensive method (e.g., full DFT reaction energy, DLPNO-CCSD(T)).
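The stage-count reasoning above reduces to simple arithmetic. The sketch below assumes a per-compound cost for each stage and a fixed pass fraction between stages; all numbers are illustrative and the function names are hypothetical. A filter pays for itself when its cost per compound is less than the cost it saves downstream, i.e. `cost_filter < (1 - pass_fraction) * cost_next`.

```python
def funnel_cost(n_compounds, stage_costs, pass_fractions):
    """Total CPU seconds; stage i runs only on survivors of the earlier stages."""
    if len(pass_fractions) != len(stage_costs) - 1:
        raise ValueError("need one pass fraction between each pair of stages")
    total, remaining = 0.0, float(n_compounds)
    for i, cost in enumerate(stage_costs):
        total += remaining * cost
        if i < len(pass_fractions):
            remaining *= pass_fractions[i]
    return total

def filter_is_worthwhile(cost_filter, cost_next, pass_fraction):
    """True if inserting the filter before the next stage lowers total cost."""
    return cost_filter < (1.0 - pass_fraction) * cost_next

# Illustrative per-molecule costs in CPU seconds (not measured values).
n = 100_000
one_stage = funnel_cost(n, [300.0], [])
two_stage = funnel_cost(n, [0.01, 300.0], [0.2])
three_stage = funnel_cost(n, [0.01, 5.0, 300.0], [0.2, 0.1])
print(one_stage, two_stage, three_stage)
```

This cost model ignores scheduling and I/O overhead, which the profiling step in Q2 would need to measure separately.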

Key Experimental Protocols Cited

Protocol 1: Benchmarking Descriptor Speed-Accuracy Trade-off

- Compound Library: Curate a diverse set of 1000-5000 catalyst candidates with known experimental activity labels (e.g., TOF, yield).

- Descriptor Calculation: For each candidate, compute a panel of N descriptors (D1...DN) ranging from fast (e.g., Mordred 2D) to slow (e.g., DFT-derived).

- Timing: Record wall-clock time for each descriptor calculation per molecule, performed on a standardized computing node.

- Accuracy Assessment: For each descriptor, train a simple model (e.g., Random Forest) using 5-fold cross-validation. Use ROC-AUC as the primary accuracy metric.

- Analysis: Plot Accuracy (ROC-AUC) vs. Log(Speed) for all descriptors to establish the Pareto frontier.
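Identifying the Pareto frontier in the final step does not require a plot. A minimal sketch, assuming each descriptor is summarized by its time per molecule and its cross-validated ROC-AUC; the descriptor names and numbers are illustrative, not benchmark results.

```python
def pareto_frontier(points):
    """points: list of (name, seconds_per_molecule, roc_auc).

    A descriptor is on the frontier if no other descriptor is at least as
    fast and at least as accurate while being strictly better in one of them.
    """
    frontier = []
    for name, t, auc in points:
        dominated = any(
            (t2 <= t and auc2 >= auc) and (t2 < t or auc2 > auc)
            for _, t2, auc2 in points
        )
        if not dominated:
            frontier.append(name)
    return frontier

# Illustrative benchmark summary (hypothetical values).
benchmark = [
    ("2D_fingerprint", 0.01, 0.65),
    ("xTB_energy", 5.0, 0.72),
    ("slow_but_weak", 50.0, 0.70),
    ("DFT_single_point", 200.0, 0.82),
]
print(pareto_frontier(benchmark))
```

Descriptors off the frontier (here `slow_but_weak`) can be dropped from further consideration, since a faster and more accurate alternative exists.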

Protocol 2: Implementing and Validating a Two-Stage Screening Funnel

- Workflow Setup: Script a pipeline where Stage 1 uses a fast descriptor (FD) with a threshold (T1) to filter a virtual library (VL). Stage 2 applies an accurate descriptor/model (AD) only to the subset that passes T1.

- Threshold Calibration: On a validation set, adjust T1 to achieve a target recall (e.g., 95-99%) of known active catalysts.

- Performance Evaluation: Measure the total compute time and final predictive accuracy (e.g., precision at top 5%) of the hierarchical workflow on a held-out test set.

- Control Experiment: Run the AD on the entire VL. Compare total time and accuracy to the hierarchical approach to calculate speedup factor and any accuracy cost.

Data Presentation

Table 1: Comparative Profile of Common Catalysis Descriptors in Hierarchical Workflows

| Descriptor Class | Example Descriptors | Avg. Time per Molecule (CPU sec)* | Typical Accuracy (ROC-AUC)† | Suggested Workflow Stage |
|---|---|---|---|---|
| Ultra-Fast 2D | MACCS Keys, RDKit 2D Descriptors, Molecular Weight | < 0.01 | 0.55 - 0.70 | Initial Bulk Filter (Stage 1) |
| Fast 3D/DFTB | GFNn-xTB Energy, RMSD to Template, Crippen LogP | 0.1 - 10 | 0.65 - 0.78 | Secondary Filter (Stage 1/2) |
| Moderate-Cost QM | PM7, DFT (B97-3c, r²SCAN-3c) Single Point | 30 - 300 | 0.75 - 0.85 | Primary Scoring (Stage 2) |
| High-Accuracy QM | DLPNO-CCSD(T), DFT with Implicit Solvation, NEB TS Search | 500 - 10⁵ | 0.80 - 0.95 | Final Evaluation (Stage 3) |

*Benchmarked on a single CPU core for a molecule with ~50 atoms. †Accuracy is project-dependent; ranges are illustrative for catalysis property prediction.

Visualizations

Title: Three-Stage Hierarchical Screening Funnel for Catalyst Discovery

Title: Descriptor Hierarchy for Speed-Accuracy Trade-off

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Computational Tools for Hierarchical Catalyst Modeling

| Tool / Reagent | Function in Workflow | Example / Note |
|---|---|---|
| RDKit / Mordred | Generates ultra-fast 2D molecular descriptors and fingerprints for initial screening. | Open-source. Calculates 1800+ 2D descriptors in milliseconds. |
| xtb (GFNn-xTB) | Provides fast, semi-empirical quantum mechanical properties (geometry, energy, charges) for 10k-100k compounds. | Key for Stage 2 filtering. GFN2-xTB offers good speed/accuracy balance. |
| ASE (Atomic Simulation Environment) | Manages workflow, connects descriptor calculators, and handles molecular I/O between stages. | Python framework essential for scripting the multi-stage pipeline. |
| Psi4 / ORCA | Performs higher-accuracy DFT or wavefunction calculations for the final evaluation stage. | Used on the <1% of compounds that pass initial filters. |
| scikit-learn / LightGBM | Builds machine learning models that use hierarchical descriptors as features for activity prediction. | Enables non-linear combination of fast and accurate descriptor data. |
| SLURM / Nextflow | Manages job scheduling and computational resource allocation across different workflow stages. | Critical for running large-scale, heterogeneous computational campaigns. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My surrogate model trained on approximate descriptors shows a >15% drop in validation accuracy compared to the high-fidelity model. What are the primary factors to investigate? A: Investigate the following in order:

- Descriptor Fidelity: Quantify both the mean squared error (MSE) and the correlation coefficient between your approximate and high-fidelity descriptors. A correlation coefficient below 0.8 often leads to significant downstream accuracy loss.

- Non-Linearity Mapping: The relationship between approximation error and model error is non-linear. Small descriptor errors in critical catalytic regions (e.g., adsorption site geometry) can be magnified.

- Data Alignment: Ensure the training data for the surrogate covers the same chemical space as the target application. Use Principal Component Analysis (PCA) to visualize descriptor space coverage.
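The descriptor-fidelity check in the first step can be sketched per descriptor column. This is a minimal NumPy illustration on synthetic data, assuming both descriptor sets are stored as matrices with one row per structure and matching columns, and that no column is constant; the function name is hypothetical.

```python
import numpy as np

def descriptor_fidelity(high_fidelity, approximate):
    """Per-column MSE and Pearson correlation between two descriptor matrices."""
    high = np.asarray(high_fidelity, dtype=float)
    approx = np.asarray(approximate, dtype=float)
    mse = np.mean((approx - high) ** 2, axis=0)
    hc = high - high.mean(axis=0)
    ac = approx - approx.mean(axis=0)
    pearson = (hc * ac).sum(axis=0) / np.sqrt(
        (hc ** 2).sum(axis=0) * (ac ** 2).sum(axis=0)
    )
    return mse, pearson

# Synthetic example: three descriptors with increasing approximation noise.
rng = np.random.default_rng(0)
high = rng.normal(size=(200, 3))
approx = high + rng.normal(size=(200, 3)) * np.array([0.1, 0.5, 2.0])
mse, pearson = descriptor_fidelity(high, approx)

# Flag columns below the 0.8 correlation guideline quoted in the text.
low_fidelity_columns = [j for j, r in enumerate(pearson) if r < 0.8]
print(mse.round(3), pearson.round(3), low_fidelity_columns)
```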

Q2: During hyperparameter optimization for the surrogate model, what metrics should I prioritize to balance the accuracy-speed tradeoff? A: Use a multi-objective metric. Track and weight the following:

| Metric | Target for Catalyst Screening | Rationale |
|---|---|---|
| Inference Speed (ms/prediction) | < 50 ms | Enables high-throughput virtual screening. |
| Mean Absolute Error (MAE) vs. High-Fidelity Model | < 0.15 eV (for adsorption energies) | Maintains predictive utility for activity trends. |
| Spearman's Rank Correlation (ρ) | > 0.90 | Critical for correctly prioritizing candidate catalysts. |
| Model Size (MB) | < 500 MB | Facilitates deployment on edge or limited-resource systems. |

Q3: I am getting inconsistent results when switching between graph-based (e.g., GNN) and vector-based (e.g., RF, NN) surrogate models. Which is more suitable for approximate descriptors? A: The choice depends on the type of approximation:

- Use Graph-Based Models (GNNs) when your approximation simplifies the computational method (e.g., DFTB vs. DFT) but retains full structural information. GNNs can learn to compensate for systematic electronic structure errors.

- Use Vector-Based Models (RF, DNN) when your approximation also reduces structural complexity (e.g., using Coulomb Matrices instead of 3D electron density). They perform better on fixed-length, simplified representations.

Experimental Protocol for Benchmarking Descriptor Approximations:

- Dataset Curation: Select a benchmark set of 500-1000 catalytic structures (e.g., from CatApp, OC20).

- Descriptor Generation:

- Compute high-fidelity descriptors (e.g., Projected Density of States, Bader charges) using DFT (high accuracy, slow).

- Compute approximate descriptors (e.g., Orbital Field Matrix, Simplified SOAP) using semi-empirical methods or heuristics (lower accuracy, fast).

- Surrogate Model Training: Train identical model architectures (e.g., a 3-layer MLP) on two datasets: (A) high-fidelity descriptors, (B) approximate descriptors.

- Evaluation: Validate on a hold-out set against DFT-calculated target properties (e.g., formation energy, adsorption energy). Measure MAE, RMSE, and inference time.

Q4: How can I diagnose if my approximate descriptors are losing critical chemical information? A: Perform a Descriptor Sensitivity Analysis.

- Perturb the input molecular geometry along known catalytic reaction coordinates (e.g., bond stretching at the active site).

- Track the change in both high-fidelity and approximate descriptor vectors.

- Compute the Jacobian matrix for each. A significant reduction in the norm of the Jacobian for approximate descriptors indicates loss of sensitivity to chemically important degrees of freedom.
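The sensitivity analysis can be approximated with central finite differences when analytic derivatives are unavailable. The sketch below uses two toy descriptor functions as hypothetical stand-ins for real high-fidelity and approximate descriptor calculators; only the Jacobian routine is meant to carry over.

```python
import numpy as np

def finite_difference_jacobian(descriptor_fn, x, step=1e-4):
    """Central-difference Jacobian d(descriptor)/d(coordinate) at point x."""
    x = np.asarray(x, dtype=float)
    f0 = np.asarray(descriptor_fn(x), dtype=float)
    jac = np.zeros((f0.size, x.size))
    for j in range(x.size):
        dx = np.zeros_like(x)
        dx[j] = step
        f_plus = np.asarray(descriptor_fn(x + dx), dtype=float)
        f_minus = np.asarray(descriptor_fn(x - dx), dtype=float)
        jac[:, j] = (f_plus - f_minus) / (2.0 * step)
    return jac

# Toy stand-ins (hypothetical): x = [bond length, angle] at the active site.
def high_fidelity(x):
    return np.array([np.exp(-x[0]), x[0] * x[1]])

def approximate(x):
    # Ignores the coupling between bond length and angle.
    return np.array([np.exp(-x[0]), x[1]])

x0 = np.array([1.0, 2.0])
jac_high = finite_difference_jacobian(high_fidelity, x0)
jac_approx = finite_difference_jacobian(approximate, x0)
ratio = np.linalg.norm(jac_approx) / np.linalg.norm(jac_high)
print(f"Jacobian norm ratio (approx / high-fidelity): {ratio:.2f}")
```

A ratio well below 1 along the reaction coordinate is the warning sign described above; comparing individual Jacobian columns shows which degree of freedom lost sensitivity.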

Research Reagent Solutions

| Item | Function in Experiment | Example / Specification |
|---|---|---|
| High-Fidelity DFT Code | Generates the "ground truth" data and reference descriptors. | VASP, Quantum ESPRESSO (PSLibrary pseudopotentials recommended). |
| Approximate Descriptor Generator | Quickly computes the low-cost input features for the surrogate model. | DScribe library (for SOAP, MBTR), RDKit (for topological fingerprints). |
| Surrogate Model Framework | Provides flexible architectures for training and rapid inference. | PyTorch or TensorFlow with JIT compilation; Scikit-learn for baseline models. |
| Benchmark Catalyst Dataset | Provides standardized structures and target properties for training & validation. | Open Catalyst Project (OC20) dataset, Materials Project API. |
| Hyperparameter Optimization Tool | Automates the search for the best speed/accuracy trade-off. | Optuna or Ray Tune (supports parallel, distributed search). |

Visualizations

Diagram: Workflow for Training & Validating a Surrogate Model

Diagram: Accuracy vs. Speed Trade-off Decision Logic

Descriptor Selection and Dimensionality Reduction Techniques (e.g., PCA, Autoencoders)

Troubleshooting Guides & FAQs

Q1: My PCA-transformed catalyst descriptors show poor predictive accuracy in my speed-optimized model. What could be wrong?

A: This is a classic symptom of variance loss or irrelevant signal retention. First, verify the explained variance ratio. In catalyst research, the first few PCs often capture bulk material properties but may lose critical electronic surface descriptors. Check your scree plot. If the elbow is not sharp, you may be retaining too many noisy components that harm generalization. Reconstruct your original data from the PCA and compare key descriptor distributions (e.g., d-band center, coordination number) to ensure they are preserved. A common fix is to use domain-informed scaling before PCA: scale electronic descriptors differently from geometric ones. If the problem persists, switch to kernel PCA for non-linear relationships or use a feature selection method (e.g., mRMR) before PCA to remove truly irrelevant features.
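The reconstruction check described above can be sketched with plain NumPy. This is a minimal illustration on synthetic data, assuming z-score scaling and no constant columns; the "bulk" and "surface" columns are hypothetical stand-ins for correlated bulk properties and an independent surface descriptor.

```python
import numpy as np

def pca_reconstruction_report(X, n_components):
    """Explained-variance ratios and per-descriptor reconstruction MSE (z-scored)."""
    X = np.asarray(X, dtype=float)
    Z = (X - X.mean(axis=0)) / X.std(axis=0)
    _, S, Vt = np.linalg.svd(Z, full_matrices=False)
    explained = S ** 2 / np.sum(S ** 2)
    V = Vt[:n_components]                 # top principal axes, shape (k, d)
    Z_hat = Z @ V.T @ V                   # project onto k axes and back
    per_descriptor_mse = np.mean((Z - Z_hat) ** 2, axis=0)
    return explained[:n_components], per_descriptor_mse

# Synthetic data: four correlated "bulk" columns plus one independent "surface" column.
rng = np.random.default_rng(0)
latent = rng.normal(size=(500, 1))
bulk = latent + 0.1 * rng.normal(size=(500, 4))
surface = rng.normal(size=(500, 1))
X = np.hstack([bulk, surface])

explained, per_descriptor_mse = pca_reconstruction_report(X, n_components=1)
worst = int(np.argmax(per_descriptor_mse))
print(explained.round(3), per_descriptor_mse.round(3), worst)
```

Here the first component explains most of the variance, yet the independent column is almost entirely lost, which is the failure mode to look for with descriptors such as the d-band center.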

Q2: The training loss of my variational autoencoder (VAE) for descriptor compression converges, but the reconstruction error for adsorption energy descriptors remains high. How do I debug this?

A: High reconstruction error on specific, critical descriptors indicates the VAE latent space is under-sized or the training is prioritizing common, low-variance features. Implement a weighted reconstruction loss. Assign higher loss weights to key catalytic descriptors (e.g., adsorption energies, activation barriers) during training. Monitor the loss per descriptor type. Second, validate the latent space continuity: interpolate between two known catalyst points in latent space and use a surrogate model to predict activity. If the predicted activity changes erratically, the latent space is discontinuous; in that case, increase the KL divergence weight. Ensure your batch contains diverse catalyst types (e.g., metals, oxides, single-atom) to prevent mode collapse.
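The weighted reconstruction term can be written independently of any deep-learning framework. The sketch below uses NumPy to show the arithmetic only; in PyTorch or TensorFlow the same expression would be applied to tensors inside the training loop. The weights and values are hypothetical.

```python
import numpy as np

def weighted_reconstruction_loss(x, x_hat, weights):
    """Weighted mean of per-descriptor MSE; also returns the per-descriptor terms."""
    x = np.asarray(x, dtype=float)
    x_hat = np.asarray(x_hat, dtype=float)
    weights = np.asarray(weights, dtype=float)
    per_descriptor = np.mean((x_hat - x) ** 2, axis=0)
    total = np.sum(weights * per_descriptor) / np.sum(weights)
    return total, per_descriptor

# Two samples, two descriptors; column 1 plays the role of an adsorption energy.
x = np.array([[1.0, 2.0], [3.0, 4.0]])
x_hat = np.array([[1.0, 2.5], [3.0, 3.5]])

uniform_loss, per_descriptor = weighted_reconstruction_loss(x, x_hat, [1.0, 1.0])
weighted_loss, _ = weighted_reconstruction_loss(x, x_hat, [1.0, 4.0])
print(per_descriptor, uniform_loss, weighted_loss)
```

Returning the per-descriptor terms alongside the total makes it easy to monitor the loss per descriptor type, as suggested above.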

Q3: When using t-SNE for visualizing high-dimensional catalyst libraries, my plots show severe overlap between active and inactive clusters. Is the technique failing?

A: t-SNE is for visualization, not for dimensionality reduction for modeling. Overlap can arise from: 1) Perplexity mismatch: For a typical catalyst library of 1k-10k materials, a perplexity between 30 and 50 is advisable. Tune it. 2) Descriptor scale variance: t-SNE is sensitive to scale. Standardize all descriptors (e.g., z-score). 3) The underlying truth: The overlap may accurately reflect that your current descriptor set cannot separate active from inactive catalysts, which is a feature engineering issue. Use t-SNE as a diagnostic: if overlap persists after parameter tuning, you need more discriminative descriptors (e.g., reaction-path-specific descriptors). Consider using UMAP as an alternative; it often preserves more global structure.

Q4: After implementing a stacked autoencoder for dimensionality reduction, my QSAR model is faster but consistently underestimates the activity of high-throughput screening (HTS) "hit" catalysts. Why?

A: This points to information loss in the compression bottleneck specifically affecting the "active" region of descriptor space. The autoencoder may be optimized for average-case reconstruction, smoothing out rare but critical extreme values. Troubleshoot as follows:

- Analyze latent space density: Plot the density of your training set vs. HTS hits in the first 2 latent dimensions. If hits lie in low-density regions, the autoencoder was not sufficiently trained on analogous structures. Augment training with known actives.

- Implement a custom loss function: Penalize reconstruction error of the target property (e.g., turnover frequency) more heavily than the raw descriptor error.

- Consider an adversarial component: Train a discriminator to ensure the latent space of active catalysts matches the distribution of the full training set, preventing the "forgetting" of active regions.

Q5: I need to select the top k descriptors from a set of 500 for a rapid, interpretable model. Correlation-based filtering removes important non-linear relationships. What robust, fast method do you recommend?

A: For the accuracy-speed tradeoff, use Maximum Relevance Minimum Redundancy (mRMR) or LASSO regression. mRMR is excellent for maintaining a diverse, informative descriptor set without high inter-correlation, preserving model interpretability. LASSO directly selects features for a linear model, favoring speed. The protocol below compares both.

Experimental Protocols

Protocol 1: Benchmarking PCA vs. Autoencoders for Descriptor Compression

Objective: Evaluate the trade-off between prediction accuracy and inference speed using linear (PCA) and non-linear (autoencoder) dimensionality reduction on a catalyst dataset.

- Data: 10,000 catalyst entries from the CatHub database, each with 500 descriptors (electronic, geometric, compositional). Target property: adsorption energy of O.

- Preprocessing: Remove descriptors with >20% missing values. Impute remainder with median. Apply RobustScaler.

- Dimensionality Reduction:

- PCA: Retain components explaining 95%, 90%, and 85% variance.

- Autoencoder: Architectures: (a) Linear: 500->100->500, (b) Non-linear: 500->250->100->250->500 with ReLU. Train for 100 epochs, MSE loss.

- Modeling: Train separate Ridge Regression models on each reduced dataset.

- Evaluation: 5-fold cross-validation. Record Mean Absolute Error (MAE) and model inference time (ms/prediction) on a hold-out test set.

Protocol 2: mRMR for Interpretable Descriptor Selection

Objective: Select a compact, interpretable descriptor set for fast, approximate activity prediction.

- Data: Same as Protocol 1.

- Selection: Apply mRMR (mutual information criteria) to select the top 10, 20, and 30 descriptors most relevant to the target property with minimal mutual redundancy.

- Modeling: Train a simple linear regression on each selected subset.

- Evaluation: Compare MAE, R², and inference speed against models using PCA-reduced features. Analyze selected descriptors for chemical interpretability.
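The greedy selection loop behind mRMR can be sketched as follows. Note the substitution: this illustration scores relevance and redundancy with absolute Pearson correlation rather than the mutual information named in the protocol, which keeps the example dependency-free but captures only linear relationships. The function name and synthetic data are hypothetical.

```python
import numpy as np

def mrmr_select(X, y, k):
    """Greedy mRMR with |Pearson r| as a stand-in for mutual information."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n_samples, n_features = X.shape
    Xc = (X - X.mean(axis=0)) / X.std(axis=0)
    yc = (y - y.mean()) / y.std()
    relevance = np.abs(Xc.T @ yc) / n_samples      # |corr(feature, target)|
    redundancy = np.abs(Xc.T @ Xc) / n_samples     # |corr(feature, feature)|
    selected = [int(np.argmax(relevance))]
    while len(selected) < min(k, n_features):
        remaining = [j for j in range(n_features) if j not in selected]
        scores = [relevance[j] - redundancy[j, selected].mean() for j in remaining]
        selected.append(remaining[int(np.argmax(scores))])
    return selected

# Synthetic data: column 1 nearly duplicates column 0; column 3 is pure noise.
rng = np.random.default_rng(0)
a, b = rng.normal(size=500), rng.normal(size=500)
X = np.column_stack([a, a + 0.05 * rng.normal(size=500), b, rng.normal(size=500)])
y = a + 0.5 * b + 0.1 * rng.normal(size=500)

selected = mrmr_select(X, y, k=2)
print(selected)
```

The near-duplicate column is highly relevant but is skipped in the second round because of its redundancy with the first pick, which is the behavior that distinguishes mRMR from a plain relevance ranking.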

Data Tables

Table 1: Performance Comparison of Dimensionality Reduction Techniques on Catalyst Dataset

| Technique | # Output Dims | MAE (eV) ± Std | R² | Inference Speed (ms/pred) | Training Time (s) |
|---|---|---|---|---|---|
| Baseline (All Features) | 500 | 0.128 ± 0.012 | 0.89 | 2.1 | - |
| PCA (95% Var) | 45 | 0.131 ± 0.013 | 0.88 | 0.3 | 12 |
| PCA (90% Var) | 28 | 0.135 ± 0.014 | 0.87 | 0.2 | 10 |
| PCA (85% Var) | 19 | 0.145 ± 0.015 | 0.85 | 0.2 | 9 |
| Linear AE (Latent=50) | 50 | 0.130 ± 0.013 | 0.88 | 0.5 | 305 |
| Non-linear AE (Latent=50) | 50 | 0.122 ± 0.011 | 0.90 | 0.6 | 580 |
| mRMR (Top 20) | 20 | 0.139 ± 0.014 | 0.86 | 0.1 | 8 |

Table 2: Key Research Reagent Solutions & Materials

| Item | Function in Descriptor Research |
|---|---|
| RDKit | Open-source cheminformatics toolkit for generating molecular and compositional descriptors (e.g., Morgan fingerprints, molecular weight). |
| DScribe | Python library for creating atomistic descriptors, especially for surface and bulk materials (e.g., SOAP, MBTR, LODE). |
| Matminer | Platform for data mining materials properties; provides featurizers for composition, structure, and bands. |
| scikit-learn | Essential for implementing PCA, various feature selection methods, and regression models for benchmarking. |
| PyTorch/TensorFlow | Frameworks for building and training custom autoencoder architectures for non-linear dimensionality reduction. |
| CatHub Database | A curated source of catalytic data and calculated descriptors for training and validation. |

Visualizations

Title: PCA Workflow for Catalyst Descriptors

Title: Accuracy vs. Speed Trade-off Decision Path

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During library enumeration, my pipeline crashes with a "MemoryError" when processing large combinatorial libraries. How can I proceed? A: This typically occurs when 3D conformational generation and descriptor calculation are attempted in a single, in-memory step. Implement a chunked processing workflow.

Protocol:

1. Use a library enumeration tool (e.g., RDKit) to output the SMILES list.

2. Split the list into chunks of 50,000-100,000 compounds.

3. For each chunk, generate 3D conformers (using OMEGA or RDKit ETKDG) and calculate descriptors separately, writing results to disk immediately.

4. Use a database (SQLite) or incremental file appends to aggregate results.

This trades a minor speed penalty for a vastly reduced RAM footprint.

Q2: The combined descriptor matrix has highly correlated features, leading to model instability. What is the recommended feature selection process? A: High correlation between 2D (e.g., MACCS keys) and 3D (e.g., Pharmacophore) descriptors is common. A rigorous, three-step filter is advised.

Protocol:

1. Variance Threshold: Remove features with variance < 0.01 (or near-zero variance).

2. Correlation Filter: Calculate pairwise Pearson correlation for all remaining features. For any pair with |r| > 0.95, remove the one with lower variance or higher redundancy.

3. Model-Based Selection: Apply a univariate feature selection method (ANOVA F-value) or tree-based importance (from a Random Forest trained on a subset) to select the top 500-1000 features for final modeling.
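The first two filter steps can be sketched with NumPy. This is a minimal illustration, assuming features are on comparable scales (so a single variance cutoff is meaningful) and breaking correlated pairs by dropping the lower-variance member; the function name and synthetic matrix are hypothetical.

```python
import numpy as np

def filter_features(X, var_threshold=0.01, corr_threshold=0.95):
    """Return the column indices kept after variance and correlation filtering."""
    X = np.asarray(X, dtype=float)
    variances = X.var(axis=0)
    keep = [j for j in range(X.shape[1]) if variances[j] >= var_threshold]
    if len(keep) < 2:
        return keep
    corr = np.abs(np.corrcoef(X[:, keep], rowvar=False))
    dropped = set()
    for a in range(len(keep)):
        for b in range(a + 1, len(keep)):
            if a in dropped or b in dropped:
                continue
            if corr[a, b] > corr_threshold:
                # Drop the lower-variance member of the correlated pair.
                dropped.add(a if variances[keep[a]] < variances[keep[b]] else b)
    return [keep[i] for i in range(len(keep)) if i not in dropped]

# Synthetic matrix: col 1 is a rescaled copy of col 0, col 2 is constant.
rng = np.random.default_rng(0)
base = rng.normal(size=300)
X = np.column_stack([
    base,
    2.0 * base + 0.01 * rng.normal(size=300),
    np.full(300, 5.0),
    rng.normal(size=300),
])
kept = filter_features(X)
print(kept)
```

The pairwise loop is quadratic in the number of features, so for tens of thousands of columns a blocked or sparse variant would be needed.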

Q3: My pipeline's runtime is dominated by 3D geometric descriptor calculation, negating the speed benefits. How can I optimize this? A: This highlights the core accuracy-speed trade-off. Implement a staged or "fail-fast" screening protocol.

Protocol:

1. First Pass (Speed): Screen the entire library using fast 2D descriptors (ECFP4, RDKit descriptors) with a simple, pre-trained model (e.g., Random Forest). Retain the top 20%.

2. Second Pass (Accuracy): Process only this enriched subset with slow, accurate 3D/complex descriptors (e.g., Quantum Chemical, GRID descriptors). Use a more sophisticated model (e.g., SVM, Neural Network) for final ranking.

This balances overall throughput with predictive accuracy where it counts.

Q4: When integrating descriptors from different sources (RDKit, PaDEL, in-house), the feature matrices have inconsistent row orders. How to ensure alignment?

A: This is a critical data integrity issue. Never rely on list order. Use a unique, immutable compound identifier as the joining key.

Protocol:

1. Assign a unique ID (e.g., UUID) to each enumerated compound SMILES before any processing.

2. Ensure every descriptor calculation script and output file includes this ID column.

3. Join all descriptor tables on the ID key, using pd.merge on Pandas DataFrames or a SQL JOIN. Always verify row counts after each merge.
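The ID-keyed join can be sketched with pandas. In this minimal illustration the two descriptor tables are toy stand-ins for outputs from different tools, and the column names (`compound_id`, `n_chars`, `n_upper_c`) are hypothetical; `validate="one_to_one"` makes pandas raise if an ID is duplicated on either side.

```python
import uuid
import pandas as pd

smiles = ["CCO", "c1ccccc1", "CC(=O)O"]
compounds = pd.DataFrame({
    "compound_id": [str(uuid.uuid4()) for _ in smiles],  # assigned once, up front
    "smiles": smiles,
})

# Two descriptor tables as they might come back from different tools,
# the second one in reversed row order.
table_a = compounds.assign(n_chars=compounds["smiles"].str.len())[
    ["compound_id", "n_chars"]
]
table_b = (
    compounds.iloc[::-1]
    .assign(n_upper_c=lambda d: d["smiles"].str.count("C"))[["compound_id", "n_upper_c"]]
    .reset_index(drop=True)
)

merged = (
    compounds
    .merge(table_a, on="compound_id", how="inner", validate="one_to_one")
    .merge(table_b, on="compound_id", how="inner", validate="one_to_one")
)

# Verify that no compound was lost or duplicated by the joins.
if len(merged) != len(compounds):
    raise RuntimeError("row count changed during merge; check for missing IDs")
print(merged[["smiles", "n_chars", "n_upper_c"]])
```

An inner join silently drops compounds whose descriptor calculation failed, which is why the row-count check after merging matters.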

Q5: The predictive performance of my model varies drastically between different external test sets. How can I improve generalization? A: This often stems from "descriptor domain shift," where new compounds occupy underrepresented chemical space in the training data.

Protocol:

1. Apply Applicability Domain (AD) Analysis: Use a method like leverage (Williams plot) or distance to training data in PCA space. Flag predictions for compounds outside the AD.

2. Diverse Training Data: Ensure your training set covers broad chemical space by using clustering (e.g., Butina clustering on ECFP4 fingerprints) for representative selection.

3. Simplify Model: Reduce overfitting by using the feature selection from Q2 and employing stricter regularization (e.g., higher L1/L2 penalties in linear models).
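The leverage-based applicability domain check can be sketched as follows. This is a minimal illustration, assuming a linear-model view of the descriptor space with an intercept column and the commonly used warning threshold h* = 3(p + 1)/n for p descriptors and n training compounds; the function name and synthetic data are hypothetical.

```python
import numpy as np

def leverage_domain(X_train, X_query):
    """Leverage of each query compound and a flag for h > h* (outside the AD)."""
    X_train = np.asarray(X_train, dtype=float)
    X_query = np.asarray(X_query, dtype=float)
    A = np.hstack([np.ones((X_train.shape[0], 1)), X_train])  # add intercept
    Q = np.hstack([np.ones((X_query.shape[0], 1)), X_query])
    xtx_inv = np.linalg.pinv(A.T @ A)
    h = np.einsum("ij,jk,ik->i", Q, xtx_inv, Q)               # q^T (A^T A)^-1 q
    h_star = 3.0 * A.shape[1] / A.shape[0]
    return h, h_star, h > h_star

# Synthetic training descriptors and two query compounds:
# one near the training centroid, one far outside it.
rng = np.random.default_rng(0)
X_train = rng.normal(size=(200, 3))
X_query = np.array([[0.0, 0.0, 0.0], [8.0, 8.0, 8.0]])

h, h_star, outside = leverage_domain(X_train, X_query)
print(h.round(3), round(h_star, 3), outside)
```

Predictions for flagged compounds are extrapolations and should be reported with that caveat or routed to a higher-accuracy method.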

Data Presentation

Table 1: Performance vs. Runtime Trade-off for Descriptor Types

| Descriptor Class | Example Descriptors | Avg. Time per Compound (ms)* | Typical Use Case | Key Accuracy Metric (AUC-ROC)† |
|---|---|---|---|---|
| 1D/2D (Fast) | Molecular Weight, LogP, MACCS, ECFP4 | 0.1 - 10 | Initial Filtering, High-Throughput | 0.70 - 0.80 |
| 3D Geometric | Pharmacophore, WHIM, 3D MoRSE | 50 - 500 | Enriched Library Screening | 0.75 - 0.85 |
| 3D Quantum (Slow) | Partial Charges, MEP, DFT-based | > 1000 | Lead Optimization, Final Ranking | 0.80 - 0.90 |
| Mixed Pipeline | ECFP4 + Pharmacophore + Selected QC | ~100 (after filtering) | Balanced High-Throughput Screening | 0.82 - 0.88 |

*Measured on a standard CPU core. †Dependent on specific target and data quality.

Table 2: Troubleshooting Summary: Common Errors & Solutions

| Error Symptom | Likely Cause | Immediate Fix | Long-Term Solution |
|---|---|---|---|
| MemoryError | Whole-library in-memory processing | Process data in chunks | Implement a disk-backed database pipeline |
| Low Model Accuracy | High feature correlation, overfitting | Apply correlation filter | Implement rigorous train/test splits & AD |
| Pipeline Inconsistency | Misaligned compound IDs | Manual inspection & reordering | Enforce UUID use at the start of workflow |
| Extreme Runtime | Over-reliance on slow 3D descriptors | Apply a fast pre-filter (2D) | Design a staged screening funnel |

Experimental Protocols

Protocol: Standardized Benchmarking for Mixed-Descriptor Pipelines

- Dataset Curation: Use a public benchmark (e.g., DUD-E, DEKOIS 2.0). Split into training (60%), validation (20%), and hold-out test (20%) sets using scaffold splitting to assess generalization.

- Descriptor Calculation:

- 2D Module: Calculate ECFP4 (radius=2, 2048 bits) and 200 RDKit 2D descriptors using RDKit. Standardize (zero mean, unit variance).

- 3D Module: Generate up to 5 conformers per compound using RDKit ETKDGv3. Calculate Pharm2D3D descriptors (from RDKit) for each conformer and take the mean vector.

- Feature Integration & Selection: Concatenate 2D and 3D matrices using aligned compound IDs. Apply a) Near-zero variance removal, b) Pairwise correlation filter (threshold |r|=0.95), c) Select top 500 features by Random Forest importance on validation set.

- Modeling & Validation: Train a Gradient Boosting Machine (e.g., XGBoost) with 5-fold cross-validation on the training set. Optimize hyperparameters (max_depth, learning_rate) on the validation set. Evaluate final model on the hold-out test set using AUC-ROC, Enrichment Factor (EF1%), and BEDROC.

Protocol: Implementing a Chunked Processing Workflow

- Input: A .smi file with 1 million SMILES strings.

- Script Logic:
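A minimal sketch of such script logic, using only the Python standard library. The descriptor function here is a hypothetical toy stand-in for a real calculator (e.g., an RDKit-based one), and the table layout is an assumption; the chunking and immediate write-to-disk pattern is the part that matters.

```python
import itertools
import sqlite3

def read_chunks(lines, chunk_size):
    """Yield lists of at most chunk_size lines without loading the whole input."""
    it = iter(lines)
    while True:
        chunk = list(itertools.islice(it, chunk_size))
        if not chunk:
            return
        yield chunk

def toy_descriptors(smiles):
    """Hypothetical stand-in: string length and number of '=' characters."""
    return (len(smiles), smiles.count("="))

def process_library(lines, conn, chunk_size=50_000, featurize=toy_descriptors):
    """Featurize chunk by chunk, committing each chunk before reading the next."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS descriptors "
        "(smiles TEXT PRIMARY KEY, n_chars INTEGER, n_double INTEGER)"
    )
    written = 0
    for chunk in read_chunks(lines, chunk_size):
        # A .smi line may carry a name after the SMILES; keep the first token only.
        smiles_list = [line.split()[0] for line in chunk if line.strip()]
        rows = [(s, *featurize(s)) for s in smiles_list]
        conn.executemany("INSERT OR REPLACE INTO descriptors VALUES (?, ?, ?)", rows)
        conn.commit()
        written += len(rows)
    return written

# Small in-memory demonstration; a real run would pass an open file handle
# and a file-backed database instead.
conn = sqlite3.connect(":memory:")
demo_lines = ["CCO\n", "C=C\n", "\n", "CC(=O)O acetic_acid\n"]
n_written = process_library(demo_lines, conn, chunk_size=2)
n_rows = conn.execute("SELECT COUNT(*) FROM descriptors").fetchone()[0]
print(n_written, n_rows)
```

Because a file handle is itself an iterator over lines, `process_library(open_file, conn)` reads the library lazily, so peak memory is bounded by the chunk size rather than the library size.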

Visualizations

Title: Staged Screening Pipeline for Speed/Accuracy Balance

Title: Logical Relationship of Descriptors to Core Thesis

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Mixed-Descriptor Pipeline |
|---|---|
| RDKit | Open-source toolkit for core cheminformatics: SMILES parsing, 2D descriptor calculation, fingerprint generation (ECFP), and 3D conformer generation. |
| Open Babel / OEchem | Toolkits for file format conversion and fundamental molecular manipulation, ensuring interoperability between pipeline modules. |
| OMEGA (OpenEye) | High-performance, rule-based 3D conformer generator; faster and more empirically tuned than stochastic methods for large libraries. |
| PaDEL-Descriptor | Standalone software for calculating a comprehensive suite of 1D, 2D, and 3D descriptors (1875+), useful for feature diversity. |
| XGBoost / Scikit-learn | Machine learning libraries for building and evaluating models on mixed-descriptor data, supporting efficient regularization. |
| SQLite Database | Lightweight, file-based database system for reliably storing and joining chunked descriptor outputs and compound metadata. |
| KNIME / Nextflow | Workflow management platforms to visually design, execute, and reproducibly run the entire multi-step pipeline. |

Navigating Pitfalls: Troubleshooting Common Issues and Optimizing the Tradeoff for Your Project

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My catalyst descriptor model's accuracy has plateaued. How do I determine if the issue is poor data quality or a model architecture limitation?

A: Follow this diagnostic protocol to isolate the cause.

Experiment: Data Subset Validation.

- Protocol: Partition your dataset into three tiers based on a quality confidence score (e.g., experimental replicate consistency, measurement uncertainty). Train and evaluate your model separately on the high-confidence subset (Tier 1) and the full dataset.

- Interpretation: A significant accuracy increase on Tier 1 indicates data quality is a primary bottleneck. Similar performance suggests an architectural or hyperparameter limitation.

Key Data Table: Model Performance vs. Data Quality Tier

| Data Quality Tier | Sample Size | MAE (eV) | R² | Training Time (hrs) |
|---|---|---|---|---|
| Tier 1 (High) | 850 | 0.23 | 0.91 | 3.2 |
| Tier 2 (Medium) | 1200 | 0.31 | 0.86 | 4.1 |
| Full Dataset | 2500 | 0.38 | 0.82 | 7.5 |

Table 1: Example results showing performance degradation with lower-quality data, pointing to data quality as a key bottleneck.

Q2: My model is too slow for high-throughput virtual screening. Should I simplify the model or invest in more computational resources?

A: The decision depends on the sensitivity of your accuracy to descriptor complexity. Perform a complexity-ablation study.

- Experiment: Complexity vs. Performance Trade-off.

- Protocol: Systematically reduce model complexity (e.g., number of GNN layers, descriptor dimensionality) and/or feature sophistication (e.g., replace learned representations with simpler heuristic descriptors). Measure the impact on prediction error and inference speed per catalyst candidate.

- Interpretation: Plot the accuracy-speed Pareto frontier. If small complexity sacrifices yield large speed gains, model simplification is viable. If accuracy drops precipitously, parallelization or hardware acceleration may be better.

Q3: How can I quickly assess if a performance issue is rooted in the training data's coverage of chemical space?

A: Perform a k-nearest neighbors (k-NN) similarity analysis for erroneous predictions.

Protocol:

- Identify a set of high-error predictions (e.g., absolute error > 0.5 eV).

- For each error case, find the k most structurally similar catalysts in the training set using a fingerprint-based Tanimoto similarity.

- Calculate the average prediction error for those k neighbors.
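The three protocol steps can be sketched in pure Python. This minimal illustration assumes fingerprints are represented as sets of on-bit indices (as could be extracted from any bit-vector fingerprint); the function names, fingerprints, and errors are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def neighbor_diagnosis(query_fp, train_fps, train_errors, k=5):
    """Average similarity and average training error of the k nearest neighbors."""
    ranked = sorted(
        ((tanimoto(query_fp, fp), err) for fp, err in zip(train_fps, train_errors)),
        key=lambda pair: pair[0],
        reverse=True,
    )[:k]
    avg_similarity = sum(s for s, _ in ranked) / len(ranked)
    avg_error = sum(e for _, e in ranked) / len(ranked)
    return avg_similarity, avg_error

# Illustrative fingerprints and absolute errors in eV (not real data).
query = {1, 2, 3, 4}
train_fps = [{1, 2, 3, 4, 5}, {1, 2, 3}, {7, 8, 9}]
train_errors = [0.05, 0.10, 0.40]

avg_sim, avg_err = neighbor_diagnosis(query, train_fps, train_errors, k=2)
print(f"avg similarity={avg_sim:.3f}, avg neighbor error={avg_err:.3f} eV")
```

Low average similarity points to a coverage gap in the training data, while high similarity combined with low neighbor error points to a localized model failure or an outlier label.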

Key Data Table: Error Analysis via Training Set Similarity

| High-Error Test Sample | Predicted ΔG (eV) | Actual ΔG (eV) | Avg. Similarity to Top 5 Train Neighbors | Avg. Error of Train Neighbors (eV) |
|---|---|---|---|---|
| Catalyst_A | 1.05 | 0.42 | 0.31 | 0.41 |
| Catalyst_B | -0.21 | 0.78 | 0.67 | 0.12 |
| Catalyst_C | 2.15 | 1.88 | 0.89 | 0.05 |

Table 2: Catalyst_A's low similarity and high neighbor error suggest a data gap. Catalyst_B's high similarity but large error may indicate a localized model failure or outlier.

Q4: I suspect label noise (experimental inaccuracy) is harming my model. How can I confirm and mitigate this?

A: Implement a robust loss function and compare performance.

Protocol:

- Retrain your model using a loss function robust to label noise (e.g., Huber loss or MAE for regression targets, Generalized Cross Entropy for classification) alongside your standard Mean Squared Error (MSE) baseline.

- Evaluate both models on a small, meticulously curated validation set assumed to have very high label fidelity.
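To see why a robust loss helps, it is useful to compare how MSE and Huber loss respond to a single noisy label. The NumPy sketch below shows the arithmetic only; the `delta` value and residuals are illustrative, and in practice the framework's built-in Huber loss would be used during training.

```python
import numpy as np

def mse_loss(y, y_hat):
    """Mean squared error."""
    y, y_hat = np.asarray(y, dtype=float), np.asarray(y_hat, dtype=float)
    return float(np.mean((y_hat - y) ** 2))

def huber_loss(y, y_hat, delta=1.0):
    """Quadratic for residuals up to delta, linear beyond it."""
    y, y_hat = np.asarray(y, dtype=float), np.asarray(y_hat, dtype=float)
    r = np.abs(y_hat - y)
    quadratic = 0.5 * r ** 2
    linear = delta * (r - 0.5 * delta)
    return float(np.mean(np.where(r <= delta, quadratic, linear)))

# Three well-behaved labels and one grossly noisy label (values in eV, illustrative).
y_true = [0.0, 0.0, 0.0, 0.0]
y_pred = [0.1, -0.1, 0.2, 3.0]

mse_value = mse_loss(y_true, y_pred)
huber_value = huber_loss(y_true, y_pred, delta=0.5)
print(f"MSE={mse_value:.3f}, Huber={huber_value:.3f}")
```

The single outlier contributes almost all of the MSE but only a linear term to the Huber loss, so gradient updates are less dominated by suspect labels.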

Key Data Table: Robust Loss Impact on Noisy Data

| Loss Function | MAE on Full Test Set (eV) | MAE on Curated Validation Set (eV) | Training Epochs to Converge |
|---|---|---|---|
| MSE | 0.41 | 0.35 | 120 |
| Huber Loss | 0.38 | 0.32 | 145 |
| Symmetric MAE | 0.36 | 0.34 | 180 |

Table 3: Improved performance on the high-fidelity curated set with robust losses suggests label noise is a meaningful bottleneck.

Visualizations

Diagram 1: Diagnostic workflow for performance bottlenecks.

Diagram 2: The accuracy-speed Pareto frontier for model selection.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Relevance to Catalyst Descriptor Research |

|---|---|

| High-Throughput Experimentation (HTE) Robotic Platforms | Automates synthesis and testing of catalyst libraries, generating large, consistent datasets critical for training data-hungry ML models. |

| Quantum Chemistry Software (e.g., VASP, Gaussian) | Provides high-accuracy ab initio descriptors (formation energies, d-band centers) and synthetic data for pre-training or augmenting experimental datasets. |

| Graph Neural Network (GNN) Frameworks (e.g., PyTorch Geometric, DGL) | Essential libraries for building descriptor models that directly learn from catalyst structure graphs, capturing local coordination environments. |

| Uncertainty Quantification (UQ) Tools (e.g., ensemble methods, MC dropout) | Allows models to estimate prediction confidence, flagging low-quality data points and guiding targeted data acquisition. |

| Automated Feature Extraction Libraries (e.g., matminer, dscribe) | Generate vast sets of heuristic compositional and structural descriptors for traditional ML models, enabling rapid baseline establishment. |

| Active Learning Loop Controllers (e.g., ChemOS, custom scripts) | Orchestrates the iterative cycle of model prediction, experimental proposal, and data incorporation to optimize the accuracy/data efficiency trade-off. |

Troubleshooting Guides & FAQs

Q1: My DFT-calculated descriptor values vary significantly between different simulation packages (e.g., VASP vs. Quantum ESPRESSO). Which parameters should I standardize first to ensure reproducibility?

A: The primary culprits are often the plane-wave energy cutoff and k-point sampling density. To ensure consistent descriptor calculation (e.g., d-band center, adsorption energies), standardize these core parameters:

- Plane-Wave Energy Cutoff: Use a convergence test. Increase the cutoff until the total energy of your catalyst slab model changes by less than 1 meV/atom.

- k-points: Use a Monkhorst-Pack grid. For surface calculations, ensure the grid is dense enough in the surface plane. A common starting point is a 3x3x1 grid for a (111) surface slab, but you must converge the relevant property (e.g., binding energy).

Q2: When using Active Learning for high-throughput screening of bimetallic catalysts, my model keeps sampling similar compositions, missing potentially promising regions. How can I improve the sampling diversity?

A: This indicates your acquisition function may be too exploitative. Implement a diversity-promoting strategy:

- Adjust the acquisition function: If using Expected Improvement (EI), add a small random component or switch to Thompson Sampling.

- Cluster-based sampling: Periodically perform a clustering analysis (e.g., using K-Means on the descriptor space) and select the next sample from the least explored cluster.

- Use a hybrid query strategy: Combine an exploitative metric (like predicted performance) with an explorative metric (like distance to nearest training point) using a weighted sum. The weight (β) controls the trade-off. Experiment with β values between 0.2 (more explorative) and 0.8 (more exploitative).

Table: Effect of Acquisition Function Tuning on Sampling Diversity

| Acquisition Function | Hyperparameter | Typical Value Range | Effect on Speed | Effect on Discovery of Novel Catalysts |

|---|---|---|---|---|

| Expected Improvement (EI) | ξ (exploration) | 0.01 - 0.1 | Fast convergence | Low; can get stuck |

| Upper Confidence Bound (UCB) | κ (exploration) | 2.0 - 5.0 | Slower convergence | High; broad exploration |

| Mixed Strategy (EI + Diversity) | β (diversity weight) | 0.2 - 0.5 | Moderate | Significantly Improved |
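The hybrid query strategy can be sketched as a weighted sum of two normalized terms. This is one possible reading, assuming β multiplies the exploitative term so that β = 0.2 is more explorative and β = 0.8 more exploitative; the function `hybrid_scores`, the min-max normalization, and the toy data are illustrative assumptions.

```python
import math


def hybrid_scores(candidates, predicted, train_points, beta=0.5):
    """Score = beta * predicted performance + (1 - beta) * distance to nearest training point.

    Both terms are min-max normalized to [0, 1] so the weight is comparable across them.
    """
    def normalize(values):
        lo, hi = min(values), max(values)
        if hi == lo:
            return [0.0 for _ in values]
        return [(v - lo) / (hi - lo) for v in values]

    exploit = normalize(predicted)
    explore = normalize(
        [min(math.dist(c, t) for t in train_points) for c in candidates]
    )
    return [beta * a + (1.0 - beta) * b for a, b in zip(exploit, explore)]


def pick(beta):
    """Index of the candidate with the highest hybrid score for a given beta."""
    scores = hybrid_scores(candidates, predicted, train_points, beta)
    return max(range(len(scores)), key=scores.__getitem__)


# Hypothetical 2D descriptor space: candidate 0 sits next to known data and has
# the best predicted performance; candidate 1 is far away with a poor prediction.
train_points = [(0.0, 0.0), (0.1, 0.0)]
candidates = [(0.05, 0.0), (1.0, 1.0), (0.5, 0.5)]
predicted = [0.9, 0.2, 0.5]

explorative_choice = pick(0.2)
exploitative_choice = pick(0.8)
print(explorative_choice, exploitative_choice)
```

With a low β the distant candidate is selected, and with a high β the candidate near existing data with the best predicted performance is selected.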

Q3: Switching from a Random Forest to a Graph Neural Network (GNN) for predicting catalytic activity slowed my training by over 10x. Is this expected, and how can I optimize the GNN architecture for speed without massive accuracy loss?

A: Yes, this is expected due to the complexity of message-passing operations. Key optimization levers:

- Reduce Graph Convolution Layers: Start with 2-3 layers. Each layer aggregates information from neighbors; too many are unnecessary for most local catalytic properties.

- Use Simpler Pooling: Replace global attention pooling with mean or sum pooling.

- Optimize Node Representation: Use lower-dimensional initial atom embeddings (e.g., 64 instead of 128).

- Batch Size and Hardware: Utilize GPU acceleration with optimized libraries (PyTorch Geometric, DGL) and increase batch size to the maximum your GPU memory allows.

Table: GNN Architecture Tuning for Speed/Accuracy Trade-off

| Architectural Component | Faster Configuration | Standard Configuration | Approximate Speedup | Typical Accuracy Impact |

|---|---|---|---|---|

| Convolution Layers | 2 | 5 | 2.0x | < 5% loss if system is local |

| Hidden Dimension | 64 | 256 | ~4.0x | Varies; needs validation |

| Pooling Method | Sum Pooling | Attention Pooling | 1.5x | Can be significant for complex systems |

| Composite Optimized Model | 2 Layers, Dim 64, Sum Pool | 5 Layers, Dim 256, Attn Pool | ~8-10x | Requires careful per-dataset evaluation |

Q4: My SOAP (Smooth Overlap of Atomic Positions) descriptors for alloy nanoparticles are too high-dimensional, causing my Gaussian Process Regression (GPR) model to train slowly. What are my options?

A: You need to reduce descriptor dimensionality while preserving chemical information.

- Principal Component Analysis (PCA): Standard linear method. Retain components explaining >95% variance.

- Sparsification: Use FPS (farthest point sampling) or CUR matrix decomposition to select a subset of representative SOAP vectors.

- Use Dimensionality-Reduced GPR: Implement a Sparse Gaussian Process model that uses inducing points, dramatically reducing the kernel matrix size.
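Farthest point sampling is simple enough to sketch directly. The version below is a minimal pure-Python illustration using Euclidean distance on short toy vectors; real SOAP vectors are much longer, and the function name and starting index are assumptions made for the example.

```python
import math


def farthest_point_sampling(vectors, n_select, start=0):
    """Greedily pick indices so each new pick is farthest from all previous picks."""
    selected = [start]
    # Distance from every vector to its nearest already-selected vector.
    min_dist = [math.dist(v, vectors[start]) for v in vectors]
    while len(selected) < min(n_select, len(vectors)):
        nxt = max(range(len(vectors)), key=min_dist.__getitem__)
        selected.append(nxt)
        for i, v in enumerate(vectors):
            min_dist[i] = min(min_dist[i], math.dist(v, vectors[nxt]))
    return selected


# Hypothetical descriptor vectors forming two tight pairs and one isolated point.
vectors = [(0.0, 0.0), (0.1, 0.0), (5.0, 0.0), (5.1, 0.0), (0.0, 4.0)]
picked = farthest_point_sampling(vectors, n_select=3)
print(picked)
```

The selected subset covers each region of descriptor space once instead of keeping near-duplicate environments, which is what keeps the downstream kernel matrix small.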

Experimental Protocol: Benchmarking Descriptor Calculation Parameters

Objective: To determine the optimal plane-wave cutoff and k-point density for calculating the d-band center descriptor for transition metal surfaces.

- System Setup: Build a 3-layer, 3x3 supercell slab model of Pt(111) with 15 Å vacuum.

- Cutoff Convergence:

  - Fix k-points to a dense grid (e.g., 4x4x1).

  - Calculate the total energy of the slab for cutoffs: 300, 350, 400, 450, 500, 550 eV.

  - Plot energy vs. cutoff. Choose the cutoff where the energy change between successive cutoffs is <1 meV/atom.

- k-point Convergence:

  - Fix the cutoff to the value chosen in the cutoff convergence step.

  - Calculate the d-band center for k-point grids: 2x2x1, 3x3x1, 4x4x1, 5x5x1, 6x6x1.

  - Plot d-band center vs. k-point density. Choose the grid where the value converges within 0.05 eV.

- Validation: Recalculate a known adsorption energy (e.g., *OH) using the converged parameters and compare to literature benchmarks.
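Once the scan results are collected, the convergence choice can be automated. The sketch below assumes one particular reading of the criterion (pick the first setting whose value changes by less than the tolerance when moving to the next, denser setting); the energies and d-band centers are made-up placeholder numbers, not DFT results.

```python
def first_converged(settings, values, tol):
    """Return the first setting whose value differs from the next setting's by less than tol.

    Returns None if no consecutive pair in the scan meets the tolerance.
    """
    for i in range(len(values) - 1):
        if abs(values[i + 1] - values[i]) < tol:
            return settings[i]
    return None


# Placeholder scan data (NOT real DFT output).
cutoffs_ev = [300, 350, 400, 450, 500, 550]
energy_per_atom_ev = [-6.0500, -6.0580, -6.0612, -6.0619, -6.0621, -6.0622]

kgrids = ["2x2x1", "3x3x1", "4x4x1", "5x5x1", "6x6x1"]
d_band_center_ev = [-2.31, -2.19, -2.12, -2.10, -2.09]

cutoff = first_converged(cutoffs_ev, energy_per_atom_ev, tol=0.001)  # 1 meV/atom
kgrid = first_converged(kgrids, d_band_center_ev, tol=0.05)          # 0.05 eV
print(cutoff, kgrid)
```

A stricter variant would require every later step in the scan to stay within tolerance as well, which guards against accidental agreement between two neighboring settings.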

Diagram: Workflow for Descriptor-Based Catalyst Screening

Diagram: Accuracy vs. Speed Trade-off Levers

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Materials & Software for Catalyst Descriptor Modeling

| Item/Reagent | Function in Research | Example/Notes |

|---|---|---|

| DFT Software | Calculates electronic structure, the source of fundamental descriptors. | VASP, Quantum ESPRESSO, GPAW. Choice affects speed and required parameter tuning. |

| Descriptor Generation Library | Automates extraction of numerical features from DFT outputs. | DScribe, CatLearn, ASAP. Critical for standardizing feature sets. |

| Machine Learning Framework | Platform for building, training, and validating predictive models. | scikit-learn (RF, GPR), PyTorch/TensorFlow (GNNs), GPyTorch. |

| Active Learning Loop Manager | Orchestrates the iterative querying and model updating process. | Custom scripts using modAL, deepchem, or AMP. |

| High-Performance Computing (HPC) Cluster | Provides the computational power for parallel DFT calculations and ML training. | Essential for realistic throughput. GPU nodes accelerate GNN training. |

| Catalyst Database | Source of initial training data and benchmark structures. | Catalysis-Hub, Materials Project, NOMAD. Provides seed data for transfer learning. |

In catalyst descriptor model research, the accuracy-versus-speed tradeoff is a fundamental consideration. During early-stage exploration and large-scale library enumeration, computational speed is often prioritized to rapidly identify promising regions of chemical space. This technical support center provides guidance for navigating this tradeoff, troubleshooting common issues, and implementing efficient protocols.

FAQs & Troubleshooting Guides

Q1: My high-throughput virtual screening (HTVS) workflow is failing to generate descriptors for metal-organic complexes. What could be the cause?

A: This is often due to improper handling of non-standard bonding or metal coordination. Ensure your molecular featurization tool (e.g., RDKit) has the correct parameters for handling organometallic bonds. Pre-process structures with a tool like Open Babel to assign correct bond orders before descriptor calculation.

Q2: During library enumeration, my model's performance drops significantly compared to smaller, curated test sets. How should I debug this?

A: This indicates a potential domain shift or overfitting. First, check the distribution of key descriptors (e.g., molecular weight, logP) in your enumerated library versus your training set. Use a simple, fast model (like a Random Forest) for initial sanity checks to see if the performance drop is consistent across model complexities.
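A quick distribution check for a single descriptor might look like the following. This is a rough sketch: the two summary statistics, the function name `shift_report`, and the molecular-weight values are illustrative assumptions, and a fuller analysis would repeat this for every key descriptor.

```python
import statistics


def shift_report(train_values, library_values):
    """Summarize how far a library's descriptor distribution sits from the training set."""
    mu = statistics.mean(train_values)
    sigma = statistics.pstdev(train_values)
    lo, hi = min(train_values), max(train_values)
    outside = sum(1 for v in library_values if v < lo or v > hi)
    # Mean shift expressed in training-set standard deviations (inf if training has no spread).
    shift = (statistics.mean(library_values) - mu) / sigma if sigma > 0 else float("inf")
    return {
        "standardized_mean_shift": shift,
        "fraction_outside_train_range": outside / len(library_values),
    }


# Hypothetical molecular weights (g/mol) for training set vs. enumerated library.
train_mw = [250.0, 300.0, 350.0, 400.0, 450.0]
library_mw = [300.0, 420.0, 600.0, 750.0]

report = shift_report(train_mw, library_mw)
print(report)
```

A large standardized shift or a high fraction of library members outside the training range indicates that the model is being asked to extrapolate.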

Q3: What are the key checks before launching a large-scale enumeration to ensure computational efficiency?

A: 1) Pilot Batch: Run a 1% sample to gauge runtime and memory use. 2) Descriptor Validation: Ensure no single descriptor calculation is a bottleneck. 3) Data Pipeline: Confirm your storage and data retrieval can handle the output volume.

Q4: When using a simplified, fast model for early exploration, how do I know if its predictions are trustworthy enough to proceed?

A: Establish correlation metrics with your higher-accuracy model on a hold-out set. If available, use experimental data from a small, diverse validation set. The table below provides quantitative guidelines for acceptable early-stage model performance.

Table 1: Performance Thresholds for Speed-Optimized Early-Stage Models

| Model Type | Minimum Acceptable R² (vs. High-Accuracy Model) | Maximum Acceptable RMSE Increase | Recommended Validation Set Size |

|---|---|---|---|

| Linear Model (e.g., Ridge) | 0.70 | 25% | 50-100 compounds |

| Light Gradient Boosting (LGBM) | 0.80 | 15% | 100-200 compounds |

| Graph Neural Network (Fast) | 0.75 | 20% | 150-300 compounds |
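The thresholds in Table 1 can be applied programmatically. The sketch below assumes that R² is computed with the high-accuracy model's predictions as the reference, and that "RMSE increase" means the relative increase in RMSE against measured values when switching from the high-accuracy model to the fast one; both interpretations, and all numbers, are assumptions for illustration.

```python
import math


def r2_score(reference, predicted):
    """Coefficient of determination of `predicted` against `reference`."""
    mean_ref = sum(reference) / len(reference)
    ss_res = sum((r - p) ** 2 for r, p in zip(reference, predicted))
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)
    return 1.0 - ss_res / ss_tot


def rmse(reference, predicted):
    """Root mean squared error."""
    return math.sqrt(
        sum((r - p) ** 2 for r, p in zip(reference, predicted)) / len(reference)
    )


def passes_thresholds(fast_pred, accurate_pred, measured, min_r2, max_rmse_increase):
    """Check a fast model against a minimum R2 and a maximum relative RMSE increase."""
    r2 = r2_score(accurate_pred, fast_pred)
    rmse_acc = rmse(measured, accurate_pred)
    increase = (rmse(measured, fast_pred) - rmse_acc) / rmse_acc
    return (r2 >= min_r2 and increase <= max_rmse_increase), r2, increase


# Hypothetical hold-out values (eV).
measured = [0.10, 0.50, 0.90, 1.30, 1.70]
accurate = [0.12, 0.48, 0.93, 1.28, 1.69]
fast = [0.13, 0.48, 0.93, 1.27, 1.69]

ok_linear, r2, increase = passes_thresholds(fast, accurate, measured, 0.70, 0.25)
ok_lgbm, _, _ = passes_thresholds(fast, accurate, measured, 0.80, 0.15)
print(f"R2 = {r2:.4f}, RMSE increase = {increase:.1%}, linear: {ok_linear}, LGBM: {ok_lgbm}")
```

In this toy case the same predictions meet the linear-model row but not the stricter LGBM row, because the RMSE increase is about 21%.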

Experimental Protocols

Protocol 1: Benchmarking Speed vs. Accuracy for Descriptor Models

Objective: To systematically evaluate the tradeoff between computation time and predictive accuracy for different descriptor sets and algorithms.

- Data Curation: Select a benchmark dataset (e.g., Catalysis-Hub for catalysis) with known catalytic properties.

- Descriptor Calculation: Compute three descriptor sets:

  - Set A (Speed): 10-20 classical descriptors (e.g., electronic, steric).

  - Set B (Balanced): 50-100 descriptors including selected fingerprint bits.

  - Set C (Accuracy): 500+ descriptors or full molecular graph.

- Model Training: Train three model classes (Linear, Random Forest, GNN) on each set using 5-fold cross-validation.

- Metrics: Record total CPU hours for descriptor calculation + training. Measure prediction R² and RMSE on a held-out test set.

- Analysis: Plot Pareto frontiers (Accuracy vs. Log(Speed)) to identify optimal operating points for early vs. late-stage research.
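Before plotting, the non-dominated configurations can be extracted from the benchmark results. The following is a minimal sketch in which each result is a (name, CPU hours, RMSE) tuple and lower is better on both axes; the result values are invented placeholders, and plotting on a log axis is left out.

```python
def pareto_front(results):
    """Return the non-dominated (name, cpu_hours, rmse) entries, sorted by cost.

    An entry is dominated if another entry is at least as good on both axes
    and strictly better on at least one.
    """
    front = []
    for name, cost, err in results:
        dominated = any(
            (c <= cost and e <= err) and (c < cost or e < err)
            for _, c, e in results
        )
        if not dominated:
            front.append((name, cost, err))
    return sorted(front, key=lambda r: r[1])


# Placeholder benchmark results (NOT measured values).
results = [
    ("Linear / Set A", 0.5, 0.42),
    ("RF / Set A", 1.0, 0.35),
    ("RF / Set B", 4.0, 0.30),
    ("Linear / Set C", 6.0, 0.38),
    ("GNN / Set C", 40.0, 0.22),
]

front = pareto_front(results)
for name, cost, err in front:
    print(f"{name}: {cost} CPU h, RMSE {err} eV")
```

Configurations that drop out of the front, such as the linear model on Set C here, cost more than a cheaper alternative without being more accurate, so they can be excluded from further consideration.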

Protocol 2: High-Throughput Virtual Screening (HTVS) Workflow for Catalyst Library Enumeration

Objective: To rapidly screen >10^5 candidate catalysts using a tiered modeling approach.

- Library Generation: Use a rule-based builder (e.g., RDKit's EnumerateLibrary) to create a virtual library from core scaffolds and functional groups.

- Tier 1 Filtering (Ultra-Fast): Apply simple property filters (MW < 900, logP < 5) and a rules-based score (e.g., functional group toxicity).

- Tier 2 Screening (Fast): Calculate Set A descriptors. Use a pre-trained, simple linear model to score all compounds. Retain top 20%.

- Tier 3 Ranking (Balanced): On the reduced set, calculate Set B descriptors. Apply a more accurate ensemble model (e.g., LGBM) for final ranking.

- Output: Generate a prioritized list with scores, key descriptors, and structural alerts for experimental follow-up.
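The tiered structure of this workflow can be sketched as a small funnel. In the illustration below, candidates are plain dictionaries and the two scoring callables stand in for the pre-trained linear and ensemble models; the field names, the toy library, and the scores are all hypothetical, and the rules-based toxicity score from Tier 1 is omitted.

```python
import math


def tiered_screen(library, fast_score, accurate_score, keep_fraction=0.20):
    """Three-tier funnel: property filter, fast scoring with a top-fraction cut, then re-ranking."""
    # Tier 1: cheap property filters.
    tier1 = [c for c in library if c["mw"] < 900 and c["logp"] < 5]
    if not tier1:
        return []
    # Tier 2: score everything with the fast model and keep the top fraction.
    ranked = sorted(tier1, key=fast_score, reverse=True)
    n_keep = max(1, math.ceil(keep_fraction * len(ranked)))
    tier2 = ranked[:n_keep]
    # Tier 3: re-rank the survivors with the slower, more accurate model.
    return sorted(tier2, key=accurate_score, reverse=True)


# Hypothetical library; "fast_feature" and "slow_feature" stand in for model outputs.
library = [
    {"id": i, "mw": 300 + 60 * i, "logp": i % 6,
     "fast_feature": float(i), "slow_feature": -float(i)}
    for i in range(12)
]

shortlist = tiered_screen(
    library,
    fast_score=lambda c: c["fast_feature"],
    accurate_score=lambda c: c["slow_feature"],
)
print([c["id"] for c in shortlist])
```

The expensive model only ever sees the small set that survives the first two tiers, which is where the overall speedup comes from.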

Visualizations

Title: Decision Flowchart for Speed vs. Accuracy in Catalyst Research

Title: Tiered High-Throughput Virtual Screening (HTVS) Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Speed-Optimized Catalyst Discovery